AI Tool vs Code: A Thorough Comparison for Developers

An analytical comparison of AI tool vs code, covering use cases, integration, costs, security, and outcomes for developers, researchers, and students exploring how AI tooling complements traditional coding.

According to AI Tool Resources, the choice between an AI tool and code hinges on automation, collaboration, and scale. The AI Tool Resources team found that an AI tool can automate repetitive tasks, suggest code, and accelerate debugging, while a code-focused approach emphasizes control, transparency, and performance. In this comparison, we evaluate use cases, costs, and outcomes for developers, researchers, and students.

What constitutes an AI tool in coding?

In modern software development, an AI tool for coding is software that uses machine learning or generative AI to assist with writing, testing, debugging, and reviewing code. It can take the form of a code completion assistant, a natural-language-to-code translator, an automated refactoring tool, or a test-generation system. AI tool vs code becomes a real choice when you weigh risk, speed, and learning curve. A well-behaved AI tool integrates with your existing IDE, runs locally or in the cloud, and respects project conventions and security policies. The line between assistant and co-developer can blur when models learn from your repository and adapt to your domain. For researchers building experimental pipelines, AI tooling offers reproducible prompts and evaluation hooks, while students can use interactive hints to learn faster. The AI Tool Resources team notes that adoption requires discipline in versioning prompts and auditing outputs. Understanding ai tool vs code helps you map capabilities to goals.

Core dimensions: automation, accuracy, and control

When comparing ai tool vs code, three core dimensions matter: automation potential, accuracy and reliability of outputs, and the level of human control retained over the process. AI tools can automate repetitive tasks such as boilerplate generation, test scaffolding, and refactoring suggestions, freeing developers to focus on higher-level design. However, accuracy varies with data quality and prompt design, so outputs should be reviewed like any machine-generated artifact. Control can come from local execution, clear prompt boundaries, and strict auditing of prompts and responses. The balance between automation and oversight determines risk tolerance, especially in sensitive domains. For researchers, reproducibility hinges on versioned prompts and transparent model configurations. For students, guided prompts can boost learning without eroding understanding. In the ai tool vs code debate, the optimal approach blends automated assistance with explicit, reviewable decision points.

Use cases by user type: developers, researchers, students

Developers often use AI-enabled assistants for rapid scaffolding, API usage examples, and code completion that respects project conventions. They value speed and the ability to explore multiple implementation paths quickly. Researchers lean on AI tooling to prototype ideas, generate experimental scripts, and run quick checks across datasets, but they require traceable results and auditable prompts. Students benefit from real-time feedback, hint-based learning, and guided exercises that shorten the learning curve while preserving core concepts. Across all groups, the best outcomes arise when AI tools are used to augment, not replace, human judgment. The ai tool vs code spectrum thus supports both fast iteration and careful validation, depending on the task.

Integration landscape: IDE plugins, APIs, and standalone tools

AI tools commonly integrate through IDE plugins (e.g., VS Code, JetBrains), cloud-based APIs, or standalone assistants. IDE plugins offer tight editor coupling, inline suggestions, and automatic refactoring within a familiar workflow. APIs support custom pipelines, letting researchers plug AI capabilities into data processing or model evaluation tasks. Standalone tools provide specialized functions like test generation or documentation synthesis. The challenge is ensuring consistency across environments, avoiding prompt leakage, and keeping up with evolving model capabilities. When evaluating ai tool vs code integrations, consider compatibility with your tech stack, version control practices, and organizational security policies. The right mix often combines an AI-powered editor with select API-backed services to balance latency, reliability, and control.

Performance and latency considerations

Latency is a practical constraint that shapes user experience. Cloud-based AI services may introduce network delays, while on-device or edge deployments reduce latency but can limit capability. For coding tasks, even small delays disrupt the developer's flow, so local-first configurations or cached prompts can help maintain responsiveness. Bandwidth, model size, and request batching influence performance, as do authentication overhead and rate limits. When choosing ai tool vs code, consider whether your team prioritizes immediate feedback during editing or broader, batch-driven analysis for large codebases. Users should measure average response times for typical tasks and simulate peak-load scenarios to understand real-world behavior under different tool configurations.
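As a rough illustration of measuring response times for a typical task, the sketch below times repeated calls to any callable and reports the mean, a nearest-rank 95th percentile, and the maximum. The function name `measure_latency` and the choice of statistics are assumptions made for this example; in practice you would pass in a function that sends a real request to the tool under evaluation.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def measure_latency(task: Callable[[], object], runs: int = 20) -> Dict[str, float]:
    """Call `task` repeatedly and summarize wall-clock latency in milliseconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    samples: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        task()
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    # Nearest-rank 95th percentile: the smallest sample that is >= 95% of all samples.
    p95_index = math.ceil(0.95 * len(samples)) - 1
    return {
        "mean_ms": statistics.mean(samples),
        "p95_ms": samples[p95_index],
        "max_ms": samples[-1],
    }


def fake_completion_request() -> str:
    # Hypothetical stand-in for a real AI completion call; replace with your own client.
    time.sleep(0.002)
    return "def add(a, b): return a + b"


if __name__ == "__main__":
    print(measure_latency(fake_completion_request, runs=10))
```

Running the same measurement against each candidate configuration (cloud, local, cached) gives comparable numbers, and raising `runs` or calling it from several threads is one simple way to approximate peak load.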

Cost and licensing models

Pricing for AI coding tools ranges from low-cost monthly subscriptions to enterprise-level licenses that cover multiple seats and governance features. Many vendors use tiered plans based on usage, features, and data-handling capabilities. In evaluating ai tool vs code, teams should account for runtime usage, data retention policies, and the cost of potential retries or versioned prompts. It is also important to understand how licensing scales with team size and whether prompt-sharing rules apply across projects. Transparent pricing and predictable costs help ensure long-term adoption without budget surprises.
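To make the cost factors above concrete, here is a small back-of-the-envelope estimator. It assumes a simplified pricing model with a flat per-seat fee plus a usage fee per 1,000 requests, and treats retries as extra billable requests. The class, field names, and all prices are hypothetical and do not reflect any particular vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PricingPlan:
    """Hypothetical plan: flat seat fee plus usage-based fee."""
    seat_price_per_month: float
    price_per_1k_requests: float


def estimate_monthly_cost(
    plan: PricingPlan,
    seats: int,
    requests_per_seat: int,
    retry_rate: float = 0.0,
) -> float:
    """Estimate monthly spend; `retry_rate` is the fraction of requests that are retried once."""
    if seats < 0 or requests_per_seat < 0 or retry_rate < 0:
        raise ValueError("seats, requests_per_seat, and retry_rate must be non-negative")
    billable_requests = seats * requests_per_seat * (1 + retry_rate)
    usage_cost = billable_requests / 1000 * plan.price_per_1k_requests
    seat_cost = seats * plan.seat_price_per_month
    return round(seat_cost + usage_cost, 2)


if __name__ == "__main__":
    plan = PricingPlan(seat_price_per_month=20.0, price_per_1k_requests=2.0)
    # 10 seats * 20.0 = 200.0 in seat fees; 33,000 billable requests * 2.0 / 1000 = 66.0 in usage.
    print(estimate_monthly_cost(plan, seats=10, requests_per_seat=3000, retry_rate=0.1))  # 266.0
```

Varying `seats` and `retry_rate` in a sketch like this shows quickly how costs scale with team size and with prompts that frequently need a second attempt.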

Data privacy, security, and compliance

Data privacy is critical when AI tools process source code and project data. Cloud-based models may transmit code snippets to external servers for processing, raising concerns about intellectual property, confidentiality, and compliance with industry regulations. Teams should assess data handling practices, retention windows, and access controls. Where possible, prefer tools that offer on-premises or private-cloud options, robust audit trails, and fine-grained permissions. Establish clear guidelines for what code can be sent to AI services and implement secure authentication, encryption, and data governance policies. Balancing innovation with governance is essential in any ai tool vs code deployment.
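One way to enforce a guideline about what code may leave your environment is a redaction step that runs before any snippet is sent to an external service. The sketch below is a minimal example under stated assumptions: the patterns are illustrative only and far from exhaustive, and the function name `redact` and the placeholder format are invented for this example. A real deployment would more likely rely on a dedicated secret scanner.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; a production filter needs a much broader rule set.
SECRET_PATTERNS = [
    ("aws_access_key_id", re.compile(r"\bAKIA[0-9A-Z]{16}\b")),
    ("private_key_header", re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----")),
    (
        "assigned_secret",
        re.compile(
            r"\b\w*(?:password|secret|api_key|token)\w*\s*[:=]\s*['\"][^'\"\n]+['\"]",
            re.IGNORECASE,
        ),
    ),
]


def redact(snippet: str) -> Tuple[str, List[str]]:
    """Replace likely secrets with placeholders and report which rules matched."""
    findings: List[str] = []
    redacted = snippet
    for name, pattern in SECRET_PATTERNS:
        redacted, count = pattern.subn(f"<REDACTED:{name}>", redacted)
        if count:
            findings.append(name)
    return redacted, findings


if __name__ == "__main__":
    code = 'API_KEY = "abc123"\nprint("hello")'
    safe_code, matched_rules = redact(code)
    print(safe_code)
    print(matched_rules)
```

Logging the matched rule names (never the secret values) also feeds the audit trail, since it records how often developers attempted to send sensitive material.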

Quality and reproducibility: evaluating AI-generated code

Quality assessment involves more than syntactic correctness; it requires evaluating consistency, security, and maintainability of AI-generated code. Establish benchmarks, run unit and integration tests, and require human code reviews for critical paths. Reproducibility depends on versioned prompts and stable model configurations. Students and researchers should document prompts and expected outputs to enable replication and learning. For enterprise contexts, enforce provenance tracking and explainability so teams understand why a given AI suggestion was adopted. The ai tool vs code conversation often centers on balancing fast iteration with durable, auditable results.
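Provenance tracking with versioned prompts can be approximated by fingerprinting each interaction. The following sketch hashes the prompt, a canonical form of the model configuration, and the output, so that two runs can be compared for exact equality. The record layout and the configuration keys shown are assumptions for illustration, not a standard format.

```python
import hashlib
import json
from typing import Any, Dict


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def provenance_record(prompt: str, model_config: Dict[str, Any], output: str) -> Dict[str, str]:
    """Build a fingerprint of one AI interaction for later audit or replication."""
    # Sorting keys makes the hash independent of dictionary ordering.
    canonical_config = json.dumps(model_config, sort_keys=True, separators=(",", ":"))
    return {
        "prompt_sha256": _sha256(prompt),
        "config_sha256": _sha256(canonical_config),
        "output_sha256": _sha256(output),
    }


if __name__ == "__main__":
    # The configuration keys below are hypothetical examples.
    record = provenance_record(
        prompt="Write a function that reverses a string.",
        model_config={"model": "example-model", "temperature": 0.0},
        output="def reverse(s): return s[::-1]",
    )
    print(json.dumps(record, indent=2))
```

Storing such records next to the commit that adopted a suggestion lets reviewers check later whether the same prompt and configuration still produce the same output.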

Best practices for evaluating AI tools for coding

Start with a pilot in a controlled project to observe real-world impact and gather feedback from developers, researchers, and students. Define success metrics such as time-to-delivery, defect rate, and learning outcomes. Use a structured evaluation rubric that covers accuracy, reliability, latency, security, and governance. Require clear prompts and keep prompts under version control. Regularly audit model outputs, prune sensitive data, and update prompts to reflect evolving requirements. Establish an AI usage policy that aligns with organizational standards and educational goals. The goal is to create a repeatable, evidence-based process for choosing between ai tool and code options.
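A structured rubric can be as simple as a weighted score. The sketch below rates a tool from 1 to 5 on the five criteria named above and combines the ratings into a single number. The weights are placeholder assumptions and should be adjusted to reflect your own priorities.

```python
from typing import Dict

# Hypothetical weights; tune these to match your team's priorities.
RUBRIC_WEIGHTS: Dict[str, float] = {
    "accuracy": 0.30,
    "reliability": 0.20,
    "latency": 0.15,
    "security": 0.20,
    "governance": 0.15,
}


def rubric_score(ratings: Dict[str, float]) -> float:
    """Return the weighted average of 1-5 ratings across all rubric criteria."""
    missing = set(RUBRIC_WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for criterion in RUBRIC_WEIGHTS:
        if not 1 <= ratings[criterion] <= 5:
            raise ValueError(f"rating for {criterion} must be between 1 and 5")
    weighted_sum = sum(RUBRIC_WEIGHTS[c] * ratings[c] for c in RUBRIC_WEIGHTS)
    return round(weighted_sum / sum(RUBRIC_WEIGHTS.values()), 2)


if __name__ == "__main__":
    pilot_ratings = {
        "accuracy": 4,
        "reliability": 3,
        "latency": 5,
        "security": 4,
        "governance": 2,
    }
    print(rubric_score(pilot_ratings))  # 3.65
```

Scoring each candidate with the same rubric after the pilot keeps the comparison repeatable, and keeping the weights in version control documents why one option was preferred.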

Adoption strategies and organizational readiness

Successful adoption hinges on governance, training, and incremental rollout. Start with a small pilot team, set guardrails for data handling, and create feedback loops to capture issues and improvement ideas. Provide hands-on training that emphasizes when to rely on AI assistance and when to rely on human judgment. Build a central knowledge base with best practices, prompts, and templates. As teams mature, extend AI tooling to more projects, ensuring consistent conventions and security compliance. The key is to align tooling with development workflows so AI support feels like a natural extension of coding practices rather than a disruptive shift.

The road ahead: trends and predictions

Expect AI tools to improve explainability, multi-language support, and context-sensitive suggestions that better align with your project goals. As models become more configurable, teams will demand finer-grained control over prompts, data handling, and evaluation hooks. We anticipate stronger governance features, enhanced security, and better integration with CI/CD pipelines. AI tool vs code will continue to map to varied use cases—from rapid prototyping to mission-critical systems—by enabling a hybrid model that combines speed with accountability. The AI Tool Resources team foresees a trend toward modular toolchains where code-first practices persist alongside AI-assisted workflows, rather than competing with them.

Practical checklist before choosing between ai tool and code

- Define core objectives: speed, quality, or learning outcomes.

- Assess data handling, security, and governance requirements.

- Test integration with your main IDE and version control.

- Pilot with a small team to measure impact and gather feedback.

- Establish metrics for success and a plan for scaling or adjusting tools.

- Ensure prompts and configurations are versioned and auditable.

- Plan for ongoing training and documentation to support long-term use.

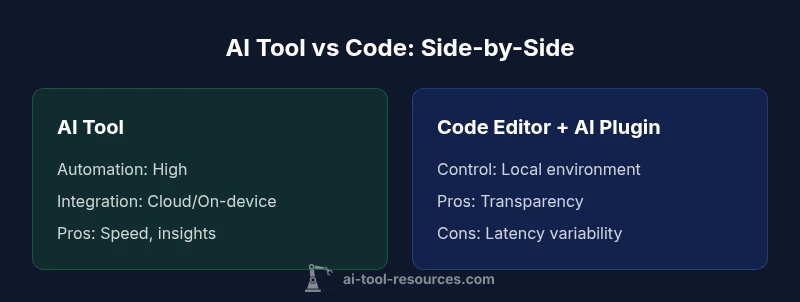

Comparison

| Feature | AI Tool | Code Editor + AI Plugin |
|---|---|---|
| Automation level | High | Moderate |
| Code quality guidance | Contextual, data-driven | Contextual with human oversight |
| Latency | Variable (cloud or on-device) | Low to moderate (local) |
| Cost range | Mid-range to enterprise | Low to mid-range |
| Best for | Rapid prototyping, scale, data-driven insights | Precise control, offline work, auditability |

Upsides

- Speeds up repetitive tasks and boilerplate generation

- Improves exploration and learning through proactive hints

- Supports rapid iteration across teams

- Helps standardize coding patterns across projects

Weaknesses

- Risk of overreliance and skill gaps if used improperly

- Security and privacy concerns with cloud-based models

- Possible hallucinations or incorrect outputs requiring verification

- Costs can accumulate with heavy usage and multiple licenses

AI-assisted coding generally offers speed and collaboration advantages, but traditional coding remains essential for control and auditability.

For teams prioritizing speed and scalable insight, AI tools provide clear benefits. For projects requiring strict provenance, offline operation, and deep customization, code-first workflows are preferable. The AI Tool Resources team notes that the best approach often blends both, leveraging AI for augmentation while preserving human oversight.

FAQ

What exactly is an AI tool in coding?

An AI tool in coding is software that uses machine learning or generative AI to help write, test, and review code. It can generate boilerplate, propose implementations, and surface potential issues. AI tools are designed to augment your workflow, not replace essential developer judgment. For many teams, the key is to integrate AI capabilities in a way that preserves clarity and accountability.

An AI tool in coding helps you write and check code faster, but it’s there to assist, not replace your judgment.

Can AI tools replace human developers?

No, AI tools are best used as assistants to speed up routine tasks and provide suggestions. They can handle repetitive work and offer insights, but humans remain essential for design decisions, complex problem solving, and ensuring quality. Effective use blends AI support with strong human oversight.

They don’t replace developers; they amplify what developers can do.

Are AI tools safe for enterprise use?

Enterprise safety depends on governance, data handling, and vendor security practices. Look for on-prem or private-cloud options, robust access controls, audit trails, and clear data-retention policies. Pair tooling with organizational policies and risk assessments to mitigate potential issues.

Security and governance are key when using AI tools in big teams.

How should I evaluate AI tools for coding?

Create a structured evaluation plan that tests accuracy, latency, integration, and governance. Use pilot projects, collect developer feedback, and measure outcomes like speed, quality, and learning impact. Document prompts and configurations for reproducibility.

Test, measure, and document how the tool performs in real projects.

What is the typical cost of AI coding tools?

Pricing varies widely, from low-cost subscriptions to enterprise licenses with governance features. Consider total cost of ownership, including usage, data handling, and training. Look for predictable pricing and understand licensing terms for teams.

Costs can range from affordable to enterprise-level, depending on features.

Key Takeaways

- Assess automation potential before adopting AI tools.

- Prioritize data security and governance in integrations.

- Pilot with a small group to gauge impact.

- Measure the quality and reproducibility of AI-generated output, using versioned prompts.

- Balance speed with explicit control for critical components.