Playground OpenAI API: A Practical Prototyping Guide

A comprehensive guide to using the Playground OpenAI API for rapid prompt prototyping, model comparison, and safe experimentation before production deployments.

The Playground OpenAI API is a web-based interface for prototyping and testing prompts against OpenAI models without building a full application.

What is the Playground OpenAI API?

According to AI Tool Resources, the Playground OpenAI API is a web-based sandbox that lets you experiment with prompts and model outputs without writing a complete application. Strictly speaking, it is a graphical front end to the API rather than a separate API: it runs on the same underlying models as the production API, so you can compare responses side by side and adjust settings such as maximum output tokens, temperature, and top_p. In educational settings, researchers often use the Playground to explore prompt patterns, behavior across model families, and latency implications. As the AI Tool Resources team notes, the Playground is ideal for rapid idea testing, especially when you are learning new capabilities. It is not a substitute for production integration; rather, it is a staging ground for assessment, refinement, and learning.
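To make the link between Playground settings and API calls concrete, here is a minimal sketch that turns Playground-style settings into a request body for the Chat Completions endpoint. The field names `model`, `messages`, `temperature`, `top_p`, and `max_tokens` follow the Chat Completions API; the `PlaygroundSettings` class, the `to_request_body` helper, and the model name are hypothetical placeholders, and some newer models expect `max_completion_tokens` instead of `max_tokens`.

```python
from dataclasses import dataclass


@dataclass
class PlaygroundSettings:
    """Hypothetical container for the knobs you tune in the Playground."""
    model: str
    system: str
    temperature: float = 1.0
    top_p: float = 1.0
    max_tokens: int = 256


def to_request_body(settings: PlaygroundSettings, user_prompt: str) -> dict:
    """Build a Chat Completions-style request body from Playground settings."""
    # Basic range checks matching the documented parameter ranges.
    if not 0.0 <= settings.temperature <= 2.0:
        raise ValueError("temperature must be between 0 and 2")
    if not 0.0 <= settings.top_p <= 1.0:
        raise ValueError("top_p must be between 0 and 1")
    return {
        "model": settings.model,
        "messages": [
            {"role": "system", "content": settings.system},
            {"role": "user", "content": user_prompt},
        ],
        "temperature": settings.temperature,
        "top_p": settings.top_p,
        "max_tokens": settings.max_tokens,
    }


if __name__ == "__main__":
    # "example-model" is a placeholder; substitute a model you have access to.
    s = PlaygroundSettings(model="example-model", system="You are concise.", temperature=0.2)
    print(to_request_body(s, "Explain top_p in one sentence."))
```

Keeping the configuration as plain data makes it easy to diff and version; with the official Python SDK, a body like this can be unpacked into `client.chat.completions.create(**body)`.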

How the Playground fits into the AI development workflow

In a typical workflow, developers begin with prompt experiments in the Playground to identify the most effective prompts and model configurations. Once a prompt behaves consistently, the next step is to translate those findings into production code with proper error handling and monitoring. The Playground excels at quick iterations, model comparisons, and early data collection for evaluation. Based on AI Tool Resources research, teams save time by validating prompts before writing code, which reduces the back-and-forth between experimentation and implementation. This also speeds onboarding for new engineers and makes exploratory data analysis more approachable.
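Production code has to handle failures that the Playground surfaces only as an error message, such as rate limits or timeouts. A minimal sketch of retrying with exponential backoff, assuming the real API call is wrapped in a zero-argument function; `TransientError` and `call_with_retries` are hypothetical names, and in practice you would catch the SDK's own rate-limit and timeout exceptions. The official Python SDK also exposes its own retry setting, so check its documentation before layering another loop on top.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical stand-in for retryable failures such as rate limits or timeouts."""


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying TransientError with exponential backoff plus jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # Give up and surface the last error to the caller.
            # Delay doubles each attempt (base, 2*base, 4*base, ...) plus random jitter.
            delay = base_delay * (2 ** (attempt - 1))
            sleep(delay + random.uniform(0, delay / 2))
    raise RuntimeError("unreachable")
```

Injecting `sleep` keeps the helper testable and lets you log each delay as part of monitoring.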

Getting started: access and setup

To begin, sign in to your OpenAI account and open the Playground. Choose a model, set core parameters such as maximum tokens and temperature, and start entering prompts. You can save prompts for later reuse, organize sessions, and compare results across models in separate panels. Avoid pasting sensitive or private data into prompts. Because the Playground sends requests to the same API endpoints your application would use, plan your testing strategy as if you were wiring those calls into your application. The AI Tool Resources team recommends keeping a simple test dataset and documenting the prompts you try for reproducibility.
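Documenting the prompts you try can be as lightweight as an append-only log. A minimal sketch using only the Python standard library; the JSON Lines layout and the field names are assumptions for illustration, not a Playground export format.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_experiment(path: Path, prompt: str, settings: dict, output: str, note: str = "") -> dict:
    """Append one Playground experiment as a JSON line so runs can be reproduced later."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "settings": settings,
        "output": output,
        "note": note,
    }
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_experiments(path: Path) -> list[dict]:
    """Read every logged experiment back, skipping blank lines."""
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Pasting each promising Playground run into a log like this (prompt, settings, and output together) gives you a small test dataset you can replay later against code.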

Core features you will use

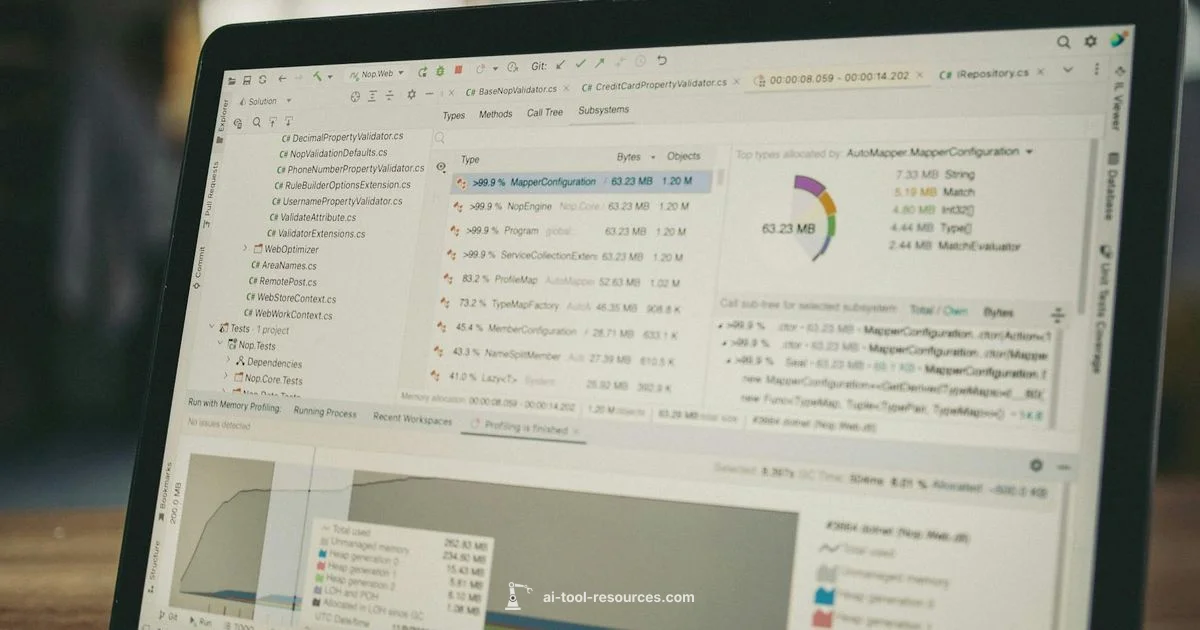

Key features include a rich prompt editor, real-time output previews, session management, and model comparison views. You can tweak sampling settings such as temperature and top_p, adjust response length, and test alternative phrasings without writing code. Some workspaces support multiple prompts within a single session for cross-prompt experiments, letting you benchmark prompt strategies side by side. For researchers, this is invaluable for hypothesis testing and prompt engineering workflows. AI Tool Resources analysis shows that a structured Playground workflow improves understanding of model behavior and facilitates collaborative review.
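The same side-by-side idea can be reproduced in a script once you want to benchmark prompt strategies repeatably. A minimal sketch, assuming each model is wrapped as a plain function from prompt to text; `compare`, `print_table`, and the stub models are hypothetical and stand in for real API calls.

```python
from typing import Callable

# A "model" here is any function from prompt to text. In practice it would wrap
# a real API call; the stubs in the demo below are placeholders.
ModelFn = Callable[[str], str]


def compare(models: dict[str, ModelFn], prompts: list[str]) -> list[dict[str, str]]:
    """Run every prompt against every model and return one row per prompt."""
    rows = []
    for prompt in prompts:
        row = {"prompt": prompt}
        for name, fn in models.items():
            row[name] = fn(prompt)
        rows.append(row)
    return rows


def print_table(rows: list[dict[str, str]]) -> None:
    """Print rows as a tab-separated table for side-by-side review."""
    if not rows:
        return
    headers = list(rows[0].keys())
    print("\t".join(headers))
    for row in rows:
        print("\t".join(row[h] for h in headers))


if __name__ == "__main__":
    stub_models = {
        "model-a": lambda p: p.upper(),
        "model-b": lambda p: p[::-1],
    }
    print_table(compare(stub_models, ["hello", "top_p"]))
```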

Practical prompts: templates and examples

Prompts in the Playground often follow patterns you can reuse across tasks:

- Instructional prompts: Tell the model to explain a concept in simple terms with steps.

- Comparative prompts: Ask the model to compare two ideas or approaches.

- Template prompts: Use a consistent format for data extraction or transformation.

Example templates you can adapt:

- Translate a technical instruction into plain language and list key terms.

- Summarize a long document into bullet points with the main ideas.

- Generate test cases for a given function or API.

These templates help you build reliable prompts faster. Try variations in wording, observe how the outputs change, and refine accordingly.
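The example templates above can be kept as reusable strings so the exact same wording is used in the Playground and later in code. A minimal sketch using Python's standard `string.Template`; the template names and wording are hypothetical adaptations of the examples listed.

```python
from string import Template

# Hypothetical reusable templates modeled on the example patterns above.
TEMPLATES = {
    "plain_language": Template(
        "Rewrite the following technical instruction in plain language, "
        "then list the key terms it uses.\n\nInstruction:\n$text"
    ),
    "summarize": Template(
        "Summarize the document below as $n bullet points covering the main ideas.\n\n"
        "Document:\n$text"
    ),
    "test_cases": Template(
        "Generate test cases for the following $kind. Include edge cases.\n\n$text"
    ),
}


def render(name: str, **fields: object) -> str:
    """Fill a named template; raises KeyError if a placeholder is missing."""
    return TEMPLATES[name].substitute(**fields)
```

For example, `render("summarize", n=3, text=doc)` yields a prompt you can paste into the Playground, vary one phrase at a time, and then reuse unchanged in application code.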

Common pitfalls and how to avoid them

Be mindful of token limits and of nondeterministic results: small wording changes can lead to large output differences. Always validate results against a diverse test set, and avoid sharing sensitive information in prompts. If outputs look inconsistent, lower the temperature or add system-level instructions to constrain behavior. The Playground also helps reveal biased or unsafe responses early in the development cycle, so address such issues before you proceed to production.
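A quick pre-flight check helps you stay within token limits. A minimal sketch, assuming the commonly cited rule of thumb that one token is roughly four characters of English text; `context_window` is a placeholder you would set from the documentation for your chosen model, and exact counts require the model's tokenizer (for example, OpenAI's `tiktoken` library).

```python
import math


def estimate_tokens(text: str) -> int:
    """Rough token estimate using the ~4 characters per token rule of thumb.

    Heuristic only: real tokenizers vary by model and language.
    """
    return math.ceil(len(text) / 4)


def fits_budget(prompt: str, max_output_tokens: int, context_window: int) -> bool:
    """Check whether the estimated prompt plus requested output fits the context window."""
    return estimate_tokens(prompt) + max_output_tokens <= context_window
```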

From playground to production: best practices

Treat the Playground as a sandbox for discovery, not a final interface. Capture the successful prompts and configurations, then port them into your application code with proper error handling, rate limiting, and logging. To reduce run-to-run variability, use a low temperature and, where the API supports it, a fixed seed; seeded sampling is best-effort and does not guarantee identical outputs. Use system messages to pin down format and behavior. Establish a review process to ensure that prompts meet safety and compliance guidelines before deployment.
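Rate limiting is one of the pieces the Playground does not make you think about. A minimal sketch of a client-side token bucket; the class name and parameters are hypothetical, and the actual limits for your account come from OpenAI's published rate-limit documentation, not from this code.

```python
import time
from typing import Callable


class RateLimiter:
    """Client-side token bucket: `rate` requests per second, bursting up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def try_acquire(self) -> bool:
        """Take one token if available; return False if the caller should wait."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

The injectable `clock` keeps the limiter deterministic under test; in an application you would call `try_acquire()` before each request and back off or queue when it returns False.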

Real world considerations: safety, governance, and cost awareness

While the Playground reduces risk during early exploration, always apply governance checks when moving to production. Review prompts for sensitive data, avoid leaking system prompts or other internal details, and set up logging to monitor behavior. Token usage translates directly into cost, so plan testing sessions to maximize value while controlling spend. Careful prompt design combined with responsible testing is essential for sustainable AI workflows.
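Because cost scales with tokens, a back-of-the-envelope estimate before a testing session helps you plan spend. A minimal sketch; the per-million-token prices are placeholders you supply, since real rates differ by model and change over time and should be taken from the official pricing page.

```python
def estimate_cost(input_tokens: int, output_tokens: int,
                  input_price_per_million: float, output_price_per_million: float) -> float:
    """Estimate cost in dollars from token counts and per-million-token prices."""
    return (input_tokens * input_price_per_million
            + output_tokens * output_price_per_million) / 1_000_000


def session_cost(runs: list[tuple[int, int]],
                 input_price_per_million: float, output_price_per_million: float) -> float:
    """Sum estimated cost over (input_tokens, output_tokens) pairs for a test session."""
    return sum(
        estimate_cost(i, o, input_price_per_million, output_price_per_million)
        for i, o in runs
    )
```

Input and output tokens are priced separately, which is why the function takes both counts rather than a single total.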

FAQ

What is the Playground OpenAI API and how is it different from the production API?

The Playground OpenAI API is a sandbox for prototyping prompts and evaluating model behavior without building a full application. It sends requests to the same models, with the same settings, that the production API exposes, but it is designed for quick experimentation and learning rather than deployment. You still need access to your account and appropriate permissions to test prompts.


Do I need an API key to use the Playground?

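Access requires an OpenAI account with API access and appropriate permissions. You sign in with your account credentials rather than entering a key in the interface, and the Playground uses that account to run prompts against the selected models, so runs count toward your API usage.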


Can I test multiple models side by side in Playground?

Yes. You can run prompts against different models within the same workspace or in parallel sessions to compare outputs and tuning settings. This helps identify the best fit for your task.


Is there a data privacy concern when using Playground?

Depending on your account and organization settings, prompts and outputs may be retained by the platform, so review OpenAI's data usage policies. Avoid sharing sensitive or personally identifiable information, and enable any privacy controls the platform offers.
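One practical habit is to scrub obvious identifiers before pasting text into a prompt. A minimal sketch; the regular expressions are illustrative assumptions that catch only simple email and phone formats (and may also match some dates), so they complement rather than replace a review of what you send.

```python
import re

# Illustrative patterns only: they miss many real-world formats.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders before prompting."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)
```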


What should I do after prototyping in Playground?

Document successful prompts and configurations, migrate them into production code with proper API calls, and implement tests and monitoring to ensure reliability and safety.


Are there cost considerations I should be aware of?

Token usage drives cost, so plan and monitor testing sessions. Review pricing and quotas on the official pricing page and set up limits to avoid surprises.


Key Takeaways

- Prototype prompts rapidly in the Playground to validate ideas

- Compare model behavior side by side before coding

- Save sessions and prompts for reproducibility

- Be mindful of data privacy and token usage