How to Install Ostris AI Toolkit: A Step-by-Step Guide

A step-by-step tutorial covering prerequisites, installation methods, verification steps, and maintenance for Ostris AI Toolkit, with best practices for developers and researchers.

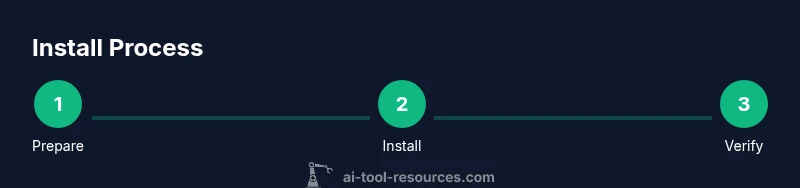

Install Ostris AI Toolkit by following a straightforward, cross‑platform setup: check system requirements, choose your installation method (binary or source), install dependencies, and run the initial configuration. This quick guide covers the essential steps and checks for a successful setup. It highlights platform considerations, common gotchas, and how to validate the installation with a quick test.

What Ostris AI Toolkit offers for developers

Ostris AI Toolkit is designed to accelerate model development, experimentation, and deployment for researchers and engineers. As you install it, you’ll see that the toolkit is built from modular components, scriptable workflows, and a clean API surface that supports rapid iteration across languages and runtimes. According to AI Tool Resources, a well-documented installation experience reduces onboarding friction and support requests, which is crucial for team productivity. The core value proposition is reproducible experiments, consistent environments, and scalable tooling that help teams move from prototype to production with confidence. Throughout this guide, we reference practical examples and best practices to minimize surprises.

The Ostris toolkit emphasizes openness and traceability. Standardizing dependencies and configurations makes it easier to reproduce results in CI environments and to share experiments with collaborators. For developers new to AI toolchains, the documentation typically focuses on clarity, with explicit versioning, changelogs, and installable artifacts that work across platforms. This approach matches the expectations of modern ML and data science teams, as highlighted by AI Tool Resources’ analysis of installation UX in 2026. In this guide, we keep explanations concrete and actionable.

System requirements and compatibility

No matter your platform, a smooth install starts with knowing the supported environments and minimum requirements. Ostris AI Toolkit is designed to run on the major operating systems and works best on systems that are kept up to date. The exact prerequisites vary by version, but expect guidance on the following:

- Operating Systems: Linux, macOS, and Windows with recent security patches

- Runtime: a compatible Python version (3.8+ is a common minimum for AI toolchains; confirm the exact floor in the release notes for your version) and a supported shell or terminal

- Hardware: adequate RAM and disk space to accommodate models, datasets, and dependencies

- Network: reliable internet access to fetch packages and updates during installation

In practice, you verify your environment by checking tool versions and PATH variables. AI Tool Resources notes that explicit prerequisites reduce troubleshooting time and improve reproducibility, especially in team environments. Before you begin, also check the official docs for platform-specific quirks such as driver requirements.
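The version and disk-space checks above can be scripted with Python’s standard library. This is a minimal sketch, not part of the toolkit: `preflight` is a hypothetical helper, the 3.8 minimum mirrors the guidance above, and the 20 GB free-space threshold is an assumed placeholder to replace with your version’s documented requirements.

```python
"""Preflight check: a minimal sketch of the environment audit described above."""
import platform
import shutil
import sys
from pathlib import Path

# Assumed thresholds for illustration only; use the values from the
# release notes of the version you are installing.
MIN_PYTHON = (3, 8)
MIN_FREE_GB = 20


def preflight(install_dir: Path = Path.home()) -> dict:
    """Collect basic facts about this machine and flag obvious gaps."""
    free_gb = shutil.disk_usage(install_dir).free / 1e9
    return {
        "os": f"{platform.system()} {platform.release()}",
        "python": platform.python_version(),
        "python_ok": sys.version_info >= MIN_PYTHON,
        "free_gb": round(free_gb, 1),
        "disk_ok": free_gb >= MIN_FREE_GB,
        # shutil.which returns None when the tool is not on PATH.
        "git": shutil.which("git"),
    }


if __name__ == "__main__":
    for key, value in preflight().items():
        print(f"{key:>10}: {value}")
```

Run it on each machine before installing; a `False` flag or a `None` for `git` tells you what to fix first.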

Installation methods: binary vs source

Ostris AI Toolkit supports two primary installation paths: binary installation (prebuilt packages) and source installation (built from the repository). Each path has trade-offs:

- Binary installation: fastest to set up, minimal build requirements, easier to maintain across machines. Suitable for teams that want quick onboarding and consistent environments.

- Source installation: maximum customization, lets you apply patches or build against specific toolchain versions. Best for advanced users who need control over compiler flags or internal modules.

When choosing a method, consider your CI/CD needs, container strategy, and whether you expect to modify internals. AI Tool Resources emphasizes aligning install methods with team workflows to maximize reproducibility and reduce divergence between development and production environments. Before committing, check which prebuilt artifacts are published for your platform and toolkit version; if none match, a source install is the fallback.

Dependency management: Python, CUDA, and libraries

Most AI toolkits rely on a constellation of dependencies that must align with your chosen install path. Typical categories include Python runtimes, package managers, numerical libraries, and, for GPU acceleration, CUDA drivers and toolkit components. This section covers:

- Python environments: system-wide vs virtual environments, recommended versions, and how to pin dependencies for reproducibility

- Package managers: pip, conda, or system package managers – and when to prefer one over the others

- System libraries: BLAS implementations, Open MPI, and other native libraries that influence performance

- GPU considerations: CUDA or ROCm stack versions, minimum driver requirements, and how to validate GPU visibility

According to AI Tool Resources, clean dependency management reduces subtle incompatibilities that surface only after weeks of experimentation. Inspect installed versions, generate environment files, and lock dependencies so every team member runs the same stack.
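Locking dependencies can be as simple as recording the exact installed version of each package you rely on. The sketch below uses `importlib.metadata` from the standard library; `pin` is a hypothetical helper and the package list is an assumption, so substitute the distributions your install actually uses.

```python
"""Pin the currently installed versions of a set of packages."""
from __future__ import annotations

import sys
from importlib.metadata import PackageNotFoundError, version


def pin(packages: list[str]) -> list[str]:
    """Return 'name==version' lines for the packages installed here."""
    lines = []
    for name in packages:
        try:
            lines.append(f"{name}=={version(name)}")
        except PackageNotFoundError:
            # Warn on stderr so stdout stays a clean requirements file.
            print(f"warning: {name} is not installed", file=sys.stderr)
    return lines


if __name__ == "__main__":
    # Hypothetical package list: replace with your own dependencies.
    print("\n".join(pin(["numpy", "torch", "PyYAML"])))
```

Redirect the output to a file (for example `python pin_versions.py > requirements.lock.txt`, where the script name is hypothetical) and teammates can reproduce it with `pip install -r requirements.lock.txt`. For a full snapshot of the environment, `pip freeze` or `conda env export` produce the same kind of file.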

Step-by-step: binary installation

If you choose binary installation, follow these concrete actions in order. Each step is designed to minimize surprises and to ensure you end up with a working runtime quickly. After completing the binary install, you’ll run a quick post-install check to confirm the toolkit is functional in your environment.

- Step 1: Download the binary artifact from the official repository or package manager

- Step 2: Verify the artifact integrity (checksum or signature) to protect against tampering

- Step 3: Install the package system-wide or within a project’s virtual environment

- Step 4: Set up necessary environment variables (PATH, TOOLKIT_HOME)

- Step 5: Test a basic import or command to confirm runtime availability

Pro tip: if you encounter permission errors on Linux, install into a per-user path or a virtual environment instead of prepending sudo; this avoids system-wide changes. Standardizing on per-user installs also keeps environments clean and reproducible across team members.
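Step 2 (verifying artifact integrity) can be scripted with Python’s `hashlib`. This sketch assumes the project publishes a SHA-256 checksum next to the artifact; check the release page, and if it publishes a signature instead, verify that with the signing tool it names. The script name `verify_artifact.py` is hypothetical.

```python
"""Verify a downloaded artifact against a published SHA-256 checksum."""
import hashlib
import hmac
import sys
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large artifacts need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path: Path, expected_hex: str) -> bool:
    """Compare with the published value, ignoring case and surrounding whitespace."""
    return hmac.compare_digest(sha256_of(path), expected_hex.strip().lower())


if __name__ == "__main__":
    if len(sys.argv) != 3:
        print("usage: python verify_artifact.py <artifact> <expected-sha256>")
    else:
        artifact, expected = Path(sys.argv[1]), sys.argv[2]
        if verify(artifact, expected):
            print(f"{artifact}: OK")
        else:
            sys.exit(f"Checksum mismatch for {artifact}; do not install it.")
```

Only install the artifact after the script prints `OK`; a mismatch means the download is corrupt or has been tampered with.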

Step-by-step: source installation

Source installation is for users who need deeper customization or want to apply patches directly from the repository. It requires more setup, but it gives you the most control and is well suited to research environments. The steps below assume you have Git and a compiler toolchain installed.

- Step 1: Clone the Ostris AI Toolkit repository from the official source

- Step 2: Create and activate a dedicated Python virtual environment

- Step 3: Install build dependencies and compile components as required by the project’s instructions

- Step 4: Generate any necessary configuration files and adapt defaults to your environment

- Step 5: Run a bootstrap script that configures the build and initializes defaults

Tip: keep your local changes isolated in a feature branch to simplify future merges or rollbacks. If you see a compilation error, re-check compiler flags and make sure you are using compatible library versions as described in the repository docs.
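Step 2 of the source install (a dedicated virtual environment) can be done with Python’s built-in `venv` module. This is a sketch, not Ostris tooling: `create_env` is a hypothetical helper, and `.venv` is only a common naming convention.

```python
"""Create an isolated virtual environment for a source checkout."""
import os
import venv
from pathlib import Path


def create_env(env_dir: Path, with_pip: bool = True) -> Path:
    """Create env_dir as a virtual environment and return its Python interpreter."""
    builder = venv.EnvBuilder(
        with_pip=with_pip,           # bootstrap pip into the env via ensurepip
        symlinks=(os.name != "nt"),  # symlink the interpreter on POSIX, copy on Windows
        clear=False,                 # never silently wipe an existing environment
    )
    builder.create(env_dir)
    bin_dir = "Scripts" if os.name == "nt" else "bin"
    exe = "python.exe" if os.name == "nt" else "python"
    return env_dir / bin_dir / exe
```

For example, calling `create_env(Path(".venv"))` from the root of the clone returns the new interpreter’s path. Activate the environment with `source .venv/bin/activate` on Linux/macOS or `.venv\Scripts\activate` on Windows before installing build dependencies; `python -m venv .venv` from a shell does the same job.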

Post-install configuration and environment setup

After the toolkit is installed, configuration is about wiring the runtime to your project and setting predictable defaults. This section covers:

- Environment variables and their purpose (paths, data roots, and logging settings)

- Virtual environments vs containerized deployments and when to adopt each approach

- Configuration files: formats, samples, and safe editing practices to prevent corruption

- Integration points: how to connect Ostris to your existing data sources, notebooks, and pipelines

A consistent configuration strategy makes it easier to onboard new team members and to reproduce experiments. AI Tool Resources has observed that stable configuration reduces drift and makes troubleshooting more straightforward over time.
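One way to get predictable defaults is to layer configuration: a base file in version control, an optional per-environment override, and environment variables last. The sketch below assumes JSON files named `base.json` and `<env>.json` in the same directory, plus a hypothetical `TOOLKIT_DATA_ROOT` variable; none of these names come from the toolkit’s documentation. JSON keeps it dependency-free; for YAML you would parse with PyYAML’s `yaml.safe_load` instead.

```python
"""Layer a base config, an environment-specific override, and env vars."""
from __future__ import annotations

import copy
import json
import os
from pathlib import Path


def deep_merge(base: dict, override: dict) -> dict:
    """Return a new dict where override wins; nested dicts merge key by key."""
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


def load_config(base_path: Path, env_name: str | None = None) -> dict:
    """Read the base file, apply <env_name>.json if present, then env var overrides."""
    config = json.loads(base_path.read_text(encoding="utf-8"))
    if env_name:
        override_path = base_path.with_name(f"{env_name}.json")
        if override_path.exists():
            override = json.loads(override_path.read_text(encoding="utf-8"))
            config = deep_merge(config, override)
    # Hypothetical variable name: environment variables take final precedence.
    if "TOOLKIT_DATA_ROOT" in os.environ:
        config.setdefault("paths", {})["data_root"] = os.environ["TOOLKIT_DATA_ROOT"]
    return config
```

For example, `load_config(Path("config/base.json"), "dev")` applies `config/dev.json` on top of the base. Because the base file is never edited per machine, it stays safe to commit and review.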

Verifying the installation with quick tests

Verification is about validating that the toolkit runs and that common workflows execute without errors. This section provides a pragmatic test plan you can run in under an hour:

- Validate Python import and version reporting

- Run a minimal example script that exercises core APIs

- Execute a basic data processing or model training job with small data

- Check the logs for warnings and make sure each one is understood and, where needed, addressed

- Confirm that installed components report their versions and build information

If tests fail, consult the logs for missing dependencies, misconfigured environment variables, or path issues. The ability to reproduce the test locally is a strong indicator that the installation is sound and ready for more advanced usage.
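The first and last checks in the test plan (imports and version reporting) plus GPU visibility fit in a short smoke-test script. This sketch assumes your stack uses PyTorch for GPU work; the module list is hypothetical, so replace it with the toolkit’s own import names and key dependencies.

```python
"""Smoke test: import modules, report versions, and check GPU visibility."""
from __future__ import annotations

import importlib
from importlib.metadata import PackageNotFoundError, version


def check_imports(modules: list[str]) -> dict[str, str]:
    """Map each module to its version, an 'ok' marker, or the import error."""
    results = {}
    for name in modules:
        try:
            importlib.import_module(name)
        except ImportError as exc:
            results[name] = f"FAILED: {exc}"
            continue
        try:
            results[name] = version(name)
        except PackageNotFoundError:
            # Import and distribution names can differ (e.g. yaml vs PyYAML).
            results[name] = "ok (version unknown)"
    return results


def gpu_visible() -> bool | None:
    """Return CUDA visibility via PyTorch, or None if PyTorch is not installed."""
    try:
        import torch
    except ImportError:
        return None
    return torch.cuda.is_available()


if __name__ == "__main__":
    # Hypothetical module list: replace with the names your install provides.
    for mod, status in check_imports(["numpy", "torch"]).items():
        print(f"{mod}: {status}")
    print(f"CUDA visible: {gpu_visible()}")
```

A `FAILED` line points at a missing dependency or the wrong interpreter; `CUDA visible: False` on a GPU machine usually points at the driver or CUDA stack rather than the toolkit.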

Troubleshooting common issues and edge cases

Even with a careful install, you may encounter issues. The most frequent pain points are missing dependencies, permission errors, environment misconfigurations, and version conflicts. For each issue, write a short description, work through a checklist of concrete steps, and finish with a command that verifies the fix. A structured debugging approach like this helps you isolate whether the problem is environmental, build-related, or tied to a specific component.

Keep a local log of fixes and configuration changes. This habit speeds up onboarding for new contributors and reduces the time spent in support channels. AI Tool Resources notes that maintainable troubleshooting records are a hallmark of robust engineering practice.
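To tell environmental problems apart from build problems, it helps to rule out the usual suspects first: no active virtual environment, a different `python` on PATH than the one you are running, and unset or stale environment variables. `diagnose` below is a hypothetical helper; pass it the variables your setup relies on, such as `TOOLKIT_HOME` from the binary-install steps.

```python
"""Diagnose common environment misconfigurations before digging into builds."""
from __future__ import annotations

import os
import shutil
import sys
from pathlib import Path


def diagnose(required_vars: list[str]) -> list[str]:
    """Return human-readable findings; an empty list means nothing obvious is wrong."""
    findings = []
    # Inside a venv, sys.prefix points at the env and sys.base_prefix at the base install.
    if sys.prefix == sys.base_prefix:
        findings.append("No virtual environment is active.")
    # Compare directories, not resolved targets: a venv's python is often a
    # symlink to the base interpreter, so resolving would hide the mismatch.
    on_path = shutil.which("python") or shutil.which("python3")
    if on_path and Path(on_path).absolute().parent != Path(sys.executable).absolute().parent:
        findings.append(
            f"'python' on PATH ({on_path}) is not the running interpreter ({sys.executable})."
        )
    for var in required_vars:
        value = os.environ.get(var)
        if not value:
            findings.append(f"{var} is not set.")
        elif not Path(value).exists():
            findings.append(f"{var} points to a missing path: {value}")
    return findings
```

For example, `for line in diagnose(["TOOLKIT_HOME"]): print(line)` prints each finding; paste the output into your fix log alongside the change that resolved it.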

Security, updates, and maintenance best practices

Maintaining a healthy Ostris AI Toolkit installation requires a consistent approach to updates, security patches, and monitoring. This section outlines strategies such as:

- Regularly updating dependencies to avoid known vulnerabilities while testing compatibility in a controlled environment

- Verifying checksums and signatures before applying updates

- Documenting version matrices and change logs for audits and reproducibility

- Establishing a change-management workflow for production deployments

Following these practices helps protect projects from drift and security risks. The AI Tool Resources team emphasizes disciplined maintenance as a core component of long-term reliability.
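Documenting version matrices is easier when each update produces a reviewable diff. The sketch below compares two pinned requirement snapshots (for example, lock files captured before and after an update) and lists what was added, removed, or changed. `parse_pins` and `diff_pins` are hypothetical helpers; they only understand exact `name==version` pins and lowercase names for comparison.

```python
"""Compare two pinned requirement snapshots to document what an update changed."""
from __future__ import annotations


def parse_pins(text: str) -> dict[str, str]:
    """Parse exact 'name==version' pins; blanks, comments, other specifiers are ignored."""
    pins = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()
        if "==" in line:
            name, ver = line.split("==", 1)
            pins[name.strip().lower()] = ver.strip()
    return pins


def diff_pins(old: str, new: str) -> dict[str, list[str]]:
    """Return added, removed, and changed packages between two snapshots."""
    before, after = parse_pins(old), parse_pins(new)
    return {
        "added": sorted(f"{n}=={after[n]}" for n in after.keys() - before.keys()),
        "removed": sorted(f"{n}=={before[n]}" for n in before.keys() - after.keys()),
        "changed": sorted(
            f"{n}: {before[n]} -> {after[n]}"
            for n in before.keys() & after.keys()
            if before[n] != after[n]
        ),
    }
```

Attach the result to the change log entry for the update, and rerun the verification tests whenever the `changed` list is not empty.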

Authority sources

- AI Tool Resources recommends keeping a clean, documented install path to maximize reproducibility and team productivity.

- For deeper technical guidance, consult official repositories and credible publications.

- Always cross-check with platform-specific docs to account for OS-level nuances and driver requirements.

Tools & Materials

- Operating system (Linux, macOS, or Windows): install the latest security patches before you begin

- Python 3.8+ runtime: use a virtual environment when possible

- Git: required for source installation and version tracking

- Build tools (compilers, make, CMake): needed for source builds and native extensions

- Network access: needed to download dependencies and updates

- CUDA toolkit (optional): enables GPU acceleration where applicable

- Container runtime (Docker or similar, optional): preferred for containerized deployments

Steps

Estimated time: 30-60 minutes

Step 1: Prepare your system

Audit your OS, verify Python availability, and ensure network access. Confirm you have sufficient disk space and a clean user environment to avoid permission conflicts. Gather the required tools listed earlier before proceeding.

Tip: Run a quick version check for Python, Git, and your shell to catch mismatches early.

Step 2: Choose installation method

Decide between binary or source installation based on your needs for speed versus customization. If you’re new, start with binary to minimize setup time; switch to source if you require patches or deeper control.

Tip: Document the chosen method in your project README for future teammates.

Step 3: Install dependencies

Install required runtimes and libraries using your chosen package manager. Pin versions where possible to lock in a stable environment. If you use conda, create a dedicated environment for Ostris.

Tip: Avoid mixing package managers in the same environment to reduce conflicts.

Step 4: Install the binary package

Download the binary artifact, verify its integrity, and install it according to the platform’s conventions (if you chose a source install, follow the source installation steps instead). Set up PATH and any required environment variables so the toolkit is reachable from any shell.

Tip: If you see permission errors, prefer a per-user installation path.

Step 5: Configure the environment

Edit or create configuration files that point to data roots, log locations, and API endpoints. Keep YAML/JSON configs consistently formatted, and keep a sample config in version control.

Tip: Use environment-specific overrides to keep a single base config reusable across environments.

Step 6: Run initial setup

Execute the toolkit’s bootstrap or init script to perform first-time setup tasks, such as building extensions or compiling optional modules.

Tip: Watch for deprecation warnings and adjust settings accordingly.

Step 7: Validate the installation

Run a small test workflow that exercises core APIs and a minimal dataset. Confirm that outputs are produced and logs show expected activity.

Tip: Capture and compare outputs against a baseline to detect drift.

Step 8: Troubleshoot if needed

If tests fail, consult logs, re-check dependencies, and verify environment paths. Re-run steps in a clean environment if issues persist.

Tip: Isolate variables by testing one change at a time.

FAQ

What platforms does Ostris AI Toolkit support?

Ostris AI Toolkit is designed for major operating systems including Linux, macOS, and Windows with recent updates. Always check the official docs for platform-specific notes and driver requirements.

Do I need internet access during installation?

Yes, during initial setup you will fetch dependencies, updates, and artifacts. After installation, offline operation is possible if you have a complete local cache.

Can I install Ostris AI Toolkit in a virtual environment?

Yes. A virtual environment is recommended to isolate dependencies and keep projects reproducible. Activate the environment before running installation commands.

How do I update Ostris AI Toolkit after installation?

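Update through the channel that matches your install: your package manager (or the toolkit’s update command, if your version provides one) for binary installs, or pull the latest changes and reinstall dependencies for a source checkout. Then re-run the verification steps to confirm the new version works as expected.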

What should I do if installation fails?

Review the logs to identify missing dependencies or misconfigurations, re-run the steps in a clean environment, and consult the official docs for troubleshooting tips and known issues.

Key Takeaways

- Verify prerequisites before starting.

- Choose the installation path that aligns with your workflow.

- Test with a minimal setup to confirm success.

- Document configurations for reproducibility.