What Tools Do Colleges Use to Detect AI

Learn which tools colleges use to detect AI in student work, how detectors and policy checks fit together, and best practices for transparent, fair evaluation.

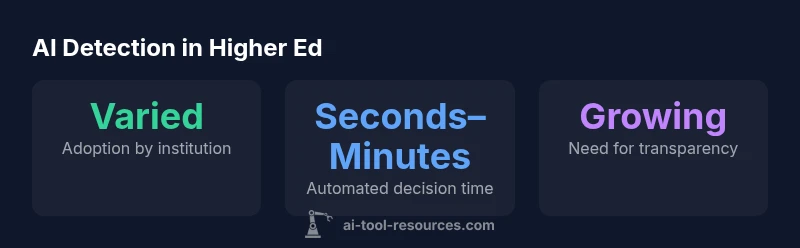

According to AI Tool Resources, colleges detect AI-generated work through a layered approach that blends automated detectors, policy checks, and human review. Tool categories include text-based detectors that flag suspicious phrasing, code detectors for programming assignments, and checks of submissions against institutional policy. Many campuses also emphasize transparency, student rights, and detector calibration to minimize false positives.

How colleges detect AI today

When it comes to which tools colleges use to detect AI, institutions rely on a layered approach that combines automated detectors, policy checks, and human review. Text-based detectors assess the linguistic style, cadence, and telltale artifacts of AI-generated writing. Code detectors examine structure, syntax, and function-level similarity to known AI patterns. Complementary policy checks ensure alignment with course requirements, citation standards, and institutional expectations. Finally, human review interprets flags in light of context (student intent, collaboration, and prior performance) before any final decision is made. Privacy considerations and data minimization are central to these workflows, especially in programs with sensitive or personal content. This multi-component setup helps balance efficiency with fairness, while recognizing that no single tool guarantees perfect accuracy.
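To make "function-level similarity" more concrete, here is a minimal sketch of one common similarity measure: Jaccard overlap of token n-grams between two code snippets. This is an illustrative assumption, not how any particular college's tool works. The function names `tokenize`, `ngrams`, and `jaccard_similarity` are hypothetical, and a real checker would compare against many reference samples rather than one.

```python
import re


def tokenize(source: str) -> list[str]:
    # Split code into identifiers, numbers, and single punctuation characters; whitespace is dropped.
    return re.findall(r"[A-Za-z_]\w*|\d+|\S", source)


def ngrams(tokens: list[str], n: int = 3) -> set[tuple[str, ...]]:
    # All contiguous n-token windows, kept as a set (order and repeat counts are ignored).
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def jaccard_similarity(a: str, b: str, n: int = 3) -> float:
    # |A intersect B| / |A union B| over token n-grams; 0.0 when both snippets are too short to compare.
    grams_a, grams_b = ngrams(tokenize(a), n), ngrams(tokenize(b), n)
    union = grams_a | grams_b
    if not union:
        return 0.0
    return len(grams_a & grams_b) / len(union)


# Hypothetical example: the same function with renamed variables.
submission = "def total(xs):\n    return sum(x for x in xs)"
reference = "def total(values):\n    return sum(v for v in values)"
print(round(jaccard_similarity(submission, submission), 2))  # 1.0
print(round(jaccard_similarity(submission, reference), 2))   # 0.18
```

Simply renaming variables drops the score from 1.0 to 0.18 even though the two functions behave identically, which is one reason similarity checkers commonly normalize identifiers before comparing.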

Beyond the classroom, colleges also consider how detection fits into broader academic integrity frameworks, including appeals processes, transparency commitments, and guidance about permissible collaboration. The discussion often centers on optimizing signal-to-noise ratios and ensuring that students understand what constitutes acceptable work. As higher education expands its use of AI, campuses are increasingly prioritizing documentation about how tools are used, how results are interpreted, and how students can contest outcomes when necessary.

This article answers the question of which tools colleges use to detect AI and explains how institutions align technology with ethics, privacy, and educational goals. It also outlines practical steps for administrators, instructors, and students to navigate AI-related concerns in a constructive, policy-driven way.

Categories of detection tools and methods

Colleges deploy a range of tool types to detect AI involvement in student work. Text detectors analyze writing style, repetitive phrasing, and statistical cues that may indicate AI authorship. Code detectors scrutinize programming assignments for unusual abstraction levels or structure inconsistent with student practice. Policy enforcement tools verify compliance with citation standards and assignment guidelines, while human reviewers bring nuance to ambiguous cases. Many campuses also integrate plagiarism-detection platforms with AI-detection capabilities to create a unified signal stream. Importantly, the effectiveness of these tools depends on the assignment type, language proficiency, and the evolving sophistication of AI models. Institutions often disclose high-level information about their detection stack in policy documents to maintain trust and accountability.
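To illustrate what "statistical cues" can mean, here is a toy sketch of a few stylometric features a text detector might compute: sentence count, average sentence length, the spread of sentence lengths, and lexical variety (type-token ratio). These particular features and the `style_features` function are illustrative assumptions, not the method of any specific product, and none of them can establish authorship on its own.

```python
import re
import statistics


def style_features(text: str) -> dict[str, float]:
    # Naive sentence split on ., !, or ? followed by whitespace or the end of the text.
    sentences = [s for s in re.split(r"[.!?]+(?:\s+|$)", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text.lower())
    lengths = [len(re.findall(r"[A-Za-z']+", s)) for s in sentences]
    return {
        "sentences": float(len(sentences)),
        "mean_sentence_len": float(statistics.mean(lengths)) if lengths else 0.0,
        # Spread of sentence lengths; uniformly sized sentences give a low value.
        "sentence_len_stdev": statistics.pstdev(lengths) if lengths else 0.0,
        # Distinct words divided by total words; heavy repetition lowers this ratio.
        "type_token_ratio": len(set(words)) / len(words) if words else 0.0,
    }


print(style_features("The results were clear. The results were consistent. The results were final."))
# {'sentences': 3.0, 'mean_sentence_len': 4.0, 'sentence_len_stdev': 0.0, 'type_token_ratio': 0.5}
```

Very uniform sentences and low lexical variety are sometimes treated as weak hints of machine-generated text, but plenty of human writing has the same profile, which is one source of the false positives that affect non-native writers.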

From an engineering perspective, detection systems typically operate in a layered pipeline: automated scanning, risk scoring, policy interpretation, and human adjudication. Architects design these systems to minimize unnecessary friction for legitimate authors while preserving the integrity of assessments. For students and instructors, this means learning how the system flags content and what steps follow if a concern is raised.
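The four stages above can be sketched as a single routing function. This is a minimal illustration under stated assumptions: the `Submission` fields, the `route` function, and the 0.8 threshold are hypothetical, and the detector's risk score is assumed to lie between 0 and 1. The key design choice is that automation can only clear work or escalate it to a person; it never issues a penalty.

```python
from dataclasses import dataclass, field


@dataclass
class Submission:
    student_id: str
    risk_score: float          # assumed output of an automated detector, in [0, 1]
    ai_use_disclosed: bool     # student declared AI assistance at intake
    course_allows_ai: bool     # course policy permits declared AI assistance
    notes: list[str] = field(default_factory=list)


REVIEW_THRESHOLD = 0.8  # hypothetical value; in practice it should come from calibration


def route(sub: Submission, threshold: float = REVIEW_THRESHOLD) -> str:
    # Stages 1 and 2: automated scanning has produced a risk score; low scores pass through.
    if sub.risk_score < threshold:
        return "accept"
    # Stage 3: policy interpretation. Disclosed AI use in a course that allows it is not a violation.
    if sub.ai_use_disclosed and sub.course_allows_ai:
        sub.notes.append("flag explained by permitted, disclosed AI use")
        return "accept"
    # Stage 4: anything still unresolved goes to a person; this function never decides a penalty.
    sub.notes.append(f"risk score {sub.risk_score:.2f} >= {threshold}")
    return "human_review"


print(route(Submission("s1", risk_score=0.3, ai_use_disclosed=False, course_allows_ai=False)))  # accept
print(route(Submission("s2", risk_score=0.9, ai_use_disclosed=True, course_allows_ai=True)))    # accept
print(route(Submission("s3", risk_score=0.9, ai_use_disclosed=False, course_allows_ai=True)))   # human_review
```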

In practice, colleges frequently pair detectors with education about academic integrity and clear expectations for originality. The combination of automated signals and contextual evaluation helps ensure that AI-assisted learning remains a legitimate tool rather than a shortcut. This approach also supports responsible experimentation with AI tools inside the boundaries set by the institution, thus fostering innovation without compromising fairness.

Implementation and workflow in higher education

A practical detection workflow begins with submission or intake of work where students declare sources and collaboration. Automated detectors run first, producing a risk score and flagged items. If a flag emerges, a policy check analyzes alignment with course guidelines, citation requirements, and collaboration rules. A human reviewer then examines the flagged content, weighing context such as prior work, prompt wording, and the student’s explanation. If the result remains uncertain, an appeals pathway offers students a chance to present evidence of authorship or rightful use of AI assistance. Institutions often publish timelines for reviews to manage expectations and avoid unnecessary delays.
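The workflow above is essentially a case lifecycle with a fixed set of allowed moves. The following minimal sketch encodes those moves and keeps an audit trail. The stage names and the `Stage`, `TRANSITIONS`, and `Case` identifiers are hypothetical and do not come from any particular campus system.

```python
from enum import Enum


class Stage(Enum):
    INTAKE = "intake"
    AUTOMATED_SCAN = "automated_scan"
    POLICY_CHECK = "policy_check"
    HUMAN_REVIEW = "human_review"
    FINDING = "finding"
    APPEAL = "appeal"
    CLEARED = "cleared"   # terminal: no violation
    UPHELD = "upheld"     # terminal: finding confirmed after appeal


# Allowed moves between stages; any move not listed here is rejected.
TRANSITIONS: dict[Stage, set[Stage]] = {
    Stage.INTAKE: {Stage.AUTOMATED_SCAN},
    Stage.AUTOMATED_SCAN: {Stage.CLEARED, Stage.POLICY_CHECK},
    Stage.POLICY_CHECK: {Stage.CLEARED, Stage.HUMAN_REVIEW},
    Stage.HUMAN_REVIEW: {Stage.CLEARED, Stage.FINDING},
    Stage.FINDING: {Stage.APPEAL},
    Stage.APPEAL: {Stage.CLEARED, Stage.UPHELD},
    Stage.CLEARED: set(),
    Stage.UPHELD: set(),
}


class Case:
    def __init__(self, case_id: str) -> None:
        self.case_id = case_id
        self.stage = Stage.INTAKE
        self.history = [Stage.INTAKE]  # audit trail of every stage the case has entered

    def advance(self, nxt: Stage) -> None:
        if nxt not in TRANSITIONS[self.stage]:
            raise ValueError(f"{self.stage.value} -> {nxt.value} is not allowed")
        self.stage = nxt
        self.history.append(nxt)


case = Case("c1")
for step in (Stage.AUTOMATED_SCAN, Stage.POLICY_CHECK, Stage.HUMAN_REVIEW,
             Stage.FINDING, Stage.APPEAL, Stage.CLEARED):
    case.advance(step)
print(case.stage.value)  # cleared
```

Rejecting undefined moves, such as jumping from intake straight to a finding, guarantees that a human review precedes any finding, and the `history` list provides the kind of outcome tracking administrators rely on for accountability.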

Security and privacy controls guide data handling throughout the process. Access to submitted materials is restricted to authorized personnel, and data retention policies govern how long artifacts are stored. In environments with multiple departments (e.g., writing centers, IT, and academic integrity offices), cross-functional teams collaborate to ensure consistent interpretation of signals and fair outcomes. For administrators, a well-documented workflow supports accountability and continuous improvement by tracking outcomes, reviewer decisions, and policy updates.

From a student perspective, understanding the workflow helps in preparing high-quality work. When in doubt, students should document their writing process, cite sources accurately, and seek guidance from instructors about appropriate use of AI tools. Transparent communication reduces misunderstandings and fosters trust between learners and the institution.

Challenges, ethics, and transparency

Despite colleges' best efforts, AI-detection tools face significant challenges. False positives can affect non-native English writers and students who use AI to brainstorm but not to produce their final work. Evasion tactics and rapid updates to generation models can make detectors less effective over time. Privacy concerns arise when institutions analyze personal drafts or submissions, so transparent data practices and minimal data collection are vital. Ethically, relying solely on automated signals risks bias and inequitable treatment if certain groups are disproportionately flagged. Therefore, many campuses emphasize explaining results, protecting student rights, and providing a clear process for contesting decisions. Finally, the evolving nature of AI requires ongoing calibration, independent validation, and periodic policy reviews to balance innovation with integrity.
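Calibration can be made concrete with a validation set of work known to be human-written. The sketch below assumes such a labeled set exists and that higher detector scores mean "more likely AI"; both are assumptions, and the function names are hypothetical. It picks the lowest flagging threshold whose false positive rate on that set stays at or below a cap chosen by policy.

```python
def false_positive_rate(human_scores: list[float], threshold: float) -> float:
    # Share of known human-written samples that would be flagged at this threshold.
    if not human_scores:
        raise ValueError("need at least one human-written validation score")
    return sum(s >= threshold for s in human_scores) / len(human_scores)


def calibrate_threshold(human_scores: list[float], max_fpr: float) -> float:
    # The false positive rate can only fall as the threshold rises, so scanning candidate
    # cut-offs from lowest to highest returns the lowest threshold that meets the cap.
    # The final candidate sits just above every observed score, so it always flags none.
    candidates = sorted(set(human_scores)) + [max(human_scores) + 1e-9]
    for t in candidates:
        if false_positive_rate(human_scores, t) <= max_fpr:
            return t
    return candidates[-1]


# Hypothetical detector scores for ten essays known to be human-written.
scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 0.95]
t = calibrate_threshold(scores, max_fpr=0.1)
print(t, false_positive_rate(scores, t))  # 0.95 0.1
```

The cap is enforced only on the validation data, so that set should include the groups most at risk of false positives, such as non-native writers, and it should be refreshed as generation models change.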

Transparency is central to this balance. Institutions increasingly publish high-level policies about what constitutes AI-generated work, how detectors are used, and how students can respond to findings. Some colleges provide examples of allowed AI assistance, detail the criteria for evaluation, and explain how flags are resolved in class-specific contexts. By coupling automated tools with human judgment and open communication, colleges strive to build trust and maintain academic standards while accommodating legitimate AI-assisted learning.

Ethical stewardship also means aligning with broader regulatory and accreditation expectations. Universities are urged to document their methodologies, disclose the limitations of detectors, and give students opportunities to appeal outcomes. This approach helps ensure that AI-detection practices support learning, protect privacy, and uphold fairness across diverse student populations.

Best practices for institutions and students

For institutions, the best practice is a layered, transparent approach that combines technology with policy and people. Start with a clear policy that defines acceptable AI use and citation requirements, followed by a public-facing description of the detection workflow. Invest in detector validation, regularly review outcomes for bias, and maintain an accessible appeal pathway. Train reviewers to interpret results contextually, not as absolute judgments, and ensure timelines are realistic to minimize disruption to learning. Finally, communicate with students about what is being measured and why, reinforcing a culture of integrity and opportunity for growth.
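Reviewing outcomes for bias can start with something as simple as comparing false positive rates across student groups. The sketch below assumes the institution has privacy-appropriate group labels and reliable knowledge of which work is human-written. The group names, record layout, and function names are hypothetical.

```python
from collections import defaultdict


def fpr_by_group(records: list[tuple[str, float, bool]], threshold: float) -> dict[str, float]:
    # records: (group label, detector score, is_human_written). Only human-written work
    # can produce a false positive, so AI-written records are skipped.
    flagged: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for group, score, is_human in records:
        if not is_human:
            continue
        total[group] += 1
        if score >= threshold:
            flagged[group] += 1
    return {g: flagged[g] / total[g] for g in total}


def max_disparity(rates: dict[str, float]) -> float:
    # Gap between the most- and least-flagged groups; 0.0 means equal false positive rates.
    return max(rates.values()) - min(rates.values()) if rates else 0.0


sample = [
    ("native", 0.2, True), ("native", 0.9, True), ("native", 0.1, True), ("native", 0.3, True),
    ("non_native", 0.85, True), ("non_native", 0.9, True), ("non_native", 0.2, True), ("non_native", 0.4, True),
    ("native", 0.99, False),  # AI-written, excluded from false positive counts
]
rates = fpr_by_group(sample, threshold=0.8)
print(rates)                 # {'native': 0.25, 'non_native': 0.5}
print(max_disparity(rates))  # 0.25
```

In this made-up sample, non-native writers are flagged twice as often as native writers at the same threshold. A gap like that should prompt recalibration or added human scrutiny rather than penalties.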

For students, the key is to focus on originality and proper attribution. Keep drafts that show your development process, annotate AI-assisted edits, and cite any AI-tool usage explicitly when allowed. Seek guidance early from instructors or writing centers if you plan to experiment with AI as a learning aid. If flagged, use the official appeal mechanism, provide evidence of authorship, and request a transparent explanation of the determination. By approaching AI with honesty and curiosity, students can benefit from innovative tools while preserving academic standards.

Overview of tool types and their trade-offs in college AI-detection workflows

| Tool Type | What It Detects | Typical Limitations |
|---|---|---|
| Text-based detectors | Patterned language, stylometry, AI-writing artifacts | High false-positive rates for non-native writers; evolving AI models can evade detection |
| Code detectors | Program structure and similarity to known AI-generated patterns | Can miss AI-generated code that looks original or unique (false negatives); privacy concerns |
| Policy & process checks | Adherence to citation standards and assignment rules | Subjectivity; requires human judgment and clear appeal rights |
| Human review | Contextual evaluation and final decision | Resource-intensive; potential reviewer bias; time delays |

FAQ

What tools do colleges use to detect AI?

Colleges use a mix of text detectors, code detectors, policy checks, and human review. They pair automated signals with contextual evaluation and student rights considerations.

Are AI-detection tools reliable?

No detector is perfect. Detection accuracy varies by writing style, assignment type, and model generation methods. Institutions typically use multiple signals and an appeal process to mitigate errors.

Do colleges inform students about detection methods?

Many colleges publish general policies and penalties, while details of specific detectors are often shared in syllabi or privacy documents. Institutions are increasing transparency to maintain trust.

What should students do to avoid false positives?

Keep original work, cite sources, and discuss your collaboration and writing process. If flagged, request an appeal and provide evidence of authorship.

How should colleges handle flagged work in exams vs assignments?

Exams typically rely on proctoring and policy checks, while assignments call for closer attention to context and to possible disputes over collaboration. Both require fair, transparent review.

“AI detection in higher education works best when it complements policy transparency and human judgment, not as a standalone gatekeeper.”

Key Takeaways

- Understand layered detection: automation, policy checks, and human review.

- Expect detection practices to vary across institutions.

- Prioritize transparent policies and student rights.

- Calibrate thresholds to reduce false positives.

- Offer clear appeals when a flag is raised.