Text to Art AI: How Words Create Images

Learn how AI that makes art from words converts textual prompts into visuals, with practical prompts, underlying tech, use cases, and ethics for developers, researchers, and students.

AI that makes art from words is a type of AI image generator that converts textual descriptions into visual imagery. It typically uses diffusion or transformer models to render images from natural language prompts. In practice, a concise prompt guides the model to produce a visual interpretation.

What AI that makes art from words is

Tools in this category take a written description and return one or more images. Users write a sentence or two describing a scene, mood, or style, and the model renders visuals that match the prompt.

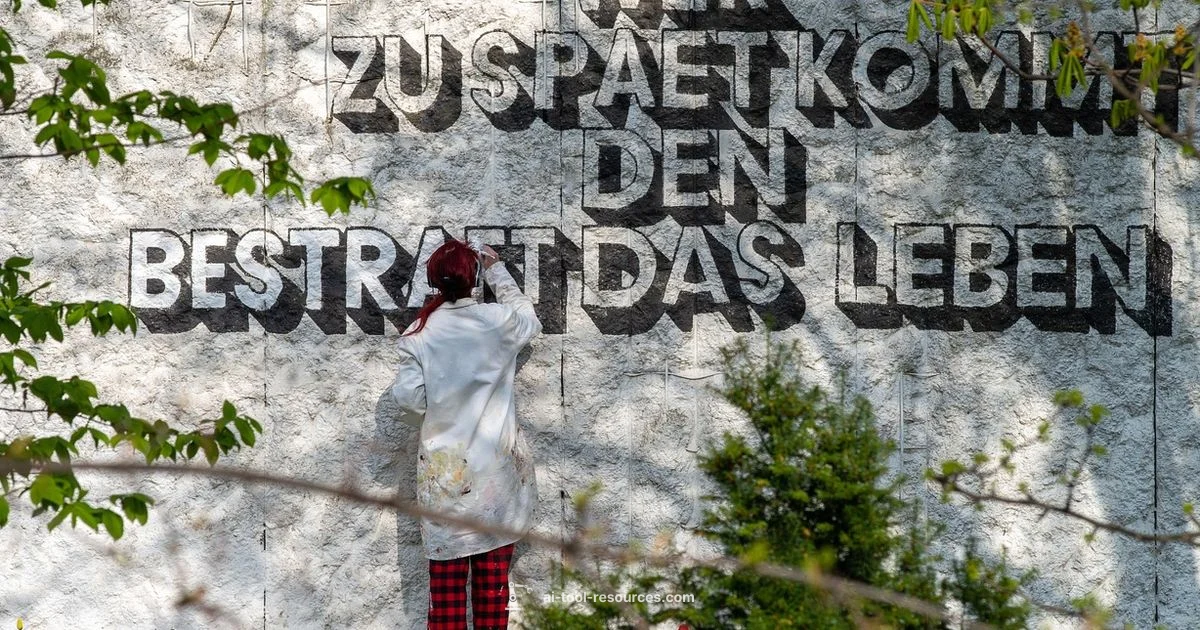

According to AI Tool Resources, this technology has moved from niche experiments to widely used tools across design, education, and research. You can generate concept art for a storyboard, mock up product visuals, or illustrate a lecture slide without hiring an illustrator. Prompts can be as simple as "a sunset over a calm sea" or richly detailed, with lighting, camera angles, and textures spelled out. Because the output depends on both the prompt and the random starting point, a single idea can yield dozens of distinct interpretations.

Prompt engineering, the craft of writing prompts that guide the model toward your intended result, is critical. Small changes in adjectives, lighting, or composition can dramatically alter mood and style. Many creators begin with a baseline prompt and refine it iteratively, selecting outputs to mix and remix. The result is a fast, iterative workflow that complements traditional art creation rather than replacing it.

For learners and practitioners, a disciplined approach to prompting, versioning, and evaluation helps keep outputs consistent and useful. With careful prompts and ethical considerations, text to image tools become powerful co-creators rather than mere generators.

How it works in practice

At a high level, text to image AI takes a text prompt and converts it into an image through a sequence of learned transformations. First, the system encodes the words into a numerical representation (an embedding) that the model can process. Then a diffusion or autoregressive process gradually builds an image, guided by the text embedding and by learned associations between language and visuals. A diffusion sampler starts from random noise and runs many refinement steps, each conditioned on the embedding, so alignment with the prompt improves step by step; an autoregressive model instead emits the image piece by piece.
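
The sequence above (encode the prompt, start from noise, refine in steps) can be sketched as a toy. Everything here is an assumption made for illustration: `encode_prompt` is a hash-based stand-in for a trained text encoder, `predict_clean` is a stand-in for a trained denoiser, and the "image" is just a short list of numbers. It shows the control flow only, not how any real model computes its output.

```python
import hashlib
import random


def encode_prompt(prompt: str, dim: int = 8) -> list[float]:
    """Toy text encoder: hash the prompt into `dim` floats in [0, 1].

    Real systems use a trained neural encoder; this stand-in only
    guarantees that the same prompt always maps to the same vector.
    """
    digest = hashlib.sha256(prompt.encode("utf-8")).digest()
    return [digest[i] / 255.0 for i in range(dim)]


def predict_clean(noisy: list[float], embedding: list[float]) -> list[float]:
    """Stand-in for a trained denoiser.

    A real model looks at the noisy image and the embedding and predicts
    a cleaner image. This toy ignores `noisy` and returns the embedding.
    """
    return list(embedding)


def generate(prompt: str, steps: int = 20, seed: int = 0) -> list[float]:
    """Refine random noise toward the prompt-conditioned prediction."""
    rng = random.Random(seed)
    embedding = encode_prompt(prompt)
    # Start from pure Gaussian noise, as diffusion samplers do.
    image = [rng.gauss(0.0, 1.0) for _ in embedding]
    for step in range(steps):
        target = predict_clean(image, embedding)
        # Take a growing fraction of the remaining distance each step,
        # so the final step lands on the prediction.
        blend = 1.0 / (steps - step)
        image = [x + blend * (t - x) for x, t in zip(image, target)]
    return image


toy_image = generate("a sunset over a calm sea", steps=20, seed=0)
```

Because the noise comes from a seeded generator, calling `generate` twice with the same prompt and seed returns the same list, which mirrors why fixing a seed makes real outputs reproducible.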

In some systems, a separate vision-language model compares the generated image to the prompt and produces a score that favors images showing the features the user described. Some workflows include an upscaling step to improve resolution and a post-processing pass to adjust color balance or sharpness. Designers often run multiple prompts in parallel to produce a small gallery from which they select the strongest compositions. While results can be astonishing, they still depend on factors like prompt clarity and model capabilities, and their use is governed by the licensing terms for the generated assets. For researchers, these tools provide a sandbox to test hypotheses about vision-language alignment and multimodal learning.
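
The scoring idea can be illustrated with cosine similarity between a prompt embedding and candidate image embeddings. This is a minimal sketch under stated assumptions: the vectors are hand-written and hypothetical (a real vision-language model would produce them), and `rank_candidates` is an illustrative helper, not part of any library.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # avoid dividing by zero for empty or all-zero vectors
    return dot / (norm_a * norm_b)


def rank_candidates(prompt_embedding, image_embeddings):
    """Return (name, score) pairs sorted from best to worst match."""
    scored = [
        (name, cosine_similarity(prompt_embedding, embedding))
        for name, embedding in image_embeddings.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical embeddings, written by hand for the example.
prompt_embedding = [0.9, 0.1, 0.3]
candidates = {
    "render_a": [0.8, 0.2, 0.4],
    "render_b": [0.1, 0.9, 0.2],
    "render_c": [0.5, 0.5, 0.5],
}

ranking = rank_candidates(prompt_embedding, candidates)
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

Here `render_a` ranks first because its vector points in nearly the same direction as the prompt vector. The same ranking step is one way to pick the strongest images from a gallery before a human makes the final choice.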

Core technologies that empower text to image AI

Text to art AI relies on several cooperating components to bridge language and visuals. The first is the diffusion model, which progressively refines noise into a coherent image guided by a textual prompt. The diffusion process learns to denoise images in small steps, gradually revealing structure, color, and texture that match the input description. The second component is a text encoder that converts natural language into numerical representations the model can use. The third is a guidance mechanism, often built on a vision-language model such as CLIP, which steers outputs toward the semantic features described in the prompt. Finally, many systems work in a compressed latent space and rely on a decoder or image prior, such as a variational autoencoder, to map the latent representation to realistic or stylized pixels, depending on the desired outcome.
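
For readers who want the "denoise in small steps" idea in symbols, one widely used formulation is the denoising diffusion probabilistic model (DDPM). The article does not say which formulation any particular tool uses, so the notation below is an illustrative assumption: $\beta_t$ is the noise schedule, $c$ is the text embedding, and $\epsilon_\theta$ is the learned denoising network.

```latex
% Forward process: add a small amount of Gaussian noise at each step t
q(x_t \mid x_{t-1}) = \mathcal{N}\!\left(x_t;\ \sqrt{1-\beta_t}\,x_{t-1},\ \beta_t I\right)

% Closed form for any step, with \bar{\alpha}_t = \prod_{s=1}^{t} (1-\beta_s)
x_t = \sqrt{\bar{\alpha}_t}\,x_0 + \sqrt{1-\bar{\alpha}_t}\,\epsilon,
\qquad \epsilon \sim \mathcal{N}(0, I)

% Training objective: predict the added noise, conditioned on the text embedding c
\mathcal{L} = \mathbb{E}_{x_0,\,\epsilon,\,t}
  \left[\,\lVert \epsilon - \epsilon_\theta(x_t, t, c) \rVert^2\,\right]
```

Generation runs the process in reverse: starting from pure noise, the network's noise estimate is removed a little at a time until an image remains.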

Popular families include diffusion-based architectures and transformer-guided systems. Many implementations combine a diffusion sampler with a learned image prior and a powerful text encoder, enabling a wide range of styles from photorealism to painterly textures. While specific flavors vary, the core idea remains the same: align language and visuals through joint training or synchronized guidance, enabling artists and engineers to experiment with prompts and outputs rapidly.

Use cases across fields

The versatility of AI that makes art from words spans several domains:

- Education: create engaging diagrams, storytelling visuals, and lecture slides that illustrate complex concepts without traditional drawing.

- Design and marketing: generate concept art, mood boards, and product visuals to accelerate ideation and align teams around a shared visual language.

- Gaming and animation: prototype character designs, environment art, and concept frames to iterate on worldbuilding quickly.

- Research visualization: illustrate abstract ideas, datasets, or experimental results with visually accessible representations.

- Personal art and exploration: experiment with styles or themes, producing pieces for portfolios, prints, or prompts that seed human-made art.

Across these domains, the balance between speed, control, and licensing determines how these tools fit into workflows. When used responsibly, they complement traditional methods and expand creative possibilities.

Quality, style, and control: prompts and constraints

Control in text to image generation comes from how you craft prompts and manage the generation process. Start with a clear subject, setting, and mood. Add style descriptors such as watercolor, photorealistic, or digital painting to guide texture and technique. Negative prompts can steer away from unwanted elements, while specifying lighting, camera angle, and composition helps contextualize the scene.

Practical prompt techniques include:

- Layered prompts: build a base scene, then add style modifiers in separate phrases.

- Style prompts: specify artists, eras, or genres to anchor the look while retaining subject fidelity.

- Seed and randomness: fix the seed to reproduce outputs or vary seeds for exploration.

- Resolution and aspect ratio: define desired dimensions and framing to match downstream uses.

Iterate by generating multiple variants, comparing outcomes, and compiling a brief dataset of preferred renders. Documentation of prompts and seeds makes it easier to reproduce results and refine strategies over time.
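
The techniques in the list above can be captured in a small request object. This is a sketch under stated assumptions, not any tool's API: `GenerationRequest`, `exploration_seeds`, and all field names are hypothetical, and rounding dimensions down to a multiple of 8 is an illustrative default (some models require dimensions divisible by a fixed number, so check your tool's documentation).

```python
import random
from dataclasses import dataclass, field


@dataclass
class GenerationRequest:
    """Hypothetical container for one generation attempt."""

    base_scene: str
    style_modifiers: list = field(default_factory=list)
    negative_terms: list = field(default_factory=list)
    seed: int = 0
    aspect_ratio: tuple = (1, 1)  # width : height
    long_side: int = 1024  # pixels on the longer edge

    def prompt(self) -> str:
        # Layered prompt: base scene first, style modifiers as separate phrases.
        return ", ".join([self.base_scene, *self.style_modifiers])

    def negative_prompt(self) -> str:
        return ", ".join(self.negative_terms)

    def dimensions(self, multiple: int = 8) -> tuple:
        # Scale the ratio so the longer edge equals long_side, then
        # round each edge down to the nearest allowed multiple.
        w_ratio, h_ratio = self.aspect_ratio
        scale = self.long_side / max(w_ratio, h_ratio)
        width = round(w_ratio * scale) // multiple * multiple
        height = round(h_ratio * scale) // multiple * multiple
        return width, height


def exploration_seeds(count: int, master_seed: int = 0) -> list:
    """Derive a repeatable list of seeds for exploring variations."""
    rng = random.Random(master_seed)
    return [rng.randrange(2**32) for _ in range(count)]


request = GenerationRequest(
    base_scene="a lighthouse at dusk",
    style_modifiers=["watercolor", "soft backlighting"],
    negative_terms=["text", "watermark"],
    seed=42,
    aspect_ratio=(16, 9),
)
print(request.prompt())
print(request.negative_prompt())
print(request.dimensions())
```

Keeping the base scene separate from the modifiers makes it easy to change one layer at a time, and deriving exploration seeds from a single master seed means a whole batch of variations can be regenerated later.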

Risks, ethics, and copyright

Text to image AI brings ethical considerations that practitioners should respect from day one. Licensing terms for generated visuals vary by platform and model, so verify whether outputs can be used commercially or require attribution. Copyright remains a nuanced and unsettled area; prompts that imitate living artists or closely reproduce protected works can raise legal concerns. When in doubt, favor original prompts and avoid direct emulation of specific artists without permission.

Bias in training data can influence outputs, producing stereotypes or inaccurate depictions. Ethical use means reviewing outputs for sensitive content, avoiding harmful representations, and maintaining transparency about the role of AI in the creative process. For researchers and educators, documenting prompts, settings, and licensing helps build responsible workflows and sets expectations for collaborators and audiences.

Getting started: a practical plan for learners

To begin, define your goal. Are you prototyping a concept, creating educational visuals, or exploring artistic styles? Choose a platform with clear licensing terms and a straightforward prompt interface. Start with simple prompts and a few style keywords, then review outputs and refine your prompts based on what you observe.

A practical starter workflow:

- Draft a one-sentence prompt describing the scene.

- Generate a small gallery of 6–9 variations.

- Pick 2–3 favorites and refine with style and lighting tweaks.

- Upscale the chosen images for presentation or publication.

- Document prompts, seeds, and results in a prompt ledger for reproducibility.
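
The prompt ledger in the last step can be as simple as a CSV file. The sketch below is one possible layout, with every column name and helper (`LEDGER_FIELDS`, `append_entry`, `find_by_seed`) chosen for illustration; it writes to an in-memory buffer so it runs anywhere, and a file opened in `"a+"` mode with `newline=""` could be used in its place.

```python
import csv
import io

# Hypothetical column layout; adapt it to whatever your tool exposes.
LEDGER_FIELDS = [
    "date", "prompt", "negative_prompt", "seed", "model", "output_file", "notes",
]


def append_entry(ledger_file, **entry):
    """Append one row, writing the header first if the ledger is empty."""
    ledger_file.seek(0, io.SEEK_END)
    writer = csv.DictWriter(ledger_file, fieldnames=LEDGER_FIELDS)
    if ledger_file.tell() == 0:
        writer.writeheader()
    # Missing fields are stored as empty strings.
    writer.writerow({name: entry.get(name, "") for name in LEDGER_FIELDS})


def find_by_seed(ledger_file, seed):
    """Return every row recorded with the given seed."""
    ledger_file.seek(0)
    return [row for row in csv.DictReader(ledger_file) if row["seed"] == str(seed)]


ledger = io.StringIO()
append_entry(
    ledger,
    date="2024-01-15",  # placeholder date for the example
    prompt="a lighthouse at dusk, watercolor",
    negative_prompt="text, watermark",
    seed=42,
    model="example-model",  # placeholder, not a real model name
    output_file="lighthouse_042.png",
    notes="good composition, sky too dark",
)
append_entry(ledger, date="2024-01-15", prompt="a lighthouse at dawn", seed=7)

matches = find_by_seed(ledger, 42)
print(matches[0]["prompt"])
```

Recording the seed next to the exact prompt text is what makes a render reproducible; the notes column is where the reflection step ("what changed, and did it help?") gets written down.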

For learners, pair practice with reflection: note what changes in the prompt move the output toward your intention. This builds a reusable skill set that accelerates future projects.

Future directions and keeping learning going

As techniques mature, text to image AI will likely offer tighter integration with creative software, better control over composition, and more robust safety and licensing controls. Researchers continue to explore multimodal alignment, conditional generation, and real-time feedback loops that enable interactive artwork. For students and professionals, ongoing learning means following communities, experimenting with new prompts, and validating outputs against defined goals.

To stay current, engage with open resources, tutorials, and community prompts that illustrate best practices. The AI Tool Resources Team encourages learners to experiment with prompts, track outcomes, and share findings responsibly to advance the field while honoring creators’ rights and permissions.

FAQ

What is AI that makes art from words?

AI that makes art from words is a text-to-image system that converts written prompts into visual artwork. It draws on patterns learned from large datasets to render images that align with user descriptions.

AI that makes art from words turns written prompts into images using learned patterns. It helps you create visuals quickly by describing what you want.

How does text to image AI work in practice?

In practice, a text prompt is encoded into a mathematical representation, guiding a generative model to produce an image. Diffusion or autoregressive processes iteratively refine the image to match the prompt, with optional upscaling and post-processing for quality and style.

You type a prompt, the model builds an image step by step, and you can refine it with prompts or style tweaks.

Can I use generated images commercially?

Commercial use depends on the platform’s licensing terms and the model’s training data. Some tools allow broad commercial use, while others require attribution or have restrictions. Always check the license before using outputs for products or publications.

Check the tool’s license terms before using outputs commercially to avoid copyright issues.

Are these tools free or do they cost money?

Many text to image tools offer free tiers with limited credits or features, plus paid plans for higher resolution, more prompts, or commercial use. Costs vary by provider and usage level, so compare features and licenses before committing.

Most offer free options for learning, with paid plans for higher limits and commercial use.

What makes prompts effective for art generation?

Effective prompts are clear, specific, and well structured. Include subject, setting, lighting, mood, and intended style. Iteration is key: start broad, then narrow with adjectives, artists, or techniques to steer the result.

Be specific and iterative. Start broad, then refine details and style until you get the look you want.

What are common limitations or risks?

Limitations include inconsistent outputs, bias from training data, and licensing concerns. Outputs may unintentionally resemble existing protected works or a recognizable artist's style, and quality can vary across prompts and platforms. Use prompts responsibly and evaluate results for fairness and accuracy.

Outputs can be inconsistent and may raise licensing or bias concerns, so review results carefully.

Key Takeaways

- Learn how prompts drive image generation

- Experiment with multiple models for styles

- Be mindful of licensing and copyright

- Use seeds and aspect ratios to control results

- Document prompts for reproducibility