03 ai tool: Definition, uses, and evaluation guide

Explore the 03 ai tool, a versatile AI software category that blends data preparation, model development, and deployment. Learn what it is, key features, use cases, evaluation criteria, and best practices for adoption.

03 ai tool is a type of AI software that combines data preprocessing, model development, and deployment workflows to help developers and researchers build, test, and scale AI solutions.

What is 03 ai tool and why it matters

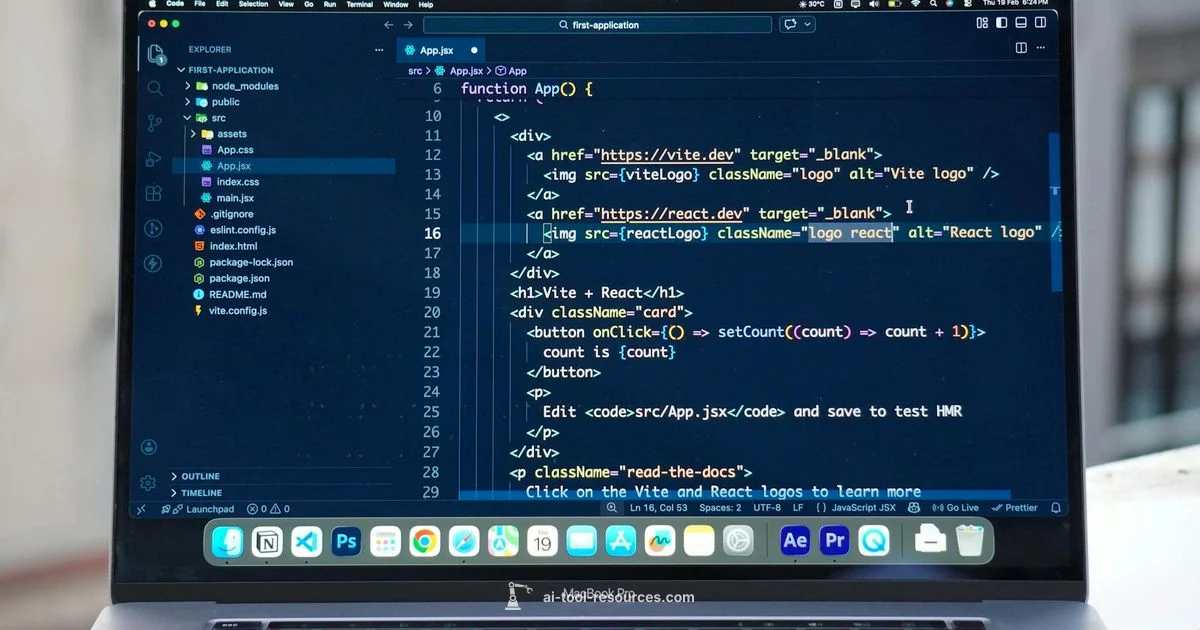

03 ai tool represents a category of AI software built to streamline end-to-end AI workflows. By combining data ingestion, feature engineering, model training, evaluation, and deployment in one platform, it helps teams iterate quickly, collaborate effectively, and move from prototype to production with fewer handoffs. According to AI Tool Resources, the tool's core value lies in reducing integration friction and providing a consistent development environment across projects. This consistency is especially valuable for students, researchers, and developers who juggle multiple experiments across datasets and models.

In practice, a 03 ai tool typically provides a unified interface for data cleaning, model prototyping, and deployment automation. It often includes experiment tracking, reproducible pipelines, and modular components that can plug into existing toolchains. The aim is to lower the entry barrier for new AI projects while preserving the flexibility needed for advanced work. By consolidating common tasks into a single workspace, teams can focus more on experimentation and less on plumbing.

Core capabilities you can expect from 03 ai tool

A mature 03 ai tool offers modular components that cover the full lifecycle of an AI project. Core capabilities commonly include data ingestion and cleaning, feature engineering, algorithm selection, experiment tracking, model evaluation, and deployment hooks. Based on AI Tool Resources research, these capabilities enable teams to test hypotheses rapidly, compare models fairly, and deploy with auditable pipelines. Interoperability with popular frameworks and cloud services is a frequent design goal to avoid vendor lock-in. Emphasis is placed on reproducibility, traceability, and clear governance of experiments, datasets, and access controls.

Additionally, most 03 ai tool platforms provide built-in templates, notebooks, and visual editors to accelerate onboarding for newcomers while offering advanced configuration options for experienced practitioners. This balance helps teams scale from small pilots to larger programs without changing tools midstream.
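To make the idea of experiment tracking and fair model comparison more concrete, here is a minimal sketch in plain Python. It is not the API of any particular 03 ai tool; the `Run` and `Tracker` names, the parameters, and the metric values are all hypothetical and chosen only for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class Run:
    """One tracked experiment: what was run, on which data, with what result."""
    name: str
    params: dict
    dataset_version: str
    metrics: dict = field(default_factory=dict)

    def fingerprint(self) -> str:
        # Hash the inputs (not the results) so identical configurations
        # map to the same ID, regardless of key order or run name.
        payload = json.dumps(
            {"params": self.params, "dataset": self.dataset_version},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


class Tracker:
    """In-memory run log; a real platform would persist this somewhere."""

    def __init__(self):
        self.runs = []

    def log(self, run: Run) -> str:
        self.runs.append(run)
        return run.fingerprint()

    def best(self, metric: str) -> Run:
        # Assumes the metric is "higher is better" (for example, accuracy).
        return max(self.runs, key=lambda r: r.metrics[metric])


# Hypothetical runs with made-up metric values.
tracker = Tracker()
tracker.log(Run("baseline", {"lr": 0.1}, "v1", {"accuracy": 0.81}))
tracker.log(Run("tuned", {"lr": 0.01}, "v1", {"accuracy": 0.86}))
print(tracker.best("accuracy").name)
```

Fingerprinting the inputs rather than the outputs is one simple way to tell whether two runs are genuinely comparable: the same parameters and dataset version always yield the same ID.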

How 03 ai tool fits into AI tool ecosystems

The 03 ai tool sits at a crossroads in the AI tool ecosystem. It typically acts as an orchestrator between data platforms, machine learning libraries, compute resources, and deployment targets. It complements notebook environments, data catalogs, MLOps platforms, and model registries by offering a centralized workflow that ties together data, code, experiments, and outcomes. For researchers and developers, this integration reduces the need to switch between disparate systems and promotes a single source of truth for project state, metrics, and governance.

Crucially, the tool supports collaboration features such as shared experiments, role-based access, and audit trails, which are essential for team-based research and education settings. As a result, it becomes a backbone for both individual learners and larger research teams pursuing reproducible AI.

Use cases across domains

Education and training benefit from ready-made templates that walk students through end-to-end projects, enabling hands-on practice with minimal setup. Research teams use 03 ai tool to formalize experiments, track hypotheses, and compare results across datasets without duplicating workflows. In software development, engineers use it to prototype models, validate deployment pipelines, and monitor performance in production. Business units adopt the tool to run rapid pilots, convert findings into reproducible artifacts, and share results with stakeholders. Across these domains, the common thread is faster iteration, clearer provenance, and stronger collaboration between data scientists and engineers.

How to evaluate 03 ai tool

When evaluating a 03 ai tool, consider interoperability with your current stack, security and governance features, scalability, and the quality of documentation and community support. Look for transparent pricing models, clear licensing terms, and options for both cloud-based and on-premises deployments. A strong 03 ai tool should provide reproducible pipelines, robust experiment tracking, and reliable deployment hooks. Consider running a guided trial with a small dataset to observe onboarding time, the learning curve, and the responsiveness of support resources. Based on practitioner feedback, prioritize tools that offer clear versioning, lineage, and rollback capabilities to preserve reproducibility across experiments.
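One way to keep such an evaluation consistent across candidates is a simple weighted scoring matrix. The sketch below is only an illustration: the criteria names mirror the ones discussed above, but the weights, the 1 to 5 rating scale, and the two candidate tools (`tool_a`, `tool_b`) are assumptions you would replace with your own.

```python
# Hypothetical criteria weights (they sum to 1.0); adjust to your priorities.
WEIGHTS = {
    "interoperability": 0.30,
    "security_governance": 0.25,
    "scalability": 0.20,
    "documentation_support": 0.15,
    "pricing_licensing": 0.10,
}


def weighted_score(ratings: dict) -> float:
    """Combine per-criterion ratings (assumed 1-5) into one weighted score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return round(sum(WEIGHTS[c] * ratings[c] for c in WEIGHTS), 2)


# Made-up ratings gathered during a guided trial.
candidates = {
    "tool_a": {
        "interoperability": 4,
        "security_governance": 3,
        "scalability": 5,
        "documentation_support": 4,
        "pricing_licensing": 2,
    },
    "tool_b": {
        "interoperability": 3,
        "security_governance": 5,
        "scalability": 4,
        "documentation_support": 4,
        "pricing_licensing": 4,
    },
}

scores = {name: weighted_score(r) for name, r in candidates.items()}
best = max(scores, key=scores.get)
print(scores, best)
```

A score like this does not replace judgment, but writing the weights down forces the team to agree on priorities before the trial starts.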

Common pitfalls and best practices

- Avoid vendor lock-in by favoring open standards and interoperable connectors.

- Start with a small, well-defined project to build confidence before scaling.

- Document goals, datasets, and evaluation metrics for each experiment.

- Enforce access control and data governance from day one.

- Regularly review experiment results to distinguish signal from noise and prevent misinterpretation.

Best practices include setting up a minimum viable pipeline, using a shared repository for configurations, and maintaining a living glossary of terms to keep team members aligned as projects grow.
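As a rough illustration of separating signal from noise when reviewing results, the sketch below compares two models across several random seeds. The scores are invented, and the rule used (the gap between means must exceed `k` times the larger standard deviation) is a simple heuristic assumed here for illustration, not a formal statistical test.

```python
from statistics import mean, stdev

# Hypothetical accuracy per random seed for two models.
baseline_scores = [0.812, 0.805, 0.819, 0.808, 0.815]
candidate_scores = [0.821, 0.809, 0.826, 0.814, 0.818]


def summarize(scores):
    """Return (mean, sample standard deviation) for a list of scores."""
    return mean(scores), stdev(scores)


def looks_like_signal(a, b, k=2.0):
    """Heuristic: the gap counts only if it exceeds k times the larger std dev."""
    mean_a, sd_a = summarize(a)
    mean_b, sd_b = summarize(b)
    return abs(mean_b - mean_a) > k * max(sd_a, sd_b)


print(summarize(baseline_scores))
print(summarize(candidate_scores))
print(looks_like_signal(baseline_scores, candidate_scores))
```

Repeating each experiment over a handful of seeds costs extra compute, but it makes it much harder to mistake seed-to-seed variation for a real improvement.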

Getting started: a practical guide

Begin by defining the problem you want to solve and the data you will use. Install or provision your 03 ai tool and connect it to your data sources. Create a small pilot project that includes data preprocessing, a couple of baseline models, and a simple evaluation metric. Run experiments, compare results, and document the outcomes. Scale up gradually by adding more data, features, and model variations while maintaining reproducibility through version control and clear experiment records. Finally, establish a governance process to review ongoing work and ensure compliance with privacy and security standards.
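The pilot described above can be sketched without any specific platform. The example below uses only the Python standard library: the toy dataset, the two baseline models, and the accuracy metric are illustrative assumptions, standing in for whatever data and models your project actually uses.

```python
import random
from collections import Counter

# Toy rows of (feature, label); None marks a missing value. Illustrative only.
RAW = [(0.2, 0), (0.4, 0), (None, 1), (0.5, 0), (0.9, 1),
       (1.1, 1), (0.3, 0), (1.4, 1), (None, 0), (1.2, 1),
       (0.1, 0), (1.0, 1)]


def preprocess(rows):
    """Data preprocessing step: drop rows with a missing feature."""
    return [(x, y) for x, y in rows if x is not None]


def split(rows, test_fraction=0.3, seed=42):
    """Shuffle with a fixed seed so the train/test split is reproducible."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    cut = len(shuffled) - int(len(shuffled) * test_fraction)
    return shuffled[:cut], shuffled[cut:]


def majority_baseline(train):
    """Baseline 1: always predict the most common training label."""
    label = Counter(y for _, y in train).most_common(1)[0][0]
    return lambda x: label


def threshold_baseline(train):
    """Baseline 2: predict 1 when the feature exceeds the training mean."""
    threshold = sum(x for x, _ in train) / len(train)
    return lambda x: int(x > threshold)


def accuracy(model, rows):
    """Simple evaluation metric: fraction of correct predictions."""
    return sum(model(x) == y for x, y in rows) / len(rows)


train, test = split(preprocess(RAW))
results = {
    "majority": accuracy(majority_baseline(train), test),
    "threshold": accuracy(threshold_baseline(train), test),
}
print(results)
```

Fixing the seed and keeping each step as a small function makes it easy to rerun the pilot later with more data, features, or model variations and still compare against the same baselines.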

Security, governance, and ethics considerations

Security and governance are foundational when adopting any AI tool. Ensure role-based access controls, data masking for sensitive information, and robust logging for audit purposes. Establish governance policies around data provenance, model versioning, and deployment approvals. From an ethical perspective, consider bias mitigation, transparency in how models are used, and clear user consent where applicable. The overarching aim is to enable responsible AI development without compromising privacy or compliance. AI Tool Resources analysis emphasizes that robust governance leads to more trustworthy AI outcomes and smoother cross-team collaboration.
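A minimal sketch of how role-based access, data masking, and audit logging can fit together is shown below. The roles, permissions, and helper names (`is_allowed`, `mask_email`, `attempt`) are hypothetical and not taken from any specific platform; real deployments would rely on the platform's own identity and logging features.

```python
import hashlib

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "viewer": {"read_metrics"},
    "researcher": {"read_metrics", "run_experiment"},
    "admin": {"read_metrics", "run_experiment", "approve_deployment"},
}

audit_log = []


def is_allowed(role: str, action: str) -> bool:
    """Unknown roles get no permissions (deny by default)."""
    return action in ROLE_PERMISSIONS.get(role, set())


def mask_email(email: str) -> str:
    """Replace the local part with a short hash and keep the domain.

    Note: an unsalted hash is pseudonymization, not anonymization.
    """
    local, _, domain = email.partition("@")
    digest = hashlib.sha256(local.encode("utf-8")).hexdigest()[:8]
    return f"user_{digest}@{domain}"


def attempt(user_email: str, role: str, action: str) -> bool:
    """Check permission and record the attempt with a masked identity."""
    allowed = is_allowed(role, action)
    audit_log.append(
        {"user": mask_email(user_email), "action": action, "allowed": allowed}
    )
    return allowed


print(attempt("alice@example.org", "researcher", "run_experiment"))
print(attempt("bob@example.org", "viewer", "approve_deployment"))
print(audit_log)
```

Logging denied attempts alongside allowed ones is a deliberate choice here: audit trails are most useful when they also show what was blocked.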

FAQ

What exactly is the 03 ai tool?

The 03 ai tool is a category of AI software that unifies data preprocessing, model development, and deployment into a single workflow. It helps teams iterate quickly, compare models, and push successful experiments toward production with consistent governance.

The 03 ai tool is a type of AI software that streamlines data prep, model building, and deployment in one workflow, helping teams move from idea to production faster.

Is 03 ai tool suitable for beginners?

Yes, many 03 ai tool platforms offer beginner-friendly templates, notebooks, and guided workflows. They also provide extensive documentation to help new users learn by doing while still supporting advanced configurations for experienced practitioners.

Yes. Many tools provide templates and guided workflows ideal for beginners, while still supporting advanced users.

How does 03 ai tool differ from traditional ML platforms?

Unlike traditional ML platforms, a 03 ai tool emphasizes end-to-end integration from data ingestion to deployment within a single environment. It offers centralized experiment tracking, reusable pipelines, and stronger governance, which can reduce integration overhead and improve reproducibility.

It provides end-to-end integration and centralized governance, reducing setup work and improving reproducibility.

What are the typical costs or pricing models?

Pricing for 03 ai tool solutions varies by vendor and deployment model. Common approaches include free tiers for learning, usage-based pricing, and enterprise subscriptions, with additional costs for data storage and compute usage.

Pricing varies by vendor and usage; there are often free tiers for learning and tiered enterprise options.

Can the 03 ai tool handle large datasets securely?

Many platforms support large-scale datasets and offer security features such as access controls, encryption, and audit logs. Evaluate your needs against the platform's data governance and compliance capabilities before choosing.

Many platforms support large datasets and include security features such as access controls and encryption; assess your governance needs before selecting one.

How do I start a project with the 03 ai tool?

Begin with a clearly defined problem and a small, representative dataset. Set up the tool, connect data sources, and implement a minimal pipeline: data prep, a baseline model, and a basic evaluation. Iterate and document results to inform next steps.

Start with a small project, set up data, run a baseline model, and document results to guide the next steps.

Key Takeaways

- Define clear goals before adopting 03 ai tool

- Prioritize interoperability to avoid vendor lock-in

- Pilot with small projects to learn quickly

- Embed governance and security from day one

- Document experiments for reproducibility and collaboration