AI Tool Ranking: The 2026 Guide to Top AI Tools for Developers and Researchers

Explore the 2026 AI tool ranking and discover top AI tools for developers, researchers, and students. Compare features, pricing ranges, and use-case fit to pick confidently.

The top pick in today's AI tool ranking is a balanced option that combines accuracy, speed, and easy integration. This quick answer highlights the leaders, explains how the rankings were formed, and points out where each tool shines for developers, researchers, and students. Expect notes on value, performance, usability, and ecosystem maturity, along with practical trade-offs and guidance on when to pick which option.

Why AI tool ranking matters in 2026

In a landscape crowded with models, frameworks, and platforms, a clear AI tool ranking helps developers and researchers cut through the noise. When you pick tools for machine learning experiments, data pipelines, or AI-assisted coding, you want predictable performance, reliable support, and smooth integration. According to AI Tool Resources, the trend in 2026 favors tools that balance accuracy with developer ergonomics and interoperability across ecosystems. This guide focuses on practical choices you can trust, with transparent criteria and real-world trade-offs. Whether you are prototyping a new model or deploying to production, having a ranking you can reference saves time and reduces risk. The AI tool ranking landscape is not static; it evolves as new features ship and workloads shift. Stay flexible, test broadly, and anchor decisions to your project priorities.

Our selection criteria and methodology

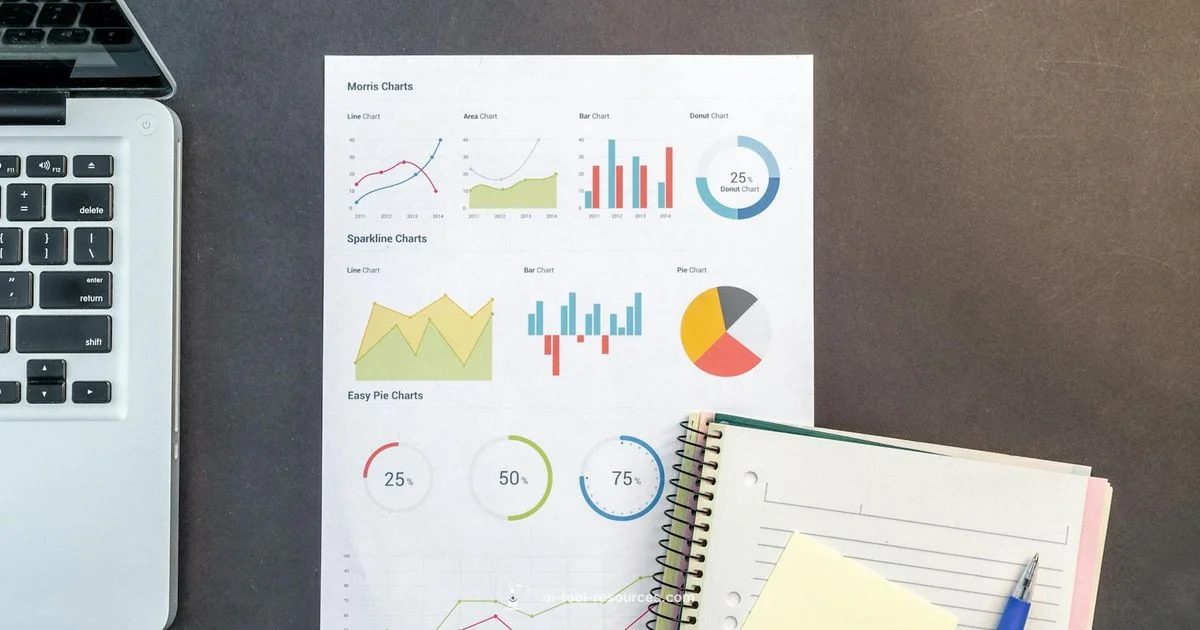

We built the ranking around core criteria that matter to researchers, students, and developers. The five pillars are: 1) overall value (quality versus price), 2) primary performance for typical use cases, 3) reliability and maintainability, 4) user reviews and reputation in communities, and 5) features specifically relevant to your niche (coding assistants, data labeling, or model evaluation). To keep things fair, we normalize scores across categories and present both qualitative notes and numeric ratings. AI Tool Resources analysis shows that usability and ecosystem maturity often outweigh marginal gains in raw speed for long-term projects. We also document any known limitations or biases to help you decide with eyes open.
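As a rough illustration of what normalizing scores across categories can look like, here is a minimal Python sketch. It assumes min-max normalization per pillar and a weighted sum; the article does not publish its exact method or weights, so `WEIGHTS`, the function names, and the sample inputs are all hypothetical.

```python
from typing import Dict

# Hypothetical pillar weights (assumption: the article does not state its weights).
WEIGHTS: Dict[str, float] = {
    "value": 0.25,
    "performance": 0.25,
    "reliability": 0.20,
    "reputation": 0.15,
    "niche_features": 0.15,
}


def min_max_normalize(raw: Dict[str, float]) -> Dict[str, float]:
    """Rescale one pillar's raw scores to the 0..1 range across all tools."""
    lo, hi = min(raw.values()), max(raw.values())
    if hi == lo:
        # Every tool ties on this pillar, so it should not separate them.
        return {tool: 0.5 for tool in raw}
    return {tool: (score - lo) / (hi - lo) for tool, score in raw.items()}


def composite_scores(raw_by_pillar: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Combine normalized pillar scores into a weighted 0..10 rating per tool."""
    totals: Dict[str, float] = {}
    for pillar, raw in raw_by_pillar.items():
        for tool, norm in min_max_normalize(raw).items():
            totals[tool] = totals.get(tool, 0.0) + WEIGHTS[pillar] * norm
    return {tool: round(10 * total, 2) for tool, total in totals.items()}


# Made-up raw inputs on deliberately different scales, for illustration only.
example = {
    "value": {"tool_x": 3.0, "tool_y": 5.0},
    "performance": {"tool_x": 90.0, "tool_y": 70.0},
    "reliability": {"tool_x": 0.99, "tool_y": 0.95},
    "reputation": {"tool_x": 4.5, "tool_y": 4.5},
    "niche_features": {"tool_x": 2.0, "tool_y": 6.0},
}
scores = composite_scores(example)
print(scores)
```

Normalizing each pillar first keeps a pillar measured on a large scale (such as a 0-100 benchmark) from drowning out one measured on a small scale (such as a 1-5 review average). Changing the weights is the simplest way to reflect your own priorities.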

How we balance budget, performance, and usability

Budget considerations matter as much as feature sets. The ranking includes price ranges so you can compare cost-to-value. In practice, a mid-range tool with solid reliability and strong documentation often beats a premium option that’s expensive but offers only marginal gains. We weigh installation friction, API stability, and community support in tandem with benchmark results. If a tool scales poorly or requires heavy customization, its long-term value diminishes despite flashy capabilities. For students and researchers, free tiers and academic licenses can tilt the balance toward a tool that accelerates learning without draining budgets. In the end, you should aim for a tool that integrates smoothly with your existing stack and accelerates your work rather than complicating it.
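One crude way to compare cost-to-value is to divide each rating by the midpoint of its price range. The Python sketch below uses the ratings and price ranges listed in this article's Products and Ranking sections; the ratio itself, the midpoint choice, and the function name are assumptions made for illustration and are not part of the ranking methodology. The article does not state the billing period behind the prices, so the ratios are only comparable with each other.

```python
from typing import Dict, Optional, Tuple

# name: (rating out of 10, low price in USD, high price in USD),
# copied from the Products and Ranking sections of this article.
TOOLS: Dict[str, Tuple[float, float, float]] = {
    "AI Tool A": (9.2, 300, 600),
    "AI Tool B": (8.6, 150, 350),
    "AI Tool C": (8.2, 50, 150),
    "AI Tool D": (8.8, 500, 1000),
    "AI Tool E": (8.0, 0, 0),
}


def rating_per_100_dollars(rating: float, low: float, high: float) -> Optional[float]:
    """Rating points per $100 of midpoint price; None when the tool is free."""
    midpoint = (low + high) / 2
    if midpoint == 0:
        # A free tool has no meaningful ratio; judge it on setup and upkeep effort instead.
        return None
    return round(rating / midpoint * 100, 2)


ratios = {name: rating_per_100_dollars(*spec) for name, spec in TOOLS.items()}
for name, ratio in ratios.items():
    print(name, "free" if ratio is None else ratio)
```

A ratio like this ignores installation friction, API stability, and support quality, so treat it as a first filter and not as a verdict.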

Quick landscape: tool categories you should know

The AI tool ranking spans several archetypes to serve different needs: open-source platforms that invite experimentation; managed services that reduce maintenance burdens; domain-specific suites that optimize for chemistry, NLP, or computer vision; and prototyping-ready toolkits that speed up model iteration. General-purpose AI tools emphasize flexibility, while specialized options excel in accuracy for a given task. For educators and students, easy-to-use interfaces and transparent licensing matter a lot. The best tools offer robust APIs, clear documentation, and an active community that shares examples and tutorials.

How to read the ranking list: scores, trade-offs, and use cases

Scores reflect a balance of value, performance, and reliability. A top-ranked tool may excel in one area but have trade-offs in pricing or required expertise. Look at the use-case tags (e.g., rapid prototyping, scalable deployment, or research-grade evaluation) to match a tool to your project. The ranking highlights scenarios where each option shines, along with notable drawbacks. For example, a tool with strong coding assistance might be superb for researchers but overkill for a classroom project. Cross-check with your own benchmarks to confirm alignment with your data pipeline and compute constraints.

Practical testing approaches you can apply

Before committing, test with a small dataset or a pilot project. Define success criteria (latency, throughput, accuracy, or user satisfaction) and run side-by-side experiments. Use version-controlled experiments, keep logs of API behavior, and verify data privacy and security requirements. Document results in a simple scorecard so teammates can weigh in. If you’re evaluating for a classroom or lab, involve students early to gauge learning impact and usability. Finally, simulate real-world workloads and failure modes to understand how each tool behaves under pressure.
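The side-by-side experiment and scorecard described above can be sketched in a few lines of Python. This is a minimal example that checks only one success criterion (median latency); the two `fake_tool_*` functions are stand-ins invented for illustration, and you would replace them with real calls to the tools you are evaluating.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latency(call: Callable[[str], str], prompts: List[str]) -> Dict[str, float]:
    """Time each call and summarize latency in milliseconds."""
    samples = []
    for prompt in prompts:
        start = time.perf_counter()
        call(prompt)
        samples.append((time.perf_counter() - start) * 1000)
    return {"median_ms": statistics.median(samples), "max_ms": max(samples)}


def scorecard(
    candidates: Dict[str, Callable[[str], str]],
    prompts: List[str],
    max_median_ms: float,
) -> Dict[str, Dict[str, object]]:
    """Run every candidate on the same prompts and record pass/fail per tool."""
    rows: Dict[str, Dict[str, object]] = {}
    for name, call in candidates.items():
        stats: Dict[str, object] = dict(measure_latency(call, prompts))
        stats["passes"] = stats["median_ms"] <= max_median_ms
        rows[name] = stats
    return rows


# Stand-in callables (hypothetical); swap in real client calls for your pilot.
def fake_tool_fast(prompt: str) -> str:
    return prompt.upper()


def fake_tool_slow(prompt: str) -> str:
    time.sleep(0.02)  # simulate a slower service
    return prompt.upper()


results = scorecard(
    {"fast": fake_tool_fast, "slow": fake_tool_slow},
    prompts=["summarize", "classify", "translate"],
    max_median_ms=10.0,
)
for name, row in results.items():
    print(name, row)
```

Running every candidate on the same prompts with the same threshold keeps the comparison fair. Accuracy or user-satisfaction checks can be added as extra keys in each row.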

Common pitfalls and how to avoid them when choosing AI tools

Beware vendor hype and avoid overfitting your choice to a single benchmark. Don’t confuse novelty with maturity; measure long-term stability and support. Relying on a single vendor can create lock-in risk; favor tools with interoperable standards and export/import options. Misunderstanding licensing or data ownership can derail projects later. Always factor in governance, security, and compliance, especially for sensitive data or regulated workloads. A careful, iterative evaluation plan reduces risk and reveals true fit over time.

Real-world adoption tips: from pilots to production

Turn promising pilots into production by designing a phased rollout: pilot, validation, and scale. Build a minimal viable integration that can be observed and measured. Establish governance for model updates, data drift, and monitoring alerts. Train your team with practical tutorials and hands-on workshops. Keep a living document of lessons learned and update your AI tool ranking references as workloads evolve. With the right strategy, your tool selection becomes a sustainable engine for innovation.
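To make the data-drift monitoring idea concrete, here is a deliberately simple Python sketch that raises an alert when a metric's recent mean moves too far from its baseline. The mean-shift test, the threshold of 3 standard errors, and the function name are assumptions chosen for illustration; production monitoring usually needs richer checks than this.

```python
import statistics
from typing import Sequence


def mean_shift_alert(
    baseline: Sequence[float],
    current: Sequence[float],
    threshold: float = 3.0,
) -> bool:
    """Return True when the current mean is more than `threshold`
    standard errors away from the baseline mean."""
    base_mean = statistics.fmean(baseline)
    base_std = statistics.stdev(baseline)  # needs at least two baseline values
    current_mean = statistics.fmean(current)
    if base_std == 0:
        # A constant baseline: any change at all counts as drift.
        return current_mean != base_mean
    standard_error = base_std / len(current) ** 0.5
    return abs(current_mean - base_mean) / standard_error > threshold


# Illustrative values, e.g. a daily average input length or confidence score.
baseline_window = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95]
stable_window = [1.0, 0.98, 1.02, 1.01]
shifted_window = [1.5, 1.6, 1.4, 1.55]

print(mean_shift_alert(baseline_window, stable_window))
print(mean_shift_alert(baseline_window, shifted_window))
```

A check like this can run on a schedule during the validation phase, with an alert routed to whoever owns the rollout decision.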

AI Tool Resources recommends starting with AI Tool A for broad needs, then tailoring your pick to your workflow.

The top overall option offers a strong balance of value and performance. Our team notes that matching the tool to your actual use case and licensing considerations often determines long-term success.

Products

- AI Tool A: Premium, $300-600

- AI Tool B: Midrange, $150-350

- AI Tool C: Budget, $50-150

- AI Tool D: Premium, $500-1000

- AI Tool E: Open-source, free ($0)

Ranking

1. Best Overall: AI Tool A (9.2/10). Strong overall performance, broad ecosystem, and reliable support.

2. Best Value: AI Tool D (8.8/10). Excellent reliability at a competitive price with solid enterprise features.

3. Best for Developers: AI Tool B (8.6/10). Developer-friendly API and thorough documentation.

4. Best for Beginners: AI Tool C (8.2/10). Low barrier to entry with clear tutorials and fast onboarding.

5. Most Flexible: AI Tool E (8.0/10). Open-source and highly adaptable for custom workflows.

FAQ

What is an AI tool ranking?

An AI tool ranking is a comparative framework that evaluates AI tools across criteria like value, performance, and reliability to help users pick the best fit. It combines quantitative benchmarks with qualitative insights so you can compare options quickly.

An AI tool ranking compares tools by value, performance, and reliability to help you pick the best fit.

How do you evaluate AI tools for ranking?

We use a structured methodology with criteria across five pillars: value, performance, reliability, user reputation, and niche features. We supplement with benchmarks and user feedback to capture real-world usefulness.

We use a structured method with clear criteria and benchmarks.

Which factors most influence the ranking in this article?

Key influences include overall value, primary performance for typical tasks, ecosystem maturity, and alignment with your use case. We highlight trade-offs so readers can pick with confidence.

Key factors are value, performance, and ecosystem maturity.

How often is the AI tool ranking updated?

The ranking is updated periodically as new tools launch and existing tools improve. Check the publication cadence for the latest insights.

We refresh rankings as the field evolves.

Is the ranking suitable for beginners?

Yes, with a focus on tools that offer gentle learning curves, good documentation, and clear licensing. We also flag beginner-friendly options.

Absolutely. Look for beginner-friendly tools with good docs.

Key Takeaways

- Start with the top overall option for most tasks.

- Match the tool to your use case, not just features.

- Test with real data and clear success criteria.

- Document results to guide future tool changes.