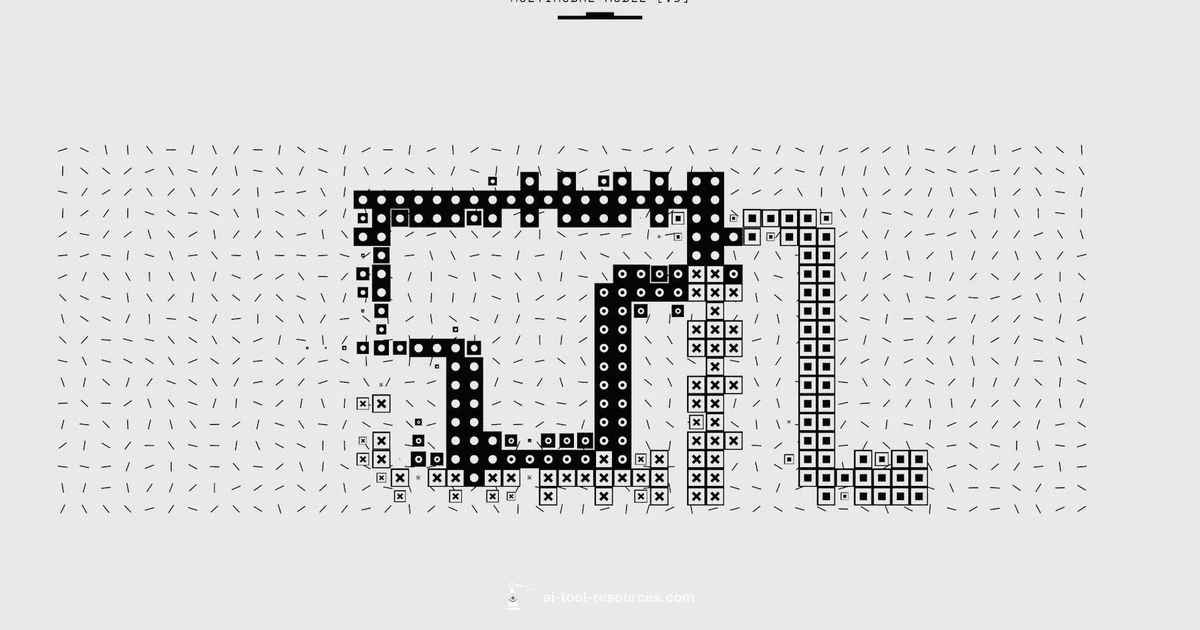

Understanding the Multimodal AI Tool: Cross Modal Intelligence

Learn what a multimodal AI tool is and how it processes text, images, and audio, with practical guidance for building, evaluating, and deploying cross-modal AI in research and production.

A multimodal AI tool is an artificial intelligence system that can process and integrate multiple data modalities, such as text, images, audio, and video, to perform complex tasks.

What sets a multimodal AI tool apart and why it matters

A multimodal AI tool is a system that can understand and combine information from multiple data streams, such as text, images, audio, and video. For developers and researchers, these tools enable richer interpretation than single-modality models and support more natural user experiences. According to AI Tool Resources, multimodal tooling is rapidly expanding beyond research labs into products because real-world problems often involve several kinds of data at once. By fusing modalities, these tools can answer questions that require visual context, linguistic nuance, and acoustic cues together. In practice, teams start with a clear use case, map the data modalities involved, and design a workflow that lets the model align representations across streams. This early planning reduces scope creep and helps you choose the right models, data pipelines, and evaluation criteria. In short, a multimodal AI tool is not just a bigger model; it is a coordinated system that learns how different data types relate to each other.

Core capabilities you should expect from a multimodal AI tool

A robust multimodal AI tool typically offers a core set of capabilities that enable cross-modal reasoning. At minimum, look for: (1) cross-modal representation learning, where the model builds shared embeddings for words, pixels, and sounds; (2) aligned generation, which keeps outputs coherent across modalities, such as a caption for an image or a description of a video scene; (3) multimodal inference, which answers questions using combined evidence from multiple inputs; and (4) streaming or interactive modes that support real-time collaboration with users. Many platforms also provide adapters or connectors to ingest data from common sources, plus evaluation dashboards that show cross-modal accuracy and latency. When evaluating options, consider your primary modalities, your deployment constraints, and whether you need generation, classification, or reasoning. If you are building research tools, it helps to pick architectures with modular components so you can swap encoders or decoders without rearchitecting the entire system.

How cross modal understanding is achieved

Cross-modal understanding depends on how the model encodes and aligns different data streams. Techniques include joint embedding spaces, where text, images, and audio share a common representation; contrastive learning, which pulls related pairs closer and pushes unrelated ones apart; and attention mechanisms that dynamically focus on the relevant parts of each modality. Training typically uses large, diverse datasets that pair modalities meaningfully, though synthetic data and self-supervision are increasingly common ways to scale coverage. A practical design choice is to separate perception (encoding) from interpretation (reasoning), which makes it easier to improve individual components and test new modalities. You will often see pipelines that first extract features from each input, then fuse them with cross-attention layers before producing a final output. Understanding these ideas helps you diagnose performance issues and tailor models to your specific domain.
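To make the contrastive idea concrete, here is a minimal sketch in plain Python. It assumes a batch where the text embedding at index i is paired with the image embedding at index i, that all vectors are nonzero, and that a symmetric InfoNCE-style loss is used; the function names and the temperature value are illustrative choices, not taken from any particular library.

```python
import math


def l2_normalize(vec):
    """Scale a nonzero vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def contrastive_loss(text_embs, image_embs, temperature=0.1):
    """Symmetric InfoNCE-style loss; text_embs[i] is assumed to pair with image_embs[i]."""
    texts = [l2_normalize(v) for v in text_embs]
    images = [l2_normalize(v) for v in image_embs]
    n = len(texts)

    # sims[i][j] = cosine similarity of text i and image j, scaled by temperature
    sims = [
        [sum(a * b for a, b in zip(t, im)) / temperature for im in images]
        for t in texts
    ]

    def cross_entropy(logits, target):
        # Numerically stable log-sum-exp minus the logit of the true pair
        m = max(logits)
        log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
        return log_sum - logits[target]

    # Text-to-image uses rows; image-to-text uses columns
    text_to_image = sum(cross_entropy(sims[i], i) for i in range(n)) / n
    image_to_text = sum(
        cross_entropy([sims[i][j] for i in range(n)], j) for j in range(n)
    ) / n
    return (text_to_image + image_to_text) / 2
```

The loss is low when each text embedding sits closest to its own image embedding and high when the pairs are mismatched, which is the "pull together, push apart" behavior described above.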

Typical architectures and design patterns

Architectural templates for multimodal AI tools vary, but several patterns recur. One common pattern is a shared backbone with modality-specific heads, where a central encoder handles all inputs and specialized decoders produce text, image, or audio outputs. Another approach uses modality-specific encoders whose outputs are combined through a multimodal fusion layer or a transformer cross-attention module. For real-time tasks, streaming pipelines with asynchronous processing and caching help manage latency across modalities. From a software engineering perspective, it helps to design with clear data contracts, versioned interfaces, and modular components so you can swap models or modalities without destabilizing the whole system. Finally, monitoring and observability are essential: instrument metrics for each modality and for cross-modal tasks so you can identify bottlenecks and ensure predictable behavior in production.
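The modality-specific encoder pattern with a fusion step can be sketched as follows. This is a minimal illustration under stated assumptions: the encoders are toy placeholders rather than trained models, fusion is a simple element-wise mean rather than cross-attention, and names such as `ModalityInput`, `FusionPipeline`, and `EMBED_DIM` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

EMBED_DIM = 4  # assumed width of the shared embedding space


@dataclass(frozen=True)
class ModalityInput:
    """Data contract for one input: its modality name and raw payload."""
    modality: str
    payload: object
    schema_version: str = "1.0"  # hypothetical version tag for the contract


Encoder = Callable[..., List[float]]


def toy_text_encoder(text: str) -> List[float]:
    # Placeholder features; a real system would call a trained text model.
    return [float(len(text)), float(len(text.split())), 0.0, 0.0]


def toy_image_encoder(pixels: List[float]) -> List[float]:
    # Placeholder features over a flat, non-empty list of pixel intensities.
    values = [float(p) for p in pixels]
    return [sum(values) / len(values), max(values), min(values), float(len(values))]


class FusionPipeline:
    """Registry of per-modality encoders plus a simple mean-fusion step."""

    def __init__(self) -> None:
        self._encoders: Dict[str, Encoder] = {}

    def register(self, modality: str, encoder: Encoder) -> None:
        # Swapping an encoder only requires re-registering under the same name.
        self._encoders[modality] = encoder

    def encode(self, item: ModalityInput) -> List[float]:
        if item.modality not in self._encoders:
            raise KeyError(f"no encoder registered for {item.modality!r}")
        vec = self._encoders[item.modality](item.payload)
        if len(vec) != EMBED_DIM:
            raise ValueError("encoder output does not match the shared width")
        return vec

    def fuse(self, items: List[ModalityInput]) -> List[float]:
        if not items:
            raise ValueError("at least one input is required")
        vectors = [self.encode(item) for item in items]
        # Element-wise mean stands in for a learned fusion layer.
        return [sum(column) / len(vectors) for column in zip(*vectors)]
```

Because each encoder sits behind the same contract and output width, replacing `toy_text_encoder` with a real model, or adding an audio encoder, does not require changes to the fusion step.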

Real world use cases across industries

Across industries, multimodal AI tools enable tasks that were hard or impossible with single-modality systems. In customer support, they power chatbots that understand screenshots, transcripts, and tone of voice to route issues more accurately. In media and entertainment, they help summarize scenes by analyzing visuals, dialogue, and soundtracks. In healthcare, multimodal tools integrate patient notes with medical images and sensor data to aid diagnosis and triage, while in manufacturing they monitor equipment through video feeds combined with telemetry. For developers, the practical route is to start with a concrete problem that benefits from cross-modal insight, assemble representative datasets, and run small experiments to measure incremental gains. AI Tool Resources analysis shows increasing adoption among research labs and product teams that want to reduce context switching for users and improve decision quality through richer data signals.

Evaluation metrics, benchmarking, and risk management

Evaluating a multimodal AI tool requires more than standard accuracy. Metrics should cover per-modality performance, alignment quality, and cross-modal reliability. Common approaches include retrieval accuracy for paired data, caption and description fidelity, and calibration checks that compare stated confidence with actual correctness across modalities. Benchmarks often combine synthetic and real-world data to test generalization, robustness to distribution shifts, and failure modes. It is also important to assess data quality, bias, and potential safety risks: inconsistent outputs, privacy concerns, and the possibility of sensitive information leaking across modalities. When benchmarking, document the evaluation protocol, the steps needed to reproduce results, and any data anonymization steps. A disciplined approach helps you compare models fairly and make informed tradeoffs between accuracy, latency, and resource usage.
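Two of the checks mentioned above, retrieval accuracy for paired data and calibration, can be sketched in a few lines of Python. The sketch assumes that the correct candidate for query i is candidate i, that confidences lie in [0, 1], and that equal-width bins are acceptable; the function names are illustrative.

```python
def recall_at_k(similarity, k):
    """Fraction of queries whose true match (same index) ranks in the top k.

    similarity[i][j] is the score between query i and candidate j.
    """
    hits = 0
    for i, row in enumerate(similarity):
        ranked = sorted(range(len(row)), key=lambda j: row[j], reverse=True)
        if i in ranked[:k]:
            hits += 1
    return hits / len(similarity)


def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between mean confidence and accuracy per bin."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Clamp so that a confidence of exactly 1.0 lands in the last bin
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece
```

Running both metrics separately for each modality pair (for example text-to-image and audio-to-text) makes it easier to see which pairing is dragging down overall results.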

Implementation best practices for teams

To translate theory into production, follow a structured implementation plan. Start with a minimum viable cross-modal setup focused on one key modality pair, then gradually add support for others. Build modular pipelines with clear interfaces and version control for data and models. Invest in end-to-end tests that cover multimodal scenarios, including failure recovery paths. Use lightweight monitoring and alerting to catch drift in data quality or model behavior across modalities. Document expectations for latency, throughput, and user experience, and align governance with data usage policies and compliance requirements. Finally, adopt a continuous learning loop: retrain on fresh, high-quality multimodal data and validate improvements on real user tasks.
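As one possible shape for lightweight drift monitoring, the sketch below compares a recent window of a per-modality statistic against a baseline window. The choice of statistic (for example mean image brightness or audio RMS level), the three-standard-deviation threshold, and the function names are all assumptions made for illustration.

```python
import statistics


def drift_score(baseline, recent):
    """Shift of the recent mean, measured in baseline standard deviations.

    baseline needs at least two values; recent needs at least one.
    """
    base_mean = statistics.fmean(baseline)
    base_std = statistics.stdev(baseline)
    recent_mean = statistics.fmean(recent)
    if base_std == 0:
        # A constant baseline: any change at all counts as unbounded drift
        return 0.0 if recent_mean == base_mean else float("inf")
    return abs(recent_mean - base_mean) / base_std


def check_modalities(baselines, recents, threshold=3.0):
    """Return {modality: True if drift exceeds the threshold} for each baseline."""
    return {
        name: drift_score(baselines[name], recents[name]) > threshold
        for name in baselines
    }
```

A check like this is cheap enough to run on every batch, and a flagged modality can then trigger a closer look or an alert before model quality visibly degrades.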

Ethical, legal, and security considerations

Multimodal AI tools raise unique concerns about privacy, consent, and bias that span multiple data channels. Ensure you have explicit data source permissions for text, image, audio, and video inputs, and implement robust privacy controls for how data is stored and processed. Guard against bias that can emerge when one modality dominates the signal or when data collections are unrepresentative. Consider model explainability across modalities so users understand why a given cross-modal decision was made. Security is also crucial: protect model weights, training and inference pipelines, and data stores against tampering and leakage. Finally, stay aligned with regulatory requirements for data handling and safety testing, especially in healthcare, finance, or education.

FAQ

What is a multimodal AI tool and why is it important?

A multimodal AI tool integrates multiple data modalities into a single system. It can process and fuse information from text, images, audio, and video to perform complex tasks. This cross-modal capability enables richer understanding and more flexible applications.

It’s an AI system that uses text, images, and sound together to understand and act on complex data.

Which modalities are commonly supported in multimodal tools?

Most tools support text and images, with audio and video increasingly included. Some platforms also handle structured data and sensor streams.

Text and images are common, with growing support for audio and video.

What are typical challenges when building multimodal AI tools?

Challenges include aligning data across modalities, high compute costs, and data privacy concerns. Benchmarking multimodal tasks is often more complex because the inputs vary so widely.

Key challenges are aligning data across modalities and the higher compute needs.

How do you evaluate multimodal AI tools?

Use metrics for each modality and for cross-modal performance, test robustness to distribution shifts, and assess latency and safety. Include human evaluations for quality.

Evaluate both per-modality and cross-modal tasks, plus latency and safety.

What are best practices for deployment and monitoring?

Start with a small cross-modal pilot, implement end-to-end tests, monitor data quality, and ensure governance and privacy controls are in place.

Begin with a small pilot, monitor carefully, and plan for privacy.

What is the AI Tool Resources verdict on adopting multimodal tools?

The AI Tool Resources team recommends starting with a clear, narrow use case, validating data quality, and iterating across modalities. Scale cautiously with measurable outcomes.

Start small, validate data, and iterate with measurable results.

Key Takeaways

- Learn what a multimodal AI tool does

- Understand cross-modal data integration

- Identify common architectures and challenges

- Apply best practices for development and evaluation

- Consider ethical and security implications