What Is the Strongest AI? A Comprehensive Comparison

Explore what makes the strongest AI, compare general-purpose versus domain-specific systems, and learn how to evaluate AI strength across metrics like generalization, safety, latency, and deployment.

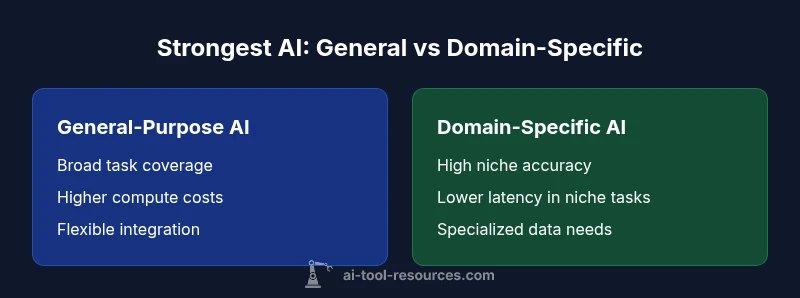

No single AI dominates every domain. Which system counts as strongest depends on the metric: generalization, safety, latency, cost, and deployment context all matter. In practice, general-purpose multimodal AI systems tend to outperform domain-specific models on broad tasks, while specialized systems excel in niche areas.

What Is the Strongest AI? Defining the Question

According to AI Tool Resources, defining the question is the first step toward a meaningful comparison. The phrase "strongest AI" is inherently ambiguous because strength can be measured across many axes: generalization to new tasks, accuracy within a domain, speed and latency, energy and cost efficiency, interpretability, resilience to distribution shifts, safety, and governance. In practice, evaluating AI strength means choosing a task framework and a success criterion, then aligning that criterion with available data, compute budgets, and risk tolerance. For developers and researchers, this means moving beyond hype and selecting metrics that reflect real-world usage: Can the system learn from limited data? Does it maintain stable behavior under distribution shifts? How expensive is it to train, deploy, and maintain? By clarifying the context, teams can avoid chasing a phantom champion and focus on a tool that delivers durable value. The overarching goal is practical utility over abstract dominance.

AI Tool Resources analysis shows that context matters most when assessing strength; the same model can be superb in one setting and merely adequate in another.

The metrics that define strength

Strength in AI is multidimensional. Practitioners should weigh generalization, data efficiency, and adaptability against compute efficiency, latency, and deployment costs. Generalization measures how well a model performs on unseen tasks or distributions, not just on training data. Data efficiency asks how much data is required to reach acceptable performance, which is crucial when data is scarce or expensive to collect. Compute efficiency covers compute requirements, energy use, and the ability to run on target hardware. Safety, robustness, and alignment address whether models behave predictably under adversarial inputs or evolving user requirements. Finally, governance considerations cover auditability, reproducibility, and compliance with policies. When you combine these axes, the apparent strength of an AI varies: a nimble, low-cost system may be stronger in resource-constrained environments, while a larger, safety-focused system may win in regulated sectors. In the end, the strongest AI is the one that best fits the intended use case and constraints.
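One way to make this weighing concrete is a weighted score across the axes. The sketch below is illustrative only: the candidate names, the normalized scores, and the two weight profiles are hypothetical values chosen for the example, not measurements of any real system.

```python
from typing import Dict

# Hypothetical scores normalized to [0, 1]; higher is better on every axis
# (so "latency" and "cost" here mean responsiveness and cost-efficiency).
CANDIDATES: Dict[str, Dict[str, float]] = {
    "general_purpose": {
        "generalization": 0.9, "domain_accuracy": 0.7,
        "latency": 0.5, "cost": 0.4, "safety": 0.8,
    },
    "domain_specific": {
        "generalization": 0.5, "domain_accuracy": 0.95,
        "latency": 0.8, "cost": 0.7, "safety": 0.8,
    },
}

# Two hypothetical weight profiles: one values breadth, one values depth.
BREADTH_FIRST = {"generalization": 5, "domain_accuracy": 1, "latency": 1, "cost": 1, "safety": 2}
DEPTH_FIRST = {"generalization": 1, "domain_accuracy": 5, "latency": 2, "cost": 2, "safety": 2}


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of the per-axis scores, using the given weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[axis] * w for axis, w in weights.items()) / total_weight


def strongest(candidates: Dict[str, Dict[str, float]], weights: Dict[str, float]) -> str:
    """Name of the candidate with the highest weighted score."""
    return max(candidates, key=lambda name: weighted_score(candidates[name], weights))


# With these made-up numbers the winner flips with the weights:
# strongest(CANDIDATES, BREADTH_FIRST) -> "general_purpose"
# strongest(CANDIDATES, DEPTH_FIRST)   -> "domain_specific"
```

The point of the sketch is that the ranking is a property of the weights as much as of the models, so the weights should be agreed on before any scores are collected.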

General-Purpose vs Domain-Specific Strengths

Two broad archetypes emerge when comparing the strongest AI: general-purpose multimodal systems and domain-specific specialists. General-purpose systems excel at breadth, handling a wide range of tasks with a single interface, and they benefit from large, diverse training signals and robust tooling ecosystems. They tend to require more compute and energy and may struggle to achieve peak performance in highly specialized tasks without fine-tuning. Domain-specific AI, by contrast, is crafted for a narrow set of tasks or industries. These models can leverage specialized data, curated workflows, and tailored safety controls to achieve superb accuracy and faster inference in their niche. The trade-off is reduced versatility and higher dependency on data quality for a particular domain. For teams, the choice often hinges on whether breadth or depth matters more for the project’s goals.

Benchmarks and Real-World Tasks

Benchmarks play a critical role in comparing AI strength, but they are imperfect proxies for real-world performance. Standardized benchmarks can measure generalization, reasoning, and consistency, but they may underrepresent deployment realities such as latency constraints, data privacy requirements, and system integration challenges. Real-world tasks reveal how models handle messy inputs, ambiguous prompts, and evolving user needs. To evaluate strength effectively, practitioners should combine benchmark scores with domain-specific tests, user studies, and pilot deployments. Consider scenarios like content moderation, code generation, scientific reasoning, or medical triage, and assess which model produces reliable results within the required safety and regulatory constraints. Remember that a model that shines on a test set may lag in production if it cannot integrate with existing systems or scale in your environment.
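As a rough illustration of pairing a benchmark score with a deployment constraint, the sketch below checks a candidate against both a minimum score and a 95th-percentile latency budget measured in a pilot. The function names, thresholds, and latency samples are assumptions made up for this example.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def production_ready(
    benchmark_score: float,
    latencies_ms: Sequence[float],
    min_score: float,
    max_p95_ms: float,
) -> bool:
    """True only if the benchmark score AND the pilot latency both pass."""
    return benchmark_score >= min_score and percentile(latencies_ms, 95) <= max_p95_ms


# Hypothetical pilot measurements: mostly fast, with one slow outlier.
PILOT_LATENCIES_MS = [120, 130, 125, 140, 135, 128, 132, 138, 122, 900]

# The median looks healthy (130 ms), but the tail (900 ms) breaks a 500 ms
# budget, so a strong benchmark score alone is not enough:
# production_ready(0.92, PILOT_LATENCIES_MS, min_score=0.9, max_p95_ms=500) -> False
```

Tail percentiles are used here instead of the mean because a handful of slow responses can dominate user experience while barely moving the average.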

Cost, Efficiency, and Deployment Trade-offs

AI strength also hinges on total cost of ownership and practical deployment considerations. High-performing general-purpose models often demand substantial compute, memory, and energy budgets, which translates into higher operational costs and longer onboarding when integrated into existing pipelines. Domain-specific AIs can deliver improved efficiency and faster inference by exploiting targeted architectures and optimized data pipelines, but may require ongoing data curation and maintenance for each domain. Deployment choices (cloud versus edge, batch versus real-time, and vendor-supported versus open-source) influence latency, reliability, and long-term viability. When deciding which path to pursue, map out the total cost of ownership, performance requirements, and governance needs. The strongest AI is the one that balances performance with sustainable, maintainable operations over its lifecycle.
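A back-of-the-envelope total cost of ownership comparison might look like the sketch below. All figures and the three-part cost breakdown are hypothetical assumptions for illustration; real budgets will have more line items.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CostProfile:
    upfront: float              # one-time integration or fine-tuning cost
    monthly_compute: float      # inference and hosting per month
    monthly_maintenance: float  # data curation, monitoring, retraining per month


def total_cost_of_ownership(profile: CostProfile, months: int) -> float:
    """Upfront cost plus recurring costs over the given number of months."""
    if months < 0:
        raise ValueError("months must be non-negative")
    return profile.upfront + months * (profile.monthly_compute + profile.monthly_maintenance)


def first_cheaper_month(a: CostProfile, b: CostProfile, horizon_months: int = 120) -> Optional[int]:
    """First month at which `a` has a strictly lower cumulative cost than `b`,
    or None if that never happens within the horizon."""
    for month in range(horizon_months + 1):
        if total_cost_of_ownership(a, month) < total_cost_of_ownership(b, month):
            return month
    return None


# Hypothetical figures: cheap to start but costly to run, versus the reverse.
GENERAL = CostProfile(upfront=10_000, monthly_compute=8_000, monthly_maintenance=1_000)
DOMAIN = CostProfile(upfront=60_000, monthly_compute=2_000, monthly_maintenance=3_000)

# Over 12 months: GENERAL costs 118,000 and DOMAIN costs 120,000.
# Over 24 months: GENERAL costs 226,000 and DOMAIN costs 180,000.
# first_cheaper_month(DOMAIN, GENERAL) -> 13
```

With these invented numbers the cheaper option depends entirely on the planning horizon, which is why the horizon belongs in the requirements, not in the post-hoc analysis.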

Safety, Alignment, and Reliability

Strength without safety is risky, especially in high-stakes domains. Alignment ensures the AI’s behavior matches user intent and organizational policies, while reliability focuses on stability under varied inputs and long-running usage. Strength metrics must include safety tests, adversarial robustness checks, explainability, and auditability. In regulated environments like healthcare or finance, you may prioritize alignment and governance as much as raw performance. The strongest AI in such contexts is not only accurate but also transparent and controllable. As a practical guideline, embed safety checks early, require human-in-the-loop review for critical decisions, and establish monitoring dashboards to detect drift and unexpected outputs. This approach protects users and preserves long-term trust in AI deployments.
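The monitoring idea can be sketched as a rolling error-rate check against a baseline. This is a deliberately simple stand-in for a production drift detector: the class name, the window size, and the thresholds are assumptions for the example, and what counts as an "error" (for instance, a human reviewer overriding the model) is left to the deployment.

```python
from collections import deque


class DriftMonitor:
    """Flags possible drift when the error rate over the most recent
    `window` outcomes exceeds the baseline rate by more than `tolerance`."""

    def __init__(self, baseline_error_rate: float, tolerance: float, window: int) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self.baseline_error_rate = baseline_error_rate
        self.tolerance = tolerance
        self.window = window
        self._recent = deque(maxlen=window)  # oldest outcomes fall off automatically

    def record(self, is_error: bool) -> None:
        """Record one outcome, e.g. True if a reviewer overrode the model."""
        self._recent.append(bool(is_error))

    def recent_error_rate(self) -> float:
        if not self._recent:
            return 0.0
        return sum(self._recent) / len(self._recent)

    def drifted(self) -> bool:
        # Wait for a full window so a single early error cannot trigger an alert.
        if len(self._recent) < self.window:
            return False
        return self.recent_error_rate() > self.baseline_error_rate + self.tolerance


# Usage sketch with hypothetical thresholds:
# monitor = DriftMonitor(baseline_error_rate=0.05, tolerance=0.05, window=20)
# monitor.record(False)
# if monitor.drifted():
#     ...route decisions to human review and alert the owning team...
```

A fixed window and a fixed tolerance are the simplest possible design; they trade statistical rigor for something that is easy to explain in an audit.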

Practical Evaluation: A Step-by-Step Guide

To evaluate strength for your project, start with clear requirements. Define success metrics reflecting real-world use: task coverage, accuracy thresholds, latency targets, data requirements, and governance constraints. Collect a representative test set and plan a staged evaluation, including offline benchmarking and live pilot testing. Compare candidate systems against these criteria, document assumptions, and run sensitivity analyses to understand how results vary with data quality, load, and deployment context. Include safety and reliability tests, especially for high-stakes tasks, and verify that the system integrates with your existing data pipelines and security controls. Finally, perform a cost-benefit analysis to determine whether the incremental gains in performance justify the additional resources and risks. The strongest AI for your project will be the one that passes all these checks without compromising safety or maintainability.
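The steps above can be sketched as a requirements gate plus a small sensitivity sweep. The metric names, thresholds, and the stand-in accuracy model are all hypothetical; in a real evaluation the `evaluate` callable would rerun the test set under each condition.

```python
from typing import Callable, Dict, List, Optional, Sequence

Requirement = Callable[[float], bool]

# Hypothetical success criteria, written down before any candidate is tested.
REQUIREMENTS: Dict[str, Requirement] = {
    "accuracy": lambda v: v >= 0.90,
    "p95_latency_ms": lambda v: v <= 300,
    "safety_pass_rate": lambda v: v >= 0.99,
}


def failed_requirements(
    measured: Dict[str, float], requirements: Dict[str, Requirement]
) -> List[str]:
    """Names of requirements that are unmet or were never measured."""
    return [
        name
        for name, check in requirements.items()
        if name not in measured or not check(measured[name])
    ]


def max_tolerated_level(
    evaluate: Callable[[float], float],
    levels: Sequence[float],
    requirement: Requirement,
) -> Optional[float]:
    """Sensitivity sweep: the largest tested level (e.g. input noise or load)
    at which the evaluated metric still meets the requirement, else None."""
    passing = [level for level in levels if requirement(evaluate(level))]
    return max(passing) if passing else None


def toy_accuracy(noise: float) -> float:
    """Stand-in for a real evaluation run: accuracy degrades linearly with noise."""
    return 0.95 - 0.3 * noise


# A candidate that is accurate and safe but too slow fails the gate:
# failed_requirements(
#     {"accuracy": 0.96, "p95_latency_ms": 450, "safety_pass_rate": 0.995},
#     REQUIREMENTS,
# ) -> ["p95_latency_ms"]
```

Treating an unmeasured metric as a failure is a deliberate choice here: it keeps a candidate from passing simply because a test was skipped.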

Case Illustrations: When Strength Matters

Real-world decisions about AI strength often hinge on context. In a fast-moving development environment with diverse tasks, a general-purpose model may yield the best overall value due to flexibility and ecosystem support. In a tightly scoped domain, such as regulatory-compliant document analysis or high-precision chemical simulations, a domain-specific AI can surpass broader systems by leveraging curated data and specialized validation. These case illustrations remind us that the strongest AI is situational: it reflects your goals, constraints, and risk tolerance rather than a universal champion. When designing AI-enabled solutions, start with the problem, not the hype, and choose the tool whose strengths align with measurable, meaningful outcomes.

How to Choose the Strongest AI for Your Goals

The final choice should align with your specific objectives and constraints. Begin by listing key success criteria: accuracy in your domain, speed, cost, data availability, and governance requirements. Assess any regulatory or ethical constraints that could affect your deployment. Consider a phased approach: pilot a general-purpose model to establish a baseline, then test domain-specific enhancements or modular hybrids to close gaps in niche performance. Factor in vendor support, interoperability with your tech stack, and the potential need for ongoing data curation. The strongest AI for your organization is the one that delivers the best balance of performance, safety, and maintainability while satisfying your operational goals.

Comparison

| Feature | General-Purpose Multimodal AI | Domain-Specific AI |
|---|---|---|
| Generalization across tasks | High (broad capability) | Moderate (domain-focused) |
| Domain mastery / niche accuracy | Moderate to high (depends on data) | Very high (domain-tuned) |
| Latency | Higher (larger models, broader prompts) | Lower (tailored pipelines) |
| Deployment cost | Higher (compute, data, and tooling needs) | Lower (focused data and smaller, scoped models) |
| Best for | Broad, flexible use cases and prototyping | Niche tasks with strict accuracy requirements |

Upsides of General-Purpose AI

- Broad task coverage and flexibility

- Strong ecosystem and tooling support

- Easier to adapt across projects

Weaknesses of General-Purpose AI

- Higher resource requirements

- May underperform in niche tasks without customization

- Potentially longer deployment cycles due to scale

General-purpose AI often offers the strongest overall position; domain-specific AI excels in niche tasks.

Choose general-purpose AI for versatility and rapid iteration. Opt for domain-specific AI when performance in a narrow domain is non-negotiable and data supports targeted optimization.

FAQ

What does 'strongest AI' mean in practice?

In practice, 'strongest AI' depends on the chosen success criteria. It is not a universal champion but a model that best meets the task, constraints, and governance requirements for a given context.

In practice, strongest AI refers to the model that best fits your task and constraints, not a universal champion.

Can there be a universal strongest AI?

No. Strength is task-dependent. A model that excels in one domain may underperform in another. The strongest AI for a project is the one aligned with its specific goals and constraints.

No universal champion exists; strength depends on the task and constraints.

How should I compare AI systems for a project?

Define success criteria, assemble representative test data, benchmark across metrics, and validate with real-world pilots. Include safety, governance, and deployment considerations in your comparison.

Set criteria, benchmark, and pilot-test with safety and deployment in mind.

What role do safety and alignment play in determining strength?

Safety and alignment are integral to strength in practice. A model with high accuracy but poor alignment can cause harm or drift, undermining long-term value.

Safety and alignment are essential; accuracy alone isn’t enough.

What are common pitfalls when comparing AI strengths?

Relying on single benchmarks, ignoring deployment realities, and neglecting governance can mislead comparisons. Always combine benchmarks with real-world testing and risk assessments.

Avoid relying on a single benchmark, and don't ignore deployment realities.

Where can I find reliable benchmarks for AI strength?

Look for benchmarks from recognized research labs and institutions; supplement with domain-specific tests and pilot deployments to ground results in your context.

Use reputable academic and industry benchmarks, plus domain tests.

Key Takeaways

- Define success criteria before choosing AI

- Balance breadth with domain-specific depth

- Assess deployment costs and latency early

- Prioritize safety, alignment, and governance

- Test with real-world tasks and data