Free AI Comparison Tools: A 2026 Guide

This in-depth guide reviews free AI comparison tools for 2026, comparing features, accuracy, usability, data handling, and limits across popular options.

To get started quickly, test two free AI comparison tools that fit your needs, then validate results across both. In 2026, free options range from open-source libraries to hosted dashboards with free tiers, and they differ in data handling, speed, and transparency. This guide helps you identify the best fit by focusing on scope, privacy, and performance.

What a free AI comparison tool means in today’s AI ecosystem

In 2026, free AI comparison tools cover a broad spectrum of software and services that let users benchmark AI capabilities without paying upfront. This category includes open-source libraries you run locally, lightweight evaluation dashboards, and cloud-based sandboxes that offer free trials or no-cost usage for small projects. For developers, researchers, and students, these tools provide a low-barrier entry point to gauge model accuracy, latency, and stability across tasks like natural language processing, code generation, image analysis, and anomaly detection. Yet free options vary widely in data handling, evaluation scope, and transparency about model internals. When you test them, start with a precise objective, outline what you will measure, and set realistic limits on volume and scope. This article covers how to use free AI comparison tools responsibly, what trade-offs to expect, and how to structure robust tests that avoid misleading conclusions.

According to AI Tool Resources, free options should be evaluated with the same rigor as paid tools, focusing on reproducibility and governance.

Evaluation criteria for free AI comparison tools

To compare free AI tools effectively, you need a consistent rubric that applies to every tool you test. Start with accuracy: how well does the tool’s output match ground truth or human judgment for your specific tasks? Next, examine speed and throughput: does the tool produce responses quickly enough for your workflow, and can it handle the volume you require? Then consider interpretability: are there explanations for results, and is there transparency about the data sources, model versions, and training data? Privacy and data handling are critical: determine whether inputs are stored, whether processing happens locally, and how long data persists. Finally, assess reliability and updates: how often is the tool refreshed, and is there a history of breaking changes or outages? Use at least two independent free options to triangulate results, and document any discrepancies for later review.
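
The accuracy and speed parts of this rubric can be measured with a small harness. The sketch below is illustrative only: `tool_alpha` and `tool_beta` are hypothetical stand-ins for whatever client calls your chosen tools expose, and exact-match scoring is an assumption that may be too strict for free-form text.

```python
import time
from typing import Callable, Dict, List, Tuple


def evaluate_tool(tool: Callable[[str], str],
                  cases: List[Tuple[str, str]]) -> Dict[str, float]:
    """Return exact-match accuracy and mean latency in seconds."""
    if not cases:
        raise ValueError("cases must not be empty")
    correct = 0
    elapsed = 0.0
    for prompt, expected in cases:
        start = time.perf_counter()
        answer = tool(prompt)
        elapsed += time.perf_counter() - start
        # Case- and whitespace-insensitive exact match; swap in your own judge.
        if answer.strip().lower() == expected.strip().lower():
            correct += 1
    return {"accuracy": correct / len(cases),
            "mean_latency_s": elapsed / len(cases)}


# Hypothetical stand-ins for two free tools; replace with real client calls.
def tool_alpha(prompt: str) -> str:
    return {"2+2": "4", "capital of France": "Paris"}.get(prompt, "unknown")


def tool_beta(prompt: str) -> str:
    return {"2+2": "4"}.get(prompt, "unknown")


CASES = [("2+2", "4"), ("capital of France", "paris")]
alpha_report = evaluate_tool(tool_alpha, CASES)
beta_report = evaluate_tool(tool_beta, CASES)
print(alpha_report["accuracy"], beta_report["accuracy"])  # prints: 1.0 0.5
```

Running every tool through the same function with the same cases keeps the comparison like for like; interpretability, privacy, and reliability still need a manual review.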

Understanding data quality and model behavior

Data quality is the backbone of any AI tool, free or paid. Free AI comparison tools often rely on public datasets or synthetic prompts, which can influence output patterns and bias. When you compare tools, examine how each handles edge cases, how models generalize beyond training data, and whether there is a clear boundary between training data and test prompts. AI Tool Resources analysis shows that free tools vary widely in privacy policies, data retention, and disclosure of model origins. Be mindful of prompt variability and measurement noise; run repeated tests across different prompts and datasets to uncover inconsistent behavior. Document known limitations and consider using a secondary, independent evaluation method to validate surprising results. The goal is to build a stable, evidence-based view of tool performance rather than relying on single, isolated outputs.
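
One way to put numbers on measurement noise is to summarize the scores from repeated runs. This is a minimal sketch using Python's standard `statistics` module; the run scores are made up for illustration, and `gap_exceeds_noise` is a rough heuristic assumed here, not a formal significance test.

```python
import statistics
from typing import Dict, List


def summarize_runs(scores: List[float]) -> Dict[str, float]:
    """Summarize one tool's scores from repeated runs of the same test suite."""
    if not scores:
        raise ValueError("scores must not be empty")
    return {
        "mean": statistics.mean(scores),
        # Sample standard deviation needs at least two runs.
        "stdev": statistics.stdev(scores) if len(scores) > 1 else 0.0,
        "range": max(scores) - min(scores),
    }


def gap_exceeds_noise(a: List[float], b: List[float]) -> bool:
    """Rough heuristic: is the gap in means larger than the larger stdev?"""
    sa, sb = summarize_runs(a), summarize_runs(b)
    return abs(sa["mean"] - sb["mean"]) > max(sa["stdev"], sb["stdev"])


alpha_runs = [0.80, 0.70, 0.90]  # made-up accuracy values for illustration
beta_runs = [0.75, 0.85, 0.80]
print(summarize_runs(alpha_runs))
print(gap_exceeds_noise(alpha_runs, beta_runs))
```

If the gap between two tools does not exceed the run-to-run spread, treat the tools as tied on that metric until you have more runs.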

Privacy, security, and governance considerations

Free AI tools can introduce unique privacy and governance challenges. Before integrating any free AI comparison tool into your workflow, review the vendor’s privacy policy, data handling practices, and whether inputs are stored or used to improve models. Prefer tools that offer local or on-device processing, clear data retention windows, and options to purge inputs after each session. Compliance-minded teams should assess whether the tool provides data encryption, access controls, and audit logs. In addition, consider governance: who owns the evaluation results, how long they are archived, and whether you can reproduce tests if the tool changes its backend. AI Tool Resources emphasizes keeping sensitive prompts out of free dashboards when dealing with proprietary code, research findings, or user data that falls under confidentiality constraints. When in doubt, opt for tools with transparent terms and straightforward data controls.

Practical testing methods: designing fair comparisons

Effective testing with free AI comparison tools requires discipline. Start by defining a fixed test suite that mirrors your real tasks, including prompts, datasets, and success criteria. Run each tool with identical inputs and document the exact version or configuration used to ensure reproducibility. Include a mix of simple and challenging prompts to surface performance gaps, checking for latency, stability, and error modes. Use standardized metrics such as accuracy on benchmark tasks, mean reciprocal rank for retrieval tasks, or BLEU- and ROUGE-style overlap measures for language tasks. Record any anomalies and quantify uncertainty with multiple runs. Finally, implement a simple scoring rubric that weights the factors you care about most, like privacy, speed, and output quality. This approach keeps comparisons fair and actionable for your team.
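
Two of the pieces mentioned above, mean reciprocal rank and a weighted scoring rubric, are short enough to write by hand. The sketch below assumes each query has exactly one relevant item and that per-criterion scores are already scaled to the range 0 to 1; the weights and scores are illustrative, not recommendations.

```python
from typing import Dict, Sequence


def mean_reciprocal_rank(ranked: Sequence[Sequence[str]],
                         relevant: Sequence[str]) -> float:
    """MRR: average of 1/rank of the relevant item, counting 0 if it is absent."""
    if not relevant or len(ranked) != len(relevant):
        raise ValueError("ranked and relevant must be non-empty and the same length")
    total = 0.0
    for results, target in zip(ranked, relevant):
        for rank, item in enumerate(results, start=1):
            if item == target:
                total += 1.0 / rank
                break
    return total / len(relevant)


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-criterion scores, each expected in [0, 1]."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


ranked = [["a", "b", "c"], ["x", "y", "z"], ["p", "q"]]
relevant = ["a", "y", "missing"]
mrr = mean_reciprocal_rank(ranked, relevant)  # (1 + 1/2 + 0) / 3

weights = {"quality": 0.5, "speed": 0.2, "privacy": 0.3}  # illustrative only
tool_scores = {"quality": 0.8, "speed": 0.6, "privacy": 1.0}
overall = weighted_score(tool_scores, weights)
print(mrr, round(overall, 2))
```

Fix the weights before you look at any results so the rubric cannot be tuned toward a preferred tool.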

Real-world use cases and limitations

Free AI comparison tools shine in early-stage research, education, and prototyping. They enable fast experimentation, learning, and baseline benchmarking without incurring costs. However, the limitations are real: results can be noisy, data handling policies may be opaque, and advanced features (such as ensemble methods, customized inference, or enterprise-grade privacy controls) are often unavailable. AI Tool Resources recommends matching the test scope to the tool’s capabilities and not extrapolating free results to production-grade deployments without further validation. When you need rigorous compliance and traceability, combine free tools with selective paid options or open-source techniques you control, so you can audit, reproduce, and scale tests confidently.

Interpreting outputs and avoiding bias in free tools

Interpreting results from free AI comparison tools requires skepticism and cross-validation. Look for consistent patterns across multiple prompts and datasets, and be wary of overfitting to a narrow test set. Free tools may have calibration biases or response tendencies that reflect their training data or prompt design. To mitigate this, compare outputs from at least two tools, analyze failure modes, and check for drift when inputs change slightly. Document any discrepancies, and consider human-in-the-loop review for high-stakes decisions. Remember that free access does not mean freedom from bias: transparency, careful test design, and independent validation remain essential.
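
Cross-checking two tools can start with a simple agreement count. In this sketch, outputs count as agreeing when they match after lowercasing and whitespace cleanup; that normalization is an assumption and will undercount agreement between paraphrased answers. The prompts and outputs are made up.

```python
from typing import List, Sequence


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def disagreements(prompts: Sequence[str],
                  outputs_a: Sequence[str],
                  outputs_b: Sequence[str]) -> List[str]:
    """Return the prompts on which the two tools gave different answers."""
    if not (len(prompts) == len(outputs_a) == len(outputs_b)):
        raise ValueError("prompts and both output lists must be the same length")
    return [p for p, a, b in zip(prompts, outputs_a, outputs_b)
            if normalize(a) != normalize(b)]


def agreement_rate(prompts: Sequence[str],
                   outputs_a: Sequence[str],
                   outputs_b: Sequence[str]) -> float:
    """Fraction of prompts on which the two tools agree."""
    if not prompts:
        raise ValueError("prompts must not be empty")
    return 1.0 - len(disagreements(prompts, outputs_a, outputs_b)) / len(prompts)


prompts = ["capital of France", "2+2", "sky color"]
from_alpha = ["Paris", "4", "blue"]    # made-up outputs for illustration
from_beta = ["paris ", "5", "Blue"]
print(disagreements(prompts, from_alpha, from_beta))  # prints: ['2+2']
```

The list of disagreeing prompts is usually more useful than the rate itself, because it tells a human reviewer where to look first.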

Integrating findings into decisions and workflows

Putting test results into practice means turning insights into concrete actions. Build a lightweight decision framework that ties test outcomes to your goals, such as accuracy thresholds, latency budgets, or privacy constraints. Use the most reliable free option for exploratory work, but reserve critical decisions for environments with stronger governance, reproducibility, and support. Create a living document of evaluation results, update it as tools evolve, and maintain a clear record of test conditions. AI Tool Resources stresses that successful adoption requires alignment with existing data pipelines, coding practices, and team workflows. Include versioning for models and test scripts so your team can reproduce tests later.
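
A lightweight decision framework can be as small as a list of requirements checked against each tool's measured results. The class names, fields, and threshold values below are hypothetical and only illustrate the shape of such a check.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Requirements:
    min_accuracy: float             # accuracy threshold, 0 to 1
    max_latency_s: float            # latency budget in seconds
    require_local_processing: bool  # privacy constraint


@dataclass
class ToolResult:
    name: str
    accuracy: float
    latency_s: float
    local_processing: bool


def unmet_requirements(result: ToolResult, req: Requirements) -> List[str]:
    """List which requirements a tool fails; an empty list means it passes."""
    unmet = []
    if result.accuracy < req.min_accuracy:
        unmet.append("accuracy")
    if result.latency_s > req.max_latency_s:
        unmet.append("latency")
    if req.require_local_processing and not result.local_processing:
        unmet.append("privacy")
    return unmet


req = Requirements(min_accuracy=0.8, max_latency_s=2.0,
                   require_local_processing=True)
alpha = ToolResult("alpha", accuracy=0.85, latency_s=1.2, local_processing=True)
beta = ToolResult("beta", accuracy=0.90, latency_s=3.5, local_processing=False)
print(unmet_requirements(alpha, req))  # prints: []
print(unmet_requirements(beta, req))   # prints: ['latency', 'privacy']
```

Returning the failed criteria, rather than a single pass or fail flag, keeps the reasoning visible in the evaluation record.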

Best practices and mitigation strategies for free tools

To maximize value from free AI comparison tools, combine disciplined testing with guardrails. Always start with a pilot test using benign prompts before moving to sensitive data. Maintain separate test and production environments, and implement data minimization: send only what is necessary for evaluation. Document privacy terms and opt-out options, and review policy changes whenever the tools update. Where possible, supplement free tools with open-source baselines you can audit locally. The AI Tool Resources team recommends evaluating at least two independent free tools, writing tests so they reproduce across environments, and treating free options as one piece of a broader evaluation strategy rather than the sole decision driver.
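
Data minimization can be partly automated by scrubbing prompts before they leave your machine. The sketch below is a rough illustration: the two patterns (email addresses and digit runs of six or more) and the 500-character cap are assumptions, and simple regular expressions will not catch every kind of sensitive data.

```python
import re

# Illustrative patterns only; real redaction needs a reviewed, broader rule set.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
LONG_DIGITS = re.compile(r"\d{6,}")


def minimize_prompt(text: str, max_chars: int = 500) -> str:
    """Mask emails and long digit runs, then truncate to max_chars."""
    text = EMAIL.sub("[EMAIL]", text)
    text = LONG_DIGITS.sub("[NUMBER]", text)
    return text[:max_chars]


raw = "Contact jane.doe@example.com about ticket 12345678."
print(minimize_prompt(raw))  # prints: Contact [EMAIL] about ticket [NUMBER].
```

Treat a filter like this as a backstop, not a substitute for keeping confidential material out of test prompts in the first place.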

Comparison

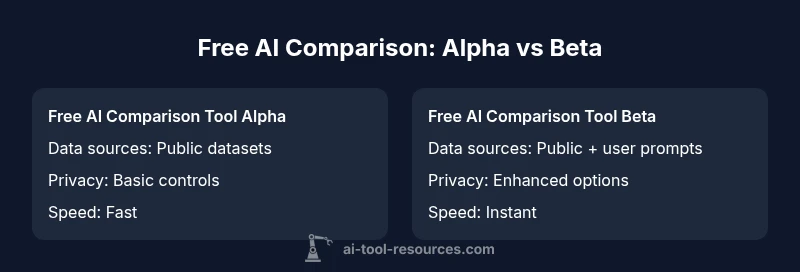

| Feature | Free AI Comparison Tool Alpha | Free AI Comparison Tool Beta |
|---|---|---|
| Data sources | Public datasets | Public datasets + user prompts |
| Customization | Limited controls | Moderate input controls |
| Result speed | Fast (seconds) | Instant results |
| Privacy controls | Basic settings | Enhanced privacy options |
| Pricing | Free for basic use | Free with optional paid upgrades |

Upsides

- Zero upfront cost enables quick experimentation

- Lower barrier to learning for students and researchers

- Encourages reproducible testing with lightweight pipelines

- Good for baseline comparisons and early exploration

- Promotes transparency when well-documented

Weaknesses

- Limited advanced features compared to paid tools

- Variable data handling and privacy practices

- Output quality can be inconsistent across prompts

- Support and guarantees are often minimal

Free AI tools are excellent for initial benchmarking but not a substitute for paid, enterprise-grade testing

Start with free options to frame your test plan. For production decisions, validate with paid tools or open-source baselines and ensure governance and reproducibility are in place.

FAQ

What qualifies as a free AI comparison tool?

Free AI comparison tools include both open-source libraries you run locally and hosted services with no-cost tiers. They let you benchmark model outputs, speed, and reliability without a monetary commitment. Always verify how data is handled and what features are included at no cost.

How do I evaluate free AI comparison tools?

Evaluate free tools using a consistent rubric: accuracy, latency, data handling, and transparency about models. Run identical prompts across tools, document configurations, and compare results. Look for clear documentation on data retention and policy changes.

Can free tools meet enterprise needs?

Free tools can support early-stage experiments and prototyping, but enterprise deployments typically require stronger governance, reproducibility, and security. Treat free results as exploratory and validate with controlled tests or paid options for production decisions.

Are there privacy concerns with free tools?

Yes. Free tools may store inputs or use data to improve models. Always review terms of service, data retention, and whether you can opt out of data collection. Prioritize tools with clear privacy controls and local processing options when handling sensitive data.

What tests should I run first?

Start with a simple benchmark set representing your tasks, then scale to harder prompts. Include a mix of inputs to test stability, speed, and error modes. Document results and re-run after any tool update to ensure consistency.

How should I document test results?

Create a shared evaluation sheet noting tool version, input prompts, outputs, and scoring. Include timestamps and privacy terms. Keep the document modular so you can replace tools without reworking the entire test plan.
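
If a spreadsheet is more than you need, the same record can be kept as a CSV file. This is a minimal sketch using Python's standard `csv` module; the column names are assumptions drawn from the fields listed above, and the sample row is made up.

```python
import csv
import io
from datetime import datetime, timezone
from typing import Dict, List, TextIO

# Assumed column layout; adjust to match your own evaluation sheet.
FIELDS = ["timestamp", "tool", "tool_version", "prompt",
          "output", "score", "privacy_terms"]


def write_results(rows: List[Dict[str, object]], handle: TextIO) -> None:
    """Write evaluation rows as CSV, stamping each with the current UTC time."""
    writer = csv.DictWriter(handle, fieldnames=FIELDS)
    writer.writeheader()
    for row in rows:
        stamp = datetime.now(timezone.utc).isoformat()
        # A timestamp supplied in the row overrides the generated one.
        writer.writerow({"timestamp": stamp, **row})


buffer = io.StringIO()  # use open("results.csv", "w", newline="") for a file
write_results(
    [{"tool": "alpha", "tool_version": "1.0", "prompt": "2+2",
      "output": "4", "score": 1.0, "privacy_terms": "inputs not stored"}],
    buffer,
)
print(buffer.getvalue())
```

Because each row names the tool and its version, you can swap one tool for another without changing the file layout.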

Key Takeaways

- Define and document test objectives early

- Test with at least two independent free tools

- Prioritize privacy and data handling in reviews

- Use free tools for exploration, not final decisions