Compare AI Tools for Research: An Objective Side-by-Side Guide

Objective evaluation of leading AI tools for research. Learn criteria, see a side-by-side comparison, pricing ranges, and best-use scenarios to choose the right tool for your project.

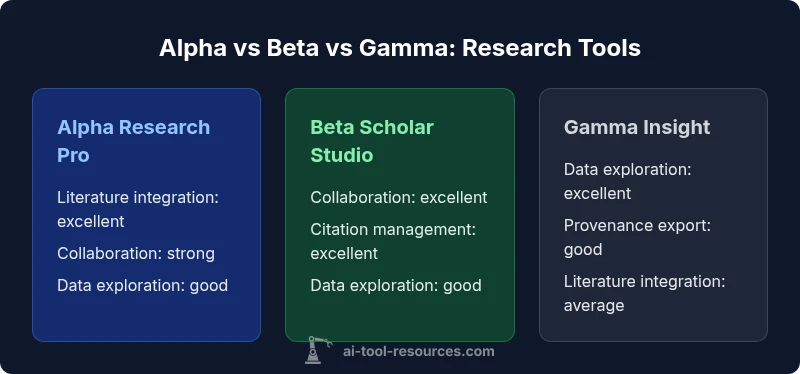

According to AI Tool Resources, the best starting point when you compare AI tools for research is to assess literature integration, collaboration capabilities, and data exploration. In this quick TL;DR, Alpha Research Pro leads on literature reviews, Beta Scholar Studio shines in collaboration and citations, and Gamma Insight provides flexible data exploration. Choose based on your primary workflow.

Defining the Research AI Tool Landscape

In the world of AI-assisted research, tools range from literature discovery engines to citation managers, data analysis assistants, and workflow orchestrators. When you compare AI tools for research, you should map each option to your core tasks: literature review, data collection, experiment design, and reproducibility. This section lays the groundwork by describing common archetypes and how they map to typical research pipelines. AI Tool Resources emphasizes that no single tool fits all projects; the most effective choice aligns with your discipline, data needs, and team size. In practice, researchers should start with a feature checklist: discovery quality, provenance, export formats, collaboration support, and governance controls. We’ll reference three hypothetical tools (Alpha Research Pro, Beta Scholar Studio, and Gamma Insight) as representative archetypes, while noting that real-world offerings vary in strength across categories. The goal is to build a decision framework you can reuse across disciplines and fields, consistent with the guidance from AI Tool Resources.

Key Criteria for Evaluation

Here are the primary axes used to compare AI tools for research. First, literature integration and discovery: can the tool surface high-quality sources, summarize key ideas, and track citations across versions? Second, citation management and metadata: are references stored with robust metadata, and can you export them into BibTeX, RIS, or other standard formats? Third, data exploration and analysis: does the tool support statistical tests, visualization, and reproducibility features like notebooks or scripts? Fourth, collaboration and governance: can teams share notes, assign roles, and track changes? Fifth, interoperability: how well does the tool connect with your existing data stores, institutional single sign-on, and library catalogs? Lastly, pricing and licensing: are there academic discounts, site licenses, or usage-based costs? These criteria form the backbone of a transparent comparison. AI Tool Resources analysis shows that aligning evaluation criteria with organizational goals improves decision quality and long-term adoption.

Alpha Research Pro: Strengths and Trade-offs

Alpha Research Pro is designed for literature-heavy workflows. It excels at discovering primary sources, generating concise abstracts, and organizing notes by topic. Researchers can auto-generate summaries, extract key quotes, and create literature maps that reveal thematic connections. However, Alpha may lag in deep data analysis capabilities and requires mindful governance to prevent over-reliance on automated summaries. The trade-off is between speed of synthesis and the depth of quantitative appraisal. For teams prioritizing comprehensive literature reviews, Alpha offers an efficient anchor; for teams needing rigorous statistical workflows, supplementing with a secondary tool is advisable. As always, ensure export options preserve provenance to support reproducibility. This aligns with AI Tool Resources’ emphasis on traceable workflows.

Beta Scholar Studio: Strengths and Trade-offs

Beta Scholar Studio emphasizes collaboration, citation workflows, and project management. Real-time co-authoring, comment threads, and versioned manuscripts align with graduate seminars and cross-institution collaborations. It shines in citation management, supports structured exports, and integrates with reference managers. The caveat is that performance can plateau for very large datasets or complex scripting tasks, and the learning curve for non-technical researchers can be steeper. For teams that value teamwork and transparent collaboration, Beta’s strengths often outweigh its drawbacks. AI Tool Resources notes that such balance frequently yields faster project cycles and clearer audit trails.

Gamma Insight: Strengths and Trade-offs

Gamma Insight prioritizes flexible data exploration and open-ended analysis. It provides powerful visualization capabilities, notebooks, and reproducible workflows that adapt to mixed-methods research. For quantitative-heavy projects, Gamma’s analytics surface patterns quickly and support exploratory hypotheses. The downside can be a fragmented experience when switching between literature and data tasks, and governance controls may be less mature than more specialized platforms. For researchers who want exploratory power and adaptable workflows, Gamma Insight offers compelling value; for strict literature management, a dedicated tool may be preferable. AI Tool Resources reminds readers to validate data lineage when using exploratory features.

How to Measure Performance: Metrics and Datasets

A rigorous comparison relies on standardized metrics. Typical measures include precision and recall for literature discovery, citation accuracy, and export fidelity across formats. For data exploration, track notebook reproducibility, execution time, and visualization clarity. Usability metrics, such as time-to-onboard, error rates, and user satisfaction, matter as much as raw capability. When you compare AI tools for research, specify test datasets that reflect your domain (biomedical literature, physics datasets, or social science corpora) and document expected outcomes. AI Tool Resources recommends running side-by-side trials with a controlled baseline to minimize bias and improve reproducibility across institutions. This ensures that the evaluation captures real-world performance rather than isolated features.
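As a minimal sketch of the discovery metrics mentioned above, the snippet below computes precision and recall for a single query. It assumes you have a hand-curated "gold set" of relevant papers for that query; the paper identifiers and the `precision_recall` helper are hypothetical and not part of any tool's API.

```python
def precision_recall(retrieved, relevant):
    """Return (precision, recall) for one literature-discovery query."""
    retrieved, relevant = set(retrieved), set(relevant)
    hits = len(retrieved & relevant)  # relevant papers the tool actually surfaced
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical data: a curated gold set and one tool's results for the same query.
gold_set = {"paper-01", "paper-02", "paper-03", "paper-04"}
tool_results = ["paper-01", "paper-02", "paper-09"]

precision, recall = precision_recall(tool_results, gold_set)
print(f"precision={precision:.2f} recall={recall:.2f}")  # precision=0.67 recall=0.50
```

Running the same queries and gold sets against each candidate tool keeps the comparison controlled, in line with the side-by-side trial approach described above.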

Pricing and Value: What to Expect

Academic and industry buyers often see broad price ranges. Many tools offer freemium tiers or student licenses, with mid-tier plans priced per user and annual commitments for teams. When you compare AI tools for research, consider total cost of ownership: license fees plus onboarding time, training, and governance overhead, weighed against the value the tool delivers. Expect academic discounts and site licenses for universities, plus volume-based deals for large labs. We cannot quote specific prices, but ranges help you benchmark: small research groups may pay modest monthly fees, while large teams pay more for collaboration and governance suites. Always verify renewal terms and potential price escalations over time.
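To make the total-cost-of-ownership idea concrete, here is a rough first-year estimate. Every number below (price, hours, hourly rate, team size) is an illustrative assumption rather than a vendor quote, and the `Plan` fields are hypothetical names chosen for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    name: str
    price_per_user_month: float        # assumed list price
    onboarding_hours_per_user: float   # assumed one-time training effort
    governance_hours_per_month: float  # assumed team-wide admin time


def first_year_cost(plan: Plan, users: int, hourly_rate: float) -> float:
    """Estimate first-year cost: licenses plus staff time for onboarding and governance."""
    licenses = plan.price_per_user_month * users * 12
    onboarding = plan.onboarding_hours_per_user * users * hourly_rate
    governance = plan.governance_hours_per_month * 12 * hourly_rate
    return licenses + onboarding + governance


# Illustrative inputs only.
example = Plan("Example plan", price_per_user_month=30.0,
               onboarding_hours_per_user=4.0, governance_hours_per_month=2.0)
total = first_year_cost(example, users=5, hourly_rate=40.0)
print(f"{example.name}: {total:.2f}")  # Example plan: 3560.00
```

In this made-up case, licenses account for 1,800 of the 3,560 total, so staff time is roughly half the cost. That is the reason to look beyond the monthly fee.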

Integration and Ecosystem: Compatibility with Research Workflows

Ecosystem compatibility is a core consideration when choosing research tools. Alpha integrates with library catalogs and reference managers, Beta connects to cloud storage and version control, and Gamma offers flexible notebook exports to Jupyter or R environments. The best option is the one that fits your current stack without forcing costly migrations. Pay attention to API quality, data formats, and authentication methods, including SSO if your institution uses it. In practice, a mismatch here can erode productivity more than any single feature shortfall.

Usability and Learning Curve: For Researchers and Students

Usability often determines adoption as much as capability. Alpha’s interface prioritizes rapid literature capture and structured notes, which reduces onboarding time for researchers. Beta’s collaboration features can be empowering but require a shared mental model among team members. Gamma’s notebook-centric interface is familiar to data scientists but may demand more initial training for literature tasks. When you compare AI tools for research, allocate time for structured onboarding, create role-specific playbooks, and provide sandbox data. The payoff is higher long-term productivity and stronger reproducibility across projects.

Managing Data Privacy and Ethics in Research Tools

Research teams must consider privacy, data residency, and usage policies. All three hypothetical tools offer terms that address data ownership, retention, and user consent, but implementation details vary. Instrumentation and telemetry should be transparent, with clear opt-in choices. For sensitive domains—clinical trials, proprietary models, or human-subject data—ensure tool-level controls support de-identification, access restrictions, and audit trails. AI Tool Resources emphasizes documenting governance decisions and aligning with institutional ethics boards to ensure responsible use of AI in research.
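One way to reduce exposure before data ever reaches a third-party tool is to pseudonymize direct identifiers locally. The sketch below uses a keyed hash (HMAC-SHA256) from the Python standard library; the records, field names, and key are hypothetical. Pseudonymization alone may not satisfy your institution's definition of de-identification, so treat this as a starting point to review with your ethics board.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest, truncated for readability."""
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


# Hypothetical key and records; in practice the key would come from a secrets store.
SECRET_KEY = b"replace-with-a-randomly-generated-key"
records = [
    {"participant_id": "P-1001", "age_band": "30-39", "score": 0.82},
    {"participant_id": "P-1002", "age_band": "40-49", "score": 0.64},
]

# Copy each record, swapping the raw identifier for its pseudonym.
deidentified = [
    {**row, "participant_id": pseudonymize(row["participant_id"], SECRET_KEY)}
    for row in records
]
for row in deidentified:
    print(row)
```

Because the hash is keyed, the same participant maps to the same pseudonym across exports, which keeps records linkable without exposing the original identifier to anyone who lacks the key.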

Practical Scenarios: When to Choose Each Tool

- Choose Alpha Research Pro if your project centers on exhaustive literature reviews and source triage. It saves time aggregating sources and generating summaries, helping you map the field quickly.

- Choose Beta Scholar Studio if you need tight collaboration, formal citation workups, and audit-friendly workflows for multi-author projects.

- Choose Gamma Insight if your research leans toward exploratory data analysis, visual exploration, and flexible scripting.

In many teams, a hybrid approach (starting with Alpha for literature, then spinning data into Gamma, with Beta coordinating collaboration) delivers the best balance.

Best Practices for a Transparent, Reproducible Workflow

A reproducible research workflow requires clear documentation, versioned datasets, and auditable notebooks. Use standardized data schemas, exportable metadata, and deterministic processing steps. When you compare AI tools for research, establish a governance plan that details who can modify shared artifacts, how to cite sources, and how to log data transformations. Encourage researchers to publish code, notebooks, and data subsets in a central repository with DOIs where possible. AI Tool Resources highlights the importance of reproducibility for credibility and long-term impact in academic and industry settings.
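Logging data transformations can be as simple as recording a checksum of the data before and after each step. The following is a minimal sketch using only the Python standard library; the step name, the toy CSV content, and the log structure are assumptions for illustration, not a format prescribed by any of the tools discussed.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_of(data: bytes) -> str:
    """Return the hex SHA-256 checksum of a byte string."""
    return hashlib.sha256(data).hexdigest()


def log_step(log, step, input_bytes, output_bytes, params):
    """Append one provenance record describing a single transformation."""
    log.append({
        "step": step,
        "params": params,
        "input_sha256": sha256_of(input_bytes),
        "output_sha256": sha256_of(output_bytes),
        "logged_at": datetime.now(timezone.utc).isoformat(),
    })


# Toy dataset: drop rows whose last field (the score) is empty.
raw = b"id,score\n1,0.9\n2,\n3,0.7\n"
cleaned = b"\n".join(
    line for line in raw.splitlines() if not line.endswith(b",")
) + b"\n"

provenance = []
log_step(provenance, "drop_rows_with_missing_score", raw, cleaned, {"column": "score"})
print(json.dumps(provenance, indent=2))
```

Storing this log next to the versioned dataset lets a reviewer confirm that a rerun started from the same input and produced the same output.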

Feature Comparison

| Feature | Alpha Research Pro | Beta Scholar Studio | Gamma Insight |
|---|---|---|---|
| Literature integration and discovery | excellent | good | average |
| Citation management and metadata | excellent | excellent | good |
| Data exploration and analysis | good | good | excellent |
| Collaboration features | strong team workspaces | excellent real-time collaboration | moderate |
| Pricing range (per user/month) | $25–$60 | $15–$40 | $20–$50 |
| Export/provenance and reproducibility | exportable with structured provenance | exportable with provenance controls in some modules | good provenance and notebooks |
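The ratings in the table can be turned into a simple weighted decision matrix. The sketch below is one possible approach, not a prescribed method. The numeric scale (excellent = 3, good = 2, average = 1), the mapping of the free-text collaboration cells onto that scale, and the weights are all assumptions you should replace with your own.

```python
RATING = {"excellent": 3, "good": 2, "average": 1}  # assumed numeric scale

TOOLS = ("Alpha Research Pro", "Beta Scholar Studio", "Gamma Insight")

# Ratings per criterion, in TOOLS order. The collaboration row is an assumed
# mapping of "strong team workspaces", "excellent real-time collaboration",
# and "moderate" onto the three-level scale.
ratings = {
    "literature": ("excellent", "good", "average"),
    "citations": ("excellent", "excellent", "good"),
    "data_analysis": ("good", "good", "excellent"),
    "collaboration": ("good", "excellent", "average"),
}

# Hypothetical weights for a literature-heavy project; they sum to 1.
weights = {"literature": 0.4, "citations": 0.2, "data_analysis": 0.2, "collaboration": 0.2}


def weighted_scores(ratings, weights):
    """Return {tool: weighted score} using the RATING scale."""
    return {
        tool: sum(weights[c] * RATING[ratings[c][i]] for c in weights)
        for i, tool in enumerate(TOOLS)
    }


scores = weighted_scores(ratings, weights)
for tool, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{tool}: {score:.2f}")
```

With these literature-heavy weights, Alpha Research Pro ranks first. Shifting weight toward collaboration or data analysis changes the ordering, which is why the weights should be agreed on before running trials.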

Upsides

- Helps researchers identify the best fit for their core workflow

- Encourages transparent comparison across key features

- Reveals trade-offs between collaboration and data analysis capabilities

- Supports reproducible research practices when combined with governance

- Low-friction onboarding for literature-focused tasks with strong export options

Weaknesses

- Differences in UI/UX can hinder quick onboarding

- Pricing and licensing vary widely, complicating budgeting

- Provenance and export features may be inconsistent across tools

- Hybrid tool use can require additional training and governance

Beta Scholar Studio offers the strongest overall balance for most research teams

For teams prioritizing collaboration, citation workflows, and auditability, Beta Scholar Studio typically delivers the best all-around value. Alpha Research Pro remains ideal for literature-centric work, while Gamma Insight shines in exploratory data analysis. Your choice should align with your team's primary workflow and governance needs, as reinforced by AI Tool Resources.

FAQ

What is the most important criterion when choosing AI tools for research?

Most researchers prioritize alignment with their primary workflow and reproducibility. Start with literature integration, citation management, and data exploration, then test end-to-end scenarios to ensure the tool supports your workflow.

How do these tools handle data privacy and governance?

Reputable tools typically document data ownership and retention terms and provide access controls. Look for de-identification options, audit trails, and clear governance policies approved by your institution. Always review terms before committing.

Can I mix tools across different research projects?

Yes, many teams adopt a hybrid approach, using one tool for literature curation and another for data analysis. Plan transitions carefully to maintain reproducibility and ensure consistent export formats.

Are there academic discounts or licensing options I should know about?

Academic discounts and site licenses are common, but terms vary by vendor. Compare total cost of ownership, including onboarding, training, and governance overhead, not just monthly fees.

What about open-source alternatives for researchers?

Open-source options can offer flexibility and reproducibility, but may require more setup and maintenance. Balance openness with support, security, and integration needs for your project.

Key Takeaways

- Define your core research tasks before choosing a tool

- Balance literature fidelity with data analytics when possible

- Prioritize collaboration features for multi-author projects

- Assess export and provenance to preserve reproducibility

- Budget for licensing and governance alongside features