AI Tool for Health Care: A Practical Guide for 2026

Explore how AI tools for health care enhance diagnostics, workflow efficiency, and patient outcomes. This educational guide covers use cases, governance, integration, ethics, and practical adoption tips for developers, researchers, and students.

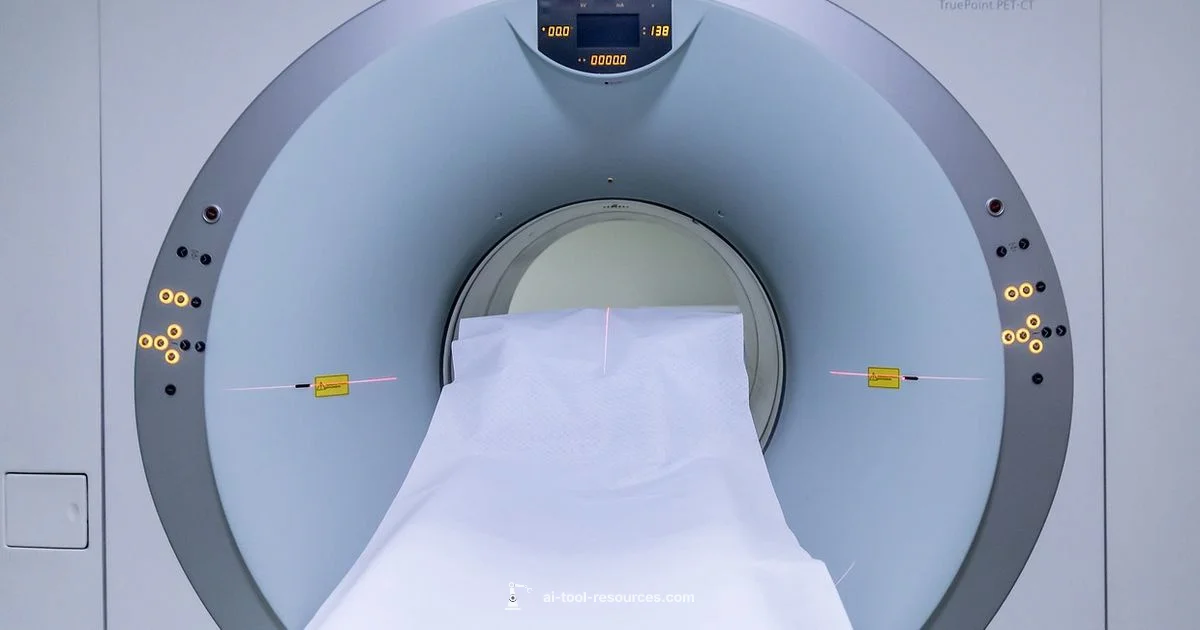

An AI tool for health care combines data analytics, imaging interpretation, and decision support to assist clinicians while protecting patient privacy. Well-validated tools can improve diagnostic accuracy, speed up workflows, and help standardize care across departments. They can augment radiology, pathology, and primary care by providing real-time recommendations, flagging anomalies, and automating routine documentation. Properly deployed, they support clinicians without replacing human judgment.

What is an AI tool for health care?

According to AI Tool Resources, an AI tool for health care is software that uses artificial intelligence to turn data into actionable clinical insight. These tools span a broad spectrum. At one end are algorithms that interpret medical images and natural language processing (NLP) that converts physician notes into structured data. At the other are predictive models that flag at-risk patients and automation that handles routine administrative tasks. Definitions vary across domains, but the common thread is that these systems augment human clinicians rather than replace them. Effective implementations are built around clear clinical goals, patient safety, data quality, and regulatory compliance, with governance and measurable success criteria to guide adoption.

In practice, organizations typically start with a narrow problem, such as reducing turnaround time for imaging reports or improving risk stratification in a defined patient population. Successful projects combine domain expertise, high-quality data, and transparent evaluation. The human-in-the-loop model, in which clinicians review AI outputs before anyone acts on them, remains essential to preserve clinical judgment and patient trust. This article takes a pragmatic approach, focusing on real-world workflows, governance burdens, and practical steps that teams can follow to move from pilot to scalable deployment.
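The human-in-the-loop pattern described above can be sketched in Python. In this minimal sketch, an AI suggestion stays non-actionable until a clinician approves it. The names (`AISuggestion`, `ReviewStatus`, `review`, `actionable`) are hypothetical and not taken from any specific product. A real system would also persist each decision to an audit log.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ReviewStatus(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class AISuggestion:
    """A model output awaiting clinician review (hypothetical schema)."""
    patient_id: str
    finding: str
    model_score: float
    status: ReviewStatus = ReviewStatus.PENDING
    reviewer: Optional[str] = None


def review(suggestion: AISuggestion, clinician: str, accept: bool) -> AISuggestion:
    """Record the clinician's decision; the clinician, not the model, decides."""
    suggestion.status = ReviewStatus.APPROVED if accept else ReviewStatus.REJECTED
    suggestion.reviewer = clinician
    return suggestion


def actionable(suggestion: AISuggestion) -> bool:
    """Only clinician-approved suggestions may flow into orders or the chart."""
    return suggestion.status is ReviewStatus.APPROVED


if __name__ == "__main__":
    s = AISuggestion(patient_id="p-001", finding="possible lung nodule", model_score=0.87)
    print(actionable(s))  # False: not yet reviewed
    review(s, clinician="dr_lee", accept=True)
    print(actionable(s))  # True: approved by a clinician
```

The key design choice is that the default state is `PENDING`. A model output can only become actionable through an explicit human decision.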

Core capabilities and use cases

Modern AI tools in health care deliver capabilities that touch almost every department:

- In radiology, AI-powered image analysis highlights potential findings and triages studies for faster reading.
- In pathology, image-based classifiers assist with tissue characterization.
- In primary care and hospital medicine, predictive analytics identify patients at risk of deterioration, enabling proactive interventions.
- NLP-driven systems extract data from unstructured notes to populate structured fields in EHRs. This reduces manual entry and improves data quality.
- Administrative workflows benefit from automated appointment scheduling, coding, and claims processing, freeing clinicians to focus on direct patient care.

Additional benefits include decision support at the point of care, patient engagement through personalized education, and monitoring systems that detect adverse events in real time. Across all use cases, explainability and clinician collaboration are critical. Models should produce interpretable outputs, and clinicians should validate results within their local context. Each domain has unique needs, but the core value is the same: faster, more accurate insights that support better outcomes when paired with domain expertise and robust governance.
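The note-to-structured-field idea can be made concrete with a deliberately simple, rule-based sketch. It uses regular expressions to pull a few vital signs out of free text. It is a stand-in for a trained clinical NLP model, not an example of one. The patterns and field names are hypothetical, and real notes also require handling negation, units, abbreviations, and misspellings.

```python
import re
from typing import Dict, Optional

# Hypothetical patterns for a few vital signs; each captures one numeric value.
PATTERNS = {
    "systolic_bp": re.compile(r"\bBP\s*(\d{2,3})\s*/\s*\d{2,3}\b", re.IGNORECASE),
    "diastolic_bp": re.compile(r"\bBP\s*\d{2,3}\s*/\s*(\d{2,3})\b", re.IGNORECASE),
    "heart_rate": re.compile(r"\bHR\s*(\d{2,3})\b", re.IGNORECASE),
    "temp_c": re.compile(r"\bT(?:emp)?\s*(\d{2}(?:\.\d)?)\s*C\b", re.IGNORECASE),
}


def extract_vitals(note: str) -> Dict[str, Optional[float]]:
    """Map free-text vitals to structured fields; values not found stay None."""
    fields: Dict[str, Optional[float]] = {}
    for name, pattern in PATTERNS.items():
        match = pattern.search(note)  # first occurrence only
        fields[name] = float(match.group(1)) if match else None
    return fields


if __name__ == "__main__":
    note = "Pt seen in clinic. BP 142/88, HR 96, Temp 38.2 C. Denies chest pain."
    print(extract_vitals(note))
```

Returning `None` for missing values, rather than a default number, keeps gaps visible to downstream EHR fields and reviewers.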

Data, privacy, and governance

Health care AI relies on high-quality data, from imaging files to structured EHR fields and free-text notes. Data quality, lineage, and provenance matter because models only perform well when fed clean, representative data. Privacy and security are non-negotiable: patient consent, data de-identification, and strict access controls are essential, and organizations must comply with applicable regulations and industry standards. Governance structures—cross-disciplinary committees, risk scoring, and formal escalation paths—help manage bias, drift, and safety concerns. AI vendors should provide transparent documentation on data sources, validation cohorts, and performance on diverse populations. Regular audits, third-party evaluations, and clear incident response procedures reinforce trust. AI Tool Resources analysis shows that the most successful deployments pair strong governance with clear metrics and clinician involvement to ensure outputs align with clinical reality.
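One building block for privacy-preserving pipelines is pseudonymization. It drops direct identifiers and replaces the record number with a keyed hash, so records can still be linked without exposing the original identifier. The sketch below uses HMAC-SHA256 from Python's standard library, and the field names are hypothetical. On its own this is not complete de-identification, because remaining fields such as age or diagnosis can act as quasi-identifiers. Which fields must be removed depends on the regulations that apply to you.

```python
import hashlib
import hmac
from typing import Dict

# Hypothetical set of direct identifiers; the real list comes from your governance policy.
DIRECT_IDENTIFIERS = {"name", "phone", "address", "email"}


def pseudonymize(record: Dict[str, str], secret_key: bytes) -> Dict[str, str]:
    """Drop direct identifiers and replace the MRN with an HMAC-SHA256 token.

    The same MRN maps to the same token under the same key, so records remain
    linkable; without the key, the token cannot be recomputed from a known MRN.
    """
    out = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS and k != "mrn"}
    out["patient_token"] = hmac.new(
        secret_key, record["mrn"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return out


if __name__ == "__main__":
    key = b"load-me-from-a-secrets-manager"  # placeholder; never hard-code real keys
    raw = {"mrn": "00123456", "name": "Jane Doe", "phone": "555-0100", "age": "67", "dx_code": "I50.9"}
    print(pseudonymize(raw, key))
```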

Integrating AI tools into clinical workflows

Integration with existing systems, especially electronic health records (EHRs), is a major success factor. Interoperability standards such as FHIR and well-documented APIs enable data exchange between AI tools and health information systems. Clinicians should be involved early to map decision points, ensure explainability, and tailor interfaces to minimize cognitive load. Pilot programs should use a measurable, time-limited scope with defined success criteria, such as reduction in report turnaround time or improvement in a specific patient outcome. Training and change-management activities—retraining staff, updating protocols, and clarifying accountability—are essential to adoption. Monitoring dashboards that track accuracy, latency, user satisfaction, and patient safety events help teams adjust in real time. When designed thoughtfully, AI-enabled workflows reduce duplication, shorten cycles, and support clinicians without increasing administrative burden.
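FHIR-based data exchange can be illustrated with a short sketch that reads numeric Observation values out of a FHIR R4 searchset Bundle. This is the kind of JSON a server typically returns for a search such as `GET [base]/Observation?patient=123&code=http://loinc.org|8867-4`. The sketch assumes the Bundle has already been fetched and parsed into a Python dict, and the sample data is made up. Production code would also need authentication, paging through `Bundle.link`, and error handling.

```python
from typing import Any, Dict, List, Tuple

LOINC = "http://loinc.org"


def observations_by_code(bundle: Dict[str, Any], loinc_code: str) -> List[Tuple[str, float, str]]:
    """Return (effectiveDateTime, value, unit) for Observations matching a LOINC code.

    Results are sorted by timestamp string, which is chronological only when all
    timestamps share the same ISO 8601 format and time zone.
    """
    results: List[Tuple[str, float, str]] = []
    for entry in bundle.get("entry", []):
        resource = entry.get("resource", {})
        if resource.get("resourceType") != "Observation":
            continue  # searchset Bundles may also contain included resources
        codings = resource.get("code", {}).get("coding", [])
        if not any(c.get("system") == LOINC and c.get("code") == loinc_code for c in codings):
            continue
        quantity = resource.get("valueQuantity")
        if not quantity or "value" not in quantity:
            continue  # skip non-numeric results instead of guessing a value
        results.append(
            (resource.get("effectiveDateTime", ""), float(quantity["value"]), quantity.get("unit", ""))
        )
    return sorted(results)


if __name__ == "__main__":
    sample_bundle = {
        "resourceType": "Bundle",
        "type": "searchset",
        "entry": [
            {"resource": {
                "resourceType": "Observation",
                "status": "final",
                "code": {"coding": [{"system": LOINC, "code": "8867-4", "display": "Heart rate"}]},
                "effectiveDateTime": "2026-01-02T08:00:00Z",
                "valueQuantity": {"value": 96, "unit": "beats/minute"},
            }},
            {"resource": {"resourceType": "Patient", "id": "123"}},
        ],
    }
    print(observations_by_code(sample_bundle, "8867-4"))
```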

Validation, safety, and risk management

Validation is a multi-layered process that extends beyond initial accuracy numbers. Prospective and retrospective studies, cross-site validation, and human-in-the-loop review are common components of robust assessment. It is critical to define performance thresholds that align with clinical risk, and to establish monitoring for model drift—changes in input data distributions that degrade performance over time. Safety checks should include failure modes, fallback rules, and alarms for unusual outputs. Regulatory considerations vary by jurisdiction, but most mature programs implement rigorous governance, incident reporting, and post-market surveillance. Transparent reporting on limitations and expected use cases helps clinicians understand when to trust AI outputs and when to seek human judgment. A strong emphasis on reproducibility and audit trails supports accountability and continuous improvement.
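Drift monitoring needs a concrete statistic. One common choice, not prescribed by this guide, is the population stability index (PSI). PSI compares the binned distribution of a model input in recent data against a baseline such as the validation cohort, using PSI = Σᵢ (aᵢ − eᵢ) ln(aᵢ / eᵢ), where eᵢ and aᵢ are the baseline and current shares in bin i. The sketch below uses equal-width bins over the baseline range. The 0.1 and 0.25 cut-offs are a rule of thumb borrowed from credit-risk modeling, and clinical teams should set their own thresholds based on clinical risk.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def _bin_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of values per bin; len(edges) interior edges give len(edges) + 1 bins."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], n_bins: int = 10, eps: float = 1e-6
) -> float:
    """PSI = sum over bins of (a - e) * ln(a / e), e = baseline share, a = current share."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / n_bins
    edges = [lo + width * i for i in range(1, n_bins)]  # values outside [lo, hi] land in end bins
    expected = _bin_shares(baseline, edges)
    actual = _bin_shares(current, edges)
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi: float) -> str:
    """Map PSI to an action using rule-of-thumb cut-offs (tune with clinical input)."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "monitor"
    return "investigate"


if __name__ == "__main__":
    baseline_ages = [float(a) for a in range(20, 80)]
    shifted_ages = [a + 15 for a in baseline_ages]  # e.g. rollout to an older population
    print(drift_status(population_stability_index(baseline_ages, baseline_ages)))  # stable
    print(drift_status(population_stability_index(baseline_ages, shifted_ages)))   # investigate
```

An alarm on "investigate" is one way to implement the monitoring and unusual-output alerts described above. It should trigger human review, not automatic retraining.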

Ethics, bias, and patient trust

Bias in training data can translate into unequal care, so diverse datasets and ongoing fairness assessments are essential. Patient autonomy and consent principles apply to AI-driven recommendations, particularly in sensitive areas like mental health or genetics. Clinicians must retain ultimate decision-making authority, and patients should be informed about AI involvement in their care. Clear communication about what the AI does, its limitations, and how it affects outcomes builds trust. Organizations should publish plain-language summaries of model performance and governance practices, and provide channels for patients to ask questions or raise concerns. Ethical AI in health care also means avoiding overreliance on automation and preserving human-centered care.
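A fairness assessment can start with something as simple as comparing sensitivity (the true positive rate) across patient subgroups. This check is related to the "equal opportunity" criterion. The sketch below assumes that for each patient you have a subgroup label, the confirmed outcome, and the model's flag. The group names and records are made up for illustration. Sensitivity is only one of several fairness metrics a review should consider.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def sensitivity_by_group(records: Iterable[Tuple[str, int, int]]) -> Dict[str, float]:
    """records: (group, y_true, y_pred), where 1 means condition present / patient flagged."""
    positives: Dict[str, int] = defaultdict(int)
    true_positives: Dict[str, int] = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_positives[group] += 1
    # Groups with no confirmed positives are omitted: sensitivity is undefined for them.
    return {g: true_positives[g] / n for g, n in positives.items()}


def max_sensitivity_gap(rates: Dict[str, float]) -> float:
    """Largest difference in sensitivity between any two groups."""
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    records = [
        ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0), ("group_a", 0, 0),
        ("group_b", 1, 1), ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 1, 0), ("group_b", 0, 1),
    ]
    rates = sensitivity_by_group(records)
    print(rates)                       # {'group_a': 0.75, 'group_b': 0.5}
    print(max_sensitivity_gap(rates))  # 0.25
```

A gap like this one means the model misses the condition more often in `group_b`. That finding would warrant investigation before deployment.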

Implementation roadmap and best practices

A practical implementation follows a phased roadmap:

- Phase 1: define the clinical problem and success metrics, complete a data readiness assessment, and build stakeholder buy-in.

- Phase 2: select a vendor or open-source approach, perform due diligence on data handling, and run a small-scale pilot.

- Phase 3: expand to additional sites with governance gates, refine the user interface, and integrate with workflows.

- Phase 4: scale and monitor, with ongoing retraining as data shifts.

Best practices include starting with an easy win, maintaining clinician-led governance, keeping documentation transparent, and establishing clear accountability. Documentation should cover data sources, model versioning, validation results, and incident response plans. Regular training and refresher sessions help sustain adoption.
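The documentation items listed above can also serve as a governance gate between phases. The sketch below is hypothetical: `ModelRecord` and `gate_check` are illustrative names. The required fields mirror this section's list (data sources, model version, validation results, incident response plan), plus a named clinical owner for accountability.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class ModelRecord:
    """Minimal documentation record for one deployed model version (hypothetical schema)."""
    model_name: str
    model_version: str
    data_sources: List[str] = field(default_factory=list)
    validation_results: str = ""
    incident_response_plan: str = ""
    clinical_owner: str = ""


def gate_check(record: ModelRecord) -> List[str]:
    """Return the names of empty fields; an empty list means the gate can be passed."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


if __name__ == "__main__":
    draft = ModelRecord(
        model_name="sepsis-alert",  # hypothetical model
        model_version="1.2.0",
        data_sources=["EHR vitals extract"],
    )
    print(gate_check(draft))  # ['validation_results', 'incident_response_plan', 'clinical_owner']
```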

Costs, ROI, and feasibility

Costs for AI in health care vary widely, driven by scope, data needs, integration complexity, and ongoing support. Organizations should distinguish between upfront investment (data preparation, software licenses, and integration work) and ongoing costs (maintenance, monitoring, and governance). Because every setting is different, it is prudent to build a strong business case that estimates time-to-value, potential efficiency gains, and improved outcomes. ROI can come from reduced completion times, fewer errors, and better patient management, but it requires careful measurement and governance to ensure AI outputs translate into real improvements. When evaluating vendors, seek transparent pricing, clear service-level agreements, and explicit data-handling commitments.
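A back-of-the-envelope business case separates the upfront and ongoing costs described above and compares them with an estimated monthly benefit. The sketch below computes simple, undiscounted ROI over a planning horizon and a payback period. All figures in the example are invented placeholders. The hard part is estimating the monthly benefit credibly from measured time savings or avoided errors.

```python
import math
from dataclasses import dataclass


@dataclass
class CostModel:
    upfront: float          # data preparation, software licenses, integration work
    monthly_running: float  # maintenance, monitoring, governance
    monthly_benefit: float  # estimated value of time saved, errors avoided, etc.


def simple_roi(model: CostModel, months: int) -> float:
    """(total benefit - total cost) / total cost over the horizon; no discounting."""
    total_cost = model.upfront + model.monthly_running * months
    total_benefit = model.monthly_benefit * months
    return (total_benefit - total_cost) / total_cost


def payback_months(model: CostModel) -> float:
    """Months until cumulative net benefit covers the upfront cost (inf if it never does)."""
    net_monthly = model.monthly_benefit - model.monthly_running
    return math.inf if net_monthly <= 0 else model.upfront / net_monthly


if __name__ == "__main__":
    pilot = CostModel(upfront=200_000, monthly_running=10_000, monthly_benefit=25_000)  # placeholders
    print(f"36-month ROI: {simple_roi(pilot, 36):.0%}")    # 61%
    print(f"Payback: {payback_months(pilot):.1f} months")  # 13.3 months
```

If the estimated benefit does not exceed running costs, `payback_months` returns infinity. This makes an unviable case obvious rather than hiding it in a large number.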

The future: skills and teams for developers and researchers

The next wave of health care AI will emphasize practical deployment, reliability, and safety. Developers should focus on robust ML engineering practices, including MLOps, secure APIs, model monitoring, and reproducible pipelines. Clinician-facing design matters: intuitive interfaces, interpretable explanations, and accessible documentation facilitate adoption. Teams should invest in data governance, privacy-by-design, and bias mitigation from day one, with continuous learning loops that incorporate user feedback and real-world outcomes. Finally, ongoing collaboration between engineers, clinicians, patients, and regulators will shape responsible AI that expands access to high-quality care. The AI Tool Resources team recommends ongoing education, cross-disciplinary collaboration, and transparent reporting to sustain progress across 2026 and beyond.
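Reproducible pipelines and model versioning both benefit from a stable identifier for each training run. One simple approach, offered here as an assumption rather than a prescribed method, is to hash the training configuration, the input data, and the code version together with SHA-256. The function name and config keys are hypothetical, and MLOps platforms typically provide their own run tracking.

```python
import hashlib
import json
from typing import Any, Dict


def run_fingerprint(config: Dict[str, Any], data: bytes, code_version: str) -> str:
    """Combine config, data, and code version into one SHA-256 hex digest.

    Each part is hashed separately first, so boundaries between parts are unambiguous,
    and the config is serialized with sorted keys so key order does not change the result.
    """
    parts = [
        json.dumps(config, sort_keys=True, separators=(",", ":")).encode("utf-8"),
        data,
        code_version.encode("utf-8"),
    ]
    combined = hashlib.sha256()
    for part in parts:
        combined.update(hashlib.sha256(part).digest())
    return combined.hexdigest()


if __name__ == "__main__":
    config = {"model": "gradient_boosting", "learning_rate": 0.05, "seed": 42}
    training_data = b"patient_token,age,label\n"  # stand-in for the real training file's bytes
    print(run_fingerprint(config, training_data, code_version="git:abc1234"))
```

Storing this fingerprint alongside model versions and validation results ties each deployed model back to exactly what produced it.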

FAQ

What is an AI tool for health care?

An AI tool for health care is software that uses machine learning and data analytics to support clinical decision-making, automate routine tasks, and improve patient outcomes. These systems can analyze images, extract insights from notes, and predict risk to guide care.

How do I start implementing an AI tool in a health care setting?

Begin with a clearly defined clinical problem, assemble high-quality data, establish governance, and run a small, measurable pilot. Involve clinicians from the start and plan for integration, training, and ongoing evaluation.

Which AI tool is better for imaging versus EHR data?

Imaging AI focuses on visual data from scans, while EHR AI processes text and structured data in patient records. Both require domain validation and integration, with the best results coming from complementary use across the care continuum.

What are common challenges or risks of AI in health care?

Data quality, bias, regulatory compliance, and integration hurdles can limit effectiveness. Address these with governance, ongoing monitoring, clinician involvement, and clear escalation paths.

How much does an AI tool for health care cost?

Costs vary by scope, data needs, and vendor. Expect upfront investments in data prep and integration, plus ongoing maintenance. Build a business case to quantify time-to-value and patient outcomes.

What are best practices to ensure safety, privacy, and ethics?

Establish governance, validate models, and ensure privacy-by-design. Involve diverse stakeholders and monitor for drift, always keeping patient trust and clinician oversight at the center.

Key Takeaways

- Define a clear clinical problem before selecting AI tools.

- Prioritize data governance and patient privacy from day one.

- Pilot programs validate value and guide scaling.

- Continuously monitor AI performance and involve clinicians in governance.