How to Get the Most Out of AI Coding Tools

Discover practical steps to maximize AI coding tools—from tool selection and IDE integration to testing, governance, and continuous improvement for developer teams.

This guide provides practical, actionable steps to maximize AI coding tools by selecting the right tools, integrating them into your workflow, and applying best practices for testing and collaboration. You’ll learn setup, usage patterns, and risk management to boost productivity and code quality. Expect concrete steps you can implement today, with examples for Python, JavaScript, and data pipelines. Requirements: access to at least one AI coding tool, a capable IDE, and version control.

Why AI coding tools matter

AI coding tools have the power to accelerate development, reduce boilerplate, and surface alternative approaches to problems. When used thoughtfully, they can help you write cleaner code faster, explore multiple design options, and catch edge cases you might miss. However, tools are only as good as how they are used. The most effective developers treat AI assistants as collaborators, not crutches. According to AI Tool Resources, the best practitioners blend human judgment with AI suggestions, using the tools to handle repetitive tasks and to accelerate ideation without sacrificing code quality or security. This balance—humans + AI—drives sustainable productivity and better outcomes across languages and domains.

Understanding where AI coding tools fit in your workflow is the first step. They excel at boilerplate, pattern generation, quick scaffolding, and rapid prototyping. They are least helpful when handed full responsibility for critical decisions or when used without guardrails. Use AI to reduce your cognitive load, not to replace your judgment. This mindset sets the foundation for a scalable, maintainable development process.

By framing your goals clearly and aligning tool capabilities with your architecture, you can unlock consistent gains without sacrificing readability, testability, or security. The strongest teams document decision criteria for tool use, maintain shared templates, and review AI-generated outputs just like any other code change.

How to choose the right AI coding tool

Selecting the right AI coding tool requires a clear map of your needs, your tech stack, and your risk tolerance. Start by listing core tasks you want to augment: code completion, boilerplate generation, test scaffolding, or documentation support. Then evaluate tools along several axes: language and framework support, model quality, latency, and cost. Privacy and data handling matter, especially if your codebase includes proprietary logic. Prefer tools with local execution options for sensitive projects or robust on-premises offerings for large teams. Consider the ecosystem: integrations with your IDE, version control, CI/CD, and your favorite linters or formatters. Look for reproducible results across languages and clear prompts/templates to maintain consistency. Finally, run a small pilot on a real task and measure outcomes (time to complete, defect rate, and reviewer effort). This helps you quantify value before scaling.
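
A pilot like this can be reduced to a few numbers per tool. The sketch below is one possible way to do that in Python, assuming you record minutes to complete, defects found, and reviewer minutes for each pilot task. The tool names, the sample figures, and the `PilotResult` and `summarize` names are hypothetical, not part of any particular product.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PilotResult:
    """One pilot task completed with one tool (all fields are illustrative)."""
    tool: str
    minutes_to_complete: float
    defects_found: int
    reviewer_minutes: float


def summarize(results):
    """Average each metric per tool so candidates can be compared side by side."""
    by_tool = {}
    for r in results:
        by_tool.setdefault(r.tool, []).append(r)
    return {
        tool: {
            "avg_minutes": mean(r.minutes_to_complete for r in rows),
            "avg_defects": mean(r.defects_found for r in rows),
            "avg_reviewer_minutes": mean(r.reviewer_minutes for r in rows),
        }
        for tool, rows in by_tool.items()
    }


# Hypothetical pilot data, not real measurements.
pilot = [
    PilotResult("tool_a", 40, 2, 15),
    PilotResult("tool_a", 50, 1, 20),
    PilotResult("tool_b", 60, 0, 10),
]
summary = summarize(pilot)
```

Averages over a handful of tasks are noisy, so treat the summary as a conversation starter for the team rather than a verdict.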

As you compare options, prioritize transparency: how models are updated, how outputs are logged, and how feedback loops are managed. The right choice aligns with your team’s skill level, velocity, and governance requirements. AI Tool Resources analysis shows that teams that document tool usage patterns, maintain shared templates, and set guardrails consistently outperform those that treat AI as a black box. This diligence builds trust and long-term productivity.

Integrating AI coding into your workflow

Integration is where theory becomes practice. Start by embedding AI-assisted workflows into your IDE. Install an AI plugin or extension that supports your language and tooling, and configure it to match your coding standards. Create a small set of templates and prompts for common tasks (e.g., function skeletons, tests, and documentation snippets) and version them in your repository. Establish a process for prompting: who prompts, when prompts are amended, and how outputs are reviewed. Replace ad-hoc usage with a guided routine: run a prompt, edit the result, run tests, and push. This discipline keeps quality high and helps teammates learn consistent patterns.
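
One lightweight way to version prompts alongside code is to keep them as plain templates. The sketch below uses Python's standard `string.Template`. The template names, placeholder fields, and the `render_prompt` helper are assumptions made for illustration, and the rendered text would still need to be passed to whichever AI tool you use.

```python
from string import Template

# Hypothetical prompt templates; in practice these could live in files
# under version control (for example a prompts/ directory in the repo).
PROMPTS = {
    "unit_test": Template(
        "Write $framework unit tests for the function below.\n"
        "Follow these project conventions: $conventions\n\n"
        "$source_code"
    ),
    "docstring": Template(
        "Write a docstring for the function below in $style style.\n\n"
        "$source_code"
    ),
}


def render_prompt(name, **fields):
    """Fill a named template; raises KeyError if the name or a field is missing."""
    return PROMPTS[name].substitute(**fields)


prompt = render_prompt(
    "unit_test",
    framework="pytest",
    conventions="one assertion per test, descriptive test names",
    source_code="def add(a, b):\n    return a + b",
)
```

Because `substitute` fails loudly on a missing field, a half-filled prompt is caught before it reaches the tool.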

Promote transparency by logging AI-generated changes in your VCS along with rationale and review notes. Keep a central library of prompts and templates, with notes on what works best for specific tasks and languages. Encourage pair programming to review AI outputs and provide feedback. Over time, your AI-enabled workflow becomes a repeatable machine for speed—without compromising code readability or maintainability.
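
Logging AI involvement in version control can be as simple as a consistent commit message format. The sketch below builds a message with Git-style trailers. `Reviewed-by` is a widely used trailer, while `AI-Assisted` and `Prompt-Template` are hypothetical keys chosen for this example, as is the `build_commit_message` helper.

```python
def build_commit_message(summary, rationale, prompt_template, reviewer):
    """Return a commit message whose trailers record AI involvement."""
    trailers = [
        "AI-Assisted: yes",                     # hypothetical trailer key
        f"Prompt-Template: {prompt_template}",  # hypothetical trailer key
        f"Reviewed-by: {reviewer}",
    ]
    return f"{summary}\n\n{rationale}\n\n" + "\n".join(trailers) + "\n"


message = build_commit_message(
    summary="Add retry to fetch_orders",
    rationale="Generated from the retry template, then edited by hand.",
    prompt_template="prompts/retry.txt",
    reviewer="Jane Doe",
)
```

A fixed format like this makes AI-assisted commits easy to find later with a plain text search of the history.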

Safe coding practices when using AI tools

Safety is non-negotiable when relying on AI for development. First, treat AI-generated code as a draft: review, test, and refactor. Implement guardrails such as linting, unit tests, and security scans for every AI-generated snippet. Avoid feeding sensitive credentials, secrets, or proprietary logic into AI tools, especially cloud-based services. Use local or on-premise options when possible, or rely on providers with strong data handling policies and transparent data-use terms. Establish ownership: even if AI writes a patch, a human should approve it before merging.
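
To keep credentials out of prompts, a team might run text through a redaction step before it leaves the machine. The sketch below is a deliberately small illustration with a single regular expression. The pattern and the `redact_secrets` name are assumptions for this example, and a dedicated secret scanner covers far more formats than this does.

```python
import re

# Illustrative pattern only: matches assignments such as
#   API_KEY = "abc123"   or   password: hunter2
SECRET_ASSIGNMENT = re.compile(
    r"""(api[_-]?key|secret|password|token)(\s*[:=]\s*)(["']?)([^\s"']+)\3""",
    re.IGNORECASE,
)


def redact_secrets(text):
    """Return (redacted_text, number_of_redactions)."""
    return SECRET_ASSIGNMENT.subn(r"\1\2\3[REDACTED]\3", text)


snippet = 'API_KEY = "abc123"\nprint("hello")'
redacted, count = redact_secrets(snippet)
```

A simple pattern like this will produce false positives (for example, it also redacts the right-hand side of `token = get_token()`), which is usually the safer failure mode for this purpose.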

Document prompts and outputs to preserve traceability. Maintain a rollback plan for AI-assisted changes, and ensure that critical paths (security, authentication, and data access) receive extra scrutiny. Finally, educate your team about limitations: AI may generate syntactically correct code that fails under edge cases or introduces subtle bugs. Cultivate a culture of critical review rather than blind trust.

Practical patterns and examples

Put AI tools to work with concrete patterns that scale. Use AI to generate boilerplate tests and skeletons, then rewrite them to fit your project’s style. Create prompts that produce well-structured, idiomatic code rather than single-line hacks. Leverage AI for documentation generation—inline comments, README sections, and API docs can all be drafted quickly and refined by humans.

In practice, you might start with a small module and have the AI propose multiple implementation options. Compare approaches, run tests, and select the best path. Use AI to review diffs, surface potential issues, and suggest improvements. For data pipelines, AI can help with schema generation, validation rules, and unit tests for ETL steps. The key is to keep outputs modular, testable, and aligned with your architecture.
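
For the data pipeline case, the kind of output worth asking an AI tool to draft, and then reviewing by hand, might look like the sketch below: a small schema check, one transform, and unit tests for both. The schema, field names, and function names are hypothetical.

```python
import unittest

# Hypothetical schema for one ETL step: field name -> required Python type.
ORDER_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def validate_row(row, schema=ORDER_SCHEMA):
    """Return a list of problems found in one record (empty list means valid)."""
    problems = []
    for field, expected_type in schema.items():
        if field not in row:
            problems.append(f"missing field: {field}")
        elif not isinstance(row[field], expected_type):
            problems.append(f"{field} should be {expected_type.__name__}")
    return problems


def normalize_currency(row):
    """Transform step: upper-case the currency code without mutating the input."""
    return {**row, "currency": row["currency"].strip().upper()}


class TestOrderStep(unittest.TestCase):
    def test_valid_row_passes(self):
        row = {"order_id": 1, "amount": 9.5, "currency": "usd"}
        self.assertEqual(validate_row(row), [])

    def test_missing_field_is_reported(self):
        row = {"order_id": 1, "amount": 9.5}
        self.assertEqual(validate_row(row), ["missing field: currency"])

    def test_currency_is_normalized(self):
        row = normalize_currency({"order_id": 1, "amount": 9.5, "currency": " usd "})
        self.assertEqual(row["currency"], "USD")
```

Saved as a module, the tests can be run with `python -m unittest`. Keeping validation and transformation in separate small functions is what makes each piece easy to test and to review.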

As you scale, codify these patterns into templates and scripts that your team can reuse. Shared templates speed onboarding for new engineers and maintain consistency across projects. By building a living library of AI-assisted patterns, you turn experimentation into repeatable value.

Measuring impact and continuous improvement

To sustain momentum, track measurable outcomes from AI-assisted development. Common metrics include time-to-delivery, defect density, and review cycles. Measure how AI usage changes your throughput without increasing regression risk. Collect qualitative feedback from developers about confidence, clarity, and maintainability of AI-generated code. Use this data to refine prompts, update templates, and adjust guardrails.
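
The before-and-after comparison can be computed with very little code. The sketch below assumes defect density is measured as defects per thousand lines of code (KLOC). All figures are hypothetical, and `defect_density` and `percent_change` are illustrative helper names.

```python
def defect_density(defects, kloc):
    """Defects per thousand lines of code."""
    if kloc <= 0:
        raise ValueError("kloc must be positive")
    return defects / kloc


def percent_change(before, after):
    """Relative change from before to after, as a percentage."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) * 100 / before


# Hypothetical figures for one team, before and after adopting an AI tool.
baseline = {"days_to_deliver": 10, "defects": 12, "kloc": 8, "review_cycles": 3}
with_ai = {"days_to_deliver": 8, "defects": 9, "kloc": 9, "review_cycles": 3}

report = {
    "delivery_time_change_pct": percent_change(
        baseline["days_to_deliver"], with_ai["days_to_deliver"]
    ),
    "defect_density_before": defect_density(baseline["defects"], baseline["kloc"]),
    "defect_density_after": defect_density(with_ai["defects"], with_ai["kloc"]),
    "review_cycles_change_pct": percent_change(
        baseline["review_cycles"], with_ai["review_cycles"]
    ),
}
```

Reporting delivery time next to defect density guards against celebrating a speedup that was paid for with more regressions.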

For governance, establish a quarterly review of AI tool performance, model updates, and security posture. Use a dashboard to monitor prompt usage, outputs, and reviewer actions. The goal is a data-informed loop: improve prompts, enhance templates, and incrementally raise standards. AI Tool Resources analysis shows that teams that systematically evaluate tool impact every few months realize more consistent productivity gains and fewer regressions. Maintain a bias toward continuous improvement rather than a one-off optimization.

Finally, celebrate small wins and share lessons across the organization. This builds trust in AI tooling and accelerates adoption while preserving quality and safety.

Overcoming common pitfalls and myths

Many teams encounter myths that AI will magically solve all programming problems or that humans will become redundant. The reality is more nuanced: AI accelerates cognitive tasks, but human judgment remains essential for design, architecture, and critical decisions. A common pitfall is over-reliance on AI outputs without validation, which can introduce subtle bugs or security risks. Another issue is fragmented tool usage—without shared templates and standards, teams waste time reconciling conflicting outputs.

To avoid these traps, implement a governance model that includes defined ownership, code reviews for AI-generated changes, and a central repository of approved prompts. Emphasize learning: encourage engineers to study AI-generated patterns and understand why certain outputs are chosen. Finally, maintain a growth mindset: continuous practice with AI tools, combined with rigorous testing and review, leads to sustainable improvements rather than temporary productivity spikes.

Tools & Materials

- AI coding tool(s) access (license or free tier with API access)

- Integrated development environment (IDE) (e.g., VS Code or JetBrains, with AI plugin support)

- Version control system (Git repository with remote hosting)

- Sample project or dataset (starter repo to test AI tooling on real tasks)

- Code quality and testing suite (linters, unit tests, CI integration)

- Documentation resources (access to official docs, tutorials, prompts library)

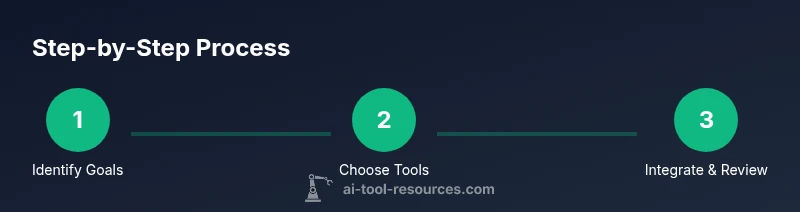

Steps

Estimated time: 90-120 minutes

1. Identify goals and constraints

Define what you want AI coding tools to achieve in this project (e.g., speed, consistency, reduced boilerplate). Establish success metrics and constraints (security, performance, and maintainability) to guide tool selection and usage.

Tip: Document measurable goals and map them to tool capabilities to avoid scope creep.

2. Inventory tools and compare capabilities

List candidate tools, compare language support, integration options, and data policies. Run a small pilot to compare outputs on representative tasks.

Tip: Create a side-by-side matrix of features, pricing, and governance terms.

3. Set up your workspace

Install required plugins or extensions, configure prompts/templates, and connect to your repositories. Create a baseline project to test workflows.

Tip: Version prompts with your codebase for reproducibility.

4. Design prompts and templates

Develop a set of prompts for common tasks (scaffolding, tests, documentation). Include example inputs/outputs and edge cases.

Tip: Keep prompts modular and language-specific for consistency.

5. Integrate AI into the IDE

Enable AI-assisted features in the editor, wire prompts to templates, and ensure outputs flow into your code review process.

Tip: Require at least one human review for all critical changes.

6. Establish testing and guardrails

Set up linting, unit tests, and security scans for AI-generated changes. Implement a rollback plan.

Tip: Treat AI outputs as drafts requiring verification.

7. Collaborate and review results

Use pair programming or code reviews to validate AI suggestions and capture feedback for prompts.

Tip: Log decisions and rationale in the commit history.

8. Measure impact and iterate

Track time savings, defect rates, and reviewer effort. Refine prompts and templates based on data.

Tip: Schedule regular review cycles to sustain gains.
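
The guardrail step above (linting, unit tests, and security scans) can be wired into a single gate script. The sketch below is one possible shape using Python's standard `subprocess` module. The `run_checks` helper is hypothetical, and the commands are placeholders that simply exit with a status code, so you would substitute your own linter, test runner, and scanner invocations.

```python
import subprocess
import sys


def run_checks(checks):
    """Run each (name, command) pair and return the names of the checks that failed."""
    failed = []
    for name, command in checks:
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            failed.append(name)
    return failed


# Placeholder commands so the sketch runs anywhere; in a real project these
# would be your linter, test runner, and security scanner invocations.
checks = [
    ("lint", [sys.executable, "-c", "print('lint ok')"]),
    ("tests", [sys.executable, "-c", "print('tests ok')"]),
    ("security", [sys.executable, "-c", "import sys; sys.exit(1)"]),
]
failed = run_checks(checks)
```

Running every check instead of stopping at the first failure gives reviewers the full picture of what an AI-generated change broke.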

FAQ

What are the best AI coding tools for beginners?

For beginners, start with widely adopted tools that offer strong language support, clear examples, and good documentation. Prioritize tools with local execution options to avoid data sharing whenever possible. Build familiarity through templates and simple projects before expanding usage.

For beginners, start with well-documented tools that support your language and offer clear examples, then grow your use with templates.

How do I avoid security risks when using AI code tools?

Avoid sending sensitive credentials or proprietary code to cloud AI services. Use on-premises or trusted providers with explicit data-handling policies, and apply static analysis, code reviews, and authorization checks to any AI-generated output.

Don’t send sensitive data to AI tools and use reviews and tests to keep security strong.

Can AI coding tools replace human developers?

No. AI coding tools accelerate and augment development, but humans remain essential for design decisions, architecture, and complex problem solving. The best teams use AI to handle repetitive tasks while focusing human effort on high-value work.

AI helps, but it doesn’t replace human developers who make key design decisions.

How should I measure the impact of AI coding tools?

Track metrics like time-to-delivery, defect density, and review cycles before and after adopting AI tools. Combine objective data with team feedback to gauge confidence and maintain quality.

Measure time savings, quality, and team satisfaction to see the real impact.

What should I do if AI suggestions are wrong?

Treat outputs as drafts. Reproduce the issue, explain why it’s wrong, and adjust prompts or templates. Use tests to catch mistakes and prevent regressions.

If AI suggestions are off, review, adjust prompts, and test before applying.

Key Takeaways

- Choose tools that fit your stack and team

- Integrate AI into your IDE for speed

- Establish guardrails and test extensively

- Track impact and iterate on prompts