How to Use AI in Testing: A Practical Guide

Discover how to apply AI to testing workflows—from data prep to CI/CD integration—through practical steps, metrics, and governance to boost quality.

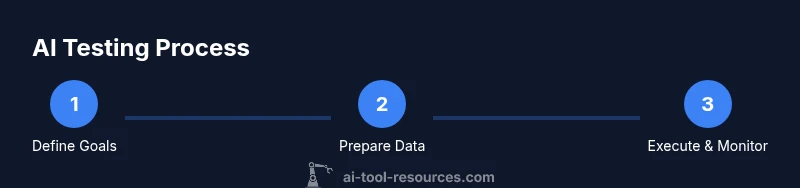

You can improve testing quality and speed by applying AI across the test lifecycle. This guide shows how to design AI-assisted tests, generate data, execute and monitor results, and govern risk. Start with clear objectives, suitable data, and a CI/CD pipeline to integrate AI testing into your workflow.

Why AI in testing matters

AI in testing offers efficiency, broader coverage, and earlier defect detection. According to AI Tool Resources, teams that adopt AI in testing report faster feedback and better detection of flaky tests. In practice, AI helps automate repetitive tasks, expose edge cases, and scale test coverage beyond what manual scripting can achieve. This section explains where AI adds value across the testing lifecycle and where human judgment remains essential.

By embracing AI, development teams can shift from purely scripted checks to data-driven validation. The result is faster feedback loops, more consistent test results, and the ability to identify failure modes that humans might miss. However, AI is not a silver bullet; it complements, rather than replaces, skilled testers who understand domain logic and risk. AI Tool Resources highlights that success comes from balancing automation with governance, clear ownership, and continuous learning. Identify where AI adds the most value and establish guardrails from day one.

Defining AI testing objectives

Before investing in AI for testing, define what you want to achieve. The most effective AI testing programs start with concrete outcomes such as increasing automated coverage on critical paths, reducing flaky tests, or shortening the time to diagnose failures. AI Tool Resources analysis shows that measurable goals help teams prioritize features like test data generation, intelligent test selection, and anomaly detection. Translate goals into specific metrics (coverage % on risk areas, average time to detect a regression, rate of false positives) and align them with product roadmap milestones. In practice, set quarterly targets and reassess after each sprint. Remember that some goals may require more data quality and governance than others; evaluate risk and feasibility early. The aim is to build a roadmap that evolves as your AI testing capabilities mature.
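
The three example metrics above can be computed from very little data. The following is a minimal sketch, not a standard: the `QualitySnapshot` fields and the `summarize` function are hypothetical names, and "rate of false positives" is assumed here to mean the share of AI-raised alerts that turned out not to be real defects.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class QualitySnapshot:
    """Hypothetical per-quarter inputs; adapt the fields to your own tracking."""
    risk_areas_total: int          # risk areas identified for the product
    risk_areas_covered: int        # risk areas with at least one automated test
    detection_hours: List[float]   # hours from regression introduced to detected
    alerts_raised: int             # failures flagged by the AI tooling
    alerts_false: int              # flagged failures that were not real defects


def summarize(snapshot: QualitySnapshot) -> Dict[str, Optional[float]]:
    """Turn raw counts into the three metrics named in the text."""
    coverage_pct = (
        100.0 * snapshot.risk_areas_covered / snapshot.risk_areas_total
        if snapshot.risk_areas_total else 0.0
    )
    mean_detect = (
        sum(snapshot.detection_hours) / len(snapshot.detection_hours)
        if snapshot.detection_hours else None  # no regressions observed yet
    )
    fp_rate = (
        snapshot.alerts_false / snapshot.alerts_raised
        if snapshot.alerts_raised else 0.0
    )
    return {
        "risk_coverage_pct": coverage_pct,
        "mean_hours_to_detect": mean_detect,
        "false_positive_rate": fp_rate,
    }
```

Recording one snapshot per quarter and comparing the summaries gives a simple way to check progress against the targets set at the start.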

Data strategy for AI testing

Data is the lifeblood of AI testing. You’ll need labeled test data, production data with privacy safeguards, and synthetic data to cover edge cases. Start by inventorying data sources, defining data quality rules, and establishing data lineage. Data governance is critical: ensure compliance with privacy regulations, anonymize sensitive fields, and enforce access controls. Create a data collection plan that captures outcomes of past tests, failure modes, and environmental variations. Maintain versioned datasets so tests are reproducible and auditable. AI approaches rely on diverse, representative data; biased or narrow data leads to blind spots and misleading results. Finally, implement data drift monitoring to detect when data distributions change and adapt tests accordingly.
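
As one illustration of drift monitoring, the sketch below compares a baseline sample of a numeric field with a recent sample using the Population Stability Index (PSI). This is one possible technique among several, not something the guide prescribes. The function name `population_stability_index`, the bin count, and the floor value for empty bins are assumptions; the commonly quoted rule of thumb that a PSI above roughly 0.2 signals meaningful drift should be tuned for your own data.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a baseline sample and a recent sample of one numeric field."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 when all values are equal

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A scheduled job could compute this for each important input field and flag the dataset for review when the value crosses the chosen threshold.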

AI techniques for test design and data generation

AI can transform how you design tests and generate data. Core techniques include:

- Generative test case design: produce new test scenarios from requirements or user stories.

- Model-based testing: use abstract models to derive concrete test inputs and sequences.

- Data augmentation: create variant inputs to expand coverage without manual scripting.

- Anomaly and drift detection: flag unexpected results or environment changes.

- Coverage-guided exploration: prioritize tests that maximize fault detection potential.

- Rule-based and hybrid methods: combine domain rules with ML insights for balanced results.

In practice, select a mix of deterministic and probabilistic strategies to balance reliability with discovery. Keep guardrails to prevent test suites from exploding in size, and ensure traceability from generated tests back to requirements.
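
To make model-based testing concrete, here is a minimal sketch that derives action sequences from a small state-machine model. The `MODEL` dictionary describes a hypothetical login-and-cart flow invented for illustration, and `action_sequences` is an assumed helper name. The `max_len` parameter acts as a simple guardrail on suite size.

```python
from collections import deque
from typing import Dict, List

# Hypothetical model: state -> {action: next_state}
MODEL: Dict[str, Dict[str, str]] = {
    "logged_out": {"login_ok": "logged_in", "login_bad": "logged_out"},
    "logged_in": {"open_cart": "cart", "logout": "logged_out"},
    "cart": {"checkout": "logged_in", "logout": "logged_out"},
}


def action_sequences(
    model: Dict[str, Dict[str, str]], start: str, max_len: int
) -> List[List[str]]:
    """Enumerate every action sequence of length 1..max_len from `start`."""
    sequences: List[List[str]] = []
    queue = deque([(start, [])])
    while queue:
        state, path = queue.popleft()
        if path:
            sequences.append(path)
        if len(path) < max_len:  # guardrail: stop expanding at max_len
            for action, next_state in model[state].items():
                queue.append((next_state, path + [action]))
    return sequences
```

Each returned sequence can be mapped to a concrete test by binding actions to real UI or API calls. Because the number of sequences grows quickly with `max_len`, a small bound is a sensible starting point.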

AI in test execution and monitoring

Once AI-generated tests are ready, you need robust execution and monitoring. AI-powered test runners can prioritize tests based on risk, allocate resources dynamically, and run tests in parallel across environments to reduce wall-clock time. Monitoring should focus on stability, detection rate, and false-positive frequency. Instrument test results with explanations for why a test passed or failed, enabling faster diagnosis. Implement dashboards that show trend lines for coverage, defect discovery, and test flakiness. Use explainable AI approaches so testers understand AI decisions rather than treating them as black boxes. Regularly review model outputs, validate results with human judgment, and schedule periodic recalibration when data drifts or requirements change.
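
Risk-based prioritization does not have to start with a trained model. The sketch below ranks tests with a hand-written heuristic that blends recent failure rate with overlap between a test's covered files and the files changed in the current commit. The `SuiteEntry` fields, the weights, and the function names are all assumptions; a learned model could later replace `risk_score` without changing the surrounding code.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class SuiteEntry:
    """Hypothetical record of one test's recent history."""
    name: str
    recent_runs: int
    recent_failures: int
    covered_files: Set[str]


def risk_score(
    entry: SuiteEntry,
    changed_files: Set[str],
    w_history: float = 0.5,
    w_change: float = 0.5,
) -> float:
    """Blend historical failure rate with overlap against the current change."""
    # Tests with no history are treated as maximally risky so they run early.
    failure_rate = (
        entry.recent_failures / entry.recent_runs if entry.recent_runs else 1.0
    )
    overlap = (
        len(entry.covered_files & changed_files) / len(changed_files)
        if changed_files else 0.0
    )
    return w_history * failure_rate + w_change * overlap


def prioritize(entries: List[SuiteEntry], changed_files: Set[str]) -> List[SuiteEntry]:
    """Return tests ordered from highest to lowest risk score."""
    return sorted(entries, key=lambda e: risk_score(e, changed_files), reverse=True)
```

Logging the two components of the score next to each result is a cheap way to give testers an explanation of why a test was scheduled first.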

Integrating AI testing into CI/CD and toolchains

To unlock end-to-end benefits, embed AI testing into your CI/CD pipelines. Start by versioning datasets and models used in tests, then register AI tests as first-class citizens in your test suite. Integrate with build automation so AI tests run alongside traditional checks, with clear pass/fail criteria. Use environment isolation, reproducible experiments, and audit trails to maintain reliability. Tie AI test results into release gates and product quality metrics. Document the rationale for AI-driven tests, track changes across pipeline runs, and ensure rollback paths exist if AI tests cause flakiness. Finally, establish a feedback loop from production outcomes to AI models so tests stay aligned with real-world behavior.
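
A release gate with clear pass/fail criteria can be a small function that a pipeline step calls after the test run. The sketch below assumes a hypothetical result format (a list of dictionaries with a `status` of `pass`, `fail`, or `quarantined`) and illustrative thresholds; neither comes from a specific CI product.

```python
from typing import Dict, List


def evaluate_gate(
    results: List[Dict[str, str]],
    min_pass_rate: float = 0.98,
    max_quarantined: int = 5,
) -> List[str]:
    """Return the reasons the gate should stay closed; an empty list means open."""
    counted = [r for r in results if r["status"] in ("pass", "fail")]
    quarantined = sum(1 for r in results if r["status"] == "quarantined")
    reasons: List[str] = []

    if not counted:
        reasons.append("no test results to evaluate")
    else:
        pass_rate = sum(1 for r in counted if r["status"] == "pass") / len(counted)
        if pass_rate < min_pass_rate:
            reasons.append(
                f"pass rate {pass_rate:.1%} is below the {min_pass_rate:.1%} threshold"
            )

    if quarantined > max_quarantined:
        reasons.append(
            f"{quarantined} quarantined tests exceed the limit of {max_quarantined}"
        )
    return reasons
```

A pipeline step could print the returned reasons and exit with a non-zero status when the list is not empty, which blocks the release in most CI systems. Keeping the thresholds in version control alongside the datasets and models preserves the audit trail.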

Governance, safety, and risk management

AI testing introduces new risks that require governance. Manage data privacy, bias, model drift, and explainability with transparent policies and approvals. Establish guardrails to prevent unsafe experiments, enforce access controls for data and models, and maintain an auditable history of decisions. Use hybrid testing strategies: AI-driven tests for exploration and coverage, traditional deterministic tests for critical paths. Regularly conduct risk assessments and safety reviews, especially for safety-critical domains. Prepare a communication plan that shares results with stakeholders and documents how AI tests influence product quality. The goal is to balance innovation with accountability while keeping customer trust intact.

Practical patterns and case studies

Real-world patterns illustrate how AI in testing works in practice. Pattern 1: generate test cases from user stories and acceptance criteria, then validate coverage against requirements. Pattern 2: apply anomaly detection to test logs and outputs to surface flaky behavior early. Pattern 3: use synthetic data to create boundary conditions that are hard to reach with manual testing. Pattern 4: integrate AI tests with defect triage so machine-generated signals feed specialists quickly. Case studies across teams show improved coverage, faster feedback, and better detection of edge cases, provided governance and clear ownership are in place. The takeaway is to start small, measure impact, and scale once you have repeatable results.
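
Pattern 2 can begin with simple statistics before any machine learning is involved. The sketch below flags runs of a single test whose duration deviates strongly from that test's own history, using a z-score. The function name `duration_outliers` and the threshold of 3.0 are assumptions, and duration is only one of several signals that can hint at flaky behavior.

```python
import statistics
from typing import List, Sequence


def duration_outliers(durations: Sequence[float], threshold: float = 3.0) -> List[int]:
    """Return indexes of runs whose duration is a z-score outlier."""
    mean = statistics.fmean(durations)
    spread = statistics.pstdev(durations)
    if spread == 0:
        return []  # all runs took the same time; nothing stands out
    return [
        i for i, d in enumerate(durations)
        if abs(d - mean) / spread > threshold
    ]
```

Runs flagged this way are candidates for a closer look at the logs, not confirmed defects. With short histories, a single extreme value inflates the standard deviation, so a median-based measure may work better.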

Tools & Materials

- CI/CD pipeline (e.g., GitHub Actions, GitLab CI, or CircleCI; enables AI test runs in build pipelines)

- Data workspace (central place for datasets, versions, and test data generation artifacts)

- AI tooling platform (ML/AI services for test design, data generation, and analytics)

- Notebook or IDE (Jupyter, VS Code, or similar for experimenting with AI test ideas)

- Data privacy and governance checklist (policies and controls to safeguard sensitive data)

- Monitoring and observability tools (dashboards to track coverage, failures, and drift)

Steps

Estimated time: 6-8 weeks

1. Define testing objectives

Clarify what AI testing should achieve in your context: coverage, speed, reliability, or defect insight. Align objectives with product goals and establish measurable success criteria.

Tip: Document the decision rationale and required metrics for future audits.

2. Assemble data and tooling

Inventory data sources, choose datasets, and set up the AI tooling stack. Ensure privacy controls and versioning are in place before experiments begin.

Tip: Use a data catalog to track provenance and quality attributes.

3. Choose AI techniques

Select a mix of AI methods suited to your goals. Test case generation, anomaly detection, and scenario exploration work well in many contexts.

Tip: Start with a small pilot set of techniques and expand based on results.

4. Implement test generation

Develop AI-generated test cases and data variations, ensuring traceability to requirements and maintainability of the test suite.

Tip: Keep generated tests lightweight and readable for long-term maintenance.

5. Integrate with CI/CD

Hook AI tests into the pipeline with clear gating, reproducible runs, and rollback paths if AI tests degrade stability.

Tip: Version-control datasets and models used in tests for traceability.

6. Monitor, retrain, and govern

Continuously monitor AI test performance, retrain models as data shifts, and enforce governance policies to mitigate risk.

Tip: Establish a feedback loop from production to AI testing to stay current.
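
The traceability called for in the test generation step can be checked automatically. The sketch below assumes each generated test carries a set of requirement IDs; the `REQ-n` ID format and the function name `traceability_gaps` are hypothetical. It reports requirements with no test and tests that link to no known requirement.

```python
from typing import Dict, List, Set, Tuple


def traceability_gaps(
    requirements: Set[str], generated_tests: Dict[str, Set[str]]
) -> Tuple[List[str], List[str]]:
    """Return (uncovered requirement IDs, tests with no known requirement)."""
    covered: Set[str] = set().union(*generated_tests.values())
    uncovered = sorted(requirements - covered)
    orphans = sorted(
        name for name, reqs in generated_tests.items()
        if not (reqs & requirements)
    )
    return uncovered, orphans
```

Running a check like this whenever the generated suite changes keeps orphaned tests from accumulating and shows which requirements still need coverage.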

FAQ

What is AI testing?

AI testing uses machine learning and data-driven techniques to design, execute, and evaluate tests. It complements traditional testing by expanding coverage and automating repetitive tasks, while human oversight ensures safety and domain accuracy.

AI testing uses machine learning to design and run tests, expanding coverage and automating tasks, with human oversight for safety and accuracy.

What are common AI testing techniques?

Common techniques include generative test case design, anomaly detection on test outputs, data augmentation for coverage, and model-based testing to derive input sequences from specifications.

Common techniques include generating new test cases, detecting anomalies, augmenting data, and model-based testing from specifications.

What data do I need to start AI testing?

You need labeled testing data, diverse inputs, and access to production-like environments. Start with a small, governed dataset and progressively expand with synthetic data to cover edge cases.

Begin with labeled data and simulated scenarios, then expand using synthetic data as you scale.

How do I measure success of AI testing?

Measure improvements in coverage, defect detection speed, and test stability. Track metrics over iterations and compare against baselines defined before the pilot.

Track coverage, detection speed, and stability, and compare against your baseline after each iteration.

Is AI in testing safe for production apps?

AI testing should augment, not replace, proven tests. Use governance, auditing, and human review, especially for safety-critical features.

It's a supplement to careful testing—governance and human review are essential for safety-critical areas.

What are common pitfalls to avoid?

Common pitfalls include relying on AI without governance, leaking sensitive data, and overfitting to historical data. Start small, measure impact, and scale thoughtfully with clear ownership.

Avoid governance gaps, data leakage, and overfitting. Start small and measure impact before scaling.

Key Takeaways

- Define clear AI testing objectives.

- Prepare high-quality, governed data.

- Integrate AI tests into CI/CD with traceability.

- Monitor, evaluate, and retrain regularly.