Gen AI Tools for Image Generation: A Practical Guide

Discover how gen AI tools for image generation work, compare diffusion and GAN approaches, and learn to write prompts and evaluate AI image generators for research and education.

A gen AI tool for image generation is software that uses generative AI to create images from prompts or other inputs, typically via diffusion or GAN models.

What is a gen AI tool for image generation?

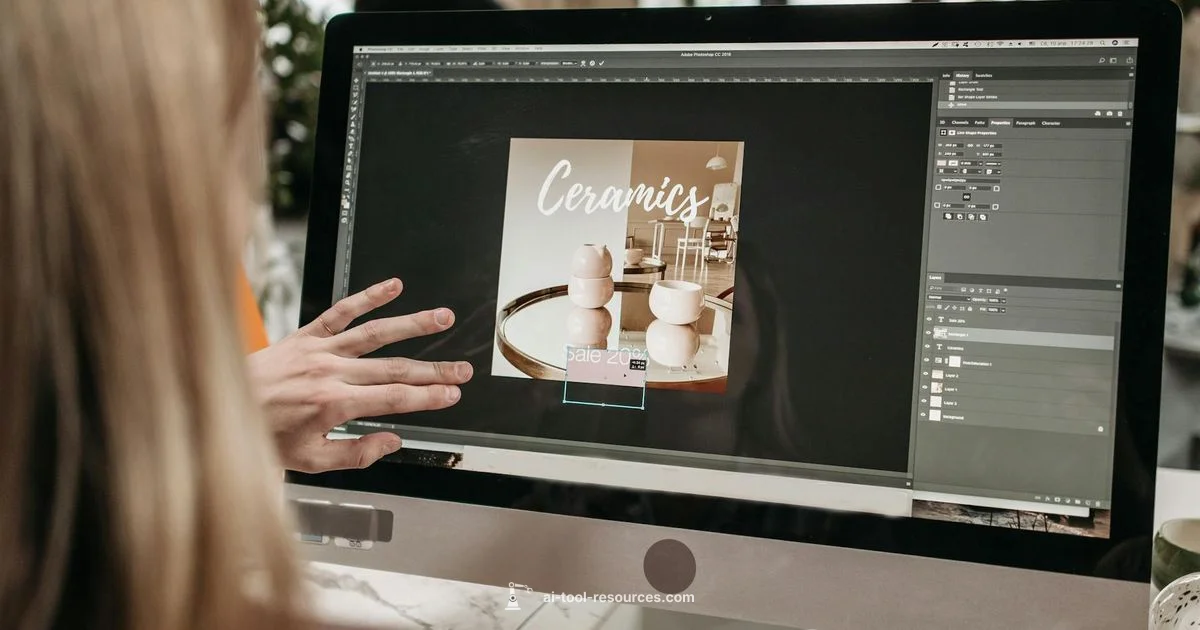

According to AI Tool Resources, a gen AI tool for image generation is software that uses generative AI to create images from prompts or other inputs, typically leveraging diffusion or GAN models. These tools translate abstract ideas into visual representations, enabling rapid ideation without a traditional design pipeline. Developers, researchers, and students use them to prototype ideas, teach concepts, and explore creative possibilities. In practice, you provide a prompt and define constraints, and the model returns an image that matches the request, often with options to iterate, refine, or upscale. The term emphasizes generation rather than classification or editing: the focus is on creating original visuals. Across industries, these tools support quick exploration, iterative design, and new forms of visual storytelling.

How image generation models work behind the scenes

Most modern image generation models belong to one of two broad families: diffusion models and generative adversarial networks (GANs). Diffusion models learn to reverse a gradual noising process, refining random noise step by step into a coherent image guided by a prompt and learned priors. GANs pair a generator with a discriminator and converge on plausible visuals through adversarial training. Both families learn style, structure, and realism from large datasets, and both can be conditioned on prompts to steer results. You can influence outcomes with seed values, conditioning signals, or control networks that guide mood, lighting, or object placement. Understanding these mechanics helps researchers craft better prompts and helps developers design safer deployment pipelines that balance creativity with controls such as content filters and licensing compliance.
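As a rough illustration of the diffusion idea described above, the sketch below implements only the forward noising step of a DDPM-style process, using a short list of numbers as a stand-in for pixels. The function names, the linear beta schedule, and its default values are assumptions chosen for illustration. A real tool trains a neural network to predict the noise, whereas this toy is simply handed it.

```python
import math
import random


def alpha_bar_schedule(steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for a linear beta schedule (assumed values)."""
    out, prod = [], 1.0
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / max(steps - 1, 1)
        prod *= 1.0 - beta
        out.append(prod)
    return out


def add_noise(x0, t, alpha_bars, rng):
    """Forward step: x_t = sqrt(a_bar_t) * x0 + sqrt(1 - a_bar_t) * eps."""
    a = alpha_bars[t]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return xt, eps


def recover_x0(xt, eps, t, alpha_bars):
    """Invert the forward step given the true noise.

    In a trained diffusion model, eps would be the network's prediction.
    """
    a = alpha_bars[t]
    return [(x - math.sqrt(1.0 - a) * e) / math.sqrt(a) for x, e in zip(xt, eps)]


# A fixed seed makes the sampled noise, and therefore the result, reproducible.
rng = random.Random(42)
alpha_bars = alpha_bar_schedule(1000)
pixels = [0.2, -0.5, 0.9]
noisy, eps = add_noise(pixels, 500, alpha_bars, rng)
restored = recover_x0(noisy, eps, 500, alpha_bars)
```

The seeded `random.Random` instance mirrors how seed values make generation repeatable: the same seed yields the same noise, so the same starting point for refinement.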

Core capabilities and use cases

Gen AI tools for image generation excel at rapid ideation and scalable visuals. Typical capabilities include text-to-image synthesis, style transfer, and image inpainting or editing. Use cases span concept art for games and film, product visualization and marketing mockups, educational diagrams and illustrations, architectural renderings, and synthetic data generation for model training. With versioned prompts, teams can explore dozens of variants quickly, which can shorten turnaround from days to hours. For researchers, these tools also enable experiments in multimodal semantics, visual reasoning, and cross-domain design exploration. In education, learners can visualize complex concepts and prototype ideas with minimal resources.

Risks, licensing, and data considerations

Creating images with AI tools raises questions about data provenance, licensing, and the rights to outputs. Training data may include copyrighted material, and licenses governing generated outputs can vary by provider. It is essential to review terms of service, model licenses, and any usage restrictions for commercial work. Bias, representation, and potential harms should be considered, particularly when generating visuals for public-facing materials. Additionally, data privacy practices matter if prompts or inputs could reveal sensitive information. Clear documentation of prompts, provenance, and licensing helps teams stay compliant and ethical as they scale.
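One lightweight way to document prompts, provenance, and licensing is to store a small record next to each generated image. The sketch below is an assumption about what such a record might contain; the field names, the `example-model-v1` identifier, and the license note are hypothetical and not tied to any provider.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class GenerationRecord:
    prompt: str
    seed: int
    model: str          # hypothetical model identifier
    license_note: str   # summary of the terms you reviewed, in your own words
    created_utc: str    # ISO 8601 timestamp supplied by the caller


def record_id(record):
    """Short, stable ID derived from the record's contents."""
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


record = GenerationRecord(
    prompt="cross-section diagram of a plant cell, flat vector style",
    seed=1234,
    model="example-model-v1",
    license_note="internal teaching use only; terms reviewed by course lead",
    created_utc="2024-01-01T00:00:00Z",
)
sidecar = json.dumps({"id": record_id(record), **asdict(record)}, indent=2)
```

Because the ID is a hash of the sorted fields, two records with the same prompt, seed, model, and notes get the same ID, which makes duplicates easy to spot.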

Comparing approaches and platforms

There is no one-size-fits-all solution. Diffusion-based systems generally deliver high-fidelity images with broad style flexibility, while GAN-based approaches, which produce an image in a single forward pass, can iterate faster within specific domains. Cloud-based platforms often offer ease of use, API access, and scalable compute, whereas on-premises or self-hosted options prioritize data control and customization. When choosing a platform, consider latency, rate limits, API stability, model updates, and the availability of fine-tuning or training on your own datasets. Importantly, assess how each option handles safety, licensing, and attribution to ensure responsible use in research and education.

How to evaluate quality and safety

Quality in image generation means fidelity to the prompt, coherent composition, and an acceptably low level of artifacts. Evaluate consistency across prompts, the ability to reproduce requested styles, and the relevance of details. Safety features such as content filters, watermarking, and provenance tracking help prevent misuse. It is also important to test outputs on multiple prompts to gauge how well the model captures nuanced instructions. Document evaluation results to guide prompt engineering and tool selection, especially in research settings where reproducibility matters.
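The evaluation described above can be recorded with a simple rubric. The sketch below assumes human raters score each output from 1 to 5 on three criteria drawn from this section; the criterion names and the 1 to 5 scale are illustrative choices, not a standard metric.

```python
from statistics import mean, pstdev

# Illustrative criteria taken from the paragraph above.
CRITERIA = ("prompt_fidelity", "composition", "artifact_free")


def summarize(scores):
    """Summarize ratings across prompts.

    scores: list of dicts, one per prompt, mapping criterion -> rating in [1, 5].
    Returns the mean and the spread (population standard deviation) per criterion;
    a large spread suggests the tool behaves inconsistently across prompts.
    """
    summary = {}
    for criterion in CRITERIA:
        values = [s[criterion] for s in scores]
        summary[criterion] = {"mean": mean(values), "spread": pstdev(values)}
    return summary


ratings = [
    {"prompt_fidelity": 4, "composition": 5, "artifact_free": 3},
    {"prompt_fidelity": 5, "composition": 4, "artifact_free": 3},
    {"prompt_fidelity": 3, "composition": 3, "artifact_free": 3},
]
report = summarize(ratings)
```

Keeping the raw ratings alongside the summary makes it possible to re-run the comparison later when a model or prompt changes.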

Choosing the right gen AI tool for image generation

When selecting a tool, start with your primary goal: concept art, product visuals, or educational diagrams. Consider output quality, prompt controllability, and the ease of integrating with your existing workflows. Check licensing terms for commercial use, data handling practices, and the availability of APIs or SDKs for automation. Assess cost versus benefit, including compute credits, usage limits, and potential volume discounts. Look for community support, documentation, and examples that speed up onboarding. Finally, evaluate safety features, such as content policies and bias mitigation, to ensure responsible deployment in academic or professional contexts.
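One way to make the selection criteria above comparable is a weighted decision matrix. The sketch below is a minimal example under assumed inputs: the tool names, the criteria, the weights, and the 1 to 5 ratings are all hypothetical placeholders to be replaced with your own assessment.

```python
def weighted_score(ratings, weights):
    """Weighted average of ratings; weights need not sum to 1."""
    total = sum(weights.values())
    return sum(ratings[name] * weight for name, weight in weights.items()) / total


def rank_tools(candidates, weights):
    """Return (tool, score) pairs sorted from best to worst."""
    scored = [(tool, weighted_score(r, weights)) for tool, r in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical weights: output quality matters most, cost least.
weights = {"quality": 3, "licensing": 2, "cost": 1}

# Hypothetical ratings on a 1-5 scale.
candidates = {
    "tool_a": {"quality": 5, "licensing": 2, "cost": 3},
    "tool_b": {"quality": 4, "licensing": 5, "cost": 4},
}

ranking = rank_tools(candidates, weights)
```

With these placeholder numbers, `tool_b` ranks first because its stronger licensing rating outweighs `tool_a`'s edge in quality. Changing the weights is a quick way to see how sensitive the choice is to your priorities.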

Getting started with your first prompt

Begin with a clear objective and a compact prompt. Start simple, then incrementally add details like lighting, mood, and perspective. Use seeds to reproduce favorable results and try multiple styles to discover what resonates with your goals. Save successful prompts as templates and build a library of examples for your team. Finally, set up a feedback loop: collect outputs, rank them against criteria, and refine prompts and settings to steadily improve results over time.
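The "start simple, then add details" workflow and the use of seeds can be sketched as a small prompt template. The helper names and the comma-separated prompt format below are assumptions for illustration; individual tools may expect different phrasing, and the seeds would be passed to whichever generator you use.

```python
import random


def build_prompt(subject, lighting=None, mood=None, perspective=None):
    """Compose a prompt from a required subject and optional details."""
    parts = [subject]
    for label, value in (
        ("lighting", lighting),
        ("mood", mood),
        ("perspective", perspective),
    ):
        if value:
            parts.append(f"{value} {label}")
    return ", ".join(parts)


def seed_sweep(base_seed, count):
    """Derive a reproducible list of seeds from one base seed."""
    rng = random.Random(base_seed)
    return [rng.randrange(2**32) for _ in range(count)]


# Start simple, then add one detail at a time.
simple = build_prompt("a lighthouse on a cliff")
detailed = build_prompt(
    "a lighthouse on a cliff",
    lighting="golden hour",
    mood="calm",
)

# The same base seed always yields the same seeds, so a sweep can be repeated.
seeds = seed_sweep(2024, 4)
```

Storing the template arguments and the base seed together gives teammates enough information to regenerate the same set of variants.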

FAQ

What is a gen AI tool for image generation?

A gen AI tool for image generation is software that uses generative AI to create images from text prompts or other inputs. It enables rapid visualization, prototyping, and creative exploration across fields such as design, research, and education.

A gen AI tool for image generation creates pictures from prompts using artificial intelligence, helping you quickly visualize ideas and prototypes.

How do these tools create images?

Most tools rely on diffusion or GAN models trained on large image datasets. You provide prompts and constraints, and the model iteratively produces an image that matches the request while trying to minimize artifacts.

They use advanced AI models trained on lots of images to turn prompts into pictures, refining the result until it matches your prompt.

What are the primary risks with image generation tools?

Risks include licensing ambiguity, data provenance, potential biases, copyright concerns, and the possibility of generating inappropriate content. It is crucial to review terms, monitor outputs, and implement safety controls when deploying these tools.

Key concerns are licensing, bias, and safety. Always review terms and monitor outputs when using these tools.

Who owns the generated images?

Ownership depends on the tool's terms of service and licensing. Some providers grant broad usage rights, while others impose restrictions on commercial use or redistribution. Always verify ownership and usage rights before publishing.

Ownership is defined by the tool's license. Check terms to know what you can do with outputs.

Can I train or fine-tune these models on my data?

Many platforms offer fine-tuning or custom training, but this depends on the provider and model. Be mindful of licensing, data privacy, and the need for well-curated datasets.

Some tools allow fine-tuning with your data, but you must follow the provider's licensing and privacy rules.

Is image generation suitable for educational use?

Yes, for visual explanations and concept illustrations. Ensure prompts align with learning objectives and respect licensing terms, especially in classroom or publication contexts.

It's great for teaching visuals and diagrams, as long as you follow licensing terms.

Key Takeaways

- Define your goal before prompting.

- Prompts drive results; start simple and iterate.

- Check licensing and data policies before use.

- Balance speed with control and safety features.

- Document prompts to improve reproducibility.