AI Tools Comparable to ChatGPT: A Comprehensive Comparison

An analytical comparison of AI tools comparable to ChatGPT, highlighting capabilities, safety, and use cases for developers, researchers, and students.

An AI tool comparable to ChatGPT matches ChatGPT's core language capabilities while offering different strengths in safety, privacy, and integration. This comparison highlights the main contenders, how they stack up on critical criteria, and when each option shines for developers, researchers, and students.

Defining comparability: What makes a tool comparable to ChatGPT?

In practice, an AI tool comparable to ChatGPT balances natural language generation, reasoning, and contextual understanding with robust safety controls and integration options. The phrase signals a spectrum rather than a single benchmark: tools may approach ChatGPT's fluency while differing in governance, data handling, and extensibility. For AI Tool Resources, the goal is to present a neutral framework that helps developers, researchers, and students assess comparability based on function, risk, and value. This article uses that framing to guide you through capabilities, trade-offs, and decision criteria in 2026.

According to AI Tool Resources, comparability is not about duplicating ChatGPT’s exact outputs but about achieving similar usefulness under varied constraints—such as cost, privacy, or enterprise-scale deployment. Expect a balance of language quality, safety features, API ergonomics, and ecosystem support when evaluating tools that sit in the same class as ChatGPT. By anchoring the discussion to practical tasks—coding assistance, research literature summarization, and education support—you can better gauge which tool aligns with your project goals.

Core capabilities to compare: generation quality, safety, and integration

The most important differentiators are core capabilities and how they map to your tasks. Language quality matters for writing, tutoring, and problem solving; however, the acceptable level of accuracy varies by domain. Safety controls determine the guardrails for sensitive topics, disallowed content, and user data handling. Integration covers API design, SDK availability, plugin ecosystems, and plug-and-play workflows with your existing tooling stack. When evaluating tools comparable to ChatGPT, assess four principal axes: (1) linguistic fluency and reasoning, (2) safety posture and content policies, (3) multimodal reach and context awareness, and (4) developer experience and ecosystem maturity. Together, these factors shape how well a tool will serve researchers, developers, and students across different domains.

An important nuance is the trade-off between raw generation quality and policy strictness. A highly capable, loosely constrained model may need additional guardrails to satisfy enterprise privacy or compliance requirements. Conversely, tighter safety controls can sometimes constrain creative or exploratory tasks. When evaluating these tools, aim for a balance that fits your use case, data governance needs, and expected user interactions. The landscape evolves rapidly, so prioritize extensibility and clear upgrade paths over short-term novelty.

Practical use cases across research, development, education

Researchers often need strong summarization, precise citation handling, and the ability to reason about unfamiliar topics. Developers seek robust code generation, testing scaffolds, and API reliability. Students require easy-to-understand explanations, tutoring support, and accessible examples. Tools comparable to ChatGPT should serve these groups by offering: (a) transparent attribution and citation generation, (b) reproducible behavior with configurable randomness, (c) integration with popular datasets and code repositories, and (d) privacy-conscious modes for handling sensitive coursework or proprietary research.

In practice, choosing a tool means mapping your core tasks to four lenses: accuracy, safety, speed, and cost. For instance, a research project may tolerate slower responses in exchange for stronger guardrails, while a development task may prioritize rapid iteration and richer API tooling. Education scenarios often favor interpretability, explainability, and easy-to-deploy classroom licenses. Because the most capable tool for one task may not be the best fit for another, a modular, multi-tool workflow is often worth the extra setup.
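One way to make the four-lens mapping concrete is to give each task profile its own weights and rank candidates by a weighted score. The sketch below assumes hypothetical weights and placeholder 0 to 10 ratings for two unnamed tools (`tool_a`, `tool_b`); these are illustrative numbers, not measurements of any real product.

```python
# Hypothetical lens weights per task profile; each profile's weights sum to 1.0.
# Tune these for your own project; they are placeholders, not recommendations.
LENS_WEIGHTS = {
    "research":    {"accuracy": 0.4, "safety": 0.3, "speed": 0.1, "cost": 0.2},
    "development": {"accuracy": 0.3, "safety": 0.1, "speed": 0.4, "cost": 0.2},
    "education":   {"accuracy": 0.3, "safety": 0.4, "speed": 0.1, "cost": 0.2},
}


def lens_score(profile: str, ratings: dict[str, float]) -> float:
    """Weighted sum of 0-10 lens ratings for one tool under one task profile."""
    weights = LENS_WEIGHTS[profile]
    missing = weights.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[lens] * ratings[lens] for lens in weights)


def rank_tools(profile: str, tool_ratings: dict[str, dict[str, float]]) -> list[str]:
    """Return tool names ordered from best to worst fit for the given profile."""
    return sorted(tool_ratings,
                  key=lambda tool: lens_score(profile, tool_ratings[tool]),
                  reverse=True)


# Placeholder ratings: tool_a is careful but slow, tool_b is fast and cheap.
ratings = {
    "tool_a": {"accuracy": 9, "safety": 9, "speed": 5, "cost": 5},
    "tool_b": {"accuracy": 7, "safety": 6, "speed": 9, "cost": 8},
}
print(rank_tools("research", ratings))
print(rank_tools("development", ratings))
```

With these placeholder numbers the slower, more guarded tool wins for research while the faster one wins for development, mirroring the trade-off described above. Keeping the weights in one table makes the selection rationale easy to review and revise.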

As you prototype, document decision criteria and include team feedback. AI Tool Resources emphasizes that a structured evaluation plan—covering pilot tasks, privacy considerations, and integration tests—reduces bias in selection and aligns tool choice with project milestones. This disciplined approach helps ensure that your final decision improves productivity without compromising safety or governance.

Comparative landscape: major players and how they stack up

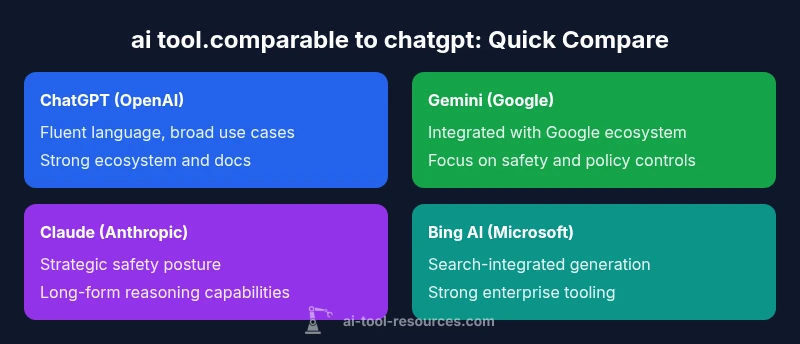

ChatGPT remains a widely used baseline due to its broad coverage, accessible tooling, and strong community support. Alternatives such as Claude, Gemini, and Bing AI (since rebranded as Microsoft Copilot) bring distinct strengths: Claude often emphasizes safety-first responses and long-form reasoning; Gemini integrates with Google's ecosystem and may offer tighter coupling with search and enterprise tools; Bing AI leans on Microsoft's ecosystem and can blend search with generation for information retrieval tasks. When evaluating tools comparable to ChatGPT, consider how each handles memory and context length, the quality of its code generation, and its ability to tailor outputs to specific audiences. You will also want to examine policy flexibility, data handling practices, and the availability of developer-friendly dashboards for policy tuning. In 2026, the market is increasingly defined by platform interoperability, open standards, and the ability to deploy in on-premises or regulated environments. AI Tool Resources notes that adoption often hinges on how well these tools integrate with existing pipelines and how transparent the vendor is about data usage and model updates.
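Because context limits differ between tools, pipelines that switch engines often trim conversation history to fit a budget. The sketch below keeps system messages and the most recent turns that fit. It assumes a hypothetical `Message` type and uses a whitespace word count as a crude token estimate; real providers use model-specific tokenizers, so the numbers are only indicative.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str      # "system", "user", or "assistant"
    content: str


def estimate_tokens(text: str) -> int:
    # Crude stand-in: whitespace word count. Replace with the target model's
    # own tokenizer before relying on these numbers.
    return len(text.split())


def trim_history(messages: list[Message], budget: int) -> list[Message]:
    """Keep all system messages plus the newest turns that fit within `budget`."""
    system = [m for m in messages if m.role == "system"]
    used = sum(estimate_tokens(m.content) for m in system)
    kept: list[Message] = []
    # Walk backwards so the most recent turns are kept first.
    for m in reversed([m for m in messages if m.role != "system"]):
        cost = estimate_tokens(m.content)
        if used + cost > budget:
            break
        kept.append(m)
        used += cost
    # Restore chronological order for the kept turns.
    return system + list(reversed(kept))


history = [
    Message("system", "be brief"),
    Message("user", "one two three"),
    Message("assistant", "four five"),
    Message("user", "six seven eight nine"),
]
for m in trim_history(history, budget=8):
    print(m.role, "|", m.content)
```

Dropping the oldest turns first is only one policy; some teams summarize older turns instead, which trades extra calls for better long-range memory.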

Evaluation framework: scoring criteria and tests

A rigorous evaluation should rely on a consistent scoring framework. Core criteria include: (1) linguistic quality and coherence, (2) factual correctness and citation capabilities, (3) safety enforcement and content policy clarity, (4) latency and reliability under load, (5) API ergonomics and tooling, (6) privacy posture and data handling controls, (7) cost and scalability, and (8) governance features for enterprise deployments. For each tool, create a test suite that mirrors your typical tasks (journal article drafting, code generation, data analysis, and student tutoring) and document results with objective metrics where possible. Even if you do not publish raw metrics in every case, summarize the trends and provide actionable guidance on when to prefer one tool over another. This method lets teams compare tools comparable to ChatGPT on a level playing field and supports project milestones with transparent decision rationales. AI Tool Resources' evaluation framework emphasizes reproducibility, auditable outputs, and clear governance narratives.
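The eight criteria can be turned into an auditable summary by recording every test result as a `(task, criterion, score)` row and aggregating per criterion. In this minimal sketch the criterion keys mirror the list above, but the weights and the 0 to 5 score scale are hypothetical choices. It raises an error when a criterion was never exercised, so gaps in the test suite stay visible.

```python
from collections import defaultdict
from statistics import mean

# Keys follow the eight criteria above; the weights are hypothetical placeholders.
CRITERIA_WEIGHTS = {
    "linguistic_quality": 2,
    "factual_correctness": 3,
    "safety_enforcement": 2,
    "latency_reliability": 1,
    "api_ergonomics": 1,
    "privacy_controls": 2,
    "cost_scalability": 1,
    "governance": 1,
}


def summarize(results):
    """Aggregate (task, criterion, score) rows, scores on a 0-5 scale.

    Returns (per-criterion mean scores, weighted overall score on 0-5).
    """
    by_criterion = defaultdict(list)
    for task, criterion, score in results:
        if criterion not in CRITERIA_WEIGHTS:
            raise KeyError(f"unknown criterion {criterion!r} in task {task!r}")
        by_criterion[criterion].append(score)
    untested = set(CRITERIA_WEIGHTS) - set(by_criterion)
    if untested:
        raise ValueError(f"no test results for: {sorted(untested)}")
    per_criterion = {c: mean(scores) for c, scores in by_criterion.items()}
    total_weight = sum(CRITERIA_WEIGHTS.values())
    overall = sum(CRITERIA_WEIGHTS[c] * m for c, m in per_criterion.items()) / total_weight
    return per_criterion, overall


# Illustrative rows only: every criterion scored 4 on one task, plus one weaker
# factual-correctness result on a second task.
rows = [("code generation", c, 4) for c in CRITERIA_WEIGHTS]
rows.append(("journal drafting", "factual_correctness", 2))
per_criterion, overall = summarize(rows)
print(per_criterion["factual_correctness"], round(overall, 3))
```

Keeping the raw rows alongside the summary lets reviewers trace any overall score back to the individual task results.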

Real-world testing and caveats: data privacy, cost, latency

Real-world deployment exposes practical constraints that go beyond theoretical capabilities. Data privacy concerns include how prompts and outputs are stored, who has access to logs, and whether you can opt out of training data usage. Cost considerations vary widely: some tools employ per-token pricing, others offer monthly subscription tiers with bundled quotas, and enterprise agreements may include usage ceilings. Latency and stability matter in classroom settings or real-time coding sessions, where long response times disrupt progress. Vendor lock-in is another factor: how easily you can extract data or switch tools without disrupting current workflows. AI Tool Resources advocates running parallel pilots with multiple options to quantify performance in your specific environment and to avoid premature commitments. Budgeting should incorporate not only monthly subscription costs but also development time, integration effort, and long-term maintenance.
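To compare pricing models on equal terms, it can help to compute monthly usage cost under each model and then add engineering time. The functions below are a sketch with hypothetical prices (a per-million-token rate, and a subscription with a base fee, included quota, and overage rate); substitute each vendor's published terms.

```python
def per_token_cost(monthly_tokens: int, price_per_million: float) -> float:
    """Pure usage-based pricing."""
    return monthly_tokens / 1_000_000 * price_per_million


def subscription_cost(monthly_tokens: int, base_fee: float,
                      included_tokens: int, overage_per_million: float) -> float:
    """Flat fee with a bundled quota, plus overage beyond the quota."""
    overage = max(0, monthly_tokens - included_tokens)
    return base_fee + overage / 1_000_000 * overage_per_million


def monthly_tco(usage_cost: float, integration_hours: float,
                maintenance_hours_per_month: float, hourly_rate: float,
                horizon_months: int) -> float:
    """Usage plus engineering time; one-off integration is amortized over the horizon."""
    integration = integration_hours * hourly_rate / horizon_months
    maintenance = maintenance_hours_per_month * hourly_rate
    return usage_cost + integration + maintenance


# Hypothetical figures: 30M tokens/month, $5 per million tokens, versus a
# $100 plan with 20M tokens included and $8 per million tokens of overage.
usage_a = per_token_cost(30_000_000, 5.0)                            # 150.0
usage_b = subscription_cost(30_000_000, 100.0, 20_000_000, 8.0)      # 180.0
print(usage_a, usage_b)
print(monthly_tco(usage_a, integration_hours=40, maintenance_hours_per_month=5,
                  hourly_rate=100.0, horizon_months=10))             # 1050.0
```

In this made-up scenario, engineering time dominates the monthly total, which is why budgeting only for subscription fees can understate the true cost.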

Getting started: a step-by-step approach to trial and selection

Begin with a narrow pilot: select two or three candidate tools comparable to ChatGPT that align with your task profile. Define success criteria upfront and build a baseline task set that covers writing, reasoning, and code generation. Set up sandbox environments to compare outputs side by side, capture user feedback, and measure objective metrics such as accuracy and response time. Expand the pilot to cover edge cases and domain-specific tasks, such as technical documentation or academic summaries. Document governance considerations (data handling, retention, and compliance) and ensure you have a clear plan for onboarding and training team members. Finally, synthesize your findings into a decision brief that contrasts strengths, weaknesses, and recommended use cases for each tool. This systematic approach helps you select the options that best fit your project's goals, risk profile, and resource constraints.
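A side-by-side pilot can be as simple as running the same baseline tasks through each candidate and recording pass/fail plus latency. In this sketch each engine is any function from prompt to response. The stub engines and the per-task `check` functions are hypothetical; in a real pilot each engine would wrap a vendor SDK call.

```python
import time
from typing import Callable

# Any prompt -> response function; real pilots would wrap each vendor's SDK here.
Engine = Callable[[str], str]
# (task_id, prompt, check) where check(response) returns True if acceptable.
Task = tuple[str, str, Callable[[str], bool]]


def run_pilot(engines: dict[str, Engine], tasks: list[Task]) -> list[dict]:
    """Run every task against every engine and return rows for a side-by-side report."""
    rows = []
    for task_id, prompt, check in tasks:
        for name, engine in engines.items():
            start = time.perf_counter()
            response = engine(prompt)
            latency_s = time.perf_counter() - start
            rows.append({"task": task_id, "engine": name, "response": response,
                         "passed": check(response), "latency_s": latency_s})
    return rows


def pass_rate(rows: list[dict], engine: str) -> float:
    """Fraction of tasks an engine passed."""
    mine = [r for r in rows if r["engine"] == engine]
    return sum(r["passed"] for r in mine) / len(mine)


# Hypothetical stub engines standing in for real candidates.
engines = {
    "echo": lambda prompt: prompt.upper(),
    "fixed": lambda prompt: "42",
}
tasks = [
    ("math", "What is 6*7?", lambda r: "42" in r),
    ("nonempty", "Say anything.", lambda r: len(r) > 0),
]
rows = run_pilot(engines, tasks)
for name in engines:
    print(name, pass_rate(rows, name))
```

Automated checks like these only cover objective criteria; pair them with the user feedback mentioned above for qualities such as tone and clarity.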

From pilot to production: governance, integration, and scale

As you scale from pilot to production, focus on governance and interoperability. Establish clear data-handling policies, including retention, anonymization, and audit trails. Build integration layers that allow you to swap out or combine tools as needed, rather than locking in a single provider. Consider implementing feature flags to switch between engines for specific tasks, enabling safer testing and incremental rollout. Finally, maintain an ongoing, data-informed review cadence to reassess your choice as models evolve and new capabilities emerge. This disciplined path ensures you remain aligned with your research and development objectives while staying mindful of privacy, cost, and performance trade-offs.
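An integration layer with per-task feature flags and an audit trail might look like the sketch below. The `Engine` protocol, `StubEngine` class, and flag names are hypothetical; real vendor SDKs expose different interfaces and would be wrapped in adapters. The log stores a SHA-256 hash of each prompt rather than the raw text, which is one way to keep an audit trail without retaining sensitive content.

```python
import hashlib
from datetime import datetime, timezone
from typing import Protocol


class Engine(Protocol):
    """Hypothetical common interface; wrap each vendor SDK in an adapter like this."""
    def complete(self, prompt: str) -> str: ...


class Router:
    """Routes each task type to an engine chosen by a feature flag and logs every call."""

    def __init__(self, engines: dict[str, Engine], flags: dict[str, str], default: str):
        if default not in engines:
            raise ValueError(f"default engine {default!r} is not registered")
        self.engines = engines
        self.flags = flags            # task type -> engine name; edit to roll out changes
        self.default = default
        self.audit_log: list[dict] = []

    def complete(self, task_type: str, prompt: str) -> str:
        name = self.flags.get(task_type, self.default)
        response = self.engines[name].complete(prompt)
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "task_type": task_type,
            "engine": name,
            # Hash instead of raw prompt to limit how much user data is retained.
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        })
        return response


class StubEngine:
    """Stand-in for a real provider adapter."""
    def __init__(self, name: str):
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"{self.name}: {prompt}"


router = Router(
    engines={"engine_a": StubEngine("engine_a"), "engine_b": StubEngine("engine_b")},
    flags={"code": "engine_b"},   # trial engine_b for coding tasks only
    default="engine_a",
)
print(router.complete("code", "write a test"))
print(router.complete("essay", "outline a summary"))
```

Flipping a single flag (for example, setting `router.flags["code"] = "engine_a"`) rolls a task type back without touching call sites, which supports the incremental rollout described above.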

Feature Comparison

| Feature | ChatGPT (OpenAI) | Claude (Anthropic) | Gemini (Google) | Bing AI (Microsoft) |
|---|---|---|---|---|
| Core language quality | High fluency with strong contextual reasoning | Emphasis on safety and grounded responses | Strong readability with ecosystem-aware prompts | Good factual alignment with search results |
| Safety & policy controls | Mature guardrails and enterprise policies | Rigorous safety constraints and content controls | Balanced safety with creative exploration | Policy controls integrated with Bing search results |
| Multimodal capabilities | Text-first with some image support in tiers | Moderate multimodal capabilities with emphasis on safety | Growing multimodal features tied to ecosystem tools | Integrated web search with generation and visuals |
| Developer experience | Robust API, strong docs, extensive examples | Clear safety settings and documentation | Mature SDKs and integration guides | Extensive enterprise tooling and integration options |
| Pricing model | Subscription-based with usage quotas | Tiered pricing and special enterprise terms | Per-user or per-project licensing options | Hybrid models with search-enabled pricing |
| Best for | General-purpose language tasks and coding help | Safety-focused research and analysis | Unified workflow in Google ecosystem | Hybrid search plus AI-assisted tasks in Microsoft stack |

Upsides

- Broad ecosystem and interoperability across platforms

- Strong language generation and flexible prompts

- Mature developer tooling and extensive docs

- Varied deployment options for different use cases

- Active communities and ongoing model improvements

Weaknesses

- Policy and safety constraints can limit exploration

- Cost structures can be complex for large-scale use

- Vendor-specific features may complicate cross-tool portability

- Data handling policies vary and require careful review

ChatGPT remains a solid baseline, but Claude, Gemini, and Bing AI offer compelling advantages in safety, ecosystem integration, and search-enhanced context.

Choose a tool based on your priorities: general-purpose writing and coding favor ChatGPT; safety-focused or ecosystem-integrated tasks may favor Claude, Gemini, or Bing AI. For research and education, align with governance and data handling needs before committing.

FAQ

Which AI tool is most comparable to ChatGPT for generic tasks?

Several tools are considered comparable to ChatGPT for general tasks, including Claude, Gemini, and Bing AI. Each offers strong language capabilities with different safety and ecosystem strengths. Your choice should reflect how you prioritize safety, integration, and cost.

Tools like Claude and Gemini are strong alternatives to ChatGPT for general tasks, depending on what matters most to you—safety, ecosystem, or price.

Do pricing models differ significantly between these tools?

Pricing models vary, with subscriptions, usage quotas, and enterprise terms. While all offer tiered plans, the total cost depends on usage volume, API calls, and required features like enterprise governance or advanced analytics.

Yes. Prices differ by plan type and usage, so evaluate total cost by your expected volume and required features.

How important is data privacy when comparing tools?

Data privacy is a critical consideration, especially in research and education. Review data retention, training usage policies, and options to opt out of data collection to protect sensitive information.

Privacy matters a lot. Check retention policies and opt-out options to avoid unwanted data usage.

Can these tools handle multimodal inputs?

Many tools offer multimodal capabilities, incorporating text and images, with varying levels of support. Confirm the specific modalities supported and how they affect latency and accuracy in your use case.

Yes, several support images or other data types, but check the exact modalities offered by each tool.

Are open-source alternatives viable replacements for ChatGPT?

Open-source options exist and can be viable for specific projects, especially where control over data and customization matters. They may require more in-house engineering and governance effort.

Open-source options exist, but they often need more setup and governance work.

What about model updates and API stability?

Model updates and API changes can affect workflows. Favor tools with transparent update schedules and backward-compatible changes to minimize disruption.

Look for predictable updates and stable APIs to keep projects running smoothly.

Key Takeaways

- Assess comparability across four axes: quality, safety, multimodal capability, and developer experience

- Prioritize governance and data handling for research and education use cases

- Test tools in parallel pilots to avoid vendor lock-in and optimize ROI

- Choose tools that fit your ecosystem and scale with your organization