AI Coding Tools: A Practical Comparison

A rigorous, developer-focused comparison of coding AI tools similar to ChatGPT, covering chat-based assistants, inline copilots, and IDE plugins, with criteria, tables, and practical guidance for selection.

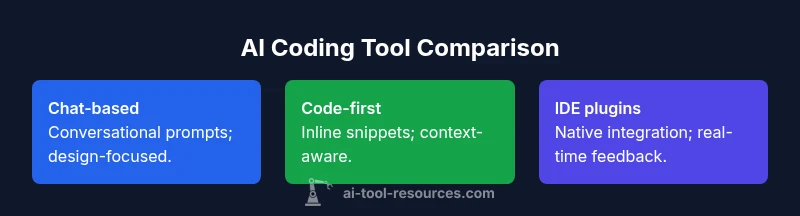

An AI tool like ChatGPT for coding acts as a coding assistant, offering code generation, explanations, and debugging support. The comparison chart below can help you decide which approach best fits your coding workflow.

Defining an AI tool like ChatGPT for coding

According to AI Tool Resources, an AI tool like ChatGPT for coding combines natural language understanding with code-aware generation to assist developers. In practice, these tools act as coding teammates that can draft boilerplate, explain errors, propose refactors, and sometimes suggest tests. The goal is not to replace a human engineer but to accelerate problem-solving, provide quick explanations, and surface alternatives during development workflows. Users should treat the output as a draft to be reviewed and tested. This article focuses on three archetypes: chat-based assistants that you talk to, code-first copilots that insert suggestions inside your editor, and IDE-integrated plugins that feel like native features. By understanding these categories, you can map your needs to a tool class that fits your team's processes.

How these tools fit into modern coding workflows

The modern development workflow frequently blends exploratory coding, pair-programming, and automated checks. Chat-based assistants shine when you want to pose questions in plain language, discuss architecture, or translate specs into initial code, while code-first copilots excel at drafting concrete snippets with contextual awareness. IDE plugins offer the tightest integration, acting as proactive advisors as you type. When evaluating options, consider how your team codes most often: rapid prototyping, large-scale refactors, or compliance-focused tasks. The right mix often includes more than one category, connected through a shared authentication and governance policy to ensure consistent outputs across projects.

Core capabilities you should expect

Modern coding AI tools vary in features, but most share core capabilities that matter for daily work. Code generation on request can produce scaffolding, function bodies, or entire modules given requirements. Explanations help you understand algorithms, patterns, and why a particular approach was chosen. Error analysis and debugging support can identify possible issues and propose fixes. Context awareness across a file or project improves relevance, while multi-language support broadens applicability. Finally, quality guards such as linting suggestions, unit-test scaffolding, and safety checks help you evolve code responsibly. When selecting a tool, verify that it supports your primary languages, aligns with your workflow, and offers easy integration with your editor or IDE.

Evaluation criteria to compare tools

To evaluate AI coding tools effectively, use consistent criteria: accuracy and reliability of generated code, speed and latency during interactions, and the usefulness of explanations. Consider integration depth with your editor or IDE, the quality of error diagnostics, and how outputs are validated (linting, tests, or static analysis). Security and privacy are critical: confirm data handling policies, whether prompts are logged, and whether on-premises options exist. Licensing and cost models should be understood: compare per-seat and usage-based pricing, free tiers, and enterprise plans. Finally, assess governance and compliance implications for your organization, including code ownership and data retention rules.
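One way to apply these criteria consistently is a weighted scorecard. The sketch below is only an illustration: the criterion names mirror the paragraph above, but the weights, the 1 to 5 scale, and the candidate scores are hypothetical placeholders, not measurements of any real product.

```python
from typing import Dict

# Hypothetical weights for the criteria named above; they sum to 1.0.
WEIGHTS: Dict[str, float] = {
    "accuracy": 0.30,
    "latency": 0.15,
    "explanations": 0.15,
    "integration": 0.15,
    "security": 0.15,
    "cost": 0.10,
}


def weighted_score(scores: Dict[str, float],
                   weights: Dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion scores (1 to 5) into one weighted total."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return round(sum(weights[name] * scores[name] for name in weights), 2)


# Illustrative scores only; replace with your own team's assessments.
candidates = {
    "chat_assistant": {"accuracy": 3, "latency": 3, "explanations": 5,
                       "integration": 2, "security": 3, "cost": 4},
    "ide_plugin": {"accuracy": 4, "latency": 4, "explanations": 3,
                   "integration": 5, "security": 4, "cost": 3},
}

# Rank candidates from highest to lowest weighted score.
ranking = sorted(candidates,
                 key=lambda name: weighted_score(candidates[name]),
                 reverse=True)
for name in ranking:
    print(name, weighted_score(candidates[name]))
```

Agreeing on the weights before anyone scores a tool tends to keep the comparison honest, since it prevents weights from being tuned to favor a preferred option.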

Potential pitfalls and risk management

Despite strong benefits, coding AI tools introduce risks. Hallucinations or incorrect code can slip in, especially with complex logic or edge cases. Treat outputs as drafts requiring human review and testing. Data privacy concerns arise when sending proprietary code to cloud services; prefer tools with transparent data handling and opt for on-premises options when possible. Dependencies on internet connectivity can disrupt workflows in offline scenarios. Licensing constraints may impact how generated code can be used in commercial products—read terms carefully. Establish a coding AI governance policy that includes review procedures, test coverage expectations, and roles for developers and reviewers.
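Part of a review procedure can be automated as a cheap first pass before a human looks at the draft. The following is a minimal sketch, assuming the generated code is Python; the function name `pre_review_findings` and the `RISKY_CALLS` set are hypothetical choices for illustration. It only checks syntax and flags a few risky built-in calls, so it complements human review and tests rather than replacing them.

```python
import ast
from typing import List

# Hypothetical list of built-ins a team might want a reviewer to inspect.
RISKY_CALLS = {"eval", "exec", "compile", "__import__"}


def pre_review_findings(source: str) -> List[str]:
    """Return issues to raise before human review; empty if none are found."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        # Unparseable output is rejected immediately.
        return [f"syntax error on line {exc.lineno}: {exc.msg}"]

    findings = []
    for node in ast.walk(tree):
        # Flag direct calls such as eval(...) for closer inspection.
        if (isinstance(node, ast.Call)
                and isinstance(node.func, ast.Name)
                and node.func.id in RISKY_CALLS):
            findings.append(
                f"line {node.lineno}: call to {node.func.id}() needs human review"
            )
    return findings


generated = "def parse(expr):\n    return eval(expr)\n"
for finding in pre_review_findings(generated):
    print(finding)
```

An empty result means only that the draft parses and avoids the listed calls; correctness still has to be established by tests and a reviewer.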

Practical integration scenarios

Teams often blend tools to cover diverse needs. For rapid prototyping, a chat-based assistant can translate features into starter code, sketch API designs, and propose test ideas. For ongoing development, a code-first copilot within the editor can draft snippets, fill in boilerplate, and suggest refactors while maintaining project structure. In large organizations, IDE plugins with policy controls can enforce coding standards, run automated checks, and integrate with ticketing or CI pipelines. A typical workflow might start with a chat session for design questions, move to inline suggestions during coding, and finish with automated tests and code reviews in which the prompts used are recorded as justification notes.

Security, privacy, and governance considerations

Security-conscious teams must review how prompts and code are transmitted and stored. Prefer tools that offer data handling disclosures, encryption in transit and at rest, and options for private instances. Governance should define who can use AI tools for particular codebases, what data can be uploaded, and how outputs are audited. Ensure that generated code does not inadvertently leak secrets or proprietary strategies. Establish clear guidelines for when human review is required and how to document AI-assisted decisions for future audits.
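One practical safeguard against leaking secrets is to redact likely credentials from a prompt before it leaves the developer's machine. The sketch below is a simplified illustration: the patterns and the `redact` helper are assumptions made for this example, and a real deployment would more likely rely on a maintained secret scanner with patterns tuned to its own credentials.

```python
import re
from typing import List, Tuple

# Hypothetical patterns for illustration: (label, pattern, replacement).
SECRET_PATTERNS: List[Tuple[str, "re.Pattern[str]", str]] = [
    # AWS access key IDs start with "AKIA" followed by 16 characters.
    ("aws_access_key_id",
     re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
     "[REDACTED:aws_access_key_id]"),
    # PEM-encoded private key blocks.
    ("private_key_block",
     re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----.*?"
                r"-----END [A-Z ]*PRIVATE KEY-----", re.DOTALL),
     "[REDACTED:private_key_block]"),
    # Quoted values assigned to names containing password, secret, etc.
    ("assigned_secret",
     re.compile(r"(?i)(\w*(?:password|secret|api_key|token)\w*)"
                r"(\s*[:=]\s*)(['\"])[^'\"]+\3"),
     r"\1\2\3[REDACTED]\3"),
]


def redact(text: str) -> Tuple[str, List[str]]:
    """Return the text with likely secrets masked, plus the labels that matched."""
    findings = []
    for label, pattern, replacement in SECRET_PATTERNS:
        text, count = pattern.subn(replacement, text)
        if count:
            findings.append(label)
    return text, findings


prompt = 'Why does this fail?\nDB_PASSWORD = "hunter2"\nconnect(DB_PASSWORD)\n'
safe_prompt, labels = redact(prompt)
print(safe_prompt)
print("redacted:", labels)
```

Logging the matched labels (never the secret values) also gives auditors a record of how often redaction was needed.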

Performance, latency, and reliability factors

Latency matters when you depend on AI assistance for real-time coding. Tools with robust local context and efficient models reduce wait times, but may trade off some depth of reasoning versus larger cloud models. Consider uptime guarantees, regional availability, and fallbacks if the AI service experiences outages. For mission-critical projects, maintain parallel human reviews and do not rely on AI outputs as the sole source of truth. Reliability also includes consistent formatting, adherence to project conventions, and the ability to explain decisions in a reproducible manner.
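A fallback for outages can be as simple as trying providers in order of preference. The sketch below assumes each provider is a plain function that raises `TimeoutError` or `ConnectionError` when unavailable; `cloud_model` and `local_model` are hypothetical stand-ins, not real client libraries.

```python
from typing import Callable, Optional, Sequence

# A provider takes a prompt and returns a suggestion (hypothetical interface).
Suggester = Callable[[str], str]


def suggest_with_fallback(prompt: str,
                          providers: Sequence[Suggester]) -> Optional[str]:
    """Try each provider in order; return None if all are unavailable."""
    for provider in providers:
        try:
            return provider(prompt)
        except (TimeoutError, ConnectionError) as exc:
            name = getattr(provider, "__name__", "provider")
            print(f"{name} unavailable: {exc}")
    return None


def cloud_model(prompt: str) -> str:
    # Stand-in that simulates an outage of a larger cloud model.
    raise TimeoutError("cloud service did not respond")


def local_model(prompt: str) -> str:
    # Stand-in for a smaller, faster local model.
    return f"# TODO: draft for: {prompt}"


result = suggest_with_fallback("parse a CSV file", [cloud_model, local_model])
print(result)
```

Returning `None` when every provider fails keeps the caller in control: the editor can simply show no suggestion instead of blocking the developer.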

Team adoption patterns and training

Successful adoption hinges on clear onboarding and ongoing education. Provide developers with quick-start playbooks, example prompts, and templates for common tasks (e.g., creating a REST API, writing tests, or performing refactors). Encourage a culture of code reviews that explicitly address AI-generated outputs. Track learning outcomes, measure changes in cycle time, and gather feedback on tool ergonomics. Establish a champion or guild within the team to share best practices and update prompts as the codebase evolves.

How to run a hands-on evaluation

A practical evaluation starts with a representative set of tasks: a feature from your backlog, a debugging session, and a small refactor. Run these tasks across at least two tools or tool classes to compare outcomes, latency, and the ease of integration. Capture metrics like time-to-first-result, code quality after human review, and the number of iterations required to reach a satisfactory solution. Involve developers from different subteams to gauge usability across roles. Document findings with reproducible scripts and share learnings in a mid-cycle review.
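The metrics above are easier to compare when every run is recorded in the same shape. The following sketch assumes a 1 to 5 review score assigned by a human reviewer; the `TaskRun` fields, the tool names, and all the numbers are hypothetical sample data.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class TaskRun:
    tool: str
    task: str
    seconds_to_first_result: float
    iterations: int    # prompts needed to reach a satisfactory solution
    review_score: int  # 1 to 5, assigned after human review


def summarize(runs: List[TaskRun]) -> Dict[str, Dict[str, float]]:
    """Average each metric per tool across all recorded tasks."""
    by_tool: Dict[str, List[TaskRun]] = {}
    for run in runs:
        by_tool.setdefault(run.tool, []).append(run)
    return {
        tool: {
            "mean_seconds_to_first_result": round(
                mean([r.seconds_to_first_result for r in group]), 2),
            "mean_iterations": round(mean([r.iterations for r in group]), 2),
            "mean_review_score": round(
                mean([r.review_score for r in group]), 2),
        }
        for tool, group in by_tool.items()
    }


# Hypothetical sample data: one feature, one debugging session, one refactor.
runs = [
    TaskRun("tool_a", "feature", 12.0, 2, 4),
    TaskRun("tool_a", "debugging", 30.0, 3, 3),
    TaskRun("tool_a", "refactor", 9.0, 1, 5),
    TaskRun("tool_b", "feature", 20.0, 4, 3),
    TaskRun("tool_b", "debugging", 25.0, 2, 4),
    TaskRun("tool_b", "refactor", 15.0, 3, 4),
]

for tool, metrics in summarize(runs).items():
    print(tool, metrics)
```

Keeping the raw runs alongside the summary makes the evaluation reproducible, since anyone can recompute the averages or add a new tool later.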

The future of coding assistants

The landscape of AI coding tools is evolving toward deeper integration, better multi-language support, and stronger governance features. Expect improved code synthesis that respects project-wide patterns and domain constraints, along with more transparent explanations and traceable decision logs. As models mature, the emphasis will shift from raw capability to reliability, safety, and alignment with team standards. Staying engaged with the AI Tool Resources community can help teams adopt best practices and stay ahead of changes.

Feature Comparison

| Feature | Chat-based coding assistants | Code-first copilots | IDE plugins |
|---|---|---|---|
| Primary interaction model | Conversational prompts for coding tasks and explanations | Inline code suggestions guided by context | Native editor features with AI-assisted commands |
| Latency and responsiveness | Variable latency depending on workload and model size | Low-latency, near-instant snippets during typing | Low to moderate latency tied to IDE performance |
| Code quality and correctness checks | Depends on prompt quality; requires strong human review | Contextual suggestions with internal checks and tests | Tightly coupled with project rules, linting, and tests |
| Data privacy and on-prem options | Primarily cloud-based; prompts may be logged | Cloud-based with potential private instances | Often supports on-prem or enterprise deployments |
| Pricing model (illustrative) | Usage-based or per-seat cloud pricing | Subscription or tiered plans | Bundled with IDE or platform licenses |
| Best for | Exploratory coding, learning, and brainstorming | Rapid drafting, boilerplate generation, and micro-tasks | Deep IDE integration and seamless editor experience |

Upsides

- Boosts coding speed and reduces boilerplate

- Supports multiple languages and frameworks

- Enhances learning with explanations and examples

- Can be integrated into editors and CI workflows

Weaknesses

- Risk of incorrect or hallucinated code without human review

- Privacy concerns around proprietary code and prompts

- Dependency on cloud services; potential uptime issues

- Licensing and usage restrictions can complicate distribution

Choose a mixed approach: IDE-integrated AI tools for production workflows; chat-based assistants for design and learning.

For day-to-day coding, IDE plugins with governance offer the strongest reliability and integration. Chat-based tools excel in exploration and learning, complementing IDE-based workflows rather than replacing them. A layered setup reduces risk and maximizes productivity.

FAQ

What is an AI tool like ChatGPT for coding?

An AI tool like ChatGPT for coding is an AI assistant designed to help developers write, understand, and debug code. It can generate snippets, explain logic, and offer alternatives. These tools are typically accessed via chat interfaces, inline editors, or IDE plugins and should be used as aids rather than sole sources of truth.

An AI coding assistant helps you draft and explain code, but you should still review and test everything it suggests.

Should I use a chat-based tool or an IDE plugin first?

The choice depends on your workflow. Chat-based tools are great for design discussions and learning, while IDE plugins offer tighter integration, lower latency, and stronger enforcement of project standards. For most teams, a combination provides both flexibility and reliability.

Start with an IDE plugin for day-to-day coding, and use chat tools for design questions and learning.

How do I evaluate accuracy and safety of outputs?

Test outputs against known requirements, run automated tests, and perform code reviews to catch mistakes. Review data-handling policies to understand what information is sent to the AI service and how outputs are stored or logged.

Always run tests and have a human review AI-generated code before merging.

Can these tools replace developers?

No. These tools augment developers by handling repetitive tasks, suggesting ideas, and accelerating research. Human expertise remains essential for design decisions, critical thinking, and quality assurance.

They augment, not replace, human developers.

What about privacy and licensing when using AI for coding?

Check whether prompts are logged, whether code is stored, and what rights you have to reuse AI-generated code. Prefer vendors with transparent policies and consider enterprise controls for sensitive projects.

Understand data handling and licensing before adopting a tool for proprietary code.

Are there risks with multi-language support?

Multi-language support expands capabilities but can introduce inconsistent quality across languages. Validate outputs with language-specific linters and tests to maintain reliability.

Quality varies by language; verify with tests and linters.

Key Takeaways

- Prioritize tool integration with your editor for best UX

- Treat AI-generated code as a draft: review and test thoroughly

- Define governance to manage data, licensing, and ownership

- Evaluate across tasks: prototyping, debugging, and refactoring

- Plan for a mix of tool classes to cover diverse needs