Difference Between AI Tool and LLM: A Comprehensive Comparison

Explore the difference between an AI tool and an LLM with an analytical framework, practical guidance, and a decision checklist for developers and researchers.

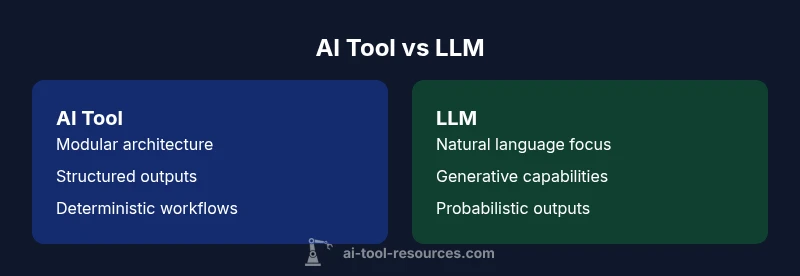

This quick comparison highlights the key distinction between an AI tool and an LLM. An AI tool is a software component designed to perform a task using AI, often with modular inputs and outputs. An LLM is a large language model focused on generating or understanding language, typically powering conversation, code, or text tasks.

Difference in scope and definitions

Understanding the difference between an AI tool and an LLM starts with scope. An AI tool is a software component designed to perform a specific task using artificial intelligence, often assembled from modular parts such as data ingestion, processing, and output components. By contrast, an LLM is a large language model whose primary strength is natural language understanding and generation. The distinction matters most when planning development, because it shapes data pipelines, evaluation metrics, and deployment strategies. According to AI Tool Resources, distinguishing these terms helps teams avoid misapplied tooling and sets clear expectations for what the system can and cannot do. It also informs governance, risk management, and integration work across the software stack.

Historical context and evolution

The concept of an AI tool grew out of a broader ecosystem in which practitioners stitched together models, APIs, and data processing steps to accomplish concrete tasks. Early tools often focused on prediction or classification within a narrow domain. LLMs evolved later as powerful language engines trained on massive corpora, capable of generative tasks such as writing, summarization, and dialogue. This historical arc explains the difference between an AI tool and an LLM: tools emphasize modularity and end-to-end pipelines, while LLMs emphasize language-centric capabilities that can serve as foundational engines for multiple tools. AI Tool Resources notes that the line between tool and model continually shifts as architectures converge and new integrations emerge.

Core capabilities and limits

A core capability of AI tools is composition: data ingestion, transformation, model application, and result delivery. They excel when the task is structured, traceable, and auditable. LLMs excel at flexible language tasks, including text generation, translation, and reasoning with prompts. However, AI tools may struggle with language nuance unless they incorporate a capable language model. Conversely, LLMs can lack deterministic outputs without carefully designed prompting and safeguards. The difference between an AI tool and an LLM thus often centers on determinism versus flexibility, precision versus generality, and the balance between rule-based logic and probabilistic inference. For developers, choosing between building a tool and deploying an LLM hinges on the required reliability, latency, and governance needs.

Input, output, and interaction patterns

Inputs for AI tools tend to be structured, often arriving as datasets, API calls, or file streams. Outputs are typically machine-readable signals, structured results, or actions in a workflow. LLMs accept natural language prompts or structured prompts and return text, code, or structured data such as JSON. When integrating an LLM into a tool, teams often wrap it with additional processing layers that check safety, formatting, and consistency. The difference between an AI tool and an LLM becomes practical here: pure language tasks may be handled efficiently by an LLM alone, while multi-step workflows benefit from a tool-based architecture that orchestrates components with clear interfaces.
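The wrapping layer described above can be sketched as a small validation loop. This is a minimal illustration, not a definitive implementation: `call_with_validation`, `required_keys`, and the stub model are hypothetical names, and the "model" is any text-in, text-out callable rather than a specific vendor API.

```python
import json
from typing import Callable


def call_with_validation(
    llm: Callable[[str], str],
    prompt: str,
    required_keys: set[str],
    max_attempts: int = 3,
) -> dict:
    """Call a text-in/text-out model and return a dict containing required_keys.

    Retries when the reply is not valid JSON, is not a JSON object,
    or lacks one of the required keys.
    """
    last_error = "no attempts made"
    for _ in range(max_attempts):
        raw = llm(prompt)
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError as exc:
            last_error = f"invalid JSON: {exc}"
            continue
        if not isinstance(parsed, dict):
            last_error = "top-level value is not an object"
            continue
        missing = required_keys - set(parsed)
        if missing:
            last_error = f"missing keys: {sorted(missing)}"
            continue
        return parsed
    raise ValueError(f"model output failed validation: {last_error}")


def make_flaky_stub() -> Callable[[str], str]:
    """Hypothetical stand-in for a model: free text first, valid JSON second."""
    replies = iter([
        "Sure! Here is the answer.",
        '{"label": "refund", "confidence": 0.9}',
    ])
    return lambda prompt: next(replies)


result = call_with_validation(
    make_flaky_stub(),
    "Classify: 'I want my money back'",
    {"label", "confidence"},
)
```

The design choice here is that the surrounding tool, not the model, owns the output contract: downstream components only ever see a dictionary with the agreed keys, or an explicit error.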

Data, training, and provenance

AI tools rely on explicit data pipelines and often reuse pre-trained components with fine-tuning where appropriate. They emphasize traceability, data provenance, and versioned components to ensure reproducibility. LLMs depend on large-scale pretraining on diverse text corpora, with ongoing fine-tuning or instruction-tuning to steer behavior. Because an LLM's outputs cannot easily be traced back to specific training data, they typically need to be evaluated against guardrails, safety checks, and policy. The difference between an AI tool and an LLM thus informs how you source data, document lineage, and monitor drift over time.
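One way to make traceability and versioned components concrete is to attach a small lineage record to every component run. The sketch below is an assumption-laden illustration: `ProvenanceRecord`, `run_with_provenance`, and the example component are hypothetical, and it assumes inputs and outputs are JSON-serializable.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ProvenanceRecord:
    component: str
    component_version: str
    input_sha256: str
    output_sha256: str


def sha256_of(payload: Any) -> str:
    """Hash a JSON-serializable payload in a canonical (key-sorted) form."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def run_with_provenance(
    component: str,
    version: str,
    fn: Callable[[Any], Any],
    payload: Any,
) -> tuple[Any, ProvenanceRecord]:
    """Run one component and return its output plus a lineage record."""
    output = fn(payload)
    record = ProvenanceRecord(component, version, sha256_of(payload), sha256_of(output))
    return output, record


def normalize(row: dict) -> dict:
    """Hypothetical pipeline component: lowercases a single text field."""
    return {"text": row["text"].lower()}


out_a, rec_a = run_with_provenance("normalize", "1.0.0", normalize, {"text": "Hello"})
out_b, rec_b = run_with_provenance("normalize", "1.0.0", normalize, {"text": "Hello"})
out_c, rec_c = run_with_provenance("normalize", "1.0.0", normalize, {"text": "Goodbye"})
```

For a deterministic component, identical inputs and versions yield identical records, which is what makes a run reproducible. For an LLM call the output hash may differ between runs, and that difference is itself a useful drift signal.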

Architecture and components

An AI tool architecture typically combines data ingestion modules, feature engineering steps, model inference components, and an orchestration layer that connects outputs to downstream systems. LLM architectures center on transformer-based language encoders and decoders built around attention mechanisms, and they are typically used through prompt-response interaction patterns. When used together, AI tools may embed LLM components as language engines, blurring the line between tool and model. The difference between an AI tool and an LLM is primarily architectural: modular pipelines versus broad language capability cores.
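The modular architecture described above can be sketched as a list of named stages run by a thin orchestration layer. This is a toy example under stated assumptions: the stage names, the `Pipeline` class, and `describe_with_stub_llm` (a stand-in for an embedded language engine) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

Stage = Callable[[dict], dict]


@dataclass
class Pipeline:
    """Orchestration layer: runs named stages in order and records a trace."""
    stages: list[tuple[str, Stage]]

    def run(self, record: dict) -> dict:
        trace: list[str] = []
        for name, stage in self.stages:
            record = stage(record)
            trace.append(name)
        return {**record, "trace": trace}


def ingest(record: dict) -> dict:
    # Data ingestion: clean up the raw input.
    return {"text": record["raw"].strip()}


def featurize(record: dict) -> dict:
    # Feature engineering: derive a simple numeric feature.
    return {**record, "word_count": len(record["text"].split())}


def infer(record: dict) -> dict:
    # Model inference: a trivial rule stands in for a trained classifier.
    label = "long" if record["word_count"] > 5 else "short"
    return {**record, "label": label}


def describe_with_stub_llm(record: dict) -> dict:
    # Hypothetical language engine: a real system would call an LLM here.
    summary = f"A {record['label']} message of {record['word_count']} words."
    return {**record, "summary": summary}


pipeline = Pipeline([
    ("ingest", ingest),
    ("featurize", featurize),
    ("infer", infer),
    ("describe", describe_with_stub_llm),
])
result = pipeline.run({"raw": "  hello world  "})
```

Because each stage shares the same dict-in, dict-out interface, the language stage can be swapped or removed without touching the rest of the tool.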

Use cases and decision guidelines

Use AI tools when you need fixed, auditable outcomes, repeatable processes, and strong integration with existing systems. Use LLMs when the priority is natural language understanding or generation across diverse topics, contexts, or user intents. The difference between an AI tool and an LLM becomes a decision framework: ask whether you require deterministic results or flexible language capabilities. Hybrid patterns, which combine a structured tool with an LLM as a language bridge, are common and practical, especially in customer support, content generation, and data-to-text pipelines.
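A hybrid pattern for customer support might route requests that a deterministic lookup can answer to the tool path and send everything else to the language model. The sketch below is illustrative only: `route`, the `ORDER_ID` pattern, the in-memory `orders` table, and the stub model are assumptions, not part of any real system.

```python
import re
from typing import Callable

# Assumed message convention: "order 1042" or "order #1042".
ORDER_ID = re.compile(r"\border\s+#?(\d+)\b", re.IGNORECASE)


def route(
    message: str,
    orders: dict[str, str],
    llm: Callable[[str], str],
) -> tuple[str, str]:
    """Return (path, reply), where path is "tool" or "llm"."""
    match = ORDER_ID.search(message)
    if match and match.group(1) in orders:
        order_id = match.group(1)
        # Deterministic, auditable answer from structured data.
        return "tool", f"Order {order_id} is {orders[order_id]}."
    # Open-ended language task: defer to the model.
    return "llm", llm(message)


def stub_llm(message: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return "Here is a draft reply to your request."


orders = {"1042": "shipped"}
status_reply = route("Where is order #1042?", orders, stub_llm)
draft_reply = route("Can you help me write a complaint?", orders, stub_llm)
unknown_reply = route("Where is order 9999?", orders, stub_llm)
```

Requests that name a known order never reach the model, which keeps those answers repeatable. Unknown orders fall through to the language path here, although a production system might prefer an explicit "not found" reply.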

Performance, latency, and cost considerations

AI tools often deliver predictable latency and resource usage, especially when they rely on static models and cached data. LLMs can incur higher inference costs and latency due to model size and the complexity of language tasks. However, modern architectures and caching strategies can mitigate delay for both categories. The difference between an AI tool and an LLM becomes practical in budgeting and SLI/SLO planning: tools may be cheaper per transaction, while LLMs may offer broader capabilities that reduce the need for multiple specialized components.
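As a rough illustration of the caching idea, the sketch below wraps a model callable in an exact-match cache and counts hits and misses so the number of paid calls can be estimated. `CachedModel`, the whitespace and case normalization, and the flat `cost_per_call` are all assumptions made for the example. Real pricing is usually token-based, and case-insensitive keys are not appropriate for every prompt.

```python
from typing import Callable


class CachedModel:
    """Exact-match response cache around a text-in/text-out model."""

    def __init__(self, model: Callable[[str], str]) -> None:
        self._model = model
        self._cache: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def __call__(self, prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share a key.
        key = " ".join(prompt.lower().split())
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        answer = self._model(prompt)
        self._cache[key] = answer
        return answer

    def estimated_cost(self, cost_per_call: float) -> float:
        # Only cache misses reach the underlying model.
        return self.misses * cost_per_call


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for an expensive model call."""
    return f"answer to: {prompt}"


cached = CachedModel(stub_model)
first = cached("What is an LLM?")
second = cached("what is  an llm?")   # same key after normalization
third = cached("Define AI tool")
```

With three requests and one cache hit, only two calls reach the model, so at an assumed flat cost of 0.5 per call the estimate is 1.0 rather than 1.5.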

Risk, governance, and ethics

Tools that are purpose-built for a narrow function tend to have clearer risk boundaries, whereas LLMs introduce broader risk concerns around hallucinations, bias, and data leakage. Mitigation strategies include prompt guards, model monitoring, human-in-the-loop review, and robust testing. The difference between an AI tool and an LLM is critical for governance: you should implement different risk controls for deterministic and probabilistic outputs. AI Tool Resources emphasizes establishing guardrails early and aligning with organizational policy.
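Two of the mitigations named above, prompt guards and human-in-the-loop review, can be sketched in a few lines. Everything here is a simplified assumption: the blocked phrases, the email pattern, the `review_threshold`, and especially the `confidence` score, which is assumed to come from some upstream component because a language model does not supply a calibrated one by default.

```python
import re
from dataclasses import dataclass, field

# Deliberately simple pattern; real PII detection needs much more than this.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
# Hypothetical deny-list for the prompt guard.
BLOCKED_PHRASES = ("ignore previous instructions", "reveal your system prompt")


def prompt_allowed(prompt: str) -> bool:
    """Prompt guard: reject prompts containing a blocked phrase."""
    lowered = prompt.lower()
    return not any(phrase in lowered for phrase in BLOCKED_PHRASES)


@dataclass
class GuardedOutput:
    text: str
    needs_human_review: bool
    reasons: list[str] = field(default_factory=list)


def guard_output(
    text: str,
    confidence: float,
    review_threshold: float = 0.7,
) -> GuardedOutput:
    """Redact email addresses and flag low-confidence output for review."""
    reasons: list[str] = []
    redacted = EMAIL.sub("[redacted email]", text)
    if redacted != text:
        reasons.append("email address redacted")
    needs_review = confidence < review_threshold
    if needs_review:
        reasons.append("confidence below review threshold")
    return GuardedOutput(redacted, needs_review, reasons)


safe = guard_output("Contact jane@example.com for help", confidence=0.9)
risky = guard_output("The refund policy is probably 90 days", confidence=0.4)
```

A deterministic tool would usually need only the input validation half of this. The output checks and the review queue are the extra controls that probabilistic output calls for.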

Integration and deployment considerations

Deployment of AI tools often follows a modular pattern: deploy components, connect with data sources, and enable observability. LLM deployment typically requires tooling for prompt management, safety controls, and continuous evaluation against quality metrics. The difference between an AI tool and an LLM guides integration scope: tools favor operationalized pipelines, while LLMs require language-specific safeguards and prompt engineering practices. Teams frequently pursue hybrid deployments to maximize benefits while minimizing risk.
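Continuous evaluation against quality metrics can start as a small regression suite that runs before each prompt or model change is deployed. The sketch below assumes a hypothetical `pass_rate` helper, a stub model, and an arbitrary release threshold. Real suites are larger and usually score outputs with more than a boolean check.

```python
from typing import Callable

# Each case pairs a prompt with a check on the model's reply.
EvalCase = tuple[str, Callable[[str], bool]]


def pass_rate(model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Fraction of evaluation cases whose check accepts the model output."""
    if not cases:
        raise ValueError("at least one evaluation case is required")
    passed = sum(1 for prompt, check in cases if check(model(prompt)))
    return passed / len(cases)


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a deployed model."""
    return "4" if "2 + 2" in prompt else "unknown"


cases: list[EvalCase] = [
    ("What is 2 + 2?", lambda out: out.strip() == "4"),
    ("What is the capital of France?", lambda out: "Paris" in out),
]

rate = pass_rate(stub_model, cases)
RELEASE_THRESHOLD = 0.9  # assumed quality gate for this example
release_ok = rate >= RELEASE_THRESHOLD
```

The same harness works for a deterministic tool, where the expected pass rate is simply 100 percent. For an LLM the threshold becomes a negotiated quality target that is tracked over time.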

Practical decision framework and checklists

A pragmatic approach is to map your requirements to a decision checklist: What is the primary task? Is output deterministic or probabilistic? What data governance and privacy constraints exist? Do you need rapid iteration and natural language support? Answering these questions with the difference between an AI tool and an LLM in mind helps teams pick the right architecture and governance model. AI Tool Resources recommends starting with a minimal viable pipeline and validating with real user tasks before scaling.
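The checklist questions can be encoded as a small function so that the reasoning behind a choice is explicit and reviewable. The rules below are one possible reading of the guidance in this article, not an authoritative policy. The `Requirements` fields and the `recommend` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    needs_deterministic_output: bool
    language_heavy: bool
    strict_data_governance: bool
    needs_rapid_iteration: bool


def recommend(req: Requirements) -> str:
    """Return "ai_tool", "llm", or "hybrid" from the checklist answers."""
    if req.language_heavy:
        # Language dominates, but determinism or governance calls for structure around it.
        if req.needs_deterministic_output or req.strict_data_governance:
            return "hybrid"
        return "llm"
    if req.needs_deterministic_output or req.strict_data_governance:
        return "ai_tool"
    # No strong constraint either way: favor fast prototyping if it is needed.
    return "llm" if req.needs_rapid_iteration else "ai_tool"


invoice_matching = Requirements(
    needs_deterministic_output=True,
    language_heavy=False,
    strict_data_governance=True,
    needs_rapid_iteration=False,
)
support_chat = Requirements(
    needs_deterministic_output=True,
    language_heavy=True,
    strict_data_governance=False,
    needs_rapid_iteration=True,
)
brainstorming = Requirements(
    needs_deterministic_output=False,
    language_heavy=True,
    strict_data_governance=False,
    needs_rapid_iteration=True,
)
choices = [recommend(r) for r in (invoice_matching, support_chat, brainstorming)]
```

Writing the rules down this way makes it easy to challenge them in review, for example by arguing that strict governance alone should not force a hybrid design.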

Future trends and hybrid approaches

Industry watchers anticipate increasing convergence, with language models powering more modular AI tools and enabling language-first interfaces within structured workflows. The difference between an AI tool and an LLM may gradually blur as platforms offer configurable language components embedded in toolchains. Expect more emphasis on responsible AI, interpretability, and cross-domain capabilities, with hybrid stacks that combine LLMs for language tasks and specialized tools for domain-specific computation. AI Tool Resources notes that adaptability and governance will determine successful adoption in the coming years.

Comparison

| Feature | AI Tool | LLM |
|---|---|---|
| Core function | Modular, task-specific components | Language-centric, generative engine |
| Typical inputs | Structured data, APIs, files | Prompts, natural language or structured prompts |
| Output type | Structured signals, actions, or data | Text, code, or structured data |
| Training data | Pre-trained components, domain adaptation | Large-scale pretraining with further fine-tuning |
| Deployment considerations | Orchestrated pipelines, observability | Prompt management, safety, and monitoring |
| Best use case | Predictable, auditable workflows | Language-heavy tasks and flexible generation |

Upsides

- Clear boundaries for project scoping and governance

- Modular design supports reuse and composability

- Broad ecosystem of integrations and tooling

- Potentially lower latency for fixed tasks

Weaknesses

- May require more integration effort and design work

- Deterministic outputs may limit flexibility

- LLMs introduce safety, bias, and privacy concerns when language tasks dominate

AI tools excel at modular, auditable workflows; LLMs excel at language-centric tasks, with hybrid patterns often delivering the best overall value.

If your task is well-defined and data-driven, start with AI tools. If language understanding/generation dominates, lean on LLMs, and consider a hybrid approach for maximum flexibility and control.

FAQ

What is an AI tool?

An AI tool is a software component or set of components designed to perform a specific task using artificial intelligence. It typically includes data ingestion, processing, model inference, and output delivery, with clear interfaces and governance.

An AI tool is a modular component that handles a defined AI task with clear inputs and outputs.

What is an LLM?

An LLM, or large language model, is a neural network trained on vast text data to perform language-focused tasks such as generation, completion, translation, and reasoning. It emphasizes natural language capabilities over fixed, structured outputs.

An LLM is a big language model that generates or analyzes text.

Can an LLM be used as an AI tool?

Yes. An LLM can power language-based AI tools, serving as the language engine within a larger, modular system. The difference between an AI tool and an LLM then becomes one of role and scope: whether the model is used as a component or as the core interface.

Yes, you can use an LLM inside a broader tool, but they remain distinct in function.

When should I choose an AI tool over an LLM?

Choose an AI tool when you need deterministic, auditable results and tight integration with data pipelines. Opt for an LLM when language tasks drive outcomes, such as chat, drafting, or summarization, and when flexibility is more valuable than strict determinism.

Pick AI tools for reliability; choose LLMs for language-heavy tasks.

What about costs and performance?

Costs and performance depend on the workload. AI tools may have lower per-task costs and predictable latency, while LLMs can incur higher inference costs and latency due to model size. Hybrid approaches can balance budget with capability.

Costs vary; AI tools are often cheaper per task, while LLMs cost more but offer language versatility.

How do I govern safety and ethics?

Governance involves guardrails, monitoring, data privacy controls, and human-in-the-loop checks. For LLMs, implement prompt safety, content filters, and model usage policies to reduce risk and bias.

Set safety rules, monitor outputs, and use human checks where needed.

Key Takeaways

- Define task scope before choosing tooling.

- Use AI tools for modular pipelines and governance.

- Leverage LLMs for language-heavy tasks and rapid prototyping.

- Consider hybrid designs to balance reliability and flexibility.

- Plan for governance and safety from the outset.