AI vs AI Tool: Key Differences and Use Cases

Compare AI vs AI tools to decide when to use general-purpose AI models versus specialized AI tooling. Get definitions, use cases, decision criteria, and practical guidance for builders.

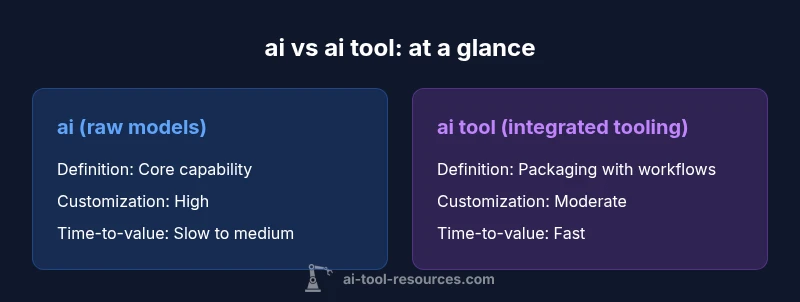

AI vs AI tool is not a question with a single answer; it calls for a decision framework. In short, AI models represent raw capability, while AI tools package that capability with workflows, guardrails, and integrations. The AI Tool Resources team notes that the choice hinges on goals, data strategy, and team proficiency, and that no option fits every case. This guide breaks down when each approach shines.

What AI vs AI tool means

In modern AI discussions, people often conflate AI itself with the tools built around it. At a high level, AI refers to the underlying models, algorithms, and data pipelines that generate predictions, classifications, or generative outputs. An AI tool, by contrast, is a packaged solution: an application layer that embeds those capabilities into a usable workflow.

Core differentiators: definitions, scope, and control

AI is the underlying capability: models that ingest data, learn patterns, and generate outputs. It is conceptual and exists whether or not a specific product is built around it. An AI tool wraps that capability with interfaces, prebuilt components, data pipelines, governance controls, authentication, and deployment options. Scope is the first differentiator: AI is the source of capability, while an AI tool is the channel through which that capability reaches users. Control is the second: with raw models you manage data quality, bias, drift, and monitoring yourself, whereas AI tools provide built-in monitors, usage limits, audit trails, and compliance features. The choice therefore affects a team's speed, risk posture, and collaboration patterns. In practice, many teams adopt a blended approach in which core models feed a managed toolchain that enforces guardrails and integrates with existing systems. The AI Tool Resources team emphasizes mapping requirements to a spectrum of options rather than forcing a single solution.
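To make the scope and control distinction concrete, here is a minimal Python sketch, assuming a toy stand-in for the model. `raw_model` and `GuardedTool` are hypothetical names, not any vendor's API: the raw function only produces output, while the wrapper adds two of the tool-layer features mentioned above, a usage limit and an audit trail.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


def raw_model(prompt: str) -> str:
    """Hypothetical stand-in for a raw model: capability only, no guardrails."""
    return prompt.upper()


@dataclass
class GuardedTool:
    """Hypothetical tool layer that adds a usage limit and an audit trail."""
    model: Callable[[str], str]
    max_calls: int
    audit_log: list = field(default_factory=list)

    def run(self, user: str, prompt: str) -> str:
        # Enforce a simple global usage limit based on completed calls.
        if len(self.audit_log) >= self.max_calls:
            raise RuntimeError("usage limit reached")
        output = self.model(prompt)
        # Record who called the model and when, so usage can be audited later.
        self.audit_log.append({
            "user": user,
            "time": datetime.now(timezone.utc).isoformat(),
            "prompt_chars": len(prompt),
        })
        return output
```

Calling `raw_model` directly leaves limits and logging to you; routing the same function through `GuardedTool(model=raw_model, max_calls=2)` gets both without touching the model.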

Use-case alignment: when AI models are better

Choose raw AI models when you need deep customization, niche domain knowledge, or novel capabilities that off-the-shelf tools don't support. For research projects or experimental prototypes, raw models let you fine-tune parameters, design custom architectures, and push the state of the art. If you have high-quality data and the necessary compute, training or adapting a model can yield superior accuracy on specialized tasks such as medical imaging, legal document analysis, or language understanding that depends on domain-specific jargon. This path shines when you want to explore new behaviors, build bespoke evaluation metrics, or contribute to research communities. However, greater control brings greater responsibility: you must design robust data governance, monitor for bias, and maintain model versioning. In many organizations, researchers maintain a private model while delegating routine deployment to AI tools for scalability. The takeaway is that raw models offer maximum flexibility and performance potential, but require more in-house expertise and ongoing maintenance.
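One example of a bespoke evaluation metric is a cost-weighted error that penalizes missed positives more heavily than false alarms, as a medical-imaging team might want. The sketch below is illustrative: the function name and the default costs (`fn_cost=5.0`, `fp_cost=1.0`) are assumptions, not recommended values, and labels are assumed to be binary (1 = positive).

```python
def cost_weighted_error(y_true, y_pred, fn_cost=5.0, fp_cost=1.0):
    """Average misclassification cost where missed positives cost more.

    fn_cost and fp_cost are illustrative placeholders, not recommended values.
    """
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("need at least one example")
    total = 0.0
    for truth, pred in zip(y_true, y_pred):
        if truth == 1 and pred == 0:
            total += fn_cost  # false negative, e.g. a missed finding
        elif truth == 0 and pred == 1:
            total += fp_cost  # false positive, e.g. an unnecessary follow-up
    return total / len(y_true)
```

For `y_true = [1, 0, 1, 0]` and `y_pred = [0, 0, 1, 1]`, there is one false negative (cost 5) and one false positive (cost 1), so the average cost is 6 / 4 = 1.5.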

Use-case alignment: when an AI tool is better

Opt for an AI tool when speed, risk management, and cross-team collaboration matter most. Prebuilt AI tools provide ready-to-use workflows, dashboards, and governance features that help teams deliver value quickly without building everything from scratch. For product teams, startups, and educational settings, they shorten time-to-value with plug-and-play integrations, templates, and guided experimentation. They are often preferable when regulations demand auditable usage, when data sources are heterogeneous, or when teams lack machine-learning specialists. AI tools can also enforce privacy and security policies through built-in access controls, data redaction, and usage monitoring. The caveat is that tooling typically trades some customization and novelty for reliability and scale, and vendors may change features or pricing on their own schedule. The practical recommendation is to start with an AI tool to validate a concept, then layer in custom models later if you need deeper optimization.
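One way to follow the "start with a tool, add custom models later" advice is to hide the backend behind a small interface so the swap does not ripple through application code. This Python sketch assumes a ticket-triage use case; `Classifier`, `VendorToolClassifier`, and `CustomModelClassifier` are hypothetical, and the keyword logic stands in for real vendor and model calls.

```python
from typing import Protocol


class Classifier(Protocol):
    def classify(self, text: str) -> str: ...


class VendorToolClassifier:
    """Hypothetical wrapper around a vendor AI tool used for the first prototype."""

    def classify(self, text: str) -> str:
        # Placeholder logic standing in for a call to the vendor's API.
        return "urgent" if "asap" in text.lower() else "normal"


class CustomModelClassifier:
    """Hypothetical in-house model introduced later for deeper optimization."""

    def __init__(self, keywords: set[str]):
        self.keywords = {k.lower() for k in keywords}

    def classify(self, text: str) -> str:
        words = set(text.lower().split())
        return "urgent" if words & self.keywords else "normal"


def route_ticket(classifier: Classifier, text: str) -> str:
    """Application code depends only on the Classifier interface."""
    return f"queue:{classifier.classify(text)}"
```

Because `route_ticket` accepts anything with a `classify` method, replacing `VendorToolClassifier()` with `CustomModelClassifier({"outage", "asap"})` is a one-line change at the call site.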

Evaluation criteria: cost, time-to-value, data needs, governance

When evaluating AI models and AI tools, establish common criteria that apply to both paths. Cost is often the most visible factor: AI tools may require subscription fees, whereas raw models incur compute and data pipeline costs; total cost of ownership depends on usage, scale, and maintenance. Time-to-value matters too: AI tools typically deliver results faster by reducing development time, while custom models pay off later as they mature. Data needs differ starkly: models require high-quality labeled data for training, while tools may operate on existing datasets and offer data connectors or synthetic data support. Governance and compliance are critical in regulated domains; built-in audit trails, access controls, and policy enforcement in AI tools help manage risk, while models demand rigorous deployment pipelines, monitoring, and impact assessments. Finally, consider long-term strategy: a blended approach, in which core models feed a managed toolchain, can balance speed, control, and scalability. The key is to align the selection with organizational goals, not just short-term wins.
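Total cost of ownership can be compared with simple arithmetic once you have estimates. The sketch below assumes a cost model of an upfront cost plus a fixed monthly cost plus usage billed per 1,000 requests; the function names and every number in the usage note are hypothetical placeholders, not real prices.

```python
def total_cost(months, upfront, monthly_fixed, per_1k_requests, requests_per_month):
    """Cumulative cost after `months`; all inputs are hypothetical placeholders."""
    usage = per_1k_requests * requests_per_month / 1000
    return upfront + months * (monthly_fixed + usage)


def breakeven_month(tool, custom, horizon=60):
    """First month at which the custom path is no more expensive than the tool path.

    Returns None if the custom path never catches up within `horizon` months.
    """
    for month in range(1, horizon + 1):
        if total_cost(month, **custom) <= total_cost(month, **tool):
            return month
    return None
```

With a hypothetical tool path at 2,000 per month plus 2.0 per 1,000 requests, and a custom path at 30,000 upfront plus 1,500 per month plus 0.5 per 1,000 requests, both at 500,000 requests per month, the tool costs 3,000 per month and the custom path 1,750, so the custom path breaks even in month 24 (30,000 / 1,250).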

Implementation and governance considerations

Implementation decisions for AI models and AI tools affect team dynamics and deployment safety. For AI models, plan for data preparation, feature engineering, model selection, and continuous evaluation. You will need MLOps practices: versioning, testing, monitoring, and rollback mechanisms. Governance should cover bias detection, explainability, and data provenance. For AI tools, focus on integration architecture, API compatibility, and user roles. Governance here often centers on access control, usage quotas, and policy-driven automation. Both paths benefit from risk assessments, privacy impact analyses, and clear ownership. A practical approach is to run a pilot in a contained environment, measure impact against predefined KPIs, and document assumptions. Over time, you can migrate from exploratory models to tool-enhanced workflows or, conversely, add bespoke models to augment the toolchain. The overarching rule is to maintain auditable trails, maintainable code, and transparent decision logic, regardless of the path chosen.
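The pilot step (measuring impact against predefined KPIs) can be written as an explicit promotion gate, which also gives you a documented rollback trigger. This is a minimal sketch; `KPI`, `pilot_decision`, and the KPI names and thresholds in the usage note are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KPI:
    name: str
    threshold: float
    higher_is_better: bool = True

    def passes(self, value: float) -> bool:
        # Compare against the threshold in the direction that counts as "good".
        return value >= self.threshold if self.higher_is_better else value <= self.threshold


def pilot_decision(kpis, observed):
    """Return ("promote", []) if every KPI passes, else ("rollback", failed_names).

    A KPI missing from `observed` counts as a failure, so gaps in measurement
    cannot silently promote a pilot.
    """
    failed = [k.name for k in kpis if k.name not in observed or not k.passes(observed[k.name])]
    return ("promote", []) if not failed else ("rollback", failed)
```

For example, with `KPI("accuracy", 0.90)` and `KPI("p95_latency_ms", 500, higher_is_better=False)`, a pilot measuring 0.93 accuracy but 610 ms latency returns `("rollback", ["p95_latency_ms"])`.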

Security, privacy, and compliance

Security and privacy concerns loom large when choosing between AI models and AI tools. Models may expose sensitive data through training, fine-tuning, or inference, so robust data governance and anonymization become essential. Tools, by design, often implement access controls, role-based permissions, and encryption in transit and at rest. However, misconfigured tools can cause data leakage, shadow datasets, or uncontrolled feature sharing. Compliance considerations include data residency, retention policies, and auditability of model outputs and tool actions. In regulated sectors, define clear data-handling agreements, conduct vendor due diligence, and monitor continuously. A hybrid approach, using AI tools for governance-first workflows while hosting sensitive models in a controlled environment, can balance speed with security. The AI Tool Resources team encourages teams to document security SLAs, validate data lineage, and maintain a risk register throughout the project lifecycle.
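Redacting obvious identifiers before text reaches a model or a third-party tool is one concrete anonymization step. The sketch below uses Python's standard `re` module with two deliberately simple patterns (email addresses and US-style phone numbers); it is an illustration under those assumptions, and production PII detection needs much broader coverage.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each match with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

For instance, `redact("Contact jane.doe@example.com or 555-123-4567.")` returns `"Contact [EMAIL] or [PHONE]."`.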

Performance, reliability, and customization

Performance characteristics differ between raw-model and AI tool setups. Raw models can achieve high performance on specialized tasks but require careful optimization, hardware planning, and hyperparameter tuning. AI tools offer consistent performance with vetted configurations, but customization is usually limited to the options and templated modules they provide. Reliability hinges on monitoring, testing, and fault-handling procedures; for mission-critical systems, define service-level agreements, redundancy, and graceful-degradation paths. Customization is where the trade-off shows most clearly: models give full control over architecture and training data, while tools offer guided customization through presets, templates, and feature toggles. A blended approach (deploying a solid base tool while experimenting with targeted model enhancements) can deliver robust performance with manageable risk. The takeaway is to balance ambition with discipline: optimize the core pipeline, monitor drift, and be prepared to roll back changes that degrade reliability.
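Drift monitoring is often implemented with the Population Stability Index (PSI), which compares the binned distribution of a feature or score at training time with what the system sees in production: PSI is the sum over bins of (actual − expected) × ln(actual / expected). This Python sketch assumes the data has already been binned into proportions; the 0.25 threshold is a commonly used heuristic rather than a universal rule, so tune it for your data.

```python
import math


def psi(expected, actual, eps=1e-6):
    """Population Stability Index over pre-binned proportions.

    expected/actual: lists of bin proportions (each summing to about 1).
    eps replaces empty bins so the logarithm stays defined.
    """
    if len(expected) != len(actual):
        raise ValueError("bin counts must match")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


def drift_detected(expected, actual, threshold=0.25):
    """Flag drift when PSI exceeds the (assumed, tunable) threshold."""
    return psi(expected, actual) > threshold
```

A flagged shift is a signal to investigate or roll back, not proof that accuracy has dropped; pairing PSI with the pilot KPIs keeps that decision grounded.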

Data management and provenance for AI models and AI tools

Data is the lifeblood of both paths, but handling it differs. Models rely on curated training data, labeling pipelines, and version-controlled datasets to ensure reproducibility. Provenance matters: track where data comes from, how it is transformed, and how decisions are validated. Tools often abstract data handling behind connectors, templates, and dashboards, but you still need to audit data sources, transformation rules, and privacy safeguards. AI Tool Resources analysis notes that teams that document data lineage and maintain a data catalog tend to achieve better governance and reproducibility, regardless of the route chosen. In practice, establish data contracts with teams, implement data quality checks, and maintain clear ownership for data assets. For both models and tools, consider synthetic data generation as a supplement for testing and benchmarking.
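Lineage and quality checks can start small. The sketch below, assuming in-memory rows of dictionaries, records a dataset's source, a content hash that serves as a version ID, and the list of transformations applied, plus a required-field check. `DatasetVersion`, `quality_issues`, and the `crm_export` source name in the usage note are hypothetical, not part of a data-catalog product.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class DatasetVersion:
    """Hypothetical lineage record: where data came from and how it changed."""
    source: str
    rows: list
    transforms: list = field(default_factory=list)

    def fingerprint(self) -> str:
        # Content hash: identical rows always yield the same version ID.
        payload = json.dumps(self.rows, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]

    def apply(self, name, fn):
        """Apply a row-level transformation and record it, producing a new version."""
        new_rows = [fn(r) for r in self.rows]
        return DatasetVersion(self.source, new_rows, self.transforms + [name])


def quality_issues(rows, required):
    """Return (row_index, field) pairs where a required field is missing or empty."""
    return [(i, f) for i, r in enumerate(rows) for f in required if r.get(f) in (None, "")]
```

For example, applying a `"lowercase_email"` transform to a `crm_export` dataset yields a new version whose `transforms` list records that step and whose fingerprint differs from the original, while `quality_issues` reports any row with a blank email.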

Vendor landscape and risk management

The market for AI models and AI tools is fragmented, with many vendors offering either raw models or integrated platforms. Risk management includes assessing vendor stability, update cadence, and data-security certifications. For AI models, risk is tied to licensing terms and training-data provenance. For AI tools, risk is tied to vendor roadmaps, pricing volatility, and support guarantees. Diversification (using multiple vendors for different tasks) can reduce risk but increases integration complexity. The AI Tool Resources team recommends mapping risk alongside value: define what happens if a vendor experiences outages, pricing changes, or policy updates. Ensure you have contingency plans, backups, and clear exit strategies. Best practices emphasize transparent governance, cross-functional stewardship, and proactive monitoring of vendor health signals.
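A contingency plan for provider outages can be as simple as an ordered fallback list. This Python sketch assumes each provider is wrapped as a function that takes a prompt and returns text; `call_with_fallback`, `AllProvidersFailed`, and any provider names are hypothetical, and a real integration would catch each vendor's specific error types rather than every exception.

```python
from typing import Callable, Sequence


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has failed."""


def call_with_fallback(providers: Sequence[tuple[str, Callable[[str], str]]], prompt: str):
    """Try providers in order; return (provider_name, output) from the first that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # in practice, catch the vendor's specific error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))
```

Returning the provider name alongside the output makes it easy to log how often the fallback path is used, which is itself a useful vendor health signal.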

Practical decision framework: step-by-step checklist

Use this checklist to decide between an AI model and an AI tool for a given project:

- Define the objective: is it a research-grade capability or a production-ready workflow?

- Assess data readiness: do you have high-quality data for training, or will you rely on existing data and connectors?

- Evaluate time-to-value: is speed a priority or is custom optimization worth the extra effort?

- Governance and risk: what are regulatory requirements and audit needs?

- Resource availability: do you have ML engineers and data scientists, or is a cross-functional team sufficient?

- Cost and scalability: what is the total cost of ownership and how will it scale?

- Prototyping path: start with a tool for rapid validation, then introduce models if needed.

- Exit strategy: plan for data retention, model versioning, and vendor independence.

In many cases, a hybrid approach, using AI tools for governance and rapid delivery while integrating bespoke models where beneficial, offers the best balance.
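The checklist can also be encoded as a rough scoring function to make a team's reasoning explicit and repeatable. The sketch below covers five of the checklist questions with equal weights, which is an assumption for illustration; `ProjectProfile` and `recommend` are hypothetical, and ties default to an AI tool, matching the advice to prototype with a tool first.

```python
from dataclasses import dataclass


@dataclass
class ProjectProfile:
    """Answers to the checklist; field names are hypothetical."""
    research_grade: bool      # does the objective need a novel capability?
    has_training_data: bool   # is high-quality labeled data available?
    speed_is_priority: bool   # is time-to-value the main driver?
    strict_audit_needs: bool  # are there regulatory or audit requirements?
    has_ml_team: bool         # are ML engineers and data scientists on staff?


def recommend(p: ProjectProfile) -> str:
    # Equal weights are an illustrative assumption, not a calibrated model.
    model_points = sum([p.research_grade, p.has_training_data, p.has_ml_team])
    tool_points = sum([p.speed_is_priority, p.strict_audit_needs, not p.has_ml_team])
    if model_points >= 2 and tool_points >= 2:
        return "hybrid"
    # Ties fall through to "AI tool", the suggested starting point.
    return "AI model" if model_points > tool_points else "AI tool"
```

A research team with labeled data and ML staff but no pressing deadline scores toward "AI model"; the same team under strict audit requirements and time pressure lands on "hybrid".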

Authority sources and further reading

For rigorous perspectives, consult official guidelines and major publications:

- NIST: Artificial Intelligence (https://www.nist.gov/topics/artificial-intelligence)

- IEEE: AI initiatives and standards (https://www.ieee.org/initiatives/artificial-intelligence)

- Google AI Research (https://ai.google/research/)

Additional notes:

- The AI Tool Resources team uses these sources to inform the framework described above.

- For more practical tooling, explore vendor documentation and community benchmarks.

Comparison

| Feature | AI (raw models) | AI tool (integrated tooling) |
|---|---|---|
| Definition | Raw model or algorithm, independent of any product environment | Packaged solution with UI, workflows, and governance |
| Focus | Capability and performance optimization | Usability, deployment, and governance |
| Customization | High flexibility, domain-specific tuning | Limited customization, template-driven |
| Data requirements | High-quality labeled data for training | Existing data via connectors; synthetic data options |
| Cost drivers | Compute, data storage, and maintenance (varies by scale) | Subscription fees, cloud credits, and support costs |
| Implementation effort | High: setup, training, monitoring | Medium: integration, templates, dashboards |
| Maintenance | Ongoing retraining and drift monitoring | Regular updates, policy enforcement, user management |
| Best for | Researchers, specialized domains, novelty-seeking projects | Teams needing rapid delivery, governance, and scale |

Upsides of AI tools

- Faster time-to-value with ready-made tooling

- Better governance and compliance through built-in controls

- Lower barrier to cross-functional collaboration

- Scales across teams with templates and dashboards

- Clear ownership and accountability when using tools

Weaknesses of AI tools

- Potential limitations on customization and novelty

- Vendor pricing changes can affect long-term costs

- Possible reliance on external systems and connectivity

- Tool-specific biases or constraints may materialize

AI tools are typically the better starting point for most teams; AI models excel when deep customization and domain-specific optimization are required.

For rapid delivery and governance, AI tools often win. When deep customization and specialized accuracy matter, bespoke AI models may justify the extra effort and cost. A blended approach can offer the best balance.

FAQ

What does "AI vs AI tool" mean?

The comparison distinguishes raw AI models from packaged AI tooling. Models provide core capabilities; tools deliver ready-made workflows, interfaces, and governance. The choice hinges on objectives, data, and team capability.

The comparison distinguishes models from tooling; choose based on goals, data, and team capability.

Can I combine both approaches effectively?

Yes. A common pattern is to run a core model in-house or in a controlled environment while exposing its capabilities through an AI tool. This balances customization with governance and scalability.

Yes—start with a tool for governance and speed, then layer in models where needed.

Which path is faster to implement?

AI tools generally offer faster onboarding and setup due to templates and presets. Custom models take longer but can deliver tailored performance for niche tasks.

Tools are usually faster; models take longer but can be highly customized.

How do governance and compliance differ?

Tools provide built-in governance features (access control, auditing). Models require explicit data governance, monitoring, and policy enforcement across the deployment lifecycle.

Tools help with governance; models require rigorous oversight.

What data considerations apply?

Models need high-quality training data and versioned datasets. Tools leverage existing data sources and connectors, sometimes with synthetic data support.

Data strategy is central: training data for models; connectors and policies for tools.

Are there common myths to avoid?

A common myth is that one path fits all. In reality, many teams benefit from a hybrid approach that leverages the strengths of both models and tools.

Don't assume one path is best; hybrid setups often work best.

Key Takeaways

- Define the objective first, then choose a path

- Assess data readiness before committing

- Prefer AI tools for quick value and governance

- Invest in a hybrid setup when both speed and customization are needed

- Pilot early and document decisions for future migration