How Long Have AI Tools Been Around: A Historical Overview

Explore the history of AI tools from the 1950s origins to today’s advanced tooling, frameworks, and open practices. A data-driven timeline with milestones and practical guidance for learners and researchers.

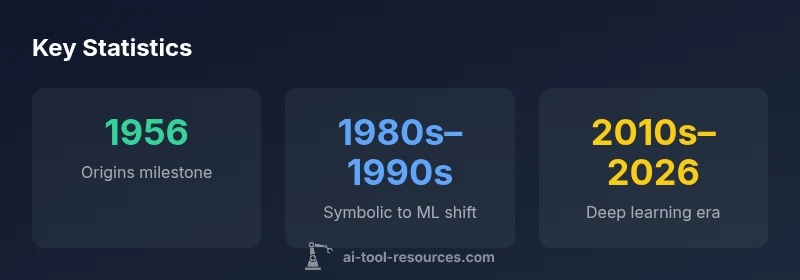

AI tools have existed since the 1950s, beginning with foundational programs and the Dartmouth workshop, then evolving through symbolic AI, expert systems, and machine learning. By the 2010s, deep learning and modern tooling transformed accessibility, enabling researchers and developers to build complex models with increasing speed and scale. In short, how long have AI tools been around? Roughly seven decades of steady evolution, punctuated by rapid recent growth.

Origins of AI Tools

The answer to how long AI tools have been around starts in the 1950s, when pioneers convened at the 1956 Dartmouth workshop and theoretical work began to define machine intelligence. Early programs like the Logic Theorist demonstrated that machines could manipulate symbols to solve problems, while contemporaries explored programming languages that would later underpin broader AI development. The period also saw the emergence of foundational languages such as LISP (1958), which became the lingua franca of AI research for years. This era established the idea that machines could perform tasks that resembled human reasoning, setting the stage for decades of experimentation and refinement. For developers and researchers, it marked the first generation of AI tooling: interpretable, rule-based systems that required careful knowledge engineering. The landscape started to shift toward practical applications as computing power expanded and data began to accumulate, enabling more ambitious experiments beyond toy problems.

The Symbolic Era and Expert Systems

From the late 1970s through the 1990s, symbolic AI dominated the field. Researchers built expert systems that encoded human expertise into production rules and knowledge bases. Tools like rule engines and inference engines allowed domains such as medicine and engineering to benefit from machine-assisted decision support. The strength of this era lay in its interpretability and explicit reasoning paths. However, the approach relied heavily on hand-authored knowledge, which limited scalability and adaptability to new problem domains. As industries demanded more autonomous problem solving, the limitations of symbolic AI highlighted the need for data-driven methods, while proving that AI tooling could deliver tangible value when aligned with real-world tasks.
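To make the idea of production rules and an inference engine concrete, here is a minimal sketch of forward chaining in Python. It is an illustration only, not a reconstruction of any historical system: the `forward_chain` function, the `RULES` list, and the fact names are all hypothetical and were invented for this example.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule is a pair: (set of premises, conclusion).
Rule = Tuple[FrozenSet[str], str]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Apply the rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are already known.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical toy knowledge base (not real medical guidance).
RULES: List[Rule] = [
    (frozenset({"fever", "cough"}), "possible_flu"),
    (frozenset({"possible_flu", "short_of_breath"}), "refer_to_doctor"),
]

if __name__ == "__main__":
    derived = forward_chain({"fever", "cough", "short_of_breath"}, RULES)
    print(sorted(derived))
```

Every conclusion can be traced back to the rules that produced it, which is the interpretability the era was known for. The cost is equally visible: each rule has to be written by hand, so covering a new domain means authoring a new knowledge base.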

The Rise of Machine Learning and Statistical Methods

The late 1990s and early 2000s witnessed a shift from hand-authored rules to data-driven learning. Statistical machine learning methods, such as support vector machines, decision trees, and ensemble techniques, began delivering more robust generalization across varied tasks. Early open-source machine learning libraries made experimentation more accessible, enabling researchers to prototype models without writing bespoke software from scratch. This period demonstrated that AI tools could learn from data rather than being explicitly programmed for every scenario, a paradigm that would accelerate with larger datasets and improved optimization techniques. The character of progress changed: AI tooling started to look less like a collection of programs and more like an ecosystem of reusable components.
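The contrast between a hand-authored rule and a rule learned from data can be sketched with a one-feature decision stump, the simplest building block of a decision tree. This is a simplified illustration in plain Python, not the algorithm of any particular library. The function names and the toy dataset are hypothetical, and the search only tries thresholds that appear in the training data.

```python
from typing import List, Tuple


def fit_stump(xs: List[float], ys: List[int]) -> Tuple[float, int]:
    """Find the threshold t that minimizes training errors for the
    rule "predict 1 when x > t". Returns (threshold, error_count)."""
    candidates = sorted(set(xs))
    best_threshold = candidates[0]
    best_errors = len(xs) + 1
    for t in candidates:
        # Count examples where the rule disagrees with the label.
        errors = sum((x > t) != bool(y) for x, y in zip(xs, ys))
        if errors < best_errors:
            best_threshold, best_errors = t, errors
    return best_threshold, best_errors


def predict(threshold: float, x: float) -> int:
    """Apply the learned rule to a new value."""
    return int(x > threshold)


# Hypothetical toy data: hours studied and whether the exam was passed.
hours = [1.0, 2.0, 3.0, 6.0, 7.0, 8.0]
passed = [0, 0, 0, 1, 1, 1]

if __name__ == "__main__":
    threshold, errors = fit_stump(hours, passed)
    print(threshold, errors)
    print(predict(threshold, 5.0))
```

Nobody wrote the cutoff by hand here. It is chosen from the examples, so feeding in different data yields a different rule without any change to the code.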

Deep Learning Breakthroughs and the Modern Era

The 2010s brought a renaissance for AI tooling due to deep learning. Breakthroughs in neural networks, large-scale image and language processing, and the use of GPUs dramatically accelerated training. Frameworks such as TensorFlow and PyTorch lowered barriers to entry, enabling researchers and developers to implement complex architectures with relative ease. Large language models and transformer-based approaches further democratized AI tooling, enabling rapid prototyping, experimentation, and deployment. This era defined today’s toolkit: modular, scalable, and increasingly accessible to a broad audience of students and professionals. As a result, the horizon of what AI tools can accomplish expanded dramatically.

Tools, Platforms, and the Democratization of AI

With the advent of cloud platforms, AutoML, and open-source communities, AI tooling shifted from specialized laboratories to broad ecosystems. Developers could leverage pre-trained models, managed infrastructure, and collaborative notebooks to accelerate iteration. The 2020s have seen a surge in accessible tooling for education and industry alike, with emphasis on responsible AI, reproducibility, and governance. This transition to democratized AI tooling has not only broadened who can build and deploy models but also intensified the need for best practices, documentation, and community-driven standards. In this dynamic landscape, historical awareness matters: knowing how long AI tools have been around helps frame today’s rapid adoption against a longer arc of innovation.

How to Learn and Adopt AI Tools Across Generations

Practical learning paths now reflect a layered approach. Beginners often start with introductory tutorials and high-level APIs, then progressively engage with core ML concepts, data engineering, and model deployment. For researchers, a toolbox of libraries, notebooks, and experiment-tracking platforms supports rigorous experimentation and reproducibility. As with any evolving field, staying current requires following reputable sources, engaging with community forums, and participating in open challenges. For those curious about the arc of AI tooling, understanding the timeline—from early symbolic systems to modern, scalable tooling—helps set realistic learning goals and expectations.

The 2020s: Democratization and Responsible Tooling

The current era is characterized by widespread access to AI tools, rapid prototyping, and a renewed focus on governance and ethics. Open-source communities, cloud offerings, and user-friendly interfaces lower barriers for students and professionals alike. Responsible AI practices, such as bias auditing, explainability, and robust evaluation, are now integral parts of tooling. The pace of development continues to accelerate as researchers push the boundaries of what AI tools can achieve, a reminder that contemporary practice rests on roughly seven decades of earlier work.

Looking Ahead: Lessons from the Timeline

A thorough look at the timeline reveals patterns: early theory matures into practical engineering, which then scales with data, compute, and community collaboration. For developers and researchers, this means prioritizing modular tooling, reproducible workflows, and cross-disciplinary collaboration. It also means recognizing the continued importance of safety, ethics, and governance as AI tools proliferate across sectors. The historical perspective reinforces that progress in AI tooling is incremental, cumulative, and driven by the needs of users—from the laboratory to production environments.

Timeline of AI tooling evolution from origins to modern platforms

| Era | Key Milestones | Representative Tools | Impact on AI Tools |
|---|---|---|---|
| Origins (1950s–1960s) | Dartmouth workshop; Logic Theorist; symbolic reasoning | Logic Theorist, LISP | Established AI as a research discipline and a basis for tooling |
| Symbolic AI & Expert Systems (late 1970s–1990s) | Rule-based systems; knowledge bases | CLIPS, expert-system shells | Demonstrated value in decision support but limited by scalability |
| Machine Learning Emergence (1990s–2000s) | Statistical learning; data-driven models | scikit-learn, Weka (early) | Shift to data and generalization; broader accessibility |
| Deep Learning Breakthroughs (2010s–present) | Neural networks; GPUs; large datasets | TensorFlow, PyTorch | Dramatic performance gains; tooling becomes mainstream |
| Modern Tooling & Platforms (2020s–present) | Cloud AI; MLOps; LLMs | OpenAI tools, LangChain, cloud services | Rapid iteration; scalable deployment; governance concerns |

FAQ

When did AI tools first appear?

AI tools appeared in the 1950s with foundational programs and the Dartmouth Conference, laying the groundwork for the field. Early milestones included the Logic Theorist and language innovations like LISP.

What was the first notable AI tool?

The Logic Theorist (1956) is often cited as one of the first notable AI programs, demonstrating symbolic reasoning in mathematical theorem proving.

Why did the symbolic AI era give way to ML methods?

Symbolic AI faced scalability and adaptability challenges as real-world tasks grew in complexity. Data-driven machine learning began delivering better generalization as data and compute grew.

Are AI tools still expanding and evolving?

Yes. The 2010s onward saw deep learning, transformers, and large-scale tooling expand rapidly, with ongoing advances in models, frameworks, and deployment practices.

How should a beginner get started with AI tools?

Begin with high-level tutorials to learn fundamentals, then progress to practical experiments using mainstream libraries and notebooks, focusing on data handling, model building, and evaluation.

What is a responsible approach to AI tooling in 2026?

Adopt governance, bias auditing, explainability, and reproducible workflows to ensure safe and trustworthy deployments across domains.

“AI tools have evolved from theoretical concepts into practical engineering platforms that empower researchers and developers today.”

Key Takeaways

- AI tooling evolves from theory to practical platforms

- Data and compute power accelerate tooling adoption

- Open-source frameworks democratize access

- Governance and ethics shape modern AI tool use

- Continuous learning is essential for practitioners