Who Makes AI Tools? Understanding the AI Tools Ecosystem in 2026

Discover who creates AI tools, why no single company dominates, and how to assess tool origins. A data-driven look at the AI tool landscape for developers, researchers, and students in 2026.

No single company makes AI tools. The ecosystem is spread across large cloud providers, independent startups, research labs, and open-source communities. Each contributor focuses on a different niche (APIs, platforms, or on-prem solutions), so organizations rely on a mosaic of tools rather than a single vendor.

The Ecosystem Behind AI Tools

The question of who makes AI tools is better answered by describing the ecosystem than by naming a single producer. In 2026, AI tooling comes from a spectrum of actors: large cloud platform providers that offer AI APIs and tooling suites, independent startups delivering niche capabilities, research labs releasing experimental models, and open-source communities that contribute reusable components. Each group contributes distinct value, from scalability and governance to auditable data pipelines and customization. For developers, researchers, and students, understanding this mix is essential to selecting tools that fit the workflow rather than chasing a brand name.

- Large-scale platforms provide ready-to-use building blocks, reliable uptime, and strong security posture, but may tie you to their data terms and pricing.

- Startups often ship domain-specific features, rapid iteration, and flexible licensing, yet may have shorter support horizons or less formal roadmaps.

- Open-source communities offer transparency and configurability but require more in-house expertise to assemble and operate.

- Research lab spin-offs can introduce cutting-edge capabilities, though their products may evolve quickly or lack mature commercial support.

In practice, the best operating model usually blends components from several sources. For teams that want reproducibility, documenting data provenance and model lineage becomes as important as the underlying algorithm itself. This mosaic approach helps balance speed, control, and long-term maintainability, whether the work is an experiment, an education project, or a production system.

How Tools Are Produced: From Idea to API

In most organizations, AI tools begin as research ideas or as customer problems identified by users or product teams. A typical development path includes discovery, prototyping, validation, and then scale-up. The "productionization" phase often involves standardizing interfaces (APIs and SDKs), implementing MLOps practices, and aligning with data governance policies. The core team may include data scientists, software engineers, and platform engineers who decide where the tool lives: in the cloud, on-premises, or as a hybrid service. The result is a layered product: a core model, an access point (API or UI), and a governance layer that enforces licensing, privacy, and security.
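The three layers described above can be sketched in a few lines. This is a minimal illustration, not a real product design: `GovernanceLayer`, `ToolAccessPoint`, `toy_model`, and the blocked-term rule are all hypothetical names and stand-ins chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GovernanceLayer:
    """Hypothetical policy gate: checks licensing and a toy privacy rule."""
    licensed_users: set[str] = field(default_factory=set)
    blocked_terms: tuple[str, ...] = ("ssn", "password")  # stand-in privacy rule

    def check(self, user: str, prompt: str) -> None:
        if user not in self.licensed_users:
            raise PermissionError(f"{user} has no license for this tool")
        lowered = prompt.lower()
        if any(term in lowered for term in self.blocked_terms):
            raise ValueError("prompt appears to contain restricted data")


@dataclass
class ToolAccessPoint:
    """Hypothetical access point: what an API route or UI handler would call."""
    core_model: Callable[[str], str]
    governance: GovernanceLayer

    def handle(self, user: str, prompt: str) -> str:
        self.governance.check(user, prompt)  # governance runs before the model
        return self.core_model(prompt)


def toy_model(prompt: str) -> str:
    """Placeholder for the core model; it only uppercases the input."""
    return prompt.upper()


tool = ToolAccessPoint(toy_model, GovernanceLayer(licensed_users={"alice"}))
print(tool.handle("alice", "summarize this report"))
```

Keeping the governance check separate from the model makes it possible to swap the model, or move it between cloud and on-prem, without rewriting the policy code.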

Important design choices shape who owns the tool and how it travels across environments. If a company relies on a hosted API, latency, reliability, and data handling become critical trade-offs. If a tool is packaged for on-prem use, deployment automation, version control, and customer-specific customization take center stage. Open-source components are common in the stack, but they require careful dependency management and compliance checks. Across all models, clear documentation, a well-defined upgrade path, and transparent licensing help reduce risk and increase trust. The lifecycle is iterative: feedback from users informs improvements, which then feed back into the development pipeline, ensuring that the tool evolves in ways that support study, experimentation, and real-world deployment.
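As one example of the compliance checks mentioned above, the sketch below flags dependencies whose declared license is not on an allowlist. The allowlist and the sample package names are hypothetical. `installed_licenses` reads the `License` metadata field through the standard-library `importlib.metadata` module, which many packages leave empty or fill inconsistently, so treat its output as a starting point for review rather than a verdict.

```python
from importlib import metadata

# Hypothetical allowlist; a real one would come from a legal or compliance team.
ALLOWED_LICENSES = {"MIT", "BSD-3-Clause", "Apache-2.0"}


def flag_licenses(declared: dict[str, str], allowed: set[str]) -> dict[str, str]:
    """Return packages whose declared license is missing or not on the allowlist."""
    return {
        name: license_name or "UNKNOWN"
        for name, license_name in declared.items()
        if license_name not in allowed
    }


def installed_licenses() -> dict[str, str]:
    """Collect the declared 'License' field for distributions in this environment."""
    result = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:
            result[name] = dist.metadata.get("License") or ""
    return result


# Made-up package names, used only to show the shape of the output.
sample = {"fast-tokenizer": "MIT", "vision-kit": "GPL-3.0", "mystery-lib": ""}
print(flag_licenses(sample, ALLOWED_LICENSES))
```

To check a real environment, pass `installed_licenses()` as the first argument instead of `sample`.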

The Roles of Different Players

No single player dominates the AI tooling market; it is better understood in terms of roles than brands. Large cloud providers tend to act as platform orchestrators, supplying policy controls, scalable compute, and broad AI services. They enable rapid experimentation, but customers may face vendor lock-in risk and license constraints. Independent startups fill gaps the big players do not address, offering verticalized capabilities such as healthcare analytics, code generation, or scientific computing. Their strengths include user-centric design, faster iteration cycles, and flexible licensing, but sustainability and long-term support can be uneven, so due diligence matters.

Academic and research labs contribute models and datasets that push the boundaries of what AI tools can do; many of these efforts feed into open-source projects or commercial products. Open-source communities produce libraries, tools, and reference implementations that help researchers reproduce results and tailor solutions. Enterprises often bundle multiple components into suites, adding governance, security, and enterprise-grade support to make the combination palatable for regulated industries. In practice, most organizations end up mixing elements from several players to meet performance, cost, and compliance requirements. The same logic applies to student projects and educational labs, where accessible open-source options pair with cloud credits or sponsored licenses to support learning objectives.

How to Evaluate the Origin and Trust of AI Tools

Evaluating where an AI tool comes from is a matter of governance, transparency, and utility. Start with provenance: who maintains the tool, what licenses apply, and how the data and models were developed. Governance considerations include data handling policies, privacy controls, and the ability to audit behavior and outcomes. Support and roadmaps matter for long-term projects; verify SLAs, update cadences, and the availability of professional services if needed. Security is non-negotiable: check for vulnerability disclosures, secure-by-design principles, and robust authentication and access management. In enterprise settings, encryption and a strong incident response plan are also essential.

Practical steps include requesting a data usage summary, reviewing licensing terms, and testing a pilot in a controlled environment. Ask vendors for third-party attestations, such as independent security assessments or compliance certifications. For open-source tools, examine contributor activity, the governance model, and whether maintainership is clearly defined. Finally, ensure you can reproduce results and verify that data provenance aligns with your own research or regulatory requirements.
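The practical steps above can be tracked as a simple checklist. The sketch below is one possible layout; the class name, field names, and the `open_items` helper are illustrative choices, not a standard.

```python
from dataclasses import dataclass, fields


@dataclass
class OriginChecklist:
    """Illustrative yes/no checks drawn from the steps above."""
    maintainer_identified: bool = False
    license_reviewed: bool = False
    data_usage_summary_received: bool = False
    independent_security_assessment: bool = False
    pilot_completed: bool = False
    results_reproduced: bool = False


def open_items(checklist: OriginChecklist) -> list[str]:
    """List the checks that have not yet been completed."""
    return [f.name for f in fields(checklist) if not getattr(checklist, f.name)]


review = OriginChecklist(maintainer_identified=True, license_reviewed=True)
print(open_items(review))
```

A tool with an empty `open_items` list has passed every check in this sketch; anything left on the list is a question to take back to the vendor or the project's maintainers.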

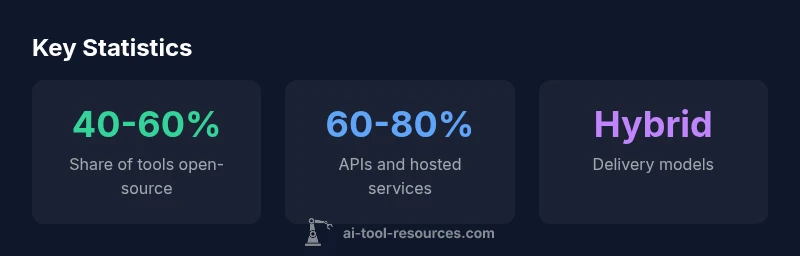

Patterns in Tool Delivery: API, On-Prem, and Open-Source

AI tools today are delivered through three broad patterns that suit different contexts. API-first tools offer rapid experimentation and easy scaling but may impose data-handling constraints and ongoing costs. On-prem or private cloud deployments provide control over data and integration with existing systems, at the cost of higher maintenance and vendor negotiation. Open-source tools enable customization and transparency, yet require in-house expertise to manage dependencies, security, and updates. Many organizations adopt hybrids that mix API access for experimentation with on-prem components for sensitive data or strict governance. The choice depends on factors such as regulatory requirements, latency needs, and internal capabilities. Across all patterns, responsible data stewardship and clear licensing terms help reduce risk and support long-term strategy.
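The trade-offs above can be expressed as a filter over the three patterns. This is a deliberately simplified sketch: the function name and its two yes/no inputs are assumptions made for illustration, and a real decision would also weigh latency, cost, and regulatory detail.

```python
def candidate_patterns(data_must_stay_internal: bool, has_ops_expertise: bool) -> list[str]:
    """Filter the three delivery patterns by two simplified constraints."""
    candidates = ["api", "on-prem", "open-source"]
    if data_must_stay_internal:
        # Hosted APIs may impose data-handling constraints.
        candidates.remove("api")
    if not has_ops_expertise:
        # Self-managed options need in-house maintenance skills.
        candidates = [c for c in candidates if c == "api"]
    return candidates


for internal in (False, True):
    for expertise in (False, True):
        print(internal, expertise, candidate_patterns(internal, expertise))
```

An empty result, which occurs when data must stay internal but no operations expertise is available, signals a conflict to resolve before choosing a tool, for example by building expertise or negotiating data terms with a vendor.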

Practical Steps for Researchers and Developers

If you are a developer, researcher, or student evaluating AI tools, start with a map of your requirements: data sensitivity, latency, interoperability, and total cost of ownership. Catalog potential sources by role: cloud platforms for scale, startups for niche capabilities, and open-source projects for transparency. Run pilot projects to compare API reliability, documentation quality, and licensing terms. Build a governance plan that includes data provenance, model versioning, and an upgrade strategy. Finally, document your toolchain so others can reproduce results, audit decisions, and adapt to future research questions.
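One lightweight way to document a toolchain is a manifest that records each tool's version, source, and license alongside a fingerprint of the dataset used. The sketch below assumes a JSON layout and field names chosen for illustration; the tool entry and dataset bytes in the usage lines are made up.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass
class ToolEntry:
    """One tool in the chain; all fields are free-form strings."""
    name: str
    version: str
    source: str   # e.g. "cloud platform", "startup", "open-source"
    license: str


def dataset_fingerprint(data: bytes) -> str:
    """SHA-256 hex digest, so others can confirm they hold the same data."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(tools: list[ToolEntry], dataset: bytes) -> str:
    """Serialize tool entries and the dataset fingerprint as sorted, indented JSON."""
    manifest = {
        "tools": [asdict(tool) for tool in tools],
        "dataset_sha256": dataset_fingerprint(dataset),
    }
    return json.dumps(manifest, indent=2, sort_keys=True)


# Hypothetical entry and data, used only to show the output format.
tools = [ToolEntry("example-embedder", "1.4.0", "open-source", "Apache-2.0")]
print(build_manifest(tools, b"id,text\n1,hello\n"))
```

Committing this manifest next to the analysis code gives reviewers a record of which tool versions and which data produced a result.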

Patterns in AI tool delivery

| Category | Typical Tool Model | Notes |
|---|---|---|
| Open-source communities | Community-maintained tools | Flexible but patch cadence varies; strong transparency |
| Cloud-based platforms | APIs & hosted services | Fast path to production; vendor trust needed; pay-as-you-go |
| On-prem enterprise suites | Self-hosted solutions | Greater control; higher setup and maintenance costs |
| Specialized tool vendors | Industry-specific tools | Vertical-focused features with supported roadmaps |

FAQ

Which company makes AI tools?

No single company makes AI tools. The market consists of large cloud platforms, independent startups, research labs, and open-source communities that collectively drive tool development.

There isn't one company that makes all AI tools; the ecosystem is diverse.

How can I identify who develops a specific AI tool?

Check the vendor's documentation and licensing terms; for open-source projects, review repository history and contributor activity; contacting sales or support can clarify ownership and support commitments.

Look at the project docs and license; for open-source, review the repo.

Are open-source AI tools considered 'made by a company'?

Open-source tools are typically community-driven. Some projects are sponsored or primarily maintained by a company, so check each project's governance documentation to see who controls maintenance and releases.

Open-source tools are usually community-driven, sometimes company-supported.

What should I look for regarding data provenance and licensing?

Review the data sources, model licenses, terms of use, and whether data handling aligns with your regulatory requirements; ensure clear permission for usage and redistribution.

Check licenses and data provenance before adopting.

Is it feasible to build my own AI toolchain from open-source components?

Yes. Open-source libraries can compose a custom toolchain, but you will need governance, security, and integration expertise to maintain it.

Yes, with the right skills and governance.

“There isn't a single vendor that controls the entire AI tools market; it is a mosaic of providers, platforms, and communities.”

Key Takeaways

- Combine tools from multiple providers to reduce vendor lock-in and tailor the stack to your needs

- Always verify data provenance, licensing, and governance before adoption

- Open-source components boost transparency but require governance and integration

- Choose delivery models (API, on-prem, open-source) based on data sensitivity and latency needs

- Educators, researchers, and developers benefit from hybrid ecosystems and cloud grants