Free AI Tools Compared: A Practical Side-by-Side Guide 2026

A rigorous, objective side-by-side comparison of free AI tools to help developers, researchers, and students choose the right no-cost options. Learn criteria, trade-offs, and best paths for sustainable experimentation.

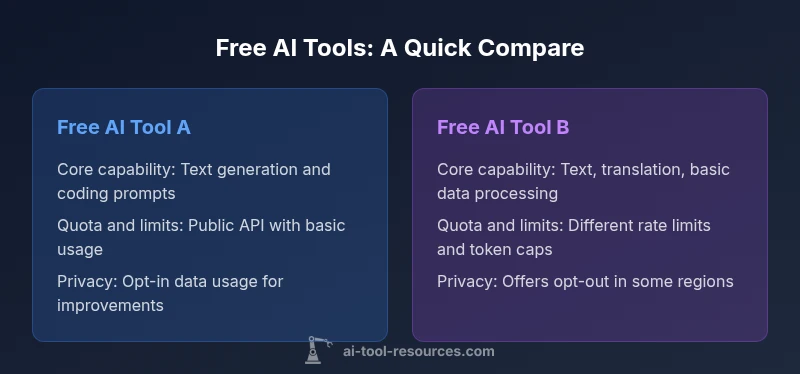

According to AI Tool Resources, when you compare free AI tools, start with capability, limits, and governance. The quick verdict: free tiers vary dramatically in what they offer and how they handle your data. Focus on core features, usage quotas, privacy controls, and upgrade paths to avoid surprises later. This approach helps you choose tools that fit your research or development tasks without committing to a paid plan up front.

Why comparing free AI tools matters

The surge of free AI tools has lowered the barrier to experimentation for developers, researchers, and students. Free tiers empower rapid prototyping, but they also create a landscape where performance, data handling, and licensing differ widely. For teams that rely on consistent outputs, a mismatch between expectations and reality can derail projects, budgets, and timelines. According to AI Tool Resources, free options are often best treated as sandboxes rather than production-grade stacks. Framing a comparison around your concrete use cases (text generation, code assistance, data analysis, multilingual processing) helps you filter out tools that won't scale or won't protect your data. Documenting your evaluation criteria and decisions makes it easier to onboard teammates and revisit the choice as needs evolve. This section sets up a repeatable approach: define must-have capabilities, map quotas to workflow demands, assess privacy controls, and plan for future upgrades. The goal is a transparent, auditable process that can be reproduced across teams and projects, minimizing risk when you rely on no-cost resources for critical work.

What free tiers typically include (and what they don't)

Free tiers usually offer a subset of the full platform's capabilities—enough to prototype ideas or learn how the tool behaves. You will typically see a capped number of API requests or tokens per period, access to a limited set of models, slower response times during peak hours, and basic documentation or community support. In exchange, you may face restrictions like no access to enterprise-grade security features, limited or no private data handling, and a lack of guaranteed uptime. Some providers also restrict usage to non-commercial projects, educational contexts, or a single project. Conversely, a few tools provide generous free quotas aimed at long-term adoption, but they often require you to provide credit card details for verification or to sign up for a waitlist. For researchers, the ability to run experiments across multiple prompts and datasets is valuable, but quotas can make large-scale testing impractical. When evaluating free tiers, map each tool to your primary task: document drafting, code generation, data transformation, or model evaluation. Beware that some vendors offer “free forever” plans with hidden telemetry that may feed back into model training, or with limited privacy controls that do not align with sensitive projects. In short, make a simple two-column matrix: must-have features and nice-to-have features, then test each tool against your use case to see which one truly meets your needs without turning into a paid commitment.
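Mapping quotas to workflow demands is mostly arithmetic. The sketch below assumes you can estimate an average token count per call and that the provider publishes daily request and token caps; it estimates how many days a test plan would take under a given free tier. The `Workload` and `FreeTier` names and all numbers in the example are hypothetical placeholders, not any real provider's limits.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    """Hypothetical test plan: every prompt runs against every dataset."""
    prompts: int
    datasets: int
    runs_per_case: int      # repeats to check consistency
    tokens_per_call: int    # your estimate of input + output tokens per call

@dataclass
class FreeTier:
    """Hypothetical daily caps; copy real values from the provider's docs."""
    name: str
    requests_per_day: int
    tokens_per_day: int

def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division."""
    return -(-a // b)

def days_needed(work: Workload, tier: FreeTier) -> int:
    """Days needed to finish the plan when both daily caps apply."""
    calls = work.prompts * work.datasets * work.runs_per_case
    tokens = calls * work.tokens_per_call
    # Whichever cap is exhausted first determines the schedule.
    return max(ceil_div(calls, tier.requests_per_day),
               ceil_div(tokens, tier.tokens_per_day))

plan = Workload(prompts=50, datasets=4, runs_per_case=3, tokens_per_call=1500)
tier = FreeTier(name="Tool A", requests_per_day=200, tokens_per_day=250_000)
print(days_needed(plan, tier))  # 4
```

In this example the token cap, not the request cap, is the binding limit: 600 calls fit into three days of requests, but 900,000 tokens need four days at 250,000 per day.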

Data privacy and governance in free AI tools

Privacy and governance considerations are often overlooked in free AI tool comparisons, yet they can determine whether a tool is suitable for your project, especially in regulated environments. First, examine data handling policies: does the provider claim ownership of user inputs, may inputs be used to improve models, and can you opt out of that use? Look for explicit statements about data retention periods, deletion procedures, and export rights. Governance questions include whether you can separate personal data from enterprise data, whether access logs are available, and whether you can restrict API keys to specific IPs or applications. AI Tool Resources notes in its 2026 analysis that many free tiers minimize security features to reduce cost, which can leave sensitive prompts exposed. For students and hobbyists, privacy may be less critical, but researchers handling proprietary ideas should demand stronger controls or a clear opt-out path. If a free tier lacks essential privacy protections, consider pairing it with local testing, private datasets, or a separate sandbox environment. Finally, review any terms about model updates and training data disclosures: even when data isn't sold, it may be used to train or fine-tune future iterations. A careful read of the terms of service and a simple risk assessment can save hours of rework and prevent leakage of sensitive information.
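One way to make the simple risk assessment repeatable is to record your answers to the policy questions above as structured data and derive a list of gaps. This is a minimal sketch; the field names are illustrative rather than taken from any provider's terms, and whether a given gap is acceptable still depends on your project.

```python
from dataclasses import dataclass

@dataclass
class PrivacyReview:
    """Answers recorded while reading a provider's privacy policy and terms.
    Field names are illustrative, not any provider's official terminology."""
    provider_claims_input_ownership: bool
    inputs_used_for_training: bool
    training_opt_out_available: bool
    retention_period_documented: bool
    deletion_on_request: bool
    data_export_available: bool
    access_logs_available: bool
    api_key_restrictions: bool  # e.g., keys limited to specific IPs or apps

def privacy_gaps(review: PrivacyReview) -> list[str]:
    """Return human-readable gaps; an empty list means no gap was found."""
    gaps = []
    if review.provider_claims_input_ownership:
        gaps.append("provider claims ownership of inputs")
    if review.inputs_used_for_training and not review.training_opt_out_available:
        gaps.append("inputs may train models and there is no opt-out")
    # Controls you would expect before sending anything sensitive.
    for name in ("retention_period_documented", "deletion_on_request",
                 "data_export_available", "access_logs_available",
                 "api_key_restrictions"):
        if not getattr(review, name):
            gaps.append(f"missing: {name}")
    return gaps

review = PrivacyReview(
    provider_claims_input_ownership=False,
    inputs_used_for_training=True,
    training_opt_out_available=False,
    retention_period_documented=True,
    deletion_on_request=False,
    data_export_available=True,
    access_logs_available=False,
    api_key_restrictions=True,
)
for gap in privacy_gaps(review):
    print("-", gap)
```

Keeping the review as data rather than notes means the same checklist can be re-run when a provider updates its terms.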

How to evaluate accuracy, safety, and latency in free tiers

When comparing free AI tools, accuracy, safety, and responsiveness are the most practical high-signal criteria. Start by defining objective test prompts that mirror your real tasks and measure outputs against human benchmarks or reference datasets. Consider error modes such as hallucinations, bias, or sensitive content generation, and note how each tool handles guardrails and safe prompting. Latency is more than a feeling; it affects decision cycles in coding, writing, and data analysis. Record average response times and compare peak with off-peak performance to set expectations. Safety features, such as content filtering, prompt-masking controls, and user data handling, vary widely across free plans and can influence how comfortable you are using the tool in your workflow. Because free tiers are often unstable, design experiments to tolerate interruptions or quota resets. Where possible, run side-by-side tests with at least two tools to identify consistent gaps or strengths. Finally, track error rates and success criteria across multiple runs, and decide whether the free tier meets your minimum viable performance or whether you must invest in a paid option to reach the required reliability.
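A small harness makes these measurements comparable across tools. The sketch below assumes you wrap each tool's API in a plain function that takes a prompt and returns text (the `call` parameter, which you supply); it repeats each prompt several times, records wall-clock latency with `time.perf_counter`, counts any exception (for example, a quota error) as a failure, and applies your own `check` function as the success criterion.

```python
import statistics
import time
from typing import Callable, Optional

def benchmark(call: Callable[[str], str], prompts: list[str],
              check: Callable[[str, str], bool], runs: int = 3) -> dict:
    """Run every prompt `runs` times and summarize success and latency.

    `call` is your own wrapper around a tool's API (an assumption here);
    `check(prompt, output)` encodes what counts as a correct answer."""
    latencies: list[float] = []
    passed = failed = 0
    for _ in range(runs):
        for prompt in prompts:
            start = time.perf_counter()
            try:
                output = call(prompt)
            except Exception:
                failed += 1          # quota errors, timeouts, etc.
                continue
            latencies.append(time.perf_counter() - start)
            if check(prompt, output):
                passed += 1
            else:
                failed += 1
    total = passed + failed
    mean: Optional[float] = statistics.mean(latencies) if latencies else None
    median: Optional[float] = statistics.median(latencies) if latencies else None
    return {
        "success_rate": passed / total if total else 0.0,
        "mean_latency_s": mean,
        "median_latency_s": median,
        "failures": failed,
    }

# Stand-in for a real tool, so the harness can run offline.
def fake_tool(prompt: str) -> str:
    return prompt.upper()

report = benchmark(fake_tool, ["a", "b"], lambda p, o: o == p.upper(), runs=2)
print(report["success_rate"])  # 1.0
```

The summary reports mean and median latency only; with larger samples you would also want tail percentiles, and running the same harness at peak and off-peak times gives the comparison described above.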

Practical workflows: writing, coding, data analysis with free AI tools

Free AI tools are particularly useful for iterative writing, preliminary coding, and lightweight data analysis. For writers, set up a structured workflow: draft prompts, generate multiple versions, compare outputs, and edit for tone and accuracy. For developers, begin with a small module or function, generate boilerplate code, then run tests locally; avoid relying on the tool for critical logic without validation. For data scientists, run exploratory analyses or transform datasets with simple prompts, but route sensitive data through local preprocessing when possible. In all cases, track prompts, outputs, and revisions in a shared log so teammates can audit decisions later. Be mindful of per-day quotas and model limitations; distribute work across multiple free tools to reduce risk of hitting a single tool's limit. This approach supports rapid prototyping while preserving the option to scale with paid plans when the project matures. Finally, regularly compare results across tools to determine which combination yields the best balance of speed, quality, and cost for your specific use case.
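A shared log can be as simple as a JSON Lines file with one record per prompt and output. This sketch uses only the standard library and assumes a file path your team can access; the `prompt_id` and `prompt_version` fields are a hypothetical scheme for tracking prompt revisions.

```python
import json
from datetime import datetime, timezone
from pathlib import Path

def log_interaction(log_path: Path, tool: str, prompt_id: str, prompt_version: int,
                    prompt: str, output: str, note: str = "") -> dict:
    """Append one prompt/output record as a single JSON line."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "prompt_id": prompt_id,            # stable name for the prompt
        "prompt_version": prompt_version,  # bump when the wording changes
        "prompt": prompt,
        "output": output,
        "note": note,                      # reviewer comments, edits made
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record

def read_log(log_path: Path) -> list[dict]:
    """Load every record, skipping blank lines."""
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

For example, logging the same versioned prompt once per tool lets teammates later filter by `prompt_id` and compare outputs side by side.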

Common pitfalls when relying on free AI tools

Relying on free AI tools can lead to several pitfalls if you don't plan accordingly. Quotas can run out mid-task or reset on an unexpected schedule, interrupting workflows and leaving gaps in your data. Model updates can alter outputs without notice, requiring re-validation of results. Privacy concerns may surface if you unknowingly share sensitive prompts or datasets; always verify data handling policies before integrating a tool into a production workflow. Inconsistent performance across tools makes it hard to reproduce results; maintain a cross-tool audit trail with versioned prompts and outputs. Free plans may impose restrictions on commercial use, API access, or distribution rights, which can conflict with your project's licensing. The absence of official support means you must rely on community forums, which may not respond quickly during critical deadlines. By documenting limitations, implementing a small test harness, and setting guardrails for data handling, you can prevent the most common errors and keep projects moving forward even on no-cost resources.
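To catch silent model updates, you can re-run a fixed set of versioned prompts and compare the new outputs with a stored baseline. The sketch below fingerprints each output with SHA-256 after collapsing whitespace. It assumes outputs are reasonably deterministic (for example, low-randomness settings where the tool allows them); with sampled outputs, exact-match comparison will flag nearly everything, and a similarity score or human review is a better fit.

```python
import hashlib

def fingerprint(text: str) -> str:
    """Hash an output after collapsing whitespace, so reflowed text with the
    same words does not register as drift."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def detect_drift(baseline: dict[str, str], current: dict[str, str]) -> list[str]:
    """Return prompt IDs whose fingerprint changed or is missing from `current`.

    Both dicts map a versioned prompt ID (e.g. "summary@v2", a hypothetical
    naming scheme) to the fingerprint of that prompt's output."""
    return sorted(pid for pid, fp in baseline.items() if current.get(pid) != fp)

baseline = {"summary@v2": fingerprint("The model  returns\nthree bullets."),
            "sql@v1": fingerprint("SELECT 1;")}
current = {"summary@v2": fingerprint("The model returns three bullets."),
           "sql@v1": fingerprint("SELECT 2;")}
print(detect_drift(baseline, current))  # ['sql@v1']
```

Any prompt ID this reports is a candidate for re-validation before you trust results that depend on it.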

How to structure a fair side-by-side comparison

To create a robust side-by-side comparison, start by listing the scenarios you care about most: rapid prototyping, academic exploration, open-source collaborations, or production-like testing. Then define a fixed rubric with categories such as capability, quotas, privacy, stability, and upgrade options. Assign simple scores or checkmarks to each category for every tool, and keep notes about edge cases. Use a two-column decision matrix: must-have vs nice-to-have, with explicit pass/fail criteria for each tool. Incorporate qualitative judgments (e.g., "better for coding tasks" or "strong content guardrails") alongside objective metrics. Finally, document any trade-offs you observed and how they influence your recommended path. The result should be a clear, repeatable method that your team can reuse for future comparisons. This structure helps prevent bias and ensures that decision-making remains transparent, especially when you expand to more tools or add paid options.
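The rubric and its pass/fail gate can be encoded directly, so every tool is scored the same way. This is a minimal sketch: the 0 to 3 scale, the equal weighting of categories, and the example must-have names are assumptions to adapt, and qualitative notes are stored alongside the numbers rather than folded into them.

```python
from dataclasses import dataclass, field

# Rubric categories from the text; each is scored 0 (poor) to 3 (strong).
CATEGORIES = ("capability", "quotas", "privacy", "stability", "upgrade_options")

@dataclass
class ToolAssessment:
    name: str
    scores: dict[str, int]        # category -> 0..3, from your own tests
    must_haves: dict[str, bool]   # explicit pass/fail criterion -> passed?
    notes: list[str] = field(default_factory=list)  # qualitative judgments

def rank(tools: list[ToolAssessment]) -> list[tuple[str, int]]:
    """Exclude tools failing any must-have; rank the rest by total score."""
    eligible = [t for t in tools if all(t.must_haves.values())]
    totals = [(t.name, sum(t.scores.get(c, 0) for c in CATEGORIES)) for t in eligible]
    return sorted(totals, key=lambda pair: pair[1], reverse=True)

tool_a = ToolAssessment(
    "Tool A",
    {"capability": 2, "quotas": 3, "privacy": 1, "stability": 2, "upgrade_options": 2},
    {"training_opt_out": True, "public_api": True},
    ["fine for quick drafts"],
)
tool_b = ToolAssessment(
    "Tool B",
    {"capability": 3, "quotas": 2, "privacy": 2, "stability": 2, "upgrade_options": 2},
    {"training_opt_out": True, "public_api": True},
    ["better for coding tasks"],
)
tool_c = ToolAssessment(
    "Tool C",
    {category: 3 for category in CATEGORIES},
    {"training_opt_out": False, "public_api": True},
)
print(rank([tool_a, tool_b, tool_c]))  # [('Tool B', 11), ('Tool A', 10)]
```

Tool C has the highest raw scores but is excluded because it fails a must-have, which is the point of gating before scoring.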

Comparison

| Feature | Free AI Tool A | Free AI Tool B |
|---|---|---|
| Core capabilities | Text generation, simple prompts, and basic coding snippets | Text generation with code assistance and data extraction features |
| Usage limits | Limited API requests or tokens per period with rate caps | Different quota structure and rate limits |
| Data privacy controls | Basic privacy; inputs may be used to improve models | Privacy options vary; some opt-out may exist |
| API access | Public API with basic access and standard endpoints | Public API with stricter quotas and endpoint limits |
| Quality of results | Moderate quality suitable for exploration | Generally solid results within free tier constraints |
| Upgrade path | Upgrading to paid tier unlocks higher quotas | Paid tier optional but recommended for scale |
| Best for | Students and hobbyists testing ideas | Researchers and early-stage projects exploring options |

Upsides

- No upfront cost for basic testing and exploration

- Easy to compare multiple tools quickly

- Lower risk of long-term commitment

- Accessible to students and independent researchers

Weaknesses

- Limited capabilities that may not meet production needs

- Usage quotas can interrupt workflows

- Privacy controls may be weaker than paid plans

- Quality and stability can vary across tools

Free tiers are excellent for exploration; plan a paid path if you need production reliability.

Use free options to prototype and compare; escalate to paid plans when quotas or quality constraints hinder your work.

FAQ

What should I look for in a free AI tool when comparing options?

Prioritize capabilities you actually need, such as text generation quality, coding assistance, and basic data processing. Check quotas, latency, and whether the provider allows commercial use. Review privacy policies and whether there is an opt-out for data usage in training.

Look for capabilities, quotas, and data practices; ensure the tool fits your use case without forcing an early upgrade.

Do free AI tools share data with the provider or use inputs to train models?

Many free tiers involve data usage for model training, testing, or improvement. Some offer opt-out, but availability varies. Always read the privacy policy and terms of service before integrating into a workflow.

Be mindful of data usage policies; opt out if possible and handle sensitive data locally when needed.

Can free AI tools handle production workloads?

Free tools are typically not designed for production-scale workloads due to quotas, latency variations, and lack of guaranteed uptime. Treat them as testing or learning resources and migrate to paid plans for reliability.

Free tools are great for experiments, but for production you’ll usually need paid tiers.

Are there risks using free AI tools for confidential data?

Yes. Confidential data can be exposed if privacy controls are weak or if data is stored or used to train models. Process data locally, anonymize it, or use on-premises solutions when handling sensitive information.

Handle sensitive data carefully; prefer tools with strong privacy options or local processing.
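For the anonymization route, one simple approach is to replace identifiers with placeholders before a prompt leaves your machine and keep the mapping locally so you can restore the values in the tool's response. The regular expressions below are illustrative assumptions that catch only obvious email addresses and phone-like digit runs; real PII detection needs much broader coverage, so treat this as a sketch of the pattern, not a safeguard.

```python
import re

# Illustrative patterns only: they miss many real identifiers, and the phone
# pattern will also match other long digit runs such as dates.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}

def redact(text: str) -> tuple[str, dict[str, str]]:
    """Swap matches for placeholders such as <EMAIL_1>.

    Returns the redacted text plus a placeholder -> original mapping that
    should stay on your machine."""
    mapping: dict[str, str] = {}
    for label, pattern in PATTERNS.items():
        counter = 0

        def substitute(match: re.Match) -> str:
            nonlocal counter
            counter += 1
            token = f"<{label}_{counter}>"
            mapping[token] = match.group(0)
            return token

        text = pattern.sub(substitute, text)
    return text, mapping

def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into text returned by the tool."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text

safe, mapping = redact("Contact jane.doe@example.org or +1 555-123-4567 today.")
print(safe)  # Contact <EMAIL_1> or <PHONE_1> today.
```

Only `safe` would be sent to the free tool; `mapping` never leaves your environment, and `restore` re-inserts the originals into the response locally.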

What happens when I exceed free usage limits?

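Exceeding limits typically pauses access until the quota resets; if you have added a payment method, some services may instead start billing you or prompt an upgrade to a paid tier. Plan test intervals to avoid surprises and design workflows that gracefully handle quota exhaustion and resets.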

Hitting a quota can interrupt work until it resets; plan tests accordingly.
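Handling quota exhaustion gracefully usually means backing off, retrying, and then falling back to another tool, which also spreads work across free tiers. This sketch assumes your client wrapper raises a custom `QuotaExceeded` exception (a hypothetical name) when the service rejects a request for rate or quota reasons; many HTTP APIs signal this with status 429 (Too Many Requests). The `sleep` parameter is injectable so the logic can be tested without actually waiting.

```python
import time
from typing import Callable, Sequence

class QuotaExceeded(Exception):
    """Hypothetical error your own client wrapper raises on a quota or
    rate-limit rejection."""

def call_with_fallback(prompt: str,
                       tools: Sequence[tuple[str, Callable[[str], str]]],
                       retries: int = 2, base_delay: float = 1.0,
                       sleep: Callable[[float], None] = time.sleep) -> tuple[str, str]:
    """Try each tool in order, backing off exponentially on quota errors.

    Returns (tool_name, output). Raises QuotaExceeded if every tool is
    still exhausted after its retries."""
    for name, call in tools:
        for attempt in range(retries + 1):
            try:
                return name, call(prompt)
            except QuotaExceeded:
                if attempt < retries:
                    # Waits base_delay, then 2x, 4x, ... before each retry.
                    sleep(base_delay * 2 ** attempt)
    raise QuotaExceeded("all tools exhausted for this prompt")
```

With `retries=2`, an exhausted tool is called three times (waiting 1 s and then 2 s between attempts) before the next tool in the list is tried; other exceptions are deliberately not caught, so genuine errors are not masked as quota problems.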

How do I transition from free to paid tools without friction?

Outline a staged upgrade path with predicted quotas, budget, and migration steps. Validate critical workflows on paid plans before full migration and maintain backward compatibility where possible.

Plan a staged upgrade with clear milestones and test critical paths first.

Key Takeaways

- Define must-have features before testing tools

- Track quotas and upgrade thresholds in writing

- Assess data privacy and governance early

- Test at least two tools for critical workflows

- Document decisions for reproducibility