Comparing AI Tools: A Practical Side-by-Side Guide

An analytical, practical guide to comparing AI tools across capabilities, data governance, and cost. It offers a structured framework and a side-by-side table to help developers, researchers, and students decide with confidence.

For most teams, the best path is a structured comparison of features, costs, and governance. In short, comparing AI tools means evaluating capabilities, data handling, integration, and total cost of ownership across several candidates. This guide summarizes a practical framework and a side-by-side approach to help you pick tools that fit your use case and constraints.

What a comparison really means

According to AI Tool Resources, comparing AI tools is about more than a checklist of features. It requires aligning tool capabilities with real workflows, governance needs, and operational constraints. In practice, teams start by mapping goals, data sources, and integration points, then generate a short list of candidates to evaluate with the same rubric. This approach promotes transparency and helps auditors understand why one tool outperforms another.

In research and development settings, comparing AI tools also requires reproducibility. Evaluations should be repeatable using the same datasets and scoring method, even as the project scales or participants change. The goal is not to claim the single most powerful model but to identify the tool that delivers reliable results within your environment.
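One lightweight way to make an evaluation repeatable is to record a fingerprint of the exact prompts and configuration used in each run, so later runs can confirm nothing drifted. The sketch below is only an illustration: the function name `evaluation_fingerprint` and the sample prompts and config keys are assumptions, not part of any particular tool.

```python
import hashlib
import json


def evaluation_fingerprint(prompts, config):
    """Return a SHA-256 hex digest of the evaluation inputs.

    Serializing with sorted keys makes the digest independent of the
    order in which config keys were written.
    """
    payload = json.dumps(
        {"prompts": prompts, "config": config},
        sort_keys=True,
        ensure_ascii=False,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Hypothetical evaluation inputs, for illustration only.
prompts = ["Summarize this report in three sentences.", "Draft a release note."]
config = {"temperature": 0.0, "max_tokens": 256}

run_id = evaluation_fingerprint(prompts, config)
print(run_id)
```

Storing the digest alongside each set of scores lets a reviewer check that two runs being compared used the same inputs.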

The AI Tool Resources team stresses that a well-structured comparison reduces bias, speeds decision-making, and clarifies what a given tool can actually do for specific problems. When everyone shares a definition of success, outcomes can be quantified and apples-to-apples comparisons can be made across candidates. The final aim is a platform that aligns with data policies, latency needs, and your team’s learning curve.

Key criteria that drive an effective comparison

A robust comparison covers more than raw performance. You should weigh data handling and governance, cost of ownership, integration flexibility, model governance, user experience, and support. When you compare AI tools, also consider reproducibility, security posture, and compliance with applicable regulations. Quantitative metrics are important, but so are qualitative signals like developer experience, documentation quality, and community activity. A good rubric assigns weights to each criterion and applies them uniformly across all candidates. For teams with diverse stakeholders, a transparent scoring process helps maintain trust and limits the influence of internal politics. Treat the evaluation as an ongoing process rather than a one-time decision.

Tool categories and typical evaluation needs

Different tool families serve different purposes. Language-model APIs might excel in generation tasks but lag on structured reasoning; code-assist tools may automate repetitive patterns but require careful data governance. When you begin the exercise, categorize potential tools as follows: 1) general-purpose copilots, 2) domain-specific assistants, 3) coding and data-science oriented platforms, 4) research-grade models with reproducibility guarantees. For each category, define a baseline set of evaluation criteria (accuracy, latency, data retention policies, integration options, and cost structure) and apply them consistently across tools. This helps you avoid overvaluing flashy features and underweighting governance requirements. In every category, remember to factor in the long-term implications of onboarding, such as training needs and maintenance overhead. The focus remains on the practitioners and developers who rely on these tools to improve throughput while maintaining quality and compliance.

Building a fair, repeatable evaluation framework

A fair framework starts with a clear, agreed-upon purpose. Create a scoring rubric with 5–8 criteria that matter most to your use case, such as accuracy on your data, latency under peak load, safety controls, fine-tuning capabilities, and data privacy. Assign weights to each criterion and predefine pass thresholds. Use a common dataset that reflects real-world tasks, and keep the evaluation reproducible by freezing inputs, prompts, and configuration for the duration of the test. Document every decision in a centralized rubric so stakeholders can audit the process later. Add a pilot period in which you test top candidates in a sandbox environment, capture user feedback, and measure impact on existing workflows. The result should be a defensible, transparent ranking rather than a guess based on anecdotes. AI Tool Resources recommends keeping the framework lightweight enough to adapt as needs evolve while robust enough to resist cherry-picking by vendors.
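The weighted rubric described above can be sketched in a few lines. Everything specific here is an assumption made for illustration: the weights, the 1–5 scoring scale, the pass threshold of 3.5, and the scores given to the hypothetical tools Alpha, Beta, and Gamma.

```python
from typing import Mapping

# Hypothetical rubric: criterion -> weight. Adjust to your own priorities.
WEIGHTS = {
    "accuracy": 0.30,
    "latency": 0.20,
    "safety_controls": 0.20,
    "fine_tuning": 0.10,
    "data_privacy": 0.20,
}

PASS_THRESHOLD = 3.5  # illustrative threshold on a 1-5 scale


def weighted_score(scores: Mapping[str, float],
                   weights: Mapping[str, float] = WEIGHTS) -> float:
    """Return the weighted average of per-criterion scores."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[c] * w for c, w in weights.items()) / total_weight


# Illustrative scores only; real values come from your own test runs.
candidate_scores = {
    "Alpha": {"accuracy": 4, "latency": 5, "safety_controls": 3,
              "fine_tuning": 2, "data_privacy": 3},
    "Beta": {"accuracy": 4, "latency": 3, "safety_controls": 4,
             "fine_tuning": 4, "data_privacy": 4},
    "Gamma": {"accuracy": 5, "latency": 2, "safety_controls": 4,
              "fine_tuning": 3, "data_privacy": 5},
}

for name, scores in candidate_scores.items():
    total = weighted_score(scores)
    verdict = "pass" if total >= PASS_THRESHOLD else "fail"
    print(f"{name}: {total:.2f} ({verdict})")
```

Dividing by the sum of the weights means the weights do not have to add up to exactly 1, which keeps the rubric easy to adjust when a criterion is added or dropped.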

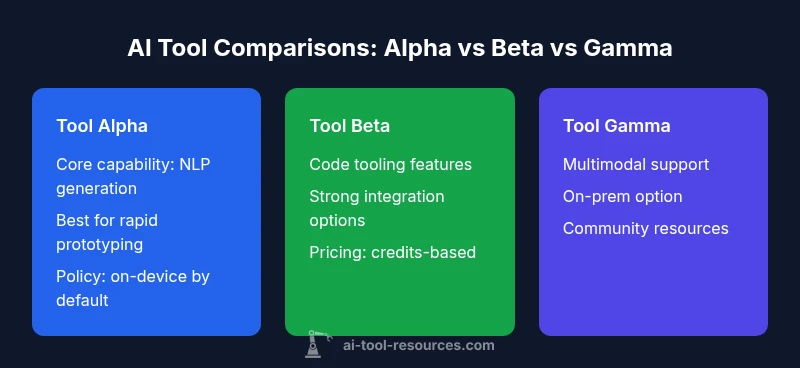

Case study: three hypothetical AI writing tools

Consider three hypothetical tools (Alpha, Beta, and Gamma) designed for content generation, editing, and research assistance. Alpha emphasizes speed and succinct outputs, Beta focuses on style control and tone consistency, while Gamma offers deep research capabilities and citation management. In our side-by-side framework, you would assess each tool against core criteria: generation quality, prompt robustness, data governance, integration into your CMS, pricing model, and available APIs. For example, Alpha might score high on latency and ease of use, but Beta could outperform in maintaining voice across long-form content. Gamma may provide the strongest research features but require more complex setup and governance. When comparing AI tools for writing tasks, it’s essential to test on real-world prompts, analyze returned citations for accuracy, and assess how well the tools handle edge cases such as ambiguous queries or sensitive topics. The goal is to identify a primary tool that fits most of your needs, with secondary tools as backups for specialized tasks.

Common pitfalls and how to avoid them

One frequent trap is treating the comparison as a one-time event. Reevaluate periodically as tools evolve and your projects change. Another pitfall is overemphasizing superficially impressive features while neglecting governance, privacy, and compliance. Ensure your rubric includes data handling, model updates, and access controls. Beware vendor bias: collect independent benchmarks and use a standardized dataset rather than vendor-provided prompts. Finally, don’t skip pilot testing in your actual environment; results in production can differ from those in a lab setting. To stay objective, document your evaluation steps and maintain versioned rubric templates.

Practical checklist and templates you can reuse

- Define goals: what problem are you solving with AI tools?

- List criteria: accuracy, latency, governance, privacy, integration, cost, usability

- Create a scoring rubric: assign weights and thresholds

- Prepare test prompts: ensure representative, diverse inputs

- Run pilots: test in real workflows with a controlled user group

- Gather feedback: capture qualitative impressions and quantitative metrics

- Document decisions: store rubric results and rationale for auditing

- Plan next steps: decide which pilots move to deployment and draft a rollout roadmap

Interpreting results and making a decision

Translate rubric scores into a decision. Identify which tools meet mandatory criteria and offer the best total value. Use a simple go/no-go decision or a weighted score to rank finalists. Consider risk factors such as vendor stability, security posture, and long-term maintenance. After the decision, plan onboarding, governance checks, and retraining needs to ensure a smooth transition. The ultimate aim is a tool that not only performs well on benchmarks but also integrates into your organization’s workflows, culture, and compliance requirements. The AI Tool Resources team advises tying the selection to measurable business outcomes and a clear implementation plan.
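The two-stage decision described above (a go/no-go gate on mandatory criteria, then ranking by weighted score) can be sketched as follows. The `Candidate` class, the mandatory criteria names, the threshold, and the scores are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Candidate:
    name: str
    weighted_score: float             # rubric result, assumed 1-5 scale
    meets_mandatory: Dict[str, bool]  # mandatory criterion -> pass/fail


def shortlist(candidates: List[Candidate], min_score: float = 3.5) -> List[Candidate]:
    """Apply the go/no-go gate, then rank the survivors by score (best first)."""
    eligible = [
        c for c in candidates
        if all(c.meets_mandatory.values()) and c.weighted_score >= min_score
    ]
    return sorted(eligible, key=lambda c: c.weighted_score, reverse=True)


# Illustrative inputs only.
candidates = [
    Candidate("Alpha", 3.6, {"data_retention_policy": True, "access_controls": True}),
    Candidate("Beta", 3.8, {"data_retention_policy": False, "access_controls": True}),
    Candidate("Gamma", 4.0, {"data_retention_policy": True, "access_controls": True}),
]

finalists = shortlist(candidates)
for rank, c in enumerate(finalists, start=1):
    print(rank, c.name, c.weighted_score)
```

In this sketch a tool that fails a mandatory criterion is excluded no matter how high its score, which keeps governance requirements from being traded away against raw performance.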

Feature Comparison

| Feature | Tool Alpha | Tool Beta | Tool Gamma |
|---|---|---|---|
| Core capabilities | NLP generation with fast latency | Tone and style control | Deep research features with citations |
| Data handling & privacy | On-device processing with optional cloud | Strong data governance options | Hybrid approach with audit trails |
| Model types | Open models with customizable prompts | Hybrid open/proprietary models | Proprietary models with provenance controls |
| Pricing model | Tiered subscription | Usage-based credits | Enterprise license with quotas |
| Integration options | API/SDK; CMS plugins | Webhooks; IDE plugins | Batch & streaming data connectors |
| Support & community | Docs and community forums | Dedicated support tier | Active researcher community |
| Best for | Rapid prototyping in teams | Voice and style consistency | Research-grade accuracy and citations |

Upsides

- Helps align stakeholders with objective criteria

- Reveals total cost of ownership beyond sticker price

- Encourages consistent evaluation across teams

- Promotes transparency and auditability

Weaknesses

- Can be time-consuming to implement

- Requires disciplined data governance and maintenance

- May be biased by rubric design if not carefully constructed

- Overemphasis on metrics can overlook user experience

Structured, multi-criteria evaluation is the clearer path to reliable choices.

The AI Tool Resources Team recommends a formal rubric and multiple candidate tools evaluated in pilots. This minimizes bias, clarifies trade-offs, and supports auditable decisions that align with governance and data policies.

FAQ

What should a comparison framework for AI tools include?

A good framework includes goals, evaluation criteria (accuracy, latency, governance, privacy, integration, cost), a standardized dataset, a scoring rubric, and a documented decision process. Reproducibility is essential.

A framework should cover goals, scores, and reproducibility so decisions are auditable.

How many tools should I compare?

Start with 3–5 candidates to balance depth and breadth. Include a primary target and 1–2 backups to cover edge cases and vendor diversity.

Three to five options is a practical sweet spot.

Should I consider on-prem vs cloud deployments?

Yes. Deployment model affects latency, data sovereignty, cost, and governance. Align your choice with regulatory requirements and internal policies.

Deployment choice matters for compliance and performance.

How do I ensure fair scoring across tools?

Use a single, pre-approved rubric, test with the same prompts, and blind the evaluators to tool identities when possible to reduce bias.
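Blinding can be as simple as replacing tool names with neutral labels before outputs reach the evaluators. The following is a minimal sketch under that assumption; the function name `blind_outputs` and the sample outputs are hypothetical.

```python
import random


def blind_outputs(outputs_by_tool, seed=None):
    """Return (blinded, key).

    blinded maps neutral labels to outputs for the evaluators;
    key maps each label back to the tool name and stays with the organizer.
    """
    rng = random.Random(seed)
    tools = list(outputs_by_tool)
    rng.shuffle(tools)  # randomize so label order reveals nothing
    labels = [f"Candidate {i + 1}" for i in range(len(tools))]
    key = dict(zip(labels, tools))
    blinded = {label: outputs_by_tool[tool] for label, tool in key.items()}
    return blinded, key


# Hypothetical responses to the same prompt from three tools.
outputs = {
    "Alpha": "Short summary of the report.",
    "Beta": "A summary written in the house style.",
    "Gamma": "A summary with two cited sources.",
}

blinded, key = blind_outputs(outputs, seed=42)
for label, text in blinded.items():
    print(label, "->", text)
```

Evaluators score the labeled outputs, and the organizer uses `key` to map scores back to tools only after scoring is complete. Note that outputs can still leak identity through style or formatting, so blinding reduces bias rather than eliminating it.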

Keep the scoring unbiased by using the same prompts for all tools.

What are common mistakes to avoid?

Rushing to deployment, ignoring data governance, and relying solely on a single metric like speed can lead to poor long-term outcomes.

Don’t cut corners on governance or long-term costs.

Key Takeaways

- Define a clear goal before comparing AI tools

- Use a weighted rubric to score every candidate

- Pilot finalists in realistic workflows

- Document decisions to support audits and governance