AI Tool Comparison for Coding: Practical Guide 2026

An in-depth AI coding tool comparison focused on accuracy, IDE integration, privacy, and cost to help developers, researchers, and students choose the right AI coding assistant.

TL;DR: For coding tasks, prioritize AI tools with strong autocomplete, reliable code generation, and safe IDE integration. This comparison evaluates accuracy, speed, privacy, and team fit across three representative options to help you pick the best match for your coding workflow. Consider language support, testing capabilities, and governance when deciding which tool to pilot long-term.

Scope and Definitions

An AI tool comparison for coding evaluates AI-driven coding assistants, code generators, and pair-programming copilots against a consistent set of criteria. For developers, researchers, and students, the goal is to identify tools that improve code quality, reduce boilerplate, and fit existing workflows without compromising security or privacy. In this article, we apply a systematic comparison framework to three representative AI coding tools, highlighting how each performs on core tasks such as autocompletion, correctness, and integration with popular development environments. By focusing on actionable signals rather than hype, we help you answer one question: which tool is best for my coding tasks, team size, and tech stack? Throughout, we rely on general principles of tool evaluation and avoid claims about specific products beyond their described capabilities. The comparison also emphasizes reproducibility: you can rerun the pilot tests on your own projects using the guidelines provided and compare the results.

Key Selection Criteria for Coding Tools

Effective evaluation starts with criteria that match real coding work. First, accuracy and reliability: how often does the tool suggest correct, safe code, and how quickly does it recover from mistakes? Second, IDE integration: does the tool plug into the editor you already use (VS Code, JetBrains, Vim), and can it participate in your existing workflows (linting, testing, debugging)? Third, language support and code quality: which languages are covered, and do generated snippets adhere to style guides? Fourth, security and data handling: what is sent to the service, who has access, and how is your code treated after submission? Fifth, cost and licensing: is the pricing model transparent, scalable, and predictable for your team size? Sixth, governance and support: what SLAs exist, and how easy is it to obtain help or report issues? Finally, ecosystem and community: availability of extensions, templates, and user-generated examples. By weighing these criteria, teams of any size can create a fair, apples-to-apples comparison that informs a purchase or pilot decision.
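
One way to turn these seven criteria into a comparable number is a weighted score. The sketch below is illustrative only: the weights, the 1-5 rating scale, and the example ratings are assumptions chosen for demonstration, not values from any measured pilot, and your team should set its own.

```python
from typing import Dict

# Hypothetical weights for the seven criteria above; they must sum to 1.0.
WEIGHTS: Dict[str, float] = {
    "accuracy": 0.25,
    "ide_integration": 0.20,
    "language_support": 0.15,
    "security": 0.20,
    "cost": 0.10,
    "governance": 0.05,
    "ecosystem": 0.05,
}


def weighted_score(ratings: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion ratings (assumed 1-5 scale) into one weighted score."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[name] * ratings[name] for name in weights)


# Illustrative ratings only, not measurements of any real product.
example_ratings = {
    "accuracy": 4, "ide_integration": 5, "language_support": 4,
    "security": 3, "cost": 3, "governance": 2, "ecosystem": 4,
}
print(round(weighted_score(example_ratings), 2))
```

Agreeing on the weights before anyone sees the ratings helps keep the comparison apples-to-apples, since the weights cannot then be tuned to favor a preferred tool.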

Per-Tool vs Per-Category Approach

Some teams compare tools directly against each other, item by item, while others summarize capabilities by category (code generation, autocomplete, testing, documentation). A direct tool-to-tool comparison is precise but can become brittle as features shift; a category-based view shows where a given tool excels and where it lags. The best practice is to combine both: start with a high-level category map to identify candidates, then run side-by-side tests on a shared codebase. Document your test harness, including sample tasks, language mix, project size, and CI/CD constraints. This approach reduces bias and makes it easier to explain choices to non-technical stakeholders. In this article, we present a three-tool comparison that balances depth with clarity while remaining agnostic about product names.

How We Measure Quality in AI Coding Assistants

We assess tools using a framework that blends human judgment with objective signals. First, correctness: do recommendations respect language semantics and security constraints? Second, context awareness: does the tool consider surrounding code, imports, and project structure when suggesting changes? Third, latency and throughput: how responsive is the tool during typical editing sessions? Fourth, robustness: how often does the tool generate syntactically valid code or fail gracefully? Fifth, safety: does the tool avoid unsafe patterns or insecure library usage? Sixth, language coverage: which languages and frameworks are supported, and how well do templates adapt to different styles? Seventh, auditing and traceability: can you review, reproduce, and revert AI-generated changes? By combining quantitative scores with qualitative notes, teams gain a realistic sense of how the tool will perform under real-world workloads.
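
Two of these signals, robustness and latency, are straightforward to measure automatically. As a minimal sketch, assuming the suggestions are Python snippets you have saved as strings and that you have recorded response times in milliseconds, the following computes a syntax-validity rate with the standard-library `ast` module and a simple latency summary. The function names and sample data are hypothetical.

```python
import ast
import statistics
from typing import Dict, List


def syntax_valid_rate(snippets: List[str]) -> float:
    """Fraction of Python snippets that parse without a SyntaxError."""
    if not snippets:
        return 0.0
    valid = 0
    for source in snippets:
        try:
            ast.parse(source)
            valid += 1
        except SyntaxError:
            pass
    return valid / len(snippets)


def latency_summary(latencies_ms: List[float]) -> Dict[str, float]:
    """Median and worst-case latency for recorded timings in milliseconds."""
    return {
        "median_ms": statistics.median(latencies_ms),
        "max_ms": max(latencies_ms),
    }


# Hypothetical sample: two valid snippets and one with a syntax error.
sample_snippets = [
    "def add(a, b):\n    return a + b\n",
    "for i in range(3) print(i)",
    "x = [1, 2, 3]",
]
print(syntax_valid_rate(sample_snippets))
print(latency_summary([120, 95, 310, 140]))
```

Parsing only confirms that a snippet is syntactically valid. Correctness, context awareness, and safety still need tests and human review.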

Common Pitfalls When Comparing AI Coding Tools

Beware bias toward novelty: a newer feature may look impressive but offer little practical value. Assume every provider’s model will require updates, so plan for ongoing evaluation rather than a one-off decision. Data privacy is critical: ensure that your proprietary code is not exposed to third parties without consent, and review retention policies. Integrity risk arises when tools draft large swaths of code without human review; establish guardrails and mandatory reviews for critical components. Compatibility risk matters as well: consider how a tool coexists with your linting rules, test suites, and deployment pipelines. Finally, cost illusions can creep in; a tool with a low upfront price may incur high maintenance costs over time through API usage or add-ons. Anticipating these pitfalls helps you design a robust evaluation plan rather than chasing the latest buzzword.
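
The cost pitfall is easier to see with arithmetic. The sketch below compares a per-seat subscription with usage-based pricing over the same period. All prices and request volumes are made-up placeholders, so substitute the figures from the vendors you are actually evaluating.

```python
def subscription_cost(seats: int, price_per_seat_month: float, months: int) -> float:
    """Total cost of a flat per-seat subscription."""
    return seats * price_per_seat_month * months


def usage_cost(requests_per_dev_month: int, devs: int,
               price_per_1k_requests: float, months: int) -> float:
    """Total cost when billing is per 1,000 API requests."""
    total_requests = requests_per_dev_month * devs * months
    return total_requests / 1000 * price_per_1k_requests


# Hypothetical numbers for a 10-developer team over 12 months.
flat = subscription_cost(seats=10, price_per_seat_month=20.0, months=12)
light_use = usage_cost(requests_per_dev_month=3000, devs=10,
                       price_per_1k_requests=5.0, months=12)
heavy_use = usage_cost(requests_per_dev_month=6000, devs=10,
                       price_per_1k_requests=5.0, months=12)
print(flat, light_use, heavy_use)
```

With these placeholder figures the usage-based plan is cheaper at light usage and more expensive once request volume doubles, which is why projected usage belongs in the evaluation alongside the sticker price.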

Real-World Scenarios: When to Choose Tool A, B, or C

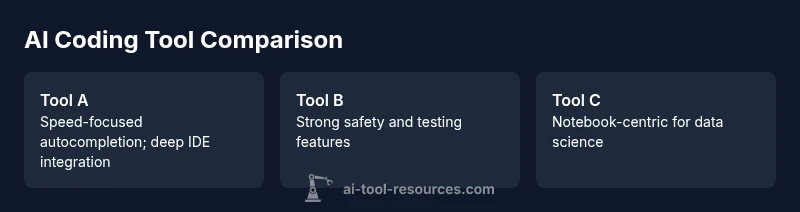

- Scenario 1: Rapid boilerplate generation for a greenfield API service. Tool A shines, offering fast scaffolding and sensible defaults that align with common frameworks.

- Scenario 2: Code review and refactoring in a large TypeScript project. Tool B provides stronger type awareness and safer change suggestions, reducing churn.

- Scenario 3: Data science notebooks and experiments. Tool C offers better support for Python, notebooks, and visualization libraries.

In each case, the decision should consider the team's current stack, security requirements, and the value of speed versus precision. A practical approach is to pilot two tools on a representative project, capture concrete outcomes (time saved, defect rate, reviewer effort), and decide based on those results rather than marketing claims.
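
The outcomes mentioned above (time saved, defect rate, reviewer effort) are most useful when compared against a baseline from the same project. Here is a small sketch of that comparison. The `PilotOutcome` fields and the sample figures are hypothetical, and you may track different units.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class PilotOutcome:
    """Averages collected over one measurement period (hypothetical fields)."""
    hours_per_task: float
    defects_per_kloc: float
    review_minutes_per_pr: float


def relative_change(baseline: float, observed: float) -> float:
    """Fractional change versus baseline; negative means the metric went down."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (observed - baseline) / baseline


def compare_to_baseline(baseline: PilotOutcome, pilot: PilotOutcome) -> Dict[str, float]:
    """Relative change per metric; lower is better for all three."""
    return {
        "hours_per_task": relative_change(baseline.hours_per_task, pilot.hours_per_task),
        "defects_per_kloc": relative_change(baseline.defects_per_kloc, pilot.defects_per_kloc),
        "review_minutes_per_pr": relative_change(
            baseline.review_minutes_per_pr, pilot.review_minutes_per_pr
        ),
    }


# Made-up figures: tasks got faster, but defects and review effort went up.
before = PilotOutcome(hours_per_task=8.0, defects_per_kloc=4.0, review_minutes_per_pr=30.0)
with_tool = PilotOutcome(hours_per_task=6.0, defects_per_kloc=5.0, review_minutes_per_pr=36.0)
changes = compare_to_baseline(before, with_tool)
for metric, change in changes.items():
    print(f"{metric}: {change:+.0%}")
```

A result like this one, faster tasks but more defects and more review time, is exactly the speed-versus-precision trade-off the scenarios describe.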

Side-By-Side Feature Highlights

Here we describe three hypothetical AI coding assistants—Tool A, Tool B, and Tool C—focusing on core capabilities that developers care about. For Tool A, autocompletion is fast and context-aware, with deep integration into VS Code and a broad language set. For Tool B, code generation emphasizes correctness and safety, with strong linting and unit-test generation features. Tool C offers notebooks and data-science oriented support, excellent for Python and SQL, and a flexible collaboration workflow. Across all tools, consider data handling policies, on-device options, and the ability to customize prompts and templates. The takeaway is not that one tool is universally best, but that each excels in distinct contexts. Your choice should map to your projects, team size, and governance requirements.

Implementation Considerations for Teams

Adopting AI coding tools requires more than buying licenses. Start with governance: decide who owns tool evaluation, who approves pilots, and how results are measured. Align with security standards: review data handling, access controls, and code provenance. Plan CI/CD integration: ensure compatibility with your pipelines, build steps, and automated tests. Establish usage policies: avoid leaking sensitive data, require code review for critical areas, and define acceptable use cases. Choose a pilot scope that’s representative but manageable, such as a single repository or service. Develop a rollback plan in case the tool creates regressions or integration issues. Finally, monitor impact: track cycle time, defect rates, and developer satisfaction to determine whether to expand, pause, or sunset a tool. This framework helps teams scale AI-assisted coding responsibly.
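
The final monitoring step can be made explicit by writing the expand, pause, or sunset rule down before the pilot starts. The following is only a sketch: the thresholds are arbitrary assumptions, and the inputs are assumed to be fractional changes versus baseline (negative means improvement) plus a 1-5 developer satisfaction score.

```python
def rollout_decision(cycle_time_change: float,
                     defect_rate_change: float,
                     satisfaction: float) -> str:
    """Return 'expand', 'pause', or 'sunset' from three monitored signals.

    Thresholds are illustrative assumptions, not recommendations.
    """
    # Clear regressions in quality or morale outweigh any speed gain.
    if defect_rate_change > 0.10 or satisfaction < 2.5:
        return "sunset"
    # Expand only when speed improves without hurting quality or morale.
    if cycle_time_change <= -0.10 and defect_rate_change <= 0.0 and satisfaction >= 3.5:
        return "expand"
    # Anything in between: hold the rollout and keep measuring.
    return "pause"


print(rollout_decision(-0.15, -0.05, 4.0))
print(rollout_decision(-0.02, 0.00, 3.8))
print(rollout_decision(-0.20, 0.20, 4.5))
```

Fixing the rule in advance makes the eventual decision easier to defend, because the thresholds were not chosen after seeing the results.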

Practical Roadmap to Your Tooling Decision

1) Define objectives and success metrics aligned with coding outcomes, such as reduced cycle time or fewer defects.
2) Gather requirements across languages, frameworks, and IDEs to create a fair evaluation matrix.
3) Shortlist candidates based on criteria and risk, using a fixed evaluation cadence.
4) Run controlled pilots on representative projects, collecting quantitative and qualitative data.
5) Compare results using a standardized scoring rubric and a transparent decision log.
6) Validate security and privacy practices with stakeholders and legal/compliance teams.
7) Plan rollout and training to ensure user adoption.
8) Establish ongoing governance: periodic reviews, updates after releases, and continuous learning cycles.
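
A transparent decision log, as called for in the rubric comparison step, can be as simple as a list of structured records kept next to the pilot data. The sketch below ranks candidates by rubric score and serializes one log entry as JSON. The `DecisionLogEntry` fields and the scores are hypothetical placeholders, not results.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class DecisionLogEntry:
    """One auditable decision taken during the evaluation (hypothetical schema)."""
    step: str
    decision: str
    rationale: str
    scores: Dict[str, float] = field(default_factory=dict)


def rank_candidates(scores: Dict[str, float]) -> List[str]:
    """Order candidates by score, highest first; ties break alphabetically."""
    return sorted(scores, key=lambda name: (-scores[name], name))


# Placeholder rubric scores, not measurements of any real product.
rubric_scores = {"Tool A": 4.1, "Tool B": 3.8, "Tool C": 3.2}
ranking = rank_candidates(rubric_scores)

entry = DecisionLogEntry(
    step="Compare results",
    decision=f"Advance {ranking[0]} to security and privacy validation",
    rationale="Highest rubric score in the controlled pilot",
    scores=rubric_scores,
)
log_json = json.dumps([asdict(entry)], indent=2)
print(log_json)
```

Keeping the scores inside each entry means a later reviewer can see what the decision was based on without hunting for the original spreadsheet.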

Feature Comparison

| Feature | AI Coding Assistant A | AI Coding Assistant B | AI Coding Assistant C |
|---|---|---|---|
| Autocompletion accuracy | Excellent | Good | Moderate |
| Code generation quality | Excellent | Good | Average |
| IDE integration | Deep integration | Partial integration | Basic integration |
| Languages supported | Wide (JS, Python, Java, Go, etc.) | Moderate | Narrow (few languages) |
| Security & data handling | Strong privacy controls | Standard privacy | Basic privacy |
| Pricing model | Subscription-based | Usage-based | Freemium/Trial |
| Best for | Speed-focused teams | Quality-sensitive teams | Data science-focused teams |

Upsides

- Clarifies relative strengths across tools

- Helps align tooling with existing workflows

- Reveals gaps in features and support

- Supports objective decision-making

Weaknesses

- May oversimplify nuanced tool performance

- Time investment to run pilots

- Requires ongoing updates as tools evolve

Tool A generally offers the best balance of speed and accuracy for most teams; Tools B and C excel in niche contexts.

Choose Tool A for broad applicability and ROI. Tool B is ideal for type-aware projects, while Tool C fits data-science workloads.

FAQ

What is an AI coding tool comparison?

An AI coding tool comparison evaluates AI-powered coding assistants on criteria like accuracy, integration, security, and cost, to help teams pick the right tool for their development workflow.

An AI coding tool comparison helps you pick the best AI helper for your coding workflow.

How many tools should I compare?

Aim for three to five well-scoped options. Too few may miss good fits; too many can make pilots unwieldy and hard to manage.

Start with three to five solid options and pilot them.

What criteria matter most for teams?

Core factors are accuracy, IDE integration, and data privacy. Then consider cost, governance, and alignment with team workflows.

Focus on accuracy, integration, and privacy first.

Can AI replace developers?

No. AI coding tools augment developers by handling repetitive tasks and providing suggestions, but human review and design decisions remain essential.

They augment, not replace, human skills.

How should I handle data privacy?

Review provider data usage policies, prefer on-premises or private cloud options when possible, and enforce code-review policies during pilots.

Check data policies and use private options when available.

Key Takeaways

- Define your coding objectives before evaluation

- Pilot a short list of three to five tools on representative tasks

- Prioritize integration and privacy first

- Document results to justify decisions

- Plan for governance and ongoing review