Start Coding with AI Tool Comparison: A Practical Guide

A developer-focused comparison of AI coding tools. Learn how to evaluate features, reliability, cost, and integration to pick the right AI tool for coding and testing in real-world workflows.

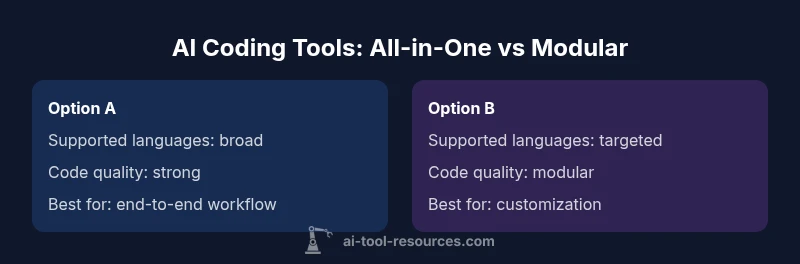

TL;DR: If you want broad, plug-and-play coding support, choose an all-in-one AI coding assistant with IDE integrations. If you prefer modularity and deep specialization (linting, testing, refactoring), pick a modular toolkit you can assemble around your existing stack. Always assess language support, reliability, and total cost before buying.

Introduction to comparing AI coding tools

Comparing AI coding tools isn't about chasing the flashiest feature list; it's about aligning capabilities with your team's workflows, skill levels, and risk tolerance. In this article, we contrast two archetypes: an all-in-one AI coding assistant that plugs into popular IDEs, and a modular toolkit that lets you assemble specialized components for linting, testing, and refactoring. According to AI Tool Resources, the most impactful decisions come from real-world use cases, not marketing claims. The goal is to give developers, researchers, and students a practical framework to evaluate options, forecast integration effort, and estimate total cost of ownership. Throughout, we emphasize governance, reliability, and safety so teams can use AI-generated code without compromising standards. By keeping the focus on actual workflows (code reviews, version control, and continuous integration), you'll discover which approach best fits your environment, whether you're prototyping a research project or delivering production software. AI Tool Resources analysis suggests that strong language support, predictable behavior, and transparent licensing are often the deciding factors when teams move from curiosity to adoption.

Core dimensions to evaluate when comparing AI coding tools

When comparing AI coding tools, evaluate the same set of dimensions for every option. The most important are: (1) Code quality and reliability: does the tool produce correct, secure, and maintainable suggestions with minimal debugging? (2) Language support and context handling: how well does it understand the project's language, frameworks, and project-specific conventions? (3) Integration and extensibility: are there IDE plugins, source control hooks, and CI/CD integrations? (4) Governance and safety: what safeguards exist for sensitive data and license compliance? (5) Cost and licensing: what is included in the plan, and what might incur extra charges? This section translates those criteria into measurable questions you can answer during trials and pilots. AI Tool Resources' approach is to combine objective tests with real-world tasks to isolate the differences that matter to developers.
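One way to make the five dimensions above comparable across tools is a simple weighted score. The sketch below is only an illustration: the weights, the 1-5 rating scale, and the sample ratings are assumptions chosen for the example, not values from AI Tool Resources or measurements of any real product.

```python
# Hypothetical weights for the five dimensions; adjust to your priorities.
# They sum to 1.0 so the result stays on the same 1-5 scale as the ratings.
WEIGHTS = {
    "code_quality": 0.30,
    "language_support": 0.20,
    "integration": 0.20,
    "governance": 0.15,
    "cost": 0.15,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-dimension ratings (1 = poor, 5 = excellent) into one score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Illustrative pilot ratings only.
all_in_one = {"code_quality": 4, "language_support": 5, "integration": 4,
              "governance": 3, "cost": 3}
modular = {"code_quality": 4, "language_support": 3, "integration": 4,
           "governance": 5, "cost": 4}

print(f"all-in-one: {weighted_score(all_in_one):.2f}")
print(f"modular:    {weighted_score(modular):.2f}")
```

A single number hides trade-offs, so it is probably best used to structure the discussion after a pilot rather than to make the decision on its own.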

Language support, context understanding, and the learning curve

Effective AI coding tools must understand context beyond a single line of code. Look for: (a) language breadth (Python, JavaScript, Java, Go, Rust, etc.), (b) awareness of project structure and dependencies, and (c) the ability to explain code and justify suggestions. A strong tool will offer inline explanations, create meaningful tests, and adapt as your project evolves. The learning curve varies: all-in-one assistants usually require less setup, while modular toolchains demand configuration and governance practices. AI Tool Resources notes that teams that document their intended use and set guardrails typically attain faster ramp-up and better long-term outcomes.

Pricing, licensing, and total cost of ownership

Pricing models range from flat subscriptions to usage-based or per-tool licenses. For teams, total cost of ownership includes onboarding time, governance tooling, data handling, and potential workflow changes. It’s essential to compare not just sticker price but the value delivered across your use cases. Consider pilot durations, support quality, and renewal terms. AI Tool Resources analysis shows that predictable licensing and transparent data policies reduce friction during expansion and adoption.
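To see why sticker price alone can mislead, it may help to write the total cost of ownership down as arithmetic. The following is a rough sketch: the cost categories mirror the ones listed above, but the function name, parameters, and all figures are hypothetical.

```python
def total_cost_of_ownership(
    seats: int,
    monthly_price_per_seat: float,
    months: int,
    onboarding_hours_per_seat: float,
    hourly_rate: float,
    fixed_governance_cost: float = 0.0,
) -> float:
    """Estimate TCO as license fees + onboarding time + one-off governance cost."""
    license_fees = seats * monthly_price_per_seat * months
    onboarding = seats * onboarding_hours_per_seat * hourly_rate
    return license_fees + onboarding + fixed_governance_cost


# Made-up example: 10 developers over a 12-month horizon.
estimate = total_cost_of_ownership(
    seats=10,
    monthly_price_per_seat=20.0,
    months=12,
    onboarding_hours_per_seat=4.0,
    hourly_rate=75.0,
    fixed_governance_cost=2000.0,
)
print(f"estimated 12-month TCO: {estimate:,.2f}")
```

In this made-up case the license fees (2,400) are smaller than the onboarding time (3,000), which is the kind of imbalance a sticker-price comparison would miss.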

IDE integration and workflow automation

A practical AI coding tool should integrate smoothly with your development environment. Check for robust IDE plugins, editor support, and compatibility with your existing toolchain (Git, CI/CD, issue trackers). The best options provide quick-start templates, code review hooks, and automated testing hooks that reduce manual setup. Evaluate how well the AI tool coexists with linters, formatters, and test runners to avoid conflicts during push-triggered builds or code reviews. Transparency in data usage and model updates matters for long-term stability, as highlighted by AI Tool Resources.

Safety, governance, and code quality guarantees

Governance is about who can access data, what data is sent to models, and how licenses are enforced. Look for access controls, data retention policies, and clear licensing terms for generated code. A reliable tool should offer traceability for code suggestions and the ability to revert or lock in decisions. Check for built-in safety nets, such as prompts that avoid sensitive information leakage, configurable temperature settings for generation, and automated review prompts for critical sections of code. AI Tool Resources emphasizes that governance reduces risk and builds trust in AI-assisted development.
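As one concrete example of a safety net against sensitive-information leakage, a team could scrub obvious secrets from text before it is sent to any model. The sketch below is a minimal illustration under stated assumptions: the two patterns (the common `AKIA` access-key prefix and simple `name = value` credentials) are examples only and are nowhere near a complete secret scanner, and the `redact` helper is hypothetical rather than part of any particular tool.

```python
import re

# Illustrative rules only; a real deployment would rely on a dedicated scanner.
RULES = [
    # Strings shaped like an AWS access key ID.
    (re.compile(r"AKIA[0-9A-Z]{16}"), "[REDACTED]"),
    # "password = value", "api_key: value", and similar assignments.
    (
        re.compile(
            r"\b((?:api[_-]?key|secret|password|token)\s*[:=]\s*)\S+",
            re.IGNORECASE,
        ),
        r"\1[REDACTED]",
    ),
]


def redact(text: str) -> tuple[str, int]:
    """Return the text with matches replaced, plus the number of replacements."""
    total = 0
    for pattern, replacement in RULES:
        text, count = pattern.subn(replacement, text)
        total += count
    return text, total


prompt = "password = hunter2\naws_id: AKIAABCDEFGHIJKLMNOP"
clean_prompt, hits = redact(prompt)
print(clean_prompt)
print(f"redactions: {hits}")
```

Returning the replacement count alongside the text gives the traceability mentioned above: a non-zero count can be logged or used to block the request outright.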

Real-world workflows: example scenarios and steps

Scenario 1: Rapid prototyping for a research project. Start with a broad all-in-one assistant to generate boilerplate, create tests, and scaffold modules. Then introduce modular plugins to refine linting and security checks.

Scenario 2: Production-ready feature with strict standards. Use a modular setup for precise code generation, while tying in CI/CD checks and automated code reviews.

In both cases, you’ll want guardrails and documented workflows. AI Tool Resources highlights the value of documenting your pilots, defining success metrics, and maintaining a shared glossary for terms used by the AI system.

Decision framework: how to pick the right tool for your team

A practical decision framework combines five questions: (1) What are the top three coding tasks you want to accelerate? (2) Which languages and frameworks must be supported? (3) How will you govern data, licensing, and compliance? (4) What is the expected total cost of ownership over 12–24 months? (5) How will you measure success and iterate? Use a side-by-side comparison to identify gaps and define a minimal viable implementation. The AI Tool Resources team recommends starting with a short pilot, documenting outcomes, and expanding only when metrics meet your thresholds.
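The "expand only when metrics meet your thresholds" step can be made explicit with a small check run at the end of a pilot. This is a sketch under assumptions: the metric names, limits, and pilot results are invented for illustration, and `Threshold` and `unmet_thresholds` are hypothetical helpers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    limit: float
    higher_is_better: bool = True

    def met(self, value: float) -> bool:
        """True when the value is on the acceptable side of the limit."""
        return value >= self.limit if self.higher_is_better else value <= self.limit


def unmet_thresholds(results: dict[str, float], thresholds: list[Threshold]) -> list[str]:
    """Return metrics that are missing from the results or miss their limit."""
    return [
        t.metric
        for t in thresholds
        if t.metric not in results or not t.met(results[t.metric])
    ]


# Hypothetical success criteria agreed on before the pilot starts.
criteria = [
    Threshold("suggestion_acceptance_rate", 0.30),
    Threshold("ci_pass_rate", 0.95),
    Threshold("monthly_cost_per_seat", 40.0, higher_is_better=False),
]

# Invented pilot results.
pilot = {"suggestion_acceptance_rate": 0.34, "ci_pass_rate": 0.97,
         "monthly_cost_per_seat": 45.0}

failing = unmet_thresholds(pilot, criteria)
print("expand" if not failing else f"hold, unmet: {failing}")
```

Treating a missing metric as unmet is a deliberate choice here: a pilot that never measured something it promised to measure should not pass by default.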

Comparison

| Feature | Option A: All-in-One AI Coding Assistant | Option B: Modular AI Toolchain |
|---|---|---|
| Supported languages | Broad language support across Python, JavaScript, Java, Go, Rust, and more | Strong Python/JavaScript coverage with selective support for niche languages |
| Code quality and completion | Context-aware suggestions with refactoring and code-generation capabilities | Modular components focusing on linting, testing, and specific tasks with plug-in extensibility |
| Learning curve | Low to moderate; plug-and-play with IDE integrations | Moderate; requires configuring tools and pipelines |
| Pricing model | All-in-one subscription with tiered access | Usage-based or per-tool licensing with add-ons |
| Best for | Teams seeking rapid ramp-up and cohesive UX | Teams needing customization and component-level control |
| Integration & ecosystem | Tight IDE integration and built-in workflows | Broad plugin ecosystem and easy CI/CD integration |

Upsides

- Boosts coding speed and reduces boilerplate

- Consistent results across common languages

- Simplified onboarding with unified UX

- Strong support for team collaboration

Weaknesses

- Can be costly at scale over time

- All-in-one tools may offer less depth in niche tasks

- Reliance on AI may obscure edge-case reasoning

All-in-one vs modular: pick the all-in-one for speed, modular for control

Choose all-in-one for quick onboarding and broad coverage. Choose modular if you need precise control and advanced customization.

FAQ

What is the difference between an all-in-one AI coding assistant and a modular AI toolchain?

An all-in-one assistant provides broad functionality, IDE plugins, and a cohesive UX. A modular toolchain lets you assemble specialized components for linting, testing, and refactoring. Both approaches have trade-offs in cost, depth, and customization.

All-in-one tools are easier to start with; modular toolchains offer more customization but require more setup.

How should I compare pricing and licensing for coding AI tools?

Look beyond sticker price. Consider data handling, support, onboarding time, and how licenses scale with your team. Favor transparent terms and predictable renewal costs to avoid surprises.

Focus on total cost of ownership and licensing terms, not just the upfront price.

Which languages are typically supported by AI coding tools?

Most tools support Python and JavaScript well, with broader language coverage in larger platforms. If your stack includes Java, Go, or Rust, test how well the tool handles dependencies, typing, and framework conventions.

Test your main languages and check for framework-specific guidelines.

Can AI coding tools replace human developers?

No. AI coding tools augment developers by speeding up repetitive tasks and providing suggestions. Human oversight remains essential for design decisions, security, and complex problem solving.

AI tools assist, but humans remain essential for critical decisions.

What are best practices for integrating AI coding tools into CI/CD?

Incorporate AI-generated code into your review process, run automated tests, and enforce guardrails for sensitive data. Use version control to track AI-assisted changes and define clear approval workflows.

Integrate AI outputs into your review and testing pipelines with governance.
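One lightweight way to track AI-assisted changes in version control is a commit-message trailer that a CI step can check. The sketch below assumes a team convention of an `AI-Assisted: yes` trailer (a hypothetical name) paired with a `Reviewed-by:` trailer as the approval signal. The parser is a simplification that only reads `Key: value` lines in the last paragraph of the message; it does not reproduce Git's own trailer parsing.

```python
def trailers(message: str) -> dict[str, list[str]]:
    """Collect 'Key: value' lines from the last paragraph of a commit message."""
    paragraphs = [p for p in message.strip().split("\n\n") if p.strip()]
    found: dict[str, list[str]] = {}
    if not paragraphs:
        return found
    for line in paragraphs[-1].splitlines():
        key, sep, value = line.partition(":")
        key = key.strip()
        # Trailer keys are single tokens such as "Reviewed-by".
        if sep and key and " " not in key:
            found.setdefault(key.lower(), []).append(value.strip())
    return found


def needs_review(message: str) -> bool:
    """True when a commit is marked AI-assisted but has no Reviewed-by trailer."""
    parsed = trailers(message)
    ai_assisted = any(
        value.lower() in {"yes", "true"} for value in parsed.get("ai-assisted", [])
    )
    return ai_assisted and not parsed.get("reviewed-by")


unreviewed = "Add CSV parser\n\nGenerated the first draft with an assistant.\n\nAI-Assisted: yes\n"
reviewed = unreviewed + "Reviewed-by: A. Developer <dev@example.com>\n"

print(needs_review(unreviewed))
print(needs_review(reviewed))
```

A CI job could run a check like this over the commits in a pull request and fail the build when any of them still needs review.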

What security considerations should I review when using AI coding tools?

Review data handling, model updates, and access controls. Ensure generated code does not leak secrets and that licensing terms protect proprietary logic. Establish retention policies for prompts and outputs.

Check data policies, access control, and code ownership safeguards.

Key Takeaways

- Prioritize alignment with your workflow over feature count

- Evaluate both reliability and governance during pilots

- Balance language needs with integration depth

- Assess total cost of ownership, not just price

- Document pilots to inform scale-up