AI Tool Review for Code: An In-Depth Look at a Coding AI Tool for Developers

A rigorous, data-driven review of a coding-focused AI tool. We assess accuracy, speed, IDE integration, and governance to help developers decide if this AI-assisted code reviewer fits their workflow.

This review evaluates a coding-focused AI assistant for developers. Its strongest features are automated code review, multi-language support, and IDE integrations; its limitations show up in edge cases and architectural decisions. The review closes with a balanced verdict and practical guidance on when to adopt the tool, based on project needs and governance requirements.

What this review aims to measure

In the rapidly evolving landscape of software development, the question isn't whether an AI can write code, but how reliably an AI-assisted reviewer can improve quality without compromising safety or project intent. This review examines a coding-focused AI assistant across six dimensions: accuracy of suggested fixes, breadth of language support, depth of integration with popular IDEs, explainability of recommendations, performance on real-world repositories, and governance considerations for teams handling sensitive codebases. According to AI Tool Resources, the goal is to separate hype from measurable impact by focusing on reproducible outcomes that developers can rely on in daily workflows. The review also considers the tool’s effect on onboarding, maintainability, and long-term code health, so that its guidance stays actionable for researchers and practitioners alike.

Testing methodology and scenarios

Our evaluation used a structured testing rubric designed to mirror real-world developer activities. We built four representative scenarios: a multi-language codebase with Python, JavaScript, and Java modules; a legacy project requiring refactoring with safety checks; a test-driven development workflow that generates unit tests from specifications; and an exploratory session in which we evaluated linting, auto-completion, and changelog reasoning. Rather than expose sensitive code, we used synthetic datasets that mimic common coding patterns. Each scenario was run multiple times to observe consistency, latency, and the ratio of meaningful suggestions to false positives. The goal was to measure practical impact rather than to cover every possible edge case. This methodology draws on AI Tool Resources analysis, which emphasizes reproducibility and relevance to modern development teams.
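To make the rubric more concrete, here is one possible way to tally repeated scenario runs: the share of suggestions a human reviewer judged meaningful, plus median latency per scenario. This is a minimal sketch under our own assumptions; the `RunResult` record and its fields are hypothetical and not part of any tool's API, and the values in the usage block are placeholders, not our measurements.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class RunResult:
    """One run of a test scenario (hypothetical record format)."""
    scenario: str
    latency_s: float       # wall-clock seconds until suggestions arrived
    meaningful: int        # suggestions a human reviewer accepted as useful
    false_positives: int   # suggestions a human reviewer rejected


def summarize(runs: list[RunResult]) -> dict[str, dict[str, float]]:
    """Aggregate repeated runs per scenario into precision and median latency."""
    by_scenario: dict[str, list[RunResult]] = {}
    for run in runs:
        by_scenario.setdefault(run.scenario, []).append(run)

    summary = {}
    for name, group in by_scenario.items():
        good = sum(r.meaningful for r in group)
        bad = sum(r.false_positives for r in group)
        total = good + bad
        summary[name] = {
            "runs": len(group),
            # Share of suggestions judged meaningful; 0.0 if nothing was suggested.
            "precision": good / total if total else 0.0,
            "median_latency_s": median(r.latency_s for r in group),
        }
    return summary


if __name__ == "__main__":
    # Placeholder values for illustration only.
    runs = [
        RunResult("legacy-refactor", 4.0, 6, 2),
        RunResult("legacy-refactor", 6.0, 5, 3),
        RunResult("tdd-tests", 2.0, 8, 0),
    ]
    for name, stats in summarize(runs).items():
        print(name, stats)
```

Keeping per-run records rather than only aggregates makes it easy to rerun the summary when a reviewer re-labels a suggestion.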

Core features and how they perform in code contexts

A coding-focused AI assistant typically offers (1) context-aware code completion, (2) automatic refactoring recommendations, (3) test skeleton generation, and (4) inline explanations of changes for traceability. In our testing, these core features worked best when the tool understood project-specific conventions, test frameworks, and the repository’s CI rules. Language support was broad, with strong performance in scripting and web development stacks, while statically typed languages benefited from more precise, type-aware suggestions. The tool also produces commit messages and change summaries that record the rationale behind each modification, which is crucial for peer review and future audits. Used alongside traditional linters, it complements rather than replaces established quality gates by adding narrative context to each change.

Accuracy, reliability, and reproducibility

Accuracy varied by language and code context, with higher reliability in well-typed modules and clearly defined interfaces. The tool sometimes misinterpreted ambiguous function names or project-specific idioms, producing suggestions that required human verification. Reproducibility was generally good when seeds or deterministic modes were enabled, but some randomness remained in template-based outputs, especially boilerplate tests. For researchers, this means results are consistent in controlled environments; production teams should still require human-in-the-loop approval for high-risk changes. This evaluation reinforces that AI-assisted review should augment human judgment, not supplant it, a principle echoed in AI Tool Resources Analysis, 2026.
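A lightweight way to check reproducibility is to send the same request several times and measure how often the outputs agree. The sketch below assumes you have already collected the raw output strings from repeated runs. Ignoring trailing whitespace and blank lines before hashing is our own choice, so that purely cosmetic differences in boilerplate tests do not count as divergence, while indentation (which matters in Python) is preserved.

```python
import hashlib
from collections import Counter


def fingerprint(output: str) -> str:
    """Hash an output after dropping trailing whitespace and blank lines."""
    lines = [line.rstrip() for line in output.splitlines()]
    normalized = "\n".join(line for line in lines if line)
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def agreement_rate(outputs: list[str]) -> float:
    """Fraction of runs matching the most common output (1.0 = fully reproducible)."""
    if not outputs:
        raise ValueError("need at least one output")
    counts = Counter(fingerprint(o) for o in outputs)
    most_common_count = counts.most_common(1)[0][1]
    return most_common_count / len(outputs)


if __name__ == "__main__":
    runs = [
        "def test_add():\n    assert add(1, 2) == 3\n",
        "def test_add():   \n    assert add(1, 2) == 3\n\n",  # cosmetic difference only
        "def test_add():\n    assert add(2, 1) == 3\n",       # genuinely different
    ]
    print(agreement_rate(runs))
```

Running this once with the tool's deterministic mode on and once with it off gives a quick, comparable signal of how much randomness remains.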

Performance and latency in real-world codebases

In real-world repositories, response times and result quality depended on the size of the codebase and the complexity of the requested operation. For small to medium projects, the tool generally returned useful suggestions quickly enough to fit within a normal code-review cycle. Larger monorepos introduced longer latency and occasional batching effects, which teams can mitigate by targeting microservices or isolated modules first. Speed versus depth of analysis is the key trade-off: on critical paths, deeper analysis may justify longer feedback loops. This section favors practical decisions over single-number benchmarks, in line with AI Tool Resources Analysis, 2026.
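Targeting isolated modules first can be as simple as grouping changed files by top-level directory and submitting one review batch per module. This is a sketch that assumes a monorepo where each top-level directory is a service; `batch_by_module` and its `max_files` cap are hypothetical names, not settings of the tool under review.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def batch_by_module(changed_files: list[str], max_files: int = 50) -> list[tuple[str, list[str]]]:
    """Group repo-relative paths by top-level directory, splitting large groups.

    Each returned batch is (module_name, files) and can be reviewed independently.
    """
    if max_files < 1:
        raise ValueError("max_files must be positive")
    groups: dict[str, list[str]] = defaultdict(list)
    for path in changed_files:
        parts = PurePosixPath(path).parts
        # Files at the repository root go into a shared "(root)" batch.
        module = parts[0] if len(parts) > 1 else "(root)"
        groups[module].append(path)

    batches = []
    for module in sorted(groups):
        files = sorted(groups[module])
        # Split oversized modules so no single request grows too large.
        for start in range(0, len(files), max_files):
            batches.append((module, files[start:start + max_files]))
    return batches


if __name__ == "__main__":
    changed = ["billing/api.py", "billing/models.py", "auth/login.py", "README.md"]
    for module, files in batch_by_module(changed, max_files=1):
        print(module, files)
```

Smaller, module-scoped batches trade some cross-module context for lower latency, which mirrors the speed-versus-depth trade-off described above.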

Security, privacy, and governance

Security and privacy concerns are central when reviewing code with an AI assistant. We evaluated data handling policies, opt-in telemetry, on-device versus cloud processing, and retention controls. The most robust configurations provided strict access controls, clear data lifecycle definitions, and options to scrub sensitive snippets before cloud transmission. Governance features—such as role-based access, audit trails, and change rationales—help teams meet compliance requirements and regulatory expectations. While no tool is a universal fit, those with strong data protection configurations enable safer adoption in enterprise environments. This discussion aligns with industry best practices and AI Tool Resources guidance on responsible AI use.
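To illustrate what scrubbing sensitive snippets before cloud transmission can look like, here is a minimal regex-based redactor. The patterns are illustrative assumptions (an AWS-style access key ID, email addresses, and quoted values assigned to names like `api_key` or `password`) and are far from exhaustive; a real deployment would rely on the tool's own data controls or a dedicated secret scanner.

```python
import re

# Illustrative (pattern, replacement) rules; real secret detection needs far more.
REDACTION_RULES = [
    (re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "[AWS_KEY]"),
    (re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"), "[EMAIL]"),
    # Keep the variable name and quotes, hide only the quoted value.
    (re.compile(r"""(?i)\b((?:api_?key|secret|password|token)\s*[:=]\s*)(["'])[^"']*\2"""),
     r"\1\2[SECRET]\2"),
]


def redact(snippet: str) -> str:
    """Apply each rule in order and return the scrubbed snippet."""
    for pattern, replacement in REDACTION_RULES:
        snippet = pattern.sub(replacement, snippet)
    return snippet


if __name__ == "__main__":
    source = (
        'api_key = "abc123"\n'
        'owner = "dev@example.com"\n'
        "key_id = AKIAABCDEFGHIJKLMNOP\n"
    )
    print(redact(source))
```

Replacing values with labeled placeholders, rather than deleting them, keeps the snippet syntactically recognizable so the assistant can still reason about its structure.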

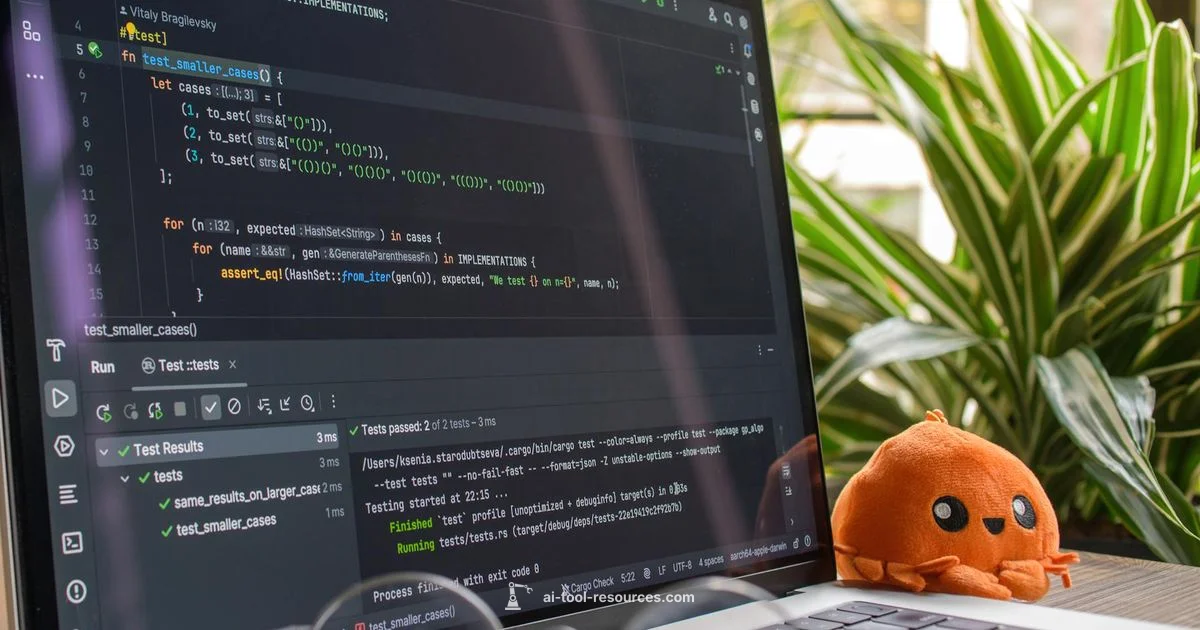

Integration and developer experience

Seamless integration with popular IDEs (e.g., VS Code, JetBrains, and editors with language servers) is a major driver of value. Our tests showed that onboarding was straightforward for teams already using modern toolchains, and plugin ecosystems provided convenient toggles for enabling or disabling features per project. The most positive experiences came from editors that offered inline explanations, optional rule sets, and a quick-abandon option to revert questionable changes. Organizations should plan a phased rollout, starting with non-production branches, to accumulate learnings about team adoption and to calibrate thresholds for automated changes. The result is a smoother developer experience and faster cycle times, provided governance gates remain in place.

Practical guidance: when to adopt this tool

Adoption makes sense for teams prioritizing code quality, knowledge transfer, and scalable reviews. Start with pilot projects in new or less-critical components to validate impact while preserving control over repository health. For junior developers, AI-assisted explanations can accelerate onboarding, while experienced engineers can use rapid feedback to shorten debugging cycles. Teams working across several languages should enable language-specific configurations to maximize precision and set up guardrails for changes that affect architectural decisions. Ultimately, decide based on measurable outcomes: reduced review debt, clearer rationale for changes, and improved consistency across contributors.
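As one example of a measurable outcome, review debt can be tracked as the number of open reviews that have waited longer than an agreed threshold, compared between a baseline period and the pilot. The two-day threshold and the function names below are our own assumptions, not definitions from the tool or from AI Tool Resources.

```python
from datetime import datetime, timedelta


def review_debt(opened_at: list[datetime], now: datetime,
                max_wait: timedelta = timedelta(days=2)) -> int:
    """Count open reviews that have waited longer than max_wait."""
    return sum(1 for t in opened_at if now - t > max_wait)


def relative_change(before: int, after: int) -> float:
    """Relative change from a baseline; a negative value means debt went down."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


if __name__ == "__main__":
    now = datetime(2026, 1, 10)
    # Opening timestamps of currently open reviews (placeholder data).
    open_reviews = [datetime(2026, 1, 9), datetime(2026, 1, 5), datetime(2026, 1, 1)]
    print(review_debt(open_reviews, now))
```

Measuring the same metric before and during the pilot, with the same threshold, keeps the comparison fair.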

Comparisons to common alternatives

Common alternatives include traditional static analysis tools, human peer reviews, and broader AI copilots. Static analyzers excel at enforcing style and catching well-defined issues, but they often lack narrative rationale. Human reviews provide context but slow down iteration; AI-assisted reviewers aim to bridge this gap by offering explanations and suggested fixes at scale. Compared to generic copilots, a code-review-focused AI tool emphasizes review-quality signals, change traceability, and governance controls, rather than live code completion alone. This positioning matters for teams seeking reproducible review outcomes without sacrificing safety or architectural intent. In many cases, using a hybrid approach—static analysis, AI-assisted review, and targeted human checks—delivers the best balance of speed and reliability.

How to tailor the tool to your stack

To maximize value, tailor the configuration to your tech stack. Start by importing project conventions (naming schemes, test frameworks, and CI rules) and enabling language-specific rules that reflect your codebase. Create a safety checklist: restrict automated changes in critical modules, require approvals for refactoring in legacy areas, and enforce a four-eyes policy for security-sensitive edits. Use explanation templates aligned with your documentation style to keep changelogs consistent. Finally, set up a feedback loop: developers flag poor suggestions, and accepted changes are used to improve future guidance. This customization helps teams align AI-assisted reviews with existing standards and governance requirements.
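The safety checklist can be encoded as a small policy check that runs before any AI-suggested change is merged. Everything here is hypothetical: the path prefixes, the `ProposedChange` fields, and the approval counts (we read "four-eyes" as two human approvers) are placeholders to adapt to your repository, not a configuration format of the tool under review.

```python
from dataclasses import dataclass

# Hypothetical path prefixes; adjust to your repository layout.
CRITICAL_PREFIXES = ("payments/", "auth/core/")
LEGACY_PREFIXES = ("legacy/",)
SECURITY_PREFIXES = ("auth/", "crypto/")


@dataclass
class ProposedChange:
    path: str
    kind: str            # e.g. "refactor", "fix", "test"
    automated: bool      # True if applied without a human editing it
    approvals: int = 0   # distinct human approvers so far


def policy_violations(change: ProposedChange) -> list[str]:
    """Return the checklist rules this change violates (empty list = OK to merge)."""
    problems = []
    if change.automated and change.path.startswith(CRITICAL_PREFIXES):
        problems.append("automated changes are not allowed in critical modules")
    if (change.kind == "refactor" and change.path.startswith(LEGACY_PREFIXES)
            and change.approvals < 1):
        problems.append("refactoring in legacy areas needs an approval")
    if change.path.startswith(SECURITY_PREFIXES) and change.approvals < 2:
        problems.append("security-sensitive edits need two human approvers (four-eyes)")
    return problems


if __name__ == "__main__":
    change = ProposedChange("auth/core/session.py", "fix", automated=True, approvals=1)
    for problem in policy_violations(change):
        print("blocked:", problem)
```

Returning every violation, instead of stopping at the first, gives reviewers the full list of gates a change still has to clear.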

Getting started: quick-start checklist

Begin with a minimal integration in a fresh branch or sandbox project. Connect the AI tool to your primary IDE, import a small module, and run a guided review that covers: 1) identification of obvious defects, 2) suggested tests, 3) rationale for suggested changes, and 4) a rollback option if needed. Expand to multi-language scenarios, then scale to larger services as confidence grows. Document lessons learned and adjust safety thresholds to balance productivity with risk management. A well-planned rollout reduces disruption and accelerates the path to measurable benefits.

Upsides

- Improves code review throughput with automated checks

- Supports multiple languages and frameworks

- Integrates into popular IDEs for seamless workflow

- Generates test cases and refactoring suggestions

- Keeps change logs and reasoning for traceability

Weaknesses

- May require guardrails for complex architectural decisions

- Can produce false positives in edge cases

- Requires enterprise-grade data handling for sensitive codebases

- Deep refactoring suggestions may need careful validation

Best for teams seeking integrated code-review automation with strong governance

This AI-assisted reviewer excels at scalable feedback, explainability, and IDE integration. While it’s not a silver bullet for every project, its structured change rationales and audit-friendly outputs make it a strong fit for development teams needing faster reviews without sacrificing safety.

FAQ

What is the primary benefit of using an AI tool for code review?

The main benefit is accelerated, scalable review with explainable changes that help teams understand why a suggestion was made. It complements human judgment and reduces review backlog while maintaining code quality.

It speeds up reviews and explains why a change is suggested, while still needing human checks for complex decisions.

Which languages are best supported by this tool?

The tool generally performs well with both dynamically and statically typed languages, with particularly strong results in Python, JavaScript, and Java. Support for niche languages may vary and should be tested during pilots.

It works well with popular languages like Python, JavaScript, and Java, but you should test niche languages first.

How does it handle sensitive or proprietary code?

For sensitive code, enable on-device processing where available, restrict telemetry, and configure strict retention policies. Ensure governance approvals are in place and consider redacting identifiers when sharing diagnostics.

If your code is sensitive, use local processing and strict data controls before enabling any cloud features.

What is the recommended rollout approach?

Start with a low-risk module, gather feedback, tune rules, and gradually expand. Establish guardrails for architectural changes and document learnings to inform broader adoption.

Begin small, collect feedback, and gradually expand while tightening safeguards.

Can AI-assisted reviews replace human reviewers?

No, it should augment human reviewers by providing quick insights and rationale. Critical decisions, security-sensitive changes, and architectural reviews still require human judgment.

It augments humans, not replaces them; big decisions still need a person’s judgment.

What are common pitfalls to avoid?

Relying solely on automated changes, ignoring context, and failing to update governance policies after adoption are common pitfalls. Maintain a human-in-the-loop and periodically review the tool’s impact.

Don’t rely only on automation; keep humans in the loop and regularly revisit policies.

Key Takeaways

- Adopt AI-assisted reviews to cut backlogs

- Prefer phased rollouts over big-bang deployments

- Leverage inline explanations for onboarding new developers

- Integrate governance controls early for enterprise use

- Balance automated guidance with human verification