Compare AI Tools for Coding: An Objective Guide

This analytical guide compares AI tools for coding, detailing evaluation criteria, features, privacy, and pricing to help developers pick the best fit for their workflow.

To pick AI coding tools, you should compare them along core dimensions like code quality, language coverage, privacy, and ecosystem integrations. For developers, teams, and researchers, the right choice balances accuracy with governance and cost. This quick guide highlights the key decision factors and sets you up for the detailed comparison that follows.

Why comparing AI tools for coding matters

In modern software development, teams increasingly rely on AI tools to assist with code generation, debugging, documentation, and learning. The question is not whether to adopt such tools, but how to compare AI tools for coding so that you get coverage across languages, maintain governance, protect sensitive data, and avoid vendor lock-in. A thoughtful comparison helps you map your workflow to features like accuracy, latency, and safety, while also considering organizational needs such as collaboration, compliance, and cost. For researchers evaluating tool capabilities, a rigorous comparison also clarifies which models and data sources are used behind the scenes. Finally, for students exploring AI-assisted coding, a structured approach reveals which tools provide transparent feedback, learning resources, and reproducible results. Throughout this guide, you’ll see practical criteria and benchmarking steps you can apply to your own environment.

Assess each tool against concrete metrics rather than relying on hype. This section sets the stage for a rigorous, repeatable evaluation process tailored to developers and researchers alike.


Key differentiators in AI coding tools

AI coding tools vary across several core dimensions that directly impact real-world outcomes. First, model architecture and training data shape accuracy and bias; some tools employ advanced transformer models with retrieval-augmented generation to improve factual correctness, while others rely on template-based completions. Second, data handling and privacy options determine whether code and prompts stay on-device or flow through the cloud, affecting security, compliance, and latency. Third, language support and domain coverage matter if you work with multi-language stacks or niche frameworks. Fourth, ecosystem and integrations—CI/CD pipelines, editors, IDE plugins, and project management tools—drive adoption and team velocity. Fifth, customization options, such as fine-tuning or personal risk controls, influence how well a tool adapts to your codebase and governance standards. Finally, cost models and licensing affect scale and total cost of ownership, especially for large teams and educational programs.

Evaluation criteria: what to measure

When you set out to compare AI tools for coding, align on a consistent rubric. Start with accuracy and usefulness: how often do code completions compile, run, and match your intended logic? Assess latency (response time) under typical workloads, and evaluate stability across languages. Consider language coverage: are you working in JavaScript, Python, Java, Rust, or domain-specific languages? Examine safety features: how well does the tool avoid sensitive data leakage, unsafe code patterns, or insecure APIs? Look at integration depth: does it plug smoothly into your editor, version control, issue tracker, and build systems? Pricing and licensing must be clear, with transparent per-seat or usage-based costs. Check data provenance and governance: who owns the prompts, and can you audit model behavior? Finally, assess learning value: does the tool provide explanations, documentation, and reproducible prompts that help developers grow over time?
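One way to make such a rubric repeatable is to rate each tool per criterion and combine the ratings with fixed weights. The sketch below is only an illustration: the criterion names mirror the paragraph above, but the weights, the 0-5 rating scale, and the function name `weighted_score` are assumptions you would replace with your own.

```python
# Hypothetical weights for the rubric criteria; they sum to 1.0.
# Adjust them to reflect your own priorities.
WEIGHTS = {
    "accuracy": 0.30,
    "latency": 0.10,
    "language_coverage": 0.15,
    "safety": 0.15,
    "integrations": 0.10,
    "pricing_clarity": 0.10,
    "governance": 0.05,
    "learning_value": 0.05,
}


def weighted_score(ratings: dict, weights: dict = WEIGHTS) -> float:
    """Combine per-criterion ratings (assumed 0-5 scale) into one score."""
    missing = set(weights) - set(ratings)
    if missing:
        # Refuse to score a tool that was not rated on every criterion.
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight


if __name__ == "__main__":
    # Made-up ratings for an imaginary tool, for illustration only.
    example = dict.fromkeys(WEIGHTS, 3.0)
    example["accuracy"] = 4.5
    print(round(weighted_score(example), 2))
```

Fixing the weights before testing any tool keeps the comparison from drifting toward whichever tool you tried last.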

Feature-by-feature: code generation vs debugging vs learning support

AI coding tools typically offer several feature clusters. Code generation focuses on boilerplate, refactoring, and function scaffolding, often excelling in common patterns and boilerplate-heavy tasks. Debugging and error analysis features aim to surface root causes, provide patch suggestions, and explain failures in plain language. Learning support involves explanations, examples, and interactive tutorials tied to your codebase. Some tools specialize in linting and style enforcement, while others emphasize test generation and coverage analysis. When comparing, map your needs to these clusters: if your team writes lots of boilerplate, prioritize generation quality; if reliability and correctness are critical, emphasize robust debugging and validation; for education or onboarding, prioritize explanations and learning paths.

Practical benchmarking: how to run your own tests

To benchmark AI coding tools in a reproducible way, start with a representative benchmark suite drawn from your codebase or a standard open-source corpus. Define metrics such as compile success rate, time saved per task, bug detection rate, and adherence to project conventions. Run parallel tests across multiple languages and frameworks to assess cross-language consistency. Capture qualitative feedback from developers on clarity, maintainability of generated code, and usefulness of explanations. Establish a regular review cadence—quarterly or after major tech-stack changes—to re-evaluate tool performance. Document decisions and create a living playbook that explains why certain tools are adopted or retired, including privacy and governance considerations.
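As a rough sketch of two of the metrics above, the snippet below computes a compile success rate and a median latency for a batch of generated Python snippets. It assumes you have already collected each tool's output and response time yourself; the record layout (`code`, `latency_s`) and the function names are hypothetical. Note that Python's built-in `compile()` only checks syntax, so this is a lower bar than "runs and passes tests".

```python
import statistics


def compiles(source: str) -> bool:
    """Return True if the snippet is syntactically valid Python."""
    try:
        compile(source, "<generated>", "exec")
        return True
    except (SyntaxError, ValueError):
        return False


def summarize(results: list) -> dict:
    """Summarize benchmark records.

    Each record is assumed to be a dict with 'code' (str) and
    'latency_s' (float, seconds the tool took to respond).
    """
    if not results:
        raise ValueError("no results to summarize")
    ok = sum(1 for r in results if compiles(r["code"]))
    return {
        "tasks": len(results),
        "compile_success_rate": ok / len(results),
        "median_latency_s": statistics.median([r["latency_s"] for r in results]),
    }


if __name__ == "__main__":
    # Made-up records for illustration only.
    sample = [
        {"code": "def add(a, b):\n    return a + b\n", "latency_s": 0.8},
        {"code": "def broken(:\n", "latency_s": 1.2},
    ]
    print(summarize(sample))
```

For other languages you would swap `compiles` for a call to that language's compiler or type checker, keeping the summary step the same.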

Real-world use-cases: startups, researchers, students

Startups often seek rapid iteration: AI coding tools can accelerate MVP development, enabling teams to ship features faster while keeping code quality high through automated checks. Researchers might prioritize tool transparency, provenance, and research-friendly features such as reproducible prompts and logs for experiments. Students benefit from guided learning paths, accessible explanations, and examples that map to coursework. In each case, success rests on aligning tool capabilities with present workflows, ensuring that AI assistance augments rather than distracts from core skills, and maintaining guardrails to protect sensitive data and intellectual property.

Adoption considerations: privacy, licensing, governance

Adoption hinges on governance and risk management. Scrutinize data handling policies: where code, prompts, and models are stored, who has access, and whether prompts are logged for training. Review licensing terms for enterprise use, academic settings, and derivative works. Establish usage policies that balance productivity with risk controls, such as limiting access to sensitive repositories or enabling code review workflows that require human oversight. Consider vendor stability and long-term roadmaps to prevent sudden deprecation of critical tooling. Finally, implement auditing and telemetry that respects developer privacy while providing actionable insights for optimization.
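A usage policy that limits access to sensitive repositories can be expressed as a small deny-list check. This is a minimal sketch under stated assumptions: the patterns, the `org/repo` naming scheme, and the function `assistant_allowed` are all hypothetical, and a real deployment would enforce the rule in the tool's or platform's own access controls rather than in a helper script.

```python
from fnmatch import fnmatchcase

# Hypothetical glob patterns for repositories the assistant must not read.
BLOCKED_REPOS = ["finance/*", "*/secrets-*", "*/customer-data"]


def assistant_allowed(repo: str, blocked: list = BLOCKED_REPOS) -> bool:
    """Return False if the repository name matches any blocked pattern."""
    return not any(fnmatchcase(repo, pattern) for pattern in blocked)


if __name__ == "__main__":
    for name in ["platform/web-app", "finance/ledger", "platform/secrets-vault"]:
        status = "allowed" if assistant_allowed(name) else "blocked"
        print(f"{name}: {status}")
```

Keeping the deny-list in version control gives you an auditable record of what was excluded and when.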

Making the decision: a structured checklist

Use a structured checklist to finalize tool selection. Include criteria such as (1) language support, (2) reliability and accuracy, (3) safety features, (4) privacy and on-premises options, (5) integration depth, (6) pricing clarity, (7) governance capabilities, (8) team feedback and onboarding impact, (9) scale for future growth, and (10) vendor support and roadmap alignment. Run a pilot with a small cross-functional group, collect both quantitative metrics and qualitative feedback, and document the decision rationale. This disciplined approach reduces bias and helps you justify the final choice to stakeholders.
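The ten criteria can also be recorded as a simple pass/fail checklist, with a few items treated as non-negotiable. The sketch below assumes exactly that; the identifier names, the choice of required items, and the `review` function are illustrative rather than prescribed by the checklist itself.

```python
# The ten checklist criteria, as short identifiers (names are illustrative).
CHECKLIST = [
    "language_support",
    "reliability_accuracy",
    "safety_features",
    "privacy_on_prem",
    "integration_depth",
    "pricing_clarity",
    "governance",
    "team_feedback",
    "scalability",
    "vendor_support",
]

# Hypothetical: criteria this team treats as must-haves.
REQUIRED = {"safety_features", "privacy_on_prem"}


def review(tool: str, passed: set) -> dict:
    """Summarize a pilot review: items passed and whether must-haves are met."""
    unknown = passed - set(CHECKLIST)
    if unknown:
        raise ValueError(f"unknown checklist items: {sorted(unknown)}")
    failed_required = sorted(REQUIRED - passed)
    return {
        "tool": tool,
        "passed": len(passed),
        "total": len(CHECKLIST),
        "failed_required": failed_required,
        "eligible": not failed_required,
    }


if __name__ == "__main__":
    # Made-up pilot outcome for an imaginary tool.
    print(review("Option A", {"language_support", "safety_features", "privacy_on_prem"}))
```

Storing each review dict alongside the pilot notes gives you the documented decision rationale in a form stakeholders can scan quickly.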

Open-source vs proprietary AI tools: trade-offs

Open-source options offer transparency, customizable models, and community-driven improvements, which can align with research or education goals. Proprietary tools often provide polished interfaces, stronger enterprise support, and robust safety controls out of the box. The trade-off centers on control versus convenience: open-source demands more internal expertise for deployment and governance, while proprietary solutions can relieve you of model maintenance but may constrain customization and data handling. When comparing, assess licensing terms, governance features, and the ability to audit or modify behavior to fit your policies.

Future-proofing your selection: staying current

AI tooling evolves rapidly, with frequent updates to models, APIs, and integration ecosystems. Build a future-proof strategy by prioritizing tools that offer backward-compatible versioning, clear deprecation notices, and a roadmap aligned with your tech stack. Maintain a rotating evaluation schedule and keep a record of learnings from each tooling iteration. Invest in developer training to adapt to new capabilities, and design your architecture to accommodate changes in prompts, languages, and platform practices without destabilizing existing projects.

Comparison

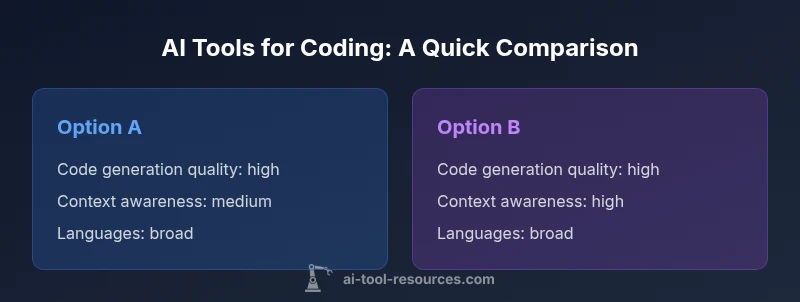

| Feature | Option A | Option B |
|---|---|---|
| Code generation quality | high | high |
| Context awareness | medium | high |
| Language coverage | broad | broad |
| Integrations | GitHub, VS Code, JetBrains | GitHub, VS Code, GitLab |
| Privacy model | on-device & cloud | cloud-only |
| Pricing model | per-seat subscription | usage-based |
| Data provenance | prompts stored locally or opt-out | prompts may be logged for model tuning |
| Best for | teams needing broad coverage | coding-heavy environments |

Upsides

- Potentially dramatic boosts in coding speed

- Improved code quality with templates and linting

- Supports rapid prototyping across languages

- Flexible pricing and deployment options

- Helpful for learning and onboarding

Weaknesses

- Quality varies across languages and domains

- Over-reliance may reduce deep understanding

- Data privacy concerns depending on vendor

- Cost can scale with team size

AI coding tools offer speed and precision, but choose based on governance and team needs

Balance speed with safety and privacy. Select tools that align with your stack, governance policies, and long-term cost considerations.

FAQ

What should I include in a tool evaluation checklist?

Include accuracy, language coverage, latency, safety, privacy, integrations, licensing, and cost. Add governance and onboarding impact for teams. Use real-world tasks from your projects to test.

Build a checklist covering accuracy, safety, and integrations, then test with real tasks.

Do AI coding tools replace developers or simply augment them?

They augment developers by handling repetitive tasks, suggesting improvements, and accelerating learning. Human review remains essential for correctness and design decisions.

They augment, not replace, developers; humans stay essential for judgment.

What about data privacy when using these tools?

Review vendor data policies, whether prompts are stored or used for training, and if on-premise options exist. Prefer tools offering opt-out and strict access controls.

Check policies, on-prem options, and opt-out choices.

How should pricing influence tool selection?

Consider total cost of ownership, not just monthly fees. Include hidden costs like data transfer, onboarding, and required plan upgrades for team growth.

Look beyond sticker price to total cost.
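To compare per-seat and usage-based pricing on a total-cost basis, a short calculation is often enough. Every number and name below is hypothetical (no real vendor prices are implied); the sketch simply adds recurring fees and one-time costs such as onboarding, and finds the monthly request volume at which the two models cost the same.

```python
def per_seat_cost(seats: int, price_per_seat: float,
                  months: int = 12, one_time: float = 0.0) -> float:
    """Total cost of a per-seat subscription over the given period."""
    return seats * price_per_seat * months + one_time


def usage_cost(requests_per_month: int, price_per_1k: float,
               months: int = 12, one_time: float = 0.0) -> float:
    """Total cost of usage-based pricing, billed per 1,000 requests."""
    return requests_per_month / 1000 * price_per_1k * months + one_time


def break_even_requests(seats: int, price_per_seat: float,
                        price_per_1k: float) -> float:
    """Monthly requests at which both models cost the same (recurring fees only)."""
    return seats * price_per_seat / price_per_1k * 1000


if __name__ == "__main__":
    # Hypothetical inputs: 10 seats at 20 per month vs. 2 per 1,000 requests,
    # each with a one-time onboarding cost of 500.
    print(per_seat_cost(10, 20.0, one_time=500.0))
    print(usage_cost(100_000, 2.0, one_time=500.0))
    print(break_even_requests(10, 20.0, 2.0))
```

Below the break-even volume, usage-based pricing is cheaper under these assumptions; above it, the per-seat plan wins.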

Can these tools handle multiple languages and frameworks?

The strongest tools cover popular languages and commonly used frameworks; for niche stacks, verify explicit support or plans for expansion.

Check language and framework coverage before deciding.

What is the best practice for adopting AI coding tools in teams?

Pilot with cross-functional teams, establish guardrails, document usage policies, and continuously collect feedback to refine tooling choices.

Pilot, guardrails, and ongoing feedback are key.

Key Takeaways

- Define your evaluation rubric before testing tools

- Prioritize language support and integration depth

- Benchmark with real tasks from your codebase

- Assess data handling and governance upfront

- Pilot with cross-functional teams before full rollout