AI Tools Comparison: A Comprehensive Side-by-Side Guide

An analytical guide to compare AI tools across deployment models, features, and cost, helping developers, researchers, and students pick the right tool.

An AI tools comparison helps researchers, developers, and students quickly assess tooling by deployment model, features, and cost. This guide highlights the main differentiators and offers a practical framework for evaluating hosted platforms, open‑source toolkits, and self‑hosted infrastructures. By the end, you’ll know which category best fits your project and how to structure a fair, apples‑to‑apples assessment.

Why an Analytical AI Tools Comparison Matters in 2026

In a field as dynamic as artificial intelligence, an AI tools comparison is essential for teams trying to stay ahead. According to AI Tool Resources, a structured AI tools comparison saves time, reduces risk, and aligns tool selection with project goals and governance requirements. As tool ecosystems proliferate, developers, researchers, and students need a repeatable method to separate hype from practical value. A rigorous comparison helps you map capabilities to real use cases, avoid vendor lock‑in, and plan for long‑term maintenance. This is not just about choosing the fastest model; it is about choosing the tool that best matches your data strategy, regulatory constraints, and collaboration needs. Think of the AI tools comparison as a living framework: revisit it whenever your objectives or data environments evolve.

The AI Tool Resources Analysis (2026) emphasizes that the best decisions come from transparent criteria and repeatable processes, not one‑off impressions from demos. In practical terms, this article shows you how to structure your evaluation, which metrics matter for most AI workloads, and how to document trade‑offs so stakeholders can understand the rationale behind each choice. As you read, look for the core patterns that recur across tool categories and deployment models.

Quick Take on the Core Idea

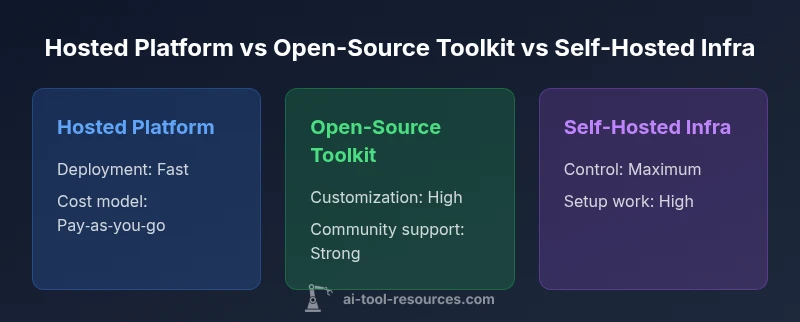

A thorough AI tools comparison helps you identify which deployment model supports your goals (speed, control, or a balance of both) while aligning with cost, security, and integration needs. This article uses a consistent framework to assess hosted platforms, open‑source toolkits, and self‑hosted infrastructures, with practical guidance you can apply in real projects.
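One way to make such a framework repeatable is a weighted scoring matrix. The sketch below is illustrative only: the criteria names, the weights, and the 1 to 5 scores are hypothetical placeholders, not measurements from this guide, and should be replaced with values from your own requirements and pilot results.

```python
# Minimal weighted-scoring sketch for comparing deployment models.
# All weights and scores are hypothetical placeholders, not measured data.

# Relative importance of each criterion; the weights must sum to 1.0.
WEIGHTS = {
    "setup_speed": 0.25,
    "cost": 0.25,
    "data_control": 0.30,
    "customizability": 0.20,
}

# Illustrative 1-5 scores per option (5 is best for your project).
SCORES = {
    "hosted_platform": {
        "setup_speed": 5, "cost": 3, "data_control": 2, "customizability": 3,
    },
    "open_source_toolkit": {
        "setup_speed": 3, "cost": 4, "data_control": 4, "customizability": 4,
    },
    "self_hosted_infra": {
        "setup_speed": 1, "cost": 2, "data_control": 5, "customizability": 5,
    },
}


def weighted_score(scores, weights):
    """Return the weighted sum of one option's scores."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


def rank_options(all_scores, weights):
    """Return (option, score) pairs sorted from highest to lowest score."""
    scored = [(name, weighted_score(s, weights)) for name, s in all_scores.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for option, score in rank_options(SCORES, WEIGHTS):
        print(f"{option}: {score:.2f}")
```

Keeping the weights in one place makes the trade‑offs explicit: a team with strict data residency rules can raise the `data_control` weight and rerun the ranking, and the recorded weights document why one option won.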


Feature Comparison

| Feature | Hosted AI Platform | Open-Source Toolkit | Self-Hosted Infra |
|---|---|---|---|
| Deployment Complexity | Low; fast setup | Medium; requires setup and integration | High; full environment configuration |
| Cost Visibility | Visible ongoing SaaS fees | Licensing plus compute; scalable | CapEx for hardware; ongoing maintenance |
| Data Ownership | Provider manages data | User controls data pipelines | Full control over data storage and governance |
| Customizability | Moderate; provider constraints | High; code access for customization | Maximum; complete control over models and infra |
| Best For | Teams needing speed and scale | Researchers needing flexibility and transparency | Regulatory-heavy environments needing control |

Upsides

- Faster time-to-value with minimal setup

- Clear pricing, SLAs, and vendor support

- Strong security and governance options in managed platforms

- Good for prototyping and pilots

Weaknesses

- Vendor lock-in risk and limited customization

- Data residency and export restrictions may apply

- Higher long-term cost as usage scales

- Potential performance gaps for highly specialized tasks

Hosted platforms tend to win for speed and governance, while open‑source and self‑hosted options excel in customization and control.

Choose a hosted AI platform for rapid deployment and strong support. Opt for open‑source toolkits or self‑hosted infra when you need deep customization, data sovereignty, or bespoke model architectures.

FAQ

What is the goal of an AI tools comparison?

The goal is to evaluate capabilities, costs, deployment models, and governance so you can select the tool that best fits a given use case.

Which deployment models are commonly contrasted in AI tools comparisons?

Most comparisons contrast three core categories: hosted platforms, open‑source toolkits, and self‑hosted infrastructures.

What factors should I consider beyond price?

Beyond price, consider performance, scalability, data ownership, security, integration with your existing systems, and vendor support.

How can I estimate total cost of ownership?

Add up the upfront licensing or setup costs (if any), then the ongoing compute, storage, maintenance, and operating labor costs over the project horizon.
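The arithmetic behind that estimate can be sketched in a few lines. This is a simplified model that assumes flat monthly costs and ignores discounts, price changes, and usage growth; the function name, parameter names, and example figures are hypothetical.

```python
def total_cost_of_ownership(
    upfront,
    monthly_compute,
    monthly_storage,
    monthly_maintenance,
    monthly_labor,
    months,
):
    """Estimate TCO as upfront cost plus flat recurring costs over the horizon.

    Simplifying assumption: every recurring cost stays constant each month.
    """
    if months < 0:
        raise ValueError("months must be non-negative")
    recurring_per_month = (
        monthly_compute + monthly_storage + monthly_maintenance + monthly_labor
    )
    return upfront + recurring_per_month * months


if __name__ == "__main__":
    # Hypothetical figures for a 12-month project; replace with your own quotes.
    estimate = total_cost_of_ownership(
        upfront=1000,
        monthly_compute=200,
        monthly_storage=50,
        monthly_maintenance=100,
        monthly_labor=650,
        months=12,
    )
    print(f"Estimated 12-month TCO: {estimate}")
```

Running the same function with each option's figures gives a like‑for‑like number per deployment model, which is easier to defend to stakeholders than comparing list prices alone.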

Key Takeaways

- Define your use case before evaluating tooling

- Map deployment needs to data governance requirements

- Consider total cost of ownership over the project lifecycle

- Pilot with a representative workload to validate performance

- Document decisions for non‑technical stakeholders