Make an AI: A Practical Step-by-Step Guide

Learn how to make an AI with a practical, step-by-step guide covering planning, data preparation, model selection, training basics, evaluation, and deployment. Ideal for developers, researchers, and students.

By the end of this guide you will know how to make an AI that serves a concrete task, from goal definition to deployment. You’ll need a Python development environment, sample data, and modest compute resources. The guide covers planning, data preparation, model selection, training basics, evaluation, and deployment considerations. It also highlights common pitfalls and how to measure success.

Why make an AI and who benefits

Making an AI means engineering a system that can perceive, decide, or act to solve a defined problem. According to AI Tool Resources, the best outcomes start with a clearly scoped objective and a plan that respects safety, privacy, and ethics. For developers, researchers, and students, building an AI can accelerate discovery, automate repetitive tasks, and enable new experiments. The process combines software engineering, data science, and domain understanding, with governance built in from day one. When you set a concrete use case, such as natural language classification, image tagging, or anomaly detection, you can design a minimal solution, test it, and learn quickly. The result should be reusable, auditable, and explainable enough to be trusted in real-world settings. Throughout, balance ambition with responsibility so that the AI you make serves people and respects their values.

Define objectives and scope

Before you write a line of code, articulate the task the AI will perform and the measurable outcome. Frame success in a way that is observable and testable. Decide what inputs the AI will receive, what outputs it should produce, and what constraints apply (privacy, safety, latency, fairness). Set explicit non-goals as well to prevent scope creep. By mapping the objective to concrete metrics (accuracy, precision, recall, user satisfaction), you create a yardstick for evaluation that guides every subsequent decision. AI Tool Resources recommends starting with a minimal viable objective that delivers tangible benefit and can be extended later, instead of chasing an all-encompassing system from day one.
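As a rough illustration of mapping an objective to metrics, the sketch below computes accuracy, precision, and recall for a binary task using only the standard library. The function name `binary_metrics` and the toy labels are assumptions made for this example; scikit-learn's `sklearn.metrics` module provides equivalent functions.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Return accuracy, precision, and recall for binary labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = len(pairs) - tp - fp - fn
    return {
        "accuracy": (tp + tn) / len(pairs) if pairs else 0.0,
        # Precision: of everything predicted positive, how much was right?
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        # Recall: of everything truly positive, how much was found?
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Hypothetical labels: 1 = "spam", 0 = "not spam".
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = binary_metrics(y_true, y_pred)
```

Writing the target down as, for example, "recall of at least X on held-out data" turns the objective into something a test can check.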

Data collection and preprocessing

Data is the fuel of any AI system. Gather representative, high-quality data that covers the real-world scenarios you expect the AI to handle. Document sources, licenses, and bias considerations. Clean data to remove duplicates and errors; handle missing values, normalize formats, and ensure consistent labeling. Split data into training, validation, and test sets; a common starting point is roughly 80/10/10, adjusted for dataset size. Consider data versioning to reproduce results later. This phase often reveals gaps in scope; use these gaps to refine the objective and improve model selection. AI Tool Resources emphasizes privacy-preserving data handling and deterministic preprocessing steps to maintain reproducibility.
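A minimal sketch of the deduplicate-then-split step, using only the standard library. The helper names `dedupe` and `split_dataset`, the default fractions, and the seed are assumptions chosen for illustration; the fixed seed is what makes the split reproducible.

```python
import random


def dedupe(rows):
    """Drop exact duplicate rows, keeping the first occurrence."""
    seen = set()
    unique = []
    for row in rows:
        key = tuple(row)
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


def split_dataset(rows, train=0.8, val=0.1, seed=42):
    """Shuffle with a fixed seed, then cut into train/validation/test."""
    if not 0 < train + val < 1:
        raise ValueError("train + val must leave room for a test set")
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # seeded, so the order is repeatable
    n_train = int(len(shuffled) * train)
    n_val = int(len(shuffled) * val)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


# Hypothetical rows of (id, label); the last two repeat earlier rows.
rows = [(i, i % 2) for i in range(100)] + [(0, 0), (1, 1)]
clean = dedupe(rows)
train_set, val_set, test_set = split_dataset(clean)
```

Deduplicating before splitting matters: otherwise the same row can land in both the training and test sets and inflate the test score.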

Model selection and architecture choices

Choose an initial model that matches the task and data scale. For simple tasks or small datasets, traditional ML models (logistic regression, decision trees, SVMs) can match or outperform large neural networks while needing less data and compute. For more complex patterns, lightweight neural networks or transformer-based architectures may be appropriate. Evaluate trade-offs between compute, latency, and accuracy. Design modular components so you can swap algorithms without rewriting everything. Document the rationale for the chosen approach to accelerate future maintenance and collaboration.
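One way to keep models swappable is to agree on a small `fit`/`predict` interface and write the evaluation code against it. The sketch below is an assumption-laden illustration: the `Classifier` protocol, the two toy models (`MajorityClass`, `CentroidClassifier`), and the data are all hypothetical and stand in for whatever real models you compare.

```python
from collections import Counter
from typing import Protocol, Sequence


class Classifier(Protocol):
    """Anything with fit/predict can be plugged into evaluate()."""
    def fit(self, X: Sequence[Sequence[float]], y: Sequence[int]) -> None: ...
    def predict(self, X: Sequence[Sequence[float]]) -> list: ...


class MajorityClass:
    """Baseline that always predicts the most frequent training label."""
    def fit(self, X, y):
        self.label_ = Counter(y).most_common(1)[0][0]

    def predict(self, X):
        return [self.label_ for _ in X]


class CentroidClassifier:
    """Predicts the class whose mean feature vector is closest."""
    def fit(self, X, y):
        sums, counts = {}, Counter(y)
        for row, label in zip(X, y):
            acc = sums.setdefault(label, [0.0] * len(row))
            for i, value in enumerate(row):
                acc[i] += value
        self.centroids_ = {
            label: [s / counts[label] for s in vec] for label, vec in sums.items()
        }

    def predict(self, X):
        def dist2(a, b):
            return sum((u - v) ** 2 for u, v in zip(a, b))
        return [
            min(self.centroids_, key=lambda lab: dist2(row, self.centroids_[lab]))
            for row in X
        ]


def evaluate(model: Classifier, X_train, y_train, X_test, y_test) -> float:
    """Fit on the training data and return accuracy on the test data."""
    model.fit(X_train, y_train)
    preds = model.predict(X_test)
    return sum(p == t for p, t in zip(preds, y_test)) / len(y_test)


# Hypothetical two-feature dataset.
X_train = [[0.0, 0.1], [0.2, 0.0], [0.1, 0.2], [1.0, 0.9], [0.9, 1.1]]
y_train = [0, 0, 0, 1, 1]
X_test = [[0.1, 0.1], [1.0, 1.0]]
y_test = [0, 1]
scores = {
    type(m).__name__: evaluate(m, X_train, y_train, X_test, y_test)
    for m in (MajorityClass(), CentroidClassifier())
}
```

scikit-learn estimators follow the same `fit`/`predict` shape, so they should drop into `evaluate` without changes to the surrounding code.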

Training, evaluation, and debugging

Train a baseline model using the training set and monitor key metrics on the validation set. Keep a clean separation to prevent data leakage. Use learning curves to diagnose underfitting or overfitting, and adjust hyperparameters accordingly. Implement simple debugging aids, such as logging inputs, outputs, and intermediate representations; this makes it easier to diagnose failures when the model behaves unexpectedly. Regularly save checkpoints and maintain a versioned experiment log so you can reproduce results later.
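A versioned experiment log can be as simple as one JSON record per run appended to a file. The sketch below assumes a JSON Lines layout and hypothetical helper names (`log_run`, `load_runs`, `best_run`); the hyperparameters and scores are placeholder values, not results.

```python
import hashlib
import json
import tempfile
import time
from pathlib import Path


def log_run(log_path, params, metrics):
    """Append one experiment record as a JSON line and return it."""
    # Hash the sorted parameters so identical configurations share an id.
    params_json = json.dumps(params, sort_keys=True)
    record = {
        "run_id": hashlib.sha256(params_json.encode("utf-8")).hexdigest()[:12],
        "timestamp": time.strftime("%Y-%m-%dT%H:%M:%S"),
        "params": params,
        "metrics": metrics,
    }
    with Path(log_path).open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")
    return record


def load_runs(log_path):
    """Read every logged run back into a list of dicts."""
    with Path(log_path).open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]


def best_run(runs, metric="val_accuracy"):
    """Pick the run with the highest value of the given validation metric."""
    return max(runs, key=lambda r: r["metrics"][metric])


# Placeholder values for illustration only; a temp directory keeps the demo tidy.
with tempfile.TemporaryDirectory() as tmp:
    log_file = Path(tmp) / "experiments.jsonl"
    log_run(log_file, {"model": "logreg", "lr": 0.1}, {"val_accuracy": 0.81})
    log_run(log_file, {"model": "logreg", "lr": 0.01}, {"val_accuracy": 0.84})
    runs = load_runs(log_file)
    best = best_run(runs)
```

Selecting the best run by a validation metric, never the test metric, keeps the separation between sets intact.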

Deployment and monitoring

Prepare the environment for deployment with robust dependencies, version control, and rollback plans. Choose an inference strategy that balances latency and throughput (batch vs real-time). Monitor performance after launch to detect drift, anomalies, or data distribution shifts. Establish alert thresholds and automated retraining triggers when data quality degrades. Ensure transparent user communication about model behavior and limitations, and provide a straightforward path for user feedback.
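One common way to quantify distribution shift for a single numeric feature is the Population Stability Index (PSI), which compares binned proportions of a reference sample against live data. The sketch below is a simplified version under stated assumptions: equal-width bins taken from the reference range, a small `eps` to avoid taking the log of zero, and a 0.25 alert threshold that is a frequently quoted rule of thumb rather than a standard.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # constant reference feature: everything falls in one bin

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_retraining(psi_value, threshold=0.25):
    """Assumed alert rule: flag the feature when PSI exceeds the threshold."""
    return psi_value > threshold


# Synthetic data: the live sample is the reference shifted by +3.
reference = [i / 100 for i in range(1000)]
live = [x + 3 for x in reference]
stable_score = psi(reference, reference)
drift_score = psi(reference, live)
```

In a real deployment the threshold should be tuned against historical data, and a check like this would typically run per feature on a schedule.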

Security, privacy, and governance

Protect data privacy by applying encryption, access controls, and secure data pipelines. Incorporate privacy-preserving techniques where feasible, such as differential privacy or federated learning for sensitive data. Govern AI development with review boards, policy checklists, and changelogs that document decisions and approvals. Maintain an auditable trail of code, data, and tests so teams can respond to audits or incidents quickly. From the outset, design for safety and reliability rather than retrofitting it later.
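To make differential privacy concrete, the sketch below applies the Laplace mechanism to a counting query: because adding or removing one record changes a count by at most 1, noise drawn from Laplace(0, 1/epsilon) gives epsilon-differential privacy for that single release. The function names and the sample records are assumptions, and Python's `random` module is used only for illustration; a production system would more likely rely on a vetted differential privacy library.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng=None):
    """Release a noisy count of records matching predicate."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for r in records if predicate(r))
    # A count has sensitivity 1, so the noise scale is 1 / epsilon.
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical records: ages of seven users.
ages = [23, 35, 41, 52, 67, 29, 44]
noisy = private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=random.Random(0))
```

Smaller values of `epsilon` add more noise and give stronger privacy; each additional release of the same data consumes more of the overall privacy budget.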

Ethics and responsible AI practices

Active bias detection, fairness auditing, and inclusive design should be core to any AI project. Test across diverse user groups and monitor for unintended consequences. Provide transparency about the model’s limitations and decision processes; where possible, offer explanations that are understandable to end users. Encourage a culture of responsibility, including opt-out options for data collection and clear data lifecycle policies. Engaging with stakeholders, including researchers, practitioners, and affected communities, helps align the project with societal values.
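A basic fairness audit can start by comparing metrics across groups. The sketch below computes per-group accuracy and positive-prediction rate, then the gap in positive rates (often called the demographic parity difference). The group labels, predictions, and helper names are hypothetical, and this is only one of several fairness criteria you might check.

```python
from collections import defaultdict


def group_rates(groups, y_true, y_pred):
    """Per-group accuracy and positive-prediction rate."""
    stats = defaultdict(lambda: {"n": 0, "correct": 0, "positive": 0})
    for g, t, p in zip(groups, y_true, y_pred):
        s = stats[g]
        s["n"] += 1
        s["correct"] += int(t == p)
        s["positive"] += int(p == 1)
    return {
        g: {
            "accuracy": s["correct"] / s["n"],
            "positive_rate": s["positive"] / s["n"],
        }
        for g, s in stats.items()
    }


def demographic_parity_gap(rates):
    """Largest difference in positive-prediction rate between any two groups."""
    positive_rates = [r["positive_rate"] for r in rates.values()]
    return max(positive_rates) - min(positive_rates)


# Hypothetical audit data for two user groups, "a" and "b".
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
rates = group_rates(groups, y_true, y_pred)
gap = demographic_parity_gap(rates)
```

In this toy data both groups have the same accuracy but very different positive rates, which is the kind of pattern an aggregate accuracy number would hide.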

Build vs buy decision framework

Not every problem requires building from scratch. For many teams, leveraging existing AI tools, open models, or managed services can accelerate delivery and reduce risk. Use a decision framework that weighs data availability, time to value, total cost of ownership, and maintainability. If you reuse an existing model, perform rigorous validation on your own data and adapt it as needed. When you choose to build, structure your project with clean interfaces and documentation to enable future handoffs.
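One lightweight way to apply such a framework is a weighted scoring matrix. In the sketch below, the four criteria come from the paragraph above, while the weights and the 1-to-5 scores are purely hypothetical numbers that a team would replace with its own judgments.

```python
def weighted_score(scores, weights):
    """Weighted average of criterion scores (higher is better)."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    return sum(scores[k] * weights[k] for k in weights) / total_weight


# Hypothetical weights: how much each criterion matters to this team.
weights = {
    "data_availability": 3,
    "time_to_value": 2,
    "total_cost_of_ownership": 2,
    "maintainability": 1,
}
# Hypothetical 1-5 ratings for each option.
build = {
    "data_availability": 4,
    "time_to_value": 2,
    "total_cost_of_ownership": 2,
    "maintainability": 3,
}
buy = {
    "data_availability": 3,
    "time_to_value": 5,
    "total_cost_of_ownership": 3,
    "maintainability": 4,
}
build_score = weighted_score(build, weights)
buy_score = weighted_score(buy, weights)
```

The number itself matters less than the discussion it forces: writing the weights down makes the team's priorities explicit and reviewable.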

Authority sources and further reading

- NIST AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

- Stanford AI Resources: https://ai.stanford.edu/

- Nature AI: https://www.nature.com/subjects/artificial-intelligence

These sources provide foundational perspectives on governance, research, and ethical considerations for AI development.

Next steps and community resources

Continue learning with practical courses, open-source projects, and community discussions. Work through the AI Tool Resources team's tutorials, join open-source AI projects, and contribute to forums to share findings and get feedback. In practice, schedule weekly experiments, document the results, and iterate with peer review to sharpen your skills.

Tools & Materials

- Python 3.11+ (Install from python.org; ensure venv support)

- Code editor (e.g., VS Code) (Install Python extension and linting tools)

- Data storage (CSV/Parquet datasets with clear labeling)

- Compute resources (CPU for simple tasks; GPU if training neural nets)

- ML libraries (numpy, pandas, scikit-learn, PyTorch or TensorFlow)

- Version control (Git + GitHub/GitLab for collaboration)

Steps

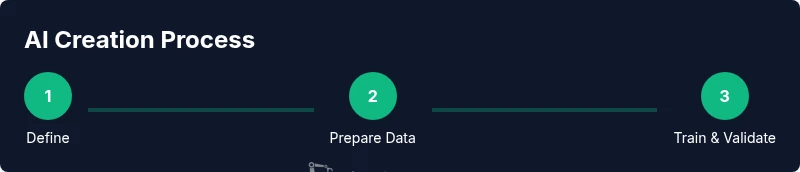

Estimated time: 12-16 hours

- 1

Define the AI objective

Clarify the specific task the AI will perform and the expected outcome. Establish success criteria and boundaries to prevent scope creep. Document the decision so others can reproduce and review it.

Tip: Write the objective as a single, testable statement and map it to a metric you can measure.

- 2

Identify evaluation metrics

Select metrics that reflect the task and user impact. Include both performance (accuracy, F1) and safety indicators (false positives, bias checks). Plan how you will measure these on held-out data.

Tip: Use a mix of quantitative and qualitative metrics to capture real-world usefulness.

- 3

Assemble and inspect data

Gather representative data with clear licenses and consent. Inspect distributions, label consistency, and potential biases. Prepare a data dictionary to track features, units, and preprocessing steps.

Tip: Version your data and track provenance so results remain reproducible.

- 4

Preprocess data

Clean, normalize, and transform features. Handle missing values, encode categorical data, and scale numeric features. Establish deterministic preprocessing to ensure reproducibility across experiments.

Tip: Automate preprocessing in a single script to minimize drift.

- 5

Choose an initial model

Start with a simple, justifiable baseline (e.g., logistic regression or a small neural net) that matches the data size. Be prepared to switch to more advanced architectures later if needed.

Tip: Document why this baseline was chosen and how it aligns with the objective.

- 6

Train a baseline and evaluate

Train the baseline on the training set and evaluate it on the validation set, keeping the test set untouched for the final check. Track learning curves, monitor for overfitting, and adjust hyperparameters methodically.

Tip: Save checkpoints and log hyperparameters for reproducibility.

- 7

Iterate and optimize

Refine features, tweak model choice, and experiment with regularization or data augmentation. Reassess against the same metrics to measure improvement.

Tip: Aim for small, measurable gains before moving to heavier models.

- 8

Plan deployment and monitoring

Draft a deployment plan including versioning, rollback, and monitoring. Define metrics for post-deploy performance and establish triggers for retraining.

Tip: Create dashboards that show drift and outage alerts in real time.
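The preprocessing step above (handle missing values, encode categorical data, scale numeric features, keep it deterministic) might be sketched as follows. The `Preprocessor` class, the column names, and the choices of median imputation and standardization are assumptions for illustration; the key idea is that statistics are learned once in `fit` and reused unchanged in `transform`.

```python
import statistics


class Preprocessor:
    """Median imputation and standardization for numeric columns,
    one-hot encoding for categorical columns."""

    def __init__(self, numeric, categorical):
        self.numeric = list(numeric)
        self.categorical = list(categorical)

    def fit(self, rows):
        self.median_, self.mean_, self.std_, self.levels_ = {}, {}, {}, {}
        for col in self.numeric:
            present = [r[col] for r in rows if r[col] is not None]
            self.median_[col] = statistics.median(present)
            filled = [self.median_[col] if r[col] is None else r[col] for r in rows]
            self.mean_[col] = statistics.fmean(filled)
            # Fall back to 1.0 for constant columns to avoid dividing by zero.
            self.std_[col] = statistics.pstdev(filled) or 1.0
        for col in self.categorical:
            # Sorted levels keep the one-hot column order stable across runs.
            self.levels_[col] = sorted({r[col] for r in rows})
        return self

    def transform(self, rows):
        out = []
        for r in rows:
            vec = []
            for col in self.numeric:
                v = self.median_[col] if r[col] is None else r[col]
                vec.append((v - self.mean_[col]) / self.std_[col])
            for col in self.categorical:
                # Levels not seen during fit encode as all zeros.
                vec.extend(1.0 if r[col] == lvl else 0.0 for lvl in self.levels_[col])
            out.append(vec)
        return out


# Hypothetical training rows with one missing numeric value.
train_rows = [
    {"age": 20, "plan": "free"},
    {"age": None, "plan": "pro"},
    {"age": 40, "plan": "free"},
]
prep = Preprocessor(numeric=["age"], categorical=["plan"]).fit(train_rows)
features = prep.transform(train_rows)
```

Fitting on the training set only and then calling `transform` on validation and test data avoids leaking information from held-out rows into the preprocessing statistics.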

FAQ

What does it mean to make an AI?

To make an AI means engineering a system that can perform tasks that typically require human intelligence. It involves defining goals, collecting data, selecting models, training, evaluating, and deploying the solution with governance.


Do I need large data sets to begin?

Not always. Start with a small, well-curated dataset and a simple baseline model. You can scale data collection as you validate the value and iteratively improve the model.


What tools are essential for an AI project?

A Python environment, a code editor, data storage, a compute plan, and ML libraries are essential. Version control helps maintain reproducibility across experiments.


How long does it take to make a basic AI model?

Time varies by task and data, but a focused project typically ranges from several hours to a few days for a basic prototype and evaluation loop.


Should you build from scratch or reuse existing models?

Evaluate data, timeline, and risk. Reusing existing models can accelerate value with proper validation and adaptation to your data.



Key Takeaways

- Define clear goals and measurable success criteria.

- Prepare and document data with provenance and privacy in mind.

- Start simple, prove value, then iterate with governance.

- Evaluate thoroughly before deployment and monitor continuously.