Build an AI Tool: A Practical Step-by-Step Guide

Learn how to build a practical AI tool from problem definition through deployment. This guide emphasizes data strategy, tooling, evaluation, and governance for developers and researchers.

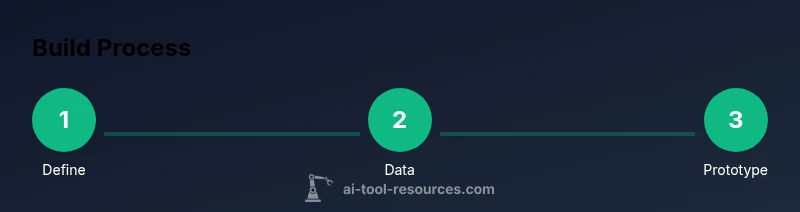

By the end of this guide, you’ll be able to take an AI tool from ideation to deployment. You’ll define the problem, select data sources, prototype a baseline model, and set up a repeatable workflow. According to AI Tool Resources, a methodical, safety-first approach accelerates delivery and reduces risk. You’ll come away with a practical blueprint you can adapt for future projects.

Define the problem and success metrics

Defining the problem is the foundation of any successful tool-building effort. Start by clarifying who benefits from the AI solution and what real-world task it will automate or augment. Write a concise user story that describes the context, inputs, and expected outputs. Establish success metrics that matter to users and stakeholders, such as accuracy, latency, reliability, or ease of use, without overloading the project with vanity metrics. Document constraints, regulatory considerations, and deployment environments early. By aligning the problem statement with the team's capabilities, you set a clear target for data collection, model selection, and integration. According to AI Tool Resources Analysis, 2026, teams that begin with well-defined objectives tend to stay aligned and avoid scope creep. This clarity informs data sourcing, labeling requirements, and the design of evaluation tests, making it easier to justify tool choices as you progress. The result is a living specification that guides every subsequent step and keeps the project focused on delivering real value. When you finish this step, you’re ready to move on to data strategy with confidence and purpose.
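One way to keep success metrics from drifting into vanity metrics is to write them down as data that can be checked automatically. The sketch below is a minimal illustration, not a prescribed format: the `MetricTarget` class, the metric names, and the thresholds are all hypothetical and should be replaced with whatever your stakeholders agree on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricTarget:
    """One measurable success criterion (names and thresholds are hypothetical)."""
    name: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        # Accuracy-style metrics must reach the threshold;
        # latency-style metrics must stay at or below it.
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


def unmet_targets(targets, observed):
    """Return the names of targets that are missing or not met."""
    failures = []
    for target in targets:
        value = observed.get(target.name)
        if value is None or not target.is_met(value):
            failures.append(target.name)
    return failures


# Illustrative targets for an imagined classification tool.
TARGETS = [
    MetricTarget("accuracy", 0.90),
    MetricTarget("p95_latency_ms", 300.0, higher_is_better=False),
]

# A run that is accurate enough but too slow fails only the latency target.
print(unmet_targets(TARGETS, {"accuracy": 0.93, "p95_latency_ms": 410.0}))
```

Keeping the targets in one place means the same list can later drive evaluation reports and deployment gates.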

Data strategy and governance

A robust AI tool starts with high-quality, well-governed data. Define data sources, labeling pipelines, and data quality checks early. Plan data splits for training, validation, and monitoring to prevent data leakage and ensure fair evaluation. Establish privacy, security, and compliance controls appropriate for your domain, and document data provenance so you can trace decisions downstream. Build a data dictionary that captures feature definitions, data formats, and preprocessing steps. Align data strategy with your success metrics so you can demonstrate progress to stakeholders while maintaining guardrails against biased or unsafe outcomes. Regular data audits help catch drift and keep the tool reliable in production. As you scale, automate data lineage reporting and change management to protect the integrity of your AI system.
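A common source of data leakage is the same user or document appearing in both training and evaluation splits. The sketch below shows one possible guard, assuming each record carries a grouping field (here a hypothetical `user_id`): the split is chosen from a hash of the group ID, so it is deterministic across runs and every group lands in exactly one split. The 10% validation and test shares are illustrative defaults.

```python
import hashlib


def assign_split(group_id: str, val_pct: int = 10, test_pct: int = 10) -> str:
    """Map a group ID to 'train', 'val', or 'test' deterministically."""
    digest = hashlib.sha256(group_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    if bucket < test_pct:
        return "test"
    if bucket < test_pct + val_pct:
        return "val"
    return "train"


def split_records(records, group_key="user_id"):
    """Partition records so that each group lands in exactly one split."""
    splits = {"train": [], "val": [], "test": []}
    for record in records:
        split_name = assign_split(str(record[group_key]))
        splits[split_name].append(record)
    return splits


# Synthetic records: 500 rows spread over 50 hypothetical users.
records = [{"user_id": f"u{i % 50}", "text": f"example {i}"} for i in range(500)]
splits = split_records(records)
print({name: len(rows) for name, rows in splits.items()})
```

Because the assignment depends only on the ID, new records for an existing user always join that user's original split, which keeps evaluation honest as the dataset grows.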

Model and architecture selection

Choosing the right model and architecture is critical to balancing performance, cost, and latency. Start with a baseline model that is simple to implement and easy to interpret, then iterate toward more capable architectures only as needed. Compare approaches against all of your evaluation criteria: not just accuracy, but also inference time, memory usage, and robustness to edge cases. Consider using pre-trained components to speed up development, while planning adaptation and fine-tuning for your exact task. Design the system modularly so you can swap components (data preprocessors, encoders, decoders) without rewriting the entire pipeline. Watch for drift, and plan monitoring that detects when model quality degrades over time.
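To make the modular design concrete, the sketch below defines a small interface that any candidate model can satisfy, plus one evaluation function that reports both accuracy and latency. Everything here is an illustrative assumption: the `Classifier` protocol, the `KeywordBaseline` rule, and the example texts are invented for the demonstration and stand in for your real task.

```python
import time
from typing import Protocol, Sequence, Tuple


class Classifier(Protocol):
    """Any object with a matching predict method can be evaluated."""

    def predict(self, text: str) -> str: ...


class KeywordBaseline:
    """Hypothetical rule-based baseline: simple to implement and to inspect."""

    def __init__(self, keyword: str, positive: str = "urgent", negative: str = "normal"):
        self.keyword = keyword.lower()
        self.positive = positive
        self.negative = negative

    def predict(self, text: str) -> str:
        return self.positive if self.keyword in text.lower() else self.negative


def evaluate(model: Classifier, examples: Sequence[Tuple[str, str]]) -> dict:
    """Report accuracy and mean per-example latency for any Classifier."""
    correct = 0
    start = time.perf_counter()
    for text, label in examples:
        if model.predict(text) == label:
            correct += 1
    elapsed = time.perf_counter() - start
    return {
        "accuracy": correct / len(examples),
        "mean_latency_ms": 1000 * elapsed / len(examples),
    }


# Tiny invented evaluation set of (text, label) pairs.
EXAMPLES = [
    ("Server is down, please help", "urgent"),
    ("Monthly newsletter", "normal"),
    ("Site DOWN again", "urgent"),
    ("Urgent: invoice question", "urgent"),
]

report = evaluate(KeywordBaseline("down"), EXAMPLES)
print(report["accuracy"])  # 0.75: the last example has no "down" keyword
```

A more capable model only needs a `predict` method with the same signature to be compared on the same examples, so the baseline stays available as a reference point.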

Building the pipeline and tooling

A repeatable pipeline speeds up iteration and reduces human error. Establish version-controlled data and code, automated training runs, and standardized evaluation dashboards. Create clear interfaces between data processing, model training, and deployment so team members can work in parallel. Use experiment tracking to capture hyperparameters, dataset versions, and outcomes, enabling reproducibility and easier debugging. Automate testing for data integrity, model performance, and safety checks before any deployment. Document decisions and rationale so future teammates understand why a particular approach was chosen and how to adapt it for new tasks.
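Experiment tracking can start very small. The sketch below is a standard-library stand-in, not the API of MLflow or Weights & Biases: it appends one JSON line per run, recording the hyperparameters, a content hash used as the dataset version, and the resulting metrics. The function names and the log file layout are assumptions made for illustration.

```python
import hashlib
import json
import time
from pathlib import Path


def dataset_fingerprint(rows) -> str:
    """Short content hash that serves as a dataset version identifier."""
    hasher = hashlib.sha256()
    for row in rows:
        # sort_keys keeps the hash stable regardless of dict key order.
        hasher.update(json.dumps(row, sort_keys=True).encode("utf-8"))
    return hasher.hexdigest()[:12]


def log_run(log_path, params, dataset_version, metrics) -> dict:
    """Append one experiment record as a JSON line and return it."""
    record = {
        "timestamp": time.time(),
        "params": params,
        "dataset_version": dataset_version,
        "metrics": metrics,
    }
    with Path(log_path).open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record, sort_keys=True) + "\n")
    return record


def best_run(log_path, metric: str) -> dict:
    """Return the logged run with the highest value for `metric`."""
    with Path(log_path).open(encoding="utf-8") as handle:
        runs = [json.loads(line) for line in handle if line.strip()]
    return max(runs, key=lambda run: run["metrics"][metric])
```

A typical use would be to call `log_run("runs.jsonl", {"lr": 0.01}, dataset_fingerprint(rows), {"f1": score})` at the end of each training script, then `best_run("runs.jsonl", "f1")` when deciding which configuration to promote. A dedicated tracking tool adds dashboards and artifact storage on top of the same idea.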

Safety, ethics, and deployment considerations

Deploying AI tools responsibly requires explicit safety and governance practices. Define guardrails to minimize bias, protect privacy, and prevent unsafe outputs. Plan for monitoring in production, including automatic alerts for performance degradation or anomalous behavior. Establish rollback procedures and a clear handoff path to maintainers. Consider regulatory requirements, security best practices, and user consent in your deployment strategy. From a practical standpoint, containerize the service, implement robust logging, and ensure observability so you can detect and respond to issues quickly. This discipline helps ensure your AI tool remains trustworthy as it scales.
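An automatic alert for performance degradation can be as simple as a rolling window over recent outcomes. The sketch below assumes that you eventually learn whether each production prediction was correct (for example, from user feedback or delayed labels); the class name, the window size, and the accuracy threshold are illustrative choices, not recommended values.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track recent prediction outcomes and flag degradation.

    The window size and threshold are illustrative assumptions.
    """

    def __init__(self, window: int = 100, min_accuracy: float = 0.85):
        self.outcomes = deque(maxlen=window)  # oldest outcomes drop off automatically
        self.min_accuracy = min_accuracy

    def record(self, was_correct: bool) -> None:
        self.outcomes.append(bool(was_correct))

    def accuracy(self) -> float:
        if not self.outcomes:
            return 1.0  # no evidence of problems yet
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        # Wait for a full window so a few early errors do not trigger an alert.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.min_accuracy


monitor = RollingAccuracyMonitor(window=10, min_accuracy=0.8)
for _ in range(10):
    monitor.record(True)
for _ in range(3):
    monitor.record(False)  # three recent failures push accuracy to 0.7
print(monitor.should_alert())  # True
```

In a real service, `should_alert()` would feed whatever alerting channel you already use, and a sustained alert is a natural trigger for the rollback procedure described above.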

Practical tips and common pitfalls

Before you begin, set clear boundaries for scope and success metrics. Start small with a minimum viable product and expand iteratively. Regularly review data quality and fairness, and guard against overfitting by validating on diverse data. Document every decision, including why certain data sources or models were chosen. Beware of brittle pipelines and single points of failure; design with fault tolerance and observability in mind. Finally, prioritize safety and compliance from day one to prevent costly redesigns later.

Tools & Materials

- Computer with a decent CPU/GPU (Recommended: 16-32 GB RAM; a GPU helps with model experimentation)

- Access to dataset(s) (Ensure licensing, privacy, and usage rights)

- Python environment (Use Conda or virtualenv with Python 3.8+)

- ML frameworks (Examples: PyTorch or TensorFlow)

- Experiment tracking tool (E.g., MLflow, Weights & Biases)

- Version control (Git plus repository hosting such as GitHub, GitLab, or similar)

- Evaluation metrics sheet (Define metrics such as accuracy, F1, AUC, or custom KPIs)

- Compute resources (Cloud GPU/TPU access if needed for large experiments)

- Deployment platform (Docker, Kubernetes, or serverless options for production)

Steps

Estimated time: 6-8 weeks

Step 1: Define your problem and scope

Articulate the user story, the task to automate, and the expected outcome. Specify acceptance criteria and a measurable target for success. This step anchors the entire build and prevents scope creep.

Tip: Write a crisp user story with explicit success criteria.

Step 2: Assemble your data strategy

Identify data sources, labeling requirements, and preprocessing steps. Plan data splits to enable honest evaluation and guard against leakage. Document provenance and privacy constraints from the start.

Tip: Create a data catalog and lineage trace for every dataset.

Step 3: Prototype a baseline model

Start with a simple, robust baseline to validate the pipeline end-to-end. Use small datasets and clear evaluation metrics to learn quickly before scaling. Iterate on the baseline until it is a reliable reference.

Tip: Keep the baseline interpretable and easy to reproduce.

Step 4: Build the pipeline and tooling

Construct modular components for data processing, model training, evaluation, and deployment. Use version control and an experiment tracker to capture configurations and results. Aim for a clean API between components.

Tip: Automate experiments and keep components loosely coupled.

Step 5: Evaluate, iterate, and validate

Run comprehensive tests across multiple datasets and scenarios. Check fairness, robustness, and safety, not just accuracy. Use ablations to identify bottlenecks and adjust features or architectures accordingly.

Tip: Automate validation against edge cases and drift checks.

Step 6: Prepare for deployment and monitoring

Containerize the service, implement monitoring dashboards, and set up alerts for anomalies. Define rollback plans and update processes to keep the AI tool reliable in production.

Tip: Establish logging, metrics, and a simple rollback mechanism.
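For the evaluation step, a single overall accuracy can hide large differences between groups of users. The sketch below shows one simple way to look beyond it: compute accuracy per slice and report the largest gap. The record layout (`label`, `prediction`, and a hypothetical `language` field) is invented for illustration, and per-slice accuracy is only one of many possible fairness and robustness checks.

```python
from collections import defaultdict


def accuracy_by_slice(examples, slice_key):
    """Compute accuracy separately for each value of `slice_key`."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for example in examples:
        group = example[slice_key]
        total[group] += 1
        if example["prediction"] == example["label"]:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def largest_gap(per_slice):
    """Difference between the best and worst slice accuracy."""
    values = list(per_slice.values())
    return max(values) - min(values)


# Invented evaluation results, sliced by a hypothetical language field.
RESULTS = [
    {"language": "en", "label": "a", "prediction": "a"},
    {"language": "en", "label": "b", "prediction": "b"},
    {"language": "es", "label": "a", "prediction": "b"},
    {"language": "es", "label": "b", "prediction": "b"},
]

per_language = accuracy_by_slice(RESULTS, "language")
print(per_language)               # {'en': 1.0, 'es': 0.5}
print(largest_gap(per_language))  # 0.5
```

Overall accuracy here is 0.75, which looks acceptable until the per-slice view shows that one group gets results no better than a coin flip.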

FAQ

What is the first step to build an AI tool?

Define the problem and establish clear success metrics. Create a user story and outline acceptance criteria before touching data or models.

Start by defining the problem and how you’ll measure success.

How should I choose data sources for training?

Select data that reflects real user scenarios and covers edge cases. Ensure licensing, privacy, and provenance are well-documented from the outset.

Choose diverse, licensed data with clear provenance.

What is a good baseline approach?

Pick a simple model and a small dataset to validate the pipeline quickly. Iterate from there based on solid evaluation results and resource constraints.

Start simple and validate the pipeline with a baseline model.

How can I ensure safety and ethics?

Incorporate guardrails, monitoring, and bias checks from the beginning. Plan governance around deployment and user impact.

Build in safety checks and governance from day one.

What should I monitor after deployment?

Track performance, drift, and anomalies. Set up alerts and a clear rollback plan to maintain trust and reliability.

Monitor, alert, and be ready to roll back if needed.

Is deployment the final step?

Deployment is ongoing work. Maintain, update, and retrain as data and requirements evolve, with ongoing safety checks.

Deployment is ongoing; keep updating and monitoring.

Key Takeaways

- Define scope with measurable success criteria.

- Plan data governance and validation early.

- Prototype with a simple baseline to validate the pipeline.

- Build modular, auditable pipelines for reliability.

- Monitor safety, fairness, and performance in production.