Choosing the Right AI Tool: A Practical Comparison

An evidence-based comparison of general-purpose vs domain-specific AI tools, with decision criteria, evaluation steps, and implementation tips for developers, researchers, and students.

TL;DR: To choose an AI tool effectively, start with your goals and data. General-purpose tools are ideal for exploration, prototyping, and learning, while domain-specific options offer specialized features and deeper integrations. This comparison helps researchers and developers weigh capabilities, cost, and integration to select the right tool.

Market landscape for AI tools

According to AI Tool Resources, the market for AI tools continues to expand across research labs, startups, and enterprise teams. The AI Tool Resources team found that two competing priorities shape buying decisions: breadth and depth. General-purpose AI tools offer broad capabilities, a large ecosystem, and fast time to value, while domain-specific suites deliver task-focused accuracy, deeper integrations, and regulatory alignment. For teams learning to choose an AI tool, the most important questions concern fit with your data, your integration stack, and your governance requirements. In practice, this means screening vendors for model transparency, data handling, and onboarding support, then validating with a pilot that mirrors actual workflows. The first step is to articulate a minimal viable scenario that captures core tasks, inputs, and success criteria. Starting with a simple pilot and explicit success metrics keeps you from over-investing in features you won't use, gives you early evidence of value, and keeps the choice aligned with organizational goals.

Key decision criteria for choosing an AI tool

Choosing an AI tool is not about chasing the most features. It's about aligning capabilities with your real needs, governance constraints, and data readiness. The criteria below apply to both general-purpose and domain-specific options, but you should weight them differently depending on your context. First, define your use-case scope and success metrics. Second, inventory your data: quality, format, lineage, and privacy requirements. Third, assess integration, APIs, and interoperability with existing pipelines. Fourth, evaluate performance, reliability, and governance controls, including versioning and audit trails. Fifth, compare licensing, support, and total cost of ownership. Finally, consider the vendor's roadmap and security posture. A balanced scorecard that covers capability, risk, and value will help you decide which AI tool to choose. AI Tool Resources emphasizes pragmatic evaluation over marketing claims and suggests starting with guardrails to keep pilots focused.
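One way to make the balanced scorecard concrete is a weighted average of ratings per criterion. The sketch below is illustrative only: the criterion names, the weights, and the 1-to-5 ratings are assumptions chosen for the example, not values from AI Tool Resources, and you would replace them with your own.

```python
# Hypothetical criteria and weights; adjust both to your own context.
WEIGHTS = {
    "use_case_fit": 0.25,
    "data_readiness": 0.20,
    "integration": 0.20,
    "governance": 0.15,
    "total_cost": 0.10,
    "vendor_roadmap": 0.10,
}


def weighted_score(ratings: dict[str, float],
                   weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted average of per-criterion ratings (e.g. 1 to 5)."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    # Dividing by the total weight keeps the result on the rating scale
    # even if the weights do not sum exactly to 1.
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight


# Made-up ratings for two candidate tools, for illustration only.
general_purpose = {
    "use_case_fit": 3, "data_readiness": 4, "integration": 4,
    "governance": 3, "total_cost": 4, "vendor_roadmap": 4,
}
domain_specific = {
    "use_case_fit": 5, "data_readiness": 3, "integration": 3,
    "governance": 5, "total_cost": 3, "vendor_roadmap": 3,
}

for name, ratings in [("general-purpose", general_purpose),
                      ("domain-specific", domain_specific)]:
    print(f"{name}: {weighted_score(ratings):.2f}")
```

Changing the weights is how you express context: a regulated team might raise `governance`, while a research group might raise `use_case_fit`.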

General-purpose vs domain-specific: Core differentiators

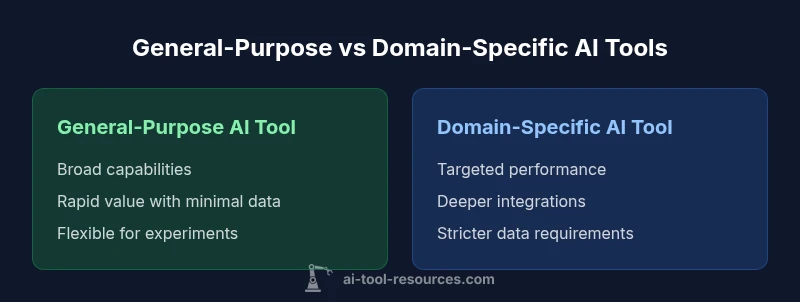

General-purpose AI tools shine in breadth. They support multiple tasks, rapid experimentation, and a broad ecosystem of plugins and community knowledge. They are ideal when teams want to learn, prototype, or explore new use cases without heavy upfront customization. Domain-specific AI tools excel in depth. They deliver task-focused accuracy, tighter data integrations, and workflows that mirror real-world processes in a given field. They are often preferable when regulatory compliance, fine-grained domain features, or seamless alignment with existing software stacks are critical. The choice depends on what matters most: speed and versatility versus precision and integration. AI Tool Resources notes that the biggest pitfall is misalignment between the tool's strengths and your actual workflow; ensuring a fit reduces rework and accelerates time-to-value for the team.

Evaluation framework: how to test before buying

A rigorous pilot saves time and money. Start with a clear, minimal viable scenario that represents typical tasks, inputs, and success criteria. Build a test plan that includes data slices, performance targets, and governance checks. Run parallel pilots if possible: a general-purpose tool to establish baseline value, and a domain-specific option to measure incremental gains in accuracy or compliance. Use objective metrics (precision, recall, latency, user satisfaction) and document qualitative observations (ease of use, support quality). Involve stakeholders from data science, security, and procurement to ensure coverage of technical and policy concerns. At the end, score each option against a common rubric and decide whether the improvements justify cost and integration effort.
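The objective metrics mentioned above can be computed from pilot logs with a few lines of code. The following is a minimal sketch, assuming each pilot produces a list of yes/no predictions, matching ground-truth labels, and per-request latencies in milliseconds; the function names and the sample data are hypothetical.

```python
from statistics import median


def precision_recall(predicted: list[bool], actual: list[bool]) -> tuple[float, float]:
    """Compute precision and recall for binary predictions."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    tp = sum(1 for p, a in zip(predicted, actual) if p and a)
    fp = sum(1 for p, a in zip(predicted, actual) if p and not a)
    fn = sum(1 for p, a in zip(predicted, actual) if not p and a)
    # Report 0.0 when a metric is undefined (no positive predictions or labels).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def summarize_pilot(name: str, predicted: list[bool], actual: list[bool],
                    latencies_ms: list[float]) -> dict:
    """Collect the quantitative metrics for one pilot into a single record."""
    precision, recall = precision_recall(predicted, actual)
    return {
        "tool": name,
        "precision": precision,
        "recall": recall,
        "median_latency_ms": median(latencies_ms),
    }


# Made-up pilot data: the same labeled test slice run through both tools.
actual = [True, True, False, True, False, False]
general = summarize_pilot("general-purpose",
                          [True, False, False, True, True, False],
                          actual, [120.0, 95.0, 110.0])
domain = summarize_pilot("domain-specific",
                         [True, True, False, True, False, False],
                         actual, [210.0, 180.0, 240.0])
print(general)
print(domain)
```

Running both pilots on the same labeled slice is what makes the numbers comparable; user satisfaction and other qualitative observations still need to be recorded separately.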

Data, privacy, and governance considerations

Data handling and governance are non-negotiable when choosing an AI tool. Assess how data is stored, processed, and deleted, and confirm whether the tool supports data localization, encryption, and access controls. Ensure you can audit model behavior and obtain model cards or explanations for decisions. AI Tool Resources emphasizes transparency around data usage and model provenance as key drivers of trust. Plan for data minimization, retention policies, and clear ownership of results. If your organization operates under specific regulatory regimes, verify compliance with those requirements and request evidence of certifications or third-party assessments.

Integration and deployment realities

Even the best tool can fail if it can't integrate into existing pipelines. Evaluate API quality, data formats, and connector availability for your preferred cloud, on-premises, or hybrid setup. Consider deployment options: hosted cloud services, on-premises installations, or hybrid models. For teams, it's crucial to map the integration points with data sources, notebooks, CI/CD, and monitoring dashboards. Plan for change management: how quickly can engineers adapt workflows, and what's the onboarding process for data scientists and developers? A well-architected integration reduces handoffs and accelerates value realization. AI Tool Resources highlights the importance of keeping integration goals realistic and phased to avoid scope creep.

Cost and total value: budgeting without price obsession

Cost considerations go beyond sticker price. Total cost of ownership includes license terms, data transfer costs, maintenance, support, and the time to realize value. When evaluating pricing, look for transparent tiers and predictable usage models. Favor tools with clear governance features and strong performance guarantees that prevent unsustainable cost growth. The key is to quantify value in terms of outcome improvements, such as faster iteration cycles, improved model accuracy, or reduced manual effort. AI Tool Resources notes that a well-structured ROI analysis should compare baseline performance to post-adoption outcomes over a defined period, without leaning on marketing claims.
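A simple way to put numbers on this is to total the ownership costs for the period and compare them with the value of the measured improvement. The sketch below is a rough illustration, assuming value is estimated as hours of manual effort saved times an hourly rate; the cost categories follow the paragraph above, while the class name, figures, and rate are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class PeriodCosts:
    """Ownership costs over one evaluation period, all in the same currency."""
    license: float
    data_transfer: float
    maintenance: float
    support: float
    onboarding: float  # cost of the time needed to reach productive use

    def total(self) -> float:
        return (self.license + self.data_transfer + self.maintenance
                + self.support + self.onboarding)


def roi(value_gained: float, costs: PeriodCosts) -> float:
    """Return (value - cost) / cost for the period; 0.25 means a 25% return."""
    total = costs.total()
    if total <= 0:
        raise ValueError("total cost must be positive")
    return (value_gained - total) / total


# Made-up figures for a 12-month period.
costs = PeriodCosts(license=12_000, data_transfer=1_500, maintenance=3_000,
                    support=2_500, onboarding=6_000)
hours_saved = 40 * 12   # assumed: 40 hours of manual effort saved per month
hourly_rate = 75.0      # assumed loaded hourly cost
value = hours_saved * hourly_rate

print(f"Total cost of ownership: {costs.total():,.0f}")
print(f"ROI over the period: {roi(value, costs):.0%}")
```

The value estimate is the weak point of any such calculation, so it should come from baseline and post-adoption measurements taken during the pilot rather than from vendor figures.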

Practical workflow: from shortlist to decision

A pragmatic process helps teams stay aligned. Step one, gather requirements from stakeholders and create a shortlisting rubric. Step two, run two parallel pilots: one with a general-purpose tool and another with a domain-specific option that matches your field. Step three, collect quantitative metrics and qualitative feedback from end users. Step four, perform a governance and security review with your security team. Step five, compare total cost of ownership and roadmap alignment. Step six, document the final decision with rationale and an implementation plan. This workflow reduces bias and provides a transparent trail for future reassessment.

Real-world scenarios: field-specific tips

In research settings, general-purpose tools often support rapid ideation and reproducibility. For healthcare or finance domains, domain-specific tools may be necessary to satisfy regulatory expectations and domain-specific performance metrics. In education, a blend of general-purpose tools for experimentation and domain-aware modules for coursework can maximize learning outcomes. Regardless of field, keep the pilot scope narrow, ensure data governance, and validate results with real users who understand the domain tasks. AI Tool Resources often recommends a phased adoption strategy to minimize disruption while maximizing learning and value.

Documentation and ongoing assessment

A decision is not final after one pilot. Maintain a living document that records use-case changes, governance updates, and performance trends. Schedule periodic reviews to adjust scope, data governance, and licensing agreements as requirements evolve. This documentation supports long-term sustainability and helps teams adapt to new tools or features without losing track of policy and compliance boundaries. As you continue to refine your approach, revisit the original success criteria and update them to reflect emerging priorities. AI Tool Resources stresses ongoing evaluation as a core practice for responsible AI tool usage.

Comparison

| Feature | General-purpose AI tool | Domain-specific AI tool |
|---|---|---|
| Customization depth | Broad, user-friendly, plug-and-play | Tailored, specialized functionality for a niche task |
| Time-to-value | Rapid startup with minimal data | Longer setup due to data and workflow alignment |
| Data requirements | Flexible with generic datasets | Needs domain-specific data and labeling |
| Integration flexibility | Wide ecosystem, broad API coverage | Deeper integrations within targeted systems |
| Governance and security | Standard controls, scalable policies | Stronger domain-specific compliance features |
| Best for | Early-stage projects, learning, exploration | Regulated domains, niche workflows |

Upsides of general-purpose tools

- Supports rapid experimentation and learning

- Large ecosystem and community support

- Low initial friction for pilots

- Easy to prototype across multiple tasks

Weaknesses of general-purpose tools

- May lack domain-specific precision

- Potential for feature bloat and scope creep

- Governance controls may be weaker in early versions

General-purpose tools for breadth; domain-specific tools for depth

Choose general-purpose AI tools when you need speed, flexibility, and broad task coverage. Opt for domain-specific tools when you must meet tight domain requirements, regulatory needs, or deep integration with existing workflows. The AI Tool Resources team emphasizes balancing value, risk, and governance during the decision.

FAQ

What is the main difference between general-purpose and domain-specific AI tools?

General-purpose tools offer broad capabilities and fast prototyping, while domain-specific tools provide niche features and tighter domain alignment. Your choice should reflect whether breadth or depth is more important for your tasks.

General-purpose tools are versatile; domain-specific tools shine when you need deep, domain-aligned results.

How should I structure a pilot to compare AI tools?

Set clear success metrics, design two parallel pilots (one general-purpose, one domain-specific), test with real tasks, collect user feedback, and compare total value beyond initial cost.

Run parallel pilots with defined goals and real tasks, then compare outcomes.

What data considerations should I plan for?

Assess data availability, quality, labeling, privacy, and residency. Ensure data handling compliance and plan for data governance throughout the tool lifecycle.

Make sure your data is ready and compliant before adopting a tool.

Are there governance features to look for?

Look for model cards, audit logs, access controls, versioning, and reproducibility support. Strong governance reduces risk and increases trust.

Governance features help you track how models behave and who uses them.

How should I budget for AI tools?

Focus on total cost of ownership: license terms, data transfer, maintenance, support, and time-to-value. Favor transparent pricing and predictable tiers.

Budget by value, not just price, and plan for ongoing costs.

Key Takeaways

- Define use-cases before tool selection

- Assess data readiness and governance

- Pilot both general-purpose and domain-specific options

- Evaluate integration with existing stacks

- Plan for ongoing reassessment of the tool choice