ai tool vs: Practical AI Tool Comparison for Developers

A rigorous, developer-focused comparison of off-the-shelf AI tools and custom-built solutions, covering integration, governance, security, deployment, and cost. Learn how this choice shapes projects in 2026 with guidance from AI Tool Resources.

In brief, the choice between an off-the-shelf AI tool and a custom-built solution hinges on scope, governance, and total cost of ownership. Off-the-shelf tools deliver speed and ecosystem access, while custom-built options provide tailored control at the cost of higher setup effort. The AI Tool Resources team recommends starting from your top use case and compliance needs.

Context and motivation

In 2026, teams face a rapidly expanding landscape of AI tools promising speed, accuracy, and scale. For developers, researchers, and students, the central question shifts from identifying the single best tool to selecting one that fits specific workflows, data practices, and risk appetites. The options span a spectrum, from plug-and-play cloud services to bespoke architectures built around exact data governance needs. According to AI Tool Resources, success begins by clarifying objectives, constraints, and success metrics before evaluating features like performance, reliability, and total cost of ownership. This context helps you measure what truly matters: latency, accuracy, governance, integration, and operational costs over the tool’s life cycle.

Defining AI tools in this comparison

For the purposes of this guide, an AI tool is any software or platform that applies machine learning or AI models to generate insights, automate tasks, or augment decision-making. This includes hosted services, model APIs, and integrated AI modules within larger platforms. The off-the-shelf vs. custom question becomes practical when you compare plug-and-play capabilities against custom-built components designed to fit unique data schemas, regulatory requirements, or industry-specific workflows. AI Tool Resources highlights that the line between generic tools and specialized implementations often shifts with data governance needs and integration complexity.

Key evaluation criteria

A robust comparison rests on six core axes. First, performance and accuracy: does the tool meet your target quality under representative data? Second, governance and data handling: who owns the data, where is it stored, and how is it accessed? Third, integration and interoperability: how easily does the tool connect with existing pipelines, data lakes, and identity systems? Fourth, deployment and scalability: does the tool scale with demand, and is it resilient to outages? Fifth, cost and total cost of ownership: what are the upfront and ongoing costs, including maintenance and support? Sixth, security and compliance: what controls exist to protect data and meet regulations? Applying the same criteria to every candidate keeps the comparison fair.
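
One way to make the six criteria comparable across candidates is a weighted scoring matrix. The sketch below is illustrative only: the weights, the 1-5 rating scale, and the two sets of ratings are hypothetical assumptions, not values from AI Tool Resources or measurements of any real product.

```python
# Hypothetical weights for the six criteria; they should sum to 1.0.
WEIGHTS = {
    "performance": 0.25,
    "governance": 0.20,
    "integration": 0.15,
    "deployment": 0.10,
    "cost": 0.15,
    "security": 0.15,
}


def weighted_score(ratings: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted sum of per-criterion ratings (assumed 1-5 scale)."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[name] * ratings[name] for name in weights)


# Illustrative ratings only; replace with scores from your own evaluation.
off_the_shelf = {"performance": 4, "governance": 2, "integration": 4,
                 "deployment": 4, "cost": 4, "security": 3}
custom_built = {"performance": 4, "governance": 5, "integration": 3,
                "deployment": 3, "cost": 2, "security": 4}

for label, ratings in [("off-the-shelf", off_the_shelf), ("custom-built", custom_built)]:
    print(f"{label}: {weighted_score(ratings):.2f}")
```

With these made-up numbers the custom build scores 3.65 against 3.45, but shifting weight from governance to cost would reverse the ranking, which is why the weights should be agreed on before any tool is scored.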

Use-case driven differences

The use case determines the weight of each criterion. For research prototypes or internal automation, speed and ease of experimentation may trump deep customization. In contrast, for regulated industries or mission-critical workloads, strict data residency, auditable model governance, and enterprise-grade support can justify significant upfront customization. AI Tool Resources emphasizes mapping use cases to requirements before shortlisting tools, which helps you avoid chasing features that deliver no real-world value.

Integration and data flows

Real-world AI systems rarely operate in isolation. The value lies in how well an AI tool fits into data pipelines, notebooks, model registries, and monitoring stacks. Consider data ingress/egress controls, API compatibility, and streaming vs. batch processing. A strong choice minimizes data duplication, supports lineage tracking, and enables secure, auditable access controls. Agreeing on data contracts between teams (data owners, data stewards, and platform engineers) reduces friction during deployment and scaling.
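
A data contract can be as simple as a shared, machine-checkable description of the records one team sends to another. The following is a minimal sketch under stated assumptions: `FieldSpec`, `CONTRACT`, `contract_violations`, and the field names are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """One field in a hypothetical data contract."""
    name: str
    dtype: type
    required: bool = True


# Hypothetical contract for records sent to an AI tool.
CONTRACT = [
    FieldSpec("document_id", str),
    FieldSpec("text", str),
    FieldSpec("language", str, required=False),
]


def contract_violations(record: dict, contract: list[FieldSpec] = CONTRACT) -> list[str]:
    """Return human-readable problems; an empty list means the record conforms."""
    problems = []
    for spec in contract:
        if spec.name not in record:
            if spec.required:
                problems.append(f"missing required field: {spec.name}")
            continue
        if not isinstance(record[spec.name], spec.dtype):
            problems.append(f"{spec.name}: expected {spec.dtype.__name__}")
    # Reject fields the contract does not list, so extra data cannot leak through.
    allowed = {spec.name for spec in contract}
    for key in record:
        if key not in allowed:
            problems.append(f"unexpected field: {key}")
    return problems


print(contract_violations({"document_id": "d1", "text": "hello"}))
print(contract_violations({"document_id": 7, "email": "a@b.c"}))
```

Rejecting unlisted fields is a deliberate choice here: it keeps data that was never agreed on from flowing to a third-party tool by accident.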

Governance, privacy, and compliance

Governance defines how data is collected, stored, and used. If you’re handling sensitive data (PII, financial data, health information), you’ll need clear data residency, encryption standards, access audits, and incident response procedures. Tools with robust governance features—policy enforcement, data masking, and configurable retention—make it easier to align with internal controls and external regulations. AI Tool Resources notes that defining governance requirements early prevents expensive retrofits later in the tool lifecycle.
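
As a small illustration of data masking, the sketch below redacts email addresses before text leaves your environment. It is deliberately simplistic: the regular expression and the `mask_emails` helper are assumptions for this example, and real PII detection needs far broader coverage than a single pattern.

```python
import re

# Simplified email pattern for illustration; it will not catch every valid address.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def mask_emails(text: str, placeholder: str = "[EMAIL]") -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub(placeholder, text)


print(mask_emails("Contact jane.doe@example.com today"))
```

Masking at the boundary, before a request reaches the vendor, means the governance control does not depend on the vendor's own handling of the data.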

Security considerations

Security is a shared responsibility between tool providers and customer teams. Evaluate model integrity, input/output protections, threat modeling, and vulnerability management. Look for features like role-based access control, secure API gateways, and transparent risk disclosures. Selecting a tool with mature security practices reduces the attack surface and helps maintain trust with users and stakeholders. Regular security assessments should accompany any implementation plan.

Deployment models and architecture

Cloud-based AI tools offer quick start and elasticity, while on-premises or hybrid deployments provide control over data and compliance. Your architecture should define where model inference runs, how data is partitioned, and how results are cached. Hybrid setups can balance speed and governance, but require clear orchestration between cloud services and local infrastructure. The analysis should include latency budgets, regional availability, and disaster recovery planning.
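
A latency budget can be checked with simple arithmetic: add up the expected time of each stage in the request path and compare the total with the target. The stage names and millisecond figures below are hypothetical placeholders, not benchmarks.

```python
def remaining_budget_ms(budget_ms: float, stages: dict[str, float]) -> float:
    """Return the headroom left after all stages; a negative value means over budget."""
    return budget_ms - sum(stages.values())


# Hypothetical per-stage estimates for one request, in milliseconds.
request_path = {
    "network_round_trip": 40,
    "preprocessing": 15,
    "model_inference": 300,
    "postprocessing": 20,
}

headroom = remaining_budget_ms(500, request_path)
print(f"headroom: {headroom} ms")
```

Running the same calculation for a cloud-only and a hybrid layout makes the trade-off concrete, since moving inference on-premises typically changes the network and inference terms in opposite directions.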

Cost of ownership and ROI factors

Total cost of ownership includes licenses or subscriptions, data transfer costs, hosting infrastructure, and internal maintenance. Off-the-shelf tools offer predictable monthly costs and a lower initial investment, while custom builds carry higher upfront costs but potentially lower long-term operating expenses if the workload is stable and highly specialized. AI Tool Resources’ analysis shows that ROI hinges on alignment with business outcomes, data quality, and the ability to automate high-value tasks at scale.
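
The trade-off between a predictable subscription and a higher upfront build cost can be framed as a break-even calculation. The sketch below assumes a deliberately simple cost model (one upfront amount plus a flat monthly cost), and every figure in it is hypothetical.

```python
from typing import Optional


def cumulative_cost(upfront: float, monthly: float, months: int) -> float:
    """Total spend after the given number of months under a flat monthly cost."""
    return upfront + monthly * months


def break_even_month(sub_upfront: float, sub_monthly: float,
                     custom_upfront: float, custom_monthly: float,
                     horizon_months: int = 120) -> Optional[int]:
    """First month where the custom build is no more expensive, or None within the horizon."""
    for month in range(1, horizon_months + 1):
        custom = cumulative_cost(custom_upfront, custom_monthly, month)
        subscription = cumulative_cost(sub_upfront, sub_monthly, month)
        if custom <= subscription:
            return month
    return None


# Hypothetical figures: subscription with 5,000 onboarding and 4,000 per month,
# custom build with 120,000 upfront and 1,500 per month to operate.
print(break_even_month(5_000, 4_000, 120_000, 1_500))
```

With these made-up numbers the custom build breaks even in month 46. If the workload is likely to change before then, the subscription remains the cheaper option, which is the "stable and highly specialized" condition expressed as arithmetic.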

Vendor ecosystems and support

Choosing a tool means joining an ecosystem of connectors, plugins, and communities. Consider the breadth of integrations, the quality of documentation, and the availability of professional services. A vibrant ecosystem reduces integration risk and accelerates value realization. Support levels—SLA responsiveness, dedicated customer success, and proactive monitoring—play a pivotal role in successful deployments and long-term satisfaction.

Real-world scenarios: teams of different sizes

Small teams often prioritize speed and simplicity, leaning toward off-the-shelf AI tools with clear pricing and minimal setup. Mid-sized teams may benefit from a hybrid approach, combining ready-made components with custom connectors. Large enterprises frequently demand bespoke governance, data control, and scale, which favor custom or heavily customized solutions alongside managed services. Across sizes, the decision should reflect the organization’s data maturity, risk tolerance, and product roadmap.

Pilot planning and success metrics

A disciplined pilot starts with clearly defined objectives, success criteria, and a representative data set. Establish baseline metrics for accuracy, latency, and user satisfaction. Use a documented evaluation plan with exit criteria to avoid scope creep. Track governance and security outcomes, not just performance. AI Tool Resources stresses that pilots should be time-limited, with explicit go/no-go criteria and a path to broader rollout if successful.
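
Explicit go/no-go criteria are easiest to enforce when they are written down as thresholds before the pilot starts. The following sketch assumes three hypothetical metrics and thresholds; `Criterion`, `EXIT_CRITERIA`, and `go_no_go` are illustrative names, not part of any tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """A single exit criterion with a direction (higher or lower is better)."""
    metric: str
    threshold: float
    higher_is_better: bool

    def passes(self, value: float) -> bool:
        if self.higher_is_better:
            return value >= self.threshold
        return value <= self.threshold


# Hypothetical thresholds agreed on before the pilot begins.
EXIT_CRITERIA = [
    Criterion("accuracy", 0.90, higher_is_better=True),
    Criterion("p95_latency_ms", 800, higher_is_better=False),
    Criterion("user_satisfaction", 4.0, higher_is_better=True),
]


def go_no_go(results: dict[str, float],
             criteria: list[Criterion] = EXIT_CRITERIA) -> tuple[bool, list[str]]:
    """Return (go, failed_metrics); a metric that was never measured counts as failed."""
    failed = [c.metric for c in criteria
              if c.metric not in results or not c.passes(results[c.metric])]
    return (not failed, failed)


print(go_no_go({"accuracy": 0.93, "p95_latency_ms": 650, "user_satisfaction": 4.2}))
print(go_no_go({"accuracy": 0.93, "p95_latency_ms": 950}))
```

Treating an unmeasured metric as a failure prevents a pilot from passing simply because a check, such as a governance or security review, was skipped.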

Pitfalls and anti-patterns

Beware of feature-led selection without real use-case validation, vendor lock-in risk, and insufficient data governance planning. Skipping a pilot, ignoring security or compliance implications, and overestimating model capabilities can lead to disappointing outcomes and costly retrofits. A bias toward the newest tool can obscure practical constraints like data quality, team readiness, and integration complexity.

A pragmatic decision framework

Adopt a structured framework: (1) articulate goals and constraints, (2) map data flows and governance requirements, (3) shortlist candidates by criteria alignment, (4) run a controlled pilot, (5) measure outcomes against defined KPIs, (6) decide on a hybrid approach when appropriate, and (7) plan for governance, security, and ongoing optimization. This approach reduces improvisation and increases confidence in the final choice.

When to retire or switch tools

No tool lasts forever. Establish criteria for sunset when performance degrades, governance becomes impractical, or total cost of ownership outpaces benefits. Maintain an exit strategy, including data extraction, interoperability checks, and migration plans. The AI Tool Resources team recommends periodic reassessment to ensure tools remain aligned with evolving requirements and regulatory landscapes.


Comparison

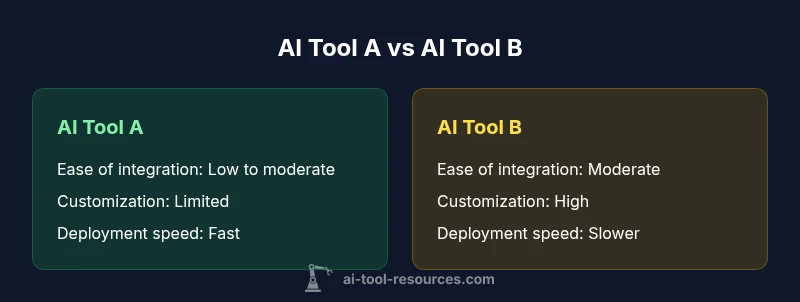

| Feature | AI Tool (off-the-shelf) | Custom-Built AI Solution |
|---|---|---|
| Integration effort | Low to moderate | High |
| Customization level | Limited | Extensive, tailored |
| Time to value | Days to weeks | Months to quarters |
| Data governance & residency | Shared data handling | Full control over data |
| Security controls | Vendor-managed security | Custom security architecture |
| Ongoing maintenance | Vendor updates | In-house upkeep |
| Vendor support | Standard support tiers | Dedicated team & SLA |
| Total cost of ownership | Predictable subscription | Capex + ongoing ops |

Upsides of off-the-shelf tools

- Rapid deployment and faster value realization

- Lower upfront complexity and simpler vendor management

- Broad ecosystem and regular updates

- Scalability with minimal internal resources

Weaknesses of off-the-shelf tools

- Less control over data governance and customization

- Ongoing subscription costs can accumulate over time

- Potential misalignment with niche workflows

- Dependence on vendor roadmap and security posture

Off-the-shelf AI Tool wins for speed; custom-built wins for deep customization

Choose the off-the-shelf option when speed and predictable pricing matter. Opt for a custom-built solution for niche processes and strict data control; many teams benefit from a hybrid strategy.

FAQ

What counts as an AI tool in this comparison?

An AI tool includes any software or service that applies ML/AI to automate tasks, generate insights, or augment decisions. This covers hosted services, APIs, and AI modules within larger platforms.


How do you measure total cost of ownership for AI tools?

TCO includes upfront licensing or subscriptions, data transfer and storage, infrastructure for hosting, and ongoing maintenance. It also accounts for internal labor and any migration or integration costs.


What are common integration challenges when adopting an AI tool?

Challenges include data compatibility, API rate limits, authentication and access control, data governance alignment, and ensuring monitoring across disparate systems.


When is a custom solution worth the extra effort?

Custom solutions justify themselves when data residency, domain-specific tailoring, or unique performance requirements necessitate architecture not offered by off-the-shelf tools.


How should data governance be addressed with third-party tools?

Define ownership, access controls, retention policies, and audit trails. Ensure the vendor supports data localization, encryption, and compliant data processing agreements.


How do you run an effective pilot for an AI tool?

Select a representative dataset, establish success metrics, set a time limit, and define go/no-go criteria. Include governance and security checks in the pilot.


Key Takeaways

- Define goals and constraints before evaluating tools

- Assess integration, governance, and cost early

- Pilot with representative data so results reflect real-world conditions

- Consider hybrid approaches for balance

- Prioritize vendor support and security posture