Submit AI Tool: A Practical How-To Guide

Learn to prepare, validate, and submit an AI tool across platforms with clear docs, safety checks, and reproducible demos. This educational guide from AI Tool Resources helps developers, researchers, and students navigate the submission process in 2026.

Submitting an AI tool involves packaging a clear description, safety and licensing docs, and a runnable demo, then choosing a submission channel (app marketplace, research repository, or tool catalog) and following each platform’s guidelines. Run validation tests, address privacy and security checks, and await review. The AI Tool Resources team recommends documenting data sources and usage limits for trust.

Understanding submission frameworks for AI tools

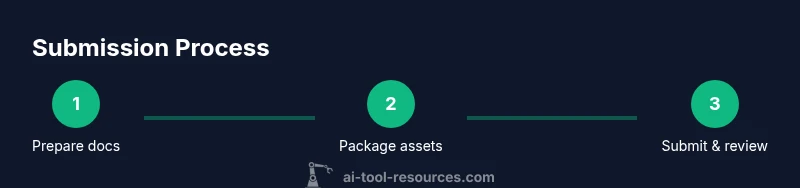

Submitting an AI tool is more than uploading files; it means aligning with platform expectations for description, safety, reproducibility, and governance. Most platforms offer a submission framework built on three pillars: documentation, a runnable demo, and compliance materials. The submission workflow varies by platform, but the core principles stay the same: clarity, safety, and verifiability. According to AI Tool Resources, a well-structured submission increases the chance of a smooth review and faster availability to users. In 2026, many developers report that robust submissions reduce back-and-forth with reviewers and accelerate adoption.

The user journey typically begins by selecting a submission channel and compiling the necessary artifacts. Different channels reward different artifacts: marketplaces emphasize user-facing docs and privacy; research repos focus on reproducibility and licensing; enterprise catalogs look for governance and audit trails. For developers, researchers, and students, understanding these frameworks helps plan the submission project and allocate time efficiently. The first decision is how you intend users to access your tool: via an API, as a stand-alone model, or as an embedded component in another product. Each option dictates the artifacts you will prepare and the validation you must perform.

Core documentation you must prepare to submit an AI tool

A solid submission relies on clear, complete documentation. At minimum, prepare a user-facing description that highlights the tool’s purpose, intended use cases, and limitations. Include a license statement that defines permissible uses and redistribution terms. Safety and privacy docs should outline data handling, model behavior safeguards, and potential risk scenarios. Data provenance and licensing details should be explicit, along with any third-party dependencies. The goal is to give reviewers confidence that your submission adheres to governance and compliance standards. Remember to document input/output formats, API endpoints (if applicable), rate limits, and error handling conventions. A well-structured README and a concise data usage note can significantly reduce back-and-forth with reviewers and speed up platform approval. The AI Tool Resources Analysis (2026) emphasizes the importance of reproducible artifacts, including clear setup steps and environment specifications, to support validation across ecosystems.
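One way to keep the documentation set complete is to check the package directory before submitting. The sketch below is a minimal illustration in Python; the file names (`README.md`, `LICENSE`, `SAFETY.md`, `DATA_USAGE.md`) are assumptions chosen for the example, not names that any particular platform requires.

```python
from pathlib import Path

# Hypothetical file names; adjust to whatever your target platform expects.
REQUIRED_DOCS = ("README.md", "LICENSE", "SAFETY.md", "DATA_USAGE.md")


def missing_docs(package_dir, required=REQUIRED_DOCS):
    """Return the required documents that are absent or empty in package_dir."""
    root = Path(package_dir)
    missing = []
    for name in required:
        path = root / name
        # Treat an empty file as missing: a placeholder is not documentation.
        if not path.is_file() or path.stat().st_size == 0:
            missing.append(name)
    return missing
```

Calling `missing_docs("submission/")` on a hypothetical package folder returns the names still to be written, in the order listed in `REQUIRED_DOCS`.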

Choosing the right submission channel and plan

Not all submission channels are the same. A consumer-oriented app marketplace prioritizes UX, licensing clarity, and privacy reviews, while a research repository prioritizes reproducibility, open data considerations, and licensing flexibility. An enterprise tool catalog often requires governance, audit trails, and security attestations. Create a channel plan that maps each artifact to its audience: technical documentation for developers, governance notes for compliance teams, and a public README for end users. When you plan your submission, set expectations for review timelines, potential iterations, and post-launch maintenance. A phased rollout can help: begin with a limited, testable version to gather feedback, then expand documentation and coverage as reviews progress. This planning stage is where many teams align on success metrics and define what “done” looks like for each channel.
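A channel plan can be as simple as a lookup table. The following sketch assumes three channels named after the categories above; the artifact lists are illustrative guesses drawn from this section, not requirements published by any platform.

```python
# Illustrative channel plan. Channel names follow the text above; the artifact
# lists are assumptions, not requirements published by any platform.
CHANNEL_PLAN = {
    "marketplace": {"public_readme", "license", "privacy_notice"},
    "research_repository": {"license", "environment_spec", "reproduction_steps"},
    "enterprise_catalog": {"governance_notes", "audit_trail", "security_attestation"},
}


def outstanding_artifacts(channel, prepared):
    """Return, in sorted order, the artifacts the channel still needs."""
    if channel not in CHANNEL_PLAN:
        raise KeyError(f"unknown channel: {channel!r}")
    return sorted(CHANNEL_PLAN[channel] - set(prepared))
```

Using sets keeps the comparison independent of the order in which artifacts were prepared, and the sorted result gives a stable checklist to share with the team.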

Building a robust runnable demo and safety materials

The runnable demo is your best evidence that the tool works as advertised. Package a minimal, self-contained example that exercises core capabilities without exposing sensitive data. Include sample inputs, expected outputs, and a clear demonstration of failure modes and how the tool handles them. Safety materials should cover model guardrails, data handling, opt-in telemetry, and user notices about potential biases. If your tool processes personal data, include a privacy impact assessment and an explanation of data retention practices. The demo should be reproducible across environments, so provide environment specifications, container scripts, or setup instructions that enable reviewers to run the demo with minimal friction. A well-crafted demo not only showcases capability but also communicates responsibility, an increasingly important factor in the submission process.
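As a rough illustration of pairing sample inputs with expected outputs and one failure mode, here is a minimal demo harness. The `word_count_tool` function is a hypothetical stand-in for a real tool, and the cases are invented for the example.

```python
def word_count_tool(text):
    """Stand-in for the real tool: counts whitespace-separated words."""
    if not isinstance(text, str) or not text.strip():
        raise ValueError("input must be a non-empty string")
    return len(text.split())


# Sample inputs paired with expected outputs; None marks an expected rejection.
DEMO_CASES = [
    ("hello world", 2),
    ("one two  three", 3),
    ("", None),
]


def run_demo(tool, cases):
    """Run each case and record whether the observed behaviour matched."""
    results = []
    for sample, expected in cases:
        try:
            observed = tool(sample)
        except ValueError as exc:
            # Failure mode: the tool rejects bad input instead of guessing.
            results.append((sample, f"rejected: {exc}", expected is None))
        else:
            results.append((sample, observed, observed == expected))
    return results


if __name__ == "__main__":
    for sample, outcome, ok in run_demo(word_count_tool, DEMO_CASES):
        print(f"{sample!r:20} -> {outcome} [{'ok' if ok else 'MISMATCH'}]")
```

Keeping the cases in plain data makes it easy for a reviewer to add their own inputs without reading the tool's internals.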

Validation, testing, and compliance checks

Validation tests confirm both functionality and reliability. Develop unit tests for core functions, integration tests for API calls, and end-to-end tests for typical user flows. Include test data that mirrors real-world usage but avoids exposing sensitive information. Compliance checks should cover licensing, data sourcing, and usage limitations. Note any safety constraints and how the tool mitigates misuse. Reviewers often look for traceability: where code came from, how models were trained, and how provenance is maintained. The AI Tool Resources Analysis (2026) highlights that transparent validation results and a clear risk mitigation plan improve reviewer confidence and speed up approval. Provide a test report or link to test artifacts and ensure versioning is clearly documented.
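A test report that ties results to a version can be generated with a few lines of code. This is a sketch only: the report fields (`tool_version`, `total`, `passed`, `failed`) are assumed names, not a format that any reviewer is known to require.

```python
import json


def build_test_report(tool_version, results):
    """Summarise test outcomes for one tool version.

    `results` maps a test name to True (passed) or False (failed).
    """
    failed = sorted(name for name, passed in results.items() if not passed)
    return {
        "tool_version": tool_version,
        "total": len(results),
        "passed": len(results) - len(failed),
        "failed": failed,
    }


def report_as_json(report):
    """Serialise the report with stable key order so diffs stay readable."""
    return json.dumps(report, indent=2, sort_keys=True)
```

Listing failed tests by name, rather than only counting them, gives reviewers something concrete to ask about.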

Submitting and responding to platform reviews

With artifacts prepared, submit your AI tool through the chosen channel. Ensure all metadata fields are accurate and aligned with the platform’s schema. After submission, monitor notifications regularly; reviewers may request clarifications, additional data, or test results. Respond promptly with precise, evidence-backed answers to avoid delays. If a rejection occurs, review the feedback carefully and update the submission package accordingly. Keep a change log and version notes for each resubmission, so reviewers can see how the tool evolved. Throughout the process, maintain open lines of communication with platform admins and stakeholders, and reiterate how your tool adheres to safety, licensing, and governance standards.
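Checking metadata against a schema before submitting can catch simple mistakes early. The schema below is hypothetical: the field names and types are assumptions for illustration and should be replaced with the fields your platform actually documents.

```python
# Hypothetical schema: field name -> expected Python type.
METADATA_SCHEMA = {
    "name": str,
    "version": str,
    "license": str,
    "description": str,
    "data_sources": list,
}


def validate_metadata(metadata, schema=METADATA_SCHEMA):
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    for field, expected_type in schema.items():
        if field not in metadata:
            problems.append(f"missing field: {field}")
        elif not isinstance(metadata[field], expected_type):
            problems.append(f"{field}: expected {expected_type.__name__}")
        elif not metadata[field]:
            problems.append(f"{field}: must not be empty")
    # Flag fields the schema does not know about; they are often typos.
    for field in sorted(set(metadata) - set(schema)):
        problems.append(f"unexpected field: {field}")
    return problems
```

Returning every problem at once, instead of stopping at the first, shortens the fix-and-recheck loop before a resubmission.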

Maintaining submissions and long-term quality

Post-approval maintenance is as important as the initial submission. Plan for updates, deprecations, and security patches, and communicate changes clearly to users and reviewers. Track performance metrics, user feedback, and safety incidents, and incorporate improvements into subsequent releases. Regularly refresh documentation to reflect changes in APIs, data handling, or governance requirements. As you submit updates, keep stakeholders informed about ongoing compliance checks and testing outcomes. The long-term health of your submission depends on proactive maintenance and transparent communication with the community and platform partners.
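Communicating changes is easier when each release produces a changelog entry in a fixed shape. The helper below is a small sketch; the entry layout (version, ISO date, one bullet per change) is an assumed convention, not a platform requirement.

```python
from datetime import date


def changelog_entry(version, released, changes):
    """Format one changelog entry as plain text, keeping the caller's order."""
    if not changes:
        raise ValueError("a release needs at least one recorded change")
    lines = [f"{version} ({released.isoformat()})"]
    lines += [f"- {change}" for change in changes]
    return "\n".join(lines)
```

For example, `changelog_entry("1.1.0", date(2026, 1, 15), ["Patched dependency"])` yields a two-line entry that can be prepended to a changelog file.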

Tools & Materials

- Package manifest (README with scope, licensing): describe scope, usage scenarios, and licensing terms

- Runnable demo sample: self-contained example that demonstrates core features

- License documentation: specify redistribution and usage rights

- Safety and privacy policy document: outline data handling, guardrails, and risk mitigation

- Dependency list and environment spec: include versions and setup steps

- Submission form or metadata template: provide accurate metadata for discovery

- Evidence of test results (unit/integration): optional but recommended

- Privacy impact assessment: optional but helpful for data-sensitive tools
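For the environment spec item in the list above, the installed versions can be captured programmatically rather than typed by hand. This sketch uses Python's standard `importlib.metadata`; the shape of the returned dictionary is an assumption made for the example.

```python
import platform
import sys
from importlib import metadata


def environment_spec(packages):
    """Describe the interpreter, OS, and installed versions of `packages`."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # Record the gap explicitly so reviewers see what is missing.
            versions[name] = "not installed"
    return {
        "python": ".".join(str(part) for part in sys.version_info[:3]),
        "platform": platform.system(),
        "packages": versions,
    }
```

Pass the distribution names your demo depends on, then store the result next to the setup instructions so reviewers can compare their environment with yours.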

Steps

Estimated time: 2-4 weeks

1. Prepare artifacts

Assemble documentation, license texts, safety notes, and a runnable demo. Ensure all files are clearly named and organized in a dedicated submission package. This step sets the foundation for a smooth review.

Tip: Create a single source of truth for all artifacts to avoid mismatches.

2. Package for submission

Bundle artifacts into a clean archive or repository with version tagging. Include a changelog and a quick-start guide so reviewers can replicate results quickly.

Tip: Keep sensitive data out of the demo package; use placeholders if needed.

3. Choose the submission channel

Select the platform that best aligns with your audience: consumer marketplace for end users, research repository for reproducibility, or enterprise catalog for governance. Map artifacts to channel requirements.

Tip: Document target audience and reviewer expectations for the chosen channel.

4. Submit the tool

Upload artifacts and complete all metadata fields. Double-check licensing, data usage, and safety notes before submission.

Tip: Verify all links and references resolve correctly in the submission form.

5. Monitor and respond to review

Check notifications frequently. Provide precise answers to reviewer questions and supply any requested artifacts promptly.

Tip: Prepare a template response to common reviewer questions to speed up replies.

6. Plan post-approval maintenance

Define a plan for updates, security patches, and user communications. Schedule periodic reviews to keep the submission current.

Tip: Maintain a public changelog to demonstrate ongoing stewardship.
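The packaging step above (bundling artifacts into a version-tagged archive) can be sketched with the Python standard library. The `<name>-<version>.zip` naming and the SHA-256 checksum are assumptions chosen for illustration; a platform may expect a different format.

```python
import hashlib
import zipfile
from pathlib import Path


def build_archive(package_dir, out_dir, name, version):
    """Zip package_dir into <name>-<version>.zip and return (path, sha256)."""
    root = Path(package_dir)
    archive = Path(out_dir) / f"{name}-{version}.zip"
    with zipfile.ZipFile(archive, "w", zipfile.ZIP_DEFLATED) as bundle:
        # Sort for a stable file order inside the archive.
        for path in sorted(root.rglob("*")):
            if path.is_file():
                bundle.write(path, path.relative_to(root).as_posix())
    # The checksum lets reviewers confirm they received the same bytes.
    digest = hashlib.sha256(archive.read_bytes()).hexdigest()
    return archive, digest
```

Keep `out_dir` outside `package_dir`, otherwise the archive would try to include itself. Publishing the checksum alongside the archive gives reviewers a quick integrity check.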

FAQ

What qualifies as a runnable demo for submitting an AI tool?

A runnable demo should be a self-contained example that clearly demonstrates core features without requiring sensitive data. Include input-output examples, environment setup, and a minimal dataset to reproduce results.


Which platforms support AI tool submissions?

Common channels include app marketplaces, research repositories, and enterprise tool catalogs. Each has unique requirements for documentation, licensing, and governance.


How long does a typical review take?

Review times vary by platform and submission complexity. Plan for a few days to several weeks and maintain communication with reviewers.


What if my submission is rejected?

Reviewers will provide feedback. Update artifacts accordingly, version the submission, and resubmit with a clear explanation of changes made.


Do I need to publish data sources?

Yes, disclose data provenance and licensing where applicable to increase trust and reviewer confidence.


Is there a risk assessment requirement when submitting an AI tool?

Many platforms require a safety and risk assessment to outline potential misuse and mitigation strategies.



Key Takeaways

- Submission artifacts must be complete and transparent

- Choose a channel aligned with your audience and governance needs

- Provide runnable demos and reproducible environments

- Maintain ongoing updates and clear communications