How to Find New AI Tools: A Practical Guide for Developers

Discover a repeatable process to find, test, and adopt new AI tools. This in-depth guide covers goals, signals, credible sources, testing workflows, governance, and practical tips for sustainable AI tool discovery.

You will learn a reliable, repeatable process to discover new AI tools tailored to your needs. The guide covers search strategies, credibility signals, testing criteria, and a reusable decision checklist you can apply every month. By following the steps, you’ll expand your toolkit with high-value tools while avoiding noise and hype.

Framing your search goals

To find new AI tools effectively, start by framing clear, testable goals. Identify the problem you want to solve, the domain where you operate, and the minimum viable impact you expect from a tool. Decide your constraints: budget, data sensitivity, and integration requirements. This upfront planning saves time because it keeps you from chasing flashy claims that don’t fit your needs. According to AI Tool Resources, success starts with a documented objective and a repeatable evaluation plan rather than an ad hoc hunt. As you define success criteria, consider both technical performance (accuracy, latency, throughput) and organizational fit (team skills, deployment model, support). Write these goals and criteria down so that every source you review is assessed against the same yardstick. By the end of this stage you should have a short list of target domains and a scoring rubric you can reuse monthly.
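
A scoring rubric can be as simple as a weighted average. The sketch below assumes each criterion is scored from 1 to 5; the criterion names and weights in `RUBRIC` are hypothetical placeholders rather than a recommended weighting, so replace them with the metrics from your own goals.

```python
from dataclasses import dataclass

# Hypothetical rubric: criterion -> weight. Weights must sum to 1.0.
RUBRIC: dict[str, float] = {
    "accuracy": 0.35,
    "latency": 0.20,
    "integration_fit": 0.25,
    "team_fit": 0.20,
}


@dataclass
class Evaluation:
    tool: str
    scores: dict[str, float]  # each criterion scored on a 1-5 scale


def weighted_score(ev: Evaluation, rubric: dict[str, float] = RUBRIC) -> float:
    """Return the weighted average of the criterion scores (still on a 1-5 scale)."""
    if abs(sum(rubric.values()) - 1.0) > 1e-9:
        raise ValueError("rubric weights must sum to 1.0")
    missing = set(rubric) - set(ev.scores)
    if missing:
        raise ValueError(f"{ev.tool} is missing scores for {sorted(missing)}")
    for name in rubric:
        if not 1 <= ev.scores[name] <= 5:
            raise ValueError(f"{name} score must be between 1 and 5")
    return sum(weight * ev.scores[name] for name, weight in rubric.items())


candidate = Evaluation(
    "example-tool",
    {"accuracy": 4, "latency": 3, "integration_fit": 5, "team_fit": 2},
)
print(round(weighted_score(candidate), 2))  # 3.65
```

Rejecting incomplete or out-of-range scores up front keeps month-to-month comparisons honest: a tool cannot rank highly simply because a criterion was skipped.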

Building a credible signal list

A robust signal list is the backbone of any disciplined discovery process. Gather signals from diverse sources: independent benchmarks, documented use cases, community sentiment, and verified results from credible publications. Prioritize signals you can observe or reproduce, such as published performance metrics, reproducible experiments, or accessible demos. Keep a running score for each potential tool (for example, credibility, relevance, and ease of integration) so you can later compare candidates on equal terms. Schedule a quarterly refresh to drop underperforming options and move promising candidates up your list. This approach keeps your search focused on tools that actually address your needs rather than on shiny features. When you document signals, tie them back to your initial goals so you can explain your rationale when stakeholders ask about decisions.
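
A running score with an independence requirement might look like the following minimal sketch. The `Signal` and `Candidate` types, the credibility-times-relevance score, and the threshold of three independent signals (taken from the tip in the Steps section) are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    source: str        # e.g. "independent benchmark", "vendor case study"
    independent: bool  # True if the vendor did not produce it
    credibility: int   # 1-5, how trustworthy the source is
    relevance: int     # 1-5, how closely it matches your goals


@dataclass
class Candidate:
    name: str
    signals: list[Signal] = field(default_factory=list)

    def independent_count(self) -> int:
        return sum(1 for s in self.signals if s.independent)

    def running_score(self) -> float:
        """Mean of credibility x relevance over all signals (1-25; 0 when empty)."""
        if not self.signals:
            return 0.0
        return sum(s.credibility * s.relevance for s in self.signals) / len(self.signals)


def shortlist(candidates: list[Candidate], min_independent: int = 3) -> list[Candidate]:
    """Keep candidates with enough independent signals, best running score first."""
    eligible = [c for c in candidates if c.independent_count() >= min_independent]
    return sorted(eligible, key=lambda c: c.running_score(), reverse=True)
```

Filtering on independent signals before ranking means a tool backed only by vendor material never reaches the top of the list, however enthusiastic that material is.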

Source evaluation criteria

Evaluating AI tool claims requires a structured lens. Check licensing terms, data handling policies, and whether training data and benchmarks are disclosed. Look for independent benchmarks, third-party audits, or reproducible test results. Verify the vendor’s roadmap and support commitments, and assess the security posture of the tool (authentication, encryption, access controls). If you are in regulated environments, ensure the tool aligns with governance requirements. Based on AI Tool Resources analysis, credible signals include transparent data practices, clearly defined limitations, and documented test results rather than marketing hype. Cross-check any extraordinary performance claims with multiple sources before you consider adoption. Finally, note your own constraints—team readiness, cloud costs, and compliance needs—to keep the evaluation grounded in reality.
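
The evaluation criteria above can be turned into an explicit gate so that no candidate advances with an unchecked item. This is a hypothetical checklist: the check names in `REQUIRED_CHECKS` and the rule of at least two independent confirmations for headline claims are assumptions to adapt to your own policy.

```python
# Hypothetical checklist derived from the evaluation criteria above.
REQUIRED_CHECKS = (
    "license_reviewed",
    "data_policy_disclosed",
    "benchmarks_reproducible",
    "limitations_documented",
    "security_reviewed",
)


def credibility_gaps(
    findings: dict[str, bool],
    independent_confirmations: int,
    regulated: bool = False,
) -> list[str]:
    """Return the unmet checks; an empty list means the candidate may proceed."""
    checks = list(REQUIRED_CHECKS)
    if regulated:
        checks.append("governance_approved")
    # Any check not explicitly recorded as passed counts as a gap.
    gaps = [check for check in checks if not findings.get(check, False)]
    # Headline performance claims need at least two independent confirmations.
    if independent_confirmations < 2:
        gaps.append("claims_cross_checked")
    return gaps
```

Treating a missing entry as a failure (rather than a pass) is the conservative default: an unreviewed license shows up as a gap instead of silently slipping through.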

Practical discovery channels

Begin with proactive channels that reliably surface new AI tools without forcing you to comb through random blogs. Subscribe to reputable newsletters focused on AI tooling, follow relevant GitHub repositories and arXiv announcements, and monitor Product Hunt and early-access programs from trusted vendors. Attend webinars or conferences where vendors publish case studies and demos. Build a habit of scanning peer-reviewed papers for novel techniques, which often ship with prototype tools. Ask your network about independent pilots they have run. By combining these channels, you create a steady stream of credible candidates rather than sporadic discoveries, and you build a curated pipeline of options to test in your own environment.
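
When several channels feed your pipeline, the same tool often appears under slightly different names. One possible approach, sketched here under the assumption that each channel yields a plain list of tool names, is to normalize the names and count how many distinct channels mention each tool. Treating multi-channel mentions as a priority signal is a heuristic, not a guarantee of quality, and the tool names below are hypothetical.

```python
import re
from collections import defaultdict


def normalize(name: str) -> str:
    """Lowercase and drop non-alphanumerics so "Foo-AI" and "foo ai" match."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def merge_channels(feeds: dict[str, list[str]]) -> dict[str, set[str]]:
    """Map each normalized tool name to the set of channels that mentioned it."""
    merged: dict[str, set[str]] = defaultdict(set)
    for channel, names in feeds.items():
        for name in names:
            merged[normalize(name)].add(channel)
    return dict(merged)


def corroborated(merged: dict[str, set[str]], min_channels: int = 2) -> list[str]:
    """Tools seen in at least `min_channels` distinct channels, sorted by name."""
    return sorted(tool for tool, channels in merged.items() if len(channels) >= min_channels)


# Hypothetical tool names surfaced by three channels in one week.
feeds = {
    "newsletter": ["Foo-AI", "BarTool"],
    "github": ["foo ai", "Baz"],
    "arxiv": ["FooAI"],
}
merged = merge_channels(feeds)
print(corroborated(merged))  # ['fooai']
```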

Hands-on testing workflow

Set up a controlled testing plan that minimizes risk while delivering meaningful data. Start with a small, well-defined pilot: a single use case, a limited dataset, and a clear success criterion. Create a lightweight integration sandbox to evaluate compatibility with your stack, APIs, and security controls. Run a short benchmark comparing the new tool against your current baseline on the same task, using objective metrics you defined earlier. Document failures, edge cases, and required human-in-the-loop steps. If possible, collaborate with vendor engineers during the pilot to address gaps quickly. This practical approach helps you learn fast and decide within a bounded timeline whether to proceed with broader adoption.
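
The baseline comparison can be scripted so every pilot is judged the same way. This sketch assumes a classification-style task scored by exact-match accuracy and per-request latencies in milliseconds; `min_gain` and `max_p95_ms` are hypothetical success thresholds that should come from the criteria you defined earlier.

```python
import statistics
from dataclasses import dataclass


@dataclass
class RunResult:
    predictions: list[str]     # one output per test item, in dataset order
    latencies_ms: list[float]  # one latency measurement per request (2+ needed)


def accuracy(predictions: list[str], labels: list[str]) -> float:
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and equal length")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def p95(latencies_ms: list[float]) -> float:
    # n=20 yields 19 cut points; the last one is the 95th percentile.
    # "inclusive" keeps the estimate within the observed min/max.
    return statistics.quantiles(latencies_ms, n=20, method="inclusive")[-1]


def compare(baseline: RunResult, candidate: RunResult, labels: list[str],
            min_gain: float = 0.02, max_p95_ms: float = 500.0) -> dict:
    """Judge baseline and candidate on the same labels with the same thresholds."""
    base_acc = accuracy(baseline.predictions, labels)
    cand_acc = accuracy(candidate.predictions, labels)
    cand_p95 = p95(candidate.latencies_ms)
    return {
        "baseline_accuracy": base_acc,
        "candidate_accuracy": cand_acc,
        "candidate_p95_ms": cand_p95,
        "proceed": cand_acc - base_acc >= min_gain and cand_p95 <= max_p95_ms,
    }


# Toy pilot data for illustration only.
labels = ["spam", "ham", "spam", "ham", "spam"]
baseline = RunResult(["spam", "spam", "spam", "ham", "ham"], [90, 95, 100, 110, 120])
candidate = RunResult(["spam", "ham", "spam", "ham", "ham"], [100, 120, 130, 140, 150])
report = compare(baseline, candidate, labels)
print(report["proceed"])  # True
```

Requiring both an accuracy gain and a latency ceiling prevents a tool that is slightly more accurate but unusably slow from passing the pilot.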

Organizing and tracking findings

Adopt a simple, scalable tracking template to keep discoveries organized. Record the tool name, vendor link, primary use case, integration status, cost expectations, and measured outcomes from the pilot. Capture credibility signals alongside your evaluation scores, so you can explain why a tool was chosen or rejected later. Use a shared document or lightweight database to keep the team aligned, and set a recurring review cadence to prevent drift. By maintaining consistent records, you’ll enable faster re-evaluation as new information becomes available and avoid repeating prior mistakes.
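
A tracking template does not need special tooling; a typed record exported to CSV opens in any spreadsheet. The field names below mirror the list above but are otherwise hypothetical, as is the sample record.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ToolRecord:
    tool: str
    vendor_link: str
    use_case: str
    integration_status: str  # e.g. "candidate", "sandbox", "pilot", "rejected"
    cost_expectation: str    # free text until you have a real quote
    pilot_outcome: str       # measured result vs. baseline
    credibility_score: float
    decision: str            # e.g. "go", "no-go", "revisit"
    rationale: str           # the "why" behind the decision


def to_csv(records: list[ToolRecord]) -> str:
    """Serialize records to CSV text with one column per ToolRecord field."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ToolRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


record = ToolRecord(
    tool="example-tool",
    vendor_link="https://example.com",
    use_case="support ticket triage",
    integration_status="pilot",
    cost_expectation="unknown; trial license",
    pilot_outcome="0.80 accuracy vs 0.60 baseline",
    credibility_score=4.2,
    decision="go",
    rationale="beat baseline on the same dataset",
)
print(to_csv([record]))
```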

Integrating new tools into your workflow

Adopt a gradual rollout plan that protects existing systems and data. Define ownership for onboarding, security reviews, and ongoing governance. Create standard integration patterns (e.g., API-first design, logging, observability) and document them for future reuse. Establish a procurement and approval process that can scale with your organization, including sandbox access, trial licenses, and clearly defined sunset criteria. Train the team with short, focused sessions, and collect feedback to refine your approach. A deliberate integration plan reduces friction and accelerates value realization from new AI tools.
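
For a gradual rollout, one common pattern is deterministic percentage bucketing: hash each user ID into a bucket from 0 to 99 and enable the tool only for buckets below the current rollout percentage. The sketch assumes you have a stable user identifier; the stage percentages in `STAGES` are illustrative.

```python
import hashlib

# Illustrative rollout stages, as percentages of users.
STAGES = (5, 25, 50, 100)


def rollout_bucket(user_id: str, tool: str) -> int:
    """Map a user to a stable bucket in [0, 100) for a given tool."""
    digest = hashlib.sha256(f"{tool}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def is_enabled(user_id: str, tool: str, rollout_percent: int) -> bool:
    """True if the user falls inside the current rollout percentage."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    return rollout_bucket(user_id, tool) < rollout_percent


users = [f"user-{i}" for i in range(1000)]
for stage in STAGES:
    enabled = sum(is_enabled(u, "example-tool", stage) for u in users)
    print(f"{stage:>3}% stage -> {enabled} of {len(users)} users enabled")
```

Because buckets are stable, users enabled at an earlier stage stay enabled as the percentage grows, and rolling back is just lowering the percentage. Including the tool name in the hash keeps pilot cohorts independent across tools.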

Pitfalls to avoid and guardrails

Even with a solid process, common missteps can derail discovery efforts. Beware hype cycles, vendor lock-in, and unclear data handling practices. Don’t over-index on free tools if they lack stability or security guarantees. Avoid treating every new tool as a silver bullet; instead, compare against your own baseline and test for real value. Implement guardrails like data handling reviews, a documented decision log, and quarterly re-evaluations to catch drift early. Finally, maintain transparency with stakeholders by sharing evaluation criteria and pilot results openly.
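
A documented decision log with a periodic re-evaluation check takes only a few lines. This minimal sketch assumes a 90-day period as a stand-in for "quarterly" and uses hypothetical outcome labels and dates; it flags any tool whose most recent decision is older than that period.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Decision:
    tool: str
    decided_on: date
    outcome: str    # hypothetical labels: "adopt", "pilot", "reject"
    rationale: str


class DecisionLog:
    """Append-only log; entries are never edited, only superseded."""

    def __init__(self) -> None:
        self._entries: list[Decision] = []

    def record(self, decision: Decision) -> None:
        self._entries.append(decision)

    def latest(self) -> dict[str, Decision]:
        """Most recent decision per tool."""
        latest: dict[str, Decision] = {}
        for d in self._entries:
            current = latest.get(d.tool)
            if current is None or d.decided_on >= current.decided_on:
                latest[d.tool] = d
        return latest

    def due_for_review(self, today: date, period: timedelta = timedelta(days=90)) -> list[str]:
        """Tools whose latest decision is at least `period` old."""
        return sorted(
            tool for tool, d in self.latest().items() if today - d.decided_on >= period
        )


log = DecisionLog()
log.record(Decision("tool-a", date(2024, 1, 10), "adopt", "beat baseline in pilot"))
log.record(Decision("tool-b", date(2024, 3, 1), "pilot", "promising, needs security review"))
log.record(Decision("tool-a", date(2024, 4, 15), "adopt", "re-evaluated; still ahead"))
print(log.due_for_review(date(2024, 6, 1)))  # ['tool-b']
```

Keeping the log append-only preserves the history of why each call was made, which is what stakeholders usually ask for when a decision is revisited.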

Authority sources and final verdict

Authoritative sources you can consult include NIST’s AI risk management guidance (nist.gov), MIT’s AI research pages (mit.edu), and Stanford’s AI labs (stanford.edu). These references help validate signals, benchmarks, and governance practices you apply when evaluating tools. The AI Tool Resources team recommends following a disciplined, reproducible process rather than relying on marketing claims. By combining credible sources with a documented testing workflow, you can sustain a healthy pace of discovery without sacrificing reliability or security.

Tools & Materials

- Laptop or workstation with internet access (updated browser and development environment)

- Modern web browser (Chrome, Edge, or Firefox with the latest updates)

- Note-taking app or document template (for goals, signals, and evaluation scores)

- Access to project data or a test dataset (ensure data handling compliance)

- Pilot sandbox environment or staging account (a safe testing space for pilots)

- Vendor evaluation checklist template (optional; standardizes scoring)

- Security and governance guidelines (organizational policy reference)

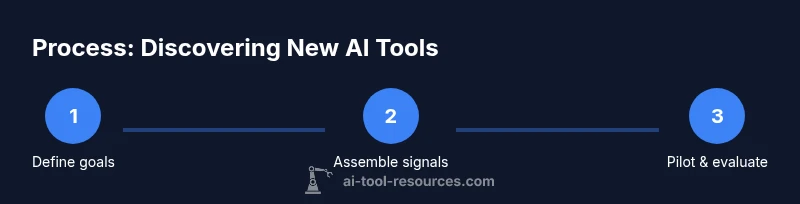

Steps

Estimated time: 45-60 minutes

- 1

Define your goals

Articulate the problem to solve, desired outcomes, and scope. Align with stakeholders and set measurable success criteria to guide testing.

Tip: Write down 2-3 objective metrics that matter most to your use case.

- 2

Assemble a signal list

Collect credibility signals from benchmarks, case studies, and vendor transparency. Create a scoring rubric to rate relevance and trustworthiness.

Tip: Include at least three independent signals per candidate.

- 3

Evaluate credibility signals

Cross-verify claims with multiple sources, check licensing, and assess data practices. Discard tools with opaque results or missing transparency.

Tip: Prioritize tools with reproducible results and clear limitations.

- 4

Explore discovery channels

Leverage newsletters, GitHub, arXiv, Product Hunt, and vendor demos to surface credible candidates.

Tip: Schedule a monthly channel review to add new candidates.

- 5

Run a small pilot

Set a narrow use case, limited data, and a defined success criterion. Use a sandbox to minimize risk.

Tip: Document any blockers and required human-in-the-loop steps.

- 6

Benchmark and compare

Run objective metrics on the pilot and compare against your baseline. Capture failures and edge cases.

Tip: Use the same dataset and task for a fair comparison.

- 7

Document results

Record findings in a shared template with scores, signals, and decision rationale.

Tip: Explain the 'why' behind go/no-go decisions.

- 8

Plan integration and onboarding

Outline ownership, security reviews, and deployment steps for a staged rollout.

Tip: Define sunset criteria to avoid tool drift.

FAQ

What counts as credible sources when evaluating AI tools?

Credible sources include independent benchmarks, peer-reviewed studies, and transparent data practices. Avoid marketing-only claims and look for reproducible results or third-party audits.

How often should I review or refresh my AI tool shortlist?

Set a regular cadence, such as quarterly, to re-evaluate pilots and prune underperforming options. Short cycles keep your tooling aligned with evolving needs.

How can I test a tool without risking data privacy?

Test with dummy or anonymized data in a sandbox environment. Conduct a risk assessment and ensure the vendor supports data governance and access controls.
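
If fully synthetic data is not practical, a lighter option is to pseudonymize identifying fields before records reach the sandbox. The sketch below uses keyed hashing (HMAC-SHA256) from the Python standard library. Pseudonymization is weaker than anonymization: free-text fields may still contain personal data, and anyone holding the key can re-derive tokens for guessed values, so keep the key outside the sandbox. The field names and records are hypothetical.

```python
import hashlib
import hmac
import secrets


def pseudonymize(value: str, key: bytes) -> str:
    """Deterministic keyed token: same value + same key -> same token (64 bits)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def prepare_for_sandbox(records: list[dict], sensitive_fields: set[str], key: bytes) -> list[dict]:
    """Return copies of records with the listed fields replaced by tokens."""
    cleaned = []
    for record in records:
        copy = dict(record)  # never mutate the source data
        for name in sensitive_fields:
            if copy.get(name) is not None:
                copy[name] = pseudonymize(str(copy[name]), key)
        cleaned.append(copy)
    return cleaned


# The key stays outside the sandbox (e.g. in your secrets manager).
key = secrets.token_bytes(32)
records = [
    {"customer_email": "jane@example.com", "ticket_text": "Cannot log in", "priority": "high"},
    {"customer_email": "jane@example.com", "ticket_text": "Reset worked", "priority": "low"},
]
safe = prepare_for_sandbox(records, {"customer_email"}, key)
```

Deterministic tokens preserve joins and per-customer counts in the pilot, which plain random replacement would lose.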

Should I rely on free tools or paid ones?

Free tools can be useful for exploration, but assess security, reliability, and data handling. Favor paid options when governance and long-term support justify the cost.

What if I have limited internal resources for testing?

Prioritize high-impact pilots with tight scope and automated data collection. Leverage vendor support where possible and reuse templates to maximize efficiency.

Key Takeaways

- Define clear goals before searching.

- Build a credible signal list with independent signals.

- Pilot selectively and test with objective metrics.

- Document decisions and govern adoption.