Guide to AI Tools: A Practical How-To for Developers and Researchers

A developer-focused guide to AI tools that teaches you how to select, evaluate, and deploy them. Learn criteria, governance, integration, cost, and best practices for scalable adoption.

With this guide to AI tools, you will learn to select, compare, and deploy AI tools across research, development, and education. The approach blends evaluation criteria, governance, and practical workflows so you can build a repeatable toolset. You’ll gain a framework for budgeting, risk, and integration that scales from pilots to production across teams and projects.

What is an AI tool and why it matters

An AI tool is a software component that uses artificial intelligence to perform tasks, assist decision making, or automate processes. In this guide, you’ll encounter a spectrum from simple automation scripts to complex platform ecosystems that support model training, deployment, and monitoring. The purpose of these tools is not just to replace human effort, but to augment capabilities, accelerate experimentation, and unlock insights that would be difficult to obtain manually. For researchers, developers, and students, selecting the right mix of tools means aligning capabilities with problems, data availability, and the desired outcomes. This guide helps you translate abstract AI promises into concrete, repeatable workflows that deliver measurable value.

Throughout, you’ll see practical examples and checklists designed to keep projects focused, compliant, and scalable. The language here is intentionally broad: AI tools range from coding assistants that generate boilerplate to data platforms that orchestrate ML pipelines. By understanding core categories and evaluation criteria, you’ll be prepared to make informed decisions rather than chasing every new feature.

Why a structured approach matters for AI tools

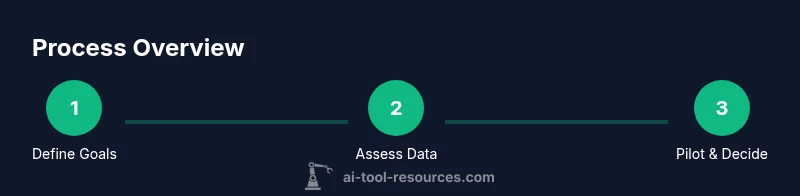

Guided adoption reduces risk and accelerates value capture. When you treat AI tool selection as a project with goals, milestones, and governance, you create clarity for stakeholders and teams. A structured approach helps you avoid vendor lock-in, data privacy pitfalls, and misaligned expectations. The strategy begins with clarifying the problem, identifying required data, and defining success metrics. From there, you map tools to use cases, estimate total cost of ownership, and establish oversight policies. In this guide, you’ll see how to balance exploration with discipline, ensuring you can scale from a pilot to a production workflow while maintaining compliance and ethical considerations.

AI Tool Resources suggests that a thoughtful framework improves adoption outcomes and ensures that AI capabilities align with organizational objectives. This alignment is essential for teams that rely on AI to drive research insights, software quality, or education outcomes.

Core categories of AI tools you should know

Modern AI ecosystems include several broad families, each serving different goals:

- ML and data platforms: for building, deploying, and monitoring ML models at scale.

- Data analysis and automation: tools that enable rapid exploration, transformation, and operationalization of data workflows.

- Coding assistants and copilots: AI that helps write, refactor, and debug code.

- Image, audio, and video generation: content creation and multimedia processing capabilities.

- Natural language processing and chat tools: agents that understand or generate human language.

- Experimentation and evaluation suites: platforms for running controlled experiments and benchmarking.

Each category has distinct evaluation criteria, licensing models, and integration patterns. A well-rounded toolkit typically combines elements from several categories to support end-to-end workflows—from data collection to model deployment and monitoring.

Evaluation framework: criteria that matter

A robust evaluation framework helps compare tools objectively. Key criteria include:

- Capability and fit for the use case: does the tool offer the required features, accuracy, and latency?

- Data handling and privacy: how data is stored, used, and protected; whether data residency is required.

- Integration and interoperability: API quality, existing tech stack compatibility, and data format support.

- Cost and total cost of ownership: licensing, usage tiers, and scalability expenses.

- Security, governance, and compliance: access controls, audit trails, and policy enforcement.

- Reliability and support: SLA terms, uptime history, and community or vendor support quality.

Document your scoring for each criterion to facilitate apples-to-apples comparisons. When possible, design a lightweight scoring rubric with weighted criteria for your team’s priorities.
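
As a rough illustration of such a rubric, the sketch below computes a weighted average of per-criterion scores. The criterion names follow the list above, but the weights, the 1-5 scale, and the two candidate tools are made-up assumptions, not recommendations.

```python
from typing import Dict

# Hypothetical weights reflecting one team's priorities; adjust to your own.
WEIGHTS: Dict[str, float] = {
    "capability": 0.30,
    "privacy": 0.20,
    "integration": 0.15,
    "cost": 0.15,
    "security": 0.10,
    "reliability": 0.10,
}


def weighted_score(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Return the weighted average of per-criterion scores (assumed 1-5 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Illustrative scores for two fictional candidates, not real products.
candidates = {
    "tool_a": {"capability": 4, "privacy": 3, "integration": 5,
               "cost": 2, "security": 4, "reliability": 4},
    "tool_b": {"capability": 3, "privacy": 5, "integration": 3,
               "cost": 4, "security": 5, "reliability": 3},
}

# Rank candidates from highest to lowest weighted score.
ranking = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
```

Dividing by the total weight keeps the result on the same scale as the inputs even if the weights do not sum exactly to 1.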

Data privacy, security, and governance considerations

AI tools handle sensitive information in various ways. Before committing, map data flows: where data enters the tool, how it is processed, and where outputs are stored. Establish data minimization practices and ensure you have explicit consent and security measures for handling personal or proprietary data. Governance should cover access control, retention policies, and incident response plans. Consider conducting a lightweight risk assessment for potential model bias, data leakage, or unintended data exposure. Keeping stakeholders aligned on data governance reduces risk and enables compliant adoption across departments.
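
One simple way to apply data minimization is an allow-list filter that runs before any record leaves your environment. The sketch below assumes a hypothetical support-ticket record; the field names and the `minimize` helper are illustrative only.

```python
from typing import Any, Dict, Iterable

# Hypothetical allow-list: only these fields may be sent to an external tool.
ALLOWED_FIELDS = ("ticket_id", "category", "message")


def minimize(record: Dict[str, Any], allowed: Iterable[str] = ALLOWED_FIELDS) -> Dict[str, Any]:
    """Return a copy of the record containing only allow-listed fields."""
    allowed_set = set(allowed)
    return {key: value for key, value in record.items() if key in allowed_set}


# Example record with fields that should never leave the organization.
raw = {
    "ticket_id": 17,
    "category": "billing",
    "message": "Refund please",
    "email": "user@example.com",
    "ssn": "000-00-0000",
}
safe = minimize(raw)
```

An allow-list fails closed: a newly added field is dropped until someone explicitly approves it. Free-text fields such as `message` can still contain personal data, so this filter is a starting point rather than a complete control.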

Integration and workflow: connecting AI tools to your stack

A successful tool integration plan centers on interoperability. Start by defining data contracts—formats, schemas, and exchange mechanisms—so tools can communicate without bespoke adapters. Plan for versioning and testing of data flows, including rollback strategies. Choose middleware or orchestration layers that support your preferred data formats and authentication methods. Finally, design dashboards and alerts so you can monitor performance, track usage, and spot anomalies quickly. A well-designed workflow minimizes manual handoffs and accelerates insight generation.
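
A data contract can start as a small, versioned schema that both sides validate against. The following is a minimal sketch using only the standard library; the contract fields, the version tag, and the `validate` helper are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Any, Dict, List

CONTRACT_VERSION = "1.0"  # hypothetical version tag, bumped on breaking changes


@dataclass(frozen=True)
class FieldSpec:
    name: str
    type_: type
    required: bool = True


# Hypothetical contract for prediction records exchanged between two tools.
PREDICTION_CONTRACT: List[FieldSpec] = [
    FieldSpec("record_id", str),
    FieldSpec("score", float),
    FieldSpec("label", str, required=False),
]


def validate(record: Dict[str, Any], contract: List[FieldSpec] = PREDICTION_CONTRACT) -> List[str]:
    """Return a list of contract violations; an empty list means the record conforms."""
    errors: List[str] = []
    for spec in contract:
        if spec.name not in record:
            if spec.required:
                errors.append(f"missing required field: {spec.name}")
            continue
        value = record[spec.name]
        if not isinstance(value, spec.type_):
            errors.append(
                f"{spec.name}: expected {spec.type_.__name__}, got {type(value).__name__}"
            )
    return errors
```

Running `validate` at both the producing and consuming side, and in tests for each contract version, is one way to catch schema drift before it reaches production.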

Cost models and budgeting strategies

AI tools commonly use subscription-based pricing, usage-based billing, or a hybrid mix. To manage costs, create a tiered budget aligned with project stages: discovery, pilot, and production. Estimate software costs, data storage, compute usage, and potential training needs. Monitor utilization and re-evaluate tool choices as teams gain experience. Build in a cost-tracking policy and assign ownership for ongoing financial governance to prevent runaway expenses and ensure alignment with project ROI.
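
To make the staged budget concrete, the sketch below estimates monthly cost per stage from a subscription component, a usage component, and a fixed infrastructure estimate. Every price and volume is a placeholder, and the 10% tolerance in `over_budget` is an arbitrary assumption.

```python
from dataclasses import dataclass


@dataclass
class StageBudget:
    """Monthly cost inputs for one project stage (all figures are placeholders)."""
    name: str
    seats: int
    price_per_seat: float       # subscription fee per seat per month
    expected_requests: int      # usage-based calls per month
    price_per_request: float    # per-call charge
    storage_and_compute: float  # fixed monthly infrastructure estimate

    def monthly_cost(self) -> float:
        subscription = self.seats * self.price_per_seat
        usage = self.expected_requests * self.price_per_request
        return subscription + usage + self.storage_and_compute


def over_budget(stage: StageBudget, actual_spend: float, tolerance: float = 0.10) -> bool:
    """Flag spend that exceeds the stage estimate by more than the tolerance."""
    return actual_spend > stage.monthly_cost() * (1 + tolerance)


stages = [
    StageBudget("discovery", 2, 20.0, 1_000, 0.01, 0.0),
    StageBudget("pilot", 5, 20.0, 20_000, 0.01, 50.0),
    StageBudget("production", 25, 20.0, 500_000, 0.01, 400.0),
]

for stage in stages:
    print(f"{stage.name}: {stage.monthly_cost():.2f} per month")
```

With these placeholder numbers, usage charges dominate the production estimate, which is why request volume is worth monitoring separately from seat count.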

Pilot projects: running small-scale experiments to learn

Run controlled pilots to validate hypotheses and establish practical benchmarks. Define a narrow scope, realistic success metrics, and a clear exit criterion. Use lightweight data subsets to reduce risk and speed iteration. Document results, including what worked, what didn’t, and why. Use these learnings to adjust requirements and refine your evaluation criteria before broader rollout. A well-executed pilot helps you quantify value and build a compelling case for broader adoption.
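
Success metrics and the exit criterion are easier to enforce when they are written down as data before the pilot starts. The sketch below shows one possible encoding; the metric names, targets, and the `pilot_decision` rule are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Metric:
    target: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        """Check the observed value against the target in the right direction."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


# Hypothetical success metrics agreed on before the pilot begins.
SUCCESS_METRICS: Dict[str, Metric] = {
    "accuracy": Metric(target=0.85),
    "p95_latency_ms": Metric(target=800, higher_is_better=False),
    "hours_saved_per_week": Metric(target=4),
}


def pilot_decision(
    observed: Dict[str, float],
    metrics: Dict[str, Metric] = SUCCESS_METRICS,
    min_met: Optional[int] = None,
) -> str:
    """Return 'proceed' when enough metrics meet their targets, else 'exit'.

    By default every metric must be met; a metric with no observation counts as unmet.
    """
    required = len(metrics) if min_met is None else min_met
    met = sum(
        1 for name, metric in metrics.items()
        if name in observed and metric.met(observed[name])
    )
    return "proceed" if met >= required else "exit"
```

For example, `pilot_decision({"accuracy": 0.9, "p95_latency_ms": 1200, "hours_saved_per_week": 5})` returns `"exit"` under the default rule because the latency target is missed.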

Adoption and change management in teams

Adoption hinges on people as much as technology. Communicate goals clearly, provide hands-on training, and create champions within teams. Align incentives and integrate AI workflows into existing processes to minimize disruption. Establish governance channels and a feedback loop to capture concerns and ideas. Build a culture of experimentation and responsible use, where researchers, developers, and students can iterate safely while maintaining quality and ethics.

Practical checklists and templates you can reuse

Utilize ready-made templates to accelerate deployment:

- Evaluation rubric template with weighted criteria

- Pilot plan outlining scope, metrics, and exit criteria

- Data governance checklist for privacy and security

- Integration blueprint with data contracts and authentication details

- Risk assessment worksheet for bias and leakage risks

Adapting these templates to your organization helps maintain consistency and speeds up future tool evaluations.

Common pitfalls and how to avoid them

Common pitfalls include overestimating automation benefits, underestimating data needs, and ignoring governance. Avoid vendor lock-in by prioritizing open standards and clear exit strategies. Don’t rush production without a robust testing plan and monitoring. Ensure stakeholders agree on data handling, privacy, and ethical considerations up front to prevent misalignment later.
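
One common way to keep an exit strategy open is to have application code depend on a small provider-neutral interface rather than on a vendor SDK directly. The sketch below uses `typing.Protocol` for this; the `TextGenerator` interface and both stand-in providers are hypothetical, and a real adapter would wrap an actual vendor client.

```python
from typing import Protocol


class TextGenerator(Protocol):
    """Provider-neutral interface the rest of the codebase depends on."""

    def generate(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in provider; a real adapter would call a vendor SDK here."""

    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseProvider:
    """Second stand-in, used to show that providers are interchangeable."""

    def generate(self, prompt: str) -> str:
        return prompt.upper()


def summarize(text: str, provider: TextGenerator) -> str:
    """Application logic that only knows about the interface, not the vendor."""
    return provider.generate(f"Summarize: {text}")
```

Switching providers then means writing one new adapter class, while callers such as `summarize` stay unchanged.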

Ethical and responsible AI usage: guidelines for teams

Embed ethics and responsibility into every stage of tool selection and use. Establish bias mitigation practices, transparency about AI-generated outputs, and user consent where appropriate. Keep logs of decision rationales and maintain explainability wherever possible. Regularly review AI systems for safety, fairness, and compliance with internal policies and external regulations. This ongoing discipline helps protect users and builds trust in AI-enabled workflows.

Tools & Materials

- Computer with internet access (modern browser, updated OS, and sufficient RAM for local testing)

- Account access to at least one AI tool platform (include both a development sandbox and a production-ready environment if possible)

- Documentation templates (evaluation rubric, pilot plan, data governance checklist)

- Data samples or synthetic data (use synthetic data if real data is restricted by policy)

- Security policy and compliance references (align with internal standards and external regulations)

Steps

Estimated time: 2-4 weeks

1. Define objectives and success metrics

Clarify the specific problems you want AI tools to solve and list measurable outcomes. Include constraints such as data availability, latency, and acceptable risk levels.

Tip: Document outcomes in concrete terms (e.g., time saved, accuracy improvements, or throughput).

2. Inventory data and capability needs

Map data you have, data you can access, and the capabilities required from AI tools. Consider data quality, governance, and privacy implications.

Tip: Create a data map showing inputs, transformations, and outputs for each use case.

3. Set shortlisting criteria

Define criteria such as integration ease, cost, security, scalability, and support. Weight criteria by importance to your use case.

Tip: Prioritize criteria that affect long-term viability and governance.

4. Pilot candidate evaluation

Run a controlled pilot with a small data subset to compare a few tools against your criteria. Capture results with a standardized rubric.

Tip: Use a fixed dataset and identical tasks to ensure fair comparison.

5. Plan integration and governance

Draft an integration plan, data contracts, and access controls. Define responsibilities for monitoring, updates, and incident response.

Tip: Include rollback paths for each integration scenario.

6. Conduct a risk and ethics review

Assess potential biases, data leakage risks, and compliance gaps. Prepare mitigation strategies and documentation.

Tip: Establish a review cadence to revisit ethics and risk regularly.

7. Scale with phased rollout

Expand tool usage in stages, monitor performance, and adjust based on feedback and metrics. Maintain guardrails and audits.

Tip: Avoid large-scale deployments until pilots demonstrate stable value.
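
The pilot evaluation step's advice to use a fixed dataset and identical tasks can be sketched as a tiny comparison harness. Everything here is illustrative: the dataset is a toy, exact-match accuracy is only one possible metric, and `tool_a` and `tool_b` are stubs standing in for calls to real candidate tools.

```python
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]

# Fixed evaluation set of (input, expected output) pairs; placeholder examples only.
DATASET: List[Tuple[str, str]] = [
    ("2+2", "4"),
    ("capital of France", "Paris"),
    ("3*3", "9"),
]


def accuracy(tool: Tool, dataset: List[Tuple[str, str]] = DATASET) -> float:
    """Fraction of prompts for which the tool returns exactly the expected answer."""
    correct = sum(1 for prompt, expected in dataset if tool(prompt) == expected)
    return correct / len(dataset)


def stub_tool(answers: Dict[str, str]) -> Tool:
    """Build a fake tool from a lookup table; unknown prompts return an empty string."""
    return lambda prompt: answers.get(prompt, "")


tool_a = stub_tool({"2+2": "4", "capital of France": "Paris"})
tool_b = stub_tool({"2+2": "4"})

# Every candidate sees the same dataset and the same scoring function.
results = {name: accuracy(tool) for name, tool in {"tool_a": tool_a, "tool_b": tool_b}.items()}
```

Because the dataset and metric are shared, any difference in `results` comes from the tools themselves rather than from how they were tested.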

FAQ

What is an AI tool and who should use it?

An AI tool is software that uses artificial intelligence to perform tasks or assist decisions. Developers, researchers, and students use these tools to accelerate experiments, automate workflows, and improve outcomes. Best practice is to align tool capabilities with clear use cases and governance.

How do I evaluate AI tools for research projects?

Start with a defined objective and success metrics. Compare tools using a standardized rubric that covers capabilities, data handling, integration, and cost. Run a small pilot with controlled data to gather objective results.

What about data privacy when using AI tools?

Assess how data is stored, processed, and who has access. Ensure data minimization, encryption, and compliance with relevant policies. Include a data governance plan as part of the evaluation.

How much do AI tools typically cost for a team?

Costs vary by vendor and usage. Expect a mix of subscription fees, per-use charges, and potential training or support costs. Build a budget that scales from pilot to production.

What is the best way to start an AI tool rollout in a team?

Begin with a small pilot in a single team, define success metrics, and document results. Use learnings to refine requirements and governance before broader rollout.

How can I avoid vendor lock-in with AI tools?

Favor tools with open standards, export options, and API-driven data flows. Maintain decoupled components where possible to allow switching providers without major rewrites.

Key Takeaways

- Define clear goals before tool selection

- Evaluate data, privacy, and governance upfront

- Pilot with rigorous metrics and documented results

- Plan integration with governance and security in mind

- Scale responsibly with phased rollout