Solve AI Tool: A Practical Step-by-Step Guide for Developers

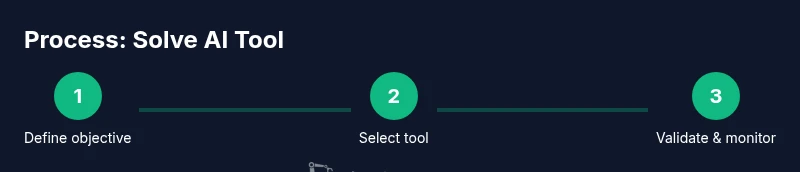


This guide shows you how to solve AI tool challenges by following a repeatable workflow. You’ll define outcomes, select the right tool, build a robust data pipeline, validate results, and monitor performance. Expect practical steps, checklists, and actionable tips you can apply in development, research, or education.

Framing the Problem in AI Tool Context

Solving AI tool challenges starts with a precise problem statement: what outcome do you expect, under what constraints, and what data will you use? In practice, this means translating a broad goal into measurable criteria, such as accuracy within a latency budget, reproducible results across environments, and auditable decisions. According to AI Tool Resources, success begins with clear objectives and a plan for validation. This article presents a structured approach you can apply to development projects, research experiments, or classroom demonstrations. Throughout, you will see how AI tool problems can be decomposed into repeatable steps, enabling learning and iteration rather than one-off experiments. By anchoring on concrete outcomes, you reduce drift and misalignment as tools evolve.
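One way to make "measurable criteria" concrete is to write them down as data and check results against them. The following is a minimal sketch, not a prescribed format: the class name `SuccessCriteria`, the function `meets_criteria`, and the two chosen metrics are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Measurable targets for one project (hypothetical fields)."""
    min_accuracy: float        # required fraction of correct outputs, 0.0 to 1.0
    max_p95_latency_ms: float  # latency budget at the 95th percentile


def meets_criteria(criteria: SuccessCriteria, accuracy: float,
                   p95_latency_ms: float) -> list[str]:
    """Return human-readable failures; an empty list means all criteria pass."""
    failures = []
    if accuracy < criteria.min_accuracy:
        failures.append(
            f"accuracy {accuracy:.3f} is below the required {criteria.min_accuracy:.3f}"
        )
    if p95_latency_ms > criteria.max_p95_latency_ms:
        failures.append(
            f"p95 latency {p95_latency_ms:.0f} ms exceeds the "
            f"{criteria.max_p95_latency_ms:.0f} ms budget"
        )
    return failures
```

Returning a list of failures rather than a single boolean keeps the check auditable: each unmet criterion is named in the output.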

Understanding Tool Selection and Fit

Choosing an AI tool is not merely about speed or cost; it’s about fit to your real workflow and data ecosystem. Start by listing required features (model support, API stability, privacy controls) and hard constraints (latency, throughput, data residency). AI Tool Resources analysis shows that teams who evaluate fit against concrete use cases, data constraints, and governance policies experience fewer mid-project pivots. Build a decision matrix that scores candidates on performance, reliability, security, and interoperability. Document your rationale for traceability so future engineers can retrace why a tool was picked, which accelerates onboarding and auditing. The goal is a tool that scales across environments, not just a single experiment.
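A decision matrix can be as simple as a weighted sum per candidate. The sketch below assumes scores on a 1 to 5 scale and uses illustrative weights for the four dimensions named above; the weights, function names, and candidate names are hypothetical and should be replaced with your own.

```python
# Illustrative weights (assumption); they sum to 1.0.
WEIGHTS = {
    "performance": 0.30,
    "reliability": 0.30,
    "security": 0.25,
    "interoperability": 0.15,
}


def weighted_score(scores: dict[str, float],
                   weights: dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion scores into one weighted total."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


def rank_candidates(candidates: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Return (candidate, score) pairs sorted from best to worst."""
    ranked = [(name, round(weighted_score(s), 3)) for name, s in candidates.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical candidates scored 1 (poor) to 5 (excellent).
    print(rank_candidates({
        "tool_a": {"performance": 5, "reliability": 3, "security": 4, "interoperability": 4},
        "tool_b": {"performance": 4, "reliability": 4, "security": 3, "interoperability": 5},
    }))
```

Storing the weights and scores alongside the decision gives future engineers the rationale in a form they can rerun.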

Designing Data Flows and Reproducible Workflows

A reliable AI solution depends on clean data pipelines and reproducible workflows. Define data sources, ingestion methods, transformations, and storage with versioned artifacts. Use containerized environments and explicit dependencies to ensure that anyone can reproduce results. Track data lineage to answer questions about where inputs come from and how they influence outputs. Observability matters: capture metrics, logs, and metadata so that a failure in one stage doesn’t cascade unnoticed. This mindset reduces surprises when you move from prototype to production and makes audits straightforward.
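Data lineage can start small: record a content hash of each input and output together with the transformation that connects them. This is a minimal sketch using only the Python standard library; the record layout and the names `fingerprint` and `lineage_record` are assumptions, not a standard schema.

```python
import hashlib
from datetime import datetime, timezone


def fingerprint(data: bytes) -> str:
    """Content hash that identifies one exact version of a dataset."""
    return hashlib.sha256(data).hexdigest()


def lineage_record(source: str, raw: bytes, transformed: bytes,
                   transform_name: str) -> dict:
    """Describe where an output came from and which step produced it."""
    return {
        "source": source,
        "input_sha256": fingerprint(raw),
        "transform": transform_name,
        "output_sha256": fingerprint(transformed),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    raw = b"id,text\n1, Hello \n"
    cleaned = raw.replace(b" ", b"")  # stand-in for a real transformation
    print(lineage_record("sample.csv", raw, cleaned, "strip_spaces"))
```

Because the hash depends only on content, two runs over identical inputs produce the same fingerprint, which makes silent changes in upstream data visible.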

Validation, Testing, and Monitoring

Validation should be baked into your process from day one. Create test suites that cover unit checks for individual components and integration tests for the end-to-end pipeline. Define success criteria for model outputs, data quality, and system reliability. Invest in monitoring dashboards that surface drift, latency, and error rates in real time. Automate alerting for thresholds you care about, and establish a rollback plan if performance degrades. Regular audits and reviews keep the solution robust as data and models evolve.
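As a rough illustration of automated threshold alerting, the sketch below checks a window of request latencies and an error count against limits. The function names, the nearest-rank percentile method, and the threshold parameters are assumptions for illustration; a real setup would usually delegate this to a monitoring system.

```python
import math


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list (pct from 1 to 100)."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def check_thresholds(latencies_ms: list[float], error_count: int,
                     request_count: int, max_p95_ms: float,
                     max_error_rate: float) -> list[str]:
    """Return alert messages for every threshold that is exceeded."""
    alerts = []
    p95 = percentile(latencies_ms, 95)
    if p95 > max_p95_ms:
        alerts.append(f"p95 latency {p95:.0f} ms exceeds {max_p95_ms:.0f} ms")
    error_rate = error_count / request_count if request_count else 0.0
    if error_rate > max_error_rate:
        alerts.append(f"error rate {error_rate:.2%} exceeds {max_error_rate:.2%}")
    return alerts
```

A non-empty result can feed whatever alerting channel you already use, and the same function can run inside an integration test to enforce the limits before release.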

Security, Privacy, and Compliance Considerations

Security and privacy aren’t afterthoughts; they are foundational. Implement access control, encryption in transit and at rest, and secure credential management for API usage. Assess data sensitivity and apply minimization techniques where possible. Comply with applicable regulations (for example, privacy laws and data-retention policies) and maintain a clear data governance policy. When missteps occur, a documented response plan minimizes risk and protects users and teams. Strong security practices also improve trust with collaborators and customers.
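Two of these practices are easy to sketch in code: reading credentials from the environment instead of hard-coding them, and dropping fields a tool does not need before sending data out. The environment variable name `AI_TOOL_API_KEY` and both function names are hypothetical.

```python
import os


def load_api_key(var_name: str = "AI_TOOL_API_KEY") -> str:
    """Read the credential from the environment; fail fast if it is absent."""
    key = os.environ.get(var_name)
    if not key:
        raise RuntimeError(f"{var_name} is not set; refusing to start without credentials")
    return key


def minimize(record: dict, allowed_fields: set[str]) -> dict:
    """Keep only the fields the downstream tool actually needs."""
    return {k: v for k, v in record.items() if k in allowed_fields}


if __name__ == "__main__":
    user = {"id": 7, "email": "person@example.com", "question": "How do I reset?"}
    # Only the question leaves the system; identifiers stay behind.
    print(minimize(user, {"question"}))
```

An allow-list is used rather than a block-list so that newly added sensitive fields are excluded by default.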

Collaboration, Documentation, and Versioning Practices

Cross-functional teams benefit from lightweight but robust collaboration practices. Maintain a central repository for code, tests, and configuration that is versioned and reviewable. Write concise, actionable docs for setup, data schemas, and decision logs. Use versioned experiments to compare alternative approaches and capture what worked and why. Regular reviews help distribute knowledge, reduce bottlenecks, and ensure everyone understands the current state and next steps.

Practical Scenarios: Three Use-Cases Across Domains

Consider three common scenarios: (1) a developer integrating an AI tool into an existing data pipeline, (2) a researcher validating a new model’s behavior on a controlled dataset, and (3) a student building a classroom demo to illustrate end-to-end machine learning work. For each case, map the objective, select tools that fit constraints, design an appropriate validation plan, and prepare a clear demonstration path. These scenarios illustrate how the same framework adapts to coding projects, academic experiments, and learning environments, which shows the versatility of this approach to solving AI tool problems.

Measuring Impact and ROI: Beyond Accuracy

ROI for AI tools isn’t just about precision. It includes time-to-value, reliability, and maintainability. Define KPIs such as cycle time for updates, mean time to detect issues, and user satisfaction with results. Track how often validation tests pass across iterations and measure improvements in data quality. A disciplined approach to metrics supports continuous improvement and helps justify investment in tooling and training.
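Two of these KPIs, mean time to detect and validation pass rate, reduce to simple arithmetic once the raw events are recorded. The sketch below assumes each incident is stored as a (started, detected) pair of timestamps and each test run as a boolean; that data shape and the function names are assumptions.

```python
from datetime import datetime, timedelta


def mean_time_to_detect(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average gap between when an issue started and when it was detected."""
    if not incidents:
        raise ValueError("at least one incident is required")
    total = sum((detected - started for started, detected in incidents), timedelta())
    return total / len(incidents)


def pass_rate(results: list[bool]) -> float:
    """Fraction of validation runs that passed."""
    if not results:
        raise ValueError("at least one result is required")
    return sum(results) / len(results)
```

Tracking both values per iteration shows whether reliability is improving alongside accuracy.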

Getting to Production: Deployment, Rollout, and Guardrails

Transitioning from proof-of-concept to production requires guardrails: feature flags, canary deployments, and rollback procedures. Establish clear ownership for monitoring, incident response, and data governance in production environments. Plan for scalability by evaluating resource usage, concurrency, and fault tolerance. Regularly update documentation to reflect the live system, including any changes to data flows or model updates. A well-planned production path reduces risk and accelerates ongoing improvements.
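A percentage-based feature flag is one common way to implement a canary rollout. The sketch below assigns each user to a stable bucket by hashing, so the same user always gets the same answer for a given rollout percentage. The flag name `new_ai_tool` and the function `in_canary` are hypothetical, and a production system would more likely use a dedicated flag service.

```python
import hashlib


def in_canary(user_id: str, rollout_percent: int,
              flag_name: str = "new_ai_tool") -> bool:
    """Decide deterministically whether a user sees the new code path."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < rollout_percent


if __name__ == "__main__":
    exposed = sum(in_canary(f"user-{i}", 10) for i in range(1000))
    print(f"{exposed} of 1000 users are in the 10% canary")
```

Setting the percentage back to 0 acts as the rollback switch, and raising it only ever adds users, because each user's bucket never changes.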

Tools & Materials

- IDE with Python support (e.g., Visual Studio Code with the Python extension)

- API access to the AI tool or SDK (credentials stored securely, e.g., in environment variables or a secret manager)

- Sample or synthetic dataset (used for validation and testing; should be representative)

- Notebook environment (Jupyter, Colab, or local JupyterLab for prototyping)

- Version control (Git with GitHub or GitLab for collaboration)

- Workflow/orchestration tool (Airflow or Prefect to manage data flows; optional but helpful)

- Monitoring/observability setup (Prometheus/Grafana or equivalent dashboards)

- Documentation tooling (Markdown templates, README with data schemas and decisions)

Steps

Estimated time: 2-4 hours

1. Define objective and success criteria

Articulate the desired outcome, constraints, and evaluation metrics. Create a clear problem statement that translates user needs into measurable success.

Tip: Document the objective and link each criterion to a data source or process.

2. Map data requirements and constraints

Inventory data sources, formats, quality, and privacy requirements. Define data preprocessing steps and any data augmentation you’ll perform.

Tip: Create a data catalog entry that describes fields, types, and constraints.

3. Select tool and integration plan

Choose an AI tool or model that fits the data, latency, and security constraints. Draft how it will integrate with your pipeline and where it will run.

Tip: Keep a backup option in case the primary tool underperforms in production.

4. Set up environment securely

Create isolated environments, manage credentials securely, and pin library versions to ensure reproducibility.

Tip: Use secret managers and versioned configuration files.

5. Implement the integration and data flow

Code end-to-end data ingestion, transformation, and tool interaction. Keep components modular to simplify testing.

Tip: Write interfaces and tests that mock external tool calls.

6. Build validation tests

Develop unit tests for individual components and integration tests for the entire pipeline. Define acceptance criteria for outputs.

Tip: Automate tests to run on every change and on a schedule.

7. Deploy with monitoring

Launch in staging first, enable observability, and monitor drift, latency, and failures. Prepare rollback procedures.

Tip: Use feature flags to control production exposure.

8. Iterate based on feedback

Collect results from monitoring, user feedback, and data quality checks. Refine models, data, and workflows accordingly.

Tip: Maintain a changelog to track improvements over time.
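The tip about mocking external tool calls can be sketched with `unittest.mock` from the Python standard library. Here the pipeline step receives the tool call as a plain function argument, so a test can substitute a fake without any network access. The function `summarize_records` and its cleaning logic are hypothetical stand-ins for a real integration.

```python
from typing import Callable
from unittest.mock import Mock


def summarize_records(records: list[str],
                      call_tool: Callable[[str], str]) -> list[str]:
    """Pipeline step: drop blank records, trim the rest, send each to the tool."""
    cleaned = [r.strip() for r in records if r.strip()]
    return [call_tool(text) for text in cleaned]


def test_summarize_records_skips_blank_input():
    # The fake tool upper-cases its input so the test can verify the data flow.
    fake_tool = Mock(side_effect=lambda text: text.upper())
    result = summarize_records([" hello ", "", "world"], fake_tool)
    assert result == ["HELLO", "WORLD"]
    assert fake_tool.call_count == 2  # the blank record never reaches the tool


if __name__ == "__main__":
    test_summarize_records_skips_blank_input()
    print("ok")
```

Passing the tool call in as a parameter also makes it straightforward to swap in the backup tool mentioned in step 3.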

FAQ

How long does it take to implement a robust AI tool solution?

Time varies by scope, data, and tooling. A well-scoped project with clear objectives and a validated plan can take anywhere from a few days to a few weeks, with production rollout following successful validation.

Time varies, but a well-scoped plan with clear objectives speeds up delivery.

What is the first step in solving an AI tool problem?

The first step is to define the objective and success criteria. Without a clear target, tool selection and validation become guesswork and increase risk.

Start with a clear objective and measurable success criteria.

What metrics should I use to measure ROI?

Look beyond accuracy. Include metrics like time-to-value, data quality improvements, reliability, maintenance effort, and user satisfaction. Tie metrics to business or research outcomes.

ROI includes time-to-value, reliability, and quality improvements.

What are common failures when solving AI tool problems?

Common failures include scope drift, insufficient data governance, brittle integrations, and lack of monitoring. Proactively address these by documenting requirements and maintaining robust tests.

Drift, governance gaps, and poor monitoring are typical failures.

Is data governance required for all AI tools?

Yes, especially when handling sensitive data. Define data provenance, access controls, retention policies, and compliance checks as part of the project.

Governance is essential for privacy and compliance.

Is this approach suitable for academic research?

Absolutely. The framework emphasizes reproducibility, rigorous validation, and transparent documentation, which align well with research best practices.

Yes—reproducibility and validation fit research needs.


Key Takeaways

- Define objectives before tool selection.

- Evaluate data fit and governance upfront.

- Validate early and often with automated tests.

- Document decisions for reproducibility.

- Monitor, learn, and iterate continuously.