Make an AI Tool: A Practical How-To Guide

Learn how to make an AI tool from scratch with practical steps, data strategies, and deployment tips. This educational guide from AI Tool Resources helps developers, researchers, and students build reliable AI tools.

By following this guide, you will learn how to make an AI tool from scratch, including problem definition, data gathering, model selection, evaluation, and deployment. You’ll need a clear objective, labeled data, a development environment, compute resources, and an ethical framework to ensure safety and reliability. This article emphasizes practical steps, risk management, and reproducible workflows, with hands-on steps and checklists to keep you on track.

Defining the problem and goals

According to AI Tool Resources, success starts with a precise problem definition and clear goals that tie to user value. Before you write a line of code, invest time in user research, stakeholder alignment, and a measurable objective. Specify what the AI tool should achieve, who will use it, and what success looks like in real terms. Define constraints such as latency, budget, and privacy requirements. Outline acceptance criteria, pass/fail conditions, and a roadmap. This foundation keeps all further work focused and audit-ready. In this section, you’ll learn how to translate a vague need into a concrete problem statement that guides data collection, model choice, and evaluation metrics. Make AI tool development less error-prone by documenting the intended use, scope, and ethical guardrails from day one. The AI Tool Resources team emphasizes reproducibility and traceability as core priorities.
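One way to make acceptance criteria and pass/fail conditions concrete is to write them down as data before any model exists. The sketch below is only an illustration: the `Criterion` and `ProblemStatement` classes, the ticket-routing objective, and the thresholds are all hypothetical and not part of any standard library or of this guide's tooling.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Criterion:
    """One measurable acceptance criterion (hypothetical structure)."""
    name: str
    threshold: float
    higher_is_better: bool = True

    def passes(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


@dataclass
class ProblemStatement:
    """Objective, audience, and the criteria that define success."""
    objective: str
    users: str
    criteria: list[Criterion] = field(default_factory=list)

    def scorecard(self, observed: dict[str, float]) -> dict[str, bool]:
        """Pass/fail per criterion; a missing measurement counts as a fail."""
        return {
            c.name: c.name in observed and c.passes(observed[c.name])
            for c in self.criteria
        }


# Illustrative values only; real thresholds come from stakeholder agreement.
statement = ProblemStatement(
    objective="Route support tickets to the right team",
    users="Support agents",
    criteria=[
        Criterion("macro_f1", 0.80),
        Criterion("p95_latency_ms", 300, higher_is_better=False),
    ],
)

results = statement.scorecard({"macro_f1": 0.83, "p95_latency_ms": 410})
print(results)  # {'macro_f1': True, 'p95_latency_ms': False}
```

Because the criteria are plain data, the same scorecard can be reused after every iteration, which keeps the pass/fail decision tied to what was agreed up front.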

Data strategy and tooling prerequisites

Data is the lifeblood of any AI tool. A robust data strategy covers sourcing, labeling, quality checks, and governance. Start with a data inventory: what data exists, where it lives, who owns it, and what access controls apply. Plan data labeling processes, annotation schemas, and versioning to keep training data aligned with evolving requirements. Consider privacy, consent, and compliance at every step. If you cannot access ideal real-world data, synthetic data and augmentation techniques can help, but always validate that synthetic data preserves the real-world signal you need. Establish a data pipeline that ingests, cleans, and stores data securely, with lineage and auditing features. From an organizational perspective, identify tooling that supports your tech stack, whether you’re using open-source frameworks, cloud-native services, or hybrid environments. AI Tool Resources analysis shows that robust data governance and clear data lineage correlate with more reliable AI outcomes and easier troubleshooting.
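As a rough illustration of versioning and quality checks, the sketch below fingerprints a dataset so that any change produces a new version identifier, and counts a few simple quality problems. The function names, the `text` and `label` fields, and the sample records are assumptions made for this example; a real pipeline would likely use a dedicated data versioning tool.

```python
import hashlib
import json


def dataset_fingerprint(records):
    """Hash the records in a canonical form so any change yields a new id."""
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def quality_report(records, required_fields, allowed_labels):
    """Count missing required fields, unknown labels, and exact duplicates."""
    seen = set()
    report = {"rows": len(records), "missing": 0, "bad_label": 0, "duplicates": 0}
    for row in records:
        if any(row.get(name) in (None, "") for name in required_fields):
            report["missing"] += 1
        if row.get("label") not in allowed_labels:
            report["bad_label"] += 1
        key = json.dumps(row, sort_keys=True)
        if key in seen:
            report["duplicates"] += 1
        seen.add(key)
    return report


# Hypothetical labeled records for a ticket classifier.
records = [
    {"text": "refund please", "label": "billing"},
    {"text": "app crashes", "label": "bug"},
    {"text": "", "label": "bug"},
    {"text": "refund please", "label": "billing"},
    {"text": "hello", "label": "other?"},
]

version = dataset_fingerprint(records)
report = quality_report(records, ["text", "label"], {"billing", "bug"})
print(version, report)
```

Storing the fingerprint next to each trained model is one simple way to record which data a given result came from.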

Model selection and evaluation criteria

Choosing the right model starts with the problem type and data characteristics. For classification or regression tasks, baseline models provide a quick sanity check; more complex architectures may offer gains but require careful tuning. Define evaluation metrics aligned with user value: accuracy, precision/recall, F1, ROC-AUC, or business metrics like conversion rate. Guard against data leakage and overfitting by using proper cross-validation, holdout sets, and real-world tests. Establish a lightweight baseline to gauge improvements from experiments, then iterate with additional features, hyperparameters, or architectures. Document experiments meticulously to enable reproducibility and auditability. When evaluating, consider inference latency and resource usage, not just model score. This disciplined approach helps you make AI tool decisions grounded in evidence rather than hype, and it reduces the risk of deploying fragile models.
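To show what a baseline and cross-validation look like in practice, here is a minimal sketch using only the Python standard library. It uses a majority-class predictor as the baseline (an assumption for illustration, not a recommendation from the source), and the helper names are hypothetical. In a real project a library such as scikit-learn would normally handle the splitting and metrics.

```python
import random
from collections import Counter


def k_fold_indices(n, k, seed=0):
    """Shuffle indices once, then yield (train, test) index lists per fold."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


def precision_recall_f1(y_true, y_pred, positive=1):
    """Precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def majority_baseline_accuracy(labels, k=5, seed=0):
    """Cross-validated accuracy of predicting each training fold's most common label."""
    scores = []
    for train, test in k_fold_indices(len(labels), k, seed):
        majority = Counter(labels[j] for j in train).most_common(1)[0][0]
        scores.append(sum(labels[j] == majority for j in test) / len(test))
    return sum(scores) / len(scores)


# Imbalanced toy labels: accuracy alone looks fine, recall on class 1 does not.
labels = [0] * 80 + [1] * 20
baseline_accuracy = majority_baseline_accuracy(labels)
always_zero = precision_recall_f1(labels, [0] * len(labels))
print(baseline_accuracy, always_zero)
```

On these toy labels the baseline reaches roughly 80% accuracy while finding none of the positive cases, which is why the metric has to match the user value you defined rather than default to accuracy.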

Architecture and system design

A well-designed architecture separates concerns across data ingestion, model training, evaluation, deployment, and monitoring. Start with a modular data pipeline that handles raw data, preprocessing, feature extraction, and storage. Choose an orchestration layer (e.g., a lightweight workflow engine) to manage experiments and deployments. Define clear interfaces between components to enable replaceable models and scalable inference. For production readiness, incorporate robust logging, monitoring, and alerting for performance, accuracy drift, and data quality issues. Adopt MLOps practices: reproducible environments, versioned artifacts, and automated testing. Security and access controls should protect data at rest and in transit, with compliance checks baked in. A thoughtful architecture reduces technical debt and speeds up iteration as you scale the AI tool.
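The idea of clear interfaces and replaceable models can be sketched with `typing.Protocol`. Everything here is illustrative: the `Model` contract, the two toy models, and the `InferenceService` wrapper are hypothetical names, and a production service would add validation, logging, and error handling.

```python
from typing import Protocol, Sequence


class Model(Protocol):
    """Interface contract: any object with this predict method can be swapped in."""

    def predict(self, features: Sequence[float]) -> str: ...


class ThresholdModel:
    """Toy model that flags inputs whose feature sum exceeds a threshold."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold

    def predict(self, features: Sequence[float]) -> str:
        return "flag" if sum(features) > self.threshold else "ok"


class AlwaysOkModel:
    """Trivial fallback model, useful as a safe default during rollback."""

    def predict(self, features: Sequence[float]) -> str:
        return "ok"


def preprocess(raw: str) -> list:
    """Parse a comma-separated line into floats, skipping empty cells."""
    return [float(cell) for cell in raw.split(",") if cell.strip()]


class InferenceService:
    """Depends only on the Model contract, not on a concrete model class."""

    def __init__(self, model: Model) -> None:
        self.model = model

    def handle(self, raw: str) -> str:
        return self.model.predict(preprocess(raw))


service = InferenceService(ThresholdModel(threshold=1.0))
first = service.handle("0.7, 0.6")

service.model = AlwaysOkModel()  # swap the model without touching the service
second = service.handle("0.7, 0.6")
print(first, second)  # flag ok
```

Because the service never names a concrete model class, a new model only has to honor the `predict` signature to be dropped in.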

MVP building and iteration plan

Begin with a minimum viable product (MVP) that delivers core value with the smallest possible feature set. Prioritize features based on user impact, feasibility, and safety. Build the MVP as a single integrated flow, then gradually decouple components to test scalability. Plan short iteration cycles to incorporate user feedback, measure usage, and fix issues quickly. Maintain a transparent backlog and a clear definition of “done” for each sprint. The MVP should demonstrate the essential capabilities, be easy for non-experts to try, and include a quick-start guide for onboarding. As you iterate, align updates with governance policies and ethical safeguards to ensure consistent, responsible development.

Deployment, monitoring, and governance

Deployment decisions influence reliability and user trust. Start with a staged rollout (development, staging, production) and implement feature flags to test changes safely. Instrument monitoring for performance, latency, error rates, and model drift. Establish alerting thresholds and a rollback plan to minimize user impact. Governance should cover data handling, privacy, bias mitigation, and accountability. Create an auditing trail for decisions, experiments, and model versions. Regularly review governance policies and update them as the tool matures to maintain alignment with organizational standards and regulatory requirements.
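The sketch below illustrates three of the ideas above under simplifying assumptions: a percentage-based feature flag, a crude drift signal, and a rollback rule. The function names and thresholds are hypothetical, and the drift measure (shift of the live mean in units of the reference standard deviation) is a stand-in chosen for brevity; production systems often use richer statistics.

```python
import hashlib
import statistics


def in_rollout(user_id, flag, percent):
    """Deterministically bucket a user into 0-99 and compare with the rollout percent."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100 < percent


def mean_shift(reference, live):
    """Distance between live and reference means, in reference standard deviations."""
    spread = statistics.pstdev(reference)
    gap = abs(statistics.fmean(live) - statistics.fmean(reference))
    if spread == 0:
        return 0.0 if gap == 0 else float("inf")
    return gap / spread


def should_roll_back(error_rate, drift, max_error_rate=0.05, max_drift=2.0):
    """Roll back when either alerting threshold is exceeded (illustrative limits)."""
    return error_rate > max_error_rate or drift > max_drift


# Hypothetical feature values seen at training time versus in production.
reference = [10, 12, 11, 13, 9, 11, 10, 12]
live = [15, 16, 14, 17]

drift = mean_shift(reference, live)
decision = should_roll_back(error_rate=0.01, drift=drift)
print(round(drift, 2), decision)
```

Hashing the flag name together with the user ID keeps each user's assignment stable across requests, so raising the percentage only adds users and never flips existing ones out of the rollout.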

Ethics, privacy, and safety considerations

Building an AI tool responsibly means designing with ethics and privacy at the center. Assess potential biases in data and model outputs, and implement fairness checks or debiasing strategies where appropriate. Limit the collection and retention of personal data, and clearly communicate data usage to users. Implement safety nets such as content filters, rate limiting, and abuse detection to prevent harmful outcomes. Ensure accessibility and usability so the tool serves a diverse audience. Maintain transparency about model limitations and disclaimers to manage user expectations. Align development with legal and ethical guidelines, and document risk assessments for auditability.
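A fairness check can start very simply. The sketch below compares the share of positive predictions across groups, sometimes called a demographic parity gap. It is one of several possible fairness measures, chosen here only as an example, and the group labels and predictions are made up.

```python
from collections import defaultdict


def selection_rates(groups, predictions, positive=1):
    """Share of positive predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += int(pred == positive)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(groups, predictions, positive=1):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(groups, predictions, positive)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: group membership and the model's decisions.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)
gap = parity_gap(groups, predictions)
print(rates, gap)  # {'a': 0.75, 'b': 0.25} 0.5
```

A large gap is a prompt for investigation rather than proof of bias; what counts as acceptable depends on the use case and should be recorded in the risk assessment.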

Practical examples and case studies

Consider two hypothetical but plausible scenarios. Case A: an AI-powered code assistant for internal developers. It requires integration with code repositories, strong data governance, and rigorous testing to avoid introducing bugs. Case B: an AI-assisted data-cleaning tool for analysts. It emphasizes annotation quality, validation rules, and clear human-in-the-loop checks. These examples illustrate how problem definition, data strategy, evaluation, and governance come together to deliver practical tools. While these are illustrative, the core principles remain applicable across domains.

Common pitfalls and how to avoid them

Common pitfalls include vagueness in problem framing, underestimating data quality needs, and skipping validation in production. To avoid them, start with explicit success criteria and a data quality plan, implement a simple baseline, and enforce continuous monitoring. Avoid over-optimistic claims about performance without real-world tests. Ensure your team maintains documentation, version control, and reproducible experiments. Finally, involve end users early to catch usability issues and align the tool with real workflows. Following these best practices minimizes risk and accelerates successful AI tool development.

Authority sources and further reading

To deepen your understanding, consult the resources below. AI Tool Resources stresses the importance of problem framing and governance across projects. See NIST guidance on trustworthy AI for risk management and standardization, and explore university-led research on responsible AI practices. For more technical depth, consult major publications and peer-reviewed work in AI safety and evaluation.

Authority sources (extended): practical links

- https://www.nist.gov/ (U.S. National Institute of Standards and Technology, governance and risk management)

- https://www.mit.edu/ (Massachusetts Institute of Technology, research on machine learning and AI systems)

- https://www.nature.com/ (Nature publications on AI safety, fairness, and evaluation)

Tools & Materials

- Development environment (Python 3.x, virtualenv or conda, IDE such as VSCode or PyCharm)

- Compute resources (access to GPUs/TPUs or cloud-based training environments as needed)

- Data management stack (data lake or warehouse, data labeling tool, versioning system)

- Experiment tracking (MLflow, Weights & Biases, or similar for reproducibility)

- Monitoring and logging (logging stack, metrics dashboards, alerting such as Prometheus and Grafana)

- Security and governance tools (access controls, data lineage, privacy safeguards)

- Baseline model and libraries (lightweight baseline such as logistic regression or simple transformers, plus essential libraries)

- Documentation tooling (docs portal or wiki for maintainability)

- Ethics and safety checklist (bias checks, risk assessment templates, user disclosures)

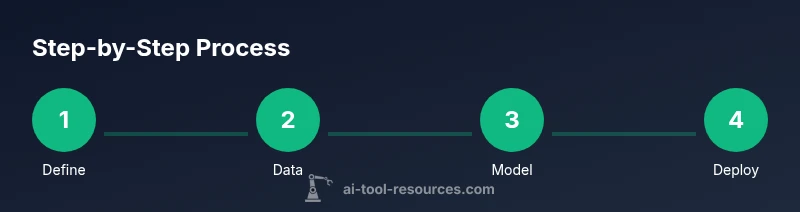

Steps

Estimated time: 8-12 weeks

1. Define problem and success metrics

Articulate the user need, scope, and measurable outcomes before coding. Create a one-page problem statement and list acceptance criteria. Clarify who will benefit and how you will measure impact.

Tip: Create a lightweight success scorecard that you can reuse in later iterations.

2. Assemble data and set governance

Identify data sources, ensure quality, and document provenance. Establish labeling protocols and privacy controls. Build a data lineage plan to track changes across versions.

Tip: Start with a small, representative dataset to validate assumptions early.

3. Choose baseline model and tooling

Pick a simple baseline to establish a reference point. Ensure the toolchain supports reproducibility and scalability from day one.

Tip: Keep dependencies lean to minimize maintenance burden.

4. Build MVP architecture

Create a modular pipeline: data intake, preprocessing, training, evaluation, and deployment. Use clear interfaces so improvements don’t ripple across the system.

Tip: Document interface contracts to keep teams aligned.

5. Train, evaluate, and iterate

Run experiments with proper cross-validation and holdout sets. Compare results against the MVP baseline and iterate with small, auditable changes.

Tip: Log every experiment to avoid reinventing the wheel.

6. Deploy safely with monitoring

Use staged rollout, feature flags, and automated tests. Implement monitoring for latency, accuracy, and drift to detect issues early.

Tip: Have a rollback plan and clear criteria for deprecating features.

7. Governance and compliance

Document decisions, privacy considerations, and bias mitigation steps. Align with organizational policies and regulatory requirements.

Tip: Regularly review governance docs as the tool evolves.

8. Scale and document

Plan for scale by modularizing components and refactoring as needed. Keep comprehensive documentation for onboarding and auditing.

Tip: Automate repetitive maintenance tasks to reduce errors.
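The advice in the steps above to log every experiment can be approximated with an append-only JSON Lines file. This is a minimal stand-in for a dedicated tracker such as MLflow or Weights & Biases, not a replacement for one; the function names, record fields, and sample metric values are assumptions made for this sketch.

```python
import json
import tempfile
import time
from pathlib import Path


def log_experiment(path, params, metrics, data_version):
    """Append one run as a JSON line so earlier runs are never overwritten."""
    record = {
        "timestamp": time.time(),
        "params": params,
        "metrics": metrics,
        "data_version": data_version,
    }
    with Path(path).open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record, sort_keys=True) + "\n")
    return record


def best_run(path, metric):
    """Return the logged run with the highest value for the given metric."""
    with Path(path).open(encoding="utf-8") as handle:
        runs = [json.loads(line) for line in handle if line.strip()]
    return max(runs, key=lambda run: run["metrics"][metric])


# Demonstration in a temporary directory; the scores are invented.
with tempfile.TemporaryDirectory() as tmp:
    log_path = Path(tmp) / "experiments.jsonl"
    log_experiment(log_path, {"model": "baseline"}, {"f1": 0.61}, "v1")
    log_experiment(log_path, {"model": "baseline", "ngrams": 2}, {"f1": 0.67}, "v1")
    winner = best_run(log_path, "f1")

print(winner["params"])  # {'model': 'baseline', 'ngrams': 2}
```

Recording the data version alongside parameters and metrics is what makes a past result reproducible later.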

FAQ

What is the first step to make an AI tool?

The first step is defining the problem and success criteria with stakeholders, which then guide data, model choices, and deployment. Without a clear goal, subsequent work risks misalignment and wasted effort.

How much data do I need to start?

Start with enough data to demonstrate a signal. Use representative, labeled samples and document labeling schemas. You can begin with a small, curated dataset and scale up as you validate the approach.

What about privacy and safety?

Incorporate privacy by design, minimize data collection, and implement safety checks. Create governance documentation to address bias, fairness, and user disclosures.

Should I build from scratch or fine-tune an existing model?

Choose based on the problem, data, and compute. Fine-tuning a pre-trained model can save time if you have good data and clear evaluation metrics, but it still requires careful evaluation and alignment with your data.

How do I measure success after deployment?

Track user impact, latency, error rates, and drift. Use A/B testing when appropriate and maintain a feedback loop from users to drive ongoing improvements.

What is the recommended approach for scaling?

Scale incrementally with modular components, ensure robust testing, and maintain governance. Plan for data growth and infrastructure needs as usage rises.

Key Takeaways

- Define a precise problem and success metrics.

- Build a modular data-driven workflow with governance.

- Use a lightweight MVP and iterate quickly.

- Monitor, audit, and document every decision.

- The AI Tool Resources team recommends applying these practices for responsible, scalable AI tool development.