AI Tool or Threat: A Side-by-Side Comparison for AI Researchers

A balanced, analytical comparison of AI as a tool and AI as a threat, covering capabilities, risks, governance, and deployment patterns to guide developers and researchers toward safe, effective AI solutions.

AI Tool Resources observes that the "AI tool or threat" question describes a double-edged landscape: capable AI tools enable breakthroughs, while misuse, data leakage, and adversarial manipulation can undermine the same efforts. This TL;DR compares core dimensions (capability, risk, governance, and cost) to help developers and researchers quickly decide which approach fits their goals.

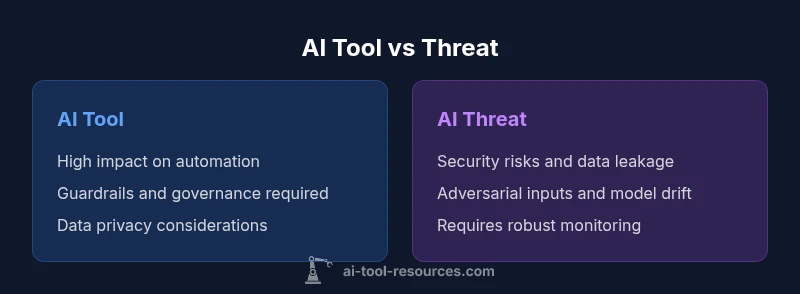

The dual nature of AI: tool or threat

The tool-or-threat question embodies a paradox: the same technologies that enable remarkable advances can also introduce new forms of risk if they are not properly managed. For researchers and developers, this tension demands a structured lens for evaluating both the opportunities and the hazards. AI tools offer speed, scale, and new capabilities, but without thoughtful governance, attention to data privacy, and ongoing monitoring, risks can escalate quickly. This section outlines how the balance shifts with context: prototyping a novel model, deploying a user-facing service, or conducting academic research. The goal is not to deter experimentation but to strengthen the discipline of risk-aware innovation. The framing here draws on AI Tool Resources Analysis, 2026, and aligns with industry best practices for trustworthy AI.

Core dimensions to compare

When weighing AI as a tool against AI as a threat, examine the four core dimensions that typically drive decisions in research settings: capability, safety controls, governance requirements, and cost of ownership. These dimensions interact. More capable tools often demand stronger safety controls and governance, while strict controls can slow progress. Consider who will use the tool, how sensitive the data is, and the regulatory context. Throughout this article, the central question remains: does the potential value justify the investment in safety, compliance, and oversight? The following sections break down these dimensions with practical examples and guidance.

Capabilities and risk vectors

Capability describes what an AI tool can do, from data processing and pattern recognition to autonomous decision-making and complex reasoning. Risks arise when capabilities outpace safeguards or when models are exposed to adversarial inputs. Mitigation strategies include robust validation, red-teaming, explainability features, and access controls. For researchers, it is essential to map each capability to the risk endpoints it touches: data integrity, system reliability, privacy, and safety. This mapping informs both tool selection and experiment design, helping teams avoid overreach while still achieving scientific or engineering objectives.
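One way to make the capability-to-risk mapping concrete is a small lookup that flags risk endpoints left uncovered by the planned mitigations. This is a minimal sketch. The capability labels, the endpoints each one touches, and which mitigation covers which endpoint are all illustrative assumptions. `CAPABILITY_RISKS`, `MITIGATION_COVERS`, and `risk_gaps` are hypothetical names, and a real team would populate the tables from its own threat model.

```python
# Illustrative assumptions: which risk endpoints each capability touches.
CAPABILITY_RISKS: dict[str, set[str]] = {
    "data_processing": {"data_integrity", "privacy"},
    "pattern_recognition": {"data_integrity", "system_reliability"},
    "autonomous_decisions": {"safety", "system_reliability"},
    "complex_reasoning": {"safety"},
}

# Illustrative assumptions: which endpoints each mitigation addresses.
MITIGATION_COVERS: dict[str, set[str]] = {
    "validation": {"data_integrity", "system_reliability"},
    "red_teaming": {"safety"},
    "explainability": {"safety"},
    "access_controls": {"privacy"},
}


def risk_gaps(capabilities: set[str], mitigations: set[str]) -> set[str]:
    """Return endpoints exposed by the capabilities but not covered by any mitigation."""
    exposed = set().union(*(CAPABILITY_RISKS[c] for c in capabilities))
    covered = set().union(*(MITIGATION_COVERS[m] for m in mitigations))
    return exposed - covered


# A tool that processes data and acts autonomously, with only validation planned:
print(sorted(risk_gaps({"data_processing", "autonomous_decisions"}, {"validation"})))
# ['privacy', 'safety']
```

An empty result only means every exposed endpoint has at least one mitigation assigned. It says nothing about whether that mitigation is strong enough.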

Governance, ethics, and compliance considerations

Governance is the backbone of responsible AI work. Clear ownership, data-handling policies, consent mechanisms, and audit trails help ensure that AI adoption aligns with organizational values and legal requirements. Ethical considerations go beyond compliance: transparency about data usage, model behavior, and potential biases builds trust with stakeholders, users, and the broader community. In many settings, governance also covers incident response planning, risk assessments, and periodic policy reviews in light of new findings or regulatory changes. AI Tool Resources emphasizes that governance should be proportional to both risk and data sensitivity.
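Audit trails are most useful when tampering is detectable. A common technique is hash chaining: each log entry stores a hash of the previous entry, so editing a past record breaks every later link. The sketch below uses only the Python standard library. The `AuditTrail` class, its field names, and the example actors and actions are hypothetical and do not correspond to any specific product's API.

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only, hash-chained log (hypothetical sketch, not a specific product's API)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so identical content always hashes identically.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, actor: str, action: str, detail: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        # Shallow copy so later edits to the caller's dict do not silently change the log.
        body = {"ts": time.time(), "actor": actor, "action": action,
                "detail": dict(detail), "prev": prev}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every link. Edited, reordered, or mid-chain deleted entries fail."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.record("alice", "approve_dataset", {"dataset": "survey_v1"})
trail.record("bob", "deploy_model", {"model": "classifier_v2"})
print(trail.verify())  # True
trail.entries[0]["detail"]["dataset"] = "survey_v2"  # simulate tampering
print(trail.verify())  # False
```

Hash chaining makes tampering evident, not impossible. Someone who can rewrite the whole log, or simply drop its newest entries, can still produce a chain that verifies. For that reason, the latest hash should be kept somewhere the log writer cannot modify.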

Decision framework: when to deploy vs pause

A practical decision framework starts with objectives and risk appetite. If the anticipated impact is high and there is significant uncertainty about safety controls, consider a staged rollout, feature flags, or a closely monitored pilot. Conversely, when data flows are well understood, controls are proven, and governance is strong, broader deployment can be pursued. In both cases, establish success criteria tied to safety metrics and incident response readiness, and confirm that you can halt or roll back if issues arise. The aim is to reduce risk while still enabling meaningful experiments and delivering user value.
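The deploy, stage, or pause logic in this framework can be encoded as a small, reviewable function, along with a halt check for monitored safety metrics. This is a sketch under stated assumptions. The boolean assessment fields, the rule that a missing rollback path or incident response plan means pausing, and the metric name `harmful_output_rate` are hypothetical choices made for illustration. None of them is a standard.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    BROAD_DEPLOY = "broad deployment"
    STAGED_ROLLOUT = "staged rollout, feature flags, or pilot"
    PAUSE = "pause"


@dataclass
class Assessment:
    # Hypothetical yes/no inputs summarizing a team's review.
    safety_uncertainty_high: bool
    data_flows_understood: bool
    controls_proven: bool
    governance_strong: bool
    rollback_ready: bool
    incident_response_ready: bool


def decide(a: Assessment) -> Decision:
    # Assumption: without a way to halt/roll back and respond to incidents, nothing ships.
    if not (a.rollback_ready and a.incident_response_ready):
        return Decision.PAUSE
    well_understood = a.data_flows_understood and a.controls_proven and a.governance_strong
    if well_understood and not a.safety_uncertainty_high:
        return Decision.BROAD_DEPLOY
    # Anything short of that, e.g. high impact with uncertain controls, gets limited exposure first.
    return Decision.STAGED_ROLLOUT


def should_halt(metrics: dict[str, float], ceilings: dict[str, float]) -> bool:
    """Halt if any safety metric exceeds its agreed ceiling.

    A metric missing from the report counts as a breach (fail closed).
    """
    return any(metrics.get(name, float("inf")) > limit for name, limit in ceilings.items())


pilot = Assessment(safety_uncertainty_high=True, data_flows_understood=True,
                   controls_proven=False, governance_strong=True,
                   rollback_ready=True, incident_response_ready=True)
print(decide(pilot).value)  # staged rollout, feature flags, or pilot
print(should_halt({"harmful_output_rate": 0.03}, {"harmful_output_rate": 0.01}))  # True
```

Treating a missing metric as a breach is a deliberate fail-closed choice: a broken monitoring pipeline should stop a rollout, not quietly let it continue.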

Deployment patterns that influence risk and value

Patterns such as modular architectures, API-based integrations, and containerized environments influence both risk and value. Modular designs allow each component's risk to be assessed in isolation, while API boundaries and access controls limit exposure. Privacy-preserving techniques, such as data minimization, differential privacy, and on-device processing, can reduce data risk while preserving utility. Observability (logging, metrics, and anomaly detection) is essential for detecting unintended behavior early. These patterns support sustainable, scalable AI work while aligning with governance and ethical standards.
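Two of these privacy-preserving techniques fit in a few lines of standard-library Python. The first is data minimization, implemented as a field allowlist applied before anything reaches the AI tool. The second is the Laplace mechanism, the textbook way to release a count under epsilon-differential privacy.

The sketch makes several assumptions:
- The field names are hypothetical.
- The count query has sensitivity 1.
- Only a single query is shown, so there is no privacy-budget accounting across repeated releases.

Production work would normally rely on a vetted differential-privacy library.

```python
import random


def minimize(record: dict, allowed_fields: set[str]) -> dict:
    """Data minimization: keep only the fields the downstream tool needs."""
    return {k: v for k, v in record.items() if k in allowed_fields}


def noisy_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query (sensitivity 1).

    Noise scale b = sensitivity / epsilon. The difference of two independent
    exponential draws with mean b is Laplace(0, b)-distributed.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = 1.0 / epsilon
    # expovariate takes a rate; rate 1/b gives mean b.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


# Hypothetical record; only the query text is forwarded to the tool.
record = {"user_id": 4812, "email": "a@example.com", "query": "summarize this paper"}
print(minimize(record, {"query"}))  # {'query': 'summarize this paper'}
rng = random.Random(7)
print(noisy_count(120, epsilon=0.5, rng=rng))  # 120 plus Laplace noise with scale 2
```

A smaller epsilon means more noise and stronger privacy. Choosing epsilon is a policy decision that belongs with the governance owners, not only with the engineers.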

Case studies in abstract terms: lessons learned

Consider a research project that prototyped an autonomous decision system in a simulated environment, then transitioned to a controlled real-world setting. Key lessons include the importance of threat modeling from the outset, continuous evaluation of data quality, and the need for a strong incident response plan. In another scenario, a team adopted an open-source tool with built-in guardrails and a transparent governance process, which facilitated peer review, reproducibility, and safer collaboration. These narratives illustrate how thoughtful design choices and governance practices can tilt the balance toward opportunity while mitigating risk.

Economic considerations: ROI, cost of ownership, and risk budgeting

Economic analysis of an AI tool must balance upfront costs, ongoing maintenance, and risk-related expenditures. Cost considerations include licensing, cloud compute, data storage, and the staff needed to implement, monitor, and audit the system. A robust cost-of-ownership model weighs these costs against the value of shorter cycle times and better decisions, and it accounts for the expected cost of incidents or data breaches. The aim is to tie investment to measurable outcomes, so teams can justify the tool choice to stakeholders while maintaining accountability and resilience.
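A risk-adjusted cost-of-ownership model can be simple. Add the expected annual cost of incidents (estimated probability times estimated impact) to the running costs, then compare the total with the estimated annual value. The sketch below is hypothetical, and every figure in it is an input the team must estimate. Because an expected value hides tail risk, a separate risk-budget check caps the expected incident cost.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    # Annual estimates in one currency; values are team inputs, not benchmarks.
    licensing: float
    compute: float
    storage: float
    staffing: float              # people to implement, monitor, and audit the system
    annual_value: float          # e.g. value of shorter cycle times and better decisions
    incident_probability: float  # estimated chance of a significant incident per year
    incident_cost: float         # estimated cost of such an incident (breach, remediation)

    def expected_incident_cost(self) -> float:
        return self.incident_probability * self.incident_cost

    def annual_tco(self) -> float:
        running = self.licensing + self.compute + self.storage + self.staffing
        return running + self.expected_incident_cost()

    def risk_adjusted_roi(self) -> float:
        tco = self.annual_tco()
        return (self.annual_value - tco) / tco

    def within_risk_budget(self, budget: float) -> bool:
        return self.expected_incident_cost() <= budget


model = CostModel(licensing=10_000, compute=20_000, storage=5_000, staffing=40_000,
                  annual_value=120_000, incident_probability=0.1, incident_cost=50_000)
print(model.annual_tco())         # 80000.0
print(model.risk_adjusted_roi())  # 0.5
```

Presenting the risk term separately from running costs makes it easy for stakeholders to see how much of the total is insurance against incidents rather than day-to-day spend.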

Future trends: governance, resilience, and scaling

As AI ecosystems scale, governance frameworks must evolve to keep pace with increasing complexity. This includes more sophisticated threat models, automated compliance checks, and more rigorous verification pipelines. Resilience becomes a design principle: systems should degrade gracefully under attack, offer rapid recovery options, and feed continuous improvement loops. The tool-or-threat balance will likely shift toward explainability, security by design, and collaborative governance that involves developers, researchers, and end users in ongoing risk assessment.

Comparison

| Dimension | AI as a tool (opportunity lens) | AI as a threat (risk lens) |
|---|---|---|
| Impact on projects | High value for automation, insight, and experimentation | Potential for disruption, data exposure, and operational risk |
| Mitigation required | Structured governance, guardrails, and ongoing monitoring | Security controls, threat modeling, and incident response planning |
| Governance requirements | Clear ownership, data-handling policies, and audits | Threat modeling, risk assessments, and compliance checks |
| Data privacy measures | Data minimization, consent management, and anonymization where possible | Leakage protection, access controls, and anomaly monitoring |
| Cost/ROI perspective | Varies with scale and usage, with measurable productivity gains | Cost of security, monitoring, and potential remediation |
| Best for | Innovation-driven, governance-enabled initiatives | Risk-averse or security-critical contexts requiring strict controls |

Upsides

- Clear decision framework reduces risk of misapplication

- Promotes responsible innovation with governance and transparency

- Cost-effective when paired with proper monitoring

- Standardizes tool selection across teams and projects

- Supports reproducibility and auditability in research

Weaknesses

- Governance overhead can slow experimentation

- Overly rigid controls may hinder creativity and speed

- Requires ongoing staffing for monitoring, audits, and updates

Governed AI deployments win; prioritize tool capabilities with robust safety controls.

A balanced approach is best: leverage capable AI tools while embedding governance, safety, and monitoring. The threat side remains critical, but proactive risk management and continuous auditing reduce exposure and improve outcomes.

FAQ

What is meant by "AI tool or threat"?

"AI tool or threat" refers to the dual reality of AI: powerful tools that enable progress, and risks from misuse or failure. Understanding both sides helps teams design safer, more effective systems. This framing supports risk-aware experimentation and governance.

In short, it means weighing value against risk whenever you use AI. Start with governance, then assess safety and data policies as you experiment.

How do you evaluate AI tools vs threats?

Begin with objectives and risk tolerance, map data flows, assess safety features, run threat modeling, and set up monitoring and incident response. The process should be iterative, not a one-time check.

Start by clarifying goals, assess safety and data handling, model threats, and set up ongoing monitoring.

What governance practices reduce risk?

Establish clear ownership, data policies, consent mechanisms, and audit trails. Regular reviews, transparent reporting, and documented decision criteria help keep AI work accountable.

Define who owns what, keep good data policies, and audit what you’re doing.

Which tools are best suited to researchers?

Prefer tools with strong guardrails, open documentation, and an active community. Favor privacy-preserving options and those that support reproducibility and safe experimentation.

Look for guardrails, clear docs, and privacy features that help you reproduce results safely.

What are common threats to consider?

Data leakage, model drift, adversarial manipulation, and supply chain risks are common concerns. Proactive threat modeling and robust monitoring help detect and mitigate these issues.

Watch for data leaks, drift, and adversarial tricks.
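Model drift is often monitored by comparing the distribution of a model input or score in production against a reference window. One widely used statistic for this is the Population Stability Index (PSI), sketched below over pre-binned proportions. The epsilon floor and the rule-of-thumb reading in the comments (below about 0.1 stable, above about 0.25 significant shift) are conventions, not fixed standards. Thresholds should be tuned per use case, and the bin counts in the example are made up.

```python
import math


def to_proportions(counts: list[int]) -> list[float]:
    total = sum(counts)
    return [c / total for c in counts]


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e).

    Inputs are bin proportions from the reference (expected) and current (actual)
    windows; tiny values are floored at eps to avoid division by zero and log(0).
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


reference = to_proportions([500, 300, 200])  # hypothetical score bins at validation time
current = to_proportions([200, 300, 500])    # same bins in production this week
score = psi(reference, current)
# Rule of thumb (an assumption, tune per use case): < 0.1 stable, > 0.25 significant drift.
print(round(score, 3), "drift alert" if score > 0.25 else "ok")
```

PSI flags that a distribution moved, not why. An alert should trigger investigation of data quality, upstream changes, or adversarial inputs rather than an automatic retrain.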

How do you start a risk assessment for an AI tool?

Map data flows, assign ownership, model threats, and run tabletop exercises. Establish a clear incident response plan before deployment.

Map data, assign owners, model threats, and drill response plans.
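These steps can be captured in a lightweight risk register. Each threat is tied to a data flow and an owner, scored by likelihood times impact, and then triaged. This is a minimal sketch. The 1-to-5 scales, the review threshold of 12, and the example entries are hypothetical choices, not an established standard.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    threat: str
    data_flow: str
    owner: str        # empty string means nobody has been assigned yet
    likelihood: int   # 1 (rare) to 5 (almost certain)
    impact: int       # 1 (minor) to 5 (severe)

    def __post_init__(self) -> None:
        if not (1 <= self.likelihood <= 5 and 1 <= self.impact <= 5):
            raise ValueError(f"{self.threat}: scores must be between 1 and 5")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def triage(register: list[Risk], threshold: int = 12) -> tuple[list[Risk], list[Risk]]:
    """Return (risks at or above threshold, highest first) and (risks with no owner)."""
    urgent = sorted((r for r in register if r.score >= threshold),
                    key=lambda r: r.score, reverse=True)
    unowned = [r for r in register if not r.owner]
    return urgent, unowned


register = [
    Risk("data leakage", "user prompts -> external API", "alice", likelihood=4, impact=4),
    Risk("model drift", "training data -> production", "bob", likelihood=3, impact=2),
    Risk("supply chain", "third-party model download", "", likelihood=2, impact=5),
]
urgent, unowned = triage(register)
print([r.threat for r in urgent])   # ['data leakage']
print([r.threat for r in unowned])  # ['supply chain']
```

Reporting unowned risks separately reflects the "assign owners" step. A moderate-scoring risk with no owner is often a bigger process gap than a high-scoring one that someone is already handling.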

Key Takeaways

- Prioritize governance alongside tool selection, not after it

- Balance capability with safety controls

- Implement continuous monitoring and auditing

- Define clear data handling and privacy policies

- Reassess regularly as tech and regulations evolve