AI Tool vs Agent: A Practical Side-by-Side Comparison

A rigorous comparison of AI tools and AI agents, highlighting when to use each, how they differ, and how to design effective hybrid workflows for researchers and developers.

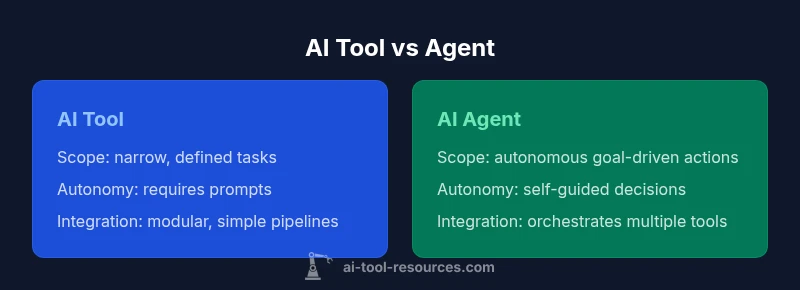

At a high level, an AI tool is a software component that performs a defined task, while an AI agent acts autonomously to pursue goals within a given environment. This comparison shows where each approach excels, to help developers and researchers choose tools and design workflows.

What Are AI Tools and AI Agents?

In practice, the phrase "AI tool vs. agent" distinguishes two architectural patterns for automating work. An AI tool is a software artifact designed to perform a specific function, often under direct user control: think of a language-model-based summarizer, a code formatter, or a data-visualization recommender. An AI agent, by contrast, has a degree of autonomy: it can observe a problem, select actions, and pursue outcomes without a step-by-step prompt for every move. An agent can therefore orchestrate multiple tools, set goals, monitor outcomes, and adapt its behavior to changing inputs.

For researchers and developers, the distinction matters because it maps to governance, testing strategies, and risk management. An AI tool can be treated as an instrument whose outputs are predictable within defined boundaries (though model-backed tools may still vary from run to run), while an AI agent is a decision-maker that requires safeguards, monitoring, and sometimes human oversight. The two patterns therefore support different workflow archetypes: repeatable, auditable tasks versus dynamic, goal-driven execution. Across domains, from data science to robotics, the choice between tool and agent depends on task complexity, data availability, and the desired level of autonomy.
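
The contrast can be made concrete with a toy sketch. Everything here is an illustrative assumption rather than a reference design: `word_count_tool` and `truncate_tool` stand in for tools (single functions the caller invokes explicitly), and `shortening_agent` stands in for an agent (a loop that observes, checks a goal, and chooses an action, with a step budget as a simple safeguard).

```python
from typing import List, Tuple


def word_count_tool(text: str) -> int:
    """Tool: one narrow function; the caller decides when to run it."""
    return len(text.split())


def truncate_tool(text: str, max_words: int) -> str:
    """Tool: keep only the first max_words words."""
    return " ".join(text.split()[:max_words])


def shortening_agent(
    text: str, goal_max_words: int, max_steps: int = 3
) -> Tuple[str, List[str]]:
    """Toy agent: observe, check the goal, pick an action, repeat."""
    trace: List[str] = []
    for _ in range(max_steps):
        count = word_count_tool(text)               # observe
        trace.append(f"observe:{count}")
        if count <= goal_max_words:                 # goal met, stop
            trace.append("done")
            return text, trace
        text = truncate_tool(text, goal_max_words)  # act
        trace.append("act:truncate")
    trace.append("stopped:max_steps")               # step budget exhausted
    return text, trace


if __name__ == "__main__":
    result, trace = shortening_agent("one two three four five six seven", 3)
    print(result)
    print(trace)
```

The tools have no notion of a goal; the agent does, and it also records a trace of what it observed and did, which is the raw material for the monitoring discussed later.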

According to AI Tool Resources, understanding this distinction helps teams align governance, testing, and risk management with the intended use case.

Core Capabilities: Task Narrowness vs Autonomy

Tools excel when tasks are narrow and repeatable. Agents shine when the environment is uncertain or when goals require strategic planning. In a typical pipeline, a tool might generate data, clean it, or format results; an agent might decide which tools to invoke, in what order, and how to adjust strategy when results diverge from expectations. The distinction matters for testing: tools are easier to unit test, whereas agents require end-to-end validation, monitoring, and guardrails. For AI Tool Resources users, the practical effect is that you can stage risk by starting with a well-scoped tool and graduating to an agent only once you understand data quality, latency, and governance requirements. In the end, the tool-versus-agent question is a spectrum, not a binary choice, with many real-world systems sitting between the two poles.
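
The testing difference might look like the following sketch. It assumes a hypothetical `clean_tool` and represents an agent run as a plain list of tool names; the tool is checked with exact input/output pairs, while the agent run is checked against invariants (a step budget and an allow-list), since its exact path is not known in advance.

```python
from typing import List, Set


def clean_tool(value: str) -> str:
    """Tool under test: trims whitespace and lowercases a string."""
    return value.strip().lower()


def test_clean_tool() -> None:
    # Unit test: fixed input/output pairs are enough for a narrow tool.
    assert clean_tool("  Hello ") == "hello"
    assert clean_tool("WORLD") == "world"


def validate_trace(trace: List[str], allowed: Set[str], max_steps: int) -> List[str]:
    """End-to-end check for one agent run: return guardrail violations."""
    violations: List[str] = []
    if len(trace) > max_steps:
        violations.append(f"step budget exceeded: {len(trace)} > {max_steps}")
    for step in trace:
        if step not in allowed:
            violations.append(f"disallowed tool: {step}")
    return violations


if __name__ == "__main__":
    test_clean_tool()
    print(validate_trace(["clean", "delete_db", "clean"], {"clean"}, max_steps=2))
```

An empty list from `validate_trace` means the run stayed inside its guardrails; it says nothing about whether the result was correct, which still needs outcome checks.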

Decision Framework: When to Use Each

- Define the task and expected outcomes clearly. If the outcome is well-defined and repeatable, a tool is often the safer starting point.

- Assess autonomy needs. If the environment is dynamic and decisions must adapt at run time, an agent may be justified.

- Consider governance and safety constraints. If you require strict auditing, a tool-first approach with human-in-the-loop is often preferable.

- Prototype with a tool. Use fast feedback loops to validate inputs, outputs, and error handling before scaling to an agent.

- Extend to an agent only if the metrics justify it. If latency, reliability, and risk are acceptable, introduce autonomous decision-making gradually.

- Monitor, log, and iterate. Establish dashboards that surface decision traces, actions taken, and outcomes achieved.
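
One way to make the checklist above explicit is to encode it as a small function. This is a sketch only: the `TaskProfile` fields, the order in which the questions are asked, and the returned labels are assumptions made for illustration, not a rule stated by the framework.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    well_defined_outcome: bool      # outcome is clear and repeatable
    dynamic_environment: bool       # decisions must adapt at run time
    strict_audit_required: bool     # governance demands strict auditing
    tool_prototype_validated: bool  # a tool-based prototype already works


def recommend_pattern(task: TaskProfile) -> str:
    """Walk the checklist in one assumed priority order."""
    if task.strict_audit_required:
        return "tool with human-in-the-loop"
    if task.well_defined_outcome and not task.dynamic_environment:
        return "tool"
    if not task.tool_prototype_validated:
        return "prototype with a tool first"
    return "agent, introduced gradually"


if __name__ == "__main__":
    print(recommend_pattern(TaskProfile(True, False, False, False)))
    print(recommend_pattern(TaskProfile(False, True, False, True)))
```

Writing the rule down this way also makes it reviewable: a team that disagrees with the ordering can change one line instead of debating a vague guideline.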

Hybrid Patterns: Tools and Agents Together

Many real-world systems blend both approaches: a tool performs specialized tasks, while an agent coordinates the task flow, manages exceptions, and adapts strategy. This hybrid pattern is particularly effective in data pipelines, software automation, and research experiments. Design considerations include clear handoffs, contextual memory, and guardrails that prevent unintended actions. In practice, you might reserve agent autonomy for high-level sequencing and use tools for precise computations, data transformations, and verification steps. The hybrid approach can reduce risk while preserving the flexibility needed to handle evolving requirements, data quality issues, and changing constraints. For teams weighing tools against agents, a staged rollout (start with tooling, then validate autonomous orchestration) often yields the best balance between safety and productivity.
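
A minimal sketch of this hybrid pattern follows, under several assumptions: the tools (`load`, `drop_missing`, `total`) are hypothetical, the shared `ctx` dictionary plays the role of contextual memory, the `REGISTRY` allow-list is the guardrail, and the only exception the coordinator knows how to repair is a `TypeError` caused by missing values.

```python
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]


def load(ctx: Context) -> Context:
    return {**ctx, "rows": [3, None, 5]}  # toy data with one missing value


def drop_missing(ctx: Context) -> Context:
    return {**ctx, "rows": [r for r in ctx["rows"] if r is not None]}


def total(ctx: Context) -> Context:
    return {**ctx, "total": sum(ctx["rows"])}  # raises TypeError on None


# Guardrail: the coordinator may only call tools registered here.
REGISTRY: Dict[str, Callable[[Context], Context]] = {
    "load": load,
    "drop_missing": drop_missing,
    "total": total,
}


def run_hybrid(plan: List[str]) -> Context:
    """Coordinator: sequences tools, blocks unknown ones, repairs one failure mode."""
    ctx: Context = {"log": []}
    for name in plan:
        if name not in REGISTRY:
            ctx["log"].append(f"blocked:{name}")
            continue
        try:
            ctx = REGISTRY[name](ctx)  # handoff: context in, context out
            ctx["log"].append(f"ok:{name}")
        except TypeError:
            # Exception handling: clean the data, then retry the step once.
            ctx = REGISTRY[name](drop_missing(ctx))
            ctx["log"].append(f"recovered:{name}")
    return ctx


if __name__ == "__main__":
    out = run_hybrid(["load", "shell_exec", "total"])
    print(out["total"], out["log"])
```

The precise work stays in small, testable tools; the coordinator owns only sequencing, the allow-list, and recovery. Other failures (for example, calling `total` before `load`) are deliberately left unhandled in this sketch.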

Governance, Safety, and Compliance Considerations

Autonomy introduces governance challenges. Agents must operate within defined safety envelopes, with explicit constraints, logging, and escalation paths. Data governance becomes critical when agents access sensitive datasets or external APIs. Developers should implement fail-safes, monitoring dashboards, and audit trails that capture decisions, tool selections, and outcomes. Bias and fairness considerations should be built into evaluation criteria, especially for agents that influence user-facing decisions. As AI Tool Resources frequently notes, establishing clear ownership, versioning for tools and agents, and robust testing regimes helps mitigate risk. When designing a system that uses tools, agents, or both, ask: Who owns the decision? How will the system be audited? What happens when the agent malfunctions or encounters novel inputs?
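
The audit-trail and escalation ideas can be sketched as a gate that every proposed agent action passes through. The names here are hypothetical (`HIGH_RISK_TOOLS`, the `data-team` owner, the `0.8` confidence threshold); the point is only that each decision is recorded with an owner and a version, and that risky or uncertain actions are routed to a human.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class AuditRecord:
    agent_version: str
    owner: str
    tool: str
    decision: str
    reason: str


# Assumption: these tool names are placeholders for actions a team deems risky.
HIGH_RISK_TOOLS = {"send_email", "delete_records"}


def gate(
    tool: str,
    confidence: float,
    trail: List[AuditRecord],
    *,
    owner: str = "data-team",
    agent_version: str = "0.1.0",
    min_confidence: float = 0.8,
) -> str:
    """Decide whether an action may proceed, and log the decision either way."""
    if tool in HIGH_RISK_TOOLS:
        decision, reason = "escalate", "high-risk tool"
    elif confidence < min_confidence:
        decision, reason = "escalate", "low confidence"
    else:
        decision, reason = "allow", "within safety envelope"
    trail.append(AuditRecord(agent_version, owner, tool, decision, reason))
    return decision


def export_trail(trail: List[AuditRecord]) -> str:
    """Serialize the trail as JSON Lines for later review."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in trail)


if __name__ == "__main__":
    trail: List[AuditRecord] = []
    gate("summarize", 0.95, trail)
    gate("delete_records", 0.99, trail)
    print(export_trail(trail))
```

Each record answers two of the questions above directly: who owns the decision, and how it can be audited.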

Integration and Ecosystem Fit

Both tools and agents rely on a broader ecosystem of libraries, APIs, and data sources. A tool typically integrates as a microservice or module with well-defined inputs and outputs, making it easier to version, test, and deploy. An agent integrates by coordinating multiple services, maintaining state, and reacting to feedback, which can complicate deployment and monitoring. From an engineering perspective, AI Tool Resources emphasizes the importance of interface contracts, observability, and dependency management. When weighing a tool against an agent, map out your system's data model, latency targets, and failure modes. A well-designed integration plan reduces friction when you scale from a single tool to a multi-tool, multi-agent system.
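
An interface contract for tools could be expressed with Python's `typing.Protocol`, as in the sketch below. The contract itself (`ToolContract`, `ToolRequest`, `ToolResponse`) and the example `UppercaseTool` are hypothetical names chosen for illustration; a real system would define richer request and response types.

```python
from dataclasses import dataclass
from typing import Protocol, runtime_checkable


@dataclass(frozen=True)
class ToolRequest:
    payload: str


@dataclass(frozen=True)
class ToolResponse:
    output: str
    tool_version: str  # lets callers trace which version produced the output


@runtime_checkable
class ToolContract(Protocol):
    """Anything with a name, a version, and a run() method counts as a tool."""

    name: str
    version: str

    def run(self, request: ToolRequest) -> ToolResponse: ...


class UppercaseTool:
    name = "uppercase"
    version = "1.0.0"

    def run(self, request: ToolRequest) -> ToolResponse:
        return ToolResponse(request.payload.upper(), self.version)


if __name__ == "__main__":
    tool = UppercaseTool()
    # The runtime check confirms the members exist; it does not check types.
    print(isinstance(tool, ToolContract))
    print(tool.run(ToolRequest("abc")))
```

Because the contract is structural, tools do not need to inherit from a shared base class, which keeps them deployable as independent modules. Static type checkers verify the signatures; the `isinstance` check only confirms that the named members are present.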

Cost, Maintenance, and Total Cost of Ownership

Cost models for tools and agents can differ significantly. Tools often require per-use charges or fixed subscriptions, with predictable cost of ownership when workloads are stable. Agents may incur higher upfront integration costs and ongoing compute or orchestration expenses due to state management and decision-making overhead. In practice, planning for total cost of ownership means evaluating not just the price of each component, but the cost of governance, monitoring, and potential risk mitigation. AI Tool Resources notes that the most economical solution is often the one that minimizes rework and manual intervention while delivering high-quality outputs.
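
A rough way to compare the two cost profiles is to add up the components named above over a planning horizon. The function below is a simplified sketch, and every figure in the usage example is invented purely to show the arithmetic; none of them comes from real pricing.

```python
def total_cost_of_ownership(
    upfront: int,
    monthly_run: int,
    monthly_governance: int,
    manual_hours_per_month: int,
    hourly_rate: int,
    months: int,
) -> int:
    """Upfront cost plus recurring run, governance, and manual-intervention costs."""
    monthly = monthly_run + monthly_governance + manual_hours_per_month * hourly_rate
    return upfront + months * monthly


if __name__ == "__main__":
    # Invented figures: the tool is cheap to set up but needs more manual work;
    # the agent costs more upfront and to run, but needs less intervention.
    for months in (12, 24):
        tool = total_cost_of_ownership(2000, 300, 100, 40, 50, months)
        agent = total_cost_of_ownership(15000, 900, 400, 5, 50, months)
        print(months, tool, agent)
```

With these made-up inputs the tool is cheaper over a short horizon and the agent overtakes it between months 15 and 16, which illustrates why manual intervention and governance belong in the comparison alongside the sticker price.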

Real-World Scenarios and Hypothetical Case Studies

Consider a research team building an automated literature summarizer. A tool could handle summarization with a fixed prompt template, while an agent could decide which sources to prioritize, request new data, and re-run analyses if results diverge. In a customer support setting, a tool might format responses, but an agent could orchestrate multiple tools (translation, sentiment analysis, knowledge base lookup) to craft context-aware replies. These examples illustrate how the choice between tool and agent affects throughput, accuracy, and user experience. For developers, the lesson is to pilot with deterministic tooling and then scale to autonomous orchestration when the benefit justifies the added complexity and risk.
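
The customer support scenario can be sketched with stub tools. All four tools below are hypothetical stand-ins with hard-coded behavior (a real system would call translation, sentiment, and search services); what the sketch shows is the agent choosing which tools to invoke based on what it observes, rather than running a fixed sequence.

```python
from typing import List, Tuple


def detect_language(text: str) -> str:
    # Stub: treats messages starting with "hola" as Spanish.
    return "es" if text.lower().startswith("hola") else "en"


def translate_to_english(text: str) -> str:
    # Stub: a one-entry lookup table instead of a translation service.
    table = {"hola, mi pedido viene roto": "hello, my order came broken"}
    return table.get(text.lower(), text)


def sentiment(text: str) -> str:
    # Stub: keyword match instead of a sentiment model.
    return "negative" if any(w in text.lower() for w in ("broken", "refund")) else "neutral"


def kb_lookup(text: str) -> str:
    # Stub: two canned knowledge base answers.
    if "broken" in text.lower():
        return "Replacement policy: we ship a new item within 5 days."
    return "General help: see the FAQ."


def support_agent(message: str) -> Tuple[str, List[str]]:
    """Pick tools based on the message and return the reply plus the tools used."""
    used = ["detect_language"]
    text = message
    if detect_language(message) != "en":  # translate only when needed
        text = translate_to_english(message)
        used.append("translate_to_english")
    mood = sentiment(text)
    used.append("sentiment")
    article = kb_lookup(text)
    used.append("kb_lookup")
    opener = "We're sorry about the trouble. " if mood == "negative" else "Thanks for reaching out. "
    return opener + article, used


if __name__ == "__main__":
    print(support_agent("Hola, mi pedido viene roto"))
    print(support_agent("Where is the FAQ?"))
```

Returning the list of tools used alongside the reply keeps the agent's choices visible, so a reviewer can see why two similar messages took different paths.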

Best Practices, Pitfalls, and a Practical Roadmap

- Start with well-scoped tools before introducing agents. This reduces risk and accelerates learning.

- Build strong monitoring and auditing for both tools and agents. Trace decisions, inputs, and outcomes.

- Establish governance gates: human-in-the-loop reviews for critical decisions and high-risk tasks.

- Define clear success metrics aligned with business and research goals. Monitor feedback and adjust thresholds as needed.

- Document interfaces, versioning, and rollback plans for all components. Avoid speculative behavior by designing testable, deterministic paths.
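
The monitoring and success-metric practices above could start from something as small as the following sketch. The `RunRecord` fields and the three metrics (accuracy, mean latency, human-intervention rate) are assumptions chosen for illustration; a real deployment would add governance checks and percentile latencies.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class RunRecord:
    correct: bool           # did the output pass its quality check?
    latency_ms: float       # end-to-end time for the run
    human_intervened: bool  # did a person have to step in?


def summarize_runs(runs: List[RunRecord]) -> Dict[str, float]:
    """Aggregate per-run records into the metrics a dashboard would track."""
    if not runs:
        raise ValueError("no runs to summarize")
    n = len(runs)
    return {
        "accuracy": sum(r.correct for r in runs) / n,
        "mean_latency_ms": mean(r.latency_ms for r in runs),
        "intervention_rate": sum(r.human_intervened for r in runs) / n,
    }


if __name__ == "__main__":
    runs = [
        RunRecord(True, 100.0, False),
        RunRecord(True, 200.0, False),
        RunRecord(False, 300.0, True),
        RunRecord(True, 200.0, False),
    ]
    print(summarize_runs(runs))
```

Tracking the intervention rate over time is a simple signal for when a tool-based workflow is ready for more autonomy, or when an agent needs tighter guardrails.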


Comparison

| Feature | AI Tool | AI Agent |
|---|---|---|
| Scope | Narrow, well-defined tasks | Broad, goal-driven capabilities |
| Autonomy | Requires explicit prompts and orchestration | Operates autonomously within a defined environment |
| Decision-Making | Deterministic outputs within prompts | Dynamic decision-making with feedback loops |
| Control & Oversight | High user control and visibility | Guarded autonomy with escalation paths |
| Integration | Modular, easy to plug into pipelines | Orchestrates multiple services with state management |
| Cost & Maintenance | Predictable, per-use or per-seat costs | Higher upfront integration with ongoing compute |
| Best For | Repeatable tasks, auditable results | Complex, evolving workflows requiring decisions |

Upsides of a Tool-First Approach

- Clear boundaries and auditable results

- Lower complexity for straightforward tasks

- Faster setup for simple workflows

- Easier governance and compliance controls

- Predictable performance within defined constraints

Weaknesses of a Tool-First Approach

- Limited adaptability beyond defined tasks

- Requires manual orchestration for multi-step processes

- Less capable in highly uncertain environments

- Risk of tool sprawl without governance

AI tool vs agent: Neither is universally superior; a hybrid approach often delivers the best balance.

Start with tools for defined tasks and progressively add agent capabilities as needs grow. Prioritize governance, data access, and risk management to determine the right balance for your workflow.

FAQ

What is an AI tool?

An AI tool is a software module designed to perform a specific task under user control, with defined inputs and outputs. It excels at deterministic, repeatable operations and is easier to test and audit.


What is an AI agent?

An AI agent operates autonomously to achieve goals within an environment, often coordinating multiple tools and adapting to changing inputs. It requires governance, monitoring, and safeguards.


Can I combine tools and agents?

Yes; many workflows blend both, using tools for tasks and an agent to orchestrate those tasks toward outcomes. Start with a tool and incrementally add agent capabilities as needed.


How do I measure success?

Define clear success metrics aligned with outcomes, such as accuracy, latency, governance adherence, and the rate of human intervention. Track these metrics over time and iterate.


What are common risks?

Risks include misalignment, bias, data leakage, and unpredictable agent behavior. Mitigate with tests, logging, escalation paths, and robust safety constraints.


Is a tool or agent better for education or research?

Education and research often benefit from tools for controlled experiments and agents for exploring autonomous strategies. Choose based on tasks, supervision needs, and governance requirements.


Key Takeaways

- Define the task to pick the right pattern

- Agents offer autonomy but require governance

- Tools are safer for auditable, repeatable tasks

- Hybrid patterns provide flexibility and resilience

- Plan for monitoring, logging, and governance from day one