AI Agent vs AI Tool: A Comprehensive Comparison

Compare AI agents and AI tools—autonomy, use cases, governance, ROI, and integration. A practical guide for developers, researchers, and students to choose the right approach.

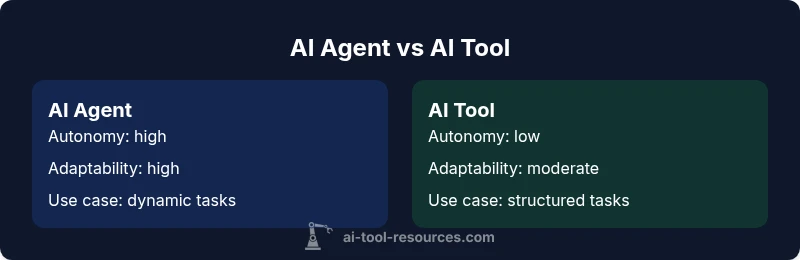

AI agents and AI tools describe two distinct patterns of automation. An AI agent operates with a degree of autonomy, selecting actions to achieve goals in changing environments, while an AI tool supports human tasks with AI-powered capabilities. When deciding between them, assess how much autonomy you can safely manage, how dynamic the workflow is, and the level of integration you can sustain. This quick comparison frames the decision around autonomy, governance, and ROI for complex projects.

What is an AI agent?

Comparing AI agents with AI tools is a common framing in modern AI discussions. An AI agent is designed to act with a degree of autonomy: it selects actions, pursues goals, and adapts to changing circumstances without needing constant human input. This autonomy enables end-to-end task execution, multi-step decision making, and the ability to recover from unexpected events. However, autonomy introduces governance and safety considerations: policies, monitoring, and fail-safes are essential to ensure alignment with intent. According to AI Tool Resources, the decision to deploy autonomous agents hinges on whether the environment is stable enough to predict risk and whether the organization can implement the necessary feedback loops and oversight. For developers, researchers, and students, recognizing this distinction early helps map data flows, API surfaces, and evaluation metrics to real-world goals. The broader context includes agent orchestration, explainability needs, and the potential for agents to collaborate with humans to augment cognitive work. The AI Tool Resources team emphasizes that success depends on clear autonomy boundaries and robust risk controls.

In practice, agents are most valuable when tasks require continual adjustment, learning from results, and operating without micromanagement. They often rely on a combination of planning, perception, and action-selection components, plus a medium-to-large data footprint to improve over time. As you weigh AI agents against AI tools, consider how much autonomous behavior you can safely support, what monitoring you require, and how quickly you need to respond to failures or drift.
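To make the perception, planning, and action-selection pattern concrete, here is a minimal sketch of an agent loop in Python. The toy `Environment`, the `perceive`, `plan`, and `act` method names, and the `max_steps` cap are all illustrative assumptions rather than the API of any particular agent framework.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    """Toy environment (hypothetical): a single number the agent nudges."""
    value: int = 0


@dataclass
class Agent:
    goal: int
    max_steps: int = 20  # fail-safe: hard cap on autonomous steps
    history: list = field(default_factory=list)

    def perceive(self, env: Environment) -> int:
        # Read the current state of the environment.
        return env.value

    def plan(self, observation: int) -> int:
        # Choose a step of -1, 0, or +1 that moves toward the goal.
        if observation < self.goal:
            return 1
        if observation > self.goal:
            return -1
        return 0

    def act(self, env: Environment, action: int) -> None:
        # Apply the action and keep a record of it.
        env.value += action
        self.history.append(action)

    def run(self, env: Environment) -> bool:
        """Loop until the goal is reached or the step cap is hit."""
        for _ in range(self.max_steps):
            action = self.plan(self.perceive(env))
            if action == 0:
                return True
            self.act(env, action)
        return self.perceive(env) == self.goal
```

Calling `Agent(goal=7).run(Environment(3))` walks the value up to the goal without any human input, while a small `max_steps` shows how a simple fail-safe stops a run that cannot finish in time.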

What is an AI tool?

An AI tool is typically a software component or service that augments human effort rather than replacing it. It accepts user input, offers AI-assisted capabilities (such as generation, classification, or optimization), and returns results that a human then acts upon. AI tools are designed to fit into human workflows: they are modular, API-driven, and often require explicit task framing. In many organizations, teams start with AI tools to prototype capabilities, validate use cases, and establish data pipelines before scaling to autonomous agents. Governance for tools tends to emphasize auditable inputs, versioned models, and clear human-in-the-loop controls. The AI Tool Resources framework notes that a tool-first approach can reduce risk when autonomy targets are unclear or when regulatory constraints limit automated decision-making. For learners, hands-on experiences with tools illuminate data requirements, input/output contracts, and how feedback loops influence performance. Tools also enable rapid experimentation and modular integration into existing systems.

From a practical standpoint, starting with AI tools helps teams prove value quickly, establish baselines, and design the data infrastructure that will later support more autonomous solutions if needed.
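The tool pattern described above (explicit input, AI-assisted output, human decision) can be sketched as follows. This is only an illustration: `classify_ticket`, `ToolResult`, `human_decision`, and the `keyword-v1` version string are hypothetical names, and a keyword rule stands in for a real model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolResult:
    label: str
    confidence: float
    model_version: str  # recorded so outputs can be audited later


def classify_ticket(text: str, model_version: str = "keyword-v1") -> ToolResult:
    """Hypothetical AI tool: a keyword rule stands in for a real model."""
    lowered = text.lower()
    if "refund" in lowered or "charge" in lowered:
        return ToolResult("billing", 0.9, model_version)
    if "password" in lowered or "login" in lowered:
        return ToolResult("account", 0.8, model_version)
    return ToolResult("general", 0.5, model_version)


def human_decision(result: ToolResult, approved: bool, override: str = "") -> str:
    """The human keeps final authority: accept the suggestion or override it."""
    return result.label if approved else override
```

The tool only suggests a label; nothing happens until a person accepts or overrides it, which is the human-in-the-loop control the section describes.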

Core differences: autonomy, adaptability, and control

- Autonomy: AI agents are designed to act with self-directed initiative, whereas AI tools rely on human guidance.

- Adaptability: Agents adjust strategies based on ongoing feedback and changing contexts; tools operate within predefined rules and scopes.

- Control: Agents require runtime governance, safety rails, and continuous monitoring; tools emphasize explicit permissions, traceability, and human-in-the-loop oversight.

- Scope: Agents tackle complex, multi-step problems and dynamic environments; tools address narrower, well-defined tasks.

These core differences shape architecture, data requirements, and risk profiles. A well-chosen path often blends both: deploying tools in early stages and gradually layering agent capabilities as governance maturity and data quality improve.

In practice, organizations should map autonomy boundaries, define success metrics, and align them with regulatory and safety requirements. Framing the choice as AI agent versus AI tool helps you decide on orchestration layers, data feedback loops, and how you allocate responsibility between machine and human operators. The AI Tool Resources approach emphasizes incremental progression: start with controlled tooling, validate outcomes, then extend toward autonomous agents where the payoff justifies the added complexity.
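One way to make an autonomy boundary explicit is a small policy gate that decides, per action, whether the system may proceed on its own. The sketch below is an assumption-laden illustration: the action names, the risk ceilings in `AUTONOMY_POLICY`, and the idea of a single numeric `risk_score` are all hypothetical.

```python
from enum import Enum


class Decision(Enum):
    AUTO = "execute automatically"
    ESCALATE = "send to a human"
    BLOCK = "refuse"


# Hypothetical policy: the maximum risk at which each action may run unattended.
AUTONOMY_POLICY = {
    "send_reminder_email": 0.8,
    "restart_service": 0.4,
    "issue_refund": 0.0,  # a ceiling of 0.0 means a human always signs off
}


def gate(action: str, risk_score: float) -> Decision:
    """Decide whether an action falls inside the autonomy boundary."""
    max_risk = AUTONOMY_POLICY.get(action)
    if max_risk is None:
        return Decision.BLOCK  # unknown actions are never autonomous
    if risk_score < max_risk:
        return Decision.AUTO
    return Decision.ESCALATE
```

Keeping the policy in data rather than scattered through code makes it easier to review and to widen gradually as governance matures.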

For researchers and developers, the distinction informs choice of architectures (planning, perception, actuation for agents vs model-driven components for tools) and influences testing strategies, from unit tests to simulation-based validation.

When to choose AI agent vs AI tool: Use cases by complexity

When the task demands end-to-end automation, dynamic decision making, and continuous adaptation, an AI agent often offers the best return on investment. Use cases include process orchestration across multiple systems, autonomous data gathering and decision loops, and tasks where timing and context shift outcomes. Organizations pursuing operational resilience may prefer agents for time-sensitive workflows, incident response, or real-time optimization where human intervention would be too slow. On the other hand, AI tools excel in well-defined, repeatable tasks with clear input/output expectations, strict auditability requirements, and limited risk of cascading failures. They are ideal for rapid prototyping, content generation within controlled boundaries, and enhancing human productivity in decision support, where humans retain ultimate authority. For introductory pilots, tools enable rapid feedback and knowledge transfer while preserving governance and safety constraints. The AI Tool Resources framework suggests starting with tools to validate the use case and data pipelines before considering agent-level automation.

In education and research settings, tools often provide a low-friction path to explore capabilities and measure potential ROI. In industrial environments with regulatory constraints, a tool-first approach can reduce risk while teams build the data quality and governance foundations needed for agents later. The decision hinges on a practical balance between autonomy, control, and the organization’s risk tolerance.

In summary, choose an AI agent when the task requires autonomous, adaptive behavior; choose an AI tool when the task is structured, predictable, and human-guided.

Integration, data governance, and risk considerations

Adopting AI agents or AI tools requires careful attention to data governance, security, and compliance. Agents demand robust data pipelines, runtime monitoring, and explainability to ensure actions align with policy and intent. You’ll need to design feedback loops, model versioning, and audit trails that show how decisions were made and refined over time. Privacy and data minimization become central as agents access broader context and potentially sensitive signals. Tools, while generally easier to audit, still require clear input contracts, version control, and access governance to prevent misuse and data leakage. Regardless of path, establish a governance framework that defines ownership, risk appetite, and escalation procedures. Consider safety guardrails, consent models, and transparent logging to support regulatory reviews. AI Tool Resources highlights that governance complexity grows with autonomy; plan for staged capability increases and independent validation as you scale.
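An audit trail that shows how decisions were made can start as an append-only log in which each record is hashed together with the previous record's hash, so later edits are detectable. The following is a minimal sketch under stated assumptions: the field names and the in-memory list are illustrative, `inputs` must be JSON-serializable, and a production system would also need durable storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone


def append_audit_record(log: list, actor: str, model_version: str,
                        inputs: dict, decision: str) -> dict:
    """Append a record whose hash covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else ""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "model_version": model_version,
        "inputs": inputs,
        "decision": decision,
        "prev_hash": prev_hash,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited or reordered record breaks the chain."""
    prev_hash = ""
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        body = {k: v for k, v in record.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if hashlib.sha256(payload).hexdigest() != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

Recording the model version alongside inputs and the decision ties each logged action back to the exact model that produced it, which supports the versioning and review goals mentioned above.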

Infrastructure considerations include orchestration layers, observability, and scalability. Agents benefit from modular microservices, event-driven architectures, and simulation environments to test behavior before deployment. Tools often thrive in well-defined APIs, SDKs, and plug-and-play components that accelerate integration with existing systems. The key is to align data quality, governance maturity, and security posture with the desired level of automation in your organization.

Finally, risk management should encompass drift detection, containment strategies, and resilience planning. Prepare for failure modes—what happens if an agent misinterprets signals or a tool produces biased outputs? Establish incident response playbooks and rollback procedures to minimize operational impact. When combined thoughtfully, AI agents and AI tools can complement each other, enabling safer experimentation and scalable automation.
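Drift detection can begin with a very simple statistical check before you invest in dedicated monitoring. The sketch below compares the mean of recent values of some monitored metric against a baseline, measured in standard errors. The function name, the choice of a mean-shift test, and the threshold of 3.0 are illustrative assumptions, not a recommended configuration.

```python
from statistics import mean, stdev


def drift_detected(baseline: list, recent: list, threshold: float = 3.0) -> bool:
    """Flag drift when the recent mean is far from the baseline mean.

    Distance is measured in standard errors derived from the baseline spread.
    """
    if len(baseline) < 2 or not recent:
        raise ValueError("need at least 2 baseline values and 1 recent value")
    spread = stdev(baseline)
    if spread == 0:
        # A constant baseline: any change in the mean counts as drift.
        return mean(recent) != mean(baseline)
    standard_error = spread / (len(recent) ** 0.5)
    z = abs(mean(recent) - mean(baseline)) / standard_error
    return z > threshold
```

A positive result would typically trigger the containment or rollback steps in your incident playbook rather than an automatic fix, since a mean shift alone does not say what went wrong.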

Evaluation framework: How to compare options

To compare AI agents and AI tools effectively, adopt a structured evaluation framework that emphasizes clarity, reproducibility, and safety. Start with defining success criteria: objective outcomes, such as time-to-delivery, error rates, and user satisfaction, along with governance metrics like auditability and compliance adherence. Next, quantify autonomy using a simple scoring rubric: low, medium, or high autonomy based on decision rights, control handoffs, and escalation triggers. Assess data readiness: data availability, quality, labeling effort, and feedback loops. Consider integration effort: API compatibility, required adapters, and deployment complexity. Examine risk exposure: potential failure modes, safety controls, and monitoring needs. Finally, pilot with a staged approach: begin with a tooling prototype to establish baselines, then incrementally add autonomy where governance and data maturity permit. Document results, iterate, and set go/no-go criteria for scaling. This practical framework helps you translate the abstract AI agent versus AI tool debate into concrete, measurable decisions. AI Tool Resources recommends explicit, auditable pilots to manage uncertainty and build confidence over time.
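The rubric above can be turned into a small scoring helper so that go/no-go decisions are reproducible. Everything numeric here is a placeholder assumption: the criterion weights, the 1 to 5 scale (where 5 is best), and the rule that each step up in autonomy raises the required score by 0.5.

```python
AUTONOMY_LEVELS = {"low": 1, "medium": 2, "high": 3}

# Hypothetical weights; they should sum to 1.0 and reflect your priorities.
WEIGHTS = {
    "outcomes": 0.3,
    "data_readiness": 0.25,
    "integration_effort": 0.2,
    "risk_exposure": 0.25,
}


def weighted_score(scores: dict) -> float:
    """Combine 1-5 criterion scores (5 is best) into one weighted number."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return round(sum(WEIGHTS[name] * scores[name] for name in WEIGHTS), 2)


def go_no_go(scores: dict, autonomy: str, base_threshold: float = 3.0) -> bool:
    """Require a higher score as the requested autonomy level rises."""
    required = base_threshold + 0.5 * (AUTONOMY_LEVELS[autonomy] - 1)
    return weighted_score(scores) >= required
```

With this shape, the same pilot results might clear the bar for a low-autonomy tool but fall short for a high-autonomy agent, which mirrors the staged approach described above.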

Industry scenarios: examples across domains

Finance and fintech: an AI agent could orchestrate risk checks across multiple data feeds, execute corrective actions, and trigger alerts, while AI tools may assist analysts with pattern recognition and report generation. In research and development, agents can orchestrate experimental workflows, optimize parameters, and adapt plans as results emerge. Education and academia benefit from AI tools that generate tutoring materials, summarize literature, and draft experiments; agents could gradually assume more guidance for automated experimentation. Healthcare use cases require strict governance: tools can support triage and documentation, while agents might manage clinical pathways only within tightly controlled environments and under supervision. In customer support, tools can classify queries and draft responses; agents can autonomously route issues to correct queues and trigger remediation workflows when policy allows. Across sectors, the key is matching complexity and risk with the right balance of autonomy and human oversight. The AI Tool Resources framework emphasizes staged progression—from tool-first pilots to agent-enabled scaling as governance and data maturity improve.

Common pitfalls and mitigation strategies

Common pitfalls include over-automation without sufficient monitoring, data drift that corrupts decision quality, and governance gaps that permit unsafe or biased behavior. Mitigation starts with clear autonomy boundaries, robust logging, and explicit escalation policies. Invest in test environments and simulation to detect failures before production, and maintain continuous model and policy reviews. Bias and fairness require ongoing auditing and representative data curation. Ensure that decision rationales are traceable and that there are human-in-the-loop hooks for sensitive decisions. Establish incident response playbooks and rollback mechanisms to handle unexpected agent actions or tool outputs. Regular governance reviews help keep alignment with organizational values and compliance requirements. By preempting these issues, teams can realize safer and more reliable AI-enabled workflows.

Practical steps to adopt: roadmap and checklist

1) Define autonomy boundaries: decide which tasks are safe to automate and which require human oversight.

2) Map data flows and quality: inventory sources, labels, access controls, and retention policies.

3) Start with AI tools: prototype capabilities, measure impact, and establish baselines.

4) Incrementally increase autonomy: introduce agent capabilities in controlled environments with monitoring and safety rails.

5) Build governance: establish ownership, risk tolerance, audit trails, and escalation processes.

6) Measure ROI and iterate: track efficiency gains, error rates, user satisfaction, and governance maturity to inform next steps.

Comparison

| Feature | AI Agent | AI Tool |
|---|---|---|
| Autonomy and decision-making frequency | High autonomy; selects actions without constant human input | Low autonomy; relies on human input for decisions |
| Context awareness | Dynamic environment handling; adapts strategies | Narrow context; follows predefined rules |
| Integration complexity | Requires orchestration across services; can be complex | Typically API-driven with modular integration |
| Data requirements | Extensive data feedback loops for learning | Smaller datasets; explicit task inputs suffice |
| Control and governance | Runtime monitoring, safety rails, risk controls | Auditability, permissions, and human oversight |
| ROI drivers | End-to-end automation; potential long-term savings | Productivity gains and faster task completion |
| Best for | Complex, multi-step, changing environments | Human-in-the-loop workflows and modular reuse |

Upsides

- Enables automation of complex, multi-step processes

- Can reduce human workload and speed up tasks

- Promotes consistent decision-making across scenarios

- Supports iterative learning and improvement

- Can scale operations beyond manual capacity

Weaknesses

- Higher implementation and governance overhead

- Increases complexity of monitoring and safety requirements

- Risk of misalignment or drift if not properly controlled

- Potential data privacy concerns with autonomous data access

AI agents win for autonomy in dynamic environments, while AI tools win for controlled, human-guided workflows

Choose an agent-driven approach when autonomy and adaptability are critical, and governance can scale. Opt for tools when predictable, auditable tasks with human oversight suffice. The AI Tool Resources team notes that most organizations benefit from a staged path—start with tools, validate value, then gradually introduce agents as maturity grows.

FAQ

What is the essential difference between an AI agent and an AI tool?

The essential difference lies in autonomy. An AI agent acts with minimal human input to achieve goals in dynamic environments, while an AI tool augments human work with AI-powered capabilities within defined workflows. Governance and risk considerations scale with autonomy, so plan accordingly.

An AI agent acts on its own to complete tasks, while an AI tool assists humans with AI-powered outputs.

Can an AI tool become an AI agent over time?

Yes, by increasing autonomy, adding decision-making capabilities, and implementing governance controls. A staged approach works best: start with a tool, measure outcomes, then progressively grant more autonomy as data quality and safety measures improve.

You can upgrade from a tool to an agent as maturity and governance improve.

What governance considerations apply to AI agents?

Governance should cover autonomy boundaries, risk controls, monitoring, explainability, and incident response. Establish clear escalation flows, audit trails, and regular reviews to ensure alignment with policy and user safety.

Governance is crucial to safely scale autonomous AI.

How do you measure ROI for AI agents vs AI tools?

Measure both efficiency gains (time saved, error reduction) and qualitative outcomes (user satisfaction, trust). For agents, include maintenance and safety costs; for tools, track integration speed and impact on human productivity.

ROI depends on both speed and risk—assess both.
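As a rough illustration of the quantitative side, ROI can be expressed as benefit minus total cost, divided by total cost. The function below is a simplified sketch: the parameter names are hypothetical, benefit is reduced to hours saved times an hourly cost, and maintenance and safety spend is assumed to be folded into `run_cost`.

```python
def simple_roi(hours_saved: float, hourly_cost: float,
               build_cost: float, run_cost: float) -> float:
    """Return ROI as a fraction: (benefit - total cost) / total cost."""
    total_cost = build_cost + run_cost
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    benefit = hours_saved * hourly_cost
    return (benefit - total_cost) / total_cost
```

For an agent, `run_cost` would usually be larger because it includes monitoring and safety work, so the same hours saved can yield a lower ROI than a tool until the automation scales.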

Are AI agents suitable for mission-critical tasks?

They can be, but only with stringent safety, governance, and verification processes. Start with non-critical domains, validate reliability, and implement containment and fallback strategies before approaching mission-critical use cases.

Only with strong safeguards and validation.

What are common pitfalls when adopting AI agents?

Over-automation, drift in decision logic, insufficient monitoring, and unclear ownership. Mitigate with incremental rollouts, robust logging, and explicit escalation paths.

Beware drift and missing accountability as you scale.

Key Takeaways

- Define autonomy boundaries early

- Prototype with AI tools before agents

- Prioritize governance and audits with any autonomous system

- Match use case complexity with the right level of automation

- Plan a staged rollout to manage risk and measure ROI