Tool AI vs AGI: A Practical, Analytical Comparison

Explore the differences between tool AI and AGI, focusing on scope, learning, deployment, risks, and use cases. This analysis helps developers, researchers, and students decide where to invest today.

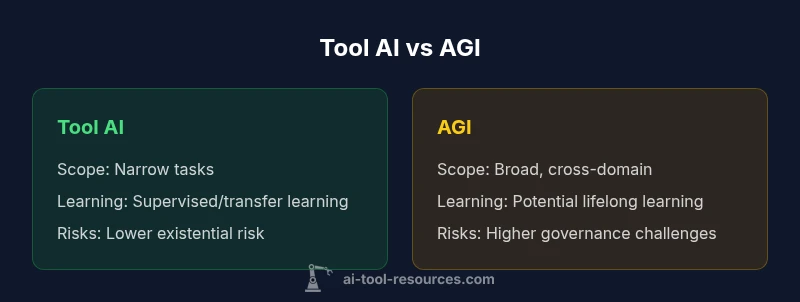

Tool AI vs AGI: in practice, tool AI refers to task-specific systems that excel at defined problems, while AGI (artificial general intelligence) denotes a hypothetical system with broad, human-like reasoning across domains. For now, tool AI delivers fast, reliable results in narrow tasks; AGI, if achieved, would adapt to new problems with minimal instruction. The distinction shapes how organizations plan projects and governance.

The Landscape: Clarifying Tool AI and AGI

Tool AI and AGI sit at two ends of a spectrum in artificial intelligence research and deployment. Tool AI, often called narrow AI, comprises systems designed to perform specific tasks with high proficiency. AGI, or artificial general intelligence, is a proposed level of machine intelligence that could understand, learn, and apply knowledge across domains as humans do. This article compares today’s tool AI deployments with the long-term prospects for AGI. According to AI Tool Resources, the majority of real-world AI initiatives sit in the tool AI camp, delivering measurable value across finance, healthcare, and engineering. The AI Tool Resources team found that organizations gain consistent performance by focusing on well-scoped problems rather than broad attempts at general intelligence. This guiding principle shapes both strategy and risk management when weighing tool AI against AGI.

Core Concepts: Narrow Intelligence vs General Intelligence

At a high level, narrow intelligence targets a single domain with predefined rules and data boundaries. Tool AI excels at pattern recognition, optimization, or automation within constrained contexts. AGI, by contrast, aspires to universal problem-solving capabilities: learning new tasks without task-specific programming, transferring knowledge across domains, and reasoning with minimal human guidance. The debate around AGI is not only technical but also ethical and social, touching governance, accountability, and long-term societal impact. From the vantage point of AI Tool Resources, most practical projects today remain squarely in the tool AI category, with measurable ROI from automation, data processing, and decision support. The distinction matters because it guides resourcing, risk planning, and evaluation metrics for both kinds of effort.

Capabilities and Limits: What Each Can Do Today

Tool AI shines in well-defined environments: ledger reconciliation, image classification in manufacturing, chat assistants for customer service, and code analysis tools for software development. These systems benefit from robust benchmarks, repeatable results, and auditable behavior. AGI, if realized, would be expected to demonstrate cross-domain learning, common-sense reasoning, and the ability to formulate novel solutions across unfamiliar tasks. In practical terms, most organizations do not require AGI to achieve value; they optimize existing processes with tool AI and incremental improvements. The AI Tool Resources analysis highlights that capability gaps, such as contextual understanding and robust transfer across domains, remain the biggest barriers to AGI in the near term.
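To make the idea of a task-bound benchmark concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than a reference to any real system: `route_ticket` stands in for a narrow tool, and `TICKET_BENCHMARK` is a made-up labeled set for that single task.

```python
from typing import Callable, Sequence


def benchmark_accuracy(
    predict: Callable[[str], str],
    examples: Sequence[tuple[str, str]],
) -> float:
    """Return the fraction of (input, expected_label) pairs predicted correctly."""
    if not examples:
        raise ValueError("benchmark needs at least one example")
    correct = sum(1 for text, expected in examples if predict(text) == expected)
    return correct / len(examples)


# Hypothetical narrow tool: a keyword-based support-ticket router.
def route_ticket(text: str) -> str:
    return "billing" if "invoice" in text.lower() else "general"


# Hypothetical labeled benchmark for this one task only.
TICKET_BENCHMARK = [
    ("Invoice total is wrong", "billing"),
    ("How do I reset my password?", "general"),
    ("Please resend my invoice", "billing"),
    ("I was charged twice", "billing"),  # no keyword match, so the tool misses it
]

score = benchmark_accuracy(route_ticket, TICKET_BENCHMARK)
print(f"accuracy: {score:.2f}")
```

The score is easy to compute and repeat because the task and its labels are fixed. The last example also shows the flip side: a narrow tool fails on inputs that fall outside the patterns it was built for.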

Learning, Adaptation, and Knowledge Transfer

Continual learning and transfer learning are central to differentiating tool AI from AGI. Tool AI typically relies on curated datasets, supervised learning, and task-specific fine-tuning. Adaptation usually involves retraining or domain-specific modules. AGI envisions lifelong learning, where a single system accumulates knowledge across tasks and retains it with minimal forgetting. While researchers explore meta-learning and curiosity-driven approaches, real-world deployments emphasize controlled learning to mitigate brittleness and data privacy concerns. AI Tool Resources notes that balancing data requirements and compute efficiency remains a practical constraint for any ambitious AI program.

Data, Compute, and Deployment Considerations

Tool AI deployments optimize data pipelines, model architectures, and hardware utilization for particular tasks. They benefit from well-defined data schemas, established monitoring, and clear rollback plans. AGI would demand unprecedented generalization capabilities, larger-scale simulation environments, and safety architectures to prevent unintended consequences. Compute demands for AGI are speculative but likely orders of magnitude higher than current tool AI workloads. In the real world, teams working with tool AI today must design scalable data governance, standardization, and auditing practices to maintain quality, compliance, and traceability, even while exploring longer-term AGI possibilities.
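One way to picture "established monitoring and a clear rollback plan" is a simple threshold check on live accuracy. The sketch below is only an assumption about how such a rule might look; `RollbackPolicy`, its fields, and the numbers used are hypothetical and not taken from any particular monitoring product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RollbackPolicy:
    baseline_accuracy: float  # accuracy measured before release
    max_drop: float           # largest tolerated drop below the baseline
    min_samples: int          # do not decide on too little evidence


def should_roll_back(policy: RollbackPolicy, correct: int, total: int) -> bool:
    """Return True when observed accuracy falls too far below the baseline."""
    if total < policy.min_samples:
        return False  # not enough labeled traffic yet to judge
    observed = correct / total
    return (policy.baseline_accuracy - observed) > policy.max_drop


# Hypothetical policy: roll back if accuracy drops more than 5 points below 95%.
policy = RollbackPolicy(baseline_accuracy=0.95, max_drop=0.05, min_samples=100)

print(should_roll_back(policy, correct=170, total=200))  # 85% observed
print(should_roll_back(policy, correct=190, total=200))  # 95% observed
```

A rule like this works for tool AI because the task has a single, agreed success metric. It is much less clear what the equivalent check would be for a system meant to operate across arbitrary domains.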

Safety, Governance, and Control Mechanisms

Safety considerations for tool AI are anchored in explainability, bias mitigation, and robust testing. Governance structures must address accountability in automated decisions and guardrails to prevent harmful actions. AGI raises deeper concerns: alignment with human values, controllability, long-range risk assessment, and international coordination. The industry consensus, echoed by AI Tool Resources, is to advance responsibly with incremental progress, clear oversight, and risk-based prioritization. Even as researchers explore AGI, organizations benefit from strong AI governance to reduce exposure during experimentation and deployment.

Real-World Use Cases: Tool AI in Practice

Across industries, tool AI delivers tangible value with predictable performance. In finance, it automates risk assessments and fraud detection; in manufacturing, it optimizes production lines; in software development, it assists with code reviews and automated testing. In education and research, tool AI assists with data analysis and literature review. The more constrained the task, the more reliable the outcome. For those weighing tool AI against AGI strategies, the practical emphasis remains on solving concrete problems quickly, safely, and transparently, while monitoring broader AGI advances for potential long-term opportunities.

Economic and Strategic Implications

Investing in tool AI often yields faster time-to-value and clearer return-on-investment than speculative AGI programs. The cost-benefit calculus favors well-scoped automation with auditable safety controls and straightforward governance. AGI ambitions carry high uncertainty, substantial risk, and potentially dramatic shifts in labor markets and regulatory landscapes. From a strategy perspective, most organizations should consider a staged approach: deploy robust tool AI solutions now, invest in platform-level capabilities that enable cross-domain reuse, and maintain a cautious horizon for AGI research and governance evolution.

The Road to AGI: Feasibility, Timelines, and Research Agendas

Experts debate timelines for AGI, with no consensus on when, or if, broad, human-like intelligence will emerge. The challenges span cognitive science, machine learning, and safety engineering. The prevailing position is that progress toward AGI will be incremental, integrating advances in perception, reasoning, and planning. In practical terms, this means that while tool AI and AGI efforts proceed in parallel, resource allocation should favor near-term gains and risk management. AI Tool Resources suggests organizations map core capabilities today and keep a watchful but critical eye on AGI developments while avoiding over-commitment to speculative outcomes.

Decision Framework: When to Use Tool AI vs AGI

When choosing between tool AI and AGI-oriented research, start with business objectives, risk tolerance, and regulatory constraints. Tool AI is typically the best choice for well-defined tasks with measurable success criteria, fast deployment, and clear explainability. AGI belongs on a long-term roadmap, reserved for research programs with strong governance, safety infrastructures, and cross-domain collaboration. The pragmatic takeaway is to separate near-term deployments from long-horizon ambitions, ensuring that day-to-day operations remain stable while monitoring breakthroughs and preparing for responsible scaling if AGI-like capabilities become feasible.
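The criteria above can be written down as a small rubric. The following Python sketch is one possible encoding under stated assumptions: the `ProjectProfile` fields, the track names, and especially the intermediate "rescope" outcome are illustrative choices, not part of the framework described in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProjectProfile:
    well_defined_task: bool
    measurable_success_criteria: bool
    needs_fast_deployment: bool
    needs_explainability: bool


def recommend_track(profile: ProjectProfile) -> str:
    """Map a project profile to a planning track (hypothetical labels)."""
    if profile.well_defined_task and profile.measurable_success_criteria:
        return "tool-ai-deployment"
    if profile.needs_fast_deployment or profile.needs_explainability:
        # Assumption: near-term constraints cannot be met by open-ended
        # research, so the project should be narrowed before it proceeds.
        return "rescope-to-narrow-task"
    return "long-horizon-research"


invoice_matching = ProjectProfile(True, True, True, True)
open_ended_assistant = ProjectProfile(False, False, False, False)

print(recommend_track(invoice_matching))
print(recommend_track(open_ended_assistant))
```

Writing the rubric as code forces each criterion to be answered explicitly, which keeps near-term deployments and long-horizon ambitions on separate tracks.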

Summary: A Practical View of the Two Paths

In the near term, tool AI remains the practical engine for automation, optimization, and decision support. AGI remains a strategic horizon that requires governance, safety, and international cooperation. By viewing project planning through the lens of tool AI vs AGI, teams can deliver reliable results today while staying informed about breakthroughs that could redefine the landscape tomorrow. The AI Tool Resources framework emphasizes disciplined experimentation, transparent metrics, and incremental progress as the safest path forward.

Comparison

| Feature | Tool AI | AGI |
|---|---|---|
| Definition | Narrow, task-specific AI with predefined objectives | Hypothetical broad-domain intelligence akin to human cognition |
| Scope | Limited to the designed domain and data | Intended to operate across multiple domains without task-specific programming |
| Learning | Supervised/unsupervised learning; domain-bound fine-tuning | Theoretical lifelong learning and autonomous knowledge transfer |
| Adaptability | High within a fixed context; limited cross-domain transfer | Broad adaptability across unfamiliar tasks (theoretical at present) |
| Performance Metrics | Benchmarks tied to specific tasks; easy to quantify | No standard benchmarks; performance is undefined until realized |
| Data Requirements | Curated, labeled data tailored to the task | Massive, diverse data needs; strong generalization promises |
| Safety & Control | Well-defined safety limits; auditable behavior | Complex safety architectures; greater governance challenges |
| Cost & Deployment | Lower upfront cost; faster time-to-value | Uncertain cost and scale; speculative deployment timelines |
| Best For | Repeatable, domain-specific automation | Exploratory research with long-term strategic goals |
| Limitations | Brittleness outside scope; requires frequent retraining | Speculative capabilities; significant safety and policy questions |

Upsides

- Predictable behavior and easier auditing

- Faster deployment for defined tasks

- Lower risk of catastrophic failure due to limited scope

- Clear accountability and regulatory compliance for specific apps

Weaknesses

- Limited to narrow domains; cannot generalize across tasks

- Requires task-specific data curation and tuning

- Cannot exhibit true understanding or common-sense reasoning

- AGI ambitions impose governance and safety challenges that affect strategy

Tool AI remains the practical default; AGI is a long-term horizon

Prioritize tool AI for near-term value with strong governance. Monitor AGI progress to align strategy, but avoid over-committing resources to speculative timelines.

FAQ

What is the essential difference between tool AI and AGI?

Tool AI is narrow and task-specific, delivering reliable results within a defined scope. AGI is a theoretical, broad-capability system that could handle many domains with minimal guidance. The choice between them shapes risk, governance, and the pace of deployment.

Tool AI is narrow and task-focused, while AGI would be broad and general. For now, most deployments are tool AI with clear boundaries. The difference matters for risk and governance.

Are there real-world examples of AGI today?

There are no confirmed, fully realized AGI systems in production today. Researchers treat AGI as a long-term objective, while many organizations gain value from tool AI in specialized domains. The gap lies in broad generalization and robust safety guarantees.

There aren’t real AGI systems in production yet; most work today is in tool AI across specific tasks.

What are the main risks of pursuing AGI?

AGI research raises governance, alignment, and safety concerns. Potential misalignment with human values, unexpected behavior, and global coordination failures are key risk areas. Careful policy, oversight, and incremental progress are recommended when exploring an AGI-focused agenda.

AGI carries big safety and governance risks; alignment and control matter most.

When should an organization use tool AI instead of AGI?

Use tool AI for well-defined problems with clear success metrics, fast deployment, and auditable outcomes. Reserve AGI exploration for long-term research programs with strong governance, safety standards, and cross-domain collaboration.

Go with tool AI for now, and keep AGI work on a long-term research track.

What challenges exist in evaluating progress toward AGI?

Evaluating AGI is difficult due to the lack of standard benchmarks and the risk of overestimating capabilities. Progress is often incremental, domain-specific, and entangled with safety experiments, making cross-domain assessment and governance integration essential.

There aren’t universal AGI tests yet, so progress is hard to measure across domains.

Key Takeaways

- Define clear business goals before choosing AI type

- Prefer tool AI for reliable, auditable outcomes now

- Plan long-term AGI strategies with strict safety controls

- Invest in cross-domain platforms to enable future reuse

- Balance risk, governance, and resource allocation