How Long Does It Take to Create an AI Tool? Timelines, Phases, and Best Practices

Explore realistic timelines for building an AI tool, from ideation to deployment. Learn phase-by-phase durations, data readiness, and risk considerations to plan projects confidently.

How long does it take to create an AI tool? Timelines range from weeks for a small prototype to months for a production-grade solution. A typical bootstrap prototype takes about 6-12 weeks; plan on 3-6 months for robust data pipelines, safety checks, and deployment. In practice, complex integrations can push the total toward 9-12 months, and the exact figure varies by domain.

Phase 1: Framing the Problem and Defining Success Metrics

Timelines begin with a crystal-clear problem statement and measurable goals. Stakeholders must agree on what a successful AI tool looks like, including user experience, accuracy, latency, reliability, and operational cost. Early scoping sessions should identify key success metrics (e.g., precision, recall, false positive rate), target users, and the critical business questions the tool will answer. Without explicit success criteria, teams risk scope creep and misaligned expectations. A practical approach is to draft a lightweight impact map and a one-page requirements document that teams can revisit after each sprint.

- Define primary use cases and success metrics

- Map user journeys and expected outcomes

- Establish constraints (privacy, latency, compute costs)

- Set a realistic, reviewable milestone plan

This phase typically lasts 2-4 weeks for smaller projects and can extend in larger organizations where governance and stakeholder alignment are more involved.
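The success metrics named above (precision, recall, false positive rate) can be pinned down in a few lines so that everyone agrees on the definitions before any modeling starts. The following is a minimal sketch: the `ConfusionCounts` type, the `meets_targets` helper, and the threshold values are illustrative assumptions, not part of any particular library or project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from comparing predictions against ground-truth labels."""
    tp: int  # true positives
    fp: int  # false positives
    tn: int  # true negatives
    fn: int  # false negatives


def _ratio(numerator: int, denominator: int) -> float:
    # Return 0.0 instead of raising when a denominator is zero.
    return numerator / denominator if denominator else 0.0


def precision(c: ConfusionCounts) -> float:
    return _ratio(c.tp, c.tp + c.fp)


def recall(c: ConfusionCounts) -> float:
    return _ratio(c.tp, c.tp + c.fn)


def false_positive_rate(c: ConfusionCounts) -> float:
    return _ratio(c.fp, c.fp + c.tn)


def meets_targets(c: ConfusionCounts, min_precision: float,
                  min_recall: float, max_fpr: float) -> bool:
    """Check measured metrics against the agreed success criteria."""
    return (precision(c) >= min_precision
            and recall(c) >= min_recall
            and false_positive_rate(c) <= max_fpr)


if __name__ == "__main__":
    # Hypothetical evaluation counts and targets, for illustration only.
    counts = ConfusionCounts(tp=80, fp=20, tn=880, fn=20)
    print(meets_targets(counts, min_precision=0.75,
                        min_recall=0.75, max_fpr=0.05))
```

Writing the targets as an executable check gives each sprint review a concrete pass/fail question instead of a debate about what "accurate enough" means.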

Phase 2: Data Strategy and Governance

Data is the lifeblood of any AI tool, and its quality directly influences timelines. During this phase, teams inventory data sources, assess data quality, plan labeling and annotation workflows, and establish governance policies for privacy and security. Data readiness often becomes the primary bottleneck; delays in obtaining representative, labeled data can push the schedule by weeks or months. Teams should define data-versioning practices, retention rules, and access controls early to avoid late-stage bottlenecks.

- Audit available datasets and identify gaps

- Design labeling and annotation pipelines

- Plan data privacy, consent, and compliance measures

- Establish data versioning and lineage tracking

Typical duration: 4-12 weeks, depending on data complexity and governance requirements.
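One lightweight way to start on data versioning is to record a content fingerprint for each dataset snapshot, so that every experiment can state exactly which data it used. The sketch below uses SHA-256 from Python's standard library; the function names and the directory layout are assumptions for illustration, and dedicated data-versioning tools offer far more than this.

```python
import hashlib
from pathlib import Path
from typing import Iterable, Tuple


def fingerprint_records(records: Iterable[Tuple[str, bytes]]) -> str:
    """Hash (name, content) pairs in sorted order so input order is irrelevant."""
    digest = hashlib.sha256()
    for name, content in sorted(records):
        digest.update(name.encode("utf-8"))
        digest.update(b"\0")  # separator between the name and the content hash
        # Hash content separately so record boundaries stay unambiguous.
        digest.update(hashlib.sha256(content).digest())
    return digest.hexdigest()


def dataset_fingerprint(root: Path) -> str:
    """Fingerprint every file under `root` (reads each file fully into memory)."""
    files = (p for p in root.rglob("*") if p.is_file())
    return fingerprint_records(
        (p.relative_to(root).as_posix(), p.read_bytes()) for p in files
    )


if __name__ == "__main__":
    # Hypothetical in-memory snapshot; a real project would point
    # dataset_fingerprint() at its own data directory.
    snapshot = [("labels.csv", b"id,label\n1,spam\n"),
                ("train.csv", b"id,text\n1,hello\n")]
    print(fingerprint_records(snapshot))
```

Storing the fingerprint next to each trained model is a simple form of lineage tracking: if two runs report different fingerprints, the data changed between them.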

Phase 3: Model Exploration and Proof of Concept

With data in hand, teams explore candidate models and establish baselines. This phase emphasizes rapid experimentation: selecting architectures, evaluating baselines, and demonstrating a working concept that meets minimum viable requirements. The goal is to prove that the approach can achieve the target metrics within the given constraints before scaling. Decisions made here influence later infrastructure and engineering work, so thorough documentation and transparent evaluation are essential.

- Run baseline experiments and compare models

- Define evaluation criteria and dashboards

- Validate alignment with user needs and safety constraints

- Decide on a viable MVP feature set

Expect this phase to take 6-20 weeks, influenced by data complexity and the number of explored architectures.

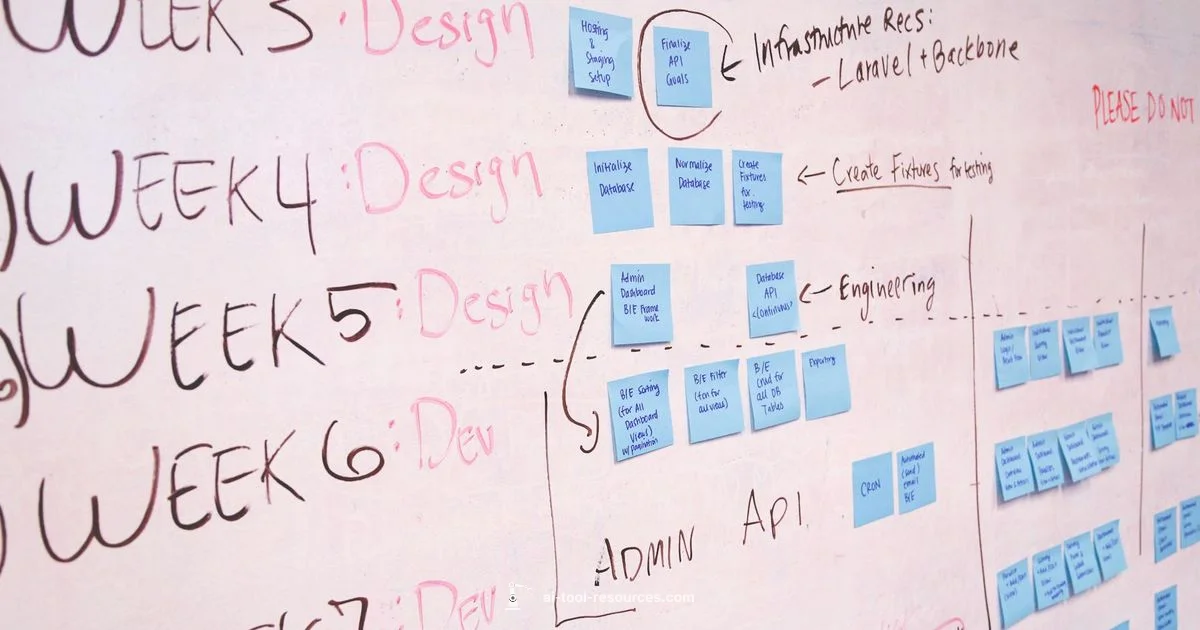

Phase 4: System Architecture and Infrastructure

A robust architecture framework ensures the AI tool can scale from MVP to production. This includes choosing data pipelines, model hosting, API design, monitoring, logging, and security controls. Decisions about on-premises versus cloud, latency targets, batch vs. streaming data, and retraining schedules set the pace for later development. Establishing reusable components, CI/CD for ML, and a modular deployment strategy reduces future rework and accelerates iteration cycles.

- Define data pipelines and storage strategy

- Choose model hosting and API patterns

- Plan MLOps practices (retraining, monitoring, rollback)

- Establish security, access control, and audit trails

Typical duration: 6-12 weeks for MVP-level infrastructure, longer for complex, compliant environments.

Phase 5: Building the MVP

The MVP focuses on delivering the core value with a minimal, testable feature set. This phase benefits from tight iteration loops, automated testing, and user feedback integration. The MVP should be designed for easy updates, with a plan for incremental improvements rather than a perfect initial product. Clear acceptance criteria help teams know when to pivot or persevere, reducing time wasted on features that do not add measurable value.

- Implement core features and a basic UI/API

- Establish end-to-end testing and monitoring

- Collect early user feedback for rapid iteration

- Prepare a plan for scale and data refresh

Typical duration: 4-12 weeks, depending on feature scope and integration complexity.

Phase 6: Testing, Safety, and Compliance

This phase emphasizes rigorous evaluation and risk management. Beyond traditional accuracy metrics, teams assess robustness, fairness, interpretability, and security. Safety checks may require additional audits, red-teaming, or external reviews. Early and ongoing governance reduces late-stage surprises and helps preserve timelines when regulatory requirements emerge. This stage also covers ethical considerations and user privacy protections.

- Define safety and fairness criteria

- Conduct adversarial testing and red-teaming

- Verify privacy and compliance requirements

- Document risk mitigation and remediation plans

Duration varies but often adds 4-10 weeks, especially in regulated domains or where external validation is needed.

Phase 7: Deployment and Monitoring

Deployment marks a transition from a research prototype to an operational tool. Building a reliable deployment pipeline (CI/CD for ML), setting up monitoring dashboards, and establishing alerting for drift or failures are critical. This phase also includes user onboarding, rollout strategies, and a plan for parallel running with legacy systems if needed. Early monitoring helps catch issues before they affect users and supports faster iteration.

- Create deployment pipelines and rollback plans

- Set up monitoring for performance, latency, and data drift

- Implement user onboarding and documentation

- Coordinate with IT/ops for production readiness

Expected duration: 4-12 weeks for MVP rollouts; longer for enterprise integrations and comprehensive monitoring setups.
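Data drift monitoring can begin with a single statistic compared between training data and live traffic. The sketch below uses the Population Stability Index (PSI), which is one common choice and not something this article prescribes; the bin count, the `eps` floor, and the alert threshold are assumptions that each team should tune.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline and a live sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the baseline range; fall back to width 1.0
    # when every baseline value is identical.
    width = (hi - lo) / bins or 1.0

    def fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor each fraction at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    # Hypothetical feature values, for illustration only.
    baseline = [i / 100 for i in range(100)]
    live = [0.5 + i / 200 for i in range(100)]
    ALERT_THRESHOLD = 0.25  # assumed cutoff; a commonly cited rule of thumb
    score = psi(baseline, live)
    print("drift alert" if score > ALERT_THRESHOLD else "stable")
```

A scheduled job that computes this per feature and feeds the dashboard is often enough for an MVP rollout; richer statistical tests can follow once the alerting path is proven.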

Phase 8: Iteration, Scale, and Support

After deployment, the emphasis shifts to iteration and scale. This includes refining models with new data, expanding features, enhancing reliability, and supporting broader user adoption. Scaling often requires additional compute, more robust monitoring, and governance expansions. A sustainable cadence of retraining, feature reviews, and user feedback loops helps ensure the tool remains valuable and compliant over time.

- Plan for incremental feature improvements

- Scale infrastructure and team capabilities

- Maintain governance and data stewardship

- Establish ongoing support and training programs

Typical duration varies widely, but expect ongoing cycles that can last years as the tool matures.

Practical Scenarios and Timeline Scaffolds

To make the timelines tangible, consider three common scenarios:

- Small team, limited data: 6-12 weeks for prototype, 2-4 months for MVP, with 6-9 months total for deployment and initial monitoring.

- Mid-size team, moderate data: 12-20 weeks for MVP, 4-6 months for scale, and 9-12 months to enterprise readiness.

- Enterprise, regulated domain: 6-9 months for MVP, 12-18 months for full deployment, with ongoing governance and retraining cycles thereafter.

These scaffolds are rough guides and should be adjusted for domain-specific risks, data availability, and regulatory constraints.

Typical AI tool development lifecycle (AI Tool Resources Analysis, 2026)

| Phase | Estimated Duration | Key Deliverables |
|---|---|---|
| Scoping & Planning | 2-4 weeks | Problem statement; success metrics; project plan |
| Data Strategy & Prep | 4-12 weeks | Data catalog; governance; labeling plan |
| Model Development & Evaluation | 6-20 weeks | Baseline models; evaluation results; UI mockups |
| Deployment & Monitoring | 4-12 weeks | Deployment pipeline; dashboards; alerting |
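The per-phase ranges in the table above can be added up to get a rough end-to-end estimate. The sketch below assumes the four phases run strictly one after another, which is a simplification because real projects usually overlap them; the names `PHASES`, `total_range`, and `weeks_to_months` are illustrative.

```python
from typing import Dict, Tuple

# (minimum weeks, maximum weeks) per phase, copied from the table above.
PHASES: Dict[str, Tuple[int, int]] = {
    "Scoping & Planning": (2, 4),
    "Data Strategy & Prep": (4, 12),
    "Model Development & Evaluation": (6, 20),
    "Deployment & Monitoring": (4, 12),
}


def total_range(phases: Dict[str, Tuple[int, int]]) -> Tuple[int, int]:
    """Sum the optimistic and pessimistic durations across all phases."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return low, high


def weeks_to_months(weeks: float) -> float:
    """Convert weeks to months using 52 weeks per 12 months."""
    return weeks / (52 / 12)


if __name__ == "__main__":
    low, high = total_range(PHASES)
    print(f"{low}-{high} weeks "
          f"(about {weeks_to_months(low):.1f}-{weeks_to_months(high):.1f} months)")
```

For the table's figures the sequential total is 16 to 48 weeks. Overlapping phases shortens that, while governance reviews and data delays tend to push toward the upper end.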

FAQ

What is the typical timeline for a small AI tool prototype?

For small-scoped prototypes, most teams expect 6-12 weeks to demonstrate core viability, with a clear path to MVP. The exact duration depends on data access, tooling maturity, and stakeholder alignment.

Most teams prototype in about 6 to 12 weeks, depending on data access and tooling maturity.

How does data readiness affect project duration?

Data readiness often dominates timing. Delays in data collection, labeling, or quality assurance can add weeks or months, regardless of modeling prowess.

Data readiness is usually the biggest driver of timeline delays.

What factors extend timelines beyond development?

Deployment, integration with existing systems, and regulatory compliance can add months. Building extra time for governance reviews into the plan helps avoid late surprises.

Deployment and compliance can stretch timelines; plan for governance early.

Do templates and off-the-shelf components help shorten timelines?

Yes. Reusable components and MLOps templates can save weeks, but they still require domain adaptation, testing, and validation.

Templates can speed things up, but you still need to test and customize.

How should maintenance affect long-term planning?

Ongoing maintenance—retraining, monitoring, and updates—requires sustained time and resources beyond initial deployment.

Maintenance is an ongoing effort that lives beyond the initial launch.

Is 12 months always enough for enterprise AI tools?

Not always. Large organizations may need 12-18 months or more, depending on governance, data sophistication, and legacy integrations.

Large enterprises often require longer timelines due to governance and legacy systems.

“Building an AI tool is a marathon of data, safety, and iteration. Realistic timelines come from disciplined scoping, repeatable processes, and early safety checks.”

Key Takeaways

- Define clear, measurable success metrics early

- Data readiness is a major driver of timelines

- Prototype fast; validate with real users

- Plan for governance, safety, and compliance from day one

- Build modular, scalable architecture for faster iteration

- Expect ongoing iteration beyond initial deployment