Difference Between AI Tool and Agent: A Practical Comparison

Explore the difference between AI tools and AI agents with a practical, analyst-style comparison. Learn how scope, autonomy, governance, and cost impact decisions for development teams.

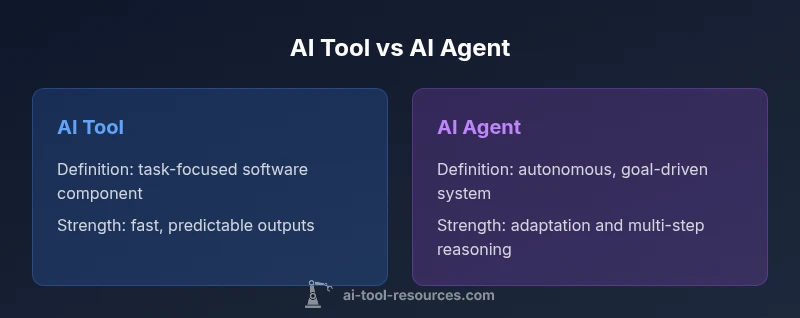

At a glance, AI tools are focused components that perform predefined tasks, while AI agents are autonomous, context-aware systems. For developers, this quick comparison clarifies when to deploy each, how governance changes, and what to expect in terms of deployment speed and risk management.

The difference between an AI tool and an AI agent: an essential framing

Understanding the difference between an AI tool and an AI agent is foundational for developers and researchers. The terms are often used interchangeably in marketing, but they describe distinct capabilities, lifecycles, and governance needs. According to AI Tool Resources, choosing the wrong approach can lead to misaligned expectations and wasted resources. This article maps the space, clarifies definitions, and provides concrete decision criteria. At a high level, an AI tool is a focused software component that executes a defined task or set of tasks. An AI agent, by contrast, behaves more like an actor in an environment: it can sense its state, plan actions, and adjust to changing conditions with a degree of autonomy. For teams, the distinction translates into practical outcomes: faster delivery of narrowly scoped features with an AI tool, or iterative, context-aware automation with an AI agent. Throughout this guide we’ll compare capabilities, trade-offs, and governance considerations so you can pick the right approach for your project.

What is an AI tool? Definition, scope, and typical use cases

An AI tool is a software component designed to perform a specific task or set of tasks with machine intelligence. Tools are typically stateless, operate on defined inputs, and produce either deterministic outputs or probabilistic results from trained models. They excel at repeatability and speed when the task is narrow and well-scoped. Common examples include text completion modules, code linters, or data transformation pipelines that follow preset rules. For development teams, AI tools provide low-friction integration points into existing workflows. Because they are modular and composable, teams can assemble larger systems by chaining multiple tools into pipelines. The main risks with AI tools involve overfitting to narrow prompts, data leakage through APIs, and insufficient monitoring of results in production. When used correctly, tools can accelerate delivery, improve consistency, and reduce manual effort. The tool-versus-agent distinction is a helpful lens here: tools do not independently pursue goals; they execute given commands or prompts.
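As a minimal sketch of the "stateless, defined inputs, defined outputs" idea, the Python below models a tool as a pure function with an explicit input and output contract. The names `SummarizeRequest`, `SummarizeResult`, and `summarize_tool` are hypothetical, and the word truncation is only a placeholder for whatever model call a real tool would make.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SummarizeRequest:
    """Defined input contract for the hypothetical tool."""
    text: str
    max_words: int = 10


@dataclass(frozen=True)
class SummarizeResult:
    """Defined output contract for the hypothetical tool."""
    summary: str
    truncated: bool


def summarize_tool(request: SummarizeRequest) -> SummarizeResult:
    """Stateless tool: the result depends only on the request.

    The word truncation below is a placeholder for a model call.
    """
    words = request.text.split()
    kept = words[: request.max_words]
    return SummarizeResult(summary=" ".join(kept), truncated=len(words) > len(kept))
```

Because the function keeps no state between calls, the same request always yields the same result, which is what makes this kind of component easy to test and to chain into a pipeline.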

What is an AI agent? Definition, scope, and typical use cases

An AI agent is an autonomous entity capable of perceiving its environment, formulating goals, planning actions, and executing tasks that move toward those goals. Agents can maintain state over time, adjust behavior based on feedback, and operate in uncertain, dynamic settings. They are commonly used in automation that involves multi-step reasoning, planning under constraints, or interacting with other systems or humans. In practice, agents enable workflows like proactive monitoring, autonomous incident response, or goal-directed data collection. However, autonomy adds complexity: agents require governance, safety checks, and robust auditing to ensure alignment with user intent and policy. When applied to a broad domain, an agent can orchestrate a suite of tools, coordinate data sources, and adapt to changing requirements. The core idea behind the distinction is that agents bring intent and adaptability, while tools provide reliability and structure.
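The perceive, plan, act cycle described above can be illustrated with a toy example. This is a sketch under simplifying assumptions, not a production agent: `ThermostatAgent` is a hypothetical class, the "environment" is a single simulated temperature value, and the planner is a fixed rule rather than a model.

```python
from dataclasses import dataclass, field


@dataclass
class ThermostatAgent:
    """Toy agent: drives a simulated temperature toward a goal."""
    target: float
    max_steps: int = 20  # guardrail: hard cap on autonomous steps
    history: list = field(default_factory=list)  # state kept across steps

    def plan(self, observed: float) -> float:
        """Choose an action (a temperature change) from the current observation."""
        error = self.target - observed
        # Clamp the step size so a single action stays bounded.
        return max(-1.0, min(1.0, error))

    def run(self, temperature: float) -> float:
        """Perceive, plan, act, and record until the goal is met or steps run out."""
        for _ in range(self.max_steps):
            if abs(self.target - temperature) < 1e-9:
                break  # goal reached
            action = self.plan(temperature)
            temperature += action  # acting on the simulated environment
            self.history.append((action, temperature))
        return temperature
```

Unlike a stateless tool, the agent decides for itself how many steps to take, and its `history` gives an auditor a record of each action. The `max_steps` cap is one simple form of the safety check the text calls for.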

The key differentiators: autonomy, control, and decision boundaries

The most salient differences between AI tools and AI agents lie in autonomy, control, and scope. Autonomy refers to the degree to which the system can act without explicit prompts; tools typically require direct commands, while agents generate plans and execute actions with feedback loops. Control and orchestration describe who sets the goals and how those goals are refined; agents often operate within higher-level goals set by humans or other systems and may negotiate sequences of tasks autonomously. Decision boundaries define how outcomes are determined and what happens when the environment changes; tools rely on deterministic rules or single-shot inference, whereas agents employ planning, belief updates, and risk assessments to steer decisions. When you map capabilities along these axes, you’ll see clear trade-offs: tools win on predictability, speed, and safety; agents win on adaptability, long-horizon problem solving, and resilience to changing contexts. For teams, this means choosing the right orchestration approach and ensuring governance that matches the desired risk profile.

When to choose an AI tool vs an AI agent

Choosing between an AI tool and an AI agent depends on task complexity, risk tolerance, and integration needs. If your project has a narrow scope with well-defined inputs and outputs, an AI tool is typically the faster, safer option. Tools enable rapid prototyping, easier debugging, and clearer ownership because each component has a single purpose. If your workflow involves uncertain environments, evolving requirements, or multi-step reasoning, an AI agent can adapt over time, handle diverse data sources, and coordinate other components. Agents are especially valuable when monitoring, incident response, or autonomous decision-making is required. In practice, many teams adopt a hybrid approach: agents orchestrate a set of tools, monitor outcomes, and adjust prompts or policies as needed. The optimal choice often hinges on governance: define decision boundaries, logging standards, and rollback strategies early in the design process.

Technical architecture: interfaces, APIs, and data flows

From an architectural perspective, AI tools and AI agents differ in how they interact with data and other systems. Tools are typically invoked via straightforward APIs or library calls that accept inputs and return results; they favor stateless design and clear data contracts. Agents, in contrast, require a planning loop: sensing the environment, selecting goals, generating action plans, and executing steps while incorporating feedback. This loop may involve memory modules, state persistence, and a policy engine that governs choices under uncertainty. Data governance is essential for both: define data provenance, access controls, and privacy safeguards. Interfacing layers matter too: agents might need message buses, agent cores, or orchestration platforms to coordinate actions with multiple tools. For practical systems, expect a hybrid stack where an agent orchestrates several tools, each performing specialized tasks under guardrails and telemetry.
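One way to picture the hybrid stack is an orchestration function that executes a plan as an ordered list of tool names, checks each against an allowlist, and records telemetry per step. The sketch below assumes the plan has already been produced (by a model or a human); `TOOLS`, `ALLOWED_TOOLS`, and `run_plan` are hypothetical names, and the two tools are trivial stand-ins.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tool registry: each tool is a plain, stateless callable.
TOOLS: Dict[str, Callable[[str], str]] = {
    "normalize": lambda text: " ".join(text.split()).lower(),
    "count_words": lambda text: str(len(text.split())),
}

# Guardrail: only tools on this explicit allowlist may be invoked.
ALLOWED_TOOLS = {"normalize", "count_words"}


def run_plan(plan: List[str], payload: str) -> Tuple[str, List[dict]]:
    """Execute a plan (ordered tool names), recording telemetry per step.

    Execution stops at the first tool that is not allowed or not registered,
    and the payload produced so far is returned with the telemetry.
    """
    telemetry: List[dict] = []
    for tool_name in plan:
        if tool_name not in ALLOWED_TOOLS or tool_name not in TOOLS:
            telemetry.append({"tool": tool_name, "status": "blocked"})
            break
        payload = TOOLS[tool_name](payload)
        telemetry.append({"tool": tool_name, "status": "ok", "output": payload})
    return payload, telemetry
```

Keeping the allowlist separate from the registry means a tool can be installed without being available to the agent, which makes the guardrail a policy decision rather than a deployment detail.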

Performance, reliability, and governance considerations

Performance metrics differ: tools are assessed on latency, accuracy, and repeatability; agents on adaptability, resilience, and goal-alignment. Reliability requires robust monitoring, auditing, and rollback capabilities; agents introduce additional risk surfaces due to autonomous decision-making. Governance frameworks should cover alignment with policies, safety constraints, and traceability of decisions. Observability is critical: instrument your systems to capture decision paths, prompts, tool invocations, and outcomes. Security must address data exposure through tool APIs, prompt leakage, and potential adversarial manipulation. Finally, consider the organizational impact: staff training, change management, and how governance will enforce boundaries without stifling innovation. The overarching takeaway is that both tool and agent layers must be designed with clear ownership, testing regimes, and clear escalation paths.
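To make the observability point concrete, here is a minimal sketch of a decorator that records every tool invocation with its inputs, outcome, and duration. `AUDIT_LOG` is an in-memory list standing in for a real log sink, and `audited` and `divide` are hypothetical names used only for illustration.

```python
import functools
import time
from typing import Any, Callable, List

AUDIT_LOG: List[dict] = []  # in-memory stand-in for a real log sink


def audited(tool: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap a tool so each invocation is recorded with inputs, outcome, and duration."""

    @functools.wraps(tool)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        record = {"tool": tool.__name__, "args": args, "kwargs": kwargs}
        try:
            result = tool(*args, **kwargs)
            record.update(status="ok", result=result)
            return result
        except Exception as exc:
            record.update(status="error", error=repr(exc))
            raise  # the caller still sees the original failure
        finally:
            # Runs on both success and failure, so every call is logged.
            record["seconds"] = time.perf_counter() - start
            AUDIT_LOG.append(record)

    return wrapper


@audited
def divide(a: float, b: float) -> float:
    """Hypothetical tool used only to exercise the wrapper."""
    return a / b
```

Applying the same wrapper to every tool an agent can call gives a uniform trail of what was invoked, with which inputs, and whether it failed.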

Economic considerations: cost, ROI, and total cost of ownership

Costs hinge on usage patterns, data volumes, and integration complexity. AI tool costs can be predictable when you pay per call or per seat, but scale and data transfer may inflate budgets. AI agents may require more upfront design, governance investments, and ongoing maintenance to sustain autonomy without drift. From a financial perspective, total cost of ownership depends on maintenance, monitoring, and risk management, not just upfront licensing. AI Tool Resources analysis emphasizes evaluating both direct costs and opportunity costs, including the value of faster delivery, reduced manual effort, and the cost of potential misalignments. When budgeting, plan for incident response, logging, and security audits as non-negligible line items. In practice, teams should outline a cost model that includes development, deployment, governance, and long-term support for the chosen approach. This framing helps prevent surprises and improves alignment with business goals.
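The cost model described above can be sketched as a small calculation. The field names and the split between one-time and recurring costs are assumptions made for illustration; every figure is an input you supply, not data from this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostModel:
    """Cost buckets named in the text; all figures are caller-supplied inputs."""
    development: float          # one-time
    deployment: float           # one-time
    governance_per_year: float  # recurring: audits, reviews, policy upkeep
    support_per_year: float     # recurring: maintenance and monitoring
    calls_per_year: int         # recurring usage volume
    cost_per_call: float        # per-call price

    def total_cost_of_ownership(self, years: int) -> float:
        """One-time costs plus recurring usage, governance, and support."""
        one_time = self.development + self.deployment
        recurring = (
            self.calls_per_year * self.cost_per_call
            + self.governance_per_year
            + self.support_per_year
        )
        return one_time + years * recurring
```

Running the same model with separate inputs for a tool-based design and an agent-based design makes it easier to see whether higher governance and support costs are offset by the value the agent delivers.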

Industry case studies and examples

In manufacturing, an AI tool might automatically normalize sensor data and generate alerts, while an AI agent could autonomously orchestrate the response to detected anomalies, coordinating maintenance requests and supplier communications. In software development, tools accelerate code generation or review, whereas agents manage multi-step pipelines, track dependencies, and adapt to changing requirements. In education and research, tools can process large datasets or draft summaries, with agents guiding long-running experiments and coordinating data collection across teams. Across finance, healthcare, and logistics, the combination of tool-based automation and agent-driven orchestration helps teams scale operations while maintaining governance. The key is to align the approach with risk and complexity: simple, rule-based tasks suit tools; dynamic, cross-domain workflows benefit from agents.

Common misconceptions and myths

Myth: More autonomy is always better. Reality: autonomy should match risk tolerance and governance. Myth: Tools and agents are mutually exclusive. Reality: many systems blend both. Myth: Agents eliminate the need for human oversight. Reality: humans must define goals, constraints, and audit trails. Myth: All AI decisions are equally explainable. Reality: some decisions rely on probabilistic models with opaque reasoning. Myth: You must choose one approach for all tasks. Reality: hybrid architectures often deliver the best balance of speed and adaptability.

How to evaluate and run a comparison test

Start with clear success criteria: performance metrics, governance standards, and risk limits. Build a small pilot that compares a focused AI tool against an autonomous agent across a defined workflow. Measure outcomes: latency, accuracy, reliability, and the quality of decisions. Debrief with stakeholders, then adjust the scope, prompts, and governance. Iterate, monitor, and record results so that they remain traceable. Finally, document the rationale for the chosen approach to guide future decisions.
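A pilot of this kind can start with a very small harness. The sketch below assumes each candidate (tool or agent) can be wrapped as a function from a prompt string to an output string, and that you have labeled test cases; `evaluate`, `tool_candidate`, and `agent_candidate` are hypothetical names, and the two candidates are trivial stand-ins.

```python
import time
from statistics import mean
from typing import Callable, Dict, List, Tuple


def evaluate(
    candidate: Callable[[str], str], cases: List[Tuple[str, str]]
) -> Dict[str, float]:
    """Run one candidate over labeled cases; report accuracy and mean latency."""
    if not cases:
        raise ValueError("at least one test case is required")
    latencies: List[float] = []
    correct = 0
    for prompt, expected in cases:
        start = time.perf_counter()
        output = candidate(prompt)
        latencies.append(time.perf_counter() - start)
        correct += int(output == expected)
    return {"accuracy": correct / len(cases), "mean_latency_s": mean(latencies)}


def tool_candidate(prompt: str) -> str:
    """Stand-in for a narrow tool: one fixed transformation."""
    return prompt.upper()


def agent_candidate(prompt: str) -> str:
    """Stand-in for an agent: cleans the input before transforming it."""
    return prompt.strip().upper()
```

Exact-match accuracy is only one possible metric. Reliability and decision quality usually need task-specific scoring, so treat this harness as a starting point.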

The future: trends shaping AI tools and agents

Expect increasingly hybrid architectures, where agents orchestrate specialized tools and learn from feedback to improve prompts and policies. Expect greater emphasis on safety, explainability, and compliance, along with more standardized governance patterns to manage risk. Tool marketplaces, modular architectures, and open standards will simplify integration. As AI accelerates, teams should focus on designing flexible systems that can adapt to shifting requirements without compromising security or control. The landscape will reward organizations that pair robust governance with scalable, modular components.

Comparison

| Feature | AI tool | AI agent |
|---|---|---|
| Autonomy | low autonomy; requires explicit prompts | high autonomy; plans and acts with minimal prompts |
| Control & orchestration | human-driven prompts; API calls | goal-oriented planning and execution with feedback loops |
| Data handling & memory | stateless per call | stateful with session memory and history |
| Lifecycle management | short-lived, modular sessions | persistent, evolving behavior over time |
| Best for | narrow, well-defined tasks | complex, multi-step workflows with goals |

Upsides

- Clarifies decision boundaries for teams

- Speeds up delivery on defined tasks

- Facilitates modular, testable architectures

- Supports safer governance and auditing

Weaknesses

- Limited adaptability in unpredictable environments

- Can introduce integration overhead and orchestration complexity

- Autonomy adds governance and safety requirements

- Potential for scope creep if not managed properly

Hybrid architectures often deliver the best balance: use AI tools for reliability and AI agents for adaptability.

Tools shine when tasks are well-defined and predictable; agents excel in dynamic, multi-step scenarios. A staged, governance-led approach that combines both can maximize speed, reliability, and control.

FAQ

What is the difference between an AI tool and an AI agent?

An AI tool is a focused component that performs predefined tasks, while an AI agent is autonomous, capable of sensing, planning, and acting toward goals. Tools are predictable; agents handle dynamic contexts.

AI tools perform defined tasks; agents set goals and adapt actions on the fly. The choice depends on complexity and risk tolerance.

When should I use an AI tool?

Use AI tools for narrow tasks with clear inputs and outputs. They are quick to deploy, easier to debug, and safer for tightly scoped functionality.

Choose tools for well-defined tasks with predictable results.

When should I use an AI agent?

Use AI agents for complex, uncertain environments that require planning, memory, and adaptation over time. They can coordinate multiple components toward long-term goals.

Pick agents for adaptive, multi-step workflows.

Can AI tools and agents work together?

Yes. Agents can orchestrate tools, invoking them as needed while maintaining overall goals and monitoring outcomes. This hybrid approach often provides balance.

Agents can coordinate tools to achieve broader goals.

What governance considerations exist when using agents?

Governance should cover safety checks, accountability, prompt/version control, data handling, and traceability of decisions.

Governance is essential to manage autonomy and risk in agents.

Key Takeaways

- Define decision boundaries early and clearly

- Prefer tools for simple, repeatable tasks

- Leverage agents for adaptive, multi-step workflows

- Invest in governance, observability, and auditing

- Consider a hybrid approach to balance speed and flexibility