Problems with AI Detection Tools: Troubleshooting Guide

A guide to diagnosing and fixing common problems with AI detection tools, with practical steps, safety notes, and governance tips for developers and researchers.

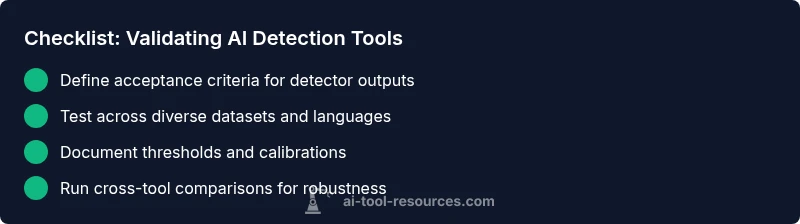

Problems with AI detection tools are common and multifaceted. Start by validating data quality, thresholds, and context-specific calibration, then add human-in-the-loop review. Prioritize reproducibility, logging, and cross-tool comparisons to distinguish true signals from noise. This guide provides a quick first-pass fix (check the data, recalibrate thresholds, add human review) and a path to deeper diagnostics.

Problems with AI detection tools: Core challenges

Problems with AI detection tools are not rare edge cases; they are systemic issues that arise from data shift, model bias, and the inherent limits of automated detectors. False positives can label legitimate content as machine-generated, while false negatives let real machine-generated content slip through. The AI Tool Resources team emphasizes that detector performance varies by domain, language, and the source material. In real-world use, detectors struggle with multilingual text, paraphrasing, stylistic variation, and edge cases that fall outside the training data. To navigate these challenges, practitioners must combine automated signals with human judgment, maintain transparent reporting, and establish governance that accounts for uncertainty. According to AI Tool Resources, understanding the boundaries of detection tools is the first step toward robust AI governance and safer deployment in research and development settings.

Calibration and thresholds: why one size does not fit all

Detectors rely on thresholds to decide if content is likely AI-generated. These thresholds are not universal; they depend on the data domain, user expectations, and risk tolerance. When thresholds are set too aggressively, false positives rise; when too lenient, false negatives increase. Regular recalibration with fresh, representative data helps keep detectors aligned with current usage patterns. Calibration should be documented, versioned, and reviewed after major data changes or model updates. The result is a detector that adapts to its operating context rather than a static score that misleads users.
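As a rough illustration of per-domain calibration, the sketch below picks, for each domain, the lowest threshold that keeps the false positive rate on human-written validation samples under a chosen cap. The function names, the `(domain, score, label)` record layout, and the "flag when score >= threshold" rule are assumptions made for this example, not the behavior of any particular detector.

```python
from collections import defaultdict

def pick_threshold(scores, labels, max_fpr=0.05):
    """Return the lowest threshold whose false positive rate on human-written
    samples is at or below max_fpr. Labels: 1 = AI-generated, 0 = human.
    Assumes content is flagged when score >= threshold."""
    human_scores = [s for s, y in zip(scores, labels) if y == 0]
    if not human_scores:
        raise ValueError("need at least one human-written sample")
    # Candidates: every observed score, plus one value above the maximum,
    # which flags nothing and therefore always has a false positive rate of 0.
    candidates = sorted(set(scores)) + [max(scores) + 1e-9]
    for t in candidates:
        false_positives = sum(1 for s in human_scores if s >= t)
        if false_positives / len(human_scores) <= max_fpr:
            return t
    return candidates[-1]  # only reached if max_fpr is negative

def calibrate_per_domain(records, max_fpr=0.05):
    """records: iterable of (domain, score, label) tuples."""
    by_domain = defaultdict(lambda: ([], []))
    for domain, score, label in records:
        by_domain[domain][0].append(score)
        by_domain[domain][1].append(label)
    return {d: pick_threshold(s, y, max_fpr) for d, (s, y) in by_domain.items()}

# Hypothetical validation data: (domain, detector score, true label)
records = [
    ("academic", 0.2, 0), ("academic", 0.4, 0), ("academic", 0.7, 0), ("academic", 0.9, 1),
    ("code", 0.1, 0), ("code", 0.3, 0), ("code", 0.6, 1), ("code", 0.8, 1),
]
thresholds = calibrate_per_domain(records, max_fpr=0.0)
print(thresholds)
```

In this toy data the academic domain ends up with a stricter threshold than the code domain because one human-written academic sample scores 0.7. Storing the resulting dictionary alongside the data version used to compute it is one way to keep calibration documented and versioned.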

Interpretability and human-in-the-loop review

Automated signals are only one part of a responsible workflow. Treat detector outputs as probabilistic cues rather than absolute judgments. Present confidence scores alongside clear explanations of limitations. Establish a human-in-the-loop process for critical decisions—especially in education, publication, or safety-sensitive applications. Clear communication helps users understand why a detector flagged something and what actions are appropriate. Transparency around model version, data sources, and evaluation metrics builds trust and reduces misinterpretation.
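One way to keep detector output probabilistic in practice is to wrap each score in a small report that carries the model version, a plain-language caveat, and a routing decision. The sketch below is illustrative only: the `DetectorReport` class, the `build_report` function, the action names, and the `review_floor` value of 0.3 are all hypothetical choices for this example.

```python
from dataclasses import dataclass

@dataclass
class DetectorReport:
    score: float         # detector score, treated as a cue rather than a verdict
    model_version: str   # recorded so reviewers know which model produced the score
    action: str          # "no_action", "advisory_flag", or "human_review"
    explanation: str     # short text shown to the user or reviewer

def build_report(score, model_version, high_stakes, review_floor=0.3):
    """Route a score to an action. High-stakes cases at or above the floor
    always go to a human reviewer instead of being decided automatically."""
    if score < review_floor:
        action = "no_action"
    elif high_stakes:
        action = "human_review"
    else:
        action = "advisory_flag"
    explanation = (
        f"Score {score:.2f} from {model_version}. "
        "This is a probabilistic cue, not proof of authorship."
    )
    return DetectorReport(score, model_version, action, explanation)

report = build_report(0.82, "detector-v1.3", high_stakes=True)
print(report.action)
print(report.explanation)
```

The design choice here is that no branch produces a final "AI-generated" verdict: the strongest outcome is a hand-off to a person.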

Practical steps to reduce false positives and negatives

There are concrete actions you can take to improve detector reliability without sacrificing speed. Start with diverse evaluation data that covers languages, styles, and domains relevant to your user base. Calibrate thresholds per domain, and use ensemble methods that combine multiple detectors to smooth out individual weaknesses. Implement robust logging of inputs, outputs, and decision criteria so you can trace misclassifications. Regularly retrain or fine-tune detectors with fresh data, and maintain separate guardrails for high-stakes decisions where human validation is mandatory. Documentation of limits and decision rules is essential for accountability.
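The ensemble and logging ideas above can be combined in a few lines. The following is a minimal sketch, assuming each detector returns a score between 0 and 1; the detector names, the `ensemble_decision` function, and the choice to log a document ID rather than the raw text are assumptions for illustration.

```python
import json
import logging
from statistics import mean

logger = logging.getLogger("detector_audit")

def ensemble_decision(text_id, detector_scores, threshold):
    """Average scores from several detectors and log the full decision record.
    detector_scores: dict mapping detector name -> score in [0, 1]."""
    avg = mean(detector_scores.values())
    votes = sum(1 for s in detector_scores.values() if s >= threshold)
    decision = {
        "text_id": text_id,              # an ID instead of raw text, to limit stored content
        "scores": detector_scores,       # per-detector scores, kept for tracing
        "mean_score": round(avg, 4),
        "votes_for_ai": votes,           # how many detectors individually crossed the threshold
        "threshold": threshold,
        "flagged": avg >= threshold,     # decision rule: mean score at or above threshold
    }
    logger.info(json.dumps(decision, sort_keys=True))
    return decision

decision = ensemble_decision(
    "doc-1", {"detector_a": 0.9, "detector_b": 0.4, "detector_c": 0.8}, threshold=0.6
)
print(decision["flagged"], decision["votes_for_ai"])
```

Recording both the mean and the vote count makes disagreements between detectors visible later, which helps when tracing a misclassification back to a single weak model.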

Safety, ethics, and regulatory considerations

AI detectors influence trust, fairness, and safety. They can introduce bias if training data underrepresents certain languages or communities. Protect privacy and comply with data handling regulations when collecting samples for testing. Use detectors to aid decision-making, not replace it, and implement auditing to detect drift or abuse. Clearly articulate the purpose and scope of detection, and provide opt-out mechanisms when appropriate. Responsible stewardship reduces harm and strengthens research integrity.

Monitoring, validation, and long-term reliability

A detector is not a single tool but part of an ongoing system. Establish continuous validation pipelines that monitor drift, calibration stability, and performance over time. Use version control for models and data, run periodic cross-tool comparisons, and set up dashboards for quick health checks. When detectors are updated, conduct backtests to confirm no regression in critical scenarios. Plan for governance reviews and retirement of outdated models to maintain reliability and safety in AI deployments.
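Two of the checks described above, drift monitoring and backtesting after an update, can be sketched with simple comparisons. This is a simplified illustration: the flag-rate comparison is only one of many possible drift signals, and the function names, the 0.05 tolerance, and the stub predictors are hypothetical.

```python
def flag_rate(scores, threshold):
    """Fraction of scores at or above the threshold."""
    return sum(1 for s in scores if s >= threshold) / len(scores)

def flag_rate_drift(baseline_scores, current_scores, threshold, tolerance=0.05):
    """Report whether the share of flagged content moved by more than
    `tolerance` between a baseline window and the current window."""
    base = flag_rate(baseline_scores, threshold)
    cur = flag_rate(current_scores, threshold)
    return abs(cur - base) > tolerance, base, cur

def backtest(cases, old_predict, new_predict):
    """Return IDs of cases the old version classified correctly
    and the new version gets wrong.
    cases: list of (case_id, text, expected_flag)."""
    regressions = []
    for case_id, text, expected in cases:
        if old_predict(text) == expected and new_predict(text) != expected:
            regressions.append(case_id)
    return regressions

# Stub predictors standing in for two detector versions.
old_outputs = {"sample one": True, "sample two": False}
new_outputs = {"sample one": False, "sample two": False}
cases = [("c1", "sample one", True), ("c2", "sample two", False)]

drifted, base, cur = flag_rate_drift([0.1, 0.2, 0.7, 0.3], [0.8, 0.9, 0.7, 0.2], threshold=0.6)
regressions = backtest(cases, old_outputs.get, new_outputs.get)
print(drifted, base, cur, regressions)
```

A non-empty regression list on a fixed set of critical cases is a reasonable signal to hold back a detector update until someone has reviewed it.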

Steps

Estimated time: 60-90 minutes

1. Collect representative samples

Assemble a diverse dataset that mirrors real usage, including multiple languages, genres, and styles. Ensure samples include both genuine and AI-generated content.

Tip: Document sample sources and ensure no leakage of evaluation data into training.

2. Check baseline detector performance

Run the detector on the collected samples and record accuracy, precision, recall, and calibration metrics. Note any patterns in misclassifications.

Tip: Create a simple dashboard to visualize false positives vs false negatives.

3. Calibrate domain-specific thresholds

Adjust decision thresholds per domain (e.g., academic writing, code, or policy text). Validate changes with a holdout set.

Tip: Document threshold values and rationale for future audits.

4. Test with ensemble methods

Combine results from multiple detectors or signals to reduce reliance on a single model. Compare ensemble vs single-model performance.

Tip: Use voting or averaging schemes with clear decision rules.

5. Implement logging and explainability

Log inputs, outputs, model versions, and decision criteria. Provide concise explanations for each decision to aid review.

Tip: Keep logs secure and compliant with privacy policies.

6. Establish human-in-the-loop reviews

Set up a review process for high-stakes decisions, with documented outcomes and feedback loops to improve the detector.

Tip: Train reviewers on common failure modes and bias awareness.
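The baseline check in step 2 can be sketched as follows. This is a minimal example, assuming labels of 1 for AI-generated and 0 for human-written content and a "flag when score >= threshold" rule; the function name and sample data are hypothetical, and calibration metrics are left out for brevity.

```python
def baseline_metrics(labels, scores, threshold):
    """Compute accuracy, precision, recall, and false positive rate.
    labels: 1 = AI-generated, 0 = human-written. Returns None for a metric
    whose denominator is zero."""
    tp = fp = tn = fn = 0
    for y, s in zip(labels, scores):
        flagged = s >= threshold
        if flagged and y == 1:
            tp += 1
        elif flagged and y == 0:
            fp += 1          # human-written content wrongly flagged
        elif not flagged and y == 0:
            tn += 1
        else:
            fn += 1          # AI-generated content that slipped through

    def ratio(num, den):
        return num / den if den else None

    return {
        "accuracy": ratio(tp + tn, tp + fp + tn + fn),
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        "false_positive_rate": ratio(fp, fp + tn),
        "false_positives": fp,
        "false_negatives": fn,
    }

# Hypothetical evaluation sample.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.4, 0.7, 0.2, 0.8, 0.1]
metrics = baseline_metrics(labels, scores, threshold=0.5)
print(metrics)
```

Running this per domain or per language, rather than once over the pooled data, is what exposes the patterns in misclassifications that the step asks you to note.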

Diagnosis: Detector output is inconsistent across samples or diverges from expected judgments.

Possible Causes

- High: Data distribution shift or domain drift

- Medium: Inadequate or biased training data

- High: Thresholds not calibrated for the operating context

- Low: Paraphrasing or multilingual content exploited to evade detection

Fixes

- Easy: Reproduce the issue with a representative test set and document the conditions.

- Medium: Recalibrate thresholds for the specific domain and language, then re-evaluate with fresh data.

- Medium: Incorporate ensemble methods and cross-check results with additional detectors.

- Hard: Implement human-in-the-loop review for high-stakes decisions and improve explainability.

FAQ

Why do AI detection tools produce false positives?

False positives occur when benign content is flagged as AI-generated due to similarities in writing style, paraphrasing, or feature overlap. They are more likely when thresholds are aggressive or when data is biased toward certain patterns. Rebalancing data and calibrating thresholds helps reduce this issue.

False positives happen when legitimate content looks AI-generated to the detector. Rebalancing data and adjusting thresholds can help, but human review remains important.

How can I improve detector reliability?

Improve reliability by expanding diverse evaluation data, calibrating domain-specific thresholds, using ensemble detectors, and maintaining transparent evaluation reports. Regular retraining with fresh data and a clear governance process are essential.

Expand data, calibrate thresholds for each domain, and use multiple detectors with clear reporting.

What data should I test to validate detectors?

Test with multilingual content, varied genres, and authentic samples alongside synthetic or AI-generated content. Include edge cases that mirror real-world usage to reveal drift and bias.

Test with multilingual and varied real-world content plus edge cases.

Are there limitations across languages and domains?

Yes. Detectors often underperform in underrepresented languages or niche domains. Ongoing evaluation and per-domain calibration are necessary to manage these limitations.

Detector performance varies by language and domain; calibrate per domain.

When should detectors be disabled or overridden?

Detectors should be overridden only with clear governance, documented criteria, and human oversight in high-stakes contexts. Provide an auditable decision trail when bypassing detectors.

Only override with governance and documented checks.

Who should review detector outputs in a project?

Assign trained reviewers with bias and ethics awareness to validate detector outputs, especially for sensitive decisions. Establish accountability and periodic training.

Trained reviewers should validate outputs, especially in sensitive cases.


Key Takeaways

- Identify detector limits before deployment.

- Calibrate thresholds per domain and language.

- Use human-in-the-loop for critical decisions.

- Maintain transparent logging and governance.