How to check if an AI tool is trustworthy: A practical guide for developers and researchers

Learn a structured, vendor-agnostic approach to vetting an AI tool before adoption, covering claims, data handling, model details, performance, and governance.

Goal: Learn how to check whether an AI tool is trustworthy and suitable for your project. This quick guide summarizes the essential checks: confirm vendor claims, verify model details, assess data handling, and evaluate performance under realistic scenarios. Following these steps helps reduce privacy and bias risks while improving reproducibility. By applying a structured process, developers, researchers, and students can make safer, more informed AI tool selections.

Why verifying an AI tool matters

Trust and reliability matter when you integrate AI into development, research, or education workflows. A poorly chosen AI tool can leak data, produce biased results, or fail under real-world loads. To check whether an AI tool is fit for your project, perform structured checks that cover vendor transparency, data handling, model details, and measurable performance. According to AI Tool Resources, rigorous verification reduces risk and accelerates responsible adoption. This article guides you through practical steps, checklists, and governance practices to make informed decisions. The AI Tool Resources team found that many teams overlook governance and data policies, which often leads to compliance gaps and unexpected liabilities. A disciplined approach helps you build auditable records and maintain stakeholder trust.

What counts as an AI tool?

An AI tool refers to software that uses machine learning or generative models to produce outputs, predictions, or decisions. This includes cloud APIs, on-device models, and hybrid systems that combine rule-based logic with learning components. The key indicator is that the tool’s core behavior is driven by learned patterns rather than fixed rules. For researchers and developers, distinguishing traditional software from AI-enabled tools is crucial for choosing the right evaluation methods. Not all tools labeled as AI deliver the same capabilities, so you should verify the underlying approach rather than rely on marketing language alone.

Quick initial checks you can perform

Begin with high-level diligence before diving into technical details. Check the vendor’s claims against public documentation, ask for the model type (e.g., transformer, diffusion, or rule-based with ML augmentation), and confirm whether inputs are stored or used for training. Ask for data usage terms, update frequency, and any third-party audits. Confirm access controls and whether the tool supports explainability features. Document any gaps and plan follow-up questions to turn marketing assertions into verifiable facts.

Model details you should request

Request transparent information about the model family, version, and training data scope. Seek details on data sources, licensing, tokenization, and handling of edge cases. Ask whether the model supports deterministic outputs under fixed prompts, the seed management strategy, and the expected drift over time. Clarify latency, throughput, and regional availability if performance constraints are present. A clear model-facing glossary helps teams communicate risk and capabilities with stakeholders.
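One way to test a vendor's claim about deterministic outputs is to send the same prompt several times and count the distinct responses. The sketch below is illustrative only: `check_determinism` and `fake_generate` are hypothetical names, and `fake_generate` stands in for whatever client call the tool actually exposes.

```python
from collections import Counter
from typing import Callable


def check_determinism(generate: Callable[[str], str], prompt: str, runs: int = 5) -> dict:
    """Call `generate` repeatedly with the same prompt and summarize the outputs."""
    outputs = [generate(prompt) for _ in range(runs)]
    counts = Counter(outputs)
    return {
        "runs": runs,
        "distinct_outputs": len(counts),
        "deterministic": len(counts) == 1,
        "most_common": counts.most_common(1)[0][0],
    }


# Hypothetical stand-in for a real client call; swap in the vendor's SDK or HTTP request.
def fake_generate(prompt: str) -> str:
    return prompt.upper()


report = check_determinism(fake_generate, "summarize this ticket", runs=3)
print(report["deterministic"], report["distinct_outputs"])
```

If the tool exposes a seed or temperature setting, running the same check with those values fixed shows whether the advertised controls actually remove the variation.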

Data handling and privacy considerations

Privacy and data governance are central to responsible AI use. Verify whether inputs are stored, aggregated, or used for model training; confirm data retention periods and deletion rights; and check encryption in transit and at rest. Review the vendor’s data processing agreement for ownership, access rights, and breach notification timelines. If you work with sensitive or regulated data, insist on data residency guarantees, access controls, and independent security certifications. Inaccurate assumptions here are a common risk and can derail projects later on.

Reproducibility and evaluation methods

To trust an AI tool, you should be able to reproduce results under controlled conditions. Use a fixed test dataset, run multiple seeds where applicable, and log prompts and outputs. Compare results across versions and environments, and document any non-determinism. Establish clear evaluation metrics aligned with your use case (accuracy, precision, recall, F1, safety metrics) and set acceptable thresholds. This discipline helps teams demonstrate progress to stakeholders and regulators alike.
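As a minimal sketch of the metric-and-threshold idea, the snippet below computes accuracy, precision, recall, and F1 for a binary task and lists the metrics that fall below agreed minimums. The labels, predictions, and threshold values are made up for illustration, and `binary_metrics` and `failed_thresholds` are hypothetical helper names.

```python
def binary_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Compute accuracy, precision, recall, and F1 for 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def failed_thresholds(metrics: dict[str, float], thresholds: dict[str, float]) -> list[str]:
    """Return the names of metrics that fall below their minimum threshold."""
    return [name for name, minimum in thresholds.items() if metrics[name] < minimum]


# Illustrative fixed test set: expected labels vs. the tool's predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

metrics = binary_metrics(y_true, y_pred)
failures = failed_thresholds(metrics, {"accuracy": 0.7, "recall": 0.8})
print(metrics)
print("Below threshold:", failures)
```

Checking several metrics against separate thresholds keeps a single strong number from hiding a weak one.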

Safety, ethics, and governance

Assess bias, fairness, and potential for harm. Request guardrails and human-in-the-loop workflows where needed, especially for high-stakes decisions. Confirm compliance with relevant regulations (data protection, export controls, sector-specific rules). Review the vendor’s incident response plan and how safety concerns are prioritized and resolved. A strong governance framework reduces risk and supports sustainable AI adoption.

Practical checklist and sample questions to ask vendors

- What data do you collect, store, and train on? Are there alternatives to using customer data for training?

- Can you share model cards or documentation describing capabilities, limits, and expected failure modes?

- How do you handle prompt leakage, data exfiltration, and model inversion risks?

- Do you offer an independent security audit or certification? Are there third-party assessments?

- What are the update cadence and rollback options if issues arise?

- How do you monitor drift and maintain performance over time?

- Can we reproduce results on our own datasets, with versioned deployments?

- What logging, auditing, and access controls are provided for governance?

Documentation and audit trails: how to record findings

Create a structured audit file for each AI tool you evaluate. Include a project name, evaluation date, version/endpoint, data sources, prompts used, outputs, metrics, and decisions. Attach vendor documents, security reports, and test results. Maintain version history so you can track changes over time. A well-kept audit trail simplifies compliance reviews and future re-evaluations. The audit should also capture stakeholder approvals and a go/no-go decision with rationale.
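An audit file like this can be kept as structured data so it is easy to version and compare. The following is one possible shape, not a standard schema: the `AuditRecord` class, its field names, and the example values are all assumptions chosen to mirror the items listed above.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AuditRecord:
    """One evaluation of one tool version (illustrative schema)."""
    project: str
    evaluation_date: str  # ISO 8601 date, e.g. "2024-01-15"
    tool_version: str     # version or endpoint identifier
    data_sources: list[str]
    metrics: dict[str, float]
    decision: str         # "go" or "no-go"
    rationale: str
    approvals: list[str] = field(default_factory=list)
    attachments: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        # Sorted keys keep diffs between audit versions stable.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Placeholder values for illustration only.
record = AuditRecord(
    project="support-ticket-triage",
    evaluation_date="2024-01-15",
    tool_version="example-model-v1",
    data_sources=["internal test set (non-sensitive)"],
    metrics={"accuracy": 0.75, "recall": 0.75},
    decision="no-go",
    rationale="Recall is below the agreed minimum of 0.80.",
    approvals=["engineering lead"],
)
print(record.to_json())
```

Storing the resulting JSON in version control alongside vendor documents gives a change history without extra tooling.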

Pitfalls and common blind spots

Avoid assuming safety or compliance based on marketing materials alone. Don’t skip reproducibility checks or rely solely on reported metrics without testing on your data. Beware that cloud-based tools may have data-use implications not evident in public docs. Lastly, ensure you’re not over-indexing on a single metric; a balanced evaluation across multiple dimensions reduces risk.

Long-term monitoring and governance of AI tools

Adopt a lifecycle approach: schedule periodic re-evaluations, track version changes, and adjust risk controls as the tool evolves. Maintain ongoing transparency with stakeholders and update governance policies as requirements change. This proactive stance helps teams stay aligned with best practices and industry norms, as emphasized by AI Tool Resources in their guidance for sustainable AI adoption.
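A periodic re-evaluation can be reduced to comparing a new version's metrics against a stored baseline. The sketch below flags metrics that dropped by more than a tolerance; `find_regressions`, the 0.02 tolerance, and the example numbers are assumptions for illustration rather than recommended values.

```python
def find_regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.02,
) -> dict[str, float]:
    """Return metrics whose value dropped by more than `tolerance` versus the baseline."""
    drops = {}
    for name, old_value in baseline.items():
        if name not in candidate:
            continue  # metric was not re-measured; note that separately in the audit file
        drop = old_value - candidate[name]
        if drop > tolerance:
            drops[name] = round(drop, 4)
    return drops


# Illustrative numbers: metrics from the last approved version vs. the new one.
baseline = {"accuracy": 0.91, "recall": 0.88}
candidate = {"accuracy": 0.90, "recall": 0.81}

regressions = find_regressions(baseline, candidate)
print(regressions)
```

Running the same fixed test set on every version update makes these comparisons meaningful; a change in the test data would otherwise look like drift.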

Quick reference: printable checklist for teams

- Confirm data usage terms and training data scope

- Verify model type, version, and update policy

- Assess privacy, security controls, and regulatory compliance

- Run reproducible tests with a fixed dataset

- Document findings, risks, and decisions

- Plan ongoing monitoring and governance

Final thoughts and recommended next steps

As you close an evaluation, document a clear go/no-go decision with rationale and publish it to your stakeholders. The process should be repeatable across tools and teams. The AI Tool Resources team recommends integrating this verification workflow into your standard procurement and deployment lifecycle to ensure responsible AI adoption across the board.

Tools & Materials

- Evaluation checklist template (Printable or digital form for quick reference)

- Vendor documentation: data policy, model cards (URLs or PDFs)

- Test dataset, non-sensitive (Representative of real use)

- Secure testing environment access (Isolated sandbox with logging)

- Notebook or spreadsheet for results (Structured rubric: metric, threshold, notes)

- Access to security/compliance reports (If available)

- Prompt and output logging mechanism (Timestamped records)

- Pen and highlighter (For quick on-paper notes)

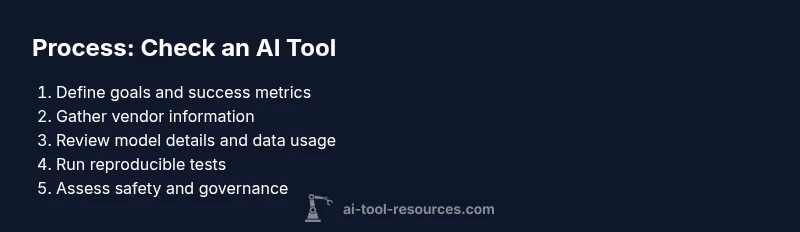

Steps

Estimated time: 60-90 minutes

1. Define goals and success criteria

Clarify the use case, success metrics, and failure handling. Align with stakeholders on what constitutes acceptable risk and performance.

Tip: Write down 3 objective questions your evaluation must answer.

2. Gather vendor information

Collect model specs, data usage policies, security certifications, and deployment details from the vendor.

Tip: Ask for a data flow diagram and a sample contract appendix.

3. Verify model details

Confirm model type, version, training data scope, and known limitations. Request a model card if available.

Tip: Document any gaps and request clarifications in writing.

4. Assess data privacy and governance

Review data retention, deletion rights, encryption, and access controls. Ensure alignment with your compliance needs.

Tip: Check whether inputs are used for training and how long data is kept.

5. Run controlled tests

Use a fixed test set to evaluate accuracy, bias, and robustness. Repeat with different seeds if applicable.

Tip: Record prompts, outputs, and any non-deterministic behavior.

6. Evaluate safety and fairness

Check guardrails, explainability options, and potential biases. Assess risk in edge cases relevant to your domain.

Tip: Document bias checks and mitigation plans.

7. Governance and auditing

Verify available logs, versioning, rollback options, and incident response plans.

Tip: Ensure there is a responsible escalation path for issues.

8. Document findings and decide

Compile results into a transparent report and make a go/no-go decision with stakeholder approval.

Tip: Include rationale and next steps for remediation.
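For the controlled-test step, prompts and outputs can be recorded as timestamped lines in a JSON Lines file. This is a minimal sketch under stated assumptions: `log_interaction`, the record fields, and the temporary file location are illustrative choices, not part of any particular tool.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def log_interaction(log_path: Path, prompt: str, output: str, tool_version: str) -> dict:
    """Append one timestamped prompt/output record to a JSON Lines file."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool_version": tool_version,
        "prompt": prompt,
        "output": output,
    }
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


# Demo in a temporary directory; point log_file at your sandbox's log location instead.
log_file = Path(tempfile.mkdtemp()) / "eval_log.jsonl"
log_interaction(log_file, "What is 2 + 2?", "4", "example-model-v1")
log_interaction(log_file, "What is 2 + 2?", "Four", "example-model-v1")

lines = log_file.read_text(encoding="utf-8").splitlines()
print(len(lines), "records logged")
```

Because each line is an independent JSON object, the log can be appended to during long test runs and filtered later by version or prompt.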

FAQ

What counts as an AI tool?

An AI tool uses machine learning or generative models to produce outputs or decisions. It includes cloud APIs, on-device models, and hybrid systems. Verify the underlying approach rather than marketing claims.

An AI tool uses machine learning to generate outputs, so always check the model type and data practices, not just the name.

What questions should I ask vendors?

Ask about data usage, training data, model versioning, security certifications, and how outputs are evaluated. Request model cards and documentation for reproducibility.

Ask about data handling, model details, and security certifications to confirm trustworthiness.

How can I test accuracy without exposing sensitive data?

Use non-sensitive, representative test data and run controlled experiments. Keep inputs and results in a private, audited environment.

Use safe test data and keep everything in a controlled, auditable environment.

Are cloud-based AI tools riskier for data privacy?

Cloud tools can introduce data-use and retention concerns. Verify data processing agreements, data localization, and deletion rights.

Cloud tools may affect privacy; check contracts and data handling terms carefully.

How often should I re-evaluate AI tools?

Schedule regular re-evaluations tied to version updates, policy changes, and evolving risk factors in your domain.

Plan periodic re-evaluations as tools evolve and policies change.

What if results are biased or unsafe?

Document detected biases or safety gaps, implement mitigation, and consider human-in-the-loop for high-stakes use cases.

If bias or safety issues appear, mitigate and escalate through governance channels.

Key Takeaways

- Use a structured, repeatable vetting workflow

- Demand transparent model and data details

- Prioritize data privacy, security, and governance

- Document findings for stakeholder trust

- Plan ongoing monitoring after deployment