How to test an AI tool: A practical guide

Step-by-step instructions to evaluate an AI tool with clear criteria, controlled experiments, and actionable reporting for developers, researchers, and students.

This quick guide shows you how to test an AI tool in a repeatable, evidence-based way. You’ll define success criteria, assemble representative datasets, run controlled experiments, and document results for stakeholders. You’ll need a safe test environment, access to the tool’s API, sample data, and a metrics plan covering accuracy, latency, robustness, and safety. Follow the step-by-step approach below.

What testing an AI tool means in practice

Testing an AI tool means more than running a handful of inputs and hoping for consistent results. It requires a disciplined approach that combines real-world use cases with controlled experiments, clearly defined acceptance criteria, and transparent reporting. According to AI Tool Resources, effective evaluation blends task-based scenarios with structured metrics so teams can separate true capability from incidental performance. The goal is to quantify how the tool behaves under varying conditions, identify failure modes, and build a defensible case for deployment or iteration. This section outlines how to articulate the scope of testing, what to measure, and how to calibrate expectations against the intended use. It also covers the ethical and safety considerations that should accompany any AI evaluation, emphasizing reproducibility and traceability across tests.

Defining success criteria for your AI tool

Good tests start with clear definitions of success. Begin by mapping the AI tool’s intended tasks to measurable outcomes. For example, if the tool performs classification, define target accuracy and acceptable false-positive and false-negative rates. If it generates content, specify thresholds for quality, coherence, and safety. Establish minimum viable criteria (the bare minimum you must see before considering a release) and aspirational targets (stretch goals that guide future improvements). Document these in a living test plan that aligns with stakeholder expectations and regulatory considerations where applicable. Remember to include edge cases and out-of-distribution inputs to probe resilience and reliability. The AI Tool Resources Team notes that explicit, testable criteria dramatically reduce ambiguity during analysis and reporting.
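One way to keep such criteria testable is to store them as data rather than prose. The sketch below is a minimal illustration, not a prescribed format: the `Criterion` class, the metric names, and every threshold are hypothetical placeholders you would replace with values from your own test plan.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One measurable target with a release bar and a stretch goal."""
    name: str
    required: float              # minimum viable value needed for release
    stretch: float               # aspirational target
    higher_is_better: bool = True

    def status(self, observed: float) -> str:
        """Return 'stretch', 'pass', or 'fail' for an observed value."""
        if self.higher_is_better:
            meets_required = observed >= self.required
            meets_stretch = observed >= self.stretch
        else:
            meets_required = observed <= self.required
            meets_stretch = observed <= self.stretch
        if meets_stretch:
            return "stretch"
        return "pass" if meets_required else "fail"


# Hypothetical thresholds for a classification task.
CRITERIA = [
    Criterion("accuracy", required=0.92, stretch=0.96),
    Criterion("false_positive_rate", required=0.05, stretch=0.02,
              higher_is_better=False),
    Criterion("p95_latency_ms", required=800.0, stretch=400.0,
              higher_is_better=False),
]


def evaluate(observed: dict[str, float]) -> dict[str, str]:
    """Map each criterion to its status; a missing metric counts as a failure."""
    return {
        c.name: c.status(observed[c.name]) if c.name in observed else "fail"
        for c in CRITERIA
    }


def release_ready(observed: dict[str, float]) -> bool:
    """True only if every criterion meets at least its required bar."""
    return all(status != "fail" for status in evaluate(observed).values())
```

Treating a missing metric as a failure is a deliberate choice here: a run that silently skips a measurement should not be able to pass.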

Designing experiments and selecting datasets

Experiment design is the backbone of credible testing. Use a mix of representative, synthetic, and edge-case data to reveal strengths and gaps. Structure tests to be repeatable: fix seeds, version the test data, and isolate the test environment from production to prevent contamination. When selecting datasets, prioritize coverage of real-world scenarios your users will encounter, including multilingual inputs, varied formats, and noisy data. Plan for bias and fairness issues by including diverse demographic groups and contexts where appropriate. Establish controls and baselines, such as running the tool against a proven reference model or a simple heuristic, to contextualize results. AI Tool Resources analysis shows that well-curated datasets paired with principled experimentation reduce ambiguity and increase trust in outcomes.
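The repeatability points above (fixed seeds, versioned data, a simple baseline) can be sketched with the Python standard library alone. Everything named here is an assumption made for illustration: the example records, the `keyword_baseline` heuristic, and the use of a truncated SHA-256 digest as a data version.

```python
import hashlib
import json
import random


def dataset_fingerprint(examples: list[dict]) -> str:
    """Short SHA-256 digest of the canonical JSON, usable as a data version."""
    canonical = json.dumps(examples, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def sample_eval_set(examples: list[dict], size: int, seed: int) -> list[dict]:
    """Draw a reproducible sample with a local RNG so global state is untouched."""
    rng = random.Random(seed)
    return rng.sample(examples, k=min(size, len(examples)))


def keyword_baseline(text: str) -> str:
    """Hypothetical trivial heuristic that serves as the control."""
    return "positive" if "good" in text.lower() else "negative"


def accuracy(predict, examples: list[dict]) -> float:
    """Fraction of examples where predict(text) matches the label."""
    if not examples:
        return 0.0
    correct = sum(predict(ex["text"]) == ex["label"] for ex in examples)
    return correct / len(examples)


# Made-up records standing in for a real, labeled dataset.
DATA = [
    {"text": "Good value", "label": "positive"},
    {"text": "Broke in a week", "label": "negative"},
    {"text": "Not good at all", "label": "negative"},
    {"text": "Works great", "label": "positive"},
]

if __name__ == "__main__":
    eval_set = sample_eval_set(DATA, size=4, seed=13)
    print("data version:", dataset_fingerprint(DATA))
    print("baseline accuracy:", accuracy(keyword_baseline, eval_set))
```

Running the tool under test through the same `accuracy` function on the same seeded sample gives a like-for-like comparison against the heuristic.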

Metrics that matter for AI testing

Choose a balanced mix of quantitative and qualitative metrics. Core metrics typically include accuracy or task-specific correctness, latency (response time), throughput, and reliability under load. Add robustness checks with perturbations (noise, formatting changes, partial inputs) to measure stability. Evaluate bias, fairness, and safety indicators to ensure outputs do not amplify harm. Track explainability where possible, noting how easily results can be interpreted or challenged. Document drift indicators, which show how model performance changes as data shifts over time. Finally, capture maintainability signals such as test script longevity and ease of reproducing results. A thorough metrics plan helps teams compare iterations and communicate progress clearly to stakeholders.
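For the quantitative core metrics, a small amount of code is usually enough. The following sketch assumes a binary classification task with string labels and a `predict` callable that wraps the tool; both are illustrative assumptions, and the nearest-rank percentile is just one of several common definitions.

```python
import math
import time


def confusion_rates(y_true, y_pred, positive="positive") -> dict[str, float]:
    """Accuracy, false-positive rate, and false-negative rate for binary labels."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            if truth == positive:
                tp += 1
            else:
                fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }


def percentile(values, pct: float) -> float:
    """Nearest-rank percentile, e.g. pct=95 for p95 latency."""
    if not values:
        raise ValueError("percentile() needs at least one value")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def timed_calls(predict, inputs):
    """Return (outputs, latencies in milliseconds) using a monotonic clock."""
    outputs, latencies_ms = [], []
    for item in inputs:
        start = time.perf_counter()
        outputs.append(predict(item))
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    return outputs, latencies_ms
```

Reporting a high percentile such as p95 alongside the mean tends to be more informative for latency, because a few slow responses can dominate the user experience.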

Documenting results and communicating findings

Documentation should be precise, structured, and actionable. Record all test configurations, data versions, seed values, and tool versions in a centralized report. Present results with visual dashboards, tables, and concise narratives that explain what passed, what failed, and why it matters for deployment decisions. Include a risk assessment highlighting the severity and likelihood of each issue, along with recommended mitigations and owners. Use a standardized template so every run is comparable over time. The AI Tool Resources Team emphasizes that clear, accessible reporting accelerates decision-making and reduces the back-and-forth often seen in tool evaluations. Close with concrete next steps and a plan for re-testing after fixes are implemented.
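A standardized template can be as simple as one JSON record per run. The sketch below is one possible shape, not an established schema: the field names, the three-level severity and likelihood scales, and the severity-times-likelihood score are all assumptions chosen for illustration.

```python
import json
import platform
from datetime import datetime, timezone

# Hypothetical ordinal scales; adapt them to your own risk framework.
SEVERITY = {"low": 1, "medium": 2, "high": 3}
LIKELIHOOD = {"rare": 1, "possible": 2, "likely": 3}


def risk_score(issue: dict) -> int:
    """Simple severity x likelihood score used to rank issues."""
    return SEVERITY[issue["severity"]] * LIKELIHOOD[issue["likelihood"]]


def build_run_record(tool_version, data_version, seed, config, metrics, issues):
    """Collect everything needed to reproduce and compare a test run."""
    ranked = sorted(issues, key=risk_score, reverse=True)
    return {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "python_version": platform.python_version(),
        "tool_version": tool_version,
        "data_version": data_version,
        "seed": seed,
        "config": config,
        "metrics": metrics,
        # Highest-risk issues first, each annotated with its score.
        "issues": [dict(issue, risk_score=risk_score(issue)) for issue in ranked],
    }


def save_run_record(record: dict, path: str) -> None:
    """Write the record as indented JSON so diffs between runs stay readable."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(record, handle, indent=2, sort_keys=True)
```

Because each record carries the seed, data version, and tool version, any two runs can be compared or re-executed without relying on memory or chat history.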

Authority sources and further reading

For further reading on best practices in AI evaluation, consult established sources and guidelines:

- National Institute of Standards and Technology (NIST) AI Testing Frameworks: https://www.nist.gov/topics/artificial-intelligence

- Stanford HAI Policy and Governance resources: https://hai.stanford.edu

- Harvard Data Science Review on responsible AI testing: https://hdsr.mitpress.mit.edu

Remember to adapt external guidance to your domain and regulatory context. The sources above provide foundational concepts, but your organization will tailor metrics, data governance, and deployment criteria to fit your use case.

Tools & Materials

- Test environment (sandbox or staging server): Isolate from production; reproduce failing scenarios safely.

- Access to the AI tool's API: Use API keys with least-privilege permissions; rotate credentials after tests.

- Representative datasets: Include edge cases, noisy data, and diverse formats.

- Metrics dashboard: Set up charts for accuracy, latency, and reliability.

- Notebook or code editor: Document experiments and runbooks in one place.

- Test scripts and configuration files: Versioned, auditable, and reproducible.

- Data labeling guidelines: Helpful if you need ground-truth labels for evaluation.

Steps

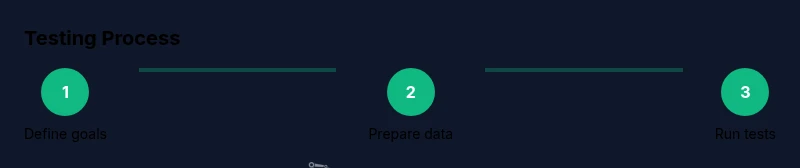

Estimated time: 2-4 hours

1. Define objectives and acceptance criteria

Clarify the primary task, success metrics, and minimum viable criteria for deployment. Align these goals with user needs and regulatory constraints to avoid scope creep.

Tip: Write measurable targets (e.g., 92% accuracy on the test set) and document what constitutes a pass or fail.

2. Prepare the test environment and data

Set up a sandbox that mirrors production conditions but is isolated. Curate input data, seeds, and configurations so tests are reproducible.

Tip: Lock data versions and tool versions to prevent drift between runs.

3. Configure the AI tool for testing

Install test-specific settings, enable verbose logging, and seed randomness where appropriate to enable reproducibility.

Tip: Capture environment details so others can reproduce the test exactly.

4. Run baseline evaluation on a small dataset

Execute a baseline run to establish a reference point. Compare results to a simple heuristic or a trusted model.

Tip: Record both passes and failures to understand edge-case behavior.

5. Scale tests with diverse inputs

Increase data volume and introduce variations (noise, language differences, formatting shifts) to probe robustness.

Tip: Document failure modes and categorize by severity.

6. Analyze results and identify gaps

Summarize outcomes, highlight strong and weak areas, and map issues to potential mitigations and owners.

Tip: Prioritize issues by risk and impact on user experience.

7. Report findings and suggest next steps

Create a concise, actionable report with recommendations and responsibilities. Outline a re-test plan after fixes.

Tip: Include a one-page executive summary for non-technical readers.

8. Plan iteration and re-testing

Implement fixes or improvements and re-run the relevant tests to verify progress. Iterate until criteria are met.

Tip: Schedule recurring test cycles to maintain confidence over time.
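The robustness probing in step 5 can be prototyped before any real data is involved. The sketch below is only an illustration under stated assumptions: inputs are plain strings, `predict` is a stand-in callable for the tool under test, and the three perturbations (adjacent-character typos, a formatting change, truncation) are hypothetical examples of noise, formatting shifts, and partial inputs.

```python
import random


def add_typos(text: str, rng: random.Random, rate: float = 0.1) -> str:
    """Swap adjacent characters at random positions to simulate noisy input."""
    chars = list(text)
    i = 0
    while i < len(chars) - 1:
        if rng.random() < rate:
            chars[i], chars[i + 1] = chars[i + 1], chars[i]
            i += 2  # skip past the swapped pair
        else:
            i += 1
    return "".join(chars)


def change_format(text: str, rng: random.Random) -> str:
    """Uppercase the text and add stray whitespace (a formatting shift)."""
    return "  " + text.upper() + "\n"


def truncate(text: str, rng: random.Random) -> str:
    """Keep only the first half of the input (a partial input)."""
    return text[: max(1, len(text) // 2)]


PERTURBATIONS = {"typos": add_typos, "format": change_format, "partial": truncate}


def robustness_report(predict, inputs: list[str], seed: int = 0) -> dict[str, float]:
    """For each perturbation, the fraction of inputs whose prediction is unchanged."""
    rng = random.Random(seed)  # seeded so the report is reproducible
    report = {}
    for name, perturb in PERTURBATIONS.items():
        stable = sum(predict(perturb(text, rng)) == predict(text) for text in inputs)
        report[name] = stable / len(inputs) if inputs else 0.0
    return report
```

A stability score well below 1.0 for a perturbation marks a failure mode worth logging and categorizing by severity. Note that stability is measured against the tool's own unperturbed output, so it complements accuracy rather than replacing it.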

FAQ

What is an AI test tool and why should I use it?

An AI test tool is software designed to evaluate AI models against predefined metrics in a controlled environment. It helps teams understand accuracy, latency, robustness, and safety before deployment.

An AI test tool lets you measure AI performance in a safe setup so you can make informed deployment decisions.

Which metrics matter most when testing AI tools?

Core metrics include accuracy or task-specific correctness, latency, throughput, and reliability. Also assess bias, fairness, and safety, especially for high-stakes applications.

Key metrics are accuracy, latency, robustness, and safety, with bias assessment always part of the review.

How often should I re-test after updates?

Re-test whenever there are substantial updates to the model, data, or configurations. Establish a minimum cadence for regression checks and a plan for continuous monitoring.

Test again after major updates and set a regular regression check schedule.
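A regression check can be as small as comparing the latest metrics against a stored baseline. This is a minimal sketch under assumptions: the metric names, the 1% relative tolerance, and the convention of listing lower-is-better metrics explicitly are all illustrative choices.

```python
def find_regressions(baseline: dict, current: dict, tolerance: float = 0.01,
                     lower_is_better=frozenset()) -> dict:
    """Return {metric: (old, new)} for metrics that got worse beyond the tolerance.

    The tolerance is relative to the baseline value; a metric missing from
    the current run is reported with new=None.
    """
    regressions = {}
    for name, old in baseline.items():
        if name not in current:
            regressions[name] = (old, None)
            continue
        new = current[name]
        # Positive worse_by means the metric moved in the wrong direction.
        worse_by = (new - old) if name in lower_is_better else (old - new)
        if worse_by > tolerance * abs(old):
            regressions[name] = (old, new)
    return regressions
```

For example, with a baseline of `{"accuracy": 0.93, "p95_latency_ms": 400.0}` and a new run at `{"accuracy": 0.925, "p95_latency_ms": 450.0}`, only latency is flagged when `lower_is_better={"p95_latency_ms"}`, because the accuracy drop stays within the 1% tolerance.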

What are common pitfalls in AI tool testing?

Avoid testing with non-representative data, ignoring edge cases, and mixing test-environment data with production data. Misinterpreting metrics can also lead to overconfident conclusions.

Common pitfalls include data drift and not testing edge cases; plan for both.

Where can I find credible guidelines for AI evaluation?

Look to established AI governance and standards bodies, university research, and peer-reviewed publications for evaluation frameworks. Adapt them to your domain and compliance needs.

Credible guidelines come from universities and standards bodies; adapt them to your context.

Key Takeaways

- Define clear, measurable success criteria

- Use reproducible, isolated test environments

- Diversify data to reveal weaknesses and biases

- Document results with actionable next steps