AI Tools vs Functions: A Practical Comparison

Explore a rigorous, side-by-side comparison of AI tools versus AI functions, focusing on scope, reuse, governance, cost, and integration to guide your tooling strategy.

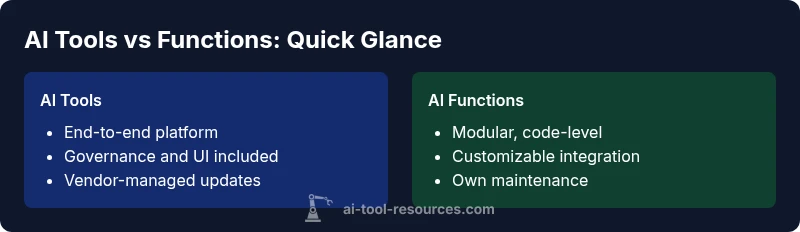

AI tools provide end-to-end capabilities with built-in interfaces, licensing, and governance, while AI functions offer modular, reusable logic that developers embed directly into applications. Choose tools for rapid deployment and managed updates; opt for functions when you need granular control, custom behavior, or tight integration with existing codebases. The right choice depends on scope, reuse, and long-term maintenance.

What Are AI Tools and AI Functions?

According to AI Tool Resources, AI tools are self-contained software platforms that deliver end-to-end capabilities, often with a user interface, predefined workflows, and governance controls. AI functions, by contrast, are modular code-level components that developers weave into existing systems to perform specific tasks. This distinction matters because it shapes who owns maintenance, how updates propagate, and how quickly teams can iterate.

In practice, tools typically bundle data handling, model management, and UI layers into a single product. Functions, meanwhile, leave the orchestration and integration to your codebase. For researchers, developers, and students, understanding this split helps align tool selection with project scope and organizational standards. AI Tool Resources emphasizes governance, licensing, and long-term viability as core decision drivers.

Key takeaway: tools accelerate setup and governance; functions maximize customization and control.

Core Differentiators: Scope, Abstraction, and Ownership

The most salient differences lie in scope, level of abstraction, and ownership model. Tools offer a high level of abstraction: a backend, a UI, and an ecosystem of plugins designed to solve common problems with minimal coding. They often include dashboards, access controls, versioning, and support plans. Functions operate at a lower level of abstraction: discrete capabilities implemented as APIs or library calls that are composed within your architecture. Ownership of updates, security patches, and performance tuning generally rests with your team when using functions.

For teams, this means evaluating who will maintain the system, how quickly you can respond to changing requirements, and whether you can easily scale the solution across projects. If you need auditable workflows and vendor-backed support, a tool may be preferable. If you require bespoke behavior and tight coupling with internal systems, functions offer clearer control. AI Tool Resources likewise treats governance as a leading criterion for choosing between the two.

Bottom line: tools trade flexibility for convenience; functions trade convenience for customization and control.
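To make the abstraction gap concrete, here is a minimal Python sketch. Everything in it is hypothetical: `score_sentiment` stands in for an AI function that lives in your codebase, and `HostedToolClient` (with its `workspace` field and `submit` method) stands in for a vendor tool reached through its own boundary. The client is stubbed so the example runs offline and does not reflect any real vendor API.

```python
from dataclasses import dataclass

# Hypothetical word lists; a real function would wrap a model you own.
POSITIVE = {"good", "great", "excellent"}
NEGATIVE = {"bad", "poor", "terrible"}


def score_sentiment(text: str) -> int:
    """AI function: in-process logic your team owns, tests, and patches."""
    words = text.lower().split()
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


@dataclass
class HostedToolClient:
    """AI tool stand-in: the vendor owns the logic behind this boundary."""
    workspace: str

    def submit(self, text: str) -> dict:
        # A real tool would make a network call and apply its own access
        # controls and versioning; this stub only mimics a response shape.
        return {"workspace": self.workspace, "status": "queued", "chars": len(text)}
```

With the function you can read and change every line of the scoring logic. With the tool, the client surface is all you see, and anything behind it changes on the vendor's schedule.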

When to Use AI Tools vs AI Functions: Practical Scenarios

Consider typical project archetypes to guide your choice. For a standardized data labeling pipeline used across multiple teams with consistent requirements, an AI tool can streamline onboarding, provide dashboards, and ensure governance. If your team needs a highly specialized sentiment analyzer tailored to a proprietary corpus, implementing AI functions within your microservices is often more effective and cost-efficient in the long run.

For experimentation or education-focused work, tools enable rapid prototyping and comparative evaluations without heavy coding. Conversely, production systems requiring low latency and precise control over model inputs, feature engineering, and error handling are better served by functions embedded in your service layer. AI Tool Resources highlights the importance of aligning the decision with organizational norms and project risk tolerance.

Takeaway: use tools for speed and governance in standard workflows; use functions for bespoke, tightly integrated capabilities.

Cost and Value Considerations: TCO, Licensing, and Customization

Cost considerations influence the decision in meaningful ways. AI tools typically involve licensing or subscription models that cover maintenance, updates, and vendor support. This can simplify budgeting and procurement, especially in larger organizations, but may constrain customization options and long-term flexibility. AI functions shift cost toward development time, hosting, and ongoing maintenance. Although initial outlay may be lower, teams must invest in monitoring, security, and compatibility with evolving APIs.

From a value perspective, tools excel at reducing time-to-value and ensuring consistency across teams, while functions excel at optimizing for unique workflows and performance. AI Tool Resources notes that governance, auditability, and upgrade cycles are critical factors in total cost of ownership when deciding between these approaches.

Practical guidance: start with a pilot to validate cost models, then scale with a clear plan for governance and decommissioning.
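A pilot cost model can start as simple arithmetic. The sketch below assumes each path reduces to a one-time upfront cost plus a flat annual cost. `CostModel`, `cheaper_path_by_year`, and all figures are illustrative placeholders, not benchmarks or vendor prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostModel:
    upfront: float  # one-time: onboarding for a tool, build effort for functions
    annual: float   # recurring: license fees, or hosting plus maintenance

    def cumulative(self, years: int) -> float:
        return self.upfront + self.annual * years


def cheaper_path_by_year(tool: CostModel, function: CostModel,
                         horizon: int) -> dict[int, str]:
    """Label each year of the horizon with the path that has cost less so far."""
    result = {}
    for year in range(1, horizon + 1):
        t, f = tool.cumulative(year), function.cumulative(year)
        result[year] = "tie" if t == f else ("tool" if t < f else "function")
    return result


# Placeholder figures chosen so the function path has the lower initial outlay.
tool_path = CostModel(upfront=20_000, annual=12_000)
function_path = CostModel(upfront=10_000, annual=18_000)
print(cheaper_path_by_year(tool_path, function_path, horizon=3))
# prints {1: 'function', 2: 'tool', 3: 'tool'}
```

With these made-up numbers, the lower initial outlay of the function path is overtaken by its recurring maintenance in the second year. Real inputs from a pilot may flip the result.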

Architecture and Integration Patterns

AI tools often present a service boundary that encompasses data ingestion, model management, and front-end or API gateways. They simplify integration by offering standardized endpoints, SDKs, and ready-made connectors. AI functions are typically embedded inside your own services, requiring careful integration planning around data formats, serialization, feature pipelines, and orchestration layers. A hybrid pattern, using tools for common needs and functions for specialized hooks, can deliver a balanced architecture that draws on the strengths of both.

When designing architecture, consider aspects like observability, error handling, circuit breakers, and version compatibility. Tools may provide built-in telemetry, while functions give you granular visibility into each microservice. AI Tool Resources emphasizes modularity and clear API contracts to prevent tight coupling and erosion of maintainability over time.

Architectural takeaway: plan for clean boundaries, consistent data schemas, and robust testing across both paths.
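One way to combine the hybrid pattern with the circuit-breaker idea is sketched below: a hosted tool is the primary path and an in-house function is the fallback. The `CircuitBreaker` class and its parameters are assumptions for illustration. It is deliberately simplified: it counts consecutive failures and has no timed reset or half-open state, which a production breaker would normally add.

```python
from typing import Callable


class CircuitBreaker:
    """Stop calling a failing primary after `max_failures` consecutive errors."""

    def __init__(self, primary: Callable[[str], str],
                 fallback: Callable[[str], str], max_failures: int = 3):
        self.primary = primary
        self.fallback = fallback
        self.max_failures = max_failures
        self.failures = 0

    @property
    def is_open(self) -> bool:
        return self.failures >= self.max_failures

    def call(self, payload: str) -> str:
        if self.is_open:
            # Breaker is open: skip the primary entirely.
            return self.fallback(payload)
        try:
            result = self.primary(payload)
        except Exception:
            self.failures += 1
            return self.fallback(payload)
        self.failures = 0  # a success resets the consecutive-failure count
        return result
```

Because both paths share the same `str -> str` signature, either side can be swapped without touching callers, which is the kind of clean boundary the takeaway above calls for.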

Data, Governance, and Security Implications

Data governance and security concerns shape long-term viability. Tools often enforce data handling policies at the platform level, providing centralized controls, access management, and compliance certifications. Functions distribute responsibility across the stack, increasing the importance of secure coding practices, secret management, and secure API design. In both paths, data lineage and auditability matter for regulatory compliance and reproducibility.

A key question to ask is: who is responsible for data quality and privacy across the lifecycle? If you rely heavily on external providers, ensure contractual safeguards and data localization options. If you own the data and need full control, a function-based approach gives you stronger control over data flows, albeit with added governance overhead.

AI Tool Resources recommends establishing a comprehensive data governance framework regardless of approach to ensure reliability and compliance.
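On the function path, lineage and auditability are yours to build. A minimal sketch, assuming an in-memory list as a stand-in for an append-only audit store: the `audited` decorator, `AUDIT_LOG`, and `risk_scorer` are hypothetical names, and the scoring logic is a placeholder.

```python
import functools
import hashlib
import json
from datetime import datetime, timezone

AUDIT_LOG: list[dict] = []  # stand-in for an append-only audit store


def audited(component: str, version: str):
    """Record which component and version processed which input, and when."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(payload: dict):
            result = func(payload)
            AUDIT_LOG.append({
                "component": component,
                "version": version,
                # Store a hash rather than the raw payload, so entries can be
                # matched to inputs without copying the data into the log.
                "input_sha256": hashlib.sha256(
                    json.dumps(payload, sort_keys=True).encode("utf-8")
                ).hexdigest(),
                "timestamp": datetime.now(timezone.utc).isoformat(),
            })
            return result
        return wrapper
    return decorator


@audited(component="risk_scorer", version="0.1.0")
def risk_scorer(payload: dict) -> float:
    # Hypothetical placeholder logic.
    return min(1.0, payload["amount"] / 10_000)
```

Each call then leaves a record tying an output to a component version and an input fingerprint, which is the raw material for reproducibility checks.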

Performance and Latency Tradeoffs

Performance considerations vary by path. AI tools can introduce a fixed overhead due to external orchestration and hosted components, but often provide predictable latency and scalable throughput through the vendor’s optimized infrastructure. AI functions can achieve lower, more deterministic latency when tightly integrated with the application stack, but require careful engineering to avoid bottlenecks in data movement or feature computation. The choice depends on your service level targets and user expectations.

When evaluating performance, consider cold-start behavior, caching strategies, and the costs of re-training or re-deploying components. Tools may shield you from some performance tuning, while functions require hands-on optimization. AI Tool Resources underscores the importance of measurable performance baselines during pilots.
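A performance baseline for a pilot can be collected with the standard library alone. The sketch below is one possible approach, not a benchmark harness: `latency_baseline` is a hypothetical helper that treats the first call as the cold start and summarizes the remaining calls.

```python
import statistics
import time
from typing import Callable


def latency_baseline(call: Callable[[], object], runs: int = 50) -> dict:
    """Time `call` repeatedly; report cold-start and warm latency in ms."""
    if runs < 3:
        raise ValueError("need at least 3 runs: 1 cold start plus 2 warm samples")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    warm = samples[1:]  # the first call is treated as the cold start
    return {
        "cold_start_ms": samples[0],
        "median_ms": statistics.median(warm),
        # The last of the 19 cut points for n=20 is the 95th percentile.
        "p95_ms": statistics.quantiles(warm, n=20, method="inclusive")[-1],
    }
```

Pass a zero-argument callable that wraps either path, for example a lambda that calls your embedded function or one that calls the tool's client, and compare the resulting dictionaries against your service level targets.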

Migration, Vendor Lock-in, and Longevity

Vendor lock-in is a real concern with tools, especially if you rely on proprietary data formats, dashboards, and export/import routines. Functions typically offer greater portability but require ongoing maintenance and adaptation as APIs evolve. A hybrid strategy can mitigate risk: start with a tool for standard workloads while building critical custom logic as functions that can be ported if needed.

Plan for longevity by favoring open standards, clear interface contracts, and data portability options. Regularly reassess licensing terms and exit clauses, and ensure you have a documented migration plan. AI Tool Resources emphasizes the value of exit strategies and diversification to preserve future negotiating power.
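Data portability is easier to preserve if an export path exists from day one. The sketch below assumes a made-up vendor record layout (`payload.raw`, `annotation.class`, `meta.user`) and maps it onto a neutral schema serialized as JSON Lines. The key names on both sides are illustrative, not any real tool's format.

```python
import json


def to_portable_record(vendor_record: dict) -> dict:
    """Map hypothetical vendor-specific keys onto a neutral, documented schema."""
    return {
        "schema_version": 1,
        "text": vendor_record["payload"]["raw"],
        "label": vendor_record["annotation"]["class"],
        "annotator": vendor_record.get("meta", {}).get("user", "unknown"),
    }


def export_jsonl(vendor_records: list[dict]) -> str:
    """Serialize records as JSON Lines, one self-describing object per line."""
    return "\n".join(
        json.dumps(to_portable_record(r), sort_keys=True) for r in vendor_records
    )
```

Running an export like this on a schedule, rather than only at exit time, is one way to check that the documented migration plan still works.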

Decision Framework: A Step-by-Step Approach

1) Define the problem scope and expected reuse across teams.

2) Map requirements to governance, maintenance, and time-to-value.

3) Assess integration points and data sensitivity.

4) Pilot with both approaches if feasible, with objective success metrics.

5) Evaluate total cost of ownership and long-term flexibility.

6) Decide on a primary path with a documented fallback plan and regular reviews.

This framework aligns with best practices in developing AI-enabled systems and helps teams avoid premature commitments. AI Tool Resources’ guidance emphasizes pilots, governance alignment, and measurable outcomes as key decision factors.
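The framework can be turned into a simple weighted scoring matrix so that the final decision is traceable. The sketch below is one way to do that. The criteria names echo the steps, while the weights and the 1 to 5 scores are placeholders that a team would replace with its own pilot results.

```python
# Criteria echo the framework steps; weights and scores are placeholders.
WEIGHTS = {
    "reuse_across_teams": 0.20,
    "governance": 0.25,
    "time_to_value": 0.20,
    "integration_fit": 0.20,
    "long_term_flexibility": 0.15,
}


def weighted_score(scores: dict[str, int],
                   weights: dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion scores (1-5) into a single weighted figure."""
    if set(scores) != set(weights):
        raise ValueError("scores must cover exactly the weighted criteria")
    return round(sum(weights[c] * scores[c] for c in weights), 2)


tool_scores = {"reuse_across_teams": 4, "governance": 5, "time_to_value": 5,
               "integration_fit": 2, "long_term_flexibility": 2}
function_scores = {"reuse_across_teams": 3, "governance": 3, "time_to_value": 2,
                   "integration_fit": 5, "long_term_flexibility": 5}

print(weighted_score(tool_scores), weighted_score(function_scores))
# prints 3.75 3.5
```

The gap between the two totals matters less than the record of which weights were agreed on, since those are what a later review would revisit.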

Practical Roadmap: From Pilot to Production

Begin with a lightweight pilot to validate core capabilities and governance requirements. If you choose a tool, define a migration plan for future functions should customization needs grow. If you start with functions, implement a robust API contract, monitoring, and automated testing. Throughout the process, maintain a clear documentation trail, versioning, and rollback strategies to minimize risk. Communicate decisions to stakeholders and set milestones that track both performance and governance criteria.

AI Tool Resources recommends establishing governance metrics early and revisiting them at major milestones to ensure alignment with business objectives and technical feasibility.
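For the function path, an API contract can be enforced in automated tests with very little code. The sketch below assumes a hypothetical `ScoreResponse` shape (label, confidence, model version). The field names and allowed labels are illustrative and would come from your own interface definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoreResponse:
    """Hypothetical response contract shared by every implementation."""
    label: str
    confidence: float
    model_version: str

    def __post_init__(self):
        if self.label not in {"positive", "negative", "neutral"}:
            raise ValueError(f"unexpected label: {self.label!r}")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be within [0, 1]")


def check_contract(implementation, samples: list[str]) -> list[ScoreResponse]:
    """Run an implementation over samples; any contract breach raises."""
    return [ScoreResponse(**implementation(text)) for text in samples]
```

The same check can be pointed at a tool's adapter and at an in-house function, so a later migration between the two is verified against one contract instead of two sets of assumptions.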

Case Studies and Illustrative Scenarios

Scenario A: A university research group needs a standardized sentiment analysis workflow across multiple courses. The team adopts an AI tool to accelerate setup, monitor usage, and ensure reproducibility. As research questions become more specialized, a parallel track develops AI functions for niche experiments, with tight integration into the existing research software environment.

Scenario B: A fintech startup requires a highly customized fraud-detection pipeline, with bespoke feature engineering and low-latency responses. The team builds AI functions within their microservices, leveraging vendor-provided models for components like anomaly scoring while maintaining control over data pipelines and compliance processes.

These examples illustrate how hybrid strategies can balance speed, governance, and customization.

Common Pitfalls and Best Practices

- Pitfall: assuming a tool covers every use case; remedy: maintain a modular architecture with clear boundaries.

- Pitfall: underestimating maintenance and data governance; remedy: invest in an ongoing governance program from day one.

- Pitfall: vendor lock-in without an exit plan; remedy: design with open standards and exportable data formats.

- Best practice: pilot often, measure outcomes, and document lessons learned to inform future decisions.

- Best practice: align with organizational competencies and provide training to teams adopting either path.

The Future Outlook for AI Tools and Functions

The landscape will continue to evolve toward more composable AI, where tools and functions co-exist in hybrid architectures. Expect improvements in interoperability, governance tooling, and performance optimization. Organizations should stay adaptable, focusing on modularity, data governance, and clear decision frameworks to navigate emerging offerings. AI Tool Resources anticipates continued emphasis on scalability, security, and developer productivity as core differentiators in this space.

Comparison

| Feature | AI Tools | AI Functions |
|---|---|---|
| Definition | Self-contained platform with UI and governance | Modular code components embedded in apps |
| Abstraction Level | High-level, end-to-end solution | Low-level, code-centric implementation |
| Best For | Standardized workflows, governance, rapid deployment | Bespoke workflows requiring tight integration |
| Reuse/Modularity | Limited portability outside the tool | High modularity within the codebase |
| Maintenance Burden | Vendor-managed updates and support | Your team maintains and updates |
| Cost Context | Licensing and subscription costs | Development and hosting costs; potentially lower upfront cost |

Upsides of AI Tools

- Faster time-to-value and standardized governance

- Easier scaling across organizations with vendor support

- Clear ownership and upgrade paths when using tools

- Flexibility to mix tools with in-house functions (hybrid)

Weaknesses of AI Tools

- Potential vendor lock-in and limited customization

- Higher ongoing licensing or subscription costs

- Migration from tool-centric to code-centric approaches can be complex

- Risk of suboptimal fits if the tool doesn't align with unique workflows

Favor a blended approach: use AI tools for rapid deployment and governance in standard workloads, and develop AI functions for bespoke, tightly integrated components.

Tools accelerate delivery and governance; functions enable bespoke behavior and deeper integration. Start with pilots to validate fit, then structure a hybrid architecture that preserves portability and future flexibility.

FAQ

What is the key difference between AI tools and AI functions?

AI tools are end-to-end platforms with built-in governance, while AI functions are modular code blocks embedded in your systems. The choice hinges on scope, control, and how you want to manage maintenance.


When should I use an AI tool versus building AI functions?

If you need rapid deployment, standardized workflows, and vendor-supported governance, start with an AI tool. If you require bespoke logic, low-latency behavior, or deep integration with your existing stack, implement AI functions.


Can I combine both approaches in one system?

Yes, many teams adopt a hybrid approach, using tools for common tasks and functions for specialized features. The key is to maintain clean interfaces and clear ownership boundaries.


How should I estimate costs for tools versus functions?

Tools typically incur licensing or subscription costs with predictable budgets, while functions incur development, hosting, and maintenance expenses. Plan for a pilot phase to validate cost models.


What governance considerations apply to both paths?

Both paths require data governance, security practices, and clear ownership. Establish data handling policies, access controls, and auditing from the outset to ensure compliance and reproducibility.


Are there best practices to avoid vendor lock-in?

Favor open standards, exportable data formats, and modular interfaces so you can migrate later if needed. Document dependencies and maintain portable components.


Key Takeaways

- Evaluate scope before choosing an approach

- Prefer tools for standard, governance-heavy workloads

- Leverage functions for customization and tight integration

- Pilot early and measure governance, cost, and performance

- Plan for migration and exit routes to minimize vendor lock-in