Different AI Tool: Side-by-Side Comparison Guide 2026

A detailed, objective comparison of AI tool categories to help developers and researchers pick the right tool based on use case, interoperability, cost, and governance.

TL;DR: "Different AI tool" describes a category of tools rather than a single product. This article compares three archetypes (general-purpose platforms, code-focused assistants, and domain-specific copilots) to help you choose based on use case, interoperability, and governance. AI Tool Resources emphasizes testing in pilots and prioritizing governance to avoid vendor lock-in.

What a "different AI tool" means in practice

Understanding the phrase requires moving beyond hype toward task-focused evaluation. A "different AI tool" is not a single product but a category that spans general-purpose platforms, coding copilots, data science helpers, and domain-specific copilots. According to AI Tool Resources, success comes from matching the tool type to a real task while prioritizing interoperability and governance. In practice, you might compare a broad AI workspace against a domain-specific copilot, or weigh a code-focused assistant against a data preprocessing helper. The goal is to design a tool stack that supports your workflow rather than chasing novelty. This section sets the stage for a practical decision framework that can be reused across projects, teams, and disciplines. Treat the phrase as a prompt to map capabilities to outcomes, not as a guarantee of performance. First, identify the core tasks, such as data preprocessing, literature review, and prototype development; then map criteria to outcomes and run controlled pilots.

Key criteria for comparison

When evaluating AI tool options, six criteria are non-negotiable. First, task alignment: does the tool support your core use case, whether that is research, software development, or content generation? Second, interoperability: can the tool connect via stable APIs, common data formats, and reliable authentication? Third, governance and security: what controls exist for data handling, audit trails, and compliance with industry standards? Fourth, performance and latency: is the tool responsive enough for your workflows, and does it scale with data volume? Fifth, cost and total ownership: what is the ongoing cost, what are the licensing terms, and how easy is it to predict future expenses? Sixth, ecosystem and support: are there active communities, training resources, and vendor support channels? AI Tool Resources analysis shows that teams that document these criteria before pilots tend to pick tools that fit their environment more quickly.
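One common way to make these criteria comparable is a weighted scoring matrix. The sketch below is illustrative only: the weights, the 1 to 5 rating scale, the candidate names, and every rating are assumptions made up for the example, not figures from AI Tool Resources or any vendor.

```python
# Hypothetical weights for the six criteria above; adjust to your priorities.
WEIGHTS = {
    "task_alignment": 0.30,
    "interoperability": 0.20,
    "governance": 0.20,
    "performance": 0.10,
    "cost": 0.10,
    "ecosystem": 0.10,
}


def weighted_score(ratings: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted average of per-criterion ratings (for example, 1 to 5)."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[name] * ratings[name] for name in weights) / total_weight


# Made-up ratings for three archetypes, purely to show the mechanics.
candidates = {
    "general_platform": {"task_alignment": 4, "interoperability": 5, "governance": 3,
                         "performance": 4, "cost": 2, "ecosystem": 5},
    "code_assistant": {"task_alignment": 5, "interoperability": 3, "governance": 3,
                       "performance": 4, "cost": 4, "ecosystem": 3},
    "domain_copilot": {"task_alignment": 4, "interoperability": 3, "governance": 5,
                       "performance": 3, "cost": 3, "ecosystem": 2},
}

# Rank candidates from highest to lowest weighted score.
ranked = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
```

Agreeing on the weights before anyone rates a tool helps keep the comparison from being tuned toward a favorite after the fact.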

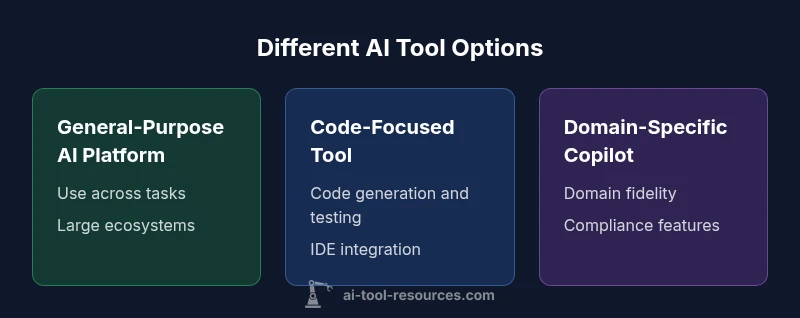

Categories of AI tools

AI tools fall into several broad categories that help you frame the decision. General-purpose AI platforms offer versatility across tasks and typically provide large model ecosystems and broad integrations. Code-focused AI tools specialize in software development tasks such as code completion, testing, and refactoring, and often embed into IDEs. Domain-specific copilots are tailored to fields such as finance, biology, or education, with built-in constraints and compliance features. There are also offline or on-prem options that emphasize data sovereignty, as well as hybrid models that blend cloud and edge deployments. Understanding these categories helps map team skills, data flows, and governance requirements to the right tool type.

Interoperability and data governance considerations

A core differentiator among these tool types is how they integrate into your existing stack. Interoperability covers APIs, authentication methods, data formats, and support for standard ML workflows. Data governance deals with privacy, data retention, auditability, and regulatory compliance. For researchers and students, governance helps ensure reproducibility and transparency. For developers, interoperability reduces the friction of embedding tools in CI/CD pipelines. In 2026, most teams benefit from selecting tools with clear data handling policies, robust access controls, and well-documented API contracts. The strategy should include versioned APIs, schema contracts, and performance SLAs to avoid surprises during deployment.
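As a rough illustration of what a versioned schema contract can look like, the sketch below checks a tool's response payload against an expected set of fields and types. The version labels, field names, and the `contract_violations` helper are all hypothetical; a real integration would follow the contract the vendor actually publishes.

```python
# Hypothetical contracts: version labels, field names, and types are illustrative.
CONTRACTS = {
    "v1": {"id": str, "output": str, "latency_ms": int},
    "v2": {"id": str, "output": str, "latency_ms": int, "model": str},
}


def contract_violations(payload: dict, version: str) -> list[str]:
    """Return human-readable violations of the named contract (empty if none)."""
    if version not in CONTRACTS:
        return [f"unknown contract version: {version}"]
    problems = []
    for field, expected in CONTRACTS[version].items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    return problems


# Example: a v1-shaped response checked against the stricter v2 contract.
response = {"id": "req-1", "output": "summary text", "latency_ms": 420}
issues = contract_violations(response, "v2")
```

Running a check like this in a CI/CD pipeline is one way to surface a contract change before it reaches production.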

Cost models and total ownership implications

Cost considerations vary widely across AI tool options. General-purpose platforms may charge per user seat, per API call, or per workspace, with scaling discounts and usage caps. Code-focused tools often use per-seat or per-project pricing. Domain-specific copilots may have license tiers tied to the domain and data volumes. Beyond sticker price, total ownership includes data transfer costs, storage, compute, and the effort required to onboard teams. Experienced teams run pilots to measure incremental value over several weeks and compare it against a baseline of manual effort. AI Tool Resources notes that a thoughtful cost model emphasizes predictability and alignment with expected outcomes rather than chasing the cheapest option.
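A simple total-cost-of-ownership estimate can make these components concrete. The sketch below is a simplification under stated assumptions: the cost categories mirror the ones named above, but the `CostInputs` fields, the flat monthly pricing, and all figures in the example are hypothetical and ignore discounts and usage caps.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    seats: int
    price_per_seat_month: float
    api_calls_month: int
    price_per_1k_calls: float
    infra_month: float          # storage, compute, and data transfer
    onboarding_one_time: float  # training and integration effort


def total_cost_of_ownership(c: CostInputs, months: int) -> float:
    """One-time onboarding cost plus recurring monthly costs over the period."""
    monthly = (
        c.seats * c.price_per_seat_month
        + c.api_calls_month / 1000 * c.price_per_1k_calls
        + c.infra_month
    )
    return c.onboarding_one_time + monthly * months


# Made-up figures for a ten-seat team over one year.
example = CostInputs(
    seats=10,
    price_per_seat_month=20.0,
    api_calls_month=50_000,
    price_per_1k_calls=2.0,
    infra_month=100.0,
    onboarding_one_time=1_000.0,
)
yearly_cost = total_cost_of_ownership(example, months=12)
```

Running the same function over each candidate's inputs gives a like-for-like figure to set against the value measured in a pilot.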

Real-world deployment patterns and best practices

In practice, teams deploy different AI tool types in layered configurations. A typical pattern combines a general-purpose platform for broad tasks with one or more specialized copilots for critical domains. For development teams, a code-focused tool paired with an assistant integrated into version control accelerates iteration without sacrificing quality. Education and research groups might pair a general platform with a data analysis copilot that enforces reproducible workflows and citation generation. A disciplined deployment uses staging environments, feature flags, and clear exit criteria. Finally, invest in governance artifacts such as model cards, data sheets, and risk registers so that stakeholders understand capabilities and limitations. The AI Tool Resources team recommends documenting governance expectations early in pilots.

Evaluation checklist for a pilot project

A practical pilot includes these steps: define success metrics tied to real outcomes, assemble a small cross-functional team, and establish a test plan with clear criteria. Select two or three tool types that cover core tasks, then run parallel experiments with explicit data sets. Monitor performance, latency, and user satisfaction. Collect qualitative feedback on usability and trust. At the end of the pilot, compare results against a simple ROI model and decide whether to scale, adjust, or sunset. Include a post-pilot review with a clear action plan and governance updates. This checklist helps teams avoid premature commitments and learn rapidly before committing to a larger rollout. The process aligns with best practices described by AI Tool Resources.
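The "simple ROI model" and the scale, adjust, or sunset decision can be sketched as below. This is one possible formulation, not a method prescribed by AI Tool Resources: it values the pilot only by time saved, and the thresholds (`scale_at`, `sunset_below`) and example figures are assumptions to be set before the pilot starts.

```python
def simple_roi(hours_saved: float, hourly_rate: float, pilot_cost: float) -> float:
    """ROI as (value of time saved - pilot cost) / pilot cost."""
    if pilot_cost <= 0:
        raise ValueError("pilot_cost must be positive")
    return (hours_saved * hourly_rate - pilot_cost) / pilot_cost


def pilot_decision(roi: float, scale_at: float = 0.5, sunset_below: float = 0.0) -> str:
    """Map an ROI figure to one of the three outcomes using preset thresholds."""
    if roi >= scale_at:
        return "scale"
    if roi < sunset_below:
        return "sunset"
    return "adjust"


# Made-up pilot: 90 hours saved at 50 per hour against a pilot cost of 4,000.
roi = simple_roi(hours_saved=90, hourly_rate=50.0, pilot_cost=4_000.0)
decision = pilot_decision(roi)
```

A single number like this should sit alongside the qualitative feedback on usability and trust rather than replace it.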

Authority sources and external perspectives

- National Institute of Standards and Technology (NIST): AI risk management framework and guidance on responsible AI use. https://www.nist.gov/topics/ai

- Stanford AI Lab: research and practical perspectives on AI tool adoption and governance. https://ai.stanford.edu

- Nature: peer-reviewed coverage of AI methods, ethics, and policy implications. https://www.nature.com

These sources provide complementary viewpoints on capability, risk, and policy, helping you triangulate claims in this article.

Putting it into practice: a decision workflow

To apply the framework, start with a clear decision tree:

- Step 1: Define the problem scope and success metrics.

- Step 2: Select candidate tool types that map to the tasks.

- Step 3: Run a controlled pilot with a small data sample.

- Step 4: Measure impact on cycle time, error rate, and user satisfaction.

- Step 5: Review governance and cost signals, and adjust accordingly.

- Step 6: Finalize a transition plan that includes training and documentation.

By following this workflow, teams can move from theory to reliable action with a repeatable process. The AI Tool Resources team emphasizes that iteration and governance are the keys to long-term success.
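The measurement in Step 4 can be sketched as a comparison of pilot metrics against a baseline. The metric names, the choice of relative change as the measure, and the sample values below are assumptions for illustration; substitute whatever your team actually tracks.

```python
# Direction of improvement per metric: -1 means lower is better, +1 means higher is better.
DIRECTION = {"cycle_time_hours": -1, "error_rate": -1, "user_satisfaction": +1}


def relative_change(baseline: float, pilot: float) -> float:
    """Fractional change from baseline to pilot, e.g. -0.2 for a 20% drop."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (pilot - baseline) / baseline


def improvements(baseline: dict[str, float], pilot: dict[str, float]) -> dict[str, float]:
    """Signed improvement per metric; positive values mean the pilot did better."""
    return {
        metric: sign * relative_change(baseline[metric], pilot[metric])
        for metric, sign in DIRECTION.items()
    }


# Made-up measurements from a baseline period and a pilot period.
baseline = {"cycle_time_hours": 10.0, "error_rate": 0.08, "user_satisfaction": 3.5}
pilot = {"cycle_time_hours": 8.0, "error_rate": 0.06, "user_satisfaction": 4.2}
result = improvements(baseline, pilot)
```

Normalizing every metric so that positive means better makes it easier to spot a pilot that improves one measure at the expense of another.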

Feature Comparison

| Feature | General-Purpose AI Platform | Code-Focused AI Tool | Domain-Specific Copilot |
|---|---|---|---|
| Primary Use Case | Broad tasks and multi-domain workflows | Code generation, testing, and integration | Niche tasks tailored to a domain (finance, health, education) |
| Strengths | Versatility, large ecosystems, strong integrations | Deep coding assistance, IDE compatibility | Domain accuracy and governance features |
| Limitations | Can be complex; depth may lag specialty tools | Risk of hallucinations outside code; narrower scope | May require customization and domain data access |
| Cost Model | Medium to high, usage and workspace driven | Per-seat or per-project pricing, predictable tiers | License tiers tied to domain and data volume |
| Best For | Teams needing flexibility across tasks | Developers needing fast code support | Organizations requiring domain-specific governance |

Upsides

- Broad applicability across use-cases

- Strong ecosystems and vendor support

- Good for teams seeking interoperability and governance

- Scalable for diverse research and development tasks

Weaknesses

- Can be overkill for simple tasks

- Higher total cost if not carefully managed

- Learning curve and feature bloat can slow teams

The general-purpose AI platform remains the versatile default; domain-specific copilots excel in niche tasks.

Start broad and layer domain copilots as needed. The AI Tool Resources team recommends piloting multiple options with governance in mind to maximize long-term value.

FAQ

What does different ai tool mean in practice?

It refers to the broad spectrum of AI tools that differ in scope, specialization, and deployment. This article compares three archetypes to help you choose.

It means choosing from broad platforms, coding assistants, or domain-specific copilots.

How should I structure a fair comparison of AI tools?

Define your tasks, establish evaluation criteria, run controlled pilots, and measure outcomes against predefined success metrics.

Start with tasks, set criteria, run pilots, and compare results.

What top criteria should guide a pilot before committing?

Use-case fit, interoperability, governance and security, performance, and total cost of ownership.

Focus on fit, connections, governance, and cost when piloting.

Are there security concerns when using multiple AI tools together?

Yes, data access, retention, and auditability matter. Use clear data handling policies and enforce access controls.

Security and data handling matter when combining tools.

Which tool type is best for researchers?

Researchers benefit from general-purpose platforms for flexibility, supplemented by domain copilots for reproducibility and domain-specific constraints.

Researchers can start with a general platform and add domain copilots as needed.

Can I switch tools mid-project without major disruption?

Yes, but plan for data compatibility, migration paths, and staff training. Use staged rollouts and governance documentation.

A staged switch with clear migration plans minimizes disruption.

Key Takeaways

- Assess use-case first to pick the right tool type

- Prioritize interoperability and governance from day one

- Balance upfront cost with total ownership over time

- Leverage domain-specific copilots for compliance-focused needs

- Pilot multiple options to validate value before scaling